VTAM Pipeline: A Step-by-Step Guide to Validating Metabarcoding Data for Biomedical Research


Abstract

This article provides a comprehensive guide to the VTAM (Validation, Taxonomic Assignment, and Analysis of Metabarcoding data) pipeline, a specialized tool for rigorous validation of amplicon sequence variants (ASVs) in microbiome and pathogen detection studies. Tailored for researchers and drug development professionals, we explore VTAM's foundational principles, detail its methodological workflow from input to output, address common troubleshooting and optimization strategies, and critically compare its validation performance against alternative bioinformatics tools. The guide synthesizes best practices for ensuring robust, reproducible metabarcoding data analysis crucial for clinical diagnostics and therapeutic development.

What is the VTAM Pipeline? Core Principles for Reliable Metabarcoding Analysis

Within the context of developing a robust VTAM (Validation and Taxonomic Assignment Module) pipeline for metabarcoding data research, this guide defines its core purpose and operational scope. Metabarcoding, the high-throughput taxonomic identification of organisms from environmental samples using standardized DNA barcodes, generates vast datasets prone to false positives from contamination, sequencing errors, and database inaccuracies. The VTAM pipeline is purpose-built to address these vulnerabilities through rigorous, stepwise validation, ensuring that only biologically meaningful Amplicon Sequence Variants (ASVs) or Operational Taxonomic Units (OTUs) are retained for ecological and biomedical interpretation. For drug development professionals and researchers, this validation is critical, as spurious signals can misdirect the discovery of microbial biomarkers or therapeutic targets.

Core Purpose of VTAM

The primary purpose of VTAM is to implement a stringent, customizable filter that separates genuine biological sequences from artifactual noise. It functions as a quality-control checkpoint within the broader metabarcoding workflow.

Table 1: Primary Objectives of the VTAM Pipeline

| Objective | Technical Description | Impact on Research |
|---|---|---|
| Contamination Removal | Filters sequences based on their presence/absence in negative controls using statistical thresholds (e.g., Fisher's exact test). | Reduces false positives from laboratory or reagent contaminants, crucial for low-biomass samples. |
| Error Correction | Implements a "replication filter" requiring sequences to appear in multiple PCR replicates or independent runs. | Mitigates effects of stochastic PCR and sequencing errors. |
| Threshold Management | Allows user-defined cut-offs for read count and sample prevalence. | Filters out rare, potentially spurious sequences while retaining true rare biosphere signals. |
| Taxonomic Validation | Optional step to check sequence assignment against a curated reference database. | Flags assignments that are unreliable due to database incompleteness or misannotation. |

Scope within the Metabarcoding Workflow

VTAM operates after initial bioinformatic processing (demultiplexing, primer trimming, merging of paired-end reads, and ASV/OTU clustering) and before downstream ecological or statistical analysis.

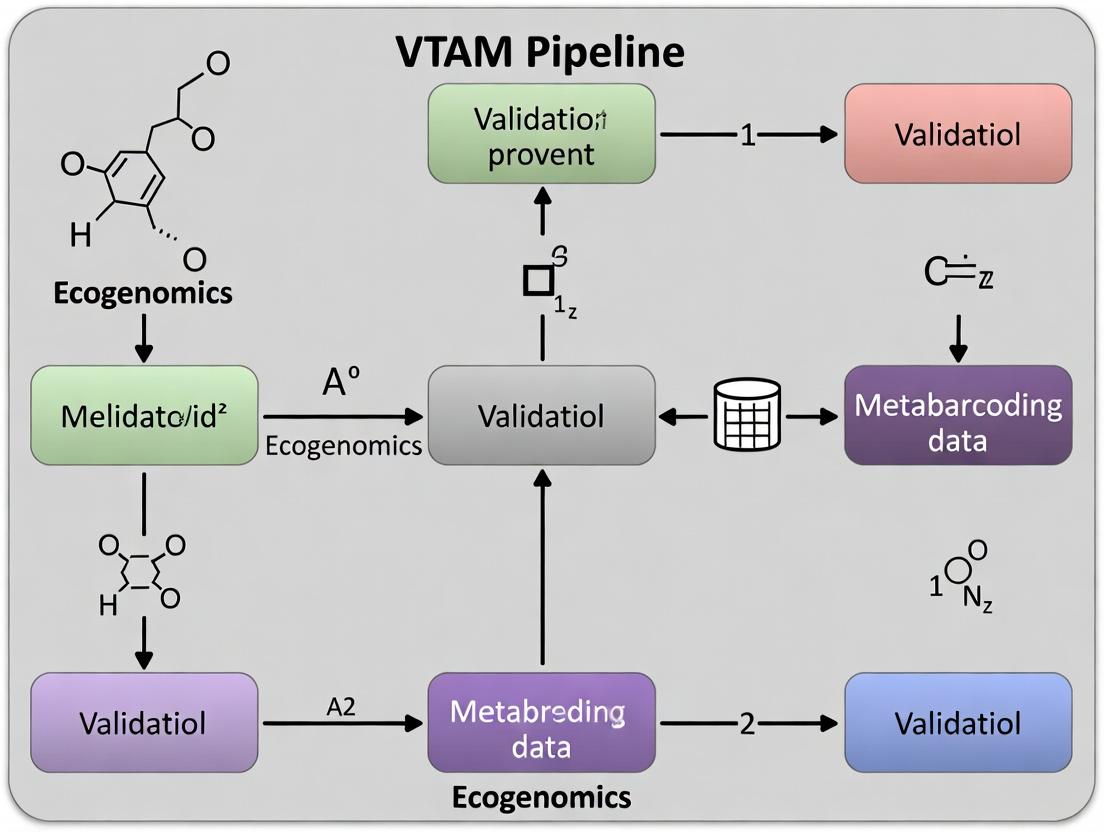

Diagram Title: VTAM Position in Metabarcoding Workflow

Detailed VTAM Methodology

The VTAM workflow is executed via a command-line interface, with filter parameters typically supplied in a parameter file. The core validation steps are applied sequentially.

Replication Filter

This filter requires an ASV to be present in a minimum number of PCR replicates (n) for a given sample to be retained.

Protocol:

- Input: ASV table (samples x ASVs) and a metadata file mapping samples to their respective PCR replicates.

- Parameter Setting: Define the minimum number of replicates (min_replicate) an ASV must be detected in (e.g., 2 out of 3).

- Execution: For each biological sample, VTAM groups its technical replicates. An ASV is kept for that sample only if its count is >0 in at least min_replicate replicates.

- Output: A filtered ASV table where ASV counts are summed across the validating replicates.
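The replication logic above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not VTAM's own implementation: the nested-dictionary layout `{sample: {replicate: {asv: reads}}}` and the function name `replication_filter` are hypothetical.

```python
# Hypothetical helper for illustration; not part of VTAM's API.
def replication_filter(replicate_counts, min_replicate=2):
    """Keep an ASV in a sample only if it is detected (count > 0) in at
    least `min_replicate` PCR replicates of that sample.

    replicate_counts: {sample: {replicate: {asv: read_count}}}
    Returns {sample: {asv: reads summed over the validating replicates}}.
    """
    filtered = {}
    for sample, replicates in replicate_counts.items():
        # Every ASV seen in any replicate of this sample
        asvs = {asv for counts in replicates.values() for asv in counts}
        kept = {}
        for asv in sorted(asvs):
            # Read counts from the replicates in which the ASV was detected
            detections = [counts[asv] for counts in replicates.values()
                          if counts.get(asv, 0) > 0]
            if len(detections) >= min_replicate:
                kept[asv] = sum(detections)
        filtered[sample] = kept
    return filtered


example = {
    "S1": {
        "R1": {"asv_a": 10, "asv_b": 3},
        "R2": {"asv_a": 7},
        "R3": {"asv_a": 0, "asv_c": 5},
    }
}
print(replication_filter(example))  # {'S1': {'asv_a': 17}}
```

Here `asv_b` and `asv_c` are each seen in a single replicate and are dropped, while `asv_a` passes with its reads from R1 and R2 summed.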

Control Filter

This filter removes ASVs present in negative controls based on a statistical test.

Protocol:

- Input: The filtered ASV table from the replication step and metadata identifying control samples.

- Statistical Test: A Fisher's exact test is performed for each ASV, comparing its presence/absence in true samples vs. control samples.

- Parameter Setting: Set a significance threshold (e.g., α = 0.05). ASVs significantly associated with controls (p < α) are discarded; ASVs with p ≥ α are retained.

- Execution: The test is applied, and the ASV table is pruned.
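As a sketch of the control filter described above, a one-sided Fisher's exact test can be computed from the hypergeometric tail using only the standard library. Testing specifically for enrichment in controls (a one-sided alternative) is an assumption about how the comparison is framed, and `fisher_greater` and `control_filter` are hypothetical helpers. Where SciPy is available, `scipy.stats.fisher_exact([[present_ctrl, absent_ctrl], [present_smp, absent_smp]], alternative="greater")` should give the same p-value.

```python
from math import comb


# Hypothetical helpers for illustration; not part of VTAM's API.
def fisher_greater(present_ctrl, absent_ctrl, present_smp, absent_smp):
    """One-sided Fisher's exact test on the 2x2 presence/absence table.

    Returns P(X >= present_ctrl) under the hypergeometric null: the chance
    of seeing the ASV in at least this many controls if presence were
    unrelated to whether a sample is a control.
    """
    n_ctrl = present_ctrl + absent_ctrl
    n_total = n_ctrl + present_smp + absent_smp
    n_present = present_ctrl + present_smp
    upper = min(n_present, n_ctrl)
    tail = sum(comb(n_present, x) * comb(n_total - n_present, n_ctrl - x)
               for x in range(present_ctrl, upper + 1))
    return tail / comb(n_total, n_ctrl)


def control_filter(table, control_ids, alpha=0.05):
    """Drop ASVs whose presence is significantly enriched in controls.

    table: {asv: {sample: count}} with every sample listed (zeros allowed).
    control_ids: set of negative-control sample names.
    """
    kept = {}
    for asv, counts in table.items():
        ctrl = [s for s in counts if s in control_ids]
        true = [s for s in counts if s not in control_ids]
        pc = sum(counts[s] > 0 for s in ctrl)
        ps = sum(counts[s] > 0 for s in true)
        p = fisher_greater(pc, len(ctrl) - pc, ps, len(true) - ps)
        if p >= alpha:  # not significantly associated with controls
            kept[asv] = counts
    return kept


samples = [f"S{i}" for i in range(1, 11)]
blanks = {"B1", "B2", "B3"}
table = {
    "contaminant": {**{s: 0 for s in samples}, "B1": 12, "B2": 30, "B3": 8},
    "genuine": {**{s: (50 if i < 5 else 0) for i, s in enumerate(samples)},
                "B1": 0, "B2": 0, "B3": 0},
}
print(sorted(control_filter(table, blanks)))  # ['genuine']
```

An ASV found in all three blanks and none of the ten samples gets p = 1/286 (about 0.0035) and is removed; an ASV never seen in a blank gets p = 1.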

Prevalence and Read Count Filters

Final filters based on global abundance and occurrence.

Protocol:

- Input: ASV table from the control filter.

- Prevalence Filter: Set a minimum percentage of samples an ASV must be present in (e.g., >5%). Removes spot-noise.

- Read Count Filter: Set a minimum total read count per ASV across all samples (e.g., >10 reads). Removes low-abundance noise.

- Output: The final, validated ASV table for downstream analysis.
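A minimal sketch of the two global filters follows, using strict inequalities to match the ">5%" and ">10 reads" examples. The function name and the `{asv: {sample: count}}` layout are assumptions made for illustration.

```python
# Hypothetical helper for illustration; not part of VTAM's API.
def prevalence_read_filter(table, min_prevalence=0.05, min_total_reads=10):
    """Keep ASVs present in more than `min_prevalence` of samples AND with
    more than `min_total_reads` reads summed over all samples.

    table: {asv: {sample: count}}. The sample set is the union of sample
    names in the table, so zero counts should be listed explicitly.
    """
    samples = {s for counts in table.values() for s in counts}
    kept = {}
    for asv, counts in table.items():
        prevalence = sum(c > 0 for c in counts.values()) / len(samples)
        total = sum(counts.values())
        if prevalence > min_prevalence and total > min_total_reads:
            kept[asv] = counts
    return kept


samples = [f"S{i}" for i in range(20)]
table = {
    "asv_a": {s: (25 if i < 2 else 0) for i, s in enumerate(samples)},   # 10 %, 50 reads
    "asv_b": {s: (100 if i < 1 else 0) for i, s in enumerate(samples)},  # 5 %, 100 reads
    "asv_c": {s: (2 if i < 5 else 0) for i, s in enumerate(samples)},    # 25 %, 10 reads
}
print(sorted(prevalence_read_filter(table)))  # ['asv_a']
```

Because both comparisons are strict, `asv_b` (exactly 5% prevalence) and `asv_c` (exactly 10 reads) fall just below the cut-offs.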

Diagram Title: VTAM Core Filtering Steps

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Materials for VTAM-Validated Metabarcoding Experiments

| Item | Function in Workflow | Critical for VTAM? |
|---|---|---|
| Ultra-pure Water (e.g., PCR-grade) | Serves as the solvent for all molecular biology reagents and as the matrix for critical negative controls. | Yes. Essential for contamination assessment. |
| Mock Community DNA | A defined mix of genomic DNA from known organisms. Used as a positive control to assess primer bias, PCR efficiency, and bioinformatic fidelity. | Indirectly. Validates the pre-VTAM steps. |
| DNA Extraction Kit Blanks | A control where no sample is added during extraction. Identifies contamination from extraction kits/reagents. | Yes. Should be included as a control sample input for the VTAM Control Filter. |
| PCR-grade Polymerase & Nucleotides | High-fidelity, low-error-rate enzymes and pure dNTPs to minimize PCR-generated errors that create spurious ASVs. | Yes. Reduces input noise for VTAM replication filter. |
| Barcoded Primers & Adapter Kits | For sample multiplexing. High-quality unique dual indices reduce index-hopping (misassignment) artifacts. | Indirectly. Reduces sample cross-talk, a confounding factor. |
| Quantification Standards (e.g., Qubit dsDNA HS Assay) | Accurate quantification of library DNA ensures balanced sequencing, preventing sample dropout. | Indirectly. Ensures all samples/replicates are adequately sequenced for VTAM logic. |

Quantitative Data & Performance Metrics

The efficacy of VTAM is measured by its impact on dataset structure and the retention of positive control signals.

Table 3: Example VTAM Filtering Impact on a 16S rRNA Gene Dataset

| Filtering Stage | Number of ASVs Retained | % of Initial ASVs | Total Read Count | Key Statistic/Threshold Applied |
|---|---|---|---|---|
| Initial Dataset | 5,120 | 100% | 1,850,400 | N/A |
| After Replication Filter (min 2/3 reps) | 1,540 | 30.1% | 1,750,100 | Removed 3,580 ASVs detected in fewer than 2 of 3 replicates. |
| After Control Filter (Fisher's p > 0.05) | 1,210 | 23.6% | 1,720,300 | 330 ASVs significantly associated with blanks removed. |
| After Prevalence/Read Filter (>1% samples, >10 reads) | 892 | 17.4% | 1,715,650 | Final validated community for analysis. |
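The percentages in Table 3 follow directly from the ASV counts. A small, hypothetical helper like the one below can recompute retention and per-step removals when reporting such tables, which helps catch transcription errors.

```python
# Hypothetical reporting helper; not part of VTAM.
def retention(steps):
    """steps: [(label, asvs_remaining), ...]; the first entry is the initial
    dataset. Returns (label, remaining, removed_in_step, percent_of_initial)."""
    initial = steps[0][1]
    rows, previous = [], initial
    for label, remaining in steps:
        rows.append((label, remaining, previous - remaining,
                     round(100 * remaining / initial, 1)))
        previous = remaining
    return rows


table3 = retention([
    ("Initial dataset", 5120),
    ("Replication filter", 1540),
    ("Control filter", 1210),
    ("Prevalence/read filter", 892),
])
for row in table3:
    print(row)
```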

In the thesis framework for a VTAM pipeline, its purpose is precisely defined as the application of statistically grounded, experimental-control-aware validation filters to metabarcoding data. Its scope is deliberately positioned post-clustering and pre-analysis, acting as a critical gatekeeper. By enforcing detection replication, rigorously subtracting control-derived artifacts, and applying abundance thresholds, VTAM transforms a raw, noisy ASV table into a highly confident dataset. For researchers and drug developers, this process is not merely a bioinformatic step but a foundational component of rigorous, reproducible science, ensuring that subsequent conclusions about microbial community composition, dynamics, and therapeutic associations are built on validated molecular evidence.

1. Introduction

Within the rigorous framework of the VTAM (Validation, Taxonomic Assignment, and Analysis of Metabarcoding) pipeline, the initial bioinformatic processing of raw sequencing reads presents a critical vulnerability: the uncritical acceptance of Amplicon Sequence Variants (ASVs) or Operational Taxonomic Units (OTUs) without accounting for artifactual sequences. Two of the most pervasive artifacts are false positives (non-target amplification, index hopping, and contamination) and chimeric sequences (PCR-generated hybrids of two or more biological templates). Their presence directly compromises downstream analyses, leading to inflated diversity estimates, erroneous ecological inferences, and flawed hypotheses in drug discovery targeting microbial communities. This whitepaper details the technical origins, detection methodologies, and experimental validation protocols essential for robust metabarcoding research.

2. Quantitative Impact of Artifacts

The prevalence of chimeras and false positives is non-trivial and varies with experimental parameters. The following table synthesizes current data on their occurrence.

Table 1: Prevalence and Sources of Key Sequencing Artifacts

| Artifact Type | Typical Reported Prevalence | Primary Source | Impact on Data |
|---|---|---|---|
| Chimeric Sequences | 5% - 30% of raw reads (increases with PCR cycle number) | Incomplete extension during later PCR cycles. | Inflation of phantom taxa; false diversity. |
| Index Hopping (False Positives) | 0.1% - 10% of reads (platform/library prep dependent) | Cross-contamination of sample indexes on patterned flow cells. | Erosion of sample specificity; false cross-sample presence. |
| Non-Target Amplification | Highly variable (1% - 60%) | Primer mismatch to off-target genomic regions. | Dominance of irrelevant sequences (e.g., host DNA). |
| Contamination (Kit/Environment) | Can dominate low-biomass samples | Reagents, laboratory environment. | Complete distortion of community profile. |

3. Core Detection Methodologies & Experimental Protocols

3.1. In Silico Chimera Detection

Reference-Based Detection (e.g., against SILVA, UNITE):

- Protocol: The candidate ASV is aligned against a curated reference database. The sequence is split into growing segments from the 5' and 3' ends. Each segment is searched independently against the database. A chimeric flag is raised if the best hit for the 5' segment is from a different parent than the best hit for the 3' segment, with high confidence (e.g., >90% identity).

- Limitation: Relies on comprehensive references; misses novel chimeras.

De Novo Detection (e.g., UCHIME2, VSEARCH):

- Protocol: All sequences in a dataset are pairwise compared. A candidate is considered a potential chimera of two more abundant "parent" sequences if it can be divided into a left segment that matches one parent and a right segment that matches another, with the breakpoint where divergence occurs. The `--uchime3_denovo` command in VSEARCH is a current standard.

- Limitation: Requires a steep abundance differential between real parents and chimeras.
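To make the de novo logic concrete, the sketch below tests whether a candidate can be rebuilt from the prefix of one more abundant sequence and the suffix of another. It is a deliberate simplification, not the UCHIME algorithm: it assumes equal-length, gap-free (already trimmed) amplicons and exact segment matches, whereas UCHIME-style tools score alignments. The parameters `abskew` (minimum parent-to-candidate abundance ratio) and `min_diffs` (minimum informative differences per segment) loosely echo UCHIME options, but their semantics here are hypothetical.

```python
# Hypothetical, simplified bimera check; not VSEARCH/UCHIME code.
def common_prefix_len(x, y):
    """Number of leading positions at which x and y agree."""
    n = 0
    for a, b in zip(x, y):
        if a != b:
            break
        n += 1
    return n


def find_bimera(query, abundances, abskew=2.0, min_diffs=3):
    """Return (left_parent, right_parent, k) if query == left[:k] + right[k:],
    else None. abundances: {sequence: reads}, must include `query`."""
    size = len(query)
    min_parent = abskew * abundances[query]
    parents = [s for s, n in abundances.items()
               if s != query and len(s) == size and n >= min_parent]
    for left in parents:
        p = common_prefix_len(query, left)
        for right in parents:
            if right == left:
                continue
            s = common_prefix_len(query[::-1], right[::-1])
            # Any breakpoint k with size - s <= k <= p reproduces the query
            for k in range(max(1, size - s), min(p, size - 1) + 1):
                # Each segment must differ from the *other* parent enough
                # to be informative about which parent it came from
                left_vs_right = sum(a != b for a, b in zip(query[:k], right[:k]))
                right_vs_left = sum(a != b for a, b in zip(query[k:], left[k:]))
                if left_vs_right >= min_diffs and right_vs_left >= min_diffs:
                    return left, right, k
    return None


reads = {"AAAAACCCCC": 100, "GGGGGTTTTT": 100,
         "AAAAATTTTT": 10, "AAAAACCCCT": 10}
print(find_bimera("AAAAATTTTT", reads))  # ('AAAAACCCCC', 'GGGGGTTTTT', 5)
print(find_bimera("AAAAACCCCT", reads))  # None
```

The second candidate differs from one parent by a single terminal base, so it is treated as a point variant rather than a chimera; the `min_diffs` requirement is what prevents that false call.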

3.2. Experimental Validation of Suspect Sequences

- Protocol: Tag-Tagged, Blunt-End Ligation PCR (TTBL-PCR)

- Purpose: To empirically confirm the biological existence of a low-abundance or suspect ASV flagged as a potential chimera or contaminant.

- Workflow:

- Primer Design: Design nested, ASV-specific primers targeting the internal region of the suspect sequence.

- Primary PCR: Perform PCR on the original environmental DNA extract using the ASV-specific primers. Use a high-fidelity, low-error polymerase. Purify the product.

- Blunt-Ending & Phosphorylation: Treat the purified PCR product with a blunt-end enzyme (e.g., T4 DNA Polymerase) and T4 Polynucleotide Kinase.

- Ligation: Ligate the blunt-ended product into a linearized, blunt-ended cloning vector.

- Transformation & Colony Screening: Transform competent E. coli, plate, and pick colonies. Screen colonies by PCR with vector-specific and ASV-specific primers.

- Sanger Sequencing: Sequence positive clones from multiple independent colonies. Compare to the original suspect ASV. Consistent recovery of the identical sequence supports biological origin, while non-recovery or recovery of varied sequences supports artifactual origin.

4. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Validation Workflows

| Reagent / Material | Function in Validation | Example Product/Type |
|---|---|---|
| High-Fidelity DNA Polymerase | Minimizes PCR errors and de novo chimera formation during re-amplification. | Q5 High-Fidelity, Phusion Plus. |
| Unique Dual Indexed (UDI) Primers | Drastically reduces index hopping false positives via dual index filtering. | IDT for Illumina UDIs. |
| Mock Microbial Community | Positive control for chimera & false positive rates. | ZymoBIOMICS Microbial Community Standard. |
| Minimal DNA/Elution Buffer | Negative control for contamination detection. | 10mM Tris-HCl, pH 8.0; Nuclease-free water. |
| Blunt-End Cloning Vector Kit | Essential for TTBL-PCR validation of single ASVs. | pJET1.2/blunt Cloning Kit. |
| PCR Decontamination Reagent | Destroys carryover contaminant amplicons. | Uracil-DNA Glycosylase (UDG) or dsDNA Denaturant. |

5. Visualizing the Validation Workflow within VTAM

Diagram 1: VTAM Validation Module for Artifact Detection

Diagram 2: TTBL-PCR Workflow for Empirical ASV Validation

6. Conclusion

The integrity of any hypothesis generated from metabarcoding data within the VTAM pipeline is contingent upon the rigorous exclusion of false positives and chimeras. These artifacts are not mere noise but systematic errors that demand dedicated modules for in silico detection and, for critical findings, empirical wet-lab validation. The integration of the protocols and quality controls outlined herein is not optional but a foundational requirement for producing actionable, reliable data for downstream research, including targeted drug discovery in complex microbiomes.

The Validation and Taxonomic Assignment Module (VTAM) pipeline is a dedicated bioinformatics workflow designed to curate and validate amplicon sequence variant (ASV) data from metabarcoding studies, with a particular emphasis on detecting and controlling for laboratory and reagent contamination. This process is critical for research in microbial ecology, pathogen discovery, and drug development, where false positives from contamination can severely distort results and downstream analyses. The Core VTAM Algorithm and its Heuristic Filtering Process constitute the analytical engine of this pipeline, implementing a series of rule-based filters to distinguish genuine biological signals from artefactual noise. This whitepaper provides an in-depth technical guide to the logic, methodologies, and implementation of this core filtering process.

The Core VTAM Heuristic Filtering Algorithm: A Stepwise Deconstruction

The heuristic filter processes ASV tables through a cascade of user-defined criteria. Each step removes ASVs that are more likely to be artefacts (e.g., PCR/sequencing errors, cross-sample contamination, or reagent-borne DNA) than true biological sequences.

Key Filtering Steps and Their Rationale

The algorithm typically applies the following filters in sequence:

- Replicate Filter: An ASV must be present in at least n out of N PCR replicates for a given sample. This filter targets stochastic PCR errors and index hopping (tag jumping).

- Control Filter: An ASV that appears in negative control samples (extraction or PCR blanks) above a defined threshold is removed from all samples. This is the primary defense against reagent and laboratory contamination.

- Variant Filter: Also known as the "expected genotype" filter. For a given marker and species, it assumes a fixed number of true biological variants (e.g., one or two alleles per diploid locus). It retains only the most abundant k ASVs for a species-sample combination, removing rare variants presumed to be PCR errors.

- Read Count Filter: Applies a minimum read count threshold (e.g., 10 reads) for an ASV to be retained in a sample, filtering out very low-abundance noise.
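The variant ("expected genotype") filter can be sketched as a top-k selection within each sample and species. This assumes species labels are already available from taxonomic assignment; the tuple layout and the `variant_filter` name are hypothetical, and ties are broken by ASV identifier purely for reproducibility.

```python
from collections import defaultdict


# Hypothetical helper for illustration; not part of VTAM's API.
def variant_filter(records, k=2):
    """Keep the k most abundant ASVs for each (sample, species) pair.

    records: iterable of (sample, species, asv, reads) tuples, where
    `species` comes from a prior taxonomic assignment.
    """
    groups = defaultdict(list)
    for sample, species, asv, reads in records:
        groups[(sample, species)].append((reads, asv))
    kept = []
    for (sample, species), variants in groups.items():
        # Most abundant first; ties broken by ASV id
        variants.sort(key=lambda item: (-item[0], item[1]))
        kept.extend((sample, species, asv, reads) for reads, asv in variants[:k])
    return kept


records = [
    ("S1", "species_A", "asv1", 500),
    ("S1", "species_A", "asv2", 480),  # second allele at a diploid locus
    ("S1", "species_A", "asv3", 12),   # rare variant, presumed PCR error
    ("S1", "species_B", "asv4", 300),
]
print(variant_filter(records, k=2))
```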

Algorithmic Workflow Diagram

Diagram Title: VTAM Heuristic Filtering Sequential Workflow

Quantitative Filtering Outcomes: A Synthetic Data Example

Table 1: Example Impact of Sequential VTAM Filtering on ASV Counts (Synthetic Dataset)

| Filtering Step | Total ASVs Remaining | ASVs Removed in Step | % of Original Remaining |
|---|---|---|---|
| Raw Input | 15,250 | - | 100% |
| After Replicate Filter (n=2/3) | 8,941 | 6,309 | 58.6% |
| After Control Filter | 7,205 | 1,736 | 47.2% |
| After Variant Filter (k=2) | 3,112 | 4,093 | 20.4% |
| After Read Count Filter (≥10 reads) | 2,850 | 262 | 18.7% |

Table 2: Common VTAM Filter Parameters and Their Typical Ranges

| Filter | Key Parameter | Typical Range | Primary Target Artefact |
|---|---|---|---|
| Replicate | Minimum Replicates (n) | 2 out of 3, or 3 out of 4 | PCR stochastic error, index hopping |
| Control | Max Count in Negative | 0, 5, or 10 reads | Reagent/lab contamination |
| Variant | Variants per Sample (k) | 1 (haploid) or 2 (diploid) | PCR point errors, PCR chimeras |
| Read Count | Minimum Threshold | 5, 10, or 20 reads | Sequencing errors, low-level bleed-through |

Experimental Protocols Supporting the Heuristic Approach

The design and validation of VTAM's filters are grounded in controlled experimental methodologies.

Protocol for Determining Control Filter Threshold

Objective: Empirically establish the maximum read count permissible in negative controls. Method:

- Experimental Setup: Include a minimum of three extraction blanks and three PCR no-template controls (NTCs) in every sequencing run.

- Sequencing & Bioinformatic Processing: Process controls alongside samples through the same pipeline (demultiplexing, primer removal, ASV inference).

- Data Collection: Record the read count for every ASV detected in each control.

- Statistical Analysis: For each ASV, calculate the mean and standard deviation of its count across all controls. A common threshold is the largest value of (mean + 3 standard deviations) among the bona fide contaminant ASVs (e.g., ASVs known to derive from reagent DNA).

- Application: Any ASV with a count exceeding this threshold in any control sample is tagged for removal by the Control Filter.
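The threshold calculation in this protocol can be sketched with Python's `statistics` module. Two choices here are assumptions: the sample standard deviation (n − 1 denominator) is used, and the list of bona fide contaminant ASVs is supplied by the analyst. Both function names are hypothetical.

```python
from statistics import mean, stdev


# Hypothetical helpers for illustration; not part of VTAM's API.
def control_threshold(control_counts, contaminant_ids):
    """Largest (mean + 3 * SD) across known contaminant ASVs.

    control_counts: {asv: [reads in control 1, control 2, ...]}; at least
    two controls per ASV are needed for the sample standard deviation.
    """
    return max(mean(control_counts[a]) + 3 * stdev(control_counts[a])
               for a in contaminant_ids)


def tag_for_removal(control_counts, threshold):
    """ASVs whose count exceeds the threshold in any control sample."""
    return {asv for asv, counts in control_counts.items()
            if max(counts) > threshold}


# Three extraction blanks + three NTCs, as the protocol recommends
control_counts = {
    "reagent_asv": [2, 4, 3, 5, 4, 6],
    "asv_x": [0, 0, 0, 0, 0, 40],
    "asv_y": [0, 1, 0, 0, 0, 0],
}
threshold = control_threshold(control_counts, ["reagent_asv"])
print(round(threshold, 2), tag_for_removal(control_counts, threshold))  # 8.24 {'asv_x'}
```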

Protocol for Validating the Variant Filter (Expected Genotype)

Objective: Confirm that the assumed number of true variants (k) per marker/species is biologically accurate. Method:

- Reference Material Analysis: Sequence a mock community comprising known species with validated, Sanger-sequenced genotypes for the target marker.

- VTAM Processing: Run the mock community data through VTAM, applying the Variant Filter with different k values (e.g., k=1, 2, 3).

- Performance Assessment: Calculate precision and recall. The optimal k maximizes the recovery of all and only the expected genuine variants from the mock community.

- Cross-Validation: Apply the optimized k to a subset of real biological samples and perform Sanger sequencing on PCR products from selected samples to confirm the VTAM output.
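Precision and recall for each candidate k can be computed directly from sets of variant identifiers. Choosing k by the highest F1 score (the harmonic mean of precision and recall) is one way to formalize "all and only the expected variants" and is an assumption here, as are the example variant sets and helper names.

```python
# Hypothetical helpers for illustration; not part of VTAM's API.
def precision_recall(recovered, expected):
    """Precision and recall of recovered variants vs. known mock genotypes."""
    true_pos = len(recovered & expected)
    precision = true_pos / len(recovered) if recovered else 0.0
    recall = true_pos / len(expected) if expected else 0.0
    return precision, recall


def best_k(outputs_by_k, expected):
    """Pick the k whose output maximises F1; ties go to the smallest k."""
    def f1(k):
        p, r = precision_recall(outputs_by_k[k], expected)
        return 2 * p * r / (p + r) if p + r else 0.0
    return max(sorted(outputs_by_k), key=f1)


expected = {"v1", "v2", "v3"}  # Sanger-validated mock genotypes
outputs_by_k = {
    1: {"v1", "v2"},                    # misses a true allele
    2: {"v1", "v2", "v3"},              # all and only the expected variants
    3: {"v1", "v2", "v3", "e1", "e2"},  # admits PCR-error variants
}
for k in sorted(outputs_by_k):
    print(k, precision_recall(outputs_by_k[k], expected))
print("best k:", best_k(outputs_by_k, expected))  # best k: 2
```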

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for a VTAM-Supported Metabarcoding Study

| Item | Function in VTAM Context | Critical Consideration |
|---|---|---|
| Ultra-Pure Water (PCR-grade) | Solvent for all molecular biology reagents. | A common source of bacterial/archaeal DNA contamination; must be monitored via NTCs. |
| DNA Extraction Kit (e.g., MoBio PowerSoil) | Isolates DNA from complex samples. | Kit reagents themselves often contain microbial DNA; extraction blanks are non-negotiable. |
| Polymerase (e.g., HotStart Taq) | Enzymatic amplification of target barcode. | Enzyme fidelity influences error rate; enzyme storage buffer can be contaminated. |
| Synthetic DNA Mock Community | Validates Variant Filter parameter k and overall pipeline accuracy. | Must include known genotype sequences for your specific marker gene. |
| Uniquely Tagged Primers (Dual-Indexing) | Allows sample multiplexing and specific assignment of reads. | Reduces, but does not eliminate, index hopping; enables replicate filtering. |
| Magnetic Bead Clean-up Kits | Purifies PCR products before sequencing. | Size-selection can bias variant representation; consistent protocol is vital. |

Logical Relationships in Filter Decision-Making

The algorithm's decision for each ASV is based on conditional logic across sample and control data.

Diagram Title: Decision Tree for VTAM Heuristic Filtering of a Single ASV
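The decision tree can be expressed as a short chain of conditionals that reports which filter, if any, rejects an ASV in one sample. The default thresholds are taken from the typical ranges in Table 2; applying the read-count threshold to reads summed over replicates, and the `classify_asv` name, are assumptions.

```python
# Hypothetical helper for illustration; not part of VTAM's API.
def classify_asv(replicate_reads, control_reads, rank_in_species,
                 min_replicates=2, max_control_reads=0, k=2, min_reads=10):
    """Walk one ASV in one sample through the four filters in order and
    return the first filter that rejects it, or "kept".

    replicate_reads: reads in each PCR replicate of the sample
    control_reads:   reads in each negative control
    rank_in_species: 1 = most abundant ASV of its species in this sample
    """
    if sum(r > 0 for r in replicate_reads) < min_replicates:
        return "removed: replicate filter"
    if max(control_reads, default=0) > max_control_reads:
        return "removed: control filter"
    if rank_in_species > k:
        return "removed: variant filter"
    if sum(replicate_reads) < min_reads:
        return "removed: read count filter"
    return "kept"


print(classify_asv([5, 0, 0], [0], 1))     # removed: replicate filter
print(classify_asv([50, 40, 30], [3], 1))  # removed: control filter
print(classify_asv([50, 40, 30], [0], 3))  # removed: variant filter
print(classify_asv([3, 2, 0], [0], 1))     # removed: read count filter
print(classify_asv([50, 40, 30], [0], 1))  # kept
```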

Within the broader research thesis on the Validation of Taxonomic Assignments in Metabarcoding (VTAM) pipeline, the accurate curation and understanding of input files are foundational. The VTAM pipeline is designed to rigorously control false positives and validate Amplicon Sequence Variants (ASVs) in metabarcoding studies, which are critical for applications in microbial ecology, biomarker discovery, and drug development. The pipeline's efficacy is contingent upon three core input files: raw sequencing data (FASTQ), a feature table (ASV Table), and taxonomic assignments. This guide provides an in-depth technical examination of these required files, their generation, and their role in producing validated, high-confidence outputs for downstream analysis.

Core Input Files: Specifications and Generation

FASTQ Files: Raw Sequencing Data

FASTQ is the standard text-based format for storing both a biological sequence (typically nucleotide) and its corresponding quality scores. It is the primary output from high-throughput sequencing platforms like Illumina.

File Structure: Each read is represented by four lines:

- Sequence Identifier (begins with `@`): Contains instrument and run data.

- The raw sequence letters (A, T, C, G, N).

- Separator line (often just a `+`).

- Quality scores: Encoded as Phred+33, where each character's ASCII code minus 33 gives the Phred score Q, and the probability of an incorrect base call is 10^(-Q/10).
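A minimal FASTQ reader illustrates the four-line structure and Phred+33 decoding. It assumes unwrapped records (one sequence line and one quality line per read), the usual layout of Illumina output; `read_fastq` is a hypothetical helper, and production code would typically rely on an established parser.

```python
import io


# Hypothetical helper for illustration.
def read_fastq(handle):
    """Yield (identifier, sequence, phred_scores) from a 4-line FASTQ stream
    with Phred+33 quality encoding."""
    while True:
        header = handle.readline()
        if not header:
            return  # end of file
        sequence = handle.readline().rstrip("\r\n")
        separator = handle.readline()
        quality = handle.readline().rstrip("\r\n")
        if not header.startswith("@") or not separator.startswith("+"):
            raise ValueError(f"malformed record near {header!r}")
        if len(sequence) != len(quality):
            raise ValueError("sequence and quality lengths differ")
        # ASCII code minus 33 gives the Phred score Q
        yield header[1:].rstrip("\r\n"), sequence, [ord(c) - 33 for c in quality]


demo = io.StringIO("@read1 1:N:0:1\nACGT\n+\nI5?+\n")
for record in read_fastq(demo):
    print(record)  # ('read1 1:N:0:1', 'ACGT', [40, 20, 30, 10])
```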

Generation Protocol: FASTQ files are generated directly by the sequencing instrument's base-calling software (e.g., Illumina's bcl2fastq or DRAGEN). A typical paired-end metabarcoding run will produce two FASTQ files per sample (*_R1.fastq and *_R2.fastq).

Table 1: Common FASTQ Quality Score Encoding

| Phred Quality Score (Q) | Probability of Incorrect Base Call | Typical ASCII Character (Sanger/Illumina 1.8+) |
|---|---|---|
| 10 | 1 in 10 | + |
| 20 | 1 in 100 | 5 |
| 30 | 1 in 1000 | ? |
| 40 | 1 in 10,000 | I |
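The table values follow from P = 10^(-Q/10) and the +33 ASCII offset. Summing per-base error probabilities gives a read's expected number of errors, the quantity DADA2's `filterAndTrim()` compares against its `maxEE` argument. The helper names below are hypothetical.

```python
# Hypothetical helpers for illustration.
def phred_to_error(q):
    """Error probability for a Phred score: P = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


def expected_errors(quality_string, offset=33):
    """Sum of per-base error probabilities for one read (Phred+33)."""
    return sum(phred_to_error(ord(c) - offset) for c in quality_string)


for q in (10, 20, 30, 40):
    print(q, chr(q + 33), phred_to_error(q))
print(expected_errors("+5?I"))  # approximately 0.1111
```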

ASV Table: The Feature Table

The ASV (Amplicon Sequence Variant) table is a matrix where rows represent unique ASVs (biological features), columns represent samples, and values represent the read count (abundance) of each ASV in each sample.

File Format: Commonly stored as a tab-separated values (.tsv) file or in the Biological Observation Matrix (.biom) format, which is more efficient for large datasets.

Generation Protocol (Typical DADA2 Workflow):

- Filter and Trim: Use `filterAndTrim()` in DADA2 to remove low-quality bases and reads.

- Learn Error Rates: Model the error profile of the dataset with `learnErrors()`.

- Dereplication: Combine identical reads to reduce computation with `derepFastq()`.

- Sample Inference: Apply the core sample inference algorithm with `dada()` to resolve true biological sequences.

- Merge Paired Reads: For paired-end data, merge forward and reverse reads with `mergePairs()`.

- Construct Sequence Table: Build the ASV table with `makeSequenceTable()`. This table is then typically filtered to remove chimeras using `removeBimeraDenovo()`. Note that `makeSequenceTable()` returns samples as rows and ASVs as columns, the transpose of the layout described above.

Table 2: Example ASV Table Snippet

| ASV_ID (Sequence Hash) | Sample_1 | Sample_2 | Sample_3 |
|---|---|---|---|
| ASV_001 (AACTG...) | 1502 | 890 | 0 |
| ASV_002 (TCAGA...) | 0 | 423 | 1201 |
| ASV_003 (GGCTA...) | 65 | 77 | 98 |
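For intuition, the sketch below builds an ASV-by-sample count table from reads that have already been quality-filtered, using exact-sequence dereplication and MD5 hashes of the sequences as feature IDs. It does not perform error-model-based inference as DADA2's `dada()` does, so it only illustrates the table layout; the function names are hypothetical.

```python
import hashlib
from collections import Counter


# Hypothetical helpers: exact-sequence dereplication, no error model.
def build_asv_table(reads_by_sample):
    """reads_by_sample: {sample: [sequence, ...]} (already quality-filtered).
    Returns {md5_id: {"sequence": seq, sample: count, ...}}."""
    per_sample = {s: Counter(seqs) for s, seqs in reads_by_sample.items()}
    sequences = sorted({seq for counts in per_sample.values() for seq in counts})
    table = {}
    for seq in sequences:
        asv_id = hashlib.md5(seq.encode("ascii")).hexdigest()
        row = {"sequence": seq}
        for sample, counts in per_sample.items():
            row[sample] = counts[seq]  # Counter returns 0 for absent keys
        table[asv_id] = row
    return table


def to_tsv(table, samples):
    """Render the table as tab-separated text, one row per ASV."""
    lines = ["\t".join(["ASV_ID", "sequence", *samples])]
    for asv_id, row in table.items():
        lines.append("\t".join([asv_id, row["sequence"],
                                *(str(row[s]) for s in samples)]))
    return "\n".join(lines)


reads = {
    "Sample_1": ["AACTG", "AACTG", "TCAGA"],
    "Sample_2": ["TCAGA"],
}
asv_table = build_asv_table(reads)
print(to_tsv(asv_table, list(reads)))
```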

Taxonomy Table: Taxonomic Assignments

This file maps each ASV from the ASV table to a predicted taxonomic lineage (e.g., Kingdom, Phylum, Class, Order, Family, Genus, Species).

File Format: A tab-separated file where the first column matches the ASV_ID/sequence from the ASV table, followed by columns for each taxonomic rank and often a confidence score.

Generation Protocol (Using a Classifier):

- Classifier Training: A reference database (e.g., SILVA, UNITE, Greengenes) is used to train a naïve Bayes classifier. This is often done offline, with tools such as `RESCRIPt` used to curate the reference data.

- Assignment: The ASV sequences are assigned taxonomy using the pre-trained classifier. In QIIME 2, the `classify-sklearn` command is used. In DADA2/R, the `assignTaxonomy()` function performs this task.

- Species-Level Assignment (Optional): An additional step with `assignSpecies()` can attempt exact matching to reference species.

Table 3: Example Taxonomy Assignment Table

| ASV_ID | Kingdom | Phylum | Class | Order | Family | Genus | Species | Confidence |
|---|---|---|---|---|---|---|---|---|
| ASV_001 | Bacteria | Bacteroidota | Bacteroidia | Bacteroidales | Prevotellaceae | Prevotella | NA | 0.98 |
| ASV_002 | Bacteria | Firmicutes | Clostridia | Oscillospirales | Ruminococcaceae | Faecalibacterium | prausnitzii | 0.99 |
| ASV_003 | Archaea | Euryarchaeota | Methanobacteria | Methanobacteriales | Methanobacteriaceae | Methanobrevibacter | NA | 0.96 |

Integration and Validation within the VTAM Pipeline

The VTAM pipeline uses these three inputs to perform validation steps that are absent from standard pipelines. Its core function is to apply a set of user-defined filters (e.g., based on negative control occurrence, replication rate, and taxonomic assignment) to remove likely false-positive ASVs.

VTAM Workflow Protocol:

- Input Merging: Combine the ASV table and Taxonomy table into a single annotated ASV list.

- Filter Application:

- Negative Control Filter: Remove ASVs present in negative controls above a defined threshold (e.g., >0.1% of total reads in controls).

- Replication Filter: Require an ASV to be present in a minimum number of PCR replicates per sample.

- Taxonomic Filter: Exclude ASVs assigned to unwanted taxa (e.g., chloroplasts, mitochondria) or with low-confidence assignments.

- Output Generation: Produce a validated ASV table and taxonomy file, ready for downstream ecological analysis (alpha/beta diversity, differential abundance).
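The merge-then-filter protocol can be sketched as follows. Two points are assumptions: the ">0.1% of total reads in controls" criterion is interpreted as an ASV's share of all negative-control reads, and the taxonomy record format (a lineage tuple plus a confidence score) is hypothetical, as is the `validate_asvs` name. The replication filter is omitted here for brevity.

```python
# Hypothetical helper for illustration; not part of VTAM's API.
def validate_asvs(counts, taxonomy, control_ids, max_control_fraction=0.001,
                  min_confidence=0.8,
                  excluded_taxa=("Chloroplast", "Mitochondria")):
    """Merge counts with taxonomy, then apply control and taxonomic filters.

    counts:   {asv: {sample: reads}}, including negative-control columns
    taxonomy: {asv: {"lineage": (rank names...), "confidence": float}}
    """
    total_control = sum(row.get(c, 0) for row in counts.values()
                        for c in control_ids)
    validated = {}
    for asv, row in counts.items():
        tax = taxonomy.get(asv)
        if tax is None:
            continue  # unassigned ASVs are dropped in this sketch
        control_reads = sum(row.get(c, 0) for c in control_ids)
        if total_control and control_reads / total_control > max_control_fraction:
            continue  # negative control filter
        if tax["confidence"] < min_confidence:
            continue  # low-confidence assignment
        if any(name in excluded_taxa for name in tax["lineage"]):
            continue  # unwanted lineage (organelles)
        validated[asv] = {
            "counts": {s: n for s, n in row.items() if s not in control_ids},
            **tax,
        }
    return validated


counts = {
    "asv_a": {"S1": 1502, "S2": 890, "NC1": 0},
    "asv_b": {"S1": 0, "S2": 423, "NC1": 37},  # also found in the blank
    "asv_c": {"S1": 65, "S2": 77, "NC1": 0},
    "asv_d": {"S1": 310, "S2": 12, "NC1": 0},
}
taxonomy = {
    "asv_a": {"lineage": ("Bacteria", "Bacteroidota", "Prevotella"), "confidence": 0.98},
    "asv_b": {"lineage": ("Bacteria", "Firmicutes", "Faecalibacterium"), "confidence": 0.99},
    "asv_c": {"lineage": ("Archaea", "Euryarchaeota"), "confidence": 0.55},
    "asv_d": {"lineage": ("Bacteria", "Cyanobacteria", "Chloroplast"), "confidence": 0.97},
}
validated = validate_asvs(counts, taxonomy, {"NC1"})
print(sorted(validated))             # ['asv_a']
print(validated["asv_a"]["counts"])  # {'S1': 1502, 'S2': 890}
```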

Title: VTAM Pipeline Input & Validation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Materials for Metabarcoding Workflow

| Item/Category | Function & Rationale |
|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Critical for minimizing PCR amplification errors during library preparation, which reduces noise and improves ASV accuracy. |
| Validated Primer Sets (e.g., 16S V4, ITS2) | Target-specific oligonucleotides that define the amplified region. Must be selected for taxonomic resolution and minimal bias. |
| Magnetic Bead Cleanup Kits (e.g., AMPure XP) | For size selection and purification of PCR products, removing primer dimers and contaminants to ensure clean sequencing libraries. |
| Quantification Kits (e.g., Qubit dsDNA HS Assay) | Fluorometric quantification is essential for accurate pooling of libraries, as it is specific to double-stranded DNA unlike spectrophotometry. |
| PhiX Control v3 (Illumina) | Added to sequencing runs (1-5%) for quality control, error rate estimation, and balancing diversity on Illumina flow cells. |
| Negative Control Reagents (Nuclease-Free Water) | Used in extraction and PCR blanks to detect laboratory or reagent contamination, a vital input for the VTAM negative control filter. |
| Reference Databases (SILVA, UNITE, Greengenes) | Curated sets of reference sequences with taxonomy for training classifiers. Choice depends on marker gene and study domain. |
| Mock Microbial Community DNA (e.g., ZymoBIOMICS) | Contains known proportions of microbial strains. Used as a positive control to assess accuracy, precision, and bias of the entire wet-lab to bioinformatic pipeline. |

Within the rapidly evolving field of metabarcoding, data validation remains a critical bottleneck. False positives from contamination and index switching, alongside false negatives from amplification bias, can significantly skew ecological and biomedical conclusions. This document frames the VTAM (Validation of Taxonomic Assignments in Metabarcoding) pipeline within a broader thesis on rigorous, reproducible validation of metabarcoding data, establishing its specific niche and rationale for researchers, scientists, and drug development professionals.

The Validation Challenge: Quantifying the Problem

Metabarcoding pipelines involve sequential steps, each introducing potential error. The following table summarizes key sources of error and their typical estimated impact on data integrity, based on recent literature.

Table 1: Major Sources of Error in Metabarcoding Data Generation

| Error Source | Stage | Typical Impact Range (Estimated) | Consequence |
|---|---|---|---|
| Tag Jumping / Index Switching | Library Prep | 0.5% - 2.5% of reads per sample | False positives, cross-contamination |
| Environmental/Kit Contamination | Sample Collection to PCR | Variable; can dominate low-biomass samples | False positives, obscured signal |
| PCR Amplification Bias | Amplification | Up to 1000-fold variation in taxa amplification | False negatives, distorted abundance |
| Chimera Formation | Amplification | 5% - 20% of reads in some assays | Artificial, novel sequences |
| Database Misannotation | Bioinformatics | Dependent on reference database quality | Taxonomic misassignment |

VTAM's Niche: A Filter-First Philosophy

While many post-sequencing bioinformatics tools (e.g., DADA2, QIIME2, MOTHUR) focus on generating Amplicon Sequence Variants (ASVs) or Operational Taxonomic Units (OTUs) from all sequenced reads, VTAM occupies a distinct niche by implementing a filter-first methodology. Its core rationale is to rigorously filter out non-reliable sequences before the ASV-calling step, based on user-defined negative and positive control samples integrated directly into the experimental design.

Core Differentiators:

- Control-Driven: Explicitly uses control samples to define filtering thresholds.

- Replication-Aware: Requires variants to be present in multiple PCR replicates to pass filtering.

- Pipeline-Agnostic: Designed to output a filtered read set compatible with any downstream ASV/OTU pipeline.

VTAM's Operational Rationale: Detailed Methodologies

VTAM's workflow is built around specific experimental protocols. Below are the detailed methodologies for the key experiments that VTAM is designed to validate.

Protocol 1: Design and Inclusion of Control Samples

- Negative Controls: For each batch of DNA extraction, include a "blank" sample containing no biological material but subjected to the same reagents and procedures. For each batch of PCR amplification, include a "no-template" control (NTC) using PCR-grade water.

- Positive Controls: Spike a known, non-native biological specimen (e.g., a foreign fish species in soil samples) at a known concentration into select extraction blanks. This control assesses the detection limit and cross-contamination.

- PCR Replication: For each biological sample, perform a minimum of three independent PCR amplifications from the same extracted DNA. These are crucial for VTAM's replication filter.

Protocol 2: VTAM Analysis Workflow

- Input Preparation: Demultiplexed FASTQ files are assigned to one of three categories: `sample`, `negative_control`, or `positive_control`.

- Initial Variant Calling: Use a standard tool (VTAM wraps VSEARCH) to identify molecular variants from all files.

- Filter Steps:

  - Control Filter: Remove any variant present in ≥ n negative control samples (user-defined n, often 1).

  - Replicate Filter: Retain only variants present in ≥ m PCR replicates (user-defined m, typically 2 or 3) for a given biological sample.

  - Positive Control Filter (Optional): Ensure variants from the spiked-in positive control are correctly retained, validating sensitivity.

- Output: A high-confidence set of variants and read counts for each biological sample, ready for taxonomic assignment and ecological analysis.
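The control filter and the optional positive-control check in Protocol 2 can be sketched in a few lines of Python. This is a minimal illustration, not VTAM's implementation: the input layout (a dict mapping each variant to per-sample read counts), the sample names, and the helper names are hypothetical, and a variant is treated as "present" in a sample whenever its read count is above zero.

```python
from typing import Dict, Set

# Hypothetical layout: variant sequence -> {sample name: read count}
Counts = Dict[str, Dict[str, int]]


def control_filter(counts: Counts, negative_controls: Set[str], n: int = 1) -> Counts:
    """Drop every variant with reads (> 0) in at least n negative-control samples."""
    kept: Counts = {}
    for variant, per_sample in counts.items():
        hits = sum(1 for s in negative_controls if per_sample.get(s, 0) > 0)
        if hits < n:
            kept[variant] = per_sample
    return kept


def positive_control_recovered(counts: Counts, spike_variants: Set[str], positive_control: str) -> bool:
    """Sensitivity check: every expected spike-in variant must still be present in the positive control."""
    return all(counts.get(v, {}).get(positive_control, 0) > 0 for v in spike_variants)


raw: Counts = {
    "ACGT": {"soil_1": 120, "NTC_1": 0, "POS_1": 0},
    "TTGA": {"soil_1": 15, "NTC_1": 4, "POS_1": 0},   # also seen in the no-template control
    "GGCC": {"soil_1": 0, "NTC_1": 0, "POS_1": 900},  # the spiked-in positive-control variant
}
filtered = control_filter(raw, negative_controls={"NTC_1"}, n=1)
print(sorted(filtered))                                         # ['ACGT', 'GGCC']
print(positive_control_recovered(filtered, {"GGCC"}, "POS_1"))  # True
```

Raising `n` makes the control filter more permissive: with `n=2`, a variant seen in a single blank would survive.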

Visualizing the VTAM Ecosystem

Diagram 1: VTAM's Position in the Metabarcoding Pipeline

Diagram 2: VTAM's Internal Filtering Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for VTAM-Supported Experiments

| Item | Function in VTAM Context | Example Product / Specification |
|---|---|---|
| PCR-Grade Water | Serves as the template for No-Template Controls (NTCs), critical for detecting reagent/lab-borne contamination. | Nuclease-Free, DNA/RNA-Free Water (e.g., ThermoFisher, Sigma). |
| Magnetic Bead Cleanup Kits | For consistent purification of PCR products pre-sequencing, reducing variability between replicates. | AMPure XP Beads (Beckman Coulter) or equivalent. |
| Unique Dual Index (UDI) Kits | Minimizes index-hopping artifacts. VTAM can filter remaining cross-talk via control filters. | Illumina Nextera UDI, IDT for Illumina UDI sets. |
| Synthetic Spike-in DNA | Non-native, quantified DNA used to create positive controls for sensitivity thresholds and pipeline validation. | gBlocks (IDT); mock community standards (e.g., ZymoBIOMICS) as a non-synthetic alternative. |
| High-Fidelity DNA Polymerase | Reduces PCR errors and chimera formation, generating more reliable sequences for VTAM's variant analysis. | Q5 Hot Start (NEB), KAPA HiFi HotStart ReadyMix. |
| Sample Tracking LIMS | Maintains a reliable link between biological samples, their replicates, and control samples in the metadata. | Benchling, Labguru, or in-house solutions. |

VTAM carves its niche in the bioinformatics ecosystem not as a replacement for established ASV callers, but as a specialized, upstream sentinel. Its rationale is rooted in the principle that the most sophisticated downstream analysis cannot recover ground truth from fundamentally compromised data. By enforcing a rigorous, control- and replication-based filtering paradigm, VTAM provides researchers, particularly in drug development where reproducibility is paramount, a formalized method to enhance the reliability of their metabarcoding datasets before biological interpretation begins.

Running VTAM: A Practical Step-by-Step Protocol for Researchers

Within the context of the VTAM (Validation and Taxonomic Assignment of Metabarcoding data) pipeline research, establishing a robust computational environment and preparing high-quality input data are foundational steps. The VTAM pipeline is designed for the rigorous validation of metabarcoding datasets, focusing on filtering out false positives (e.g., tag jumps, PCR and sequencing errors) and ensuring accurate taxonomic assignments for applications in biomonitoring, pathogen detection, and drug discovery research. This guide details the essential prerequisites for executing VTAM analyses effectively.

Software Dependencies

The VTAM pipeline is a Snakemake-based workflow that integrates several specialized tools. Installation is streamlined via Conda environments.

Table 1: Core Software Dependencies for VTAM

| Software/Tool | Version (Minimum) | Role in VTAM Pipeline | Installation Method |
|---|---|---|---|
| Snakemake | 5.10.0 | Workflow management and execution. | `conda install -c bioconda snakemake` |
| VTAM | 2.0.0+ | Core pipeline for validation and filtering. | `conda install -c bioconda vtam` |
| Cutadapt | 3.2 | Primer trimming and read quality control. | Included with VTAM environment. |
| VSEARCH | 2.15.0 | Dereplication, clustering, and chimera detection. | Included with VTAM environment. |
| MySQL / MariaDB | 10.3+ | Database for storing run information, variants, and filters. | System package or conda install. |
| Python | 3.7+ | Core programming language for pipeline scripts. | Included with Conda environment. |
| Pandas | 1.2.0+ | Data manipulation within Python scripts. | Included with VTAM environment. |

Experimental Protocol 1: Conda Environment Setup

- Install Miniconda or Anaconda on your system.

- Create and activate a new Conda environment for VTAM:

- Verify installation by running `vtam --help`.

- Start the MySQL service and initialize the VTAM database:
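Before running any analysis, it can help to confirm that the command-line tools from Table 1 are actually reachable from the active environment. The Python sketch below does only that; the executable names are assumptions based on Table 1, and the database service is not checked because it is not a command on `PATH`.

```python
import shutil
from typing import Dict, Iterable, Optional

# Assumed executable names for the tools in Table 1; MySQL/MariaDB is a service and is not checked here.
REQUIRED_TOOLS = ("vtam", "vsearch", "cutadapt", "snakemake")


def check_tools(tools: Iterable[str] = REQUIRED_TOOLS) -> Dict[str, Optional[str]]:
    """Map each tool name to its resolved path on PATH, or None when it cannot be found."""
    return {tool: shutil.which(tool) for tool in tools}


def report(status: Dict[str, Optional[str]]) -> None:
    """Print one line per tool: its path, or MISSING."""
    for tool, path in status.items():
        print(f"{tool:<10} {path or 'MISSING'}")


report(check_tools())
```

Run it after activating the Conda environment; any `MISSING` entry usually means the environment is not active or the package was not installed into it.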

Input Data Preparation

Accurate input data is critical. VTAM requires a FastQ file pair (R1 & R2) for each sample, a sample information file, and a marker information file.

Table 2: Required Input Files and Specifications

| File Type | Format | Required Columns/Content | Purpose |
|---|---|---|---|
| Raw Sequencing Data | Paired-end FastQ (.fastq/.fq.gz) | Standard Illumina 1.8+ quality encoding. | Contains the raw metabarcoding reads. |
| Sample Information | Tab-separated values (.tsv) | sample, fastq1, fastq2, replicate, tag_fwd, tag_rev | Maps samples to files, barcodes, and replicates. |
| Marker Information | Tab-separated values (.tsv) | marker, primer_fwd, primer_rev, cutadapt_min_len, cutadapt_max_len, cutadapt_max_ee | Defines marker-specific primers and trimming parameters. |

Experimental Protocol 2: Input File Preparation

- Organize FastQ Files: Ensure filenames are consistent and placed in a dedicated directory (e.g., `./data/fastq`).

- Create the Sample Information File:

  - The `sample` column is a unique identifier. `fastq1` and `fastq2` are paths to the R1 and R2 files. `replicate` denotes technical PCR replicates (e.g., 1, 2, 3). `tag_fwd` and `tag_rev` are the nucleotide sequences of the forward and reverse sample tags (Multiplex Identifiers, or MIDs).

  - Example snippet:

- Create the Marker Information File:

  - Define parameters for Cutadapt.

  - Example for the `COI` marker:
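Malformed metadata files are a common cause of failed runs, so a quick pre-flight check is worthwhile. The following Python sketch uses only the standard `csv` module to write a sample information file and to check headers and duplicated (sample, replicate) pairs. The column names are the ones listed in Table 2; the file paths, tags, and helper names are hypothetical, and VTAM's own input validation may differ.

```python
import csv
import io
from typing import Dict, List, Tuple

# Column names as listed in Table 2; the example row below is hypothetical.
SAMPLE_COLUMNS = ["sample", "fastq1", "fastq2", "replicate", "tag_fwd", "tag_rev"]
MARKER_COLUMNS = ["marker", "primer_fwd", "primer_rev",
                  "cutadapt_min_len", "cutadapt_max_len", "cutadapt_max_ee"]


def write_tsv(rows: List[Dict[str, object]], columns: List[str]) -> str:
    """Serialize rows to tab-separated text with a header line."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=columns, delimiter="\t", lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def missing_columns(tsv_text: str, required: List[str]) -> List[str]:
    """Return the required column names absent from the TSV header."""
    header = next(csv.reader(io.StringIO(tsv_text), delimiter="\t"))
    return [c for c in required if c not in header]


def duplicate_sample_replicates(tsv_text: str) -> List[Tuple[str, str]]:
    """Each (sample, replicate) pair should map to exactly one FastQ pair."""
    seen, dups = set(), []
    for row in csv.DictReader(io.StringIO(tsv_text), delimiter="\t"):
        key = (row["sample"], row["replicate"])
        if key in seen:
            dups.append(key)
        seen.add(key)
    return dups


sample_tsv = write_tsv([
    {"sample": "soil_A", "fastq1": "./data/fastq/soil_A_r1_R1.fastq.gz",
     "fastq2": "./data/fastq/soil_A_r1_R2.fastq.gz", "replicate": 1,
     "tag_fwd": "ACGTACGT", "tag_rev": "TGCATGCA"},
], SAMPLE_COLUMNS)
print(missing_columns(sample_tsv, SAMPLE_COLUMNS))  # []
print(duplicate_sample_replicates(sample_tsv))     # []
```

To check files on disk, pass `open(path).read()` instead of the in-memory string.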

The VTAM Workflow Logic

Key Filtering Pathways in VTAM

VTAM applies sequential filters to eliminate erroneous sequences.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for VTAM Input Preparation

| Item | Function in Metabarcoding for VTAM | Specification Notes |
|---|---|---|
| High-Fidelity DNA Polymerase | PCR amplification of target marker from environmental DNA. Minimizes polymerase-induced errors. | e.g., Q5 Hot Start (NEB) or Phusion Plus (Thermo). Critical for reducing false variants. |
| Dual Indexing Primer Sets | Attaches unique sample barcodes (tags) to amplicons during PCR for multiplexing. | Unique 8-12 bp indices for forward and reverse primers. Essential for tag-jump filter. |
| Magnetic Bead Cleanup Kit | Purification and size-selection of PCR amplicons to remove primer dimers and non-specific products. | e.g., AMPure XP beads (Beckman Coulter). Affects size distribution input to VTAM. |
| Quantification Kit (Fluorometric) | Accurate measurement of amplicon library concentration for equitable pooling. | e.g., Qubit dsDNA HS Assay (Thermo). Prevents sequencing depth bias. |
| Next-Generation Sequencer | Generates paired-end reads of the amplified marker gene region. | Illumina MiSeq or NovaSeq platforms are standard. Outputs the primary FastQ data. |
| Environmental DNA Extraction Kit | Isolates total genomic DNA from complex sample matrices (soil, water, tissue). | Must be optimized for sample type to ensure unbiased lysis and inhibitor removal. |

Within the broader thesis on the Validation and Taxonomic Assignment Module (VTAM) pipeline for validating metabarcoding data, precise configuration is paramount. The config.yml file serves as the central control panel, determining the behavior, stringency, and reproducibility of the entire bioinformatic workflow. This guide provides an in-depth exploration of its key parameters, their quantitative impacts, and their role in ensuring robust research outcomes for drug development and ecological studies.

Core Parameter Sections and Functions

The VTAM config.yml file is organized into logical sections, each governing a specific phase of the validation pipeline.

Input/Output and Run Mode Configuration

This section defines data sources, destinations, and the pipeline's operational scope.

Title: I/O and Run Mode Data Flow

| Parameter Group | Key Parameter | Example Value | Function |
|---|---|---|---|
| Input/Output | `fastq_info_tsv` | "path/to/samples.tsv" | TSV file listing sample IDs and FASTQ paths. |
| | `output_dir` | "vtam_results" | Directory for all pipeline outputs. |
| Run Control | `run` | "filter" or "optimize" | Determines whether the run performs validation (filter) or parameter optimization (optimize). |
| | `loglevel` | "info" or "debug" | Controls verbosity of the log file. |

Filtering and Validation Parameters

These parameters control the core validation steps, directly impacting data stringency and false positive/negative rates.

Title: Sequential Filtering Stages in VTAM

Detailed Protocol for Filter Optimization:

- Objective: Empirically determine the optimal `min_replicate_number` threshold.

- Setup: In `config.yml`, set `run: optimize`. Define a range of values for `min_replicate_number` (e.g., 1 through 4).

- Execution: Run VTAM. The pipeline executes the filtering process iteratively for each parameter value.

- Output Analysis: VTAM generates a plot (`optimize_replicate_number.png`) showing the number of retained variants (ASVs) versus the parameter value. The inflection point (elbow) often indicates the optimal trade-off between data retention and replication stringency.

- Validation: The selected threshold should be validated against known mock community compositions if available.
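Reading the elbow off a plot by eye is subjective. One simple way to make it reproducible is the "maximum distance to chord" heuristic: scale both axes to [0, 1], draw a line from the first to the last point, and pick the threshold farthest from that line. The Python sketch below implements that heuristic; it is a generic knee-finding technique, not something VTAM's `optimize` step is documented to do, and the retained-ASV counts are hypothetical. It assumes the thresholds are sorted in ascending order.

```python
from typing import Sequence


def elbow_threshold(thresholds: Sequence[float], retained: Sequence[float]) -> float:
    """Return the threshold whose point lies farthest from the chord joining the first
    and last points of the (threshold, retained-variant) curve, after min-max scaling."""
    if len(thresholds) < 3:
        raise ValueError("need at least three points to locate an elbow")
    x_lo, x_hi = min(thresholds), max(thresholds)
    y_lo, y_hi = min(retained), max(retained)
    xs = [(x - x_lo) / (x_hi - x_lo) for x in thresholds]
    ys = [(y - y_lo) / (y_hi - y_lo) if y_hi > y_lo else 0.0 for y in retained]
    ax, ay, bx, by = xs[0], ys[0], xs[-1], ys[-1]
    chord = ((bx - ax) ** 2 + (by - ay) ** 2) ** 0.5
    # Perpendicular distance from each point to the line through (ax, ay) and (bx, by)
    dist = [abs((by - ay) * x - (bx - ax) * y + bx * ay - by * ax) / chord for x, y in zip(xs, ys)]
    return thresholds[max(range(len(dist)), key=dist.__getitem__)]


# Hypothetical retained-ASV counts for min_replicate_number = 1..4
print(elbow_threshold([1, 2, 3, 4], [5200, 1400, 1150, 1050]))  # 2
```

The heuristic only proposes a candidate; the mock-community validation step above remains the deciding check.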

| Filtering Parameter | Default | Typical Range (Empirical) | Impact on Data |
|---|---|---|---|
| `min_replicate_number` | 2 | 2 - 4 | Higher values increase technical replication stringency, reducing false positives but potentially losing rare taxa. |
| `min_count_per_variant` | 10 | 5 - 50 | Filters low-abundance reads (potential PCR/sequencing errors). Critical for noise reduction. |
| `max_variant_count` | 100,000 | 10,000 - ∞ | Removes exceedingly abundant variants, potentially contaminants or non-target amplicons. |
| `min_sample_replicate_number` | 1 | 1 - 3 | Requires an ASV to be present in N samples, filtering sporadic cross-contamination. |
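A small sanity check on the parsed configuration can catch typos before a long run. The Python sketch below encodes the allowed values and typical ranges from the two tables above as hypothetical validation rules (they are not a VTAM schema) and returns a list of problems; values outside the typical ranges are flagged rather than rejected, since those ranges are empirical.

```python
from typing import Any, Dict, List

# Hypothetical rules transcribed from the two tables above; this is not a VTAM schema.
ALLOWED_VALUES = {"run": {"filter", "optimize"}, "loglevel": {"info", "debug"}}
REQUIRED_PATHS = ("fastq_info_tsv", "output_dir")
TYPICAL_RANGES = {
    "min_replicate_number": (2, 4),
    "min_count_per_variant": (5, 50),
    "max_variant_count": (10_000, float("inf")),
    "min_sample_replicate_number": (1, 3),
}


def check_config(cfg: Dict[str, Any]) -> List[str]:
    """Return a list of problems; values outside the typical ranges are flagged, not rejected."""
    problems = []
    for key, allowed in ALLOWED_VALUES.items():
        if cfg.get(key) not in allowed:
            problems.append(f"{key}={cfg.get(key)!r} is not one of {sorted(allowed)}")
    for key in REQUIRED_PATHS:
        if not cfg.get(key):
            problems.append(f"{key} is required")
    for key, (lo, hi) in TYPICAL_RANGES.items():
        if key in cfg and not lo <= cfg[key] <= hi:
            problems.append(f"{key}={cfg[key]} is outside the typical range {lo}-{hi}")
    return problems


cfg = {  # in practice, e.g. cfg = yaml.safe_load(open("config.yml")) with PyYAML
    "fastq_info_tsv": "path/to/samples.tsv", "output_dir": "vtam_results",
    "run": "filter", "loglevel": "info",
    "min_replicate_number": 2, "min_count_per_variant": 10,
}
print(check_config(cfg))  # []
```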

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Metabarcoding Validation | Example Product/Catalog |
|---|---|---|
| Mock Community Standard | Provides known composition and abundance of DNA to calibrate pipelines, assess false negative/positive rates, and optimize `config.yml` parameters. | ZymoBIOMICS Microbial Community Standard (D6300) |
| Negative Extraction Control | Identifies laboratory-derived contamination introduced during DNA extraction. Informs the `min_sample_replicate_number` setting. | Nuclease-free water processed alongside samples. |
| Positive PCR Control | Verifies PCR reaction efficacy. | Genomic DNA from a known, pure culture not present in samples. |
| Low-Binding Tubes & Tips | Minimizes DNA adsorption to surfaces, critical for preserving low-biomass samples and accurate `min_count_per_variant` thresholds. | Eppendorf DNA LoBind tubes |
| High-Fidelity DNA Polymerase | Reduces PCR-induced sequence errors, decreasing spurious variant formation and reliance on stringent `min_count_per_variant` filtering. | Q5 High-Fidelity DNA Polymerase (NEB M0491) |
| Size-Selection Beads | For clean-up of amplicon libraries, removing primer dimers that can affect cluster generation and downstream abundance metrics. | AMPure XP beads (Beckman Coulter A63881) |

Advanced: Parameter Interplay in Diagnostic Assay Development

For diagnostic and drug development applications, specificity is critical. Parameters must be tuned to distinguish true pathogens from background noise.

Title: Parameter Tuning for Diagnostic Specificity

Protocol for Diagnostic Threshold Optimization:

- Sample Set: Assemble a validated sample set with known positive (spiked pathogen) and negative (healthy cohort) samples.

- Baseline Run: Execute VTAM with conservative default parameters.

- Metric Calculation: For each parameter setting, calculate sensitivity (true positive rate) and specificity (true negative rate) against the known sample labels.

- ROC Analysis: For key parameters (e.g., `min_count_per_variant`), run VTAM across a swept range of values. Plot the Receiver Operating Characteristic (ROC) curve and select the threshold that best balances sensitivity and specificity for the target application (for example, by maximizing Youden's J = sensitivity + specificity - 1).

- Lock Configuration: The final, validated `config.yml` parameters become part of the Standard Operating Procedure (SOP) for the diagnostic assay.
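The threshold selection step can be illustrated with Youden's J statistic. The Python sketch below assumes, for simplicity, that a sample is called positive exactly when the target ASV's read count reaches the candidate `min_count_per_variant` value. This lets it approximate the parameter sweep by thresholding counts instead of re-running VTAM. The read counts and labels are hypothetical.

```python
from typing import Sequence, Tuple


def sens_spec(scores: Sequence[float], truth: Sequence[bool], threshold: float) -> Tuple[float, float]:
    """Call a sample positive when its score (here: target-ASV read count) >= threshold."""
    tp = sum(1 for s, t in zip(scores, truth) if t and s >= threshold)
    fn = sum(1 for s, t in zip(scores, truth) if t and s < threshold)
    tn = sum(1 for s, t in zip(scores, truth) if not t and s < threshold)
    fp = sum(1 for s, t in zip(scores, truth) if not t and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


def best_threshold(scores, truth, candidates) -> Tuple[float, float, float]:
    """Return (threshold, sensitivity, specificity) maximizing Youden's J; first wins on ties."""
    best = None
    for thr in candidates:
        sens, spec = sens_spec(scores, truth, thr)
        j = sens + spec - 1
        if best is None or j > best[0]:
            best = (j, thr, sens, spec)
    _, thr, sens, spec = best
    return thr, sens, spec


# Hypothetical read counts: five spiked (positive) and four healthy (negative) samples
counts = [120, 45, 30, 22, 16, 0, 3, 6, 18]
truth = [True] * 5 + [False] * 4
print(best_threshold(counts, truth, candidates=[5, 10, 20, 25, 50]))  # (20, 0.8, 1.0)
```

For a diagnostic SOP, an asymmetric criterion (e.g., requiring specificity of 1.0 first) may be more appropriate than J, which weights both error types equally.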

Within the context of the VTAM (Validation and Taxonomic Assignment of Metabarcoding data) pipeline, the initial filtering of Amplicon Sequence Variants (ASVs) is a critical first step. This process ensures the removal of spurious sequences generated by PCR and sequencing errors, thereby increasing the reliability of downstream ecological and taxonomic analyses. This guide details the methodology, parameters, and experimental rationale for executing the filter command, a cornerstone for validating metabarcoding data in research and drug discovery pipelines, where accurate microbial community profiling is paramount.

The `filter` Command: Methodology and Protocol

The VTAM filter command operates on the principle of replication across PCR replicates and/or sequencing runs. Its core function is to retain only those ASVs that are present in a user-defined minimum number of replicates for a given sample, under a specific set of conditions (e.g., locus, variant).

2.1. Experimental Protocol

- Input Data Preparation: The command requires an ASV table (typically a `.tsv` file) generated by a denoising pipeline (e.g., DADA2, UNOISE3). This table must include columns for `Sample`, `Locus`, `Variant` (ASV sequence), `Replicate`, and `ReadCount`.

- Parameter Configuration: Define the filtering threshold using the `--min_replicate` (or `--min_pcr_replicate`) parameter. The optimal value is determined empirically based on the experimental design.

- Command Execution:

- Output: A filtered ASV table and an updated database. ASVs not meeting the replication threshold are logged and excluded from downstream steps.
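The replication logic described above can be expressed directly on the long-format table. The Python sketch below is an illustrative reimplementation, not VTAM's code. A replicate counts as "present" when its `ReadCount` reaches the minimum read count, and an ASV is kept in a sample when it is present in at least the minimum number of distinct replicates. The parameter names mirror the table below, and the example rows are hypothetical. This sketch also drops the below-threshold replicate rows of retained ASVs; that is a design choice you may want to change.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, List

Row = Dict[str, Any]  # one line of the long-format table: Sample, Locus, Variant, Replicate, ReadCount


def replicate_filter(rows: Iterable[Row], min_replicate: int = 2, min_read_count: int = 10) -> List[Row]:
    """Keep an ASV in a sample only if it is 'present' (ReadCount >= min_read_count)
    in at least min_replicate distinct replicates; only the present rows are returned."""
    rows = list(rows)
    present = defaultdict(set)
    for r in rows:
        if r["ReadCount"] >= min_read_count:
            present[(r["Sample"], r["Locus"], r["Variant"])].add(r["Replicate"])
    keep = {key for key, reps in present.items() if len(reps) >= min_replicate}
    return [r for r in rows
            if (r["Sample"], r["Locus"], r["Variant"]) in keep and r["ReadCount"] >= min_read_count]


table = [
    {"Sample": "S1", "Locus": "COI", "Variant": "ACGT", "Replicate": 1, "ReadCount": 150},
    {"Sample": "S1", "Locus": "COI", "Variant": "ACGT", "Replicate": 2, "ReadCount": 98},
    {"Sample": "S1", "Locus": "COI", "Variant": "ACGT", "Replicate": 3, "ReadCount": 4},
    {"Sample": "S1", "Locus": "COI", "Variant": "TTGA", "Replicate": 2, "ReadCount": 37},
]
kept = replicate_filter(table, min_replicate=2, min_read_count=10)
print([(r["Variant"], r["Replicate"]) for r in kept])  # [('ACGT', 1), ('ACGT', 2)]
```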

2.2. Key Parameters and Their Impact

| Parameter | Typical Value Range | Function | Impact on Stringency |
|---|---|---|---|
| `--min_replicate` | 2-3 (for triplicate PCRs) | Minimum number of replicates an ASV must appear in to be retained. | Higher value increases stringency, drastically reducing false positives but risking loss of rare true variants. |
| `--min_pcr_replicate` | 2-3 (for triplicate PCRs) | Specifically targets PCR replicate count. | Similar to `--min_replicate`, but clarifies the replication level being assessed. |
| `--min_read_count` | 10-100 | Absolute minimum read count for an ASV in a replicate to be considered "present". | Filters very low-abundance noise; higher values reduce sensitivity. |

Quantitative Data from Filtering Experiments

The efficacy of the filter step is demonstrated through internal VTAM benchmarks and related methodological studies. The following table summarizes typical outcomes:

Table 1: Impact of Replicate Filtering on ASV Retention and Putative Noise Reduction

| Experimental Scenario | Total ASVs Pre-Filter | `--min_replicate` Setting | ASVs Post-Filter | % ASVs Retained | Estimated Noise Removed* |
|---|---|---|---|---|---|
| Mock Community (Known Species) | 1,250 | 2 | 98 | 7.8% | 92.2% |
| Environmental Sample (Soil) | 45,600 | 2 | 12,300 | 27.0% | 73.0% |
| Human Gut Microbiome | 32,100 | 3 | 8,920 | 27.8% | 72.2% |
| Negative Control Sample | 850 | 2 | 5 | 0.6% | 99.4% |

*Estimated Noise Removed = 100% - % ASVs Retained. This represents sequences likely arising from errors.
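The two derived columns of Table 1 follow directly from the pre- and post-filter counts. This short Python check recomputes them from the table's own numbers using the footnote's definition.

```python
def retention(pre: int, post: int) -> tuple:
    """Return (% ASVs retained, estimated % noise removed), each rounded to one decimal."""
    retained = 100 * post / pre
    return round(retained, 1), round(100 - retained, 1)


# (Total ASVs pre-filter, ASVs post-filter) copied from Table 1
table1 = {
    "Mock Community (Known Species)": (1_250, 98),
    "Environmental Sample (Soil)": (45_600, 12_300),
    "Human Gut Microbiome": (32_100, 8_920),
    "Negative Control Sample": (850, 5),
}
for scenario, (pre, post) in table1.items():
    print(scenario, retention(pre, post))
```

The output matches the table: (7.8, 92.2), (27.0, 73.0), (27.8, 72.2), and (0.6, 99.4).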

Visualizing the VTAM Filter Workflow

VTAM Filter Command Workflow in Pipeline

Logical Decision Process of the `filter` Command

Filter Command Decision Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Reagents for Metabarcoding Validation Experiments

| Item | Function in ASV Validation | Example Product/Kit |
|---|---|---|
| High-Fidelity DNA Polymerase | Minimizes PCR amplification errors, reducing spurious variants at source. | Q5 High-Fidelity DNA Polymerase (NEB), Phusion Plus PCR Master Mix (Thermo). |
| PCR Replication Primers | Identical primer sets for technical replicates to enable the filtering logic. | Metabarcoding primer sets (e.g., 16S V4, ITS2) with unique dual-index barcodes. |
| Negative Control Reagents | Molecular-grade water and extraction blank kits to assess contamination. | ZymoBIOMICS DNase/RNase-Free Water, extraction kit blanks. |
| Positive Control (Mock Community) | Defined mix of genomic DNA from known species to benchmark filtering accuracy. | ZymoBIOMICS Microbial Community Standard, ATCC MSA-1003. |
| Size-Selective Magnetic Beads | For precise post-PCR cleanup and removal of primer dimers, improving ASV table quality. | AMPure XP beads (Beckman Coulter), SPRIselect beads (Beckman). |
| Bioinformatics Software | For upstream denoising and downstream analysis integrated with VTAM output. | DADA2, USEARCH, QIIME 2, R (phyloseq, tidyverse). |
| VTAM Pipeline | The core software enabling the replicate-based filtering protocol. | VTAM (https://github.com/aitgon/vtam). |

This whitepaper details the critical execution phase of the VTAM (Validation and Taxonomic Assignment Module) pipeline, a specialized computational framework designed for rigorous validation of metabarcoding data in biopharmaceutical and ecological research. The run command initiates core analytical processes, integrating quality-controlled sequence data with reference libraries and statistical models to produce validated taxonomic assignments. This step is fundamental for ensuring data integrity in downstream applications, including biomarker discovery and therapeutic target identification.

The broader VTAM pipeline thesis posits that robust, automated validation is the keystone for reliable metabarcoding analyses. Step 2, the execution of the run command, operationalizes this thesis. It transforms curated input data—filtered reads and parameter sets—into high-fidelity taxonomic profiles. For drug development professionals, this step mitigates the risk of false-positive or false-negative organism detection, which is crucial when analyzing microbial communities linked to disease states or drug metabolism.

Core Functionality of the 'Run' Command

The run command automates a sequential workflow of validation algorithms. Its primary functions are:

- Integration: Merges user-defined parameters (from Step 1) with the curated sequence data and reference databases.

- Algorithmic Processing: Executes the core VTAM validation algorithms, primarily based on Expectation-Maximization (EM) and probabilistic filtering.

- Output Generation: Produces a validated Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) table, log files, and diagnostic statistics.

Detailed Methodology & Protocol

The following protocol assumes completion of Step 1 (vtam optimize) and the preparation of a run.yml configuration file.

Pre-Execution Checklist

| Item | Specification | Purpose |
|---|---|---|
| Input File | `filtered_reads.fasta` | Quality-controlled sequence data from prior steps. |
| Reference Database | `custom_curated.fasta` | A tailored database of target marker gene sequences. |
| Configuration File | `run.yml` | Defines parameters for the validation algorithm. |
| Known Sample File | `known_samples.tsv` (Optional) | Controls for validation algorithm calibration. |
| VTAM Environment | Version ≥ 4.0.0 | Ensures access to latest algorithms and bug fixes. |

Command Execution Syntax

Internal Algorithmic Workflow

The execution follows a defined internal pipeline:

Title: VTAM Run Command Internal Workflow

Key Validation Algorithm (EM-based)

For a given ASV i and sample j, VTAM's core algorithm calculates a probability score P(i,j) that the ASV is a true positive and not a technical artifact (e.g., PCR/sequencing error).

Protocol:

- Initialization: Assign initial probabilities based on read count and replication across PCR replicates.

- Expectation Step (E-step): Estimate the expected number of true occurrences for each ASV across all samples and replicates.

- Maximization Step (M-step): Maximize the likelihood function to update parameters governing error rates and ASV prevalence.

- Iteration: Repeat E-step and M-step until convergence (Δ log-likelihood < 1e-5).

- Thresholding: Remove ASVs whose final P(i,j) falls below the user-defined cutoff (default: 0.95).
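The protocol above describes the E- and M-steps only in prose. To make them concrete, here is a minimal Python sketch of one way such a scheme can be built: a two-component binomial mixture in which each ASV-sample occurrence is either a true detection or an artifact, and the observed quantity is the number of PCR replicates (out of n) in which it was detected. The model, its starting values, and the function names are illustrative assumptions, not VTAM's published algorithm. Only the convergence tolerance (1e-5) and the 0.95 cutoff come from the text. The sketch requires Python 3.8+ for `math.comb`.

```python
import math
from typing import List, Sequence, Tuple


def binom_pmf(k: int, n: int, p: float) -> float:
    return math.comb(n, k) * p ** k * (1 - p) ** (n - k)


def em_replicate_model(ks: Sequence[int], n_rep: int, tol: float = 1e-5,
                       max_iter: int = 1000) -> Tuple[List[float], List[float]]:
    """Fit a two-component binomial mixture by EM.

    ks[i] is the number of PCR replicates (out of n_rep) in which occurrence i was detected.
    Component 'true' detects with per-replicate probability p_t, 'artifact' with p_a.
    Returns each occurrence's posterior P(true) and the log-likelihood trace.
    """
    pi, p_t, p_a = 0.5, 0.9, 0.1   # initialization: true variants tend to replicate
    eps = 1e-6                      # keeps probabilities strictly inside (0, 1)
    post: List[float] = []
    trace: List[float] = []
    for _ in range(max_iter):
        # E-step: weighted likelihood of each occurrence under each component
        lt = [pi * binom_pmf(k, n_rep, p_t) for k in ks]
        la = [(1 - pi) * binom_pmf(k, n_rep, p_a) for k in ks]
        post = [t / (t + a) for t, a in zip(lt, la)]
        trace.append(sum(math.log(t + a) for t, a in zip(lt, la)))
        if len(trace) > 1 and abs(trace[-1] - trace[-2]) < tol:
            break                   # converged: change in log-likelihood below tol
        # M-step: closed-form maximizers for the mixing weight and detection rates
        w_t = sum(post)
        w_a = len(ks) - w_t
        pi = w_t / len(ks)
        p_t = sum(r * k for r, k in zip(post, ks)) / (n_rep * w_t)
        p_a = sum((1 - r) * k for r, k in zip(post, ks)) / (n_rep * w_a)
        p_t = min(max(p_t, eps), 1 - eps)
        p_a = min(max(p_a, eps), 1 - eps)
    return post, trace


# Hypothetical replicate detections (3 PCR replicates) for 100 ASV-sample occurrences
ks = [3] * 50 + [2] * 10 + [1] * 25 + [0] * 15
post, trace = em_replicate_model(ks, n_rep=3)
kept = [i for i, p in enumerate(post) if p >= 0.95]   # the default cutoff quoted above
print(len(trace), "iterations; kept", len(kept), "of", len(ks))
```

Each M-step maximizes the expected complete-data log-likelihood, so the recorded log-likelihood never decreases. This makes `trace` a natural source for per-iteration convergence diagnostics.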

Data Outputs and Interpretation

The run command generates the following key outputs, summarized in the table below.

| Output File | Format | Key Metrics Contained | Significance for Research |
|---|---|---|---|
| `asv_table_validated.tsv` | Tab-separated | Final filtered ASV counts per sample. | Primary data for downstream statistical analysis (e.g., differential abundance). |
| `run_info.log` | Text | Run parameters, version, execution time. | Essential for reproducibility and audit trails. |
| `model_diagnostics.csv` | CSV | Per-iteration log-likelihood, convergence status. | Allows monitoring of algorithm performance and stability. |
| `filter_summary.tsv` | TSV | Counts of ASVs filtered at each stage. | Quantifies stringency of validation; critical for methods reporting. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in VTAM 'Run' Context | Example/Note |
|---|---|---|
| Curated Reference Database | Serves as the ground truth for taxonomic assignment. Must be tailored to the target gene (e.g., 16S, ITS, 18S). | SILVA, UNITE, or custom databases curated for specific pathogens. |
| Known Positive Control Samples | Biological replicates of mock communities with known composition. Used to calibrate and benchmark the validation algorithm's sensitivity/specificity. | ZymoBIOMICS Microbial Community Standard. |
| High-Fidelity PCR Enzyme Mix | Critical wet-lab reagent that minimizes amplification errors in the initial metabarcoding library prep, reducing input noise for the VTAM pipeline. | Q5 High-Fidelity DNA Polymerase. |
| Computational Environment Manager | Ensures exact versioning of VTAM and all dependencies (Python, R, packages) for reproducible execution across research teams. | Conda, Docker, or Singularity. |
| High-Performance Computing (HPC) Cluster | Provides necessary computational resources for executing the iterative EM algorithm on large, complex datasets (e.g., longitudinal human microbiome studies). | SLURM or SGE-managed cluster nodes. |

Troubleshooting and Optimization

Common issues during execution and their resolutions:

| Symptom | Potential Cause | Solution |
|---|---|---|
| Algorithm fails to converge | Overly permissive initial parameters; noisy input data. | Increase `--max_iterations`; review optimize step results; introduce stricter read count filters. |
| Excessive loss of ASVs post-run | Probability threshold (`--cutoff`) too high. | Re-run with a lower cutoff (e.g., 0.80) and compare diagnostic plots. Validate with known controls. |
| Memory overflow error | Reference database or input file extremely large. | Split analysis by sample batch; increase allocated RAM on HPC; use a more targeted reference database. |

Integration with Downstream Analysis

The validated ASV table is the essential bridge to biological interpretation. It is directly compatible with standard ecological analysis packages (e.g., phyloseq in R, QIIME 2) for:

- Alpha and beta diversity analysis.

- Differential abundance testing (e.g., DESeq2, LEfSe).

- Network and functional inference analysis.

Title: Downstream Applications of Validated Data

The execution of the run command is the computational core of the VTAM pipeline thesis. By implementing a rigorous, probabilistic validation framework, it delivers a high-confidence taxonomic profile from complex metabarcoding data. This step is non-negotiable for generating the reliable datasets required to draw meaningful correlations between microbial communities and host phenotypes—a foundational task in modern drug discovery and development.

Within the VTAM (Validation and Taxonomic Assignment Management) pipeline for amplicon sequence variant (ASV) validation in metabarcoding research, the final and most critical step is the interpretation of filtered ASV tables and associated log files. This guide provides an in-depth technical framework for analyzing these outputs to ensure robust, reproducible conclusions for downstream applications in drug discovery and microbiome research.

The VTAM pipeline is designed to rigorously filter noise from metabarcoding datasets (e.g., from 16S, ITS, or 18S markers) using validation controls (negative and positive PCR controls). Its output—a filtered ASV table and a detailed log file—forms the cornerstone of validated ecological and taxonomic inferences. Accurate interpretation is paramount for hypothesis generation in therapeutic development, such as identifying pathogenic signatures or beneficial consortia.

Structure of Core Output Files

Filtered ASV Table

This is a biological observation matrix where ASVs have passed stringent, user-defined validation thresholds.

Table 1: Key Fields in a Filtered ASV Table

| Field Name | Data Type | Description & Significance |
|---|---|---|
| `asv_id` | String | Unique DNA sequence hash. Basis for all downstream analysis. |
| `taxonomy` | String | Assigned taxonomy (e.g., `k__Bacteria;p__Firmicutes;c__Clostridia`). |
| `sample_1_count` | Integer | Read count for the ASV in biological sample 1 after filtering. |
| ... | Integer | ... for all other samples. |
| `mean_neg_control` | Float | Mean read count across all negative controls. Informs contamination risk. |
| `pass_filter` | Boolean | Indicates whether the ASV passed the `max_prev_negative` and `min_prev_positive` thresholds. |

Table 2: Quantitative Summary of a Sample Filtered ASV Table

| Metric | Pre-Filtering | Post-VTAM Filtering | % Change |
|---|---|---|---|
| Total ASVs | 15,842 | 4,371 | -72.4% |
| Total Reads | 8,756,221 | 7,101,544 | -18.9% |
| ASVs in Negative Controls | 2,587 | 12 | -99.5% |
| Singleton ASVs | 4,211 | 0 | -100% |

VTAM Log File

A chronological and structured record of the pipeline's decisions, critical for auditability and parameter optimization.

Table 3: Critical Sections in a VTAM Log File

| Log Section | Key Parameters & Metrics | Interpretation for Validation |
|---|---|---|
| Run Information | VTAM version, command, timestamp. | Ensures reproducibility. |
| Input Summary | Number of samples, controls, input ASVs. | Baseline dataset scope. |
| Filter Steps | `max_prev_negative=0`, `min_prev_positive=1`, `min_replicate=2`. | Documents validation stringency. |
| Statistics per Filter | ASVs/reads removed at each step. | Identifies major noise sources. |
| Final Output | Paths to output files, final ASV/sample counts. | Confirms successful run. |

Experimental Protocols for Output Validation

Protocol: Cross-Validation with Independent Negative Controls

Objective: To empirically verify that contaminants labeled by VTAM are consistent across separate experimental batches.

- Run the VTAM pipeline on the main dataset, including its internal negative controls.

- Maintain a separate set of "validation negative controls" (extraction and PCR blanks) processed concurrently but excluded from the VTAM run.

- Map the sequences of ASVs filtered out as "contaminants" (primarily via `max_prev_negative`) to the validation controls using a simple sequence alignment (e.g., `vsearch --usearch_global`).

- Quantitative Analysis: Calculate the percentage of filtered ASVs that are detected in the independent validation controls. A high overlap (>85%) confirms the specificity of the contaminant removal.
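The quantitative step of this protocol reduces to a set overlap. The Python sketch below computes it using exact sequence identity as a simplified stand-in for the `vsearch --usearch_global` search named above; a real analysis would use the alignment hits instead. The sequences are hypothetical.

```python
from typing import Iterable, Set


def overlap_percent(contaminant_asvs: Iterable[str], validation_control_asvs: Set[str]) -> float:
    """Percentage of ASVs flagged as contaminants that also occur in the independent
    validation controls. Exact sequence identity is used here as a simple stand-in for a
    vsearch --usearch_global search."""
    contaminants = set(contaminant_asvs)
    if not contaminants:
        return 0.0
    return 100 * len(contaminants & validation_control_asvs) / len(contaminants)


# Hypothetical sequences
flagged = ["AACCGGTT", "CCGGTTAA", "GGTTAACC", "TTAACCGG"]
validation = {"AACCGGTT", "CCGGTTAA", "GGTTAACC", "ACACACAC"}
pct = overlap_percent(flagged, validation)
print(f"{pct:.1f}% overlap; specificity confirmed: {pct > 85}")  # 75.0% overlap; specificity confirmed: False
```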

Protocol: Positive Control Recovery Efficiency

Objective: To assess sensitivity and ensure true biological signals are not disproportionately lost.

- Spike a known quantity of control DNA into your positive PCR controls, either a synthetic (non-biological) construct (e.g., SynMock) or a mock community (e.g., ZymoBIOMICS).

- Process samples through the VTAM pipeline with `min_prev_positive` set appropriately.

- In the filtered ASV table, identify the ASV corresponding to the spike-in sequence.

- Quantitative Analysis: Compute the recovery rate as (Spike-in reads in filtered table) / (Spike-in reads in raw data) * 100. Rates below 95% may indicate overly aggressive filtering.
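The recovery-rate formula and the 95% guideline translate directly into code. The read counts in this Python sketch are hypothetical.

```python
def recovery_rate(spike_reads_filtered: int, spike_reads_raw: int) -> float:
    """(Spike-in reads in filtered table) / (Spike-in reads in raw data) * 100."""
    if spike_reads_raw <= 0:
        raise ValueError("spike-in sequence not found in the raw data")
    return 100 * spike_reads_filtered / spike_reads_raw


def too_aggressive(rate: float, floor: float = 95.0) -> bool:
    """Flag recovery below the 95% guideline quoted above."""
    return rate < floor


rate = recovery_rate(9_120, 10_000)  # hypothetical read counts
print(f"{rate:.1f}% recovered; aggressive filtering suspected: {too_aggressive(rate)}")
# 91.2% recovered; aggressive filtering suspected: True
```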

Visualizing the Analysis Workflow

Title: VTAM Output Analysis and Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for VTAM Validation Experiments

| Item | Function in Validation | Example Product/Brand |
|---|---|---|
| Certified DNA-Free Water | Serves as the critical negative PCR control to detect reagent/lab-borne contamination. | ThermoFisher UltraPure DNase/RNase-Free Water |
| Mock Microbial Community | Standardized positive control to benchmark filtering efficiency and compute recovery rates. | ZymoBIOMICS Microbial Community Standard |
| Synthetic Spike-in Oligonucleotides | Non-biological positive control for absolute quantification of filtering stringency. | SynMock community (Custom designed oligos) |
| High-Fidelity PCR Enzyme | Minimizes polymerase errors during library prep, reducing false ASV generation. | NEB Q5 Hot Start High-Fidelity Master Mix |
| Magnetic Bead Cleanup Kit | For consistent post-PCR cleanup, reducing cross-contamination between samples. | Beckman Coulter AMPure XP Beads |
| Bioinformatics Container | Ensures reproducible execution of the VTAM pipeline and analysis scripts. | Docker image vtam/vtam:latest |

Within the broader thesis on establishing a robust VTAM (Vetting, Trimming, and Mapping) pipeline for validating metabarcoding data, a critical phase is the downstream integration of curated data into statistical analysis and visualization ecosystems. VTAM's output, typically a high-confidence Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) table with associated metadata, serves as the foundational input for biological interpretation. This technical guide details methodologies for seamless transition from the VTAM-validated data to actionable insights, catering to researchers and professionals in drug discovery and microbial ecology.

VTAM Output Data Structure

The core output from VTAM is a rigorously filtered biological observation matrix. The quantitative data structure is summarized below.

Table 1: Core VTAM Output Data Structure

| Component | Format | Description | Typical Downstream Use |
|---|---|---|---|
| ASV/OTU Table | CSV/TSV, BIOM (v2.1+) | Matrix with samples as columns and sequence variants as rows. Cells contain read counts. | Core input for diversity analysis, differential abundance. |
| Taxonomy Table | CSV/TSV | Taxonomic assignment (Kingdom to Species) for each sequence variant. | Taxonomic stratification, phylogeny-informed analysis. |
| Sample Metadata | CSV/TSV | Experimental variables (e.g., treatment, timepoint, patient ID, pH). | Statistical grouping, covariate adjustment, visualization. |
| Sequence File (FASTA) | .fasta/.fna | Representative sequences for each ASV/OTU. | Phylogenetic tree construction, BLAST validation. |
| Run Log & Parameters | .log/.yml | Record of VTAM filters and thresholds applied. | Reproducibility, method documentation. |
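Before any downstream analysis, it is worth confirming that the ASV, taxonomy, and metadata tables agree with one another. The sketch below assumes simplified tab-separated layouts: an `asv_id` column in the ASV and taxonomy tables, a `sample_id` column in the metadata, and one count column per sample in the ASV table. These column names are hypothetical; actual VTAM output may use different headers or carry extra columns that would need to be dropped first.

```python
import csv
import io


def read_tsv(text: str) -> tuple[list[str], list[dict[str, str]]]:
    """Parse tab-separated text with a header row into (column names, row dicts)."""
    reader = csv.DictReader(io.StringIO(text), delimiter="\t")
    rows = list(reader)
    return list(reader.fieldnames or []), rows


def check_consistency(asv_tsv: str, taxonomy_tsv: str, metadata_tsv: str,
                      id_col: str = "asv_id", sample_col: str = "sample_id") -> list[str]:
    """Return human-readable problems; an empty list means the three tables agree."""
    asv_header, asv_rows = read_tsv(asv_tsv)
    _, taxonomy_rows = read_tsv(taxonomy_tsv)
    _, metadata_rows = read_tsv(metadata_tsv)

    # In the (hypothetical) ASV table layout, every column except the ID column is a sample.
    table_samples = set(asv_header) - {id_col}
    table_asvs = {row[id_col] for row in asv_rows}
    taxonomy_asvs = {row[id_col] for row in taxonomy_rows}
    metadata_samples = {row[sample_col] for row in metadata_rows}

    problems = [f"ASV {a} has counts but no taxonomy"
                for a in sorted(table_asvs - taxonomy_asvs)]
    problems += [f"sample {s} has counts but no metadata"
                 for s in sorted(table_samples - metadata_samples)]
    problems += [f"sample {s} is in metadata but absent from the ASV table"
                 for s in sorted(metadata_samples - table_samples)]
    return problems
```

For files on disk, the text of each file (for example via `Path(...).read_text()`) can be passed in directly; keeping the function text-based makes it easy to test.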

Downstream Integration Pathways

Import into Statistical Environments

Protocol 1.1: Import into R using phyloseq

Protocol 1.2: Import into Python using qiime2 or anndata
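Python tools such as AnnData and scikit-learn expect observations (here, samples) on rows, whereas the VTAM ASV table described in Table 1 has sequence variants on rows. A minimal standard-library sketch of the load-and-transpose step follows. It assumes a simplified, hypothetical layout with one `asv_id` column followed by one integer count column per sample.

```python
import csv
import io


def load_sample_by_asv(asv_tsv: str, id_col: str = "asv_id"
                       ) -> tuple[list[str], list[str], list[list[int]]]:
    """Return (sample_ids, asv_ids, matrix) with samples as rows and ASVs as columns."""
    reader = csv.DictReader(io.StringIO(asv_tsv), delimiter="\t")
    rows = list(reader)
    sample_ids = [col for col in (reader.fieldnames or []) if col != id_col]
    asv_ids = [row[id_col] for row in rows]
    # Transpose: the file has one row per ASV; the result has one row per sample.
    matrix = [[int(row[sample]) for row in rows] for sample in sample_ids]
    return sample_ids, asv_ids, matrix
```

If AnnData is installed, the three returned values could then be passed as `anndata.AnnData(X=numpy.asarray(matrix), obs=pandas.DataFrame(index=sample_ids), var=pandas.DataFrame(index=asv_ids))`. QIIME 2 users would instead import a BIOM-format table through QIIME 2's own import tooling.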

Core Statistical Analyses

Protocol 2.1: Alpha and Beta Diversity Analysis
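As an illustration of the quantities behind alpha and beta diversity, the sketch below computes the Shannon index, H' = -Σ p_i ln p_i, for one sample, and the Bray-Curtis dissimilarity, Σ|x_i - y_i| / Σ(x_i + y_i), between two samples. It works on raw counts for clarity. In practice, differences in sequencing depth are usually handled first (for example by rarefaction or conversion to relative abundances), and packages such as vegan or scikit-bio provide tested implementations.

```python
import math


def shannon(counts: list[int]) -> float:
    """Shannon diversity H' = -sum(p_i * ln p_i); zero counts contribute nothing."""
    total = sum(counts)
    if total == 0:
        raise ValueError("sample has no reads")
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def bray_curtis(x: list[int], y: list[int]) -> float:
    """Bray-Curtis dissimilarity: sum|x_i - y_i| / sum(x_i + y_i), in [0, 1]."""
    if len(x) != len(y):
        raise ValueError("both samples must cover the same ASVs in the same order")
    denominator = sum(a + b for a, b in zip(x, y))
    if denominator == 0:
        raise ValueError("both samples are empty")
    return sum(abs(a - b) for a, b in zip(x, y)) / denominator
```

Two equally abundant ASVs give H' = ln 2, and samples sharing no ASVs have a Bray-Curtis dissimilarity of 1.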

Table 2: Key Statistical Tests for VTAM-Derived Data

| Analysis Goal | Recommended Test/Package | Input from VTAM | Key Output |
|---|---|---|---|
| Differential Abundance | DESeq2 (for over-dispersed count data), ANCOM-BC | ASV Table, Metadata | Log2 fold-change, adjusted p-values. |
| Community Difference | PERMANOVA (vegan::adonis2), MiRKAT | Distance Matrix (Bray-Curtis/UniFrac) | F-statistic, R², p-value. |
| Taxonomic Composition | CLR Transformation (compositions), ALDEx2 | ASV Table (compositional) | CLR-transformed abundances. |
| Correlation Networks | SpiecEasi (SPIEC-EASI), FlashWeave | Filtered ASV Table | Microbial association networks. |
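The centered log-ratio (CLR) transformation listed in Table 2 replaces each count with its log ratio to the sample's geometric mean: clr(x)_i = ln x_i - (1/D) Σ_j ln x_j over the D ASVs in the sample. Because the logarithm of zero is undefined, this sketch adds a pseudocount (1 by default, a common but arbitrary choice). ALDEx2 and the compositions package handle zeros and sampling uncertainty in their own ways, so this is an illustration of the formula rather than a replacement for them.

```python
import math


def clr(counts: list[int], pseudocount: float = 1.0) -> list[float]:
    """Centered log-ratio transform of one sample's counts.

    A pseudocount is added to every count so that zeros have a finite logarithm.
    The returned values always sum to (numerically) zero.
    """
    if pseudocount <= 0 and any(c == 0 for c in counts):
        raise ValueError("zero counts require a positive pseudocount")
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [value - mean_log for value in logs]
```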

Visualization Integration

Protocol 3.1: Generating Publication-Quality Figures

- Stacked Bar Plots: Use ggplot2 (R) or matplotlib/seaborn (Python) with the taxonomy table for grouping.

- Heatmaps: Employ pheatmap or ComplexHeatmap after CLR transformation of ASV counts.

- Phylogenetic Trees: Utilize ggtree to annotate trees with taxonomy and abundance data.

- Interactive Dashboards: Deploy shiny (R) or dash (Python) applications using VTAM output as the primary dataset.
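Stacked bar plots typically show per-sample relative abundance at a single taxonomic rank. The sketch below collapses ASV counts onto a rank using a lookup from ASV to taxon name, sending ASVs without an assignment to a hypothetical "Unassigned" label. The nested dictionary it returns could be converted to a pandas DataFrame for seaborn/matplotlib or exported for ggplot2.

```python
from collections import defaultdict


def relative_abundance_by_rank(
    counts: dict[str, dict[str, int]],
    taxon_of: dict[str, str],
    unassigned: str = "Unassigned",
) -> dict[str, dict[str, float]]:
    """Collapse per-ASV counts to taxa and convert them to per-sample proportions.

    counts[sample][asv] is a read count; taxon_of[asv] is the ASV's name at the
    chosen rank (e.g. genus). ASVs missing from taxon_of are pooled under `unassigned`.
    """
    result: dict[str, dict[str, float]] = {}
    for sample, per_asv in counts.items():
        totals: dict[str, int] = defaultdict(int)
        for asv, n in per_asv.items():
            totals[taxon_of.get(asv, unassigned)] += n
        depth = sum(totals.values())
        # A sample with zero reads has no defined composition.
        result[sample] = {taxon: n / depth for taxon, n in totals.items()} if depth else {}
    return result
```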

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Downstream VTAM Analysis

| Tool/Reagent | Function | Example/Provider |
|---|---|---|
| RStudio / Posit | Integrated development environment for R. Facilitates phyloseq, vegan, and DESeq2 analysis. | Posit, PBC |
| QIIME 2 | Extensible, plugin-based platform for microbiome analysis. Accepts VTAM BIOM output. | qiime2.org |
| Python (SciPy Stack) | Libraries (pandas, numpy, scikit-learn, scanpy) for custom data analysis. | Anaconda Distribution |
| Phyloseq R Package | Primary object class and function suite for organizing and analyzing VTAM output. | Bioconductor |
| Geneious Prime | GUI for phylogenetic analysis, integrates ASV sequences and trees. | Biomatters Ltd |
| Git + GitHub | Version control for analysis scripts, ensuring reproducible workflows. | GitHub, GitLab |
| Jupyter Notebooks | Interactive, document-based coding for sharing complete analysis narratives. | Project Jupyter |
| BIOM Format | Standardized file format for sharing ASV tables across tools. | biom-format.org |

Experimental and Analytical Workflow Diagram

Diagram Title: Downstream VTAM Data Analysis Workflow

Diagram Title: VTAM Data Integration to Statistical and Visualization Modules

Effective downstream integration of VTAM's validated output is paramount for translating curated metabarcoding data into robust, statistically sound, and visually compelling scientific findings. By leveraging standardized data formats, open-source analytical environments, and reproducible protocols outlined in this guide, researchers can confidently extend the VTAM pipeline's rigor through to the final stages of discovery and reporting, thereby strengthening the overall thesis on metabarcoding validation.

Solving VTAM Challenges: Optimization Tips and Common Pitfalls

Within the context of developing and deploying the VTAM (Validation and Taxonomic Assignment Module) pipeline for rigorous metabarcoding data validation in biomedical and drug discovery research, technical execution errors are a significant bottleneck. This guide analyzes three pervasive categories of errors that researchers commonly encounter: permission issues, file path problems, and YAML syntax errors. Diagnosing them quickly is critical for the reproducible, automated, high-integrity bioinformatics workflows that feed downstream analyses such as therapeutic target identification.

Permission Issues in Pipeline Execution

Permission errors halt pipelines by preventing read, write, or execute operations on files, directories, or scripts.

Core Concepts & Diagnostics

Unix-like systems use a permission model for User (u), Group (g), and Others (o). Key diagnostic commands:

- `ls -la`: Displays permissions, ownership, and group.

- `stat <file>`: Shows detailed access data.

- `id`: Displays the current user's group memberships.

Table 1: Linux Permission Codes and Implications for VTAM

| Permission Symbol | Octal Value | Meaning for Files | Impact on VTAM Workflow |
|---|---|---|---|
| r-- | 4 | Read only | Input FASTQ/FASTA files can be read but not modified or overwritten. |
| -w- | 2 | Write only | Uncommon; would prevent reading configuration files. |
| --x | 1 | Execute only | Compiled binaries can run, but interpreted scripts (e.g., Python) cannot, because the interpreter must read the file. |
| rw- | 6 | Read and write | Files can be read and modified, but scripts cannot be executed directly. |
| r-x | 5 | Read and execute | Ideal for pipeline scripts and tools. |
| rwx | 7 | Read, write, execute | Full control (use cautiously). |
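Each octal digit in the table is the sum of the read (4), write (2), and execute (1) bits, and a full mode lists three digits for user, group, and others. The sketch below decodes digits and modes. Python's standard `stat.filemode()` produces the same symbolic string that `ls -l` prints, including the leading file-type character, so `describe()` can be used to inspect a real file.

```python
import os
import stat


def digit_to_symbol(digit: int) -> str:
    """Convert one octal permission digit (0-7) to its rwx triplet, e.g. 5 -> 'r-x'."""
    if not 0 <= digit <= 7:
        raise ValueError("permission digits range from 0 to 7")
    # Bit 4 = read, bit 2 = write, bit 1 = execute.
    return "".join(ch if digit & bit else "-" for ch, bit in zip("rwx", (4, 2, 1)))


def mode_to_symbolic(mode: int) -> str:
    """Render a three-digit mode such as 0o754 as user/group/other triplets."""
    return "".join(digit_to_symbol((mode >> shift) & 0o7) for shift in (6, 3, 0))


def describe(path: str) -> str:
    """Show a file's permissions the way `ls -l` and `stat` would report them."""
    st = os.stat(path)
    return f"{stat.filemode(st.st_mode)} {oct(stat.S_IMODE(st.st_mode))} {path}"
```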

Experimental Protocol: Resolving Permission Denied Errors

Objective: Diagnose and rectify a "Permission denied" error when launching the VTAM pipeline runner script.

Materials: A terminal on a Unix/Linux system (or WSL2 on Windows) with VTAM installed.

Procedure:

- Attempt Execution: Run `./vtam_runner.py` and observe the "Permission denied" error.

- Inspect Permissions: Execute `ls -l vtam_runner.py`. The output may resemble `-rw-r--r--`, meaning no execute bit is set.

- Add Execute Permission: For the user only, run `chmod u+x vtam_runner.py`. Direct execution also requires a shebang line such as `#!/usr/bin/env python3` at the top of the script.

- Verify Group Permissions: If members of a shared group run the pipeline, grant group read and execute permission with `chmod g+rx vtam_runner.py`.

- Check Directory Permissions: Every parent directory must have execute (`x`) permission for the user to traverse it. Use `ls -ld /path/to/vtam` and, if needed, modify it with `chmod u+x /path/to/vtam`.

- Confirm Resolution: Re-run `./vtam_runner.py`.

Key Consideration: Avoid recursive chmod 777 commands, as they pose severe security risks and compromise data integrity.
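The protocol's checks can also be scripted as a preflight step before launching the pipeline. This sketch, built on `os.access()`, reports ancestor directories that cannot be traversed and whether the script is readable and executable; `vtam_runner.py` is the example name used in the protocol. Note that `os.access()` tests against the real user ID, so results can differ under sudo or inside containers.

```python
import os
from pathlib import Path
from typing import Union


def preflight(script: Union[str, Path]) -> list[str]:
    """List permission problems that would stop `./script` from running."""
    script = Path(script).absolute()
    # Every existing ancestor directory needs its execute (search) bit to be traversed.
    problems = [
        f"{parent}: directory not traversable (needs x)"
        for parent in script.parents
        if os.path.isdir(parent) and not os.access(parent, os.X_OK)
    ]
    if not os.path.exists(script):
        problems.append(f"{script}: not found")
        return problems
    # An interpreted script must be readable by the interpreter as well as executable.
    if not os.access(script, os.R_OK):
        problems.append(f"{script}: not readable (needs r)")
    if not os.access(script, os.X_OK):
        problems.append(f"{script}: not executable (try chmod u+x)")
    return problems
```

An empty result means only that the permission checks pass; the shebang line must still point to a working interpreter.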

File Path Problems

Absolute and relative path misinterpretations are a common source of "File not found" errors in complex pipeline structures.

Path Types and Common Pitfalls

- Absolute Path: Specifies the location from the root directory (`/`). Environment-specific (e.g., `/mnt/lab_server/projects/vtam/data/input.fasta`).

- Relative Path: Specifies the location relative to the current working directory (CWD). CWD-dependent (e.g., `./data/input.fasta`).

Table 2: Common Relative Path Symbols and Outcomes

| Symbol | Meaning | Example (if CWD=/home/researcher/vtam) | Resolves To |
|---|---|---|---|
| . | Current directory | ./config.yaml | /home/researcher/vtam/config.yaml |
| .. | Parent directory | ../tools/bin/script | /home/researcher/tools/bin/script |
| ~ | User's home directory (expanded by the shell; may not be expanded when read from a config file) | ~/data/sample1.fq | /home/researcher/data/sample1.fq |
| (None) | Relative from CWD | data/sample1.fq | /home/researcher/vtam/data/sample1.fq |
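The resolutions in Table 2 can be reproduced programmatically. This sketch expands `~` explicitly (Python's `open()` does not) and then joins relative paths onto a chosen base directory. `posixpath.normpath()` collapses `.` and `..` lexically, which can differ from `realpath` when symbolic links are involved.

```python
import posixpath


def resolve(path_str: str, base_dir: str) -> str:
    """Resolve a configured path relative to base_dir, following Table 2."""
    # '~' is expanded by the shell, not by open(), so expand it explicitly.
    expanded = posixpath.expanduser(path_str)
    # join() discards base_dir when `expanded` is already absolute.
    return posixpath.normpath(posixpath.join(base_dir, expanded))
```

Passing `os.getcwd()` as `base_dir` mimics how the pipeline sees relative paths; passing the configuration file's own directory instead is one way to make a config independent of where it is launched from.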

Experimental Protocol: Diagnosing Path-Related Failures

Objective: Identify the root cause of a missing file error in a VTAM workflow step.

Materials: A terminal, a text editor, and a VTAM configuration file.

Procedure:

- Log the Error: Note the exact error message and the failing command.

- Check the Configuration: Examine the path declared in the VTAM config file or command-line argument.

- Determine Path Type: Identify if the path is absolute or relative.

- Find Current Working Directory: Run `pwd` in the terminal to confirm the CWD from which the pipeline was launched.

- Resolve the Path Manually: Combine the CWD with the relative path from the config and check whether the target file exists at that location using `ls -la /resolved/full/path`.

- Test Alternative Paths: Use absolute paths for critical data files to remove CWD ambiguity. For portability, consider using pipeline-internal path variables that are set at runtime.
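The "Find Current Working Directory" and "Resolve the Path Manually" steps can be combined into a helper that reports not only that a file is missing but also where resolution stops. This is a sketch: it resolves against the current working directory by default and walks upward to the deepest path that actually exists.

```python
import os
from typing import Optional


def explain_missing(path_str: str, cwd: Optional[str] = None) -> str:
    """Resolve path_str against cwd and describe where resolution breaks down."""
    cwd = cwd if cwd is not None else os.getcwd()
    full = os.path.normpath(os.path.join(cwd, os.path.expanduser(path_str)))
    if os.path.exists(full):
        return f"OK: {full}"
    # Walk upward until we reach a component that exists on disk.
    existing = full
    while not os.path.exists(existing):
        parent = os.path.dirname(existing)
        if parent == existing:  # reached the filesystem root
            break
        existing = parent
    missing_part = os.path.relpath(full, existing)
    return f"MISSING: {full} (deepest existing path: {existing}; missing: {missing_part})"
```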

Diagram Title: Path Resolution Logic Leading to Success or Failure

YAML Syntax in Configuration Files

YAML (YAML Ain't Markup Language) is ubiquitous for pipeline configuration (e.g., VTAM parameters, sample sheets, tool settings). Its reliance on indentation and specific characters makes it prone to subtle errors.

Critical YAML Syntax Rules

- Indentation: Uses spaces (never tabs). Consistent spacing denotes structure.

- Key-Value Pairs: `key: value`. A space after the colon is mandatory.

- Lists: Denoted by a leading hyphen and space (`- item1`).

- Multi-line Strings: Use `|` (literal block) or `>` (folded block).

- Special Characters: Strings that begin with `:`, `{`, `}`, `[`, `]`, `,`, `&`, `*`, `#`, `?`, `-`, or `<<`, or that contain `: ` or ` #`, should generally be quoted.

Table 3: Common YAML Errors and Their Manifestations in VTAM

| Error Type | Invalid Example | Valid Example | Error Symptom |
|---|---|---|---|
| Tab Indentation | key:\n\tvalue: | key:\n value: | "found character '\t' that cannot start any token" (PyYAML) |
| Missing Colon Space | filtering_threshold:0.01 | filtering_threshold: 0.01 | Parsed as the single string "filtering_threshold:0.01" instead of a key-value pair, or a parser error inside a larger mapping. |
| Incorrect List Format | samples:\n sample1,\n sample2 | samples:\n - sample1\n - sample2 | Parses as the string "sample1, sample2", not a list. |
| Unquoted Reserved Char | sample_tag: #A01 | sample_tag: "#A01" | The `#` starts a comment, so the value silently becomes null. |
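Two of the errors in Table 3, tab indentation and a missing space after a colon, can be caught by a quick line-based pre-check before a parser even runs. The heuristic below is only a sketch: it is no substitute for `yamllint`, its key pattern is an assumption, and it may flag text inside block scalars.

```python
import re

# A plain key immediately followed by ':' and a non-space character,
# e.g. 'filtering_threshold:0.01'. '/' is excluded so 'http://...' is not flagged.
KEY_WITHOUT_SPACE = re.compile(r"^[A-Za-z_][\w-]*:[^\s/]")


def quick_lint(text: str) -> list[tuple[int, str]]:
    """Return (line number, message) pairs for suspicious YAML lines."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        body = line.lstrip()
        indent = line[: len(line) - len(body)]
        if not body or body.startswith("#"):
            continue  # skip blank lines and comments
        if "\t" in indent:
            findings.append((lineno, "tab character in indentation"))
        if KEY_WITHOUT_SPACE.match(body):
            findings.append((lineno, "missing space after ':'"))
    return findings
```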

Experimental Protocol: Validating and Linting YAML Configuration

Objective: Systematically verify the integrity of a vtam_config.yaml file before pipeline execution.

Materials: A YAML configuration file and access to command-line tools.

Procedure:

- Use a YAML Linter: Run `yamllint vtam_config.yaml`. This catches indentation, syntax, and stylistic issues.

- Leverage Python's Parser: Load the file with PyYAML's `yaml.safe_load()`; a syntax error raises an exception that reports the offending line and column.

- Check for Tabs: Use `grep -n $'\t' vtam_config.yaml` to identify any tab characters.

- Validate Structure: Ensure required keys for VTAM (e.g., `database_path`, `filtering_options`, `sample_info`) are present and correctly nested.

- Test in a Dry Run: If supported, run VTAM with a `--dry-run` or `--validate` flag using the configuration.
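For the "Leverage Python's Parser" and "Validate Structure" steps, a minimal sketch might look like the following. It assumes PyYAML is installed, and the required keys and their expected types are hypothetical, taken from the example names in the procedure; replace them with whatever your VTAM configuration actually requires.

```python
from collections.abc import Mapping
from typing import Any

# Hypothetical schema built from the example keys in the procedure above.
REQUIRED_KEYS = {
    "database_path": str,
    "filtering_options": Mapping,
    "sample_info": (str, list),
}


def load_config(path: str) -> Any:
    """Parse a YAML file; PyYAML raises yaml.YAMLError on syntax errors."""
    import yaml  # imported lazily so check_structure() works without PyYAML

    with open(path, encoding="utf-8") as fh:
        return yaml.safe_load(fh)


def check_structure(config: Any) -> list[str]:
    """Return structural problems; an empty list means the config passed."""
    if not isinstance(config, Mapping):
        return [f"top level must be a mapping, got {type(config).__name__}"]
    problems = []
    for key, expected in REQUIRED_KEYS.items():
        if key not in config:
            problems.append(f"missing required key: {key}")
        elif not isinstance(config[key], expected):
            problems.append(f"{key}: unexpected type {type(config[key]).__name__}")
    return problems
```

Typical use would be `check_structure(load_config("vtam_config.yaml"))`, treating either a non-empty result or a `yaml.YAMLError` as a reason not to launch the run. An empty file loads as `None` and is reported as a non-mapping.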

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Diagnosing Pipeline Errors

| Tool / Reagent | Category | Primary Function in Diagnosis |
|---|---|---|
| yamllint | Software Linter | Validates YAML files for syntax, indentation, and best practices. |
| shellcheck | Static Analysis Tool | Analyzes shell scripts (used in pipeline wrappers) for common errors and pitfalls. |
| pylint / flake8 | Python Linter | Checks Python code quality and syntax, crucial for custom VTAM modules. |
| tree | System Utility | Displays directory structure visually to verify file locations and hierarchy. |
| realpath | System Utility | Converts relative file paths to absolute paths, clarifying file location. |
| Conda/Bioconda | Package Manager | Ensures all bioinformatics tools (e.g., Cutadapt, VSEARCH) and dependencies are correctly installed and isolated. |
| Docker/Singularity | Container Platform | Provides reproducible software environments, reducing "works on my machine" issues; host data directories must still be bind-mounted, and their permissions still apply. |
| Sample Sheet Validator | Custom Script | A bespoke script to check the integrity, formatting, and path validity of sample manifest CSVs/TSVs before pipeline launch. |
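Table 4 lists a bespoke sample sheet validator. One possible shape for it is sketched below. It assumes a tab-separated sheet with one row per sample and hypothetical columns `sample_id`, `fastq_fw`, and `fastq_rv`, with FASTQ paths given relative to a base directory. Adapt the column names and the uniqueness rule to the sample-sheet format your VTAM version expects, which may, for example, list replicates as separate rows.

```python
import csv
import io
import os

# Hypothetical column names for illustration only.
REQUIRED_COLUMNS = ("sample_id", "fastq_fw", "fastq_rv")


def validate_sample_sheet(sheet_text: str, base_dir: str) -> list[str]:
    """Check required columns, duplicate sample IDs, and FASTQ file existence."""
    reader = csv.DictReader(io.StringIO(sheet_text), delimiter="\t")
    missing = [c for c in REQUIRED_COLUMNS if c not in (reader.fieldnames or [])]
    if missing:
        return [f"missing column(s): {', '.join(missing)}"]
    problems: list[str] = []
    seen: set[str] = set()
    for lineno, row in enumerate(reader, start=2):  # line 1 is the header
        sample = (row["sample_id"] or "").strip()
        if not sample:
            problems.append(f"line {lineno}: empty sample_id")
        elif sample in seen:
            problems.append(f"line {lineno}: duplicate sample {sample!r}")
        seen.add(sample)
        for col in ("fastq_fw", "fastq_rv"):
            # Relative paths are resolved against base_dir, not the CWD.
            path = os.path.join(base_dir, os.path.expanduser(row[col] or ""))
            if not os.path.isfile(path):
                problems.append(f"line {lineno}: {col} not found: {path}")
    return problems
```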

Integrated Debugging Workflow

A systematic approach is required when an error arises in a VTAM run.

Diagram Title: Integrated Decision Tree for Diagnosing VTAM Errors
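The first branch of such a decision tree is usually the type of error raised. A sketch of that triage step follows, assuming the pipeline is driven from Python so that failures surface as exceptions. The category names and hint texts are illustrative, not VTAM output.

```python
# Illustrative next steps for each error class discussed in this guide.
HINTS = {
    "permission": "Run `ls -l` on the file and `ls -ld` on each parent directory; add only the missing bit.",
    "path": "Print the CWD, resolve the configured path against it, and prefer absolute paths for key inputs.",
    "yaml": "Run yamllint, check for tab characters, and quote values that begin with YAML indicators.",
    "other": "Inspect the full traceback and the run log for the failing step.",
}


def triage(exc: BaseException) -> str:
    """Map an exception from a failed run onto one of the error classes above."""
    if isinstance(exc, PermissionError):
        return "permission"
    if isinstance(exc, (FileNotFoundError, NotADirectoryError, IsADirectoryError)):
        return "path"
    # PyYAML's exceptions derive from yaml.YAMLError; matching on the class name
    # avoids importing PyYAML just for this check.
    if any(cls.__name__ == "YAMLError" for cls in type(exc).__mro__):
        return "yaml"
    return "other"
```

Usage: wrap the pipeline call in `try`/`except Exception as exc:` and print `HINTS[triage(exc)]` alongside the original traceback.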

In the high-stakes research environment of metabarcoding for drug discovery, the VTAM pipeline's reliability is paramount. Permission issues, file path ambiguities, and YAML syntax errors represent a significant class of preventable failures. By adopting the diagnostic protocols, validation tools, and structured workflows outlined in this guide, researchers can minimize operational downtime, ensure data integrity, and maintain the rigorous standards required for validating therapeutic targets and biomarkers. Mastery of these fundamentals is not merely operational but foundational to reproducible computational science.