The Zoonomia Project Data Catalog: A Complete Guide to Accessing and Downloading Mammalian Genomics Data

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with essential information for accessing and utilizing the Zoonomia Project data catalog. Covering foundational principles, practical download methods, application workflows, and validation techniques, it serves as a one-stop resource for leveraging this vast comparative genomics resource to accelerate discoveries in evolution, disease genetics, and conservation.

What is the Zoonomia Project? Exploring the World's Largest Mammalian Genomics Resource

The Zoonomia Project is a global consortium building the most comprehensive comparative genomics resource for placental mammals. Its core catalog of multi-species whole-genome alignments and constrained elements is a foundational tool for evolutionary, biomedical, and conservation research. Direct download and programmatic access to these data let researchers identify functionally important genomic regions, link genetic variation to phenotypic diversity, and accelerate therapeutic target discovery.

Project Goals and Scope

The project's objectives are multi-faceted, integrating comparative genomics with phenotypic and ecological data.

Table 1: Primary Goals of the Zoonomia Project

| Goal Category | Specific Objective | Key Metric |

|---|---|---|

| Genomic Resource | Generate and align high-coverage genomes for ~240 mammalian species. | 240 species from 80% of mammalian families. |

| Functional Annotation | Identify evolutionarily constrained elements across mammals. | Millions of base-pairs of constrained non-coding sequence. |

| Phenotypic Insight | Link genomic variation to traits like hibernation, brain size, and sensory abilities. | Quantitative trait loci (QTLs) for diverse phenotypes. |

| Medical Translation | Annotate human genetic variants associated with disease using evolutionary constraint. | Prioritization of variants from genome-wide association studies (GWAS). |

| Conservation | Assess genetic diversity and demographic history for endangered species. | Estimates of historical population sizes and inbreeding. |

Scope: The project encompasses 240 extant species, representing over 80% of mammalian families. The dataset includes whole-genome alignments, constrained element annotations, genome-wide variation (SNPs, indels), and associated metadata (phenotypes, conservation status).

Key Scientific Impact and Findings

Access to the Zoonomia data catalog has driven significant discoveries, summarized quantitatively below.

Table 2: Key Quantitative Findings from Zoonomia Research

| Research Area | Key Finding | Quantitative Result | Citation (Example) |

|---|---|---|---|

| Constraint & Disease | Proportion of constrained bases in human genome. | 10.7% of human genome is under evolutionary constraint. | Zoonomia Consortium, Nature 2020 |

| Trait Genetics | Genomic loci associated with exceptional traits (e.g., brain size). | 455 accelerated regions linked to brain size. | Zoonomia Consortium, Science 2023 |

| Cancer Risk | Correlation between species lifespan and genetic drivers of cancer resistance. | Identified 331 genes under selection in long-lived species. | Tollis et al., Cell Reports 2021 |

| Conservation Genomics | Historical population decline in endangered species. | Saiga antelope population declined ~97% from historical size. | Zoonomia Consortium, Nature 2020 |

| Regulatory Evolution | Conserved non-coding elements with regulatory function. | 4.3% of constrained bases are candidate regulatory elements. | _ |

Experimental Protocols: Core Methodologies

The utility of the Zoonomia catalog is demonstrated through standard analytical workflows.

Protocol: Identifying Evolutionarily Constrained Genomic Elements

Objective: Pinpoint non-coding sequences conserved across millions of years of mammalian evolution, indicating vital biological function.

Workflow:

- Input Data: Multi-species whole-genome alignment (240 species) from the Zoonomia catalog.

- Phylogenetic Modeling: Apply a phylogenetic hidden Markov model (phylo-HMM) such as phastCons. The model uses the neutral evolutionary rate inferred from four-fold degenerate sites as a baseline.

- Scoring & Thresholding: Each alignment column is scored for a deficit of substitutions given the phylogenetic tree. Regions with conservation scores exceeding a statistically significant threshold (e.g., posterior probability > 0.9) are called as "constrained elements."

- Validation: Overlap constrained elements with functional genomic annotations (e.g., ENCODE chromatin marks, promoter assays) to confirm regulatory potential.
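The thresholding step above can be sketched in a few lines. This is a minimal illustration, not phastCons itself (which decodes an HMM rather than thresholding column by column): it assumes per-base posterior probabilities are already available as a list, and the function name, the `min_len` parameter, and the toy scores are hypothetical.

```python
from typing import List, Tuple


def call_constrained_elements(
    scores: List[float], threshold: float = 0.9, min_len: int = 1
) -> List[Tuple[int, int]]:
    """Return half-open (start, end) runs in which every score exceeds threshold.

    Simplified stand-in for element calling; runs shorter than min_len are dropped.
    """
    elements = []
    run_start = None
    for pos, score in enumerate(scores):
        if score > threshold:
            if run_start is None:
                run_start = pos  # open a new run
        elif run_start is not None:
            if pos - run_start >= min_len:
                elements.append((run_start, pos))
            run_start = None  # close the current run
    # Close a run that extends to the end of the sequence.
    if run_start is not None and len(scores) - run_start >= min_len:
        elements.append((run_start, len(scores)))
    return elements


# Toy posterior probabilities (made up for illustration).
toy_scores = [0.1, 0.95, 0.97, 0.99, 0.2, 0.92, 0.3, 0.91, 0.96]
print(call_constrained_elements(toy_scores, threshold=0.9, min_len=2))
# prints [(1, 4), (7, 9)]
```

Half-open coordinates match the BED convention used for the catalog's constraint annotations, so the output can be written to BED without adjusting offsets.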

Protocol: Linking Constrained Elements to Human Disease

Objective: Prioritize non-coding human genetic variants from GWAS using evolutionary constraint.

Workflow:

- Variant Annotation: Annotate GWAS lead SNPs and their linked variants (from LD reference panels) with Zoonomia constraint scores.

- Enrichment Analysis: Statistically test if trait-associated variants are enriched within constrained elements compared to matched control variants (e.g., using a logistic regression model adjusting for confounders like GC content).

- Fine-mapping: For loci showing significant constraint enrichment, apply statistical fine-mapping (e.g., SuSiE) to identify credible sets of causal variants. Variants overlapping constrained elements are up-weighted in the prior.

- Functional Assay: Test prioritized constrained variants using high-throughput reporter assays (e.g., MPRA) in relevant cell types.
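The enrichment step can be illustrated with a simpler test than the logistic regression named above. The sketch below swaps in a 2x2 odds ratio with a one-sided Fisher exact test, which ignores confounders such as GC content; the counts are invented and the function names are hypothetical.

```python
from math import comb


def odds_ratio(a: int, b: int, c: int, d: int) -> float:
    """Odds ratio for a 2x2 table.

    a: trait variants inside constrained elements
    b: trait variants outside constrained elements
    c: control variants inside constrained elements
    d: control variants outside constrained elements
    Assumes b and c are non-zero.
    """
    return (a * d) / (b * c)


def fisher_one_sided(a: int, b: int, c: int, d: int) -> float:
    """P(X >= a) under the hypergeometric null with fixed table margins."""
    trait_total = a + b
    constrained_total = a + c
    n = a + b + c + d
    upper = min(trait_total, constrained_total)
    tail = sum(
        comb(constrained_total, k) * comb(n - constrained_total, trait_total - k)
        for k in range(a, upper + 1)
    )
    return tail / comb(n, trait_total)


# Invented counts: 30 of 100 trait variants vs. 10 of 100 matched controls
# fall inside constrained elements.
print(odds_ratio(30, 70, 10, 90))
print(fisher_one_sided(30, 70, 10, 90))
```

A regression model remains the better choice when matched controls are unavailable, because covariates can be adjusted for directly.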

Visualizations

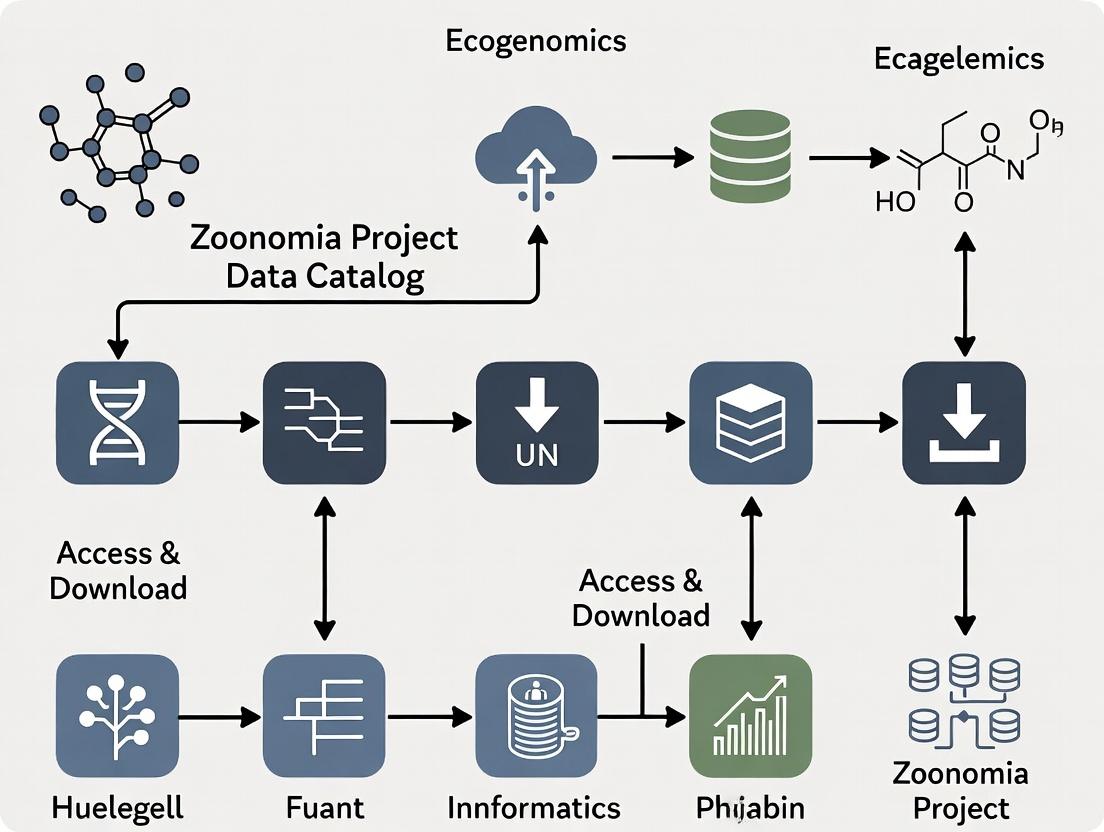

Diagram 1: Zoonomia project data flow from samples to research applications

Diagram 2: Prioritizing disease variants using evolutionary constraint

The Scientist's Toolkit: Research Reagent Solutions

Essential resources for leveraging the Zoonomia Project data.

| Item / Resource | Function / Description | Source / Example |

|---|---|---|

| Zoonomia Data Catalog | Primary resource for downloading multi-alignments, constraint tracks, variant calls, and metadata. | UCSC Genome Browser, European Nucleotide Archive (Project: PRJEB51225). |

| Phylogenetic Analysis Tools (PHAST, phastCons) | Software packages for identifying evolutionarily constrained elements from multiple alignments. | http://compgen.cshl.edu/phast/ |

| Whole Genome Alignment Tools (Cactus) | Progressive genome aligner used to generate the Zoonomia multi-species alignments. | https://github.com/ComparativeGenomicsToolkit/cactus |

| Genome Browser (UCSC) | Interactive platform to visualize Zoonomia constraint, alignments, and custom annotations. | https://genome.ucsc.edu/ |

| Variant Effect Predictor (VEP) | Tool to annotate human variants with Zoonomia constraint scores and other functional data. | Ensembl / gnomAD. |

| Massively Parallel Reporter Assay (MPRA) Libraries | For experimentally testing the regulatory function of prioritized constrained variants. | Commercial synthesis providers (e.g., Twist Bioscience). |

| Mammalian Tissue/Cell Banks | Source of biomaterials for functional validation in non-model mammalian species. | Frozen tissue collections (e.g., San Diego Zoo Wildlife Alliance, ATCC). |

| Phenotypic Databases | Curated species trait data (e.g., body mass, longevity, ecological niche) for correlation with genomic features. | IUCN, PanTHERIA, AnAge. |

This whitepaper details the core data resources of the Zoonomia Project, a comparative genomics initiative that provides a catalog for the study of mammalian evolution, conservation, and human disease. The catalog serves as a foundational resource for researchers, comparative genomicists, and drug development professionals seeking to understand the functional genome through evolutionary constraint and variation.

The Zoonomia Project's data catalog is built upon a consistent, high-quality pipeline for genome assembly, alignment, and annotation across a diverse set of species.

Table 1: Core Quantitative Summary of the Zoonomia Data Catalog (v.1.0)

| Component | Metric | Value/Specification |
|---|---|---|
| Species Scope | Total Number of Species | 240+ |
| | Mammalian Orders Covered | >80% (e.g., Primates, Rodentia, Carnivora, Chiroptera) |
| Genomic Data | Reference-Quality Genomes | >150 |
| | Coverage (Median) | >30X |
| | Assembly Method | Principally PacBio HiFi and Hi-C |
| Multiple Sequence Alignment (MSA) | Total Alignment Size | ~10.8 billion aligned bases (per species) |
| | Number of Alignment Blocks | Millions of conserved elements |
| | Percent of Human Genome in MSA | ~3.5% under evolutionary constraint |
| Annotations | Constraint Elements (mammalian-conserved) | ~4.3 million |
| | Accelerated Regions | Species-specific annotations |
| | Variants (SNPs, indels) | Annotated across all genomes |

Table 2: Key Data File Types and Descriptions

| File Type | Format | Typical Size Range | Primary Content |

|---|---|---|---|

| Reference Genome | FASTA | 2-4 GB | Chromosome/scaffold sequences for each species. |

| Whole-Genome Alignment | HAL, MAF | 100s GB - TB | Multi-species nucleotide alignments. |

| Constraint Annotations | BED, BigBed | MB - GB | Genomic coordinates of evolutionarily constrained elements. |

| Variant Calls | VCF | 10s - 100s GB | Single nucleotide variants and indels for each species. |

| Phylogenetic Tree | Newick | KB | Time-calibrated tree representing species relationships. |

Detailed Methodologies for Core Data Generation

Protocol 1: Genome Assembly and Quality Control

- Sample Acquisition: High molecular weight DNA is extracted from tissue or cell lines held in biobanks (e.g., San Diego Zoo Frozen Zoo).

- Sequencing: Long-read sequencing is performed primarily using PacBio HiFi technology to generate highly accurate reads (>Q20, 15-20kb). Hi-C or Chicago library sequencing is used for scaffolding.

- Assembly: The hifiasm assembler is used for initial contig assembly. Hi-C data are processed with SALSA or 3D-DNA for scaffolding to chromosome level.

- QC & Benchmarking: Assemblies are evaluated using BUSCO (Benchmarking Universal Single-Copy Orthologs) against the mammalian dataset (mammalia_odb10) to assess completeness. Contiguity metrics (N50/L50) and k-mer completeness are also calculated.
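The contiguity metrics in the QC step are simple to compute from a list of contig or scaffold lengths. A minimal sketch follows; the function name and the toy lengths are illustrative only.

```python
from typing import Iterable, Tuple


def n50_l50(lengths: Iterable[int]) -> Tuple[int, int]:
    """Return (N50, L50) for a collection of contig or scaffold lengths.

    N50 is the length of the contig at which the cumulative sum, taken from
    the longest contig downward, first reaches half the assembly size.
    L50 is the number of contigs needed to reach that point.
    """
    ordered = sorted(lengths, reverse=True)
    half_total = sum(ordered) / 2
    running = 0
    for count, length in enumerate(ordered, start=1):
        running += length
        if running >= half_total:
            return length, count
    raise ValueError("no contig lengths supplied")


# Toy assembly: total length 290, so half is 145.
print(n50_l50([100, 80, 50, 30, 20, 10]))  # prints (80, 2)
```

A higher N50 together with a lower L50 indicates a more contiguous assembly; neither metric says anything about correctness, which is why BUSCO and k-mer completeness are reported alongside them.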

Protocol 2: Whole-Genome Multiple Sequence Alignment (MSA)

- Pairwise Alignment: The Cactus progressive aligner is used. It first generates pairwise alignments between evolutionarily close species using the LASTZ aligner.

- Progressive Alignment: Pairwise alignments are progressively merged according to the guide phylogenetic tree using the Cactus algorithm, which minimizes rearrangements and correctly handles inversions.

- Reference Annotation Transfer: Annotations (e.g., genes) from the human reference genome (GRCh38) are projected onto other genomes through the alignment using tools such as halLiftover; hal2maf exports regions of the alignment in MAF format for downstream tools.

- Output: The final alignment is stored in the HAL (Hierarchical Alignment) format, a compressed, reference-free graph-based structure that allows efficient querying.

Protocol 3: Identifying Evolutionarily Constrained Elements

- Extract PhyloP Scores: From the multiple sequence alignment (MSA), phyloP is run in "CONACC" (conservation/acceleration) mode using the species phylogeny and a neutral model to compute per-base scores measuring deviation from neutral evolution.

- Define Elements: Genomic regions with significant conservation scores (phyloP p-value < 1e-4) are clustered into elements using a thresholding and merging approach.

- Filtering: Elements are filtered for minimum length (e.g., >= 20 bp) and overlap with known functional regions (e.g., ENCODE regulatory features, ultra-conserved elements).

- Validation: Constrained elements are tested for enrichment in functional genomic assays (e.g., ChIP-seq peaks, STARR-seq enhancers) and depletion of genetic variants from population sequencing data (e.g., gnomAD).
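The "Define Elements" and "Filtering" steps can be sketched as follows. The sketch assumes phyloP scores are reported as -log10 p-values (positive for conservation), so p < 1e-4 corresponds to a score above 4; the `max_gap` merging rule and all function names are hypothetical choices, not the consortium's published procedure.

```python
import math
from typing import List, Tuple


def significant_positions(phylop_scores: List[float], p_cutoff: float = 1e-4) -> List[int]:
    """Indices whose conservation score implies p < p_cutoff.

    Assumes scores are -log10(p), positive for conservation.
    """
    cutoff_score = -math.log10(p_cutoff)
    return [i for i, score in enumerate(phylop_scores) if score > cutoff_score]


def merge_into_elements(
    positions: List[int], max_gap: int = 0, min_len: int = 20
) -> List[Tuple[int, int]]:
    """Merge sorted positions into half-open elements.

    Positions separated by at most max_gap non-significant bases are joined;
    elements shorter than min_len are discarded.
    """
    elements: List[List[int]] = []
    for pos in positions:
        if elements and pos - elements[-1][1] <= max_gap:
            elements[-1][1] = pos + 1  # extend the current element
        else:
            elements.append([pos, pos + 1])  # start a new element
    return [(start, end) for start, end in elements if end - start >= min_len]


# Toy track: 25 conserved bases, a 3-base gap, 10 conserved bases,
# a 10-base gap, then 4 conserved bases.
toy = [5.0] * 25 + [0.0] * 3 + [5.0] * 10 + [0.0] * 10 + [6.0] * 4
hits = significant_positions(toy)
print(merge_into_elements(hits, max_gap=3, min_len=20))  # prints [(0, 38)]
print(merge_into_elements(hits, max_gap=0, min_len=20))  # prints [(0, 25)]
```

The two calls show how sensitive element boundaries are to the gap tolerance, which is worth reporting alongside any element count.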

Visualizations

Zoonomia Data Pipeline Workflow

Identifying Constrained Genomic Elements

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Resources for Zoonomia-Based Research

| Item | Category | Function/Application |

|---|---|---|

| Cactus Alignment Toolkit | Software | Primary pipeline for generating hierarchical, reference-free whole-genome alignments from hundreds of genomes. |

| HAL (Hierarchical Alignment) | Data Format/API | Graph-based alignment format enabling efficient range queries, extract, and liftover between any two genomes in the tree. |

| phyloP / phastCons | Software | Computes conservation scores from MSAs to identify constrained elements and accelerated regions. |

| Zoonomia Consortium Browser | Web Tool | UCSC Genome Browser mirror with custom tracks for constrained elements, alignments, and variants across all species. |

| AWS S3 Public Dataset | Data Access | Hosts all catalog data (HAL files, MAFs, VCFs) for direct cloud computation or download. |

| Ancestral Genome Reconstruction | Derived Data | Inferred genomes of common ancestors; used to polarize mutations and study ancient sequence evolution. |

| Species-Specific VCFs | Derived Data | Catalog of genetic variants for each non-human genome; crucial for population genetics and trait association studies. |

| Zoonomia Constraint Mask | Annotation | BED file of mammalian-conserved elements; used to prioritize functional non-coding variants in disease studies. |

The Zoonomia Project data catalog provides an unprecedented, systematically generated resource of aligned and annotated mammalian genomes. By offering detailed protocols, accessible data formats, and a suite of derived annotations like constrained elements, it establishes a critical foundation for hypothesis-driven research in comparative genomics, disease genetics, and conservation biology. Its integration into cloud platforms ensures scalable access for the global research community.

Key Scientific Papers and Foundational Insights from the Consortium

This whitepaper synthesizes key findings and methodologies from pivotal Consortium research and shows how the Zoonomia Project data catalog supports comparative genomics in evolutionary biology and biomedical discovery.

The following tables consolidate quantitative insights from foundational Consortium publications.

Table 1: Comparative Genomic Analysis of Constrained Elements

| Metric | Zoonomia (240 mammals) | Previous Studies (e.g., 100-way) | Functional Enrichment (Zoonomia) |

|---|---|---|---|

| Species Covered | 240 placental mammals | ~100 vertebrates | N/A |

| Identified Constrained Elements | ~3.3 million non-coding | ~1 million | - |

| Elements Unique to Mammals | ~1 million | Not Available | - |

| Enrichment in Disease Variants | ~6.5x in constrained elements | ~4x | GWAS variants for neurodevelopment, cancer |

| Cell Type Specificity | N/A | N/A | Neuronal, epithelial, cardiovascular |

Table 2: Phenotypic Association & Disease Variant Discovery

| Trait/Disease Class | Key Model Species | Number of Associated Loci | Notable Candidate Gene |

|---|---|---|---|

| Hibernation/Cold Tolerance | Thirteen-lined ground squirrel, Arctic fox | 114 | FGF21, TRPV3 |

| Cancer Resistance | Naked mole-rat, bowhead whale | 87 | CDKN2A, ERCC1 |

| Metabolic Rate | Bumblebee bat, blue whale | 62 | UCP1, PGC1α |

| Neurodevelopmental Disorders | Human (constrained elements) | 4,552 elements linked | ARID1B, DYRK1A |

Detailed Experimental Protocols

Protocol A: Phylogenetic Modeling and Branch-Length Estimation for Constraint Detection

- Multiple Sequence Alignment (MSA): Use Progressive Cactus with species tree inferred from whole genomes to generate genome-wide MSAs for all 240 species.

- Evolutionary Model Selection: Apply phyloP in "CONACC" (conservation/acceleration) mode across the phylogeny. The model accounts for neutral substitution rate variation across branches and loci.

- Score Calculation: Compute log-likelihood ratio (LLR) scores for each base position under conserved vs. neutral evolutionary models. Positive LLR indicates constraint.

- Thresholding & Annotation: Define constrained elements as contiguous regions with PhyloP p-value < 0.05 (corrected for multiple testing). Annotate overlaps with known regulatory elements (ENCODE, ROADMAP).
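The protocol does not name the multiple-testing correction, so the sketch below uses the Benjamini-Hochberg procedure purely as an assumption. It also assumes phyloP scores are signed -log10 p-values; the function names and example p-values are hypothetical.

```python
from typing import List


def phylop_to_p(score: float) -> float:
    """Convert a signed -log10(p) phyloP score to a p-value (assumed convention)."""
    return 10 ** (-abs(score))


def benjamini_hochberg(p_values: List[float], alpha: float = 0.05) -> List[bool]:
    """Mark which tests pass Benjamini-Hochberg FDR control at level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k with p_(k) <= alpha * k / m.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            max_rank = rank
    # Every test ranked at or below max_rank is declared significant.
    passed = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            passed[idx] = True
    return passed


example_p = [0.001, 0.008, 0.039, 0.041, 0.6]
print(benjamini_hochberg(example_p, alpha=0.05))
# prints [True, True, False, False, False]
```

In a genome-wide setting the list holds one p-value per alignment column, so a vectorized implementation would be preferable to this pure-Python loop.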

Protocol B: In vivo Validation of an Enhancer via Epigenomic Barcoding

- Candidate Selection: Identify ultra-conserved non-coding element (UCNE) with strong PhyloP score and open chromatin signature in relevant mouse tissue (e.g., brain).

- Reporter Construct Design: Clone the candidate UCNE (orthologous sequence from human and mouse) upstream of a minimal promoter driving a fluorescent reporter (e.g., GFP) and a unique DNA barcode.

- Mouse Transgenesis: Generate ~20-50 founder transgenic mouse embryos via pronuclear injection of the pooled barcoded constructs.

- Tissue Analysis: At E14.5, dissect embryos. For each tissue (brain, heart, limb), perform:

  - Fluorescence imaging to assess spatial activity.

  - Bulk DNA extraction and barcode amplicon sequencing to quantify enhancer strength across tissues.

- Data Integration: Correlate barcode read counts with imaging patterns and cross-reference with human single-cell epigenomic data.

Visualizations of Signaling Pathways and Workflows

- Diagram 1 Title: Prioritizing Disease Variants via Evolutionary Constraint.

- Diagram 2 Title: In vivo Enhancer Validation Workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Comparative Genomic & Functional Validation Studies

| Item/Catalog | Function/Application | Key Features |

|---|---|---|

| Progressive Cactus Aligner | Whole-genome multiple alignment of hundreds of species. | Handles large-scale evolutionary distances; outputs HAL format. |

| PhyloP/PHAST Software Suite | Phylogenetic modeling and detection of evolutionary constraint. | Implements CONACC model; outputs LLR scores and p-values. |

| pGL4.23[luc2/minP] Vector | Firefly luciferase reporter for enhancer/promoter activity assays. | Minimal promoter reduces background; high sensitivity. |

| Dual-Luciferase Reporter Assay System | Normalization of transfection efficiency using Renilla luciferase. | Allows sequential measurement of Firefly and Renilla signals. |

| TransIT-LT1 Transfection Reagent | Low-toxicity transfection of mammalian cell lines (e.g., Neuro2a, HEK293). | High efficiency for difficult-to-transfect primary neuronal models. |

| Tol2 Transposon System | Stable genomic integration for in vivo zebrafish enhancer assays. | Efficient, mosaic expression suitable for rapid screening. |

| Mouse C57BL/6 Embryos | In vivo transgenic model for mammalian enhancer validation. | Gold-standard for assessing tissue-specific activity in a whole organism. |

| Zoonomia Constrained Element Track | UCSC Genome Browser track hub of all 3.3M constrained elements. | Enables direct visualization and intersection with custom genomic data. |

The Zoonomia Project, the largest comparative mammalian genomics resource to date, provides a transformative dataset for exploring the tree of life. This catalog, encompassing high-coverage whole-genome sequencing of approximately 240 diverse mammalian species, establishes a foundational framework for three primary use cases: deciphering evolutionary constraints, discovering the genetic basis of traits and diseases, and informing species conservation strategies. Access to and analysis of this data enables researchers to move beyond single-species studies to identify functional elements conserved across millions of years of evolution.

Evolutionary Studies: Uncovering Functional Genomic Elements

Core Methodology: Phylogenetic Analysis and Constraint Scoring

A primary analytical output of the Zoonomia Project is the generation of base-wise conservation metrics, such as the Genomic Evolutionary Rate Profiling (GERP) score. High GERP scores indicate nucleotides that are evolutionarily constrained, suggesting essential functional roles.

Experimental Protocol for Constraint Analysis:

- Multiple Sequence Alignment (MSA): Use the Zoonomia 241-way whole-genome alignment (Cactus alignment). Download via the UCSC Genome Browser (https://hgdownload.soe.ucsc.edu/gbdb/zoonomia/) or the project's data portal.

- Phylogenetic Tree Construction: Employ the provided, highly resolved species tree based on the alignment.

- Evolutionary Rate Modeling: Apply a model (e.g., phyloP, GERP++) that estimates an expected neutral rate of substitution given the tree's branch lengths.

- Constraint Score Calculation: Compute the rejection score by comparing observed to expected substitutions. For GERP++, this is the "Rejected Substitutions" (RS) score. High RS scores in specific genomic regions (e.g., promoters, coding sequences, ultraconserved elements) indicate strong purifying selection.

- Functional Annotation Overlap: Intersect high-constraint regions with existing annotations (ENCODE, FANTOM) to predict function.
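The rejection score described above is the difference between expected and observed substitutions at a site. A minimal sketch of that arithmetic follows, assuming both quantities have already been estimated for each alignment column; the site names and values are invented.

```python
from typing import List, Tuple


def rejected_substitutions(expected: float, observed: float) -> float:
    """RS score: substitutions expected under neutrality minus those observed."""
    return expected - observed


def constrained_sites(
    sites: List[Tuple[str, float, float]], rs_cutoff: float = 2.0
) -> List[str]:
    """Names of sites whose RS score exceeds rs_cutoff.

    Each site is (name, expected_substitutions, observed_substitutions).
    """
    return [
        name
        for name, expected, observed in sites
        if rejected_substitutions(expected, observed) > rs_cutoff
    ]


# Invented example columns.
toy_sites = [("siteA", 5.0, 0.5), ("siteB", 5.0, 4.0), ("siteC", 3.0, 3.5)]
print(constrained_sites(toy_sites))  # prints ['siteA']
```

A negative RS, as for `siteC`, means more substitutions were observed than expected, which is the signature examined when looking for acceleration rather than constraint.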

Key Quantitative Findings from Zoonomia:

Table 1: Evolutionary Constraint Metrics from the Zoonomia Project

| Metric | Description | Representative Finding |

|---|---|---|

| Constrained Bases | Bases under purifying selection (GERP++ RS > 2). | ~4.2% of the human genome is constrained. |

| Ultraconserved Elements | 100% identity across ≥200bp in ≥3 species. | Identified thousands of elements, many non-coding. |

| Accelerated Regions | Lineage-specific rapid evolution (e.g., phyloP acceleration). | Associated with species-specific traits (e.g., brain size in cetaceans). |

Workflow for Identifying Evolutionarily Constrained Elements

The Scientist's Toolkit: Evolutionary Genomics

Table 2: Essential Research Reagents & Tools

| Item | Function |

|---|---|

| Zoonomia Cactus Alignment | Core input for comparative analyses. Provides coordinates for cross-species comparisons. |

| Phylogenetic Tree (Newick format) | Essential for modeling evolutionary relationships and calculating substitution rates. |

| GERP++ / phyloP Software | Command-line tools for computing evolutionary constraint and acceleration scores. |

| UCSC Genome Browser / Ensembl | Visualization platforms to browse constrained regions with public annotation tracks. |

| BedTools | For intersecting constraint regions with genomic features (e.g., genes, enhancers). |

Disease Gene Discovery: From Constraint to Mechanism

Core Methodology: Linking Constraint to Human Disease

Constrained non-coding elements are enriched for pathogenic mutations. Zoonomia data allows prioritization of putatively functional non-coding variants from genome-wide association studies (GWAS) or clinical sequencing.

Experimental Protocol for Variant Prioritization:

- Variant Input: Compile a list of candidate variants (e.g., GWAS lead SNPs, rare non-coding variants from whole-genome sequencing of patients).

- LiftOver Coordinates: Confirm that variants are in human hg38 coordinates, the reference frame of the human-anchored Zoonomia tracks; convert older builds (e.g., hg19) with the UCSC LiftOver tool.

- Extract Constraint Metrics: For each variant position, extract the pre-computed GERP++ RS score and per-species base calls from the alignment.

- Prioritization Filter: Apply a threshold (e.g., GERP++ RS > 2). Variants falling in constrained elements are high-priority candidates for functional disruption.

- Cross-Species Epigenomic Integration: Overlap prioritized variants with epigenomic data (e.g., H3K27ac ChIP-seq) from relevant human cell types or Zoonomia Project cell assays (e.g., ATAC-seq from fibroblasts) to predict regulatory impact.

- Functional Validation: Design luciferase reporter assays where the wild-type and mutant (patient) allele sequences are cloned upstream of a minimal promoter to test for changes in transcriptional activity.
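The extraction and filtering steps of this protocol amount to a score lookup followed by a threshold and a ranking. The sketch below assumes constraint scores have already been loaded into a dictionary keyed by chromosome and position; the variant identifiers, positions, and scores are made up.

```python
from typing import Dict, List, NamedTuple, Tuple


class Variant(NamedTuple):
    variant_id: str
    chrom: str
    pos: int


def prioritize(
    variants: List[Variant],
    scores: Dict[Tuple[str, int], float],
    cutoff: float = 2.0,
) -> List[Tuple[Variant, float]]:
    """Keep variants whose constraint score exceeds cutoff, highest score first.

    Variants without a score (e.g., unaligned positions) are dropped.
    """
    scored = [(v, scores.get((v.chrom, v.pos))) for v in variants]
    kept = [(v, s) for v, s in scored if s is not None and s > cutoff]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Hypothetical candidates and pre-computed scores.
candidates = [
    Variant("varA", "chr1", 100),
    Variant("varB", "chr1", 200),
    Variant("varC", "chr2", 300),
    Variant("varD", "chr3", 400),  # no score available
]
score_table = {("chr1", 100): 1.2, ("chr1", 200): 4.8, ("chr2", 300): 3.1}

for variant, score in prioritize(candidates, score_table):
    print(variant.variant_id, score)
```

For genome-scale inputs, an indexed file format queried by region would replace the in-memory dictionary, but the filter-then-rank logic stays the same.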

Key Quantitative Findings from Zoonomia:

Table 3: Zoonomia Insights into Human Disease Genetics

| Finding | Implication |

|---|---|

| Constrained non-coding elements are ~60x enriched for heritability of common diseases (GWAS). | Provides a filter to pinpoint causal regulatory variants from linked haplotypes. |

| Human genetic variants in the most constrained elements (top 10%) have larger effect sizes. | Links deep evolutionary conservation to phenotypic impact. |

| Lineage-specific accelerated regions can model human disease states (e.g., hibernation adaptations inform metabolic disease research). | Offers natural "knockout" models for disease resilience. |

Variant Prioritization Using Evolutionary Constraint

Conservation Genomics: Assessing Species Vulnerability

Core Methodology: Estimating Genomic Metrics of Risk

Zoonomia enables the calculation of genomic parameters correlated with population collapse and extinction risk, such as genome-wide heterozygosity and inbreeding coefficients.

Experimental Protocol for Genomic Risk Assessment:

- Sample Selection: Identify high-coverage (>30X) genomes from the Zoonomia catalog for the target species and several closely related, non-threatened species as comparators.

- Variant Calling: Process raw sequencing reads (available via the European Nucleotide Archive under project PRJEB5120) through a standardized pipeline: BWA-MEM for alignment to a reference, GATK Best Practices for SNP/indel calling.

- Calculate Genomic Diversity Metrics:

  - Heterozygosity: Calculate per-individual heterozygosity as (total heterozygous sites) / (total callable sites).

  - Runs of Homozygosity (ROH): Use software (e.g., PLINK) to identify long, continuous homozygous segments. Sum the total length of ROH per genome.

  - Genetic Load: Estimate the number of derived, potentially deleterious alleles in constrained genomic elements (using GERP scores) per individual.

- Comparative Analysis: Compare the target species' metrics to those of related, stable populations. Significantly reduced heterozygosity and increased ROH indicate historical bottlenecks and inbreeding.

- Actionable Insight: Integrate genomic metrics with IUCN Red List status and ecological data to inform conservation prioritization and breeding programs.
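The heterozygosity and ROH metrics from this protocol can be sketched as below. The ROH function is a deliberate simplification, not PLINK's sliding-window method: it treats any stretch between consecutive heterozygous sites that is at least `min_roh` long as a run of homozygosity and assumes every base in between is callable. All names and numbers are illustrative.

```python
from typing import List, Tuple


def heterozygosity(n_het_sites: int, n_callable_sites: int) -> float:
    """Per-individual heterozygosity: heterozygous sites / callable sites."""
    if n_callable_sites <= 0:
        raise ValueError("n_callable_sites must be positive")
    return n_het_sites / n_callable_sites


def roh_segments(
    het_positions: List[int], chrom_length: int, min_roh: int = 1_000_000
) -> List[Tuple[int, int]]:
    """Stretches of at least min_roh bases with no heterozygous site (simplified)."""
    boundaries = [0] + sorted(het_positions) + [chrom_length]
    return [
        (left, right)
        for left, right in zip(boundaries, boundaries[1:])
        if right - left >= min_roh
    ]


def total_roh_length(segments: List[Tuple[int, int]]) -> int:
    """Sum of ROH segment lengths for one genome."""
    return sum(end - start for start, end in segments)


# Toy chromosome of 5 Mb with four heterozygous sites.
het_sites = [100, 1_500_000, 1_600_000, 4_000_000]
segments = roh_segments(het_sites, chrom_length=5_000_000)
print(segments)
print(total_roh_length(segments))
print(heterozygosity(len(het_sites), 5_000_000))
```

Comparing `total_roh_length` between the target species and its non-threatened relatives, as the comparative step describes, only makes sense when the same `min_roh` and callable-site definition are used for every genome.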

Key Quantitative Findings from Zoonomia:

Table 4: Genomic Correlates of Extinction Risk

| Genomic Metric | Interpretation | Example from Zoonomia |

|---|---|---|

| Historical Effective Population Size (Nₑ) | Inferred from genome-wide diversity. Low Nₑ indicates past bottlenecks. | The critically endangered vaquita has a historically low Nₑ, predating human pressure. |

| ROH Total Length | Indicator of recent inbreeding. Longer ROH suggests closer relatedness of parents. | Threatened species show significantly more ROH than non-threatened relatives. |

| Genetic Load in Constrained Sites | Count of potentially deleterious alleles. High load reduces adaptive potential. | Species with small historical populations have accumulated higher loads. |

Genomic Assessment of Conservation Risk

Navigating the Official Zoonomia Project Portal and Data Repositories

The Zoonomia Project is the largest comparative genomics resource for mammals, aiming to identify genomic elements crucial for evolution, disease, and conservation. This guide provides a technical roadmap for accessing, downloading, and utilizing its core data for research, particularly within drug development and functional genomics.

Portal Architecture & Access Points

The project data is distributed across several key repositories, each serving a specific data type and access method.

| Repository Name | Primary Host | Data Type | Direct Access URL | Estimated Size |

|---|---|---|---|---|

| Zoonomia Data Release | European Nucleotide Archive (ENA) | Primary alignments (Cactus), Variants | https://www.ebi.ac.uk/ena/browser/view/PRJEB38164 | ~90 TB |

| UCSC Genome Browser Hub | UCSC | Comparative genomics tracks, Browser | https://genome.ucsc.edu/cgi-bin/hgHubConnect | Varies by track |

| Zoonomia Consortium Website | Broad Institute | Metadata, Publications, Overview | https://zoonomiaproject.org/ | NA |

| DNAnexus (Resource Variants) | DNAnexus | Processed variant calls (VCFs) | https://platform.dnanexus.com/projects | ~2 TB |

Core Data Download & Processing Protocols

This section details the methodology for acquiring and processing key datasets for downstream analysis.

Protocol 3.1: Downloading Mammalian Multiple Sequence Alignments (MSAs)

- Access: Navigate to the ENA project PRJEB38164. Locate the "Cactus" alignments under the "File" report.

- Selection: Choose the desired alignment subset (e.g., 240 species, 30-way placental mammals). Files are typically in HAL format.

- Download: Use `aspera` or `wget` for large transfers.

- Processing: Use the HAL toolkit (`hal2maf`, `halStats`) to extract MAF format alignments for a genomic region of interest.
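The processing step can be scripted by assembling a `hal2maf` call for one region. The sketch below only builds and prints the command and does not run it. The file names, the genome label `Homo_sapiens`, and the coordinates are placeholders; genome names should be checked against the output of `halStats` for the downloaded file.

```python
import shlex
from typing import List


def hal2maf_command(
    hal_path: str,
    maf_path: str,
    ref_genome: str,
    ref_sequence: str,
    start: int,
    length: int,
    no_ancestors: bool = True,
) -> List[str]:
    """Build an argument list that extracts one reference region as MAF."""
    cmd = [
        "hal2maf", hal_path, maf_path,
        "--refGenome", ref_genome,      # genome whose coordinates anchor the MAF
        "--refSequence", ref_sequence,  # chromosome or scaffold in that genome
        "--start", str(start),          # 0-based start of the region
        "--length", str(length),        # number of bases to export
    ]
    if no_ancestors:
        cmd.append("--noAncestors")     # skip reconstructed ancestral genomes
    return cmd


# Placeholder inputs for illustration only.
cmd = hal2maf_command(
    "zoonomia.hal", "region.maf", "Homo_sapiens", "chr1", 1_000_000, 50_000
)
print(shlex.join(cmd))
```

Passing the resulting list to `subprocess.run(cmd, check=True)` would execute it; keeping the arguments as a list avoids shell quoting problems with unusual file names.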

Protocol 3.2: Accessing & Analyzing Constrained Elements (Conservation)

- Browser Access: On the UCSC Hub, load the "Zoonomia Conservation" track (e.g., 240-way phyloP scores).

- Data Extraction: Use the UCSC Table Browser tool to download phyloP scores or constrained element annotations (BED format) for a specific genome build (e.g., hg38).

- Analysis: Intersect candidate genomic regions (e.g., GWAS hits) with constrained elements using `bedtools intersect` to prioritize functionally important variants.
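To make the overlap logic of the analysis step concrete, here is a small pure-Python sketch of what reporting each query interval that overlaps at least one constrained element involves. It is a toy stand-in for `bedtools intersect`, not a replacement: it compares every pair of intervals and is only practical for small inputs. The BED records are invented.

```python
from typing import List, Tuple

Interval = Tuple[str, int, int, str]  # chrom, start, end, name


def parse_bed(text: str) -> List[Interval]:
    """Parse tab-separated BED lines (0-based, half-open coordinates)."""
    intervals = []
    for line in text.strip().splitlines():
        chrom, start, end, *rest = line.split("\t")
        intervals.append((chrom, int(start), int(end), rest[0] if rest else ""))
    return intervals


def overlapping(queries: List[Interval], elements: List[Interval]) -> List[Interval]:
    """Return each query that overlaps at least one element, reported once."""
    hits = []
    for query in queries:
        for element in elements:
            same_chrom = query[0] == element[0]
            if same_chrom and query[1] < element[2] and element[1] < query[2]:
                hits.append(query)
                break  # one overlap is enough to report the query
    return hits


# Invented GWAS hits and constrained elements.
gwas_bed = "chr1\t150\t151\thit1\nchr1\t900\t901\thit2\nchr2\t150\t151\thit3"
constraint_bed = "chr1\t100\t200\telemA\nchr2\t500\t600\telemB"

for hit in overlapping(parse_bed(gwas_bed), parse_bed(constraint_bed)):
    print(hit)
# prints ('chr1', 150, 151, 'hit1')
```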

Protocol 3.3: Working with Population Genetic Statistics (PBS, etc.)

- Source: Pre-calculated statistics like Population Branch Statistic (PBS) are available via the DNAnexus resource or supplementary data of Zoonomia publications.

- Download: Requires a DNAnexus account. Data is often in bigWig or BED format.

- Visualization: Load bigWig files into UCSC or IGV for visualization. Use command-line tools like `bigWigToBedGraph` for format conversion.

Visualization: Key Workflows

Data Access and Analysis Decision Workflow

Variant Prioritization Using Conservation Metrics

| Item/Resource | Function/Purpose | Example/Supplier |

|---|---|---|

| HAL Tools | Software suite for manipulating hierarchical alignment (HAL) format files. Used to extract MAF alignments. | https://github.com/ComparativeGenomicsToolkit/hal |

| bedtools | Essential for intersecting, merging, and comparing genomic intervals (BED, BAM, GFF files). | Quinlan Lab, https://bedtools.readthedocs.io/ |

| UCSC Utilities | Command-line tools (bigWigToBedGraph, bedToBigBed) for converting and manipulating common genomics files. | UCSC Genome Browser, http://hgdownload.soe.ucsc.edu/admin/exe/ |

| Variant Effect Predictor (VEP) | Annotates genomic variants with functional consequences (genes, regulatory impact). Critical for interpreting prioritized variants. | Ensembl, https://useast.ensembl.org/info/docs/tools/vep/index.html |

| DNAnexus Platform Account | Required for accessing processed variant call datasets and PBS statistics in a cloud environment. | DNAnexus, Inc. |

| Aspera Connect | High-speed transfer client for efficiently downloading large sequence files from ENA/EBI servers. | IBM Aspera |

How to Access and Download Zoonomia Data: Step-by-Step Methods for Researchers

This technical guide details the primary public genomic data repositories—NCBI, EBI, and UCSC—as essential access points for researchers utilizing the Zoonomia Project catalog. It provides comparative frameworks, access protocols, and integration methodologies to enable efficient, large-scale comparative genomics research with applications in evolutionary biology and therapeutic discovery.

The Zoonomia Project's expansive catalog of mammalian genomic alignments and constrained elements is distributed across three major international data hosts. Understanding the specific access points, data structures, and download protocols at each site is critical for researchers conducting cross-species analyses for trait evolution, disease genetics, and drug target identification.

Core Hosts: Quantitative Comparison of Services

The following table summarizes the key quantitative attributes and Zoonomia-specific holdings for each primary host.

Table 1: Core Data Host Comparison for Zoonomia Project Access

| Feature | NCBI (USA) | EBI (Europe) | UCSC Genome Browser (USA) |

|---|---|---|---|

| Primary Zoonomia Access Point | BioProject PRJNA448733; SRA; Genome Data Viewer | European Nucleotide Archive (ENA) project PRJEB31684; Ensembl Comparative Genomics | UCSC Genome Browser Track Hub (https://zoonomia.genome.ucsc.edu) |

| Core Data Types Hosted | Raw sequence reads (SRA), assembled genomes, Annotation (RefSeq), Variation (dbSNP) | Raw reads (ENA), alignments (EGA where controlled), functional annotation (Ensembl) | Comparative genomics tracks (multiz alignments, conservation scores), genome browser visualization |

| Total Zoonomia Species (Representative) | ~240 mammalian genomes (reference & raw data) | ~240 mammalian genomes (aligned & annotated) | 241 species in multiple alignment & constrained elements tracks |

| Primary Download Mechanisms | fasterq-dump, prefetch (SRA Toolkit), FTP (genomes) | Aspera CLI, wget from FTP, REST API | rsync, bigBed/bigWig tools, direct HTTP for track data |

| API for Programmatic Access | E-utilities (Esearch, Efetch), Datasets CLI | Ensembl REST API, ENA REST API | UCSC REST API (for track hubs, DAS), public MySQL database (legacy) |

| Recommended Use Case | Accessing raw sequencing reads, reference genomes, and associated metadata. | Accessing aligned data, variant calls, and integrated functional genomics annotation. | Visualizing comparative alignments and constrained elements across species; extracting region-specific data. |

Experimental Protocols for Data Access and Integration

Protocol 1: Bulk Download of Zoonomia Alignments from UCSC

Objective: Download multiple-species alignments (MAF files) for specific genomic intervals across all Zoonomia species.

Materials: UNIX-based system, rsync, mafTools, UCSC kent command-line utilities.

Methodology:

- Identify genomic coordinates (e.g., `chr6:41,200,000-41,500,000`) from the UCSC browser session.

- Use `rsync` to mirror the index directory for the 241-way alignment.

- Extract the alignment for a specific region using `mafTools`.

- Parse the MAF file for downstream phylogenetic analysis or constraint scoring.
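
The coordinate step above involves a conversion that is easy to get wrong: positions copied from the browser are 1-based and inclusive, whereas BED-style intervals used by most extraction tools are 0-based and half-open. The following is a minimal sketch of that conversion; the function name `ucsc_region_to_bed` is illustrative and not part of any UCSC tool.

```python
import re
from typing import Tuple


def ucsc_region_to_bed(region: str) -> Tuple[str, int, int]:
    """Convert a browser position such as 'chr6:41,200,000-41,500,000'
    (1-based, inclusive) into a BED interval (0-based, half-open)."""
    match = re.fullmatch(r"([\w.]+):([\d,]+)-([\d,]+)", region.strip())
    if match is None:
        raise ValueError(f"Unrecognized region string: {region!r}")
    chrom = match.group(1)
    start = int(match.group(2).replace(",", ""))
    end = int(match.group(3).replace(",", ""))
    if start < 1 or end < start:
        raise ValueError(f"Invalid coordinates in region: {region!r}")
    # Only the start shifts by one; the end is unchanged in half-open form.
    return chrom, start - 1, end


print(ucsc_region_to_bed("chr6:41,200,000-41,500,000"))
```

The resulting tuple can be written as a tab-separated BED line and passed to whichever region-extraction tool is in use.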

Protocol 2: Programmatic Query of Zoonomia Metadata from EBI/Ensembl

Objective: Retrieve gene-level constraint metrics and orthologous sequences for a target human gene.

Materials: Python/R environment, requests library, Ensembl REST API endpoint.

Methodology:

- Resolve the human gene symbol (e.g., `SON`) to an Ensembl Gene ID (`ENSG00000159140`) via the `/lookup/symbol/homo_sapiens/` endpoint.

- Fetch comparative genomics data using the Orthologs endpoint. (Note: `target_taxon=40674`, the NCBI taxonomy ID for Mammalia, filters for mammalian orthologs.)

- Parse the JSON response to extract per-species branch length and dN/dS values as indicators of evolutionary constraint.

- Cross-reference constrained elements from the Zoonomia track hub using the Overlap endpoint for the gene's genomic coordinates.
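
The lookup and parsing steps above might be sketched as follows. This is an illustration under stated assumptions: the nested response layout (`data` → `homologies` → `target`, with a `dn_ds` field) is assumed from the general shape of Ensembl homology responses and should be checked against a live response, and the sample payload with its identifiers is invented for demonstration only.

```python
ENSEMBL_REST = "https://rest.ensembl.org"


def lookup_url(symbol, species="homo_sapiens"):
    """Build the symbol-lookup URL described in the protocol."""
    return (f"{ENSEMBL_REST}/lookup/symbol/{species}/{symbol}"
            "?content-type=application/json")


def summarize_orthologs(payload):
    """Return (species, target_id, dn_ds) tuples for ortholog entries.

    Assumes the layout {"data": [{"homologies": [{"type": ...,
    "dn_ds": ..., "target": {"species": ..., "id": ...}}]}]}.
    """
    rows = []
    for entry in payload.get("data", []):
        for homology in entry.get("homologies", []):
            if not homology.get("type", "").startswith("ortholog"):
                continue  # skip paralogs and other relationship types
            target = homology.get("target", {})
            rows.append((target.get("species"), target.get("id"),
                         homology.get("dn_ds")))
    return rows


# Invented payload, used only to exercise the parser offline.
sample = {"data": [{"homologies": [
    {"type": "ortholog_one2one", "dn_ds": 0.12,
     "target": {"species": "mus_musculus", "id": "EXAMPLE_ID_1"}},
    {"type": "within_species_paralog", "dn_ds": None,
     "target": {"species": "homo_sapiens", "id": "EXAMPLE_ID_2"}},
]}]}

print(lookup_url("SON"))
print(summarize_orthologs(sample))
```

In practice the payload would come from an HTTP GET (for example with the `requests` library listed under Materials) rather than from a literal dictionary.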

Protocol 3: Accessing Raw Sequencing Reads from NCBI SRA

Objective: Download raw sequencing data for a specific Zoonomia species (e.g., Rhyncholestes raphanurus, Chilean shrew opossum) for re-analysis.

Materials: SRA Toolkit (prefetch, fasterq-dump), sufficient storage space.

Methodology:

- Query NCBI BioProject PRJNA448733 via the SRA Run Selector to identify specific Sample Accessions (e.g., `SAMN08948496`) and the run accessions (SRR...) linked to them.

- Use `prefetch` with a run accession to download the SRA archive file.

- Convert the SRA file to FASTQ format using `fasterq-dump`.

- Perform quality control (FastQC) and alignment (BWA-MEM2, minimap2) to the appropriate reference genome.
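
The download and conversion steps above can be scripted. The sketch below assembles the two SRA Toolkit invocations as argument lists and runs them only when explicitly asked. The run accession `SRR0000000` is a placeholder, not a real Zoonomia run, and the output directory and thread count are arbitrary choices.

```python
import shutil
import subprocess


def sra_commands(run_accession, outdir="fastq", threads=4):
    """Return the prefetch and fasterq-dump command lines for one run."""
    prefetch = ["prefetch", run_accession, "--output-directory", outdir]
    convert = [
        "fasterq-dump", f"{outdir}/{run_accession}",
        "--split-files",            # write paired reads to separate files
        "--outdir", outdir,
        "--threads", str(threads),
    ]
    return [prefetch, convert]


def run_all(commands):
    """Execute each command in order, stopping at the first failure."""
    for command in commands:
        if shutil.which(command[0]) is None:
            raise RuntimeError(f"{command[0]} not found; install the SRA Toolkit")
        subprocess.run(command, check=True)


# Placeholder accession; replace with a run ID from the SRA Run Selector.
for command in sra_commands("SRR0000000"):
    print(" ".join(command))
```

Calling `run_all(sra_commands(...))` performs the actual transfer; printing the commands first is a convenient dry run before committing storage.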

Visualizing Data Access and Integration Workflows

Diagram 1: Zoonomia data access decision workflow.

Diagram 2: Data flow from consortium to researcher via hosts.

| Tool/Resource Name | Category | Primary Function | Application in Zoonomia Research |

|---|---|---|---|

| SRA Toolkit | Data Retrieval | Downloads and converts sequence read archives (SRA) to FASTQ. | Fetching raw sequencing data for re-alignment or variant calling for specific Zoonomia species. |

| Ensembl REST API | Programmatic Access | Provides programmatic access to genomes, annotations, orthologs, and variants. | Automating queries for orthologous sequences, conservation scores, and gene annotations across the 241 species. |

| UCSC Kent Utilities | Genome Analysis | Suite of command-line tools for manipulating genome-scale data (bigBed, bigWig, FASTA). | Extracting sequence and annotation tracks from UCSC-hosted Zoonomia alignments and conservation plots. |

| MAF Tools | Alignment Processing | A suite for manipulating Multiple Alignment Format (MAF) files. | Parsing, subsetting, and analyzing the multi-species genomic alignments provided by the Zoonomia UCSC hub. |

| PhyloP/PHAST Software | Evolutionary Analysis | Computes phylogenetic p-values and conservation scores from multiple alignments. | Quantifying evolutionary constraint using the Zoonomia alignments to identify functionally important genomic elements. |

| BEDTools | Genomic Arithmetic | Intersects, merges, and compares genomic intervals from various file formats. | Comparing Zoonomia-derived constrained elements with experimental (ChIP-seq, ATAC-seq) or disease (GWAS) intervals. |

| Bioconductor (GenomicRanges) | R Programming | Provides efficient data structures and algorithms for genomic interval manipulation in R. | Statistical analysis and visualization of Zoonomia constraint metrics in relation to other genomic datasets. |

Efficient access to the Zoonomia Project's data through its official hosts—NCBI, EBI, and UCSC—enables researchers to leverage the power of comparative genomics at scale. By selecting the appropriate host for specific data types and employing the provided protocols and tools, scientists can accelerate discoveries in genome function, evolutionary history, and human disease mechanisms, directly supporting the translation of genomic insights into therapeutic strategies.

Step-by-Step Guide to Downloading Whole Genome Alignments (MultiZ) and Constraint Elements

The Zoonomia Project represents the largest comparative mammalian genomics resource, aligning and annotating the genomes of over 240 species. Accessing its core data products—the whole genome multiple sequence alignments (generated with the MultiZ aligner) and the evolutionary constraint elements—is fundamental for research in comparative genomics, disease genetics, and drug target discovery. This guide provides the technical protocols for directly acquiring these datasets, enabling researchers to investigate conserved functional elements, identify disease-associated variation, and explore mammalian evolutionary history.

Data Source Access and Current Availability

The primary and authoritative source for Zoonomia Project data is the UCSC Genome Browser. The data is hosted on the UCSC public FTP server and mirrored on Amazon Web Services (AWS). The project's flagship paper, "Zoonomia Consortium, Nature (2020)," remains the central reference.

Table 1: Primary Data Hosts and Access Points

| Host | Base URL/Path | Data Types Available | Notes |

|---|---|---|---|

| UCSC FTP | `ftp://hgdownload.soe.ucsc.edu/goldenPath/.../multiz.../` | MultiZ alignments, constraint tracks, annotations | Primary source; organized by genome assembly. |

| AWS Mirror | `http://zoonomia.s3.amazonaws.com/` | Alignments, constraint, pre-computed analyses | High-bandwidth alternative. |

| UCSC Table Browser | `https://genome.ucsc.edu/cgi-bin/hgTables` | Interactive constraint element data extraction. | GUI for custom querying. |

Step-by-Step Download Protocol for MultiZ Whole Genome Alignments

Protocol: Downloading via UCSC FTP

- Identify the Reference Assembly: Determine the reference genome used for your region of interest (e.g., `hg38` for human).

- Construct the FTP URL: The directory structure is standardized.

  - For the 240-way mammalian alignment (hg38): `ftp://hgdownload.soe.ucsc.edu/goldenPath/hg38/multiz240way/`

- Select and Download Alignment Files:

  - `maf` directories contain the Multiple Alignment Format (MAF) files, split by chromosome.

  - Use command-line tools (`wget`, `curl`) for batch downloading.

- Download the Alignment Index (AliBZ2): Essential for random access.

  - Download the corresponding `*.bbi` (BigBed Index) files.
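
As an illustration of the batch-download step, the sketch below generates one URL per chromosome and a resumable `wget` command for each. It assumes the directory path given in this protocol, a `maf/` subdirectory, and the `chrN.maf.gz` naming pattern; confirm all three against the actual server listing before relying on them.

```python
# Base path as stated in this guide; verify it against the live server.
BASE = "ftp://hgdownload.soe.ucsc.edu/goldenPath/hg38/multiz240way/"


def maf_urls(base=BASE, chroms=None):
    """List per-chromosome MAF URLs under an assumed 'maf/' subdirectory."""
    if chroms is None:
        chroms = [f"chr{i}" for i in range(1, 23)] + ["chrX", "chrY"]
    return [f"{base.rstrip('/')}/maf/{chrom}.maf.gz" for chrom in chroms]


def wget_command(url, dest_dir="."):
    """Build a wget call that resumes partially downloaded files."""
    return ["wget", "--continue", "--directory-prefix", dest_dir, url]


for url in maf_urls(chroms=["chr21", "chr22"]):
    print(" ".join(wget_command(url, dest_dir="alignments")))
```

Generating the list first makes it easy to split the downloads across several jobs or to retry only the chromosomes that failed.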

Protocol: Downloading via AWS S3

- Navigate the S3 Bucket: The bucket is publicly accessible.

- Locate Alignments: Paths are similar to FTP (e.g., `alignments/multiz240way/hg38/`).

- Use AWS CLI or HTTP: For programmatic access, AWS CLI (`aws s3 sync`) or HTTPS `wget` is efficient.

Table 2: Key MultiZ Alignment Files (hg38, 240-way)

| File Type | Description | Example Filename | Estimated Size |

|---|---|---|---|

| MAF (compressed) | Primary alignment data, per chromosome. | `chr1.maf.gz` | 5-15 GB per chr |

| MAF Index (BBI) | Index for fast retrieval from MAF files. | `multiz240way.bbi` | ~50 MB |

| Tree File | Newick tree of species phylogeny. | `species.tree` | <1 MB |

| Alignment Stats | Summary statistics of the alignment. | `alignment_stats.txt` | ~1 MB |

Diagram Title: MultiZ Alignment Download Workflow

Step-by-Step Download Protocol for Evolutionary Constraint Elements

Constraint elements are genomic regions evolutionarily conserved across species, predicted using the phyloP program on the MultiZ alignments.

Protocol: Downloading Constraint Tracks via FTP

- Navigate to Constraint Track Directory:

  - Path: `ftp://hgdownload.soe.ucsc.edu/goldenPath/hg38/phyloP240way/`

- Download BigWig Files: These contain conservation scores.

  - `hg38.phyloP240way.bw`: PhyloP scores (measure of conservation).

  - `hg38.phyloP240way.mod.bw`: Modified scores highlighting constrained elements.

- Download Constraint Element BED Files: Direct annotations of constrained bases.

  - Path: `ftp://hgdownload.../constraint240way/`

  - Files: `constrained_element.bed.gz`: Genomic intervals of constrained elements.

Protocol: Extracting Elements via UCSC Table Browser

- Access Tool: Go to the UCSC Genome Browser → "Tools" → "Table Browser."

- Set Parameters:

- clade: Mammal

- genome: Human (hg38)

- assembly: Dec. 2013 (GRCh38/hg38)

- track: Zoonomia Conservation (phyloP240way) OR Zoonomia Constraint Elements

- region: Select genome, chromosome, or specific coordinates.

- output format: BED or selected fields.

- Output File: Enter a filename and click "get output" to download.

Table 3: Key Constraint Element Files

| File Type | Description | Filename Example | Use Case |

|---|---|---|---|

| PhyloP BigWig | Continuous conservation score across genome. | `hg38.phyloP240way.bw` | Genome-wide conservation profiling. |

| Constraint BED | Discrete genomic intervals of constrained elements. | `constrained_element.bed.gz` | Identifying candidate functional regions. |

| Annotation GTF | Gene annotations for constrained elements. | `constraint_annotations.gtf.gz` | Functional annotation of elements. |

Diagram Title: Constraint Element Acquisition Pathways

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Research Reagent Solutions for Zoonomia Data Analysis

| Item / Tool | Category | Function / Purpose |

|---|---|---|

| UCSC Kent Utilities | Command-line Tools | A suite of tools (bigBedToBed, bigWigToWig, mafTools) essential for converting, filtering, and parsing hosted data formats. |

| HTSlib / BCFtools | Library & Tools | Core library for high-throughput sequencing data; used to process and index compressed genomic files. |

| PyRanges / Bioconductor | Python/R Library | Efficient genomic interval manipulation for overlapping constraint elements with gene annotations or variants. |

| phyloP / phastCons | Analysis Software | Programs used to generate constraint scores from MultiZ alignments. Required for custom calculations. |

| Genome Browser Session | Visualization Tool | Saved UCSC or IGV sessions with loaded Zoonomia tracks enable reproducible visual inspection of regions of interest. |

| AWS CLI / wget | Data Transfer Tool | Essential utilities for reliable, resumable bulk downloads of large genomic datasets. |

| Compute Cluster Access | Infrastructure | High-performance computing or cloud instance (AWS, GCP) is often necessary for processing genome-scale alignment files. |

Accessing and Filtering Variant Call Format (VCF) Files for Population Genomics

This guide provides a technical framework for accessing and filtering Variant Call Format (VCF) files, a cornerstone of population genomics analysis, within the context of the Zoonomia Project. The Zoonomia Project is a comparative genomics initiative that has assembled a catalog of whole-genome sequences from over 240 diverse mammalian species, providing an unprecedented resource for understanding evolutionary constraints, disease genetics, and biodiversity. For researchers and drug development professionals, efficiently querying this vast dataset to identify evolutionarily constrained elements or species-specific variants is a critical skill. This whitepaper details the methodologies for programmatically accessing, parsing, and applying biological filters to VCF data to extract meaningful insights for comparative and medical genomics.

Accessing Zoonomia Project VCF Data

The Zoonomia Project data is hosted on multiple platforms. Primary access is provided through the Zoonomia Consortium Website and the European Nucleotide Archive (ENA). Large-scale variant calls for specific cohorts are often distributed as compressed VCF (.vcf.gz) files with accompanying tabix indices (.tbi).

Key Access Points:

- ENA Project: PRJEBxxxxx (confirm the project accession in the ENA before use).

- AWS Open Data Registry: Selected genomes are available on Amazon S3.

- UCSC Genome Browser: Comparative genomics tracks and data hubs.

Protocol 1.1: Programmatic Data Download

Core Structure of a VCF File

Understanding the VCF specification (v4.3) is essential for effective filtering. Key sections include:

- Header: Contains metadata, sample information, and definitions for INFO and FORMAT fields.

- Body: Each row represents a genomic variant with fixed columns (CHROM, POS, ID, REF, ALT, QUAL, FILTER, INFO, FORMAT) followed by genotype data for each sample.
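
To make the body layout concrete, the sketch below splits a single VCF data line into its fixed columns, an INFO dictionary, and per-sample FORMAT fields. It is a teaching aid built on the column order listed above, not a substitute for a full parser such as those in bcftools or pysam; the example line and sample names are invented.

```python
def parse_vcf_line(line, sample_names):
    """Split one VCF body line into fixed columns, INFO, and sample fields."""
    fields = line.rstrip("\n").split("\t")
    chrom, pos, var_id, ref, alt, qual, filt, info = fields[:8]
    info_dict = {}
    for item in info.split(";"):
        if item in ("", "."):
            continue
        key, sep, value = item.partition("=")
        info_dict[key] = value if sep else True  # flag entries carry no value
    record = {
        "chrom": chrom,
        "pos": int(pos),
        "id": var_id,
        "ref": ref,
        "alt": alt.split(","),
        "qual": None if qual == "." else float(qual),
        "filter": filt,
        "info": info_dict,
        "samples": {},
    }
    if len(fields) > 9:
        keys = fields[8].split(":")  # FORMAT column defines per-sample keys
        for name, column in zip(sample_names, fields[9:]):
            record["samples"][name] = dict(zip(keys, column.split(":")))
    return record


# Invented example line with two samples.
example = "chr1\t100\t.\tA\tG\t50\tPASS\tDP=30;DB\tGT:DP\t0/1:14\t1/1:16"
print(parse_vcf_line(example, ["s1", "s2"]))
```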

Filtering Methodologies and Protocols

Filtering is a multi-step process to reduce noise and prioritize variants of biological interest.

Protocol 3.1: Basic Quality and Hard Filtering using bcftools

Protocol 3.2: Advanced Functional Annotation Filtering

Annotate variants using SnpEff or VEP (Ensembl VEP) prior to filtering.

Protocol 3.3: Population Genetics Filtering with vcftools

Quantitative Data from the Zoonomia Project

Table 1: Zoonomia Project Data Scale and Key Metrics

| Metric | Value | Description |

|---|---|---|

| Total Species | 240+ | Mammalian species with reference-quality genomes |

| Conserved Bases | ~10.7% | Genome proportion under evolutionary constraint |

| Accelerated Regions | 44,000+ | Elements with excess substitutions in specific lineages |

| Catalogs of Variants | Species-specific | Genome-wide VCFs for studied populations/individuals |

Table 2: Common VCF Filtering Parameters and Thresholds

| Filter | Typical Threshold | Purpose |

|---|---|---|

| Quality (QUAL) | > 20 - 30 | Remove low-confidence variant calls |

| Read Depth (DP) | 10 - 30 (per sample) | Exclude low-coverage sites |

| Genotype Quality (GQ) | > 20 | Ensure reliable genotype calls |

| Minor Allele Freq. (MAF) | > 0.01 - 0.05 | Exclude rare variants for population analysis |

| Missing Data | < 10% | Exclude sites with excessive missing genotypes |

| Hardy-Weinberg Eq. (HWE p-value) | > 1e-6 | Filter out genotyping errors |
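
A few of the thresholds in Table 2 can be expressed as a site-level predicate. The sketch below applies the QUAL, missing-data, and minor-allele-frequency criteria to a list of genotype strings. It assumes diploid genotypes and treats every non-reference allele as a single alternate allele, which is a simplification for multiallelic sites; the default cut-offs are taken from the ranges in the table and should be tuned per dataset.

```python
def site_passes(qual, genotypes, min_qual=30.0, max_missing=0.10, min_maf=0.01):
    """Return True if a site meets QUAL, missingness, and MAF thresholds.

    genotypes: strings such as '0/1', '1|1', or './.' (missing).
    """
    if qual is None or qual < min_qual or not genotypes:
        return False
    alleles = []
    missing = 0
    for genotype in genotypes:
        calls = genotype.replace("|", "/").split("/")
        if "." in calls:
            missing += 1
            continue
        alleles.extend(calls)
    if missing / len(genotypes) >= max_missing or not alleles:
        return False
    # Simplification: any non-reference allele counts as the alternate.
    alt_freq = sum(allele != "0" for allele in alleles) / len(alleles)
    maf = min(alt_freq, 1.0 - alt_freq)
    return maf > min_maf


cohort = ["0/0"] * 8 + ["0/1", "1/1"]
print(site_passes(50.0, cohort))   # common variant with good quality
print(site_passes(10.0, cohort))   # fails the QUAL threshold
```

For production filtering, the same criteria are usually applied with bcftools or vcftools, which also handle per-sample depth and genotype quality.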

Visualizing Workflows and Data Relationships

Title: VCF Filtering and Annotation Pipeline for Zoonomia Data

Title: Zoonomia Project Data Access and File Types

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Resources for VCF Analysis

| Item | Function | Source/Example |

|---|---|---|

| bcftools | Core toolkit for VCF/BCF manipulation, filtering, querying, and stats. | http://www.htslib.org/ |

| vcftools | C++ and Perl suite for population genetics comparisons and filtering. | https://vcftools.github.io/ |

| Tabix | Generic indexer for TAB-delimited files, enables rapid region-based querying of VCFs. | http://www.htslib.org/ |

| SnpEff | Fast variant effect prediction and functional annotation tool. | https://pcingola.github.io/SnpEff/ |

| Ensembl VEP | Comprehensive variant annotation, consequence prediction, and integration with dbSNP/ClinVar. | https://useast.ensembl.org/info/docs/tools/vep/ |

| HTSlib | C library for high-throughput sequencing data formats; backbone of bcftools/samtools. | http://www.htslib.org/ |

| PLINK | Whole-genome association analysis toolset; often used after VCF conversion. | https://www.cog-genomics.org/plink/ |

| R/Bioconductor | Statistical analysis and visualization (e.g., VariantAnnotation, ggplot2 packages). | https://www.bioconductor.org/ |

| Zoonomia Data Hub | Centralized access point for project-specific files, metadata, and catalogs. | https://zoonomiaproject.org/ |

Within the context of the Zoonomia Project—the largest comparative mammalian genomics resource—efficient programmatic access to its vast data catalog is paramount for accelerating research in evolutionary biology, conservation, and human disease. This technical guide details the methodologies for accessing, downloading, and processing this data using standard programmatic tools, enabling researchers, scientists, and drug development professionals to integrate comparative genomics into their workflows effectively.

Core Access Methods

API Access (Variant & Alignment Data)

The Zoonomia Project provides RESTful API endpoints for querying metadata and specific genomic features. The primary base URL is https://zoonomiaproject.org/api/v1.

Example Experimental Protocol for Variant Retrieval:

- Query Construction: Identify the target species (e.g., `canis_lupus_familiaris`) and genomic region (e.g., `chr5:1000000-2000000`) from the project's data dictionary.

- API Call: Use `curl` to fetch variant data in VCF format.

- Data Validation: Check file integrity and record count using `bcftools stats`.

- Downstream Analysis: Load the VCF into analysis pipelines (e.g., GATK, SnpEff) for functional annotation.

FTP Access (Bulk Genome Assemblies)

Bulk data, such as whole-genome assemblies and multiple sequence alignments, are hosted on an FTP server for high-volume transfers.

Example Protocol for Downloading a Multi-species Alignment:

- Connect and Navigate: Use an FTP client or command-line `curl` to navigate the directory tree.

- Bulk Download: Download the large MAF (Multiple Alignment Format) file using `wget` with resume capability.

- Decompress and Index: Use `gunzip` and alignment-specific tools like `mafTools` for processing.

Command-Line Tools for Cloud Storage (Alignments & Annotations)

The project leverages Google Cloud Storage (GCS) for publicly accessible data buckets, best accessed with gsutil.

Protocol for Syncing a Data Directory:

- Install and Configure `gsutil`: Follow Google Cloud SDK installation. Authenticate: `gcloud auth login`.

- Explore Bucket Contents: List the bucket's objects with `gsutil ls`.

- Parallelized Download: Use the `-m` (multi-threaded) flag for efficient transfer of large directories.

Quantitative Data Comparison

Table 1: Comparison of Programmatic Access Methods for Zoonomia Data

| Method | Primary Use Case | Typical Data Size | Authentication | Key Command/Tool | Advantages | Limitations |

|---|---|---|---|---|---|---|

| REST API | Targeted queries, metadata, specific variants | KBs - MBs | API Key (OAuth) | `curl`, `requests` (Python) | Precise, real-time queries | Rate-limited, not for bulk data |

| FTP Server | Bulk genome assemblies, historical releases | GBs - TBs | Username/Password | `wget`, `curl`, `lftp` | Standard protocol, good for large files | Slower, less resilient |

| Cloud Storage | Public releases of alignments, annotations | TBs - PBs | GCP Auth / Public | `gsutil` | High speed, checksums, resume | Requires learning cloud toolkit |

Table 2: Representative Zoonomia Project Data File Sizes (as of 2024)

| Data Type | Description | Approx. Size per Species | Format |

|---|---|---|---|

| Reference Genome | Assembled chromosomes | 3 - 5 GB | FASTA, .2bit |

| Multiple Sequence Alignment | 240 mammals, per chromosome | 50 - 200 GB | MAF, HAL |

| Variant Call Format (VCF) | Genomic variants | 500 MB - 2 GB | VCF.gz |

| Conservation Scores (PhyloP) | Evolutionary constraint scores | ~1 GB per chromosome | BigWig |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for Zoonomia Data Access & Analysis

| Item | Function | Example Use in Zoonomia Context |

|---|---|---|

| `curl` | Data transfer tool for various network protocols. | Querying the REST API for specific variant data. |

| `wget` | Non-interactive network downloader. | Recursively downloading directories from the FTP server. |

| `gsutil` | Python CLI for Google Cloud Storage. | Syncing entire public data buckets to a local cluster. |

| `bcftools` | Utilities for VCF/BCF files. | Indexing, querying, and filtering downloaded variant calls. |

| `Kent Utilities` | Bioinformatics toolset for large genomes. | Converting between FASTA, 2bit, and BigWig formats. |

| `HAL Tools` | Toolkit for Hierarchical Alignment format. | Extracting sub-alignments for a clade of interest. |

| Docker/Singularity | Containerization platforms. | Ensuring reproducible analysis environments for pipelines. |

Visualized Workflows

Title: API-Driven Variant Data Retrieval Workflow

Title: Bulk Data Transfer from Cloud to HPC

The Zoonomia Project represents a pivotal genomic resource, providing whole-genome sequence data for over 240 placental mammal species, aligned to the human genome. This vast comparative dataset enables researchers to identify evolutionarily constrained elements, understand genomic architecture, and pinpoint variants associated with human traits and diseases. This technical guide details the process from data acquisition to functional downstream analysis, framed within the broader thesis of enhancing access and utility of the Zoonomia data catalog for biomedical and evolutionary research.

Data Access and Acquisition

Zoonomia data is hosted across multiple repositories. The primary data types include multiple sequence alignments (MSAs), constrained elements, and variant calls.

| Data Type | Repository/Source | Current Release (as of 2024) | Key File Format(s) | Approx. Size (All Species) |

|---|---|---|---|---|

| Multiple Sequence Alignments (MSAs) | UCSC Genome Browser, EBI | Zoonomia Cactus Alignment v1 | HAL, MAF | ~60 TB |

| Conserved/Constrained Elements | Zoonomia Project Website | 2020 Baseline (241 species) | BED, bigBed | ~5 GB |

| Genome-wide Constraint Scores (GERP, phyloP) | UCSC Genome Browser Table Browser | hg38/Conservation (241-way) | bigWig, wigFix | ~200 GB |

| Variant Calls (VCFs) | European Nucleotide Archive (ENA) | PRJEB51225 | VCF, BCF | ~10 TB |

| Ancestral Genome Reconstructions | UCSC Genome Browser | 2020 Release | FASTA, HAL | ~2 TB |

Direct Download Protocols:

- Alignment Data (HAL format):

- Conservation Scores (bigWig): Use `bigWigToBedGraph` from UCSC utilities for conversion to analyzable formats.

- Variant Data (VCF): Use ENA's API or Aspera client for high-speed transfer of large datasets.

Core Preprocessing and Quality Control

Before downstream analysis, ensure data integrity and compatibility.

Protocol 3.1: Validating and Subsetting Multiple Sequence Alignments

Objective: Extract a high-quality, manageable subset of the full 241-species alignment.

Materials: HAL alignment file, hal command-line tools, phyloP scores.

Methodology:

- List all species in the HAL file: `halStats --genomes Zoonomia_241.hal`

- Subset the alignment to a specific clade or set of species of interest.

- Filter alignment blocks for minimal species representation and high-confidence bases.

- Overlap with phyloP scores to retain only phylogenetically informative regions.
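
The block-filtering step above might look like the following once the alignment has been exported to MAF. The sketch reads `a`/`s` lines as laid out in the MAF format and keeps blocks that contain at least a minimum number of distinct species. The threshold of three species and the example sequences are illustrative only, and species names are taken as the part of the source name before the first dot.

```python
def iter_maf_blocks(lines):
    """Yield each alignment block as a list of parsed 's' (sequence) rows."""
    block = []
    for raw in lines:
        line = raw.strip()
        if line == "a" or line.startswith("a "):
            if block:
                yield block
            block = []
        elif line.startswith("s "):
            parts = line.split()
            block.append({
                "src": parts[1], "start": int(parts[2]), "size": int(parts[3]),
                "strand": parts[4], "src_size": int(parts[5]), "text": parts[6],
            })
    if block:
        yield block


def filter_blocks(blocks, min_species=3):
    """Keep blocks that align at least min_species distinct species."""
    for block in blocks:
        species = {row["src"].split(".")[0] for row in block}
        if len(species) >= min_species:
            yield block


# Invented two-block example.
maf_text = """##maf version=1
a score=100
s hg38.chr6 100 5 + 170805979 ACGTA
s panTro6.chr6 120 5 + 169000000 ACGTA
s mm39.chr17 300 4 - 95000000 AC-TA

a score=40
s hg38.chr6 200 3 + 170805979 GGA
s panTro6.chr6 220 3 + 169000000 GGA
"""

kept = list(filter_blocks(iter_maf_blocks(maf_text.splitlines())))
print(len(kept), "block(s) retained")
```

Because both functions are generators, the same code can stream through a multi-gigabyte MAF file without loading it into memory.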

Protocol 3.2: Generating a Species-Specific Constraint Track

Objective: Create a custom BED file of constrained elements for a target species (e.g., human).

- Download the 241-way constrained elements BED file.

- Use `BEDTools` to intersect with gene annotations (e.g., GENCODE v44).

- Annotate elements with GERP++ or phyloP scores using `bigWigAverageOverBed`.
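
The logic of the intersection step can be illustrated in pure Python. The sketch below reports every constrained element that overlaps a gene by at least one base, together with the overlap length, using BED's 0-based half-open convention. It is a quadratic-time illustration of what an interval intersection computes, not a replacement for BEDTools on genome-scale files; the element and gene names are invented.

```python
def intersect(elements, genes):
    """Return (element_name, gene_name, overlap_bp) for overlapping pairs.

    Each interval is (chrom, start, end, name) with half-open coordinates.
    """
    hits = []
    for e_chrom, e_start, e_end, e_name in elements:
        for g_chrom, g_start, g_end, g_name in genes:
            if e_chrom != g_chrom:
                continue
            # Half-open intervals overlap when each starts before the other ends.
            if e_start < g_end and g_start < e_end:
                overlap = min(e_end, g_end) - max(e_start, g_start)
                hits.append((e_name, g_name, overlap))
    return hits


elements = [("chr1", 100, 200, "ce1"), ("chr1", 500, 600, "ce2"),
            ("chr2", 100, 200, "ce3")]
genes = [("chr1", 150, 550, "geneA")]
print(intersect(elements, genes))
```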

Table 2: Key Preprocessing Tools and Functions

| Tool/Software | Primary Function | Critical Parameter |

|---|---|---|

| HAL Tools (hal2maf, halLiftover) | Manipulate Cactus alignments | `--refGenome`, `--targetGenomes` |

| BEDTools (intersect, merge) | Genomic interval arithmetic | `-wa`, `-wb` for annotation |

| Kent Utilities (bigWigToWig, bedGraphToBigWig) | Convert conservation score formats | `-chrom` for region extraction |

| BCFtools (view, filter, norm) | Process VCF files | `-r` for region, `-i`/`-e` for filter expressions (e.g., on QUAL) |

| HTSlib (SAMtools) | Index and query large files | Must index (.tbi, .bai) before querying |

Key Downstream Analysis Methodologies

Here we detail experimental protocols for common analyses using Zoonomia data.

Protocol 4.1: Identifying Lineage-Specific Constraint

Objective: Discover genomic elements with evidence of constraint specific to a primate or carnivore lineage.

- Data: Subset MAF alignments for primates (n=50 species) and carnivores (n=40 species).

- Method: Calculate site-specific conservation scores (e.g., phyloP) separately for each clade using PHAST software.

- Analysis: Identify sites with high score (>3) in primates but low score (<1) in carnivores (and vice-versa) using custom scripts.

- Validation: Overlap lineage-specific constrained sites with lineage-specific accelerated regions (from the Zoonomia resource) and functional genomic marks (e.g., H3K27ac ChIP-seq) from relevant cell types.
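
The "custom scripts" in the analysis step could be as simple as the sketch below, which compares per-site scores from the two clades and applies the thresholds stated above (high > 3, low < 1). The input format, dictionaries keyed by genomic position, is an assumption made for illustration, and the scores are invented.

```python
def lineage_specific_sites(primate_scores, carnivore_scores, high=3.0, low=1.0):
    """Return (primate_only, carnivore_only) position lists.

    A site is lineage-specific when its score exceeds `high` in one clade
    and falls below `low` in the other. Only shared positions are compared.
    """
    primate_only, carnivore_only = [], []
    shared = primate_scores.keys() & carnivore_scores.keys()
    for position in sorted(shared):
        p_score = primate_scores[position]
        c_score = carnivore_scores[position]
        if p_score > high and c_score < low:
            primate_only.append(position)
        elif c_score > high and p_score < low:
            carnivore_only.append(position)
    return primate_only, carnivore_only


primates = {1: 3.5, 2: 0.2, 3: 3.2, 4: 1.5}
carnivores = {1: 0.4, 2: 4.1, 3: 3.3, 4: 0.1}
print(lineage_specific_sites(primates, carnivores))
```

Sites constrained in both clades (position 3 here) are deliberately excluded, since they reflect shared rather than lineage-specific constraint.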

Protocol 4.2: Prioritizing Non-coding GWAS Variants with Constraint

Objective: Filter and prioritize GWAS hits for functional follow-up.

- Data: GWAS summary statistics (e.g., for Alzheimer's disease), Zoonomia 241-way phyloP bigWig, linkage disequilibrium (LD) blocks.

- Workflow:

  a. Lift GWAS variant coordinates to hg38 using `liftOver`.

  b. Annotate each lead SNP and its LD proxies (r² > 0.8) with its maximum phyloP score in a 1 kb window.

  c. Filter: Retain variants where (i) the SNP falls within a Zoonomia constrained element (phyloP > 2) OR (ii) the maximum phyloP in the window > 3.

  d. Intersect filtered variant regions with cell-type-specific epigenomic data (e.g., from ENCODE) to further refine.

- Output: A ranked list of putative functional non-coding variants linked to disease risk.
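
The filter rule in step c and the ranked output can be sketched as below. The record fields `in_constrained_element` and `max_phylop_1kb` are hypothetical names standing in for the annotations produced in step b, the variant IDs are invented, and ranking by window maximum is one reasonable choice rather than a prescribed method.

```python
def prioritize(variants, window_threshold=3.0):
    """Keep variants inside a constrained element OR with a high window
    maximum, then rank by window-maximum phyloP (highest first)."""
    kept = [
        variant for variant in variants
        if variant["in_constrained_element"]
        or variant["max_phylop_1kb"] > window_threshold
    ]
    return sorted(kept, key=lambda v: v["max_phylop_1kb"], reverse=True)


# Invented annotations for three candidate SNPs.
candidates = [
    {"id": "snp_a", "in_constrained_element": True, "max_phylop_1kb": 2.5},
    {"id": "snp_b", "in_constrained_element": False, "max_phylop_1kb": 4.2},
    {"id": "snp_c", "in_constrained_element": False, "max_phylop_1kb": 1.0},
]
print([variant["id"] for variant in prioritize(candidates)])
```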

Title: GWAS Variant Prioritization Workflow Using Constraint

Protocol 4.3: Predicting Causal Genes for Constrained Non-coding Mutations

Objective: Link a prioritized non-coding variant to its target gene.

- Data: Prioritized variant (BED), Hi-C or chromatin interaction data (e.g., from promoter capture Hi-C), gene annotation (GTF).

- Method:

  a. Physical Proximity: Use chromatin interaction maps to identify genes with significant contact frequency with the variant-containing loop anchor.

  b. eQTL Colocalization: Test if the variant is a significant cis-eQTL for a gene in relevant tissue (GTEx data).

  c. Machine Learning Prediction: Input variant features (sequence context, conservation, epigenomic marks) into a model like ExPecto or Enformer to predict transcriptional impact on nearby genes.

- Integration: Generate a consensus gene prediction by intersecting results from at least two independent methods.
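
The integration rule, agreement between at least two independent methods, reduces to a simple vote count. The sketch below assumes each method has already produced a list of candidate gene symbols; the method keys and gene names are placeholders.

```python
from collections import Counter


def consensus_genes(predictions, min_methods=2):
    """Return genes nominated by at least `min_methods` methods.

    predictions: mapping of method name -> list of candidate genes.
    """
    votes = Counter(
        gene
        for genes in predictions.values()
        for gene in set(genes)  # count each method at most once per gene
    )
    return sorted(gene for gene, count in votes.items() if count >= min_methods)


calls = {
    "hi_c": ["GENE_A", "GENE_B"],
    "eqtl": ["GENE_A"],
    "ml_model": ["GENE_C", "GENE_B"],
}
print(consensus_genes(calls))
```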

Title: Integrating Methods to Link Non-coding Variants to Target Genes

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Functional Validation

| Item/Category | Example Product/Model | Function in Zoonomia-Informed Research |

|---|---|---|

| Genome Editing | CRISPR-Cas9 (e.g., Alt-R S.p. HiFi Cas9 Nuclease V3, IDT) | Introduce or correct prioritized human or animal variants in cellular or organoid models. |

| Reporter Assays | pGL4 Luciferase Vectors (Promega) | Test the regulatory activity of wild-type vs. mutant constrained sequences. |

| Long-Range Genomic Interaction Mapping | Hi-C Kit (e.g., Arima-HiC Kit) | Validate predicted enhancer-promoter loops for prioritized variant-gene pairs. |

| High-Throughput Functional Screening | CRISPRi/a sgRNA Libraries (e.g., Calabrese et al., Nature, 2022 library) | Screen hundreds of constrained elements nominated by Zoonomia for phenotypic impact. |

| In Vivo Model Generation | C57BL/6J mice (Jackson Laboratory), zygote microinjection equipment | Create transgenic models with specific mutations in ultra-conserved elements. |

| Single-Cell Multi-omics | 10x Genomics Chromium Single Cell Multiome ATAC + Gene Expression | Profile the simultaneous effect of a conserved element perturbation on chromatin accessibility and transcription. |

Integrating the Zoonomia Project's comparative genomics data into analysis pipelines transforms vast sequence alignments into actionable biological insights. By following the protocols for data acquisition, preprocessing, and downstream analysis outlined in this guide, researchers can robustly identify evolutionarily constrained functional elements, prioritize genetic variants, and formulate testable hypotheses for drug target discovery and understanding disease mechanisms. The continued expansion and deeper annotation of the Zoonomia catalog will further empower these pipelines, bridging the gap between genomic sequence and function.

Solving Common Zoonomia Data Access Issues: Tips for Large-Scale Downloads

The Zoonomia Project represents one of the most ambitious comparative genomics initiatives, providing a comprehensive catalog of mammalian genomic data to accelerate evolutionary and biomedical research. For researchers, scientists, and drug development professionals, accessing this resource involves downloading multi-terabyte datasets. Efficient management of these downloads—optimizing bandwidth, allocating sufficient storage, and ensuring data integrity—is a critical prerequisite for successful research. This guide details the technical protocols for handling these large-scale data transfers, framed within the context of enabling research on the Zoonomia data catalog.

The following table summarizes the current scale of the Zoonomia Project data available for download, based on the latest public release information.

Table 1: Zoonomia Project Data Catalog Download Specifications (Latest Release)

| Dataset Component | Approximate Size | File Format | Primary Access Method |

|---|---|---|---|

| Whole Genome Alignments (240 species) | 7.4 TB | HAL, MAF | FTP, Aspera, AWS S3 |

| Conserved Element Annotations | 45 GB | BED, GFF | FTP, HTTPS |

| Genomic Constraint Scores (PhyloP) | 2.1 TB | BigWig, TSV | FTP, AWS S3 |

| Mammalian-Wide ARs | 12 GB | BED | HTTPS |

| Raw Sequencing Data (SRA subset) | 80+ TB | FASTQ, BAM | NCBI SRA Toolkit |

| Species Tree & Ancestral Sequences | 5 GB | Newick, FASTA | HTTPS |

Bandwidth Management & Download Optimization

Protocol: High-Speed Data Transfer Setup

Objective: To maximize download throughput and reliability for multi-terabyte datasets.

Materials & Software:

- High-speed institutional network connection (≥1 Gbps recommended).

- Command-line terminal (Linux/macOS) or PowerShell (Windows).

- Data transfer client(s): aria2, aspera-cli, aws-cli (if using AWS), or wget/curl.

Methodology:

- Connection Parallelization: Use a download manager that supports segmenting files and multiple concurrent connections. For FTP/HTTPS sources, aria2 is highly effective.

- Protocol Selection: Always prefer protocols designed for bulk data.

  - Aspera (FASP): Use if the provider (e.g., EBI, NCBI) offers aspera-cli endpoints. FASP runs over UDP with its own rate control, so it often sustains higher speeds than TCP-based transfers on high-latency or lossy links.

  - AWS S3 Sync: For data hosted on AWS, use the AWS CLI sync command (aws s3 sync), which copies only new or changed objects.

- Bandwidth Throttling (Optional): To avoid saturating your network, limit bandwidth during work hours.

- Resume Capability: Ensure your client supports automatic resumption of interrupted transfers (-c flag in wget, -C - in curl; built in for aria2 and aspera-cli).
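The parallelization, throttling, and resume points above can be sketched as a small helper that assembles an aria2c command line. The URL and output directory are hypothetical placeholders, and the default of 8 connections is an assumption to tune for your network; the aria2c options themselves (--continue, --split, --max-connection-per-server, --max-overall-download-limit, --checksum) are standard aria2 flags.

```python
import shlex
from typing import List, Optional


def build_aria2_command(url: str, out_dir: str, connections: int = 8,
                        max_rate: Optional[str] = None,
                        sha256: Optional[str] = None) -> List[str]:
    """Return an aria2c argument list for a segmented, resumable download."""
    # aria2c limits --max-connection-per-server to the range 1-16.
    if not 1 <= connections <= 16:
        raise ValueError("connections must be between 1 and 16")
    cmd = [
        "aria2c",
        "--continue=true",                            # resume partial files
        f"--max-connection-per-server={connections}",
        f"--split={connections}",                     # segments per file
        f"--dir={out_dir}",
    ]
    if max_rate:
        # Optional throttle, e.g. "50M" for 50 MiB/s overall.
        cmd.append(f"--max-overall-download-limit={max_rate}")
    if sha256:
        # Let aria2c verify the finished file against a known digest.
        cmd.append(f"--checksum=sha-256={sha256}")
    cmd.append(url)
    return cmd


# Hypothetical URL and path, shown only to illustrate the resulting command.
example = build_aria2_command(
    "https://example.org/zoonomia/alignment.hal",
    "/data/zoonomia/raw",
    max_rate="50M",
)
print(shlex.join(example))
# To run it: subprocess.run(example, check=True)
```

Building the argument list separately from running it keeps the command easy to log and review before a multi-terabyte transfer starts.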

Storage Planning & Hierarchical Management

Protocol: Tiered Storage Strategy for Large Genomics Datasets

Objective: To efficiently allocate fast local storage for active analysis and cheaper archival storage for raw data.

Methodology:

- Capacity Assessment: Calculate required storage as: (Raw Data Size) + (Processed Data Size * 3). Always maintain a minimum of 20% free space on any active volume.

- Tier Implementation:

  - Tier 1 (NVMe/SSD - ~1-10 TB): Store active reference genomes, software, and current project files for I/O-intensive tasks.

  - Tier 2 (HDD/Network-Attached Storage - ~10-100 TB): Primary location for downloaded Zoonomia alignments, constraint files, and intermediate analysis files.

  - Tier 3 (Object/Cloud or Tape Archive - >100 TB): Long-term storage for raw FASTQ/BAM files accessed infrequently. Use lifecycle policies to move data from Tier 2 after a set period.

- Data Organization: Implement a consistent directory structure.
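As a minimal sketch of the capacity rule and the directory-structure advice above: the function applies the stated formula and then sizes the volume so that 20% remains free. The directory names in LAYOUT are hypothetical, chosen only for illustration; the guide does not prescribe a specific layout.

```python
from pathlib import Path


def required_volume_tb(raw_tb: float, processed_tb: float,
                       free_fraction: float = 0.20) -> float:
    """Volume size (TB) that holds raw + 3x processed data with headroom."""
    needed = raw_tb + processed_tb * 3          # formula from the protocol
    return needed / (1.0 - free_fraction)       # keep free_fraction unused


# Hypothetical layout: raw downloads, their checksums, and derived files
# are kept apart so that raw data can be archived or re-verified on its own.
LAYOUT = [
    "raw/alignments",
    "raw/constraint",
    "checksums",
    "processed",
    "logs",
]


def create_layout(root):
    """Create the LAYOUT subdirectories under root and return their paths."""
    created = []
    for sub in LAYOUT:
        path = Path(root) / sub
        path.mkdir(parents=True, exist_ok=True)
        created.append(path)
    return created


# Example: 9.5 TB raw (alignments + PhyloP) and an assumed 1 TB processed.
print(f"Provision at least {required_volume_tb(9.5, 1.0):.1f} TB")
```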

Data Integrity Verification

Protocol: End-to-End Checksum Validation

Objective: To guarantee the downloaded file is bit-for-bit identical to the source, preventing analysis errors due to corruption.

Materials: Command-line tools: shasum/sha256sum, md5sum.

Methodology:

- Obtain Official Checksums: Always download the checksum file provided by the data repository (e.g., file.large.gz.md5, SHA256SUMS.txt).

- Compute Local Checksum: After download, generate the checksum on your local file.

- Compare Checksums: Use a diff tool or direct comparison. When checking with sha256sum -c or md5sum -c, the output should be: file.large.gz: OK

- Automation Script: Integrate validation into download scripts to fail automatically on mismatch.
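The validation steps above might be automated along the following lines. This is a sketch that assumes the repository publishes a checksum file in the common sha256sum layout (hex digest, whitespace, file name); the function names are illustrative, and the actual file naming on a given mirror may differ.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large files never load into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def load_checksums(sums_file):
    """Parse lines of the form '<hex digest>  <filename>'."""
    expected = {}
    for line in Path(sums_file).read_text().splitlines():
        if not line.strip():
            continue
        digest, name = line.split(maxsplit=1)
        # sha256sum marks binary-mode entries with a leading '*'.
        expected[name.strip().lstrip("*")] = digest.lower()
    return expected


def verify(data_dir, sums_file):
    """Raise ValueError on the first mismatch so calling scripts fail fast."""
    for name, digest in load_checksums(sums_file).items():
        actual = sha256_of(Path(data_dir) / name)
        if actual != digest:
            raise ValueError(f"{name}: FAILED (expected {digest}, got {actual})")
        print(f"{name}: OK")
```

Raising an exception rather than returning a flag means a download pipeline stops before any corrupted file reaches analysis.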

Visualization of the Large-File Management Workflow

[Figure: Workflow for Managing Large Genomic Data Downloads]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software & Hardware Solutions for Large-Scale Data Management

| Tool/Reagent | Category | Primary Function | Use Case in Zoonomia Research |

|---|---|---|---|

| Aria2 | Download Client | Multi-protocol, multi-connection download utility. | Downloading HAL alignments and BigWig files over HTTPS/FTP with maximum throughput. |

| Aspera CLI | Download Client | High-speed transfer using the FASP protocol. | Transferring raw sequencing data from EBI/NCBI repositories when available. |

| AWS CLI / s5cmd | Cloud Utility | Efficient interaction with Amazon S3 cloud storage. | Syncing Zoonomia datasets hosted on AWS Open Data Program. |

| sha256sum | Integrity Check | Computes and verifies SHA-256 cryptographic hashes of files. | Validating every downloaded file against the project's provided checksums. |

| md5sum | Integrity Check | (Legacy) Computes and verifies MD5 hashes of files. | Checking older dataset files where SHA-256 may not be provided. |

| GNU Parallel | Process Management | Execute jobs in parallel using one or more computers. | Decompressing or processing thousands of genomic files concurrently post-download. |

| Zstandard (zstd) | Compression | Real-time compression algorithm offering high speed and ratio. | Decompressing project files delivered in .zst format efficiently. |

| Linux / macOS Terminal | Operating System | Command-line interface for scripting and automation. | Orchestrating the entire download, validation, and storage pipeline via scripts. |

| Network-Attached Storage (NAS) | Hardware | Centralized, scalable storage for large volumes. | Providing the primary Tier 2 storage location for the multi-TB Zoonomia catalog. |

| mdadm / ZFS | Storage Software | RAID configuration and filesystem with data integrity. | Managing large arrays of hard drives with redundancy for the downloaded data archive. |

Resolving Format and Compatibility Issues with Alignment (MAF) and Annotation (BED) Files

Within the context of the Zoonomia Project—a comparative genomics consortium analyzing hundreds of mammalian genomes to understand evolutionary constraints and their implications for human health—researchers routinely handle massive genomic datasets. Efficient access, download, and analysis of this catalog demand robust handling of core file formats. Two such critical formats are the Multiple Alignment Format (MAF), used for storing genome multiple alignments, and the Browser Extensible Data (BED) format, for genomic annotations. Incompatibilities between these formats in terms of coordinate systems, reference genomes, and data structure present significant bottlenecks in downstream analysis for comparative genomics and drug target discovery. This technical guide details the sources of these issues and provides standardized protocols for resolution.

Core Format Specifications and Quantitative Comparison

Table 1: Key Characteristics of MAF vs. BED Formats

| Feature | Multiple Alignment Format (MAF) | Browser Extensible Data (BED) |

|---|---|---|

| Primary Purpose | Store multiple genome alignments (blocks of aligned sequences). | Store genomic annotations as discrete features (genes, peaks, etc.). |

| Coordinate System | Zero-based start plus size (end = start + size, exclusive); on - strand rows, start is counted from the reverse-complemented source sequence. | Zero-based, start inclusive, end exclusive. |

| Reference Basis | Alignment is defined relative to a reference sequence for the block. | Features are defined relative to a single reference genome assembly. |

| Strand Information | Explicit + or - in the strand field for each component sequence. | Column 6 specifies strand (+, -, .); column 5 (score) is unrelated. |

| Typical Data Volume | Extremely large (whole-genome alignments). | Variable, often smaller (subset of genomic regions). |

| Standard Columns | a line (block header); s lines: src, start, size, strand, srcSize, text. | Minimum 3: chrom, start, end. The full format uses up to 12 standard columns. |

Table 2: Common Compatibility Challenges and Impact

| Challenge | Description | Impact on Zoonomia Research |

|---|---|---|

| Coordinate Mismatch | MAF uses start and size, BED uses start and end. Direct comparison is error-prone. | Incorrect mapping of variants or conserved elements from alignments to annotations. |

| Reference Genome Version | Zoonomia alignments may use one reference assembly (e.g., hg38) while the user's BED file uses another (e.g., hg19/GRCh37). | Annotations land at the wrong positions unless they are lifted over with the correct chain file, leading to false positives/negatives. |

| Strand Conventions | In MAF, strand determines how start is interpreted (reverse-strand starts are counted from the end of the source sequence). In BED, strand is a label that does not change the coordinates. | Loss of strand-specific information when converting features, critical for regulatory element analysis. |

| Scalability | MAF files are monolithic; extracting annotation-specific regions is computationally intensive. | Slow data extraction hinders high-throughput screening for conserved pharmacogenetic loci. |

Experimental Protocols for Format Resolution

Protocol 1: Extracting Species-Specific Genomic Regions from a MAF File

Objective: Convert a multi-species MAF alignment block into a BED file for a specific target species (e.g., human).

Methodology:

- Tool: Use bx-python (bx.align.maf module) utilities or mafTools.

- Procedure:

  a. Parse MAF: Iterate through MAF blocks. Each s line contains data for one species.

  b. Identify Target Sequence: Filter for lines where the src field matches the target genome and chromosome (e.g., hg38.chr7).

  c. Coordinate Calculation: For the target species line, extract start and size. Calculate end coordinate: end = start + size.

  d. Strand Handling: If strand is -, start is counted on the reverse complement of the source, so convert to forward-strand coordinates using the source sequence length (srcSize): corrected_start = srcSize - (start + size) and corrected_end = srcSize - start. If strand is +, corrected_start = start and corrected_end = end.

  e. Output BED: For each aligned block, write a BED line: chrom, corrected_start, corrected_end, block_id, score, strand.

- Validation: Use bedToBigBed or bedtools intersect with a known validation set to check output integrity.
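The procedure above can be sketched in plain Python. Note that this example parses the MAF text directly instead of using bx-python or mafTools, purely to keep it self-contained; the function name and the block naming scheme are illustrative assumptions. For reverse-strand rows it converts both start and end to forward-strand coordinates.

```python
def maf_to_bed(maf_lines, target_prefix):
    """Yield BED6 tuples for 's' lines whose src starts with target_prefix.

    target_prefix should include the trailing dot, e.g. 'hg38.'.
    """
    block_id = 0
    score = 0
    for line in maf_lines:
        fields = line.split()
        if not fields:
            continue
        if fields[0] == "a":                       # start of a new block
            block_id += 1
            score = 0
            for item in fields[1:]:
                if item.startswith("score="):
                    # BED scores are integers in 0-1000, so clamp (a design
                    # choice for this sketch; rescale if ranking matters).
                    raw = float(item.split("=", 1)[1])
                    score = min(1000, max(0, int(raw)))
        elif fields[0] == "s" and fields[1].startswith(target_prefix):
            src, strand = fields[1], fields[4]
            start, size, src_size = int(fields[2]), int(fields[3]), int(fields[5])
            if strand == "-":
                # MAF counts reverse-strand starts from the end of the source.
                bed_start = src_size - (start + size)
            else:
                bed_start = start
            bed_end = bed_start + size
            chrom = src.split(".", 1)[1]           # 'hg38.chr7' -> 'chr7'
            yield (chrom, bed_start, bed_end, f"block{block_id}", score, strand)


example_maf = """##maf version=1
a score=23262.0
s hg38.chr7 100 10 + 1000 ACGTACGTAC
s mm10.chr6 200 10 - 2000 ACGTACGTAC

a score=5.0
s hg38.chr7 50 20 - 1000 ACGTACGTACACGTACGTAC
"""

for record in maf_to_bed(example_maf.splitlines(), "hg38."):
    print("\t".join(str(value) for value in record))
```

For whole-genome MAF files, pass an open file handle instead of a list of lines so that the file is streamed rather than loaded into memory.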

Protocol 2: Lifting BED Annotations to Match MAF Reference Genome

Objective: Convert a BED file from one genome assembly (e.g., GRCh37/hg19) to the assembly used in the Zoonomia MAF files (e.g., GRCh38/hg38).

Methodology:

- Tool: UCSC liftOver utility with appropriate chain file.

- Procedure:

  a. Obtain Chain File: Download the correct genome assembly conversion chain file from UCSC Genome Browser (e.g., hg19ToHg38.over.chain.gz).

  b. Execute liftOver: liftOver input.hg19.bed hg19ToHg38.over.chain.gz output.hg38.bed unlifted.bed

  c. Analyze Unlifted Regions: The unlifted.bed file contains annotations that could not be mapped. High unlifted rates may indicate data quality issues or incompatible chromosome naming.

  d. Coordinate Sorting: Sort the resulting BED file: sort -k1,1 -k2,2n output.hg38.bed > output.hg38.sorted.bed.

- Validation: Manually inspect key loci in a genome browser (e.g., WashU EpiGenome Browser) to confirm that lifted annotations align with the MAF-derived sequences.
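Steps b through d could be wrapped as follows. This is a sketch with hypothetical helper names: it builds the liftOver command from the protocol, reports the fraction of records that failed to map, and sorts the lifted file in Python. It assumes liftOver is on the PATH when the command is actually run, and that the unlifted file marks failure reasons with lines starting with '#', which are skipped when counting.

```python
def liftover_command(in_bed, chain, out_bed, unlifted_bed):
    """Argument list matching: liftOver in.bed chain out.bed unlifted.bed"""
    return ["liftOver", in_bed, chain, out_bed, unlifted_bed]


def count_records(path):
    """Count BED records, ignoring blank lines and '#' comment lines."""
    with open(path) as fh:
        return sum(1 for line in fh if line.strip() and not line.startswith("#"))


def unlifted_fraction(lifted_bed, unlifted_bed):
    """Fraction of input records that could not be mapped."""
    lifted = count_records(lifted_bed)
    unlifted = count_records(unlifted_bed)
    total = lifted + unlifted
    return unlifted / total if total else 0.0


def sort_bed(in_path, out_path):
    """Sort by chromosome name, then numeric start.

    Uses plain byte-order string comparison on the chromosome column,
    which corresponds to running sort under LC_ALL=C.
    """
    with open(in_path) as fh:
        rows = [line.rstrip("\n").split("\t")
                for line in fh if line.strip() and not line.startswith("#")]
    rows.sort(key=lambda row: (row[0], int(row[1])))
    with open(out_path, "w") as out:
        for row in rows:
            out.write("\t".join(row) + "\n")


cmd = liftover_command("input.hg19.bed", "hg19ToHg38.over.chain.gz",
                       "output.hg38.bed", "unlifted.bed")
print(" ".join(cmd))
# To run it: subprocess.run(cmd, check=True)
```

What counts as a high unlifted rate depends on the annotation set, so any alert threshold placed on unlifted_fraction is a judgment call rather than a fixed rule.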

Visualizing Workflows and Logical Relationships

Diagram 1: MAF-BED Compatibility Resolution Workflow

Diagram 2: MAF Reverse Strand Coordinate Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for MAF/BED File Resolution

| Tool / Resource | Function | Application in Protocol |

|---|---|---|

| bx-python Library | Provides Python modules for handling genomic data structures, including MAF and interval operations. | Core library for parsing MAF files, calculating coordinates, and writing BED files (Protocol 1). |

| UCSC liftOver Utility | Command-line tool for converting genomic coordinates between different assemblies. | Executing the genome assembly conversion for BED files (Protocol 2). |

| UCSC Chain Files | File containing pairwise alignment mappings between two genome assemblies. | Required input for liftOver to define coordinate transformation rules. |

| bedtools Suite | A powerful toolset for genome arithmetic: intersecting, merging, comparing genomic features. | Validating output BED files and performing final integrative analysis. |

| Kent Utilities | A collection of command-line tools (e.g., bedToBigBed, faToTwoBit) for processing genomic data. | Format conversion, indexing, and validation of BED files. |

| Tabix & BGZF | Indexing and compression utilities for rapid random access to coordinate-sorted files. | Managing and querying large resulting BED files efficiently. |

| Zoonomia AWS Mirror | Cloud-hosted, curated repository of Zoonomia Project data files. | Source for downloading official MAF files and associated metadata. |

Optimizing Cloud-Based Access and Computation (e.g., Google Cloud, AWS) for Cost-Efficiency

Within the context of the Zoonomia Project, a comparative genomics initiative analyzing hundreds of mammalian genomes to advance human health and biodiversity conservation, researchers face significant computational challenges. Accessing, downloading, and processing the project's multi-terabyte data catalog—including genome assemblies, multiple sequence alignments, and constrained elements—requires robust, scalable, yet cost-efficient cloud infrastructure. This guide provides a technical framework for optimizing cloud expenditures on platforms like Google Cloud Platform (GCP) and Amazon Web Services (AWS) specifically for large-scale genomic data research.

Core Cost Drivers in Genomic Cloud Analysis

The primary cost components for Zoonomia Project research involve data storage, network egress, and compute cycles for comparative genomics pipelines.

Table 1: Estimated Cost Drivers for Zoonomia Data Analysis (Monthly)