Mastering COG Database Annotation: A Comprehensive Guide for Microbial Genome Analysis in Biomedical Research

This article provides a complete resource for researchers utilizing the Clusters of Orthologous Groups (COG) database for microbial genome functional annotation.


Abstract

This article provides a complete resource for researchers utilizing the Clusters of Orthologous Groups (COG) database for microbial genome functional annotation. We explore the database's core principles and evolution, detail practical annotation methodologies and pipelines, address common analytical challenges and optimization strategies, and present rigorous validation frameworks against alternative tools. Tailored for scientists and drug development professionals, this guide bridges foundational theory with advanced application to enhance microbiome, pathogenesis, and antimicrobial discovery research.

Understanding COGs: The Foundational Framework for Microbial Functional Genomics

Historical Context and Evolution

The Clusters of Orthologous Groups (COG) database, renamed Clusters of Orthologous Genes in recent releases, was launched in 1997 at the National Center for Biotechnology Information (NCBI) as a tool for comparative genomics. Its creation was driven by the completion of the first microbial genomes, which called for a systematic approach to functional annotation and evolutionary classification of gene products. The core idea was to identify orthologous relationships (genes that diverged through a speciation event) across multiple phylogenetic lineages and thereby infer conserved functional modules. Over two decades, COG has gone through several major updates. The latest release (2020) expands the original set of seven complete genomes to 1,309 bacterial and archaeal genomes, reflecting advances in sequencing technology and phylogenetic methodology.

Scope and Core Architecture

The COG database categorizes proteins from complete genomes into clusters presumed to have evolved from a single ancestral gene. Its scope extends across the Tree of Life, though it remains most comprehensive for bacteria and archaea. The architecture is built on the principle of "genome context," combining sequence similarity, phylogenetic patterns, and functional conservation.

Table 1: Key Quantitative Metrics of the COG Database (2020 Update)

| Metric | Description | Count/Percentage |

|---|---|---|

| Number of Genomes Analyzed | Bacterial and archaeal genomes included. | 1,309 (1,187 bacteria, 122 archaea) |

| Total COGs Identified | Unique orthologous clusters. | 4,877 |

| Proteins Classified | Individual proteins assigned to a COG. | ~ 2.2 million |

| Functional Categories | Single-letter functional categories (e.g., [J] Translation, [C] Energy production). | 26 |

| Coverage of Typical Bacterial Genome | Percentage of genes assignable to a COG. | 70-80% |

Core Philosophy and Application in Microbial Genome Annotation Research

The philosophical underpinning of COG is that evolutionary conservation predicts function. This principle is central to microbial genome annotation pipelines, where assigning a new gene to a COG provides an immediate, computationally derived functional hypothesis. Within a thesis on microbial annotation, COG serves as the benchmark for functional prediction, enabling the study of metabolic pathway evolution, horizontal gene transfer, and core versus dispensable genomes. Its system allows for the differentiation between orthologs (direct evolutionary counterparts) and paralogs (genes duplicated within a genome), which is critical for accurate annotation.

Methodological Protocol for COG-Based Annotation

This protocol details the standard workflow for annotating a newly sequenced microbial genome using the COG database.

Experimental Protocol: COG Assignment and Functional Inference

1. Input Preparation:

- Assemble the microbial genome sequence and predict open reading frames (ORFs) using tools like Prodigal or GLIMMER.

- Translate ORFs into protein sequences.

2. Sequence Comparison:

- Perform a BLASTP search of all predicted protein sequences against the COG protein database (e.g., cog-20.fa). Use an E-value cutoff of 0.001.

3. Orthology Assignment (COGNITOR Method):

- For each query protein, identify the best BLAST hit(s) across all genomes in the COG database.

- Apply the COGNITOR best-hit criterion: a query protein is assigned to a COG if its genome-specific best hits fall on members of that COG from several different genomes, that is, if it is consistently more similar to proteins from different species within that COG than to any proteins from outside the cluster.

- Manual curation or refined automated systems (like EggNOG) may resolve complex cases involving paralogs.

4. Functional Categorization:

- Map the assigned COG ID to its predefined functional category (e.g., [J] Translation, ribosomal structure and biogenesis).

- Annotate the genome file (GBK format) with the COG identifier and functional code.

5. Downstream Analysis:

- Calculate genome statistics: percentage of genes in each COG category, core COGs present in all strains, etc.

- Perform comparative genomics by comparing COG category profiles across multiple genomes.

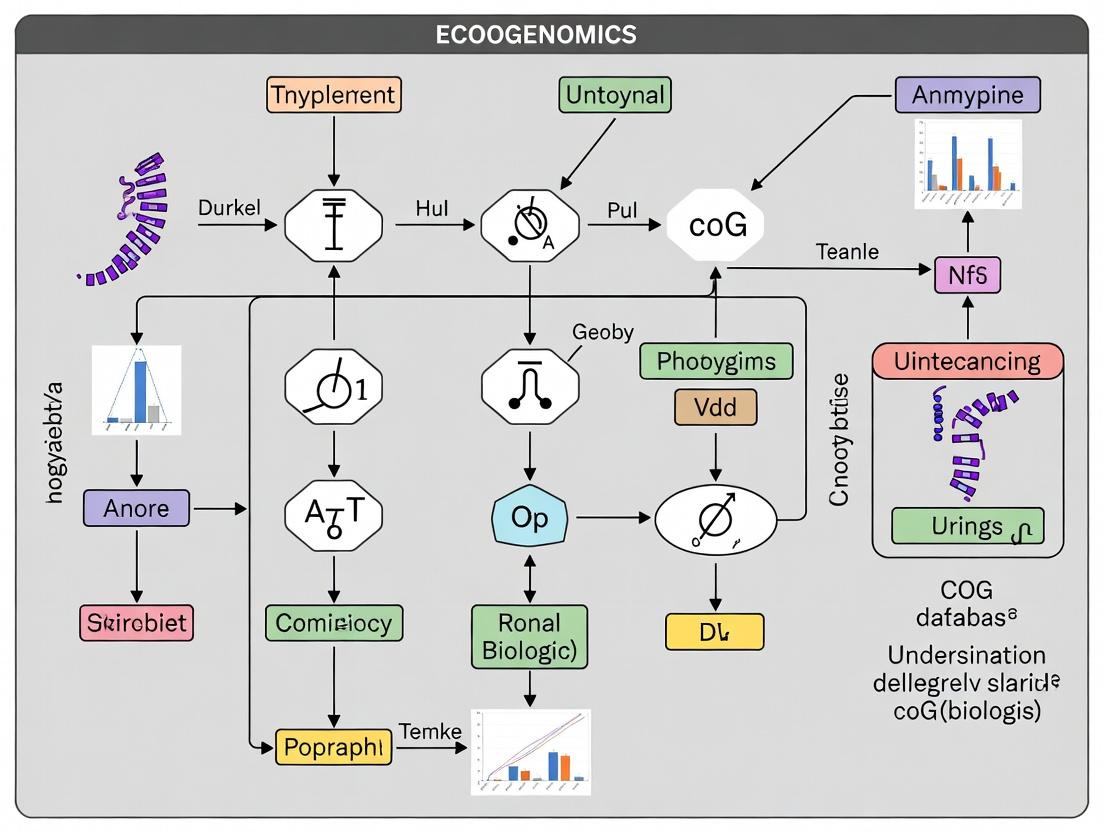

Diagram Title: COG-Based Genome Annotation Workflow
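Steps 2 and 3 of the protocol can be sketched in a few lines of Python. This is a deliberately simplified best-hit assignment, not the full COGNITOR procedure: it takes BLAST tabular output (`-outfmt 6`), applies the E-value cutoff from the text, and gives each query the COG of its single best hit. The `protein_to_cog` dictionary and the gene and protein IDs are hypothetical inputs used for illustration.

```python
import csv
import io


def assign_cogs(blast_tsv, protein_to_cog, max_evalue=1e-3):
    """Map each query to the COG of its best-scoring database hit.

    blast_tsv: file-like object with BLAST tabular output (-outfmt 6).
    protein_to_cog: dict from database protein ID to COG ID (hypothetical
    input; build it from the membership table shipped with the COG release).
    """
    best = {}  # query -> ((evalue, -bitscore), cog_id)
    for row in csv.reader(blast_tsv, delimiter="\t"):
        if not row or row[0].startswith("#"):
            continue
        query, subject = row[0], row[1]
        # Default outfmt 6 layout: column 11 is E-value, column 12 is bit score.
        evalue, bitscore = float(row[10]), float(row[11])
        cog = protein_to_cog.get(subject)
        if cog is None or evalue > max_evalue:
            continue
        rank = (evalue, -bitscore)  # lower E-value first, then higher bit score
        if query not in best or rank < best[query][0]:
            best[query] = (rank, cog)
    return {query: cog for query, (_, cog) in best.items()}


# Toy input: geneA has two significant hits, geneB only a weak one.
example = io.StringIO(
    "geneA\tprot1\t55.0\t200\t90\t0\t1\t200\t1\t200\t1e-50\t180\n"
    "geneA\tprot2\t40.0\t200\t120\t0\t1\t200\t1\t200\t1e-20\t90\n"
    "geneB\tprot3\t30.0\t100\t70\t0\t1\t100\t1\t100\t0.5\t25\n"
)
mapping = {"prot1": "COG0642", "prot2": "COG0745", "prot3": "COG0001"}
assignments = assign_cogs(example, mapping)
print(assignments)
```

A single best hit cannot separate orthologs from close paralogs, which is why the protocol asks for consistent hits across several genomes and manual curation in difficult cases.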

Key Signaling and Metabolic Pathways Elucidated by COG Analysis

COG analysis is instrumental in reconstructing pathways. For instance, the bacterial two-component signal transduction system involves a histidine kinase (COG0642) and a response regulator (COG0745).

Diagram Title: Two-Component Signal Transduction Pathway
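As an illustration of how COG assignments feed pathway reconstruction, the sketch below scans genes in chromosomal order and reports adjacent pairs in which one gene carries COG0642 and the other COG0745. The adjacency rule and the gene identifiers are assumptions made for the example; real two-component systems are not always encoded side by side.

```python
HISTIDINE_KINASE = "COG0642"
RESPONSE_REGULATOR = "COG0745"


def find_two_component_pairs(ordered_genes):
    """Return adjacent (gene, gene) pairs that form a kinase/regulator couple.

    ordered_genes: list of (gene_id, cog_id) tuples in genomic order.
    """
    pairs = []
    for (gene_a, cog_a), (gene_b, cog_b) in zip(ordered_genes, ordered_genes[1:]):
        if {cog_a, cog_b} == {HISTIDINE_KINASE, RESPONSE_REGULATOR}:
            pairs.append((gene_a, gene_b))
    return pairs


# Hypothetical annotated contig: g1/g2 form a pair, g4 is an orphan kinase.
genes = [
    ("g1", "COG0745"),
    ("g2", "COG0642"),
    ("g3", "COG0001"),
    ("g4", "COG0642"),
]
tcs_pairs = find_two_component_pairs(genes)
print(tcs_pairs)
```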

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Tools for COG-Based Studies

| Item | Function/Description | Example/Supplier |

|---|---|---|

| COG Protein Database | The core dataset of clustered orthologous groups for sequence comparison. | NCBI FTP Site (cog-20.fa) |

| BLAST+ Suite | Command-line tools for performing the essential sequence similarity search. | NCBI (blastp) |

| EggNOG-mapper Web Tool | A contemporary, scalable tool for faster COG/NOG assignments. | http://eggnog-mapper.embl.de |

| Prodigal Software | Accurate and fast prokaryotic gene finder for ORF prediction. | (Hyatt et al., 2010) |

| Functional Category Table | Mapping file linking COG IDs to single-letter codes and functional categories. | Included in COG download |

| Comparative Genomics Platform | Software for visualizing COG distributions across genomes. | MicroScope, PhyloProfile |

Current Status and Integration with Modern 'Omics'

The contemporary COG framework is integrated into larger orthology databases like EggNOG and the Orthologous Matrix (OMA). It remains a foundational resource, though current microbial annotation research often uses these extended databases for broader coverage. Its role in a modern thesis is as a curated, phylogenetically informed benchmark against which newer machine-learning annotation tools are validated. The core philosophy of evolutionary conservation continues to guide the functional interpretation of metagenomic and pan-genomic data in drug discovery, particularly in identifying essential bacterial pathways as antibiotic targets.

The Clusters of Orthologous Groups (COG) database represents a cornerstone in microbial genome annotation, providing a systematic framework for the functional classification of gene products from completely sequenced genomes. Within the broader thesis of leveraging comparative genomics for functional prediction and evolutionary analysis, the COG system serves as an essential tool. It enables researchers to infer gene function through evolutionary relationships, moving beyond sequence similarity to identify conserved functional modules across diverse phylogenetic lineages. This technical guide dissects the system's architecture, offering a detailed roadmap for its application in contemporary microbial research and drug target discovery.

Hierarchical Structure and Functional Categories

The COG system is built on a multi-layered hierarchical logic. The fundamental unit is the COG itself, defined as a group of genes from at least three distinct phylogenetic lineages presumed to have evolved from a single ancestral gene (orthologs). These COGs are then aggregated into broader functional categories.

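The system organizes proteins into 25 major functional categories, denoted by single letters and listed in Table 1. These are further grouped into four overarching supercategories. Since the 2014 update, COG also includes a 26th category, X (Mobilome: prophages, transposons).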

Table 1: COG Functional Categories and Supercategories

| Category Code | Category Description | Supercategory |

|---|---|---|

| J | Translation, ribosomal structure and biogenesis | Information Storage and Processing |

| A | RNA processing and modification | Information Storage and Processing |

| K | Transcription | Information Storage and Processing |

| L | Replication, recombination and repair | Information Storage and Processing |

| B | Chromatin structure and dynamics | Information Storage and Processing |

| D | Cell cycle control, cell division, chromosome partitioning | Cellular Processes and Signaling |

| Y | Nuclear structure | Cellular Processes and Signaling |

| V | Defense mechanisms | Cellular Processes and Signaling |

| T | Signal transduction mechanisms | Cellular Processes and Signaling |

| M | Cell wall/membrane/envelope biogenesis | Cellular Processes and Signaling |

| N | Cell motility | Cellular Processes and Signaling |

| Z | Cytoskeleton | Cellular Processes and Signaling |

| W | Extracellular structures | Cellular Processes and Signaling |

| U | Intracellular trafficking, secretion, and vesicular transport | Cellular Processes and Signaling |

| O | Posttranslational modification, protein turnover, chaperones | Cellular Processes and Signaling |

| C | Energy production and conversion | Metabolism |

| G | Carbohydrate transport and metabolism | Metabolism |

| E | Amino acid transport and metabolism | Metabolism |

| F | Nucleotide transport and metabolism | Metabolism |

| H | Coenzyme transport and metabolism | Metabolism |

| I | Lipid transport and metabolism | Metabolism |

| P | Inorganic ion transport and metabolism | Metabolism |

| Q | Secondary metabolites biosynthesis, transport and catabolism | Metabolism |

| R | General function prediction only | Poorly Characterized |

| S | Function unknown | Poorly Characterized |

Table 2: Quantitative Overview of the Latest COG Database Release (eggNOG 6.0)

| Metric | Value | Description |

|---|---|---|

| Total COGs/NOGs | ~4.6 million | Orthologous groups across all taxonomic levels. |

| Reference Genomes | 10,209 | Representative genomes used for core orthology assignment. |

| Covered Species | 1.78 million | Distinct species across all domains of life. |

| Proteins Annotated | 129 million | Total proteins classified within the hierarchical groups. |

| Bacterial COGs (Level 2) | ~85,000 | Orthologous groups specific to the bacterial domain. |

| Core Universal COGs | ~250 | COGs present in >90% of sequenced bacterial genomes. |

Experimental Protocol for COG-Based Genome Annotation

This protocol details a standard computational pipeline for annotating a newly sequenced bacterial genome using the COG framework.

Protocol: Functional Annotation via COG Assignment

Objective: To assign putative functional categories to predicted protein-coding genes in a microbial genome assembly.

Input: A FASTA file of assembled contigs/scaffolds or a FASTA file of predicted protein sequences.

Software & Dependencies: HMMER, DIAMOND, eggNOG-mapper, Python environment.

Procedure:

Gene Prediction: Use a tool such as Prodigal to identify open reading frames (ORFs) and extract protein sequences.

Orthology Assignment: Employ eggNOG-mapper, the current standard tool leveraging the expanded eggNOG/COG databases.

- Download and install the eggNOG-mapper software and necessary databases.

- Run annotation: This step performs sequence searches (HMMER/DIAMOND) against the pre-computed orthology groups.

Data Analysis: The primary output file (annotation.emapper.annotations) will contain:

- Query protein ID

- Assigned COG ID (e.g., COG0001)

- Assigned functional category letter(s) (e.g., J, KM)

- Description

- Statistical scores

Functional Summary: Parse the output to generate a count table of proteins assigned to each COG functional category. This provides a high-level functional profile of the genome.

Validation & Manual Curation: For critical genes (e.g., potential drug targets), verify assignments by examining alignment scores, domain architecture (using Pfam), and consistency of annotation within the predicted operonic context.
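The Functional Summary step can be sketched as follows. The parser assumes the tab-delimited layout written by eggNOG-mapper v2, in which comment lines start with `##`, the header line starts with `#query`, and one column is named `COG_category`; check this against the output of the version you run. The toy input uses a shortened header for readability.

```python
import io
from collections import Counter


def cog_category_counts(handle):
    """Count proteins per COG category letter in an emapper annotations file."""
    counts = Counter()
    header = None
    for line in handle:
        line = line.rstrip("\n")
        if not line or line.startswith("##"):
            continue  # skip blank lines and run metadata
        fields = line.split("\t")
        if line.startswith("#query"):
            header = fields
            continue
        if header is None:
            raise ValueError("header line starting with '#query' not found")
        record = dict(zip(header, fields))
        category = record.get("COG_category", "-")
        if category in ("", "-"):
            counts["unassigned"] += 1
            continue
        # A protein tagged "KM" contributes to both K and M.
        for letter in category:
            counts[letter] += 1
    return counts


example = io.StringIO(
    "## toy emapper output\n"
    "#query\tseed_ortholog\tCOG_category\tDescription\n"
    "p1\tx\tJ\tribosomal protein\n"
    "p2\ty\tKM\ttwo roles\n"
    "p3\tz\t-\t-\n"
)
category_counts = cog_category_counts(example)
print(dict(category_counts))
```

Because multi-letter assignments are counted once per letter, the category totals can exceed the number of annotated proteins; divide by the number of proteins or by the sum of counts depending on which percentage you want to report.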

Visualizing the COG Annotation Workflow and Logic

Diagram 1: COG annotation workflow

Diagram 2: Hierarchical structure of COG system

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for COG-Based Research

| Item/Tool Name | Provider/Resource | Function in COG Annotation Research |

|---|---|---|

| eggNOG-mapper v2+ | http://eggnog-mapper.embl.de | Core software for fast, genome-scale functional annotation using pre-computed orthology groups from eggNOG/COG databases. |

| eggNOG 6.0 Database | eggNOG Consortium | The underlying, expanded database containing hierarchical orthology groups, functional descriptions, and evolutionary histories across all life forms. |

| HMMER Suite (v3.3) | http://hmmer.org | Toolkit for profile hidden Markov model searches, used for sensitive detection of remote homologs during orthology assignment. |

| DIAMOND | https://github.com/bbuchfink/diamond | Ultra-fast protein sequence aligner, used as an alternative to BLAST for large-scale searches against protein databases. |

| Prodigal | https://github.com/hyattpd/Prodigal | Fast, reliable gene-finding software for prokaryotic genomes, generating the initial protein sequences for annotation. |

| COG Functional Category Table | NCBI/eggNOG Website | Reference table (as in Table 1 of this guide) used to interpret the single-letter category codes assigned to each protein. |

| Custom Python/R Scripts | Researcher-developed | Essential for parsing large annotation output files, generating summary statistics, and creating custom visualizations of the functional profile. |

| High-Performance Computing (HPC) Cluster or Cloud Instance | Institutional or AWS/GCP | Necessary computational resources to run annotation pipelines on large genomes or metagenomic datasets within a practical timeframe. |

This whitepaper, framed within a broader thesis on COG database microbial genome annotation research, explores how Clusters of Orthologous Groups (COG) analysis transcends mere functional cataloging. It provides profound biological insights into microbial evolution, from deciphering the conserved core genome essential for survival to identifying genetic determinants that facilitate specialization and niche adaptation. This systematic approach is foundational for comparative genomics and pangenome studies, offering a framework to link genotype with ecological phenotype.

The Core Genome: Unveiling Essential Life Functions

The core genome, comprised of genes present in all strains of a species or genus, is elucidated through COG comparison. Analysis consistently reveals that core functions are dominated by housekeeping roles.

Table 1: Representative Core Genome COG Categories Across Bacterial Genera

| COG Category Code | Category Description | Typical % in Core Genome | Key Functions |

|---|---|---|---|

| J | Translation, ribosomal structure/biogenesis | 15-25% | rRNA processing, tRNA charging, peptide bond formation. |

| F | Nucleotide transport/metabolism | 5-10% | Purine/pyrimidine synthesis, salvage pathways. |

| H | Coenzyme transport/metabolism | 5-8% | Synthesis of vitamins, prosthetic groups, carriers. |

| C | Energy production/conversion | 10-15% | Oxidative phosphorylation, TCA cycle, electron transport. |

| O | Posttranslational modification/protein turnover | 5-10% | Chaperones, proteases, protein folding/repair. |

| E | Amino acid transport/metabolism | 8-12% | Biosynthesis and transport of amino acids. |

Experimental Protocol: Core Genome Identification via COG Annotation

- Genome Acquisition & Quality Control: Assemble high-quality, closed genomes for multiple strains (e.g., 10-100) of a target microbial species using Illumina/Nanopore hybrid assembly. Assess quality with CheckM (completeness >95%, contamination <5%).

- Proteome Prediction: Use Prodigal to predict all protein-coding sequences (CDS) for each genome.

- COG Assignment: Search all CDS with RPS-BLAST against the COG profiles in the CDD database, or with DIAMOND against the COG protein sequences, using an E-value cutoff of 1e-5. Assign the best-hit COG ID and functional category to each protein.

- Pangenome Calculation: Use specialized software (e.g., Roary, Panaroo) to cluster orthologous genes. Input includes the GFF3 files and COG annotations for all strains.

- Core Genome Definition: Extract the set of gene clusters (orthologs) present in ≥99% (strict) or ≥95% (soft core) of the analyzed strains. Summarize the COG category distribution of this core set.

Title: Workflow for Core Genome COG Analysis
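The Core Genome Definition step reduces to a threshold on the fraction of strains that carry each gene cluster. The sketch below applies the strict (≥99%) and soft (≥95%) cutoffs from the protocol to a presence map and then summarizes the COG categories of the core set. The cluster names, strain names, and the `cluster_to_category` mapping are hypothetical; in practice they would come from the pangenome tool's presence/absence table and the COG annotation.

```python
from collections import Counter


def classify_clusters(presence, n_strains, strict=0.99, soft=0.95):
    """Split gene clusters into core, soft core, and accessory sets.

    presence: dict mapping cluster ID to the set of strains carrying it.
    """
    result = {"core": [], "soft_core": [], "accessory": []}
    for cluster, strains in sorted(presence.items()):
        fraction = len(strains) / n_strains
        if fraction >= strict:
            result["core"].append(cluster)
        elif fraction >= soft:
            result["soft_core"].append(cluster)
        else:
            result["accessory"].append(cluster)
    return result


def category_distribution(clusters, cluster_to_category):
    """Count COG categories for a list of clusters."""
    return Counter(cluster_to_category.get(c, "unassigned") for c in clusters)


# Toy dataset with 20 strains.
strain_names = [f"strain{i}" for i in range(20)]
presence = {
    "cluster_A": set(strain_names),       # 20/20 strains
    "cluster_B": set(strain_names[:19]),  # 19/20 strains
    "cluster_C": set(strain_names[:5]),   # 5/20 strains
}
partition = classify_clusters(presence, n_strains=len(strain_names))
core_profile = category_distribution(
    partition["core"], {"cluster_A": "J", "cluster_B": "C", "cluster_C": "V"}
)
print(partition, dict(core_profile))
```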

Niche Adaptation: Decoding the Accessory and Unique Genomes

Genes absent from the core (accessory/unique) are primary drivers of niche adaptation. COG analysis of these variable genomes highlights categories enriched in environmental interaction.

Table 2: COG Categories Frequently Enriched in Accessory Genomes of Niche-Adapted Pathogens

| COG Category Code | Category Description | Association with Niche Adaptation | Example Functions |

|---|---|---|---|

| G | Carbohydrate transport/metabolism | Carbon source utilization | Pectin degradation (plant pathogen), lactose fermentation (gut commensal). |

| P | Inorganic ion transport/metabolism | Survival in extreme environments | Heavy metal resistance (e.g., Cu, Zn), acid tolerance islands. |

| Q | Secondary metabolite biosynthesis | Defense, competition, signaling | Antibiotics, siderophores, pigments. |

| V | Defense mechanisms | Host evasion & persistence | Restriction-modification systems, toxin-antitoxin systems, capsule synthesis. |

| U | Intracellular trafficking/secretion | Host-pathogen interaction | Type III-VI secretion system effectors, adhesins. |

| N | Cell motility | Colonization & dissemination | Flagellar biosynthesis, chemotaxis proteins. |

Experimental Protocol: Identifying Niche-Specific COG Enrichment

- Comparative Cohort Design: Assemble two groups of genomes: one from a specific niche (e.g., clinical isolates) and a control from a different environment (e.g., environmental isolates).

- COG Annotation & Pangenome Partition: Perform annotation as in the core genome protocol above. Classify genes into Core, Accessory (present in 15-95% of strains), and Unique (<15%) for the entire dataset.

- Statistical Enrichment Analysis: Using the Accessory/Unique gene sets from each cohort, perform a Fisher's exact test or chi-squared test on the counts of genes per COG category. Correct for multiple testing (Benjamini-Hochberg).

- Functional Validation: For enriched COGs (e.g., secondary metabolism, 'Q'), construct gene knockout mutants and compare fitness (growth curve, competitive index) between mutant and wild-type in the purported niche condition (e.g., low iron, host cell model).
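The Statistical Enrichment Analysis step can be illustrated with a dependency-free sketch: a two-sided Fisher's exact test on a 2x2 table per COG category, followed by Benjamini-Hochberg adjustment. The cohort counts are invented for the example. In a real analysis an established statistics library would normally be used; the pure-Python version is shown only to make the calculation explicit.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of seeing x in the top-left cell.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum all tables that are no more likely than the observed one.
    total = sum(
        p for p in (prob(x) for x in range(lo, hi + 1)) if p <= p_obs * (1 + 1e-9)
    )
    return min(1.0, total)


def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def category_enrichment(niche_counts, control_counts):
    """Return {category: (p_value, adjusted_p_value)} for two cohorts."""
    niche_total = sum(niche_counts.values())
    control_total = sum(control_counts.values())
    categories = sorted(set(niche_counts) | set(control_counts))
    pvals = []
    for cat in categories:
        a = niche_counts.get(cat, 0)
        c = control_counts.get(cat, 0)
        pvals.append(
            fisher_exact_two_sided(a, niche_total - a, c, control_total - c)
        )
    return dict(zip(categories, zip(pvals, benjamini_hochberg(pvals))))


# Invented accessory-gene counts per COG category in each cohort.
enrichment = category_enrichment({"Q": 30, "J": 70}, {"Q": 10, "J": 90})
print(enrichment)
```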

Signaling and Regulation: A Network View

COG analysis often reveals coordinated adaptation through regulatory systems. A key pathway is the EnvZ/OmpR two-component system, which regulates outer membrane porin expression (OmpF/OmpC) in response to osmolarity and is frequently identified in variable genomes.

Title: EnvZ/OmpR Osmotic Adaptation Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for COG-Based Genomic Research

| Item | Function/Application | Key Provider/Example |

|---|---|---|

| CDD & COG Database | Source of curated profiles for functional annotation via RPS-BLAST. | NCBI's Conserved Domain Database (CDD). |

| Prodigal Software | Reliable, fast prediction of protein-coding genes in bacterial/archaeal genomes. | Hyatt et al., BMC Bioinformatics. |

| Roary/Panaroo | High-speed pangenome pipeline; clusters orthologs, identifies core/accessory genome. | Page et al., Bioinformatics (Roary). |

| DIAMOND | Ultra-fast protein sequence aligner for large-scale annotation against COG databases. | Buchfink et al., Nature Methods. |

| EggNOG-Mapper | Web/CLI tool for functional annotation, including COGs, from protein sequences. | Cantalapiedra et al., Mol. Biol. Evol. |

| CheckM/CheckM2 | Assesses genome completeness and contamination using lineage-specific marker sets. | Parks et al., Genome Research (CheckM). |

| Anti-Flagellin Antibody | Validates motility phenotype predicted by enrichment in COG category 'N'. | Commercial (e.g., Invivogen, Sigma). |

| Iron-Depleted Culture Media | Functional validation of siderophore biosynthesis genes (often in COG category 'Q'). | Chelex-treated media or specific formulations (e.g., RPMI + apotransferrin). |

The Clusters of Orthologous Groups (COG) database, initiated by Roman Tatusov and colleagues in 1997, established the foundational paradigm for comparative genomics and functional annotation of prokaryotic genomes. This framework has evolved into the eggNOG (evolutionary genealogy of genes: Non-supervised Orthologous Groups) database, a cornerstone resource for microbial genome annotation within modern bioinformatics. This whitepaper contextualizes this evolution within the ongoing thesis of leveraging orthology for predicting gene function, elucidating evolutionary pathways, and identifying novel drug targets in microbial genomes.

Historical Evolution: Quantitative Milestones

The transition from COG to eggNOG represents significant scaling in genomic data handling, algorithm sophistication, and functional coverage.

Table 1: Quantitative Evolution from COG to eggNOG

| Feature | COG (Original 1997) | eggNOG 6.0 (2023) | Change Factor |

|---|---|---|---|

| Number of Genomes | 7 (5 Bacteria, 1 Archaeon, 1 Eukaryote) | 13,838 (Viruses, Archaea, Bacteria, Eukaryotes) | ~1,977x |

| Number of Proteins | ~50,000 | 67.6 Million | ~1,352x |

| Core Orthologous Groups | 720 COGs | 1.9 Million Hierarchical Orthologous Groups | ~2,639x |

| Functional Annotation | 17 Functional Categories | GO Terms, KEGG, SMART, Pfam, CAZy, CARD, MEROPS | Multi-Domain |

| Update Mechanism | Static Releases | Continuous Integration (eggNOG-mapper updates) | Dynamic |

Core Technical Architecture & Methodology

eggNOG Construction Workflow

The modern eggNOG framework employs a sophisticated, automated pipeline for constructing orthologous groups.

Experimental Protocol: eggNOG Hierarchical Orthology Inference

- Data Acquisition: All available proteomes from UniProt, Ensembl, and RefSeq are collected.

- Sequence Clustering (SIMAP): All-vs-all protein similarity comparisons are performed using DIAMOND/MMseqs2. A similarity network is built based on bi-directional best hits and alignment metrics (E-value < 1e-5, alignment coverage > 80%).

- Hierarchical Clustering: Proteins are clustered into families using the HMM-FAST/CCD algorithm across two taxonomic levels:

- Level 1: euNOGs - Clusters within major taxonomic groups (e.g., Bacteria, Archaea).

- Level 2: metaNOGs - Clusters derived from the entire set of organisms, capturing deeper evolutionary relationships.

- Tree and HMM Generation: For each cluster, a multiple sequence alignment (MSA) is built using MAFFT. A phylogenetic tree is inferred with FastTree. A consensus Hidden Markov Model (HMM) profile is built from the MSA using hmmbuild.

- Functional Annotation: Functional terms from Gene Ontology (GO), KEGG Orthology (KO), and Carbohydrate-Active Enzymes (CAZy) are transferred to clusters via a majority-rule consensus from annotated member proteins.

- Database Deployment: Results are stored in a MySQL/PostgreSQL database with a REST API (http://eggnog6.embl.de) for programmatic access.

Diagram 1: eggNOG Construction Pipeline
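The bi-directional best hit criterion used in the Sequence Clustering step can be sketched as below. This shows only the reciprocity check between two proteomes and is not the eggNOG pipeline itself; the hit lists are assumed to have been filtered already by the E-value and coverage thresholds given in the protocol, and the protein IDs and scores are invented.

```python
def best_hits(hits):
    """Return {query: subject} keeping the highest-scoring subject per query.

    hits: iterable of (query, subject, bitscore) tuples.
    """
    best = {}
    for query, subject, score in hits:
        if query not in best or score > best[query][1]:
            best[query] = (subject, score)
    return {query: subject for query, (subject, _) in best.items()}


def reciprocal_best_hits(a_vs_b, b_vs_a):
    """Pairs (a, b) where a's best hit is b and b's best hit is a."""
    forward = best_hits(a_vs_b)
    backward = best_hits(b_vs_a)
    return sorted((a, b) for a, b in forward.items() if backward.get(b) == a)


# Toy search results between proteome A and proteome B.
a_vs_b = [("a1", "b1", 200), ("a1", "b2", 150), ("a2", "b2", 90)]
b_vs_a = [("b1", "a1", 198), ("b2", "a1", 140), ("b2", "a2", 95)]
rbh_pairs = reciprocal_best_hits(a_vs_b, b_vs_a)
print(rbh_pairs)
```

Here `a2` points to `b2`, but `b2` prefers `a1`, so only the `a1`/`b1` pair survives. Edges of this kind form the similarity network on which the clustering step operates.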

Functional Annotation with eggNOG-mapper

The primary tool for users is eggNOG-mapper, which annotates novel sequences using precomputed eggNOG orthology data.

Experimental Protocol: Genome-Wide Annotation with eggNOG-mapper v2

- Input: FASTA file of protein or nucleotide sequences.

- Seed Ortholog Search: Query sequences are searched against the eggNOG HMM profile database using hmmscan (HMMER3) and DIAMOND (for fast pre-filtering). The best-hit HMM profile defines the candidate Orthologous Group (OG).

- Orthology Assignment: The query is placed within the phylogenetic tree of the candidate OG using a maximum-likelihood approach (TreeBeST). The most likely descendant node (and its associated taxonomic scope) is selected.

- Functional Transfer: Annotation from the assigned OG (GO terms, KEGG pathways, EC numbers, etc.) is transferred to the query sequence.

- Output: Tab-delimited file containing query ID, assigned OG, functional description, GO terms, KEGG KO, Pathway, Module, and CAZY annotations.

Diagram 2: eggNOG-mapper Annotation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Orthology-Based Annotation Research

| Item / Resource | Function & Purpose | Access / Example |

|---|---|---|

| eggNOG-mapper Software | Command-line/Web tool for fast functional annotation using precomputed eggNOG clusters. | http://eggnog-mapper.embl.de; pip install eggnog-mapper |

| eggNOG 6.0 Database | The core database of hierarchical OGs, alignments, trees, and annotations. | http://eggnog6.embl.de; Downloads via FTP |

| DIAMOND Software | Ultra-fast protein sequence aligner used for the initial similarity search step. | https://github.com/bbuchfink/diamond |

| HMMER Suite | Profile HMM tools (hmmscan, hmmbuild) for sensitive protein domain detection. | http://hmmer.org |

| MAFFT | Algorithm for generating multiple sequence alignments from OG members. | https://mafft.cbrc.jp |

| FastTree | Tool for inferring approximate maximum-likelihood phylogenetic trees for large OGs. | http://www.microbesonline.org/fasttree |

| CARD Database | Antibiotic resistance gene ontology, integrated into eggNOG for resistance profiling. | https://card.mcmaster.ca |

| MEROPS Database | Peptidase database, integrated for protease function annotation. | https://www.ebi.ac.uk/merops |

Application in Drug Development: Pathway Analysis Case Study

eggNOG's KEGG Orthology (KO) annotation enables rapid reconstruction of metabolic and signaling pathways in pathogenic microbes, identifying potential drug targets.

Experimental Protocol: Targeting a Pathogen-Specific Biosynthesis Pathway

- Genome Annotation: Annotate the draft genome of a target drug-resistant bacterium using eggNOG-mapper (Protocol 3.2).

- KO Extraction: Parse the output to extract all assigned KEGG Orthology (KO) identifiers.

- Pathway Mapping: Use the KEGG Mapper – Reconstruct Pathway tool (https://www.kegg.jp/kegg/mapper.html) to map KOs to the KEGG reference pathway database.

- Gap Analysis & Essentiality: Identify pathways present in the pathogen but absent in the human host. Cross-reference with essential gene databases (e.g., DEG) to prioritize non-host, essential pathway components (e.g., diaminopimelate synthesis in peptidoglycan formation).

- Target Validation: Select a key enzyme (e.g., dapB, KO:K00215). Retrieve its eggNOG alignment and phylogenetic tree to assess sequence conservation across pathogen strains and identify variable regions for potential specific inhibitor design.

Diagram 3: Drug Target ID via eggNOG & KEGG
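The KO Extraction and Gap Analysis steps can be sketched in Python. The code assumes that KO assignments arrive as comma-separated strings with a `ko:` prefix (the convention used in eggNOG-mapper v2 output, with `-` for no assignment). The host and essential-gene KO sets are hypothetical placeholders; in practice they would be derived from KEGG and from an essentiality resource such as DEG.

```python
def extract_kos(kegg_ko_field):
    """Parse a field such as 'ko:K00215,ko:K00134' into a set of KO IDs."""
    if kegg_ko_field in ("", "-"):
        return set()
    kos = set()
    for item in kegg_ko_field.split(","):
        item = item.strip()
        kos.add(item[3:] if item.startswith("ko:") else item)
    return kos


def candidate_targets(query_to_ko_field, host_kos, essential_kos):
    """Return {KO: [genes]} for KOs absent from the host and flagged essential."""
    pathogen = {}
    for query, field in query_to_ko_field.items():
        for ko in extract_kos(field):
            pathogen.setdefault(ko, []).append(query)
    return {
        ko: genes
        for ko, genes in pathogen.items()
        if ko not in host_kos and ko in essential_kos
    }


# Toy annotation: gene1 carries the dapB orthology K00215 from the text.
annotations = {
    "gene1": "ko:K00215",
    "gene2": "ko:K00134,ko:K00150",
    "gene3": "-",
}
host_kos = {"K00134"}                  # placeholder: KOs also found in the host
essential_kos = {"K00215", "K00134"}   # placeholder: KOs flagged as essential
targets = candidate_targets(annotations, host_kos, essential_kos)
print(targets)
```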

Current Status and Future Directions

The eggNOG framework has transitioned from a static classification system to a dynamic, continuously updated ecosystem. Current research integrates machine learning for improved orthology prediction, expands pan-genome analyses across microbial species complexes, and deepens functional annotations with protein language model embeddings. Its integration with antimicrobial resistance (CARD) and virulence factor databases solidifies its role as an indispensable platform for microbial genomics in basic research and applied drug discovery, directly extending the thesis of Tatusov's original COG concept into the era of big data genomic science.

The Clusters of Orthologous Genes (COG) database provides a pivotal framework for microbial genome annotation by categorizing proteins from sequenced genomes into orthologous groups based on evolutionary relationships. This phylogenetic classification is fundamental for assigning putative functions to novel gene sequences. Within the broader thesis of microbial genome annotation research, the COG database serves as the foundational scaffold that enables the three primary use cases discussed herein. By providing a standardized, phylogenetically-inferred functional vocabulary, COGs allow for the consistent interpretation of genomic data across pathogens, complex microbial communities, and divergent species, directly powering insights in pathogen profiling, metagenomic analysis, and comparative genomics.

Pathogen Profiling: Virulence and Resistance Annotation

Pathogen profiling leverages COG annotation to identify genetic determinants of virulence and antimicrobial resistance (AMR), transforming raw genome sequences into actionable public health intelligence.

Core Methodology:

- Genome Assembly & Annotation: Isolate genomic DNA from the pathogen. Sequence using a short- or long-read platform (or hybrid). Assemble reads into contigs and scaffolds. Annotate the assembled genome using COG database resources (e.g., via the EggNOG-mapper or WebMGA tools) which assign COG functional categories (e.g., [M] Cell wall/membrane/envelope biogenesis, [V] Defense mechanisms) to predicted coding sequences (CDS).

- Target Identification: Screen the COG-annotated CDS against specialized virulence factor databases (e.g., VFDB) and AMR gene databases (e.g., CARD, ResFinder) using BLAST-based tools.

- Contextual Analysis: Examine the genomic context of identified virulence/AMR genes (e.g., proximity to mobile genetic elements like plasmids or transposons, identified via COG categories [X] or [L]) to assess horizontal transfer potential.

Key Quantitative Data: Table 1: Common COG Categories Enriched in Pathogen Genomes

| COG Category Code | Functional Description | Example Genes/Functions | Typical % of Genome in Pathogens |

|---|---|---|---|

| V | Defense mechanisms | Antibiotic efflux pumps, toxin-antitoxin systems | 2-5% |

| U | Intracellular trafficking and secretion | Type III/IV secretion system components | 1-4% |

| M | Cell wall/membrane biogenesis | Capsular polysaccharide synthesis, adhesion proteins | 5-10% |

| P | Inorganic ion transport | Siderophore systems for iron acquisition | 1-3% |

| X | Mobilome: prophages, transposons | Integrases, transposases (often flanking AMR genes) | 1-10% (variable) |

Experimental Protocol for AMR Gene Detection: Protocol: In-silico AMR Profiling from a Bacterial Genome

- Input: High-quality assembled genome (FASTA format).

- Gene Prediction: Use Prokka or RASTtk to predict all open reading frames (ORFs).

- COG & Functional Annotation: Annotate ORFs using eggNOG-mapper (v2+, with the eggNOG 5.0+ database) to obtain COG assignments.

- AMR Screening: Use abricate (v1.0+) with the CARD and ResFinder databases. Minimum thresholds: 80% nucleotide identity, 60% coverage.

- Visualization: Generate a summary report of AMR genes, their COG categories, and associated drug classes.
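The screening thresholds and the drug-class summary can be sketched as a post-processing step on abricate's tab-delimited report. The column names (`GENE`, `%COVERAGE`, `%IDENTITY`, `RESISTANCE`) and the semicolon-separated drug classes are assumptions about the report layout and should be checked against the abricate version in use; the toy table keeps only a subset of columns. Linking hits to COG categories would additionally require matching hit coordinates to predicted genes, which is not shown.

```python
import csv
import io
from collections import defaultdict


def filter_amr_hits(handle, min_identity=80.0, min_coverage=60.0):
    """Group AMR genes that pass the thresholds by reported drug class."""
    by_class = defaultdict(list)
    for row in csv.DictReader(handle, delimiter="\t"):
        if float(row["%IDENTITY"]) < min_identity:
            continue
        if float(row["%COVERAGE"]) < min_coverage:
            continue
        for drug in (row.get("RESISTANCE") or "").split(";"):
            by_class[drug or "unspecified"].append(row["GENE"])
    return dict(by_class)


# Toy report: the tet(A) hit fails the 60% coverage threshold.
report = io.StringIO(
    "#FILE\tSEQUENCE\tGENE\t%COVERAGE\t%IDENTITY\tDATABASE\tRESISTANCE\n"
    "asm.fa\tcontig1\tblaTEM-1\t100.00\t99.88\tcard\tcephalosporin;penam\n"
    "asm.fa\tcontig2\ttet(A)\t55.00\t98.00\tcard\ttetracycline\n"
)
amr_summary = filter_amr_hits(report)
print(amr_summary)
```

The same cutoffs can also be applied when abricate is run, through its `--minid` and `--mincov` options, in which case the filter above acts only as a safeguard.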

Metagenomics: Functional Characterization of Communities

Metagenomics applies COG annotation to DNA extracted directly from environmental or clinical samples, enabling functional profiling of microbial communities without cultivation.

Core Methodology:

- Shotgun Sequencing: Extract total DNA from the sample (e.g., stool, soil, water). Prepare a library and sequence on an Illumina platform (e.g., NovaSeq) to obtain sufficient depth (e.g., 10-20 Gb per sample).

- Read-Based or Assembly-Based Analysis:

- Read-Based: Directly align quality-filtered sequencing reads to a reference database of COG protein sequences using tools like DIAMOND. Aggregate counts per COG category.

- Assembly-Based: De novo assemble reads into contigs using metaSPAdes. Predict genes on contigs >1kb. Annotate predicted genes against the COG database.

- Functional Profiling: Normalize COG counts by sequencing depth to compare functional potential across samples. Statistical analysis (e.g., STAMP, LEfSe) identifies differentially abundant COG categories between sample groups (e.g., healthy vs. disease).
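Depth normalization is the step that makes samples comparable, so a small sketch may help. It shows two common options, relative abundance among assigned COGs and counts per million reads; the function names and the counts are hypothetical.

```python
def relative_abundance(cog_counts):
    """Convert raw per-category counts into fractions that sum to 1."""
    total = sum(cog_counts.values())
    if total == 0:
        raise ValueError("sample has no assigned COG counts")
    return {category: count / total for category, count in cog_counts.items()}


def counts_per_million(cog_counts, total_reads):
    """Scale raw counts by sequencing depth (total reads in the sample)."""
    return {category: count * 1_000_000 / total_reads
            for category, count in cog_counts.items()}


# Hypothetical counts for one sample (invented for illustration).
sample_counts = {"G": 500, "E": 300, "J": 200}
fractions = relative_abundance(sample_counts)
cpm = counts_per_million(sample_counts, total_reads=2_000_000)
print(fractions["G"], cpm["G"])
```

Relative abundance answers "what share of the annotated functions is category G", while counts per million keeps unassigned reads in the denominator; which one suits a study depends on how much of each sample is annotated.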

Key Quantitative Data: Table 2: COG Functional Categories in Human Gut Metagenomics

| Broad Functional Group | Specific COG Categories | Typical Relative Abundance in Healthy Gut | Notes on Dysbiosis |

|---|---|---|---|

| Metabolism | [G] Carbohydrate, [E] Amino Acid, [F] Nucleotide | ~50-60% of assigned COGs | Often decreased in inflammatory bowel disease |

| Information Storage & Processing | [J] Translation, [K] Transcription, [L] Replication | ~15-20% of assigned COGs | Stable core functions |

| Cellular Processes & Signaling | [M] Cell wall, [T] Signal transduction, [V] Defense | ~20-25% of assigned COGs | [V] may increase with pathogen load |

Diagram Title: Metagenomic Functional Profiling Workflow Using COGs

Comparative Genomics: Inference of Evolutionary Trajectories

Comparative genomics uses COG annotations as stable functional units to trace gene gain, loss, and rearrangement across microbial lineages, informing evolutionary biology and pan-genome analyses.

Core Methodology:

- Dataset Curation: Select a phylogenetically representative set of genomes (e.g., all E. coli strains or a diverse bacterial phylum).

- Uniform Annotation: Annotate all genomes uniformly using the same COG assignment pipeline (critical for consistency).

- Pan-Genome Calculation: Classify genes into: Core Genome (COGs present in ≥99% strains), Accessory Genome (COGs present in 1-99% strains), and Unique Genes (strain-specific COGs).

- Phylogenetic Inference: Construct a phylogenetic tree based on core genome SNPs or concatenated core COG sequences. Map the presence/absence of accessory COGs onto the tree to infer horizontal gene transfer events and adaptive evolution.
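The core/accessory/unique split described above can be sketched directly from a presence/absence mapping. The function name, strain labels, and COG assignments below are hypothetical; the 99% core threshold is the one stated in the text.

```python
def classify_pangenome(presence, strains, core_fraction=0.99):
    """Split COGs into core, accessory, and unique lists.

    presence maps a COG ID to the set of strains that carry it.
    """
    groups = {"core": [], "accessory": [], "unique": []}
    strain_set = set(strains)
    for cog_id in sorted(presence):
        carriers = presence[cog_id] & strain_set
        if not carriers:
            continue  # COG absent from every strain under study
        if len(carriers) / len(strain_set) >= core_fraction:
            groups["core"].append(cog_id)
        elif len(carriers) == 1:
            groups["unique"].append(cog_id)
        else:
            groups["accessory"].append(cog_id)
    return groups


# Hypothetical four-strain collection.
strains = ["s1", "s2", "s3", "s4"]
presence = {
    "COG0001": {"s1", "s2", "s3", "s4"},
    "COG0002": {"s1", "s3"},
    "COG0003": {"s2"},
}
pangenome = classify_pangenome(presence, strains)
print(pangenome)
```

With fewer than 100 strains, a 99% threshold is equivalent to requiring presence in every strain, which is worth keeping in mind when comparing core sizes across studies of different size.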

Key Quantitative Data: Table 3: Pan-Genome Statistics for a Bacterial Species Complex

| Pan-Genome Component | Definition | Typical Size Range (No. of COGs) | Functional Enrichment |

|---|---|---|---|

| Core Genome | Present in ≥99% of isolates | 2,000 - 4,000 COGs | [J] Translation, [K] Transcription, [L] Replication |

| Accessory (Shell) Genome | Present in some isolates | 5,000 - 15,000+ COGs | [V] Defense, [P] Inorganic ions, [X] Mobilome |

| Unique (Cloud) Genome | Strain-specific | Highly variable (10s - 100s) | Often hypotheticals or phage-related |

Experimental Protocol for Core/Accessory COG Analysis: Protocol: Pan-Genome Analysis with COG Functional Layer

- Input: Collection of assembled genomes (FASTA) for target species.

- Annotation: Run `prokka` on each genome independently (COG identifiers are reported where its reference databases provide them), or use `eggnog-mapper` in batch mode for standardized COG assignment.

- Orthology Clustering: Use OrthoFinder or Panaroo to cluster all predicted protein sequences into orthologous groups, integrating COG IDs where available.

- Matrix Construction: Generate a binary (presence/absence) matrix of orthogroups (COGs) x strains.

- Analysis: Use `roary` to calculate core/accessory thresholds and `ggplot2` in R for visualization (e.g., heatmaps, pie charts of COG categories in each component).

Diagram Title: Comparative Genomics Pipeline with COG Annotation

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Reagents and Tools for COG-Based Genomic Analyses

| Item/Tool Name | Category | Primary Function in Workflow |

|---|---|---|

| Nextera XT DNA Library Prep Kit (Illumina) | Wet-lab Reagent | Prepares multiplexed, sequencing-ready libraries from low-input genomic or metagenomic DNA. |

| QIAamp PowerFecal Pro DNA Kit (Qiagen) | Wet-lab Reagent | Extracts high-quality, inhibitor-free total DNA from complex microbial samples (stool, soil). |

| eggNOG-mapper (v2+) | Bioinformatics Tool | Performs fast functional annotation of protein sequences, including COG category assignment, against the eggNOG database (v5.0+), which incorporates COGs. |

| DIAMOND (v2.1+) | Bioinformatics Tool | Ultra-fast protein sequence aligner used for matching metagenomic reads or genes to COG reference databases. |

| Prokka | Bioinformatics Tool | Rapid prokaryotic genome annotator that integrates COG assignments via external databases. |

| Panaroo (v1.3+) | Bioinformatics Tool | Robust pan-genome analysis pipeline that identifies core and accessory genes, handling annotation data (e.g., COGs). |

| CARD & ResFinder Databases | Reference Data | Curated repositories of AMR genes, used in conjunction with COG output for pathogen profiling. |

| VFDB | Reference Data | Database of bacterial virulence factors, used to annotate COG-identified genes in pathogens. |

| STAMP Software | Statistical Tool | Statistical analysis of taxonomic and functional profiles (e.g., COG abundance tables) for metagenomics. |

Step-by-Step: COG Annotation Pipelines and Practical Applications in Research

Within the framework of microbial genome annotation research utilizing the Clusters of Orthologous Groups (COG) database, the precise preparation of data—from raw sequencing reads to predicted protein sequences—is a foundational step. This in-depth guide details the technical pipeline required to transform raw genomic data into a structured input for functional annotation, a critical prerequisite for downstream applications in comparative genomics, metabolic pathway reconstruction, and drug target identification.

The Data Preparation Pipeline: A Technical Workflow

Initial Quality Control and Read Trimming

Raw sequence data from platforms like Illumina or Nanopore requires stringent quality assessment.

- Experimental Protocol (FastQC & Trimmomatic):

- Quality Report: Execute `fastqc *.fastq.gz` on all raw read files to generate HTML reports summarizing per-base sequence quality, GC content, adapter contamination, and sequence duplication levels.

- Adapter Trimming & Filtering: Run Trimmomatic in paired-end mode to remove adapters and low-quality bases.

- Post-trimming QC: Re-run FastQC on the trimmed read files (`*_paired.fq.gz`) to confirm quality improvements.
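The trimming command itself is not reproduced in this text. As a hedged sketch, a typical paired-end Trimmomatic call can be assembled as an argument list and passed to `subprocess.run`. The sample name, adapter file, and the trimming steps (`ILLUMINACLIP:...:2:30:10`, `LEADING:3`, `TRAILING:3`, `SLIDINGWINDOW:4:15`, `MINLEN:36`) are commonly used values and are assumptions here, not the author's settings. The `trimmomatic` wrapper name assumes a conda-style install.

```python
import shlex


def trimmomatic_pe_command(sample, adapters="adapters.fa", threads=4):
    """Build a paired-end Trimmomatic argument list (no execution here)."""
    return [
        "trimmomatic", "PE", "-threads", str(threads),
        # Inputs: forward and reverse raw reads.
        f"{sample}_R1.fastq.gz", f"{sample}_R2.fastq.gz",
        # Outputs: paired/unpaired files for each read direction.
        f"{sample}_R1_paired.fq.gz", f"{sample}_R1_unpaired.fq.gz",
        f"{sample}_R2_paired.fq.gz", f"{sample}_R2_unpaired.fq.gz",
        # Trimming steps, applied in the order given.
        f"ILLUMINACLIP:{adapters}:2:30:10",
        "LEADING:3", "TRAILING:3", "SLIDINGWINDOW:4:15", "MINLEN:36",
    ]


trim_cmd = trimmomatic_pe_command("isolate1")
print(shlex.join(trim_cmd))
# To execute: subprocess.run(trim_cmd, check=True)
```

The `_paired.fq.gz` output names match the file pattern used in the post-trimming QC step.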

Genome Assembly

De novo assembly reconstructs the genome from overlapping reads.

- Experimental Protocol (SPAdes for Illumina Reads):

- Assembly Execution: For isolate Illumina data, run SPAdes with careful k-mer selection and error correction.

- Output: The primary assembly is typically found in `spades_assembly_output/scaffolds.fasta`. For final contigs, use `contigs.fasta`.
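The assembly command is likewise missing from this text. The sketch below builds one plausible SPAdes invocation for paired-end isolate reads. The input file names, thread count, and k-mer list are assumptions; `--careful` enables SPAdes' mismatch-correction mode, which is one reading of "error correction" in the step above.

```python
import shlex


def spades_command(r1, r2, outdir="spades_assembly_output", threads=8,
                   kmers=(21, 33, 55, 77)):
    """Build a SPAdes argument list for paired-end Illumina reads."""
    return [
        "spades.py",
        "-1", r1, "-2", r2,                       # trimmed paired reads
        "--careful",                              # reduce mismatches and short indels
        "-k", ",".join(str(k) for k in kmers),    # explicit k-mer sizes
        "-t", str(threads),
        "-o", outdir,
    ]


spades_cmd = spades_command("isolate1_R1_paired.fq.gz",
                            "isolate1_R2_paired.fq.gz")
print(shlex.join(spades_cmd))
# To execute: subprocess.run(spades_cmd, check=True)
```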

Assembly Quality Assessment

Assembly metrics determine the reliability of the reconstructed genome for downstream analysis.

Table 1: Quantitative Metrics for Assembly Quality Assessment

| Metric | Tool | Optimal Range (for bacterial genomes) | Interpretation |

|---|---|---|---|

| Total Length (bp) | QUAST | Species-dependent | Total size of the assembly. |

| Number of Contigs | QUAST | Minimize (aim for 1-100) | Fewer contigs indicate better continuity. |

| N50 (bp) | QUAST | Maximize | Length of the shortest contig in the smallest set of longest contigs that together cover at least 50% of the assembly. Higher is better. |

| L50 (count) | QUAST | Minimize | Smallest number of contigs whose combined length covers at least 50% of the assembly. Lower is better. |

| Completeness (%) | CheckM | >95% (for isolates) | Estimated percentage of single-copy marker genes present. |

| Contamination (%) | CheckM | <5% | Estimated percentage of marker genes present in multiple copies. |

- Experimental Protocol (QUAST & CheckM):

- Structural Evaluation: Run QUAST on the assembly file.

- Biological Evaluation: Run CheckM to assess completeness and contamination using conserved marker sets.
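QUAST reports N50 and L50 directly, but computing them by hand clarifies what the two metrics mean. This is a minimal sketch of the standard definitions, with invented contig lengths.

```python
def n50_l50(contig_lengths):
    """Return (N50, L50) for a list of contig lengths in bp."""
    lengths = sorted(contig_lengths, reverse=True)
    half_total = sum(lengths) / 2
    running = 0
    for count, length in enumerate(lengths, start=1):
        running += length
        if running >= half_total:
            # 'length' is the shortest contig needed to reach 50% (N50);
            # 'count' is how many contigs it took (L50).
            return length, count
    raise ValueError("no contigs supplied")


# Hypothetical five-contig assembly (300 bp in total).
assembly_n50, assembly_l50 = n50_l50([100, 80, 60, 40, 20])
print(assembly_n50, assembly_l50)
```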

Gene Prediction & Protein Sequence Extraction

Identifying protein-coding sequences (CDS) is the final step before COG annotation.

- Experimental Protocol (Prokka):

- Annotation Pipeline: Prokka integrates several tools for rapid prokaryotic genome annotation.

- Output Extraction: The predicted protein sequences in FASTA format are found in `prokka_annotation/my_genome.faa`. This file is the direct input for COG annotation tools like eggNOG-mapper or WebMGA.
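The Prokka command is not shown in this text. The sketch below assembles one invocation that would produce the output path named above. The kingdom, CPU count, and input file name are assumptions, while `--outdir` and `--prefix` are chosen to match `prokka_annotation/my_genome.faa`.

```python
import shlex


def prokka_command(assembly, outdir="prokka_annotation", prefix="my_genome",
                   cpus=8):
    """Build a Prokka argument list and the expected protein FASTA path."""
    cmd = [
        "prokka",
        "--outdir", outdir,
        "--prefix", prefix,
        "--kingdom", "Bacteria",
        "--cpus", str(cpus),
        assembly,
    ]
    protein_fasta = f"{outdir}/{prefix}.faa"
    return cmd, protein_fasta


prokka_cmd, faa_path = prokka_command("spades_assembly_output/scaffolds.fasta")
print(shlex.join(prokka_cmd))
print(faa_path)
# To execute: subprocess.run(prokka_cmd, check=True)
```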

Visualization of the Core Workflow

Genome to Protein Prediction Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Resources for the Workflow

| Item | Function/Description | Key Parameter/Note |

|---|---|---|

| Illumina DNA Prep Kit | Library preparation for Illumina sequencers. Provides end-repair, A-tailing, and adapter ligation. | Insert size selection is critical for assembly continuity. |

| ONT Ligation Sequencing Kit (SQK-LSK114) | Library preparation for Oxford Nanopore long-read sequencing. | Enables hybrid assembly, improving contiguity. |

| NEBNext Ultra II FS DNA Library Prep Kit | Alternative for Illumina, with rapid fragmentation and library prep. | Useful for high-throughput isolate sequencing. |

| Qubit dsDNA HS Assay Kit | Fluorometric quantification of DNA concentration post-extraction and pre-library prep. | More accurate for sequencing than spectrophotometry (A260/A280). |

| SPRIselect Beads | Magnetic beads for size selection and clean-up during library prep and post-PCR. | Ratios determine fragment size retention. |

| Prokaryotic Reference Genomes (NCBI RefSeq) | High-quality reference genomes for related species used for assembly validation and comparison. | Essential for reference-guided assembly or alignment-based QC. |

| COG/eggNOG Database | Database of orthologous groups and functional annotations. The target for final protein sequence classification. | Local installation (eggNOG-mapper) recommended for large-scale analysis. |

| HPC Cluster or Cloud Compute (AWS/GCP) | Computational resource for memory- and CPU-intensive steps (assembly, CheckM). | Assembly of complex genomes may require >100 GB RAM. |

This guide serves as a technical annex to the broader thesis "A Comparative Framework for Functional Annotation in Microbial Genomics: Leveraging the COG Database for Drug Target Discovery." Accurate functional annotation of microbial genomes is a cornerstone of modern microbiological research, with direct implications for understanding pathogenesis, metabolism, and the identification of novel drug targets. This document provides an in-depth, technical comparison of four prominent methodologies for assigning Clusters of Orthologous Groups (COG) functions: the web-based tools eggNOG-mapper and WebMGA, the standalone suite COGNIZER, and custom Standalone BLAST workflows against the COG database.

Core Functionality and Characteristics

The following table summarizes the fundamental attributes of each annotation approach.

Table 1: Core Tool Characteristics and Operational Metrics

| Feature | eggNOG-mapper v2 | WebMGA | COGNIZER | Standalone BLAST + COG |

|---|---|---|---|---|

| Access Mode | Web Server / Standalone | Web Server | Standalone Suite | Standalone Workflow |

| Primary Method | Fast orthology mapping via precomputed eggNOG clusters (HMMs & DIAMOND). | Fast similarity search (RAPSearch2) & COG assignment algorithm. | Integrated pipeline: BLAST, RPS-BLAST, HMMER against multiple DBs. | Direct BLASTp/RPS-BLAST against curated COG protein sequences. |

| COG Database Version | Integrated (v5.0+), auto-updated. | Custom, periodically updated (COG2020). | User-configurable (COG, KOG, etc.). | User-dependent (NCBI COG FTP). |

| Typical Runtime (1000 aa seq) | ~2-5 minutes (Web) | ~1-3 minutes (Web) | ~10-30 minutes (Local) | ~15-45 minutes (Local, DB-dep.) |

| Maximum Input (Web) | 1M chars / 20k seqs (batch) | 50k sequences per job | N/A (Standalone) | N/A (Standalone) |

| Output Complexity | Comprehensive (GO, KEGG, COG, etc.) | COG-focused, functional categories. | Multi-database summary tables. | Raw BLAST results, requires parsing for COG. |

| Customization Level | Moderate (parameters adjustable). | Low (fixed parameters). | High (modular, scriptable). | Very High (full control). |

Performance and Accuracy Benchmarks

Data synthesized from recent benchmarking studies (2022-2024) highlight trade-offs between speed and annotation depth.

Table 2: Benchmarking Performance on a Standard 10,000-Protein Microbial Genome

| Metric | eggNOG-mapper | WebMGA | COGNIZER | Standalone BLAST (Best-Hit) |

|---|---|---|---|---|

| Annotation Coverage (%) | 85-92% | 80-88% | 82-90% | 75-85% |

| Computational Speed | Fastest | Very Fast | Moderate | Slowest |

| False Positive Rate (Est.) | Low (<5%) | Low-Medium (~5-8%) | Low (<5%) | Variable (High if cutoff lax) |

| Multi-domain Handling | Excellent (HMM-based) | Good | Excellent (RPS-BLAST) | Poor (single best hit) |

| Functional Consistency | High | High | High | Medium |

Detailed Experimental Protocols

Protocol for eggNOG-mapper (Web Server)

Objective: To obtain functional annotations (COG, GO, KEGG) for a set of microbial protein sequences.

- Input Preparation: Compile protein sequences in FASTA format. Ensure headers are concise (max 30 chars). For large genomes (>5k proteins), use the batch option.

- Job Submission: Navigate to the eggNOG-mapper 2.0 web interface. Upload the FASTA file. Select the appropriate taxonomic scope (e.g., `bacteria`). Choose annotation sources (`COG`, `GO`, `KEGG`). Set the `HMM` search type for best accuracy.

- Post-processing: Download the resulting `.annotations` file. The key column `COG_category` provides the single-letter COG code. Use the accompanying `.emapper.seed_orthologs` file for hit quality metrics.

Protocol for Custom Standalone BLAST Workflow

Objective: To assign COGs via direct homology search against the official NCBI COG database.

- Database Construction:

a. Download the COG protein sequence FASTA file (`cog.fa`) from the NCBI FTP site.

b. Format the database: `makeblastdb -in cog.fa -dbtype prot -parse_seqids -out COG_DB`.

- Sequence Search:

a. Run BLASTp: `blastp -query your_proteins.fa -db COG_DB -outfmt "6 qseqid sseqid pident length evalue qcovs" -evalue 1e-5 -max_target_seqs 1 -out blast_results.tsv`.

b. For domain-level annotation, use RPS-BLAST against the Conserved Domain Database (CDD) profiles, which include COGs.

- COG ID Mapping:

a. Parse `blast_results.tsv` to extract subject IDs (`sseqid`), which are COG protein IDs.

b. Map these IDs to COG functional categories using the `cog2003-2014.csv` mapping file from NCBI, applying a conservative E-value threshold (e.g., <1e-10) and query coverage (>70%).
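The parsing and mapping step can be sketched as follows. The code assumes the six-column `-outfmt` layout used in the BLASTp command above (`qseqid sseqid pident length evalue qcovs`). The two lookup dictionaries stand in for tables that would be loaded from the NCBI mapping files; their contents, the protein IDs, and the function names are hypothetical.

```python
def best_cog_hits(blast_tsv, max_evalue=1e-10, min_qcov=70.0):
    """Keep the lowest-E-value hit per query that passes both filters."""
    best = {}
    for line in blast_tsv.splitlines():
        if not line.strip():
            continue
        qseqid, sseqid, _pident, _length, evalue, qcovs = line.split("\t")
        evalue, qcovs = float(evalue), float(qcovs)
        if evalue >= max_evalue or qcovs <= min_qcov:
            continue  # fails the conservative thresholds
        if qseqid not in best or evalue < best[qseqid][1]:
            best[qseqid] = (sseqid, evalue)
    return {query: subject for query, (subject, _) in best.items()}


def assign_cogs(hits, protein_to_cog, cog_to_category):
    """Translate COG protein IDs into (COG ID, category letter) pairs."""
    assignments = {}
    for query, subject in hits.items():
        cog_id = protein_to_cog.get(subject)
        if cog_id is not None:
            assignments[query] = (cog_id, cog_to_category.get(cog_id, "-"))
    return assignments


# Hypothetical BLAST rows and lookup tables (invented for illustration).
EXAMPLE_BLAST = (
    "prot_1\tcogprot_A\t85.0\t300\t1e-50\t95\n"
    "prot_2\tcogprot_B\t40.0\t120\t1e-6\t90\n"
    "prot_3\tcogprot_C\t55.0\t200\t1e-30\t50\n"
)
PROTEIN_TO_COG = {"cogprot_A": "COG0049", "cogprot_B": "COG0001"}
COG_TO_CATEGORY = {"COG0049": "J", "COG0001": "H"}

cog_assignments = assign_cogs(best_cog_hits(EXAMPLE_BLAST),
                              PROTEIN_TO_COG, COG_TO_CATEGORY)
print(cog_assignments)
```

Here `prot_2` is dropped on E-value and `prot_3` on coverage, so only `prot_1` receives a COG.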

Visualization of Workflow Logic

Tool Selection Decision Pathway

Decision Tree for COG Annotation Tool Selection

Standalone BLAST-to-COG Workflow

Standalone BLAST COG Assignment Pipeline

Table 3: Key Reagent Solutions and Computational Resources for COG Annotation

| Item | Function in Annotation Workflow | Example/Source |

|---|---|---|

| Protein Sequence Data (FASTA) | The primary input; quality dictates annotation accuracy. | Assembled genome ORFs from RAST, Prokka, or in-house pipelines. |

| Reference Database (COG) | The gold-standard functional classification system used for mapping. | NCBI COG FTP (cog.fa, cog2003-2014.csv) or eggNOG/InterPro integrated DBs. |

| Homology Search Software | Engine for identifying sequence similarity to known COGs. | DIAMOND (fast), BLAST+ suite (standard), HMMER (profile-based). |

| High-Performance Compute (HPC) Node | Enables local standalone analysis of large-scale genomic datasets. | Local cluster or cloud instance (AWS, GCP) with multi-core CPUs and adequate RAM. |

| Parsing & Scripting Environment | For filtering, mapping, and analyzing raw output data. | Python (Biopython, Pandas), R (tidyverse), or custom Perl/Bash scripts. |

| Functional Enrichment Tool | To interpret COG category results in a biological context (post-annotation). | clusterProfiler (R), GOseq, or custom hypergeometric test scripts. |

This guide provides a detailed protocol for functional annotation using eggNOG-mapper v2 with the eggNOG v5.0+ database. Within a broader thesis on microbial genome annotation research leveraging the Clusters of Orthologous Groups (COG) database, this tool is indispensable. eggNOG-mapper provides a high-throughput, standardized method to transfer functional annotations from the eggNOG database (which integrates COGs, KEGG, Gene Ontology, etc.) to novel genomic or metagenomic sequences. This enables consistent, comparative analysis essential for studies on microbial evolution, functional potential, and identifying drug targets.

eggNOG-mapper v2 uses fast, homology-based searches (DIAMOND/MMseqs2) against precomputed clusters within the eggNOG 5.0+ database. Key quantitative metrics defining its performance and scope are summarized below.

Table 1: eggNOG Database (v5.0.2) Quantitative Scope

| Metric | Value | Description/Implication |

|---|---|---|

| Source Species | 12,535 | Broad taxonomic coverage for annotation transfer. |

| Annotated Proteins | 66.9 million | Extensive reference dataset. |

| Orthologous Groups | 4.4 million | Core functional units for annotation. |

| COG Categories Covered | 24 (100%) | Full coverage of the classic COG functional categories. |

| KEGG Pathways Mapped | ~11,000 | Enables pathway reconstruction. |

| GO Terms Associated | ~6.7 million | Supports detailed ontological analysis. |

Table 2: eggNOG-mapper v2 Default Parameters & Performance

| Parameter/Feature | Default Setting | Rationale/Impact |

|---|---|---|

| Search Tool | DIAMOND (`-m diamond`) | Optimized for speed vs. sensitivity balance. |

| Search Mode | `--seed_ortholog_evalue 0.001` | Stringency threshold for initial hit. |

| Hit Filtering | `--query_cover 20 --subject_cover 20` | Ensures meaningful sequence overlap. |

| Annotation Transfer | --tax_scope auto | Restricts to best-matching taxonomic level. |

| GO Annotation | --go_evidence non-electronic | Limits to curated, high-quality evidence codes. |

| Typical Runtime | ~1,000 seqs/min* | Enables rapid annotation of large datasets. |

*On a modern server; dependent on hardware and database selected.

Experimental Protocol: A Step-by-Step Methodology

This protocol assumes access to a Linux-based server or high-performance computing cluster.

A. Software Installation

Prerequisites: Install Python (≥3.7), DIAMOND (≥2.0), and HMMER.

Install eggNOG-mapper: Use the Python package manager.

Download the eggNOG Database: This is the largest step (~20 GB).

B. Preparing Input Sequences

- Format input protein sequences in FASTA format. Nucleotide sequences require prior gene prediction.

C. Executing the Annotation

Run the core annotation command, specifying the database location and desired outputs.
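The installation, download, and annotation commands are not reproduced in this text. The sketch below assembles one plausible set as argument lists. The file paths, CPU count, and function name are assumptions; the output prefix `output_annotations` is chosen to match the file names listed in the next subsection.

```python
import shlex


def emapper_commands(protein_fasta, data_dir, prefix="output_annotations",
                     cpus=8):
    """Build install, database download, and annotation argument lists."""
    install = ["pip", "install", "eggnog-mapper"]
    # -y answers the download prompts automatically.
    download = ["download_eggnog_data.py", "-y", "--data_dir", data_dir]
    annotate = [
        "emapper.py",
        "-i", protein_fasta, "--itype", "proteins",
        "-m", "diamond",              # DIAMOND search against eggNOG
        "--data_dir", data_dir,       # where the database was downloaded
        "-o", prefix,                 # prefix for all output files
        "--cpu", str(cpus),
    ]
    return install, download, annotate


install_cmd, download_cmd, annotate_cmd = emapper_commands(
    "prokka_annotation/my_genome.faa", "/path/to/eggnog_data")
for command in (install_cmd, download_cmd, annotate_cmd):
    print(shlex.join(command))
# To execute a step: subprocess.run(annotate_cmd, check=True)
```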

D. Interpreting Output Files

Key output files include:

- `output_annotations.emapper.annotations`: Main tab-separated file with COG, KEGG, GO, and description.

- `output_annotations.emapper.seed_orthologs`: Best DIAMOND hits against the eggNOG database.

- `output_annotations.emapper.gene_ontology`: Detailed GO term assignments.
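A common first step with the main annotations file is to tally COG category letters. The sketch below assumes the layout seen in recent eggNOG-mapper v2 releases: `##` comment lines, a header line beginning with `#query`, and a `COG_category` column in which multi-category proteins carry several letters (e.g., `EG`) and unassigned proteins carry `-`. The example text is a reduced, invented stand-in for a real file.

```python
from collections import Counter


def count_cog_categories(annotation_text):
    """Count COG category letters in an .emapper.annotations-style table."""
    counts = Counter()
    column = None
    for line in annotation_text.splitlines():
        if line.startswith("##") or not line.strip():
            continue  # run metadata or blank line
        fields = line.split("\t")
        if line.startswith("#"):
            column = fields.index("COG_category")  # header row
            continue
        if column is None:
            raise ValueError("header line with COG_category not found")
        for letter in fields[column]:
            if letter.isalpha():  # skips the '-' placeholder
                counts[letter] += 1
    return counts


# Reduced, hypothetical example with only three of the real columns.
EXAMPLE_ANNOTATIONS = (
    "## emapper example\n"
    "#query\tCOG_category\tDescription\n"
    "gene_1\tJ\tribosomal protein\n"
    "gene_2\tEG\ttransporter\n"
    "gene_3\t-\t-\n"
    "gene_4\tJ\ttranslation factor\n"
)

category_counts = count_cog_categories(EXAMPLE_ANNOTATIONS)
print(dict(category_counts))
```

Counting each letter of a multi-letter assignment means category totals can exceed the number of proteins; splitting the weight equally between letters is an alternative design choice.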

Visualization of the Workflow

Diagram 1: eggNOG-mapper v2 Annotation Pipeline

Diagram 2: Data Integration from Annotation to Thesis Analysis

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for eggNOG-based Annotation

| Item/Reagent | Function in the Protocol | Notes for Researchers |

|---|---|---|

| eggNOG-mapper Software (v2+) | Core annotation engine. | Always check for updates and note version for reproducibility. |

| eggNOG Protein Database (v5.0.2+) | Reference knowledgebase for homology search. | Requires significant storage (~20 GB). Version must match software. |

| DIAMOND (≥v2.0) | Ultra-fast protein aligner for seed ortholog detection. | Alternative: MMseqs2 for sensitive mode (-m mmseqs). |

| High-Performance Computing (HPC) Cluster | Executes searches and analyses on large genomes/metagenomes. | Essential for projects with >100,000 protein sequences. |

| Custom Python/R Scripts | Post-processing of `.emapper.annotations` files for downstream analysis. | Used for generating count tables, visualizations, and statistical tests. |

| Functional Enrichment Tools (e.g., clusterProfiler) | Statistically evaluates over-represented COG/KEGG/GO terms. | Crucial for linking annotation data to biological hypotheses in thesis research. |

Within the broader thesis on microbial genome annotation research using the Clusters of Orthologous Genes (COG) database, the interpretation of output files is a critical, final analytical step. This guide provides an in-depth technical examination of COG assignment results, their associated functional categories, and the statistical metrics that validate homology hits. Mastery of this process is essential for researchers, scientists, and drug development professionals aiming to infer protein function, predict metabolic pathways, and identify potential therapeutic targets from genomic data.

Structure of a Standard COG Assignment Output File

A typical output file from tools like eggNOG-mapper, WebMGA, or rpsBLAST against the CDD database contains several core columns of data. The precise format may vary, but the following fields are fundamental:

- Query Sequence ID: Identifier of the input protein/gene.

- COG ID: The assigned Clusters of Orthologous Groups identifier (e.g., COG0001).

- Functional Category Letter(s): One or more single-letter codes representing COG functional categories.

- Description: A brief functional description of the assigned COG.

- Hit Statistics: Metrics such as E-value, Bit-Score, Percent Identity, and Query Coverage.

Table 1: Core Fields in a COG Assignment Output File

| Field Name | Example Data | Description |

|---|---|---|

| Query_ID | `contig_001_gene_10` | Identifier for the query sequence. |

| COG_ID | `COG0049` | Unique identifier for the assigned COG cluster. |

| Category | `J` | Single-letter functional category code. |

| Description | `Ribosomal protein S7` | Predicted functional annotation. |

| E-value | `3.2e-45` | Statistical significance of the match; lower is better. |

| Bit-Score | `187.5` | Normalized score indicating match quality; higher is better. |

| % Identity | `98.7` | Percentage of identical residues in the alignment. |

| Query Coverage | `100` | Percentage of the query sequence length aligned. |

Decoding COG Functional Categories

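The COG database organizes proteins into 26 functional categories, each designated by a single letter (A-Z). Interpreting these categories is key to understanding the functional landscape of a genome. The table below lists 25 of them; the remaining category, [X] Mobilome (prophages, transposons), was introduced in the 2014 update.

Table 2: COG Functional Categories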

| Code | Functional Category | General Role |

|---|---|---|

| J | Translation, ribosomal structure and biogenesis | Protein synthesis |

| A | RNA processing and modification | RNA metabolism |

| K | Transcription | DNA -> RNA |

| L | Replication, recombination and repair | DNA maintenance |

| B | Chromatin structure and dynamics | Nuclear organization |

| D | Cell cycle control, cell division, chromosome partitioning | Cell division |

| Y | Nuclear structure | - |

| V | Defense mechanisms | Phage resistance, toxins |

| T | Signal transduction mechanisms | Signaling pathways |

| M | Cell wall/membrane/envelope biogenesis | Structural components |

| N | Cell motility | Flagella, chemotaxis |

| Z | Cytoskeleton | Cell shape, division |

| W | Extracellular structures | - |

| U | Intracellular trafficking, secretion, and vesicular transport | Protein transport |

| O | Posttranslational modification, protein turnover, chaperones | Protein folding/degradation |

| C | Energy production and conversion | Metabolism (energy) |

| G | Carbohydrate transport and metabolism | Sugar metabolism |

| E | Amino acid transport and metabolism | Amino acid metabolism |

| F | Nucleotide transport and metabolism | Nucleotide metabolism |

| H | Coenzyme transport and metabolism | Vitamin/cofactor metabolism |

| I | Lipid transport and metabolism | Lipid metabolism |

| P | Inorganic ion transport and metabolism | Ion transport |

| Q | Secondary metabolites biosynthesis, transport and catabolism | Specialized compounds |

| R | General function prediction only | Broad, unknown specificity |

| S | Function unknown | No predictable function |

Categories R and S are particularly important to note, as they represent annotations of limited specificity.

Critical Interpretation of Hit Statistics

Hit statistics determine the reliability of an assignment. A multi-parameter threshold is recommended.

Experimental Protocol: Validating COG Assignments

- Objective: To filter raw COG assignment output for high-confidence annotations.

- Methodology:

- Run Annotation: Execute `eggNOG-mapper` (v2.1.12+) with default parameters against the COG database.

- Primary Filter: Retain only hits with an E-value ≤ 1e-10. This stringent cutoff minimizes false positives.

- Secondary Filter: Apply a Bit-Score threshold relative to the database and query length; a common rule-of-thumb is Bit-Score ≥ 50.

- Coverage Check: Require a Query Coverage ≥ 70% to ensure the match spans most of the protein of interest.

- Manual Curation: For critical genes (e.g., potential drug targets), verify top hits by inspecting alignment files and checking for conserved domain architecture via CD-Search.

Table 3: Recommended Thresholds for High-Confidence COG Assignments

| Statistical Parameter | High-Confidence Threshold | Purpose & Rationale |

|---|---|---|

| E-value | ≤ 1e-10 | Filters statistically insignificant, random matches. |

| Bit-Score | ≥ 50 | Provides a normalized measure of alignment quality independent of database size. |

| Query Coverage | ≥ 70% | Ensures the functional assignment is based on the majority of the query protein. |

| Percent Identity | ≥ 30% (for orthology) | Suggests potential orthology, though value varies with protein family. |
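The thresholds in Table 3 can be combined into a single check that also reports which criterion a hit fails, which is useful when deciding what to send for manual curation. The dictionary keys and function name are hypothetical; the first example hit reuses the values from Table 1, and the second is invented.

```python
# Thresholds taken from Table 3.
MAX_EVALUE = 1e-10
MIN_BITSCORE = 50.0
MIN_QUERY_COVERAGE = 70.0
MIN_PERCENT_IDENTITY = 30.0


def failed_criteria(hit):
    """Return the names of the thresholds a hit does not satisfy."""
    failures = []
    if hit["evalue"] > MAX_EVALUE:
        failures.append("evalue")
    if hit["bitscore"] < MIN_BITSCORE:
        failures.append("bitscore")
    if hit["query_coverage"] < MIN_QUERY_COVERAGE:
        failures.append("query_coverage")
    if hit["percent_identity"] < MIN_PERCENT_IDENTITY:
        failures.append("percent_identity")
    return failures


strong_hit = {"evalue": 3.2e-45, "bitscore": 187.5,
              "query_coverage": 100.0, "percent_identity": 98.7}
weak_hit = {"evalue": 1e-6, "bitscore": 45.0,
            "query_coverage": 80.0, "percent_identity": 35.0}

print(failed_criteria(strong_hit))
print(failed_criteria(weak_hit))
```

A hit is high-confidence when the returned list is empty.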

From Assignments to Biological Insight: Workflow

The following diagram illustrates the logical workflow from raw sequence data to biological interpretation within a microbial genomics thesis.

Diagram Title: COG Assignment Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for COG-Based Annotation Research

| Item | Function & Explanation |

|---|---|

| eggNOG-mapper (v2.1.12+) | A public web/server tool for fast functional annotation using precomputed orthology assignments, including COGs. It scales to large genomes and metagenomes. |

| CD-Search (NCBI) | The Conserved Domain Database search interface. Essential for verifying COG assignments by visualizing domain architecture and checking for multi-domain conflicts. |

| rpsBLAST+ Suite | Local command-line tool for Reverse Position-Specific BLAST against COG position-specific scoring matrices (PSSMs). Provides full control over parameters. |

| COG Database FTP | The source data (COG PSSMs, category definitions, functional lists). Required for building custom local search databases or for detailed reference. |

| Python (Pandas/Matplotlib) | For parsing, filtering, and visualizing output files. Crucial for generating custom functional category bar plots and summary statistics. |

| Cytoscape | Network visualization software. Used to create diagrams of metabolic or signaling pathways inferred from COG category assignments (e.g., all category [C] and [G] proteins). |

This technical guide details the critical downstream analysis phase following the annotation of microbial genomes using the Clusters of Orthologous Groups (COG) database. The core thesis posits that systematic COG annotation, when coupled with rigorous downstream visualization and statistical enrichment analysis, transforms raw genomic data into actionable biological insight. This phase is essential for hypothesis generation in comparative genomics, understanding metabolic potential, and identifying drug targets by mapping annotated gene functions onto biological pathways and processes.

A typical analysis begins by quantifying gene assignments across the 26 primary COG functional categories. The following table presents an illustrative comparative profile for two bacterial genomes, Pseudomonas aeruginosa PAO1 and Escherichia coli K-12. Only selected categories are shown, so the rows do not sum to the totals; percentages are relative to all genes in each genome.

Table 1: Comparative COG Functional Category Distribution

| COG Code | Category Description | P. aeruginosa PAO1 (Count / %) | E. coli K-12 (Count / %) |

|---|---|---|---|

| J | Translation, ribosomal structure and biogenesis | 182 / 3.2% | 152 / 3.5% |

| K | Transcription | 350 / 6.2% | 255 / 5.9% |

| L | Replication, recombination and repair | 220 / 3.9% | 180 / 4.2% |

| E | Amino acid transport and metabolism | 420 / 7.4% | 310 / 7.2% |

| G | Carbohydrate transport and metabolism | 280 / 4.9% | 320 / 7.4% |

| C | Energy production and conversion | 320 / 5.6% | 240 / 5.6% |

| S | Function unknown | 850 / 15.0% | 600 / 13.9% |

| - | Not in COGs | 1100 / 19.4% | 950 / 22.0% |

| Total | All Genes | 5672 | 4320 |

Experimental Protocols for Enrichment Analysis

Protocol 3.1: Statistical Overrepresentation Analysis (ORA)

- Objective: To identify COG categories significantly overrepresented in a gene set of interest (e.g., differentially expressed genes, genes in a genomic island) compared to a background set (e.g., the complete genome).

- Methodology:

- Define Gene Sets: Create a 'target' list (genes of interest) and a 'background' list (reference genome).

- COG Mapping: Annotate all genes in both sets with COG categories using `eggNOG-mapper` or `WebMGA`.

- Contingency Table: For each COG category, construct a 2x2 table: genes in/not in the target set vs. genes in/not in the category.

- Statistical Test: Apply a one-tailed Fisher's exact test or hypergeometric test to each category. Correct for multiple hypothesis testing using the Benjamini-Hochberg procedure (FDR < 0.05).

- Calculation: Enrichment Score = (CountTarget / SizeTarget) / (CountBackground / SizeBackground).
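The test, the multiple-testing correction, and the enrichment score in this protocol can be sketched with the standard library alone. The one-tailed hypergeometric test is used here (equivalent to the one-tailed Fisher's exact test for this table). The function names and the gene counts are hypothetical, and the target set is assumed to be a subset of the background.

```python
from math import comb


def hypergeom_upper_tail(k, n, K, N):
    """P(X >= k): k category genes among n targets, K of N in background."""
    total = comb(N, n)
    tail = sum(comb(K, i) * comb(N - K, n - i)
               for i in range(k, min(K, n) + 1))
    return tail / total


def enrichment_score(k, n, K, N):
    """(CountTarget / SizeTarget) / (CountBackground / SizeBackground)."""
    return (k / n) / (K / N)


def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from largest p-value to smallest
        index = order[rank - 1]
        running_min = min(running_min, pvalues[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical category: 3 of 4 target genes vs. 5 of 20 background genes.
p_value = hypergeom_upper_tail(k=3, n=4, K=5, N=20)
score = enrichment_score(k=3, n=4, K=5, N=20)
adjusted = benjamini_hochberg([0.01, 0.04, 0.03])
print(p_value, score, adjusted)
```

In practice one p-value is computed per COG category and the whole list is passed to the correction step; categories with an adjusted value below 0.05 are reported as enriched.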

Protocol 3.2: Gene Set Enrichment Analysis (GSEA)-Style Approach

- Objective: To detect subtle but coordinated shifts in COG functional profiles across a ranked gene list (e.g., by log2 fold-change from RNA-seq).

- Methodology:

- Rank Gene List: Rank all genes in the genome by a metric of interest (e.g., expression difference).

- Calculate Enrichment Score (ES): Walk down the ranked list, increasing a running-sum statistic when a gene belongs to the COG category, decreasing it otherwise. The maximum deviation from zero is the ES.

- Significance Assessment: Permute the gene labels (n=1000) to generate a null distribution of ES. The nominal p-value is the proportion of permutations yielding an ES greater than or equal to the observed ES.

- Normalization: Normalize ES to account for category size, generating a Normalized Enrichment Score (NES).
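The running-sum statistic and its permutation test can be sketched as below. This is the unweighted (Kolmogorov-Smirnov-like) form: each category gene adds `1/n_hit` and every other gene subtracts `1/n_miss`. It omits the NES normalization step and tests only positive enrichment, so it is a simplified illustration rather than a full GSEA implementation; gene names and the fixed seed are assumptions.

```python
import random


def running_sum_es(ranked_genes, category_genes):
    """Maximum signed deviation of the running sum from zero."""
    hits = [gene in category_genes for gene in ranked_genes]
    n_hit = sum(hits)
    n_miss = len(hits) - n_hit
    if n_hit == 0 or n_miss == 0:
        raise ValueError("category must cover some, but not all, genes")
    running, best = 0.0, 0.0
    for is_hit in hits:
        running += 1 / n_hit if is_hit else -1 / n_miss
        if abs(running) > abs(best):
            best = running
    return best


def permutation_pvalue(ranked_genes, category_genes, n_perm=1000, seed=0):
    """Observed ES and the share of permutations with ES >= observed."""
    rng = random.Random(seed)
    observed = running_sum_es(ranked_genes, category_genes)
    shuffled = list(ranked_genes)
    at_least_as_extreme = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # equivalent to permuting gene labels
        if running_sum_es(shuffled, category_genes) >= observed:
            at_least_as_extreme += 1
    return observed, at_least_as_extreme / n_perm


# Hypothetical ranked list: the category's genes sit at the very top.
ranked = [f"g{i}" for i in range(1, 11)]
category = {"g1", "g2", "g3"}
observed_es, nominal_p = permutation_pvalue(ranked, category)
print(observed_es, nominal_p)
```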

Visualizing Functional Profiles and Pathways

Diagram 1: Downstream Analysis Workflow from COG Annotation

Diagram 2: Enrichment Analysis Logic for a Single COG Category

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for COG-Based Downstream Analysis

| Item | Function & Explanation |

|---|---|

eggNOG-mapper v2+ |

Web/standalone tool for functional annotation against COG, KEGG, and Gene Ontology databases from protein sequences. |

clusterProfiler (R) |

Comprehensive R package for statistical analysis and visualization of functional profiles (including custom COG sets). |

Cytoscape with enrichmentMap |

Network visualization platform and app to create interactive maps of enriched COG categories and their overlap. |

| STRING Database | Resource to build protein-protein interaction networks for genes belonging to a significantly enriched COG category. |

| KEGG Mapper – Search&Color Pathway | Tool to map a list of genes (e.g., from an enriched COG) onto KEGG reference pathways for visual metabolic reconstruction. |

| MicrobiomeAnalyst | Web-based platform with a 'Functional Analysis' module that accepts COG abundance tables for comparative and enrichment analysis. |

| ggplot2 & pheatmap (R) | Critical R packages for generating publication-quality bar charts, dot plots, and heatmaps of COG enrichment results. |

Within the broader thesis on advancing microbial genome annotation research using the Clusters of Orthologous Groups (COG) database, a critical challenge is the functional interpretation of COG assignments. While COG provides a phylogenetic classification of proteins, its full utility is unlocked by integrating its data with curated pathway repositories (KEGG, MetaCyc) and structured vocabularies (Gene Ontology, GO). This integration transforms simple protein lists into mechanistic models of microbial physiology, metabolism, and adaptation, directly impacting hypotheses in microbial ecology, synthetic biology, and antimicrobial drug discovery.

Table 1: Core Databases for COG Data Integration

| Database | Primary Scope | Update Frequency (as of 2024) | Key Linkage to COGs |

|---|---|---|---|

| COG Database | Phylogenetic classification of proteins from prokaryotic genomes. | Infrequent major releases; latest: COG 2020 (previous: 2014). Core set stable. | Source framework. Each COG ID (e.g., COG0001) represents an orthologous group. |

| KEGG (Kyoto Encyclopedia of Genes and Genomes) | Integrated database of pathways, diseases, drugs, and chemical substances. | Regular monthly updates. | Maps KEGG Orthology (KO) identifiers to COGs via the gene2ko and ko2cog files. |

| MetaCyc | Curated database of experimentally elucidated metabolic pathways and enzymes. | Quarterly updates. | Links enzyme nomenclature (EC numbers) to proteins, which can be traced to COG members. |

| Gene Ontology (GO) | Standardized vocabulary (ontologies) for biological processes, molecular functions, and cellular components. | Daily updates. | GO terms are associated with COGs via manual curation and inter-database mappings (e.g., from UniProt). |

Table 2: Typical Annotation Coverage Statistics for a Model Bacterial Genome (Escherichia coli K-12)

| Annotation Type | Number of Genes Annotated | Percentage of Genome | Primary Integration Method |

|---|---|---|---|

| COG Assignment | 4,147 | ~98% | Direct assignment by RPS-BLAST/COGNITOR. |

| KEGG Pathway Map | 2,583 | ~61% | KO assignment followed by pathway mapping. |

| MetaCyc Pathway | 1,892 | ~45% | EC number assignment followed by pathway mapping. |

| GO Term | 3,856 | ~91% | Mapping via UniProtKB cross-references. |

Experimental Protocols for Integration

Protocol 1: From Genome Sequence to Integrated Annotations

- Objective: Generate a comprehensive functional profile for a newly sequenced microbial genome.

- Input: Assembled genome (FASTA format of protein sequences).

- Tools & Reagents: High-performance computing cluster, BLAST+ suite, custom Perl/Python/R scripts.

- COG Assignment: Perform RPS-BLAST of all protein sequences against the CDD profile of the COG database (`cog-20.cog.db`). Use an E-value cutoff of 0.01. Assign the best-hit COG ID and functional category to each protein.

- KO Assignment: Use `kofamscan` against the KOfam HMM profile database (or a BLAST-based service such as BlastKOALA) to assign KO identifiers. Alternatively, use the precomputed mapping file (ko2cog) to infer KOs from COGs (less precise).

- Pathway Reconstruction: Input the list of KO identifiers into KEGG's KEGG Mapper – Reconstruct Pathway tool. For MetaCyc, use the Pathway Tools software with assigned EC numbers (derived from COG annotation or via UniProt).

- GO Annotation: Use `InterProScan` to identify protein domains and assign GO terms via the InterPro2GO mapping. Supplement by querying the UniProtKB API with protein IDs to retrieve curated GO associations.

- Data Integration: Merge all annotation tables (COG ID, KO, EC, GO) using protein identifiers as the primary key. Resolve conflicts by prioritizing direct experimental evidence codes in GO.
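
The best-hit selection and the final merge step of this protocol can be sketched as follows. The sketch assumes the search was run with the standard 12-column BLAST+ tabular output (`-outfmt 6`) and that subject identifiers have already been translated to COG IDs; the helper names `best_cog_hits` and `merge_annotations` and the example rows are hypothetical. Conflict resolution by GO evidence code is not covered here.

```python
import csv

# Column order of BLAST+ tabular output (-outfmt 6).
BLAST6_FIELDS = [
    "qseqid", "sseqid", "pident", "length", "mismatch", "gapopen",
    "qstart", "qend", "sstart", "send", "evalue", "bitscore",
]


def best_cog_hits(tabular_lines, evalue_cutoff=0.01):
    """Keep, per query protein, the highest-bit-score hit passing the E-value cutoff."""
    best = {}
    reader = csv.DictReader(tabular_lines, fieldnames=BLAST6_FIELDS, delimiter="\t")
    for row in reader:
        evalue, bits = float(row["evalue"]), float(row["bitscore"])
        if evalue > evalue_cutoff:
            continue
        current = best.get(row["qseqid"])
        if current is None or bits > current[1]:
            best[row["qseqid"]] = (row["sseqid"], bits)
    return {protein: hit[0] for protein, hit in best.items()}


def merge_annotations(**tables):
    """Outer-join annotation tables (dicts keyed by protein ID) into one record per protein."""
    proteins = sorted(set().union(*(t.keys() for t in tables.values())))
    return {p: {name: t.get(p) for name, t in tables.items()} for p in proteins}


# Hypothetical search output: two hits for prot1, one weak hit for prot2.
rows = [
    "prot1\tCOG0001\t40.0\t100\t0\t0\t1\t100\t1\t100\t1e-20\t150",
    "prot1\tCOG0002\t35.0\t100\t0\t0\t1\t100\t1\t100\t1e-5\t60",
    "prot2\tCOG0003\t30.0\t50\t0\t0\t1\t50\t1\t50\t0.5\t20",
]
cog = best_cog_hits(rows)
merged = merge_annotations(cog=cog, ko={"prot1": "K00001", "prot2": "K00002"})
for protein, record in merged.items():
    print(protein, record)
```

Using an outer join keeps proteins that received only some annotation types, so missing values remain visible as `None` rather than silently dropping the protein.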

Protocol 2: Enrichment Analysis for Comparative Genomics

- Objective: Identify biologically meaningful differences (e.g., pathways, GO terms) between two sets of COG-annotated genes (e.g., pathogen vs. non-pathogen).

- Input: Two lists of COG IDs.

- Tools & Reagents: R statistical environment with `clusterProfiler`, `topGO`, or the `phyper` function.

- Background Set: Define the universe of all COG IDs present in the pangenome of the studied clade.

- Conversion: Translate the input COG ID lists to the corresponding identifier for the target resource (e.g., KO IDs for KEGG, GO terms for GO) using the mapping files.

- Statistical Test: Perform a hypergeometric test or Fisher's exact test for each pathway/GO term to assess over-representation in the gene set of interest.

- Multiple Testing Correction: Apply the Benjamini-Hochberg procedure to control the False Discovery Rate (FDR). Consider terms with an FDR-adjusted p-value < 0.05 as significantly enriched.
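
The statistical core of this protocol, a one-sided hypergeometric test followed by Benjamini-Hochberg adjustment, can be sketched with the Python standard library alone. The protocol itself names R tools; this sketch only illustrates the logic. The function names, the KO-style identifiers, and the two example pathways (`mapA`, `mapB`) are hypothetical.

```python
from math import comb


def hypergeom_sf(k, population, successes, draws):
    """P(X >= k) when drawing `draws` items without replacement."""
    upper = min(successes, draws)
    total = comb(population, draws)
    tail = sum(
        comb(successes, i) * comb(population - successes, draws - i)
        for i in range(max(k, 0), upper + 1)
    )
    return tail / total


def benjamini_hochberg(pvalues):
    """Return FDR-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def enrich(target_ids, background_ids, term_members, alpha=0.05):
    """Over-representation test for each term; returns (term, p, fdr, significant)."""
    background = set(background_ids)
    target = set(target_ids) & background
    terms, pvals = [], []
    for term, members in term_members.items():
        members = set(members) & background
        if not members:
            continue
        overlap = len(members & target)
        terms.append(term)
        pvals.append(hypergeom_sf(overlap, len(background), len(members), len(target)))
    fdr = benjamini_hochberg(pvals)
    return [(t, p, q, q < alpha) for t, p, q in zip(terms, pvals, fdr)]


# Hypothetical identifiers after the conversion step.
universe = [f"K{i:05d}" for i in range(1, 21)]
gene_set = universe[:5]
pathways = {"mapA": universe[:5], "mapB": universe[9:14]}
for result in enrich(gene_set, universe, pathways):
    print(result)
```

Restricting both the target set and each term to the background universe before counting keeps the test consistent with the background defined in the first step.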

Visualization of Workflows and Relationships

Diagram Title: COG Data Integration Workflow

Diagram Title: COG IDs Mapped to a KEGG Metabolic Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for COG-Based Integration Studies

| Item/Reagent | Function in Integration Research | Example/Supplier |

|---|---|---|

| CDD & COG Profile Database | Core set of position-specific scoring matrices (PSSMs) for identifying COG membership via homology search. | NCBI's Conserved Domain Database (CDD) release. |

| KOfam HMM Profiles | Curated set of hidden Markov models for precise assignment of KEGG Orthology (KO) identifiers. | KEGG official repository (KofamKOALA). |

| Pathway Tools Software | Bioinformatics software environment for pathway prediction, visualization, and analysis using MetaCyc. | SRI Bioinformatics (Biocyc.org). |

| InterProScan Suite | Integrated tool for protein domain/family recognition, providing cross-references to GO terms. | EMBL-EBI InterPro consortium. |

| UniProtKB Mapping Files | Precomputed tables linking UniProtKB accessions to COG, KO, and GO identifiers. | UniProt FTP server. |

| clusterProfiler R Package | Statistical package for functional enrichment analysis of GO terms and KEGG pathways. | Bioconductor project. |

| Custom Python/R Script Library | For parsing BLAST outputs, merging annotation tables, and managing identifier mapping. | In-house or public repositories (e.g., GitHub). |

Solving Common COG Annotation Challenges: Accuracy, Speed, and Interpretability

Within the broader thesis of COG (Clusters of Orthologous Genes) database-driven microbial genome annotation research, low annotation rates remain a critical bottleneck. This technical guide examines the synergistic optimization of prediction algorithm parameters and strategic reference database selection to maximize functional assignment coverage and accuracy, directly impacting downstream applications in drug target discovery and metabolic pathway analysis.

Despite advances in sequencing, a significant proportion of genes in novel microbial genomes receive no functional annotation ("hypothetical proteins"). This gap impedes research in antibiotic resistance, microbiome function, and novel enzyme discovery. This guide addresses this through a dual-pronged, evidence-based approach.

Core Parameter Tuning for Annotation Pipelines

Optimal parameter settings for gene-calling and homology search tools drastically affect sensitivity and specificity.

Gene Prediction Parameter Optimization

Mis-annotations often begin at the gene-calling stage. Key parameters for tools like Prodigal and Glimmer require tuning for non-model organisms.

Table 1: Impact of Key Prodigal Parameters on Annotation Yield

| Parameter | Default Value | Tuned Range | Effect on Annotation Rate | Recommended for (G+C%) |

|---|---|---|---|---|

| -p (Procedure) | single | meta for metagenomes | Increases ORF detection in fragmented assemblies | All metagenomic samples |

| -g (Genetic Code) | 11 | 4 (Mycoplasma), 25 (Candidate Division SR1, Gracilibacteria) | Avoids gene calls truncated at reassigned stop codons, increases valid hits | Divergent phyla |

| Translation Table | 11 | Adjust per phylogeny | Reduces false-negative gene calls | High/Low G+C% genomes |

| Min Gene Length | 90 bp | 60-75 bp for compact genomes | Captures small functional RNAs/peptides | Mycoplasma, organelles |

Homology Search Parameter Tuning

Sensitivity of tools like BLAST, DIAMOND, and HMMER is controlled by statistical thresholds.

Table 2: E-value and Coverage Thresholds for COG Assignment

| Search Tool | Baseline E-value | Optimized E-value | Min. Query Coverage | Avg. % Increase in Assignments |

|---|---|---|---|---|

| BLASTP | 0.001 | 0.01 - 0.1 | 50% | 8-12% |

| DIAMOND (Sensitive) | 0.001 | 0.1 | 60% | 15-20% |

| HMMER (Pfam) | 0.01 | 0.1 (per-domain) | Align full domain | 10-15% for remote homologs |

Experimental Protocol: Systematic Parameter Sweep

- Input: A curated benchmark set of 100 microbial genomes with validated "gold-standard" annotations.

- Tool Suite: Install Prodigal v2.6.3, DIAMOND v2.1.8, HMMER v3.3.2.

- Procedure:

a. Run gene prediction with varying `-g` and min-length parameters.

b. Perform homology searches against the COG database (Release 2020) using a grid of E-values (1e-10, 1e-5, 1e-3, 0.1) and minimum coverage thresholds (40%, 50%, 60%, 70%).

c. Compare outputs to the gold standard using precision (TP/(TP+FP)) and recall (TP/(TP+FN)) metrics.

- Validation: Use conserved single-copy orthologs (e.g., via CheckM) to assess false negatives.
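
The threshold grid and the precision/recall comparison in this procedure can be sketched as below. The sketch assumes homology hits have already been parsed into records with an E-value, a query-coverage fraction, and a bit score, and it counts a wrong COG assignment as both a false positive and a false negative. The function names `precision_recall` and `sweep` and the three example hits are hypothetical.

```python
from itertools import product


def precision_recall(predicted, gold):
    """predicted, gold: dicts mapping protein ID -> COG ID."""
    tp = sum(1 for p, cog in predicted.items() if gold.get(p) == cog)
    fp = len(predicted) - tp
    fn = sum(1 for p, cog in gold.items() if predicted.get(p) != cog)
    precision = tp / (tp + fp) if (tp + fp) else 0.0  # no predictions: report 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def sweep(hits, gold, evalues=(1e-10, 1e-5, 1e-3, 0.1), coverages=(0.4, 0.5, 0.6, 0.7)):
    """Evaluate every (E-value, coverage) pair; returns {(e, cov): (precision, recall)}."""
    results = {}
    for e_cut, cov_cut in product(evalues, coverages):
        best = {}
        for h in hits:
            if h["evalue"] <= e_cut and h["coverage"] >= cov_cut:
                kept = best.get(h["protein"])
                if kept is None or h["bitscore"] > kept["bitscore"]:
                    best[h["protein"]] = h
        predicted = {p: h["cog"] for p, h in best.items()}
        results[(e_cut, cov_cut)] = precision_recall(predicted, gold)
    return results


# Hypothetical hits against a three-protein gold standard.
gold_standard = {"p1": "COG0001", "p2": "COG0002", "p3": "COG0003"}
example_hits = [
    {"protein": "p1", "cog": "COG0001", "evalue": 1e-20, "coverage": 0.90, "bitscore": 200},
    {"protein": "p2", "cog": "COG0002", "evalue": 1e-4, "coverage": 0.55, "bitscore": 50},
    {"protein": "p3", "cog": "COG0009", "evalue": 0.05, "coverage": 0.45, "bitscore": 30},
]
for (e_cut, cov_cut), (prec, rec) in sweep(example_hits, gold_standard).items():
    print(f"E<={e_cut:g} cov>={cov_cut:.0%} precision={prec:.2f} recall={rec:.2f}")
```

In this toy data, loosening both thresholds recovers a second correct assignment but also admits one wrong one, which is the trade-off the sweep is meant to expose.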

Diagram Title: Parameter Optimization Workflow

Strategic Database Selection and Integration

The choice and combination of reference databases are as critical as algorithmic parameters.

Table 3: Database Characteristics and Annotation Yield

| Database | Scope | Avg. % Genes Annotated (Bacterial Genome) | Redundancy | Update Frequency | Key Use Case |

|---|---|---|---|---|---|

| COG | Orthologous groups, functional class | 60-70% | Low | Infrequent (major releases in 2014 and 2020) | Core cellular process inference |

| eggNOG | Hierarchical orthology, expanded | 65-75% | Medium | Annual | Broad phylogenetic analysis |

| KEGG | Pathways, modules, BRITE hierarchies | 50-65% | Low | Monthly | Metabolic pathway reconstruction |

| UniRef90 | Clustered protein sequences | 70-80% | High | With each UniProt release (roughly every 8 weeks) | Maximizing raw hit rate |

| Pfam | Protein domain families | 55-70% (domain-level) | Low | Quarterly | Identifying functional motifs |

| Custom COG+ | COG + niche-specific HMMs | 75-85% | Tailored | As needed | Novel environmental/genomic clades |

Experimental Protocol: Creating a Custom Integrated Database

- Base: Download latest COG (ftp.ncbi.nih.gov/pub/COG/COG2020), Pfam (Pfam-A.hmm), and UniRef90 databases.

- Curation: Add organism-specific HMMs built from aligned protein sequences of closely related, well-annotated strains (using `hmmbuild`).

- Integration: Create a concatenated FASTA file for BLAST searches and a combined HMM profile database for `hmmscan`.

- Priority Rules: Establish a hierarchical assignment logic to resolve conflicting assignments: COG category > Pfam domain > UniRef90 hit > Custom HMM hit.
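
The priority rule can be expressed as a simple ordered lookup. This is a minimal sketch that assumes each protein's hits have already been collected into a dictionary keyed by source database; the source labels, the function name `assign_function`, and the example annotations are hypothetical.

```python
# Order follows the hierarchy stated in the protocol.
PRIORITY = ("COG", "Pfam", "UniRef90", "CustomHMM")


def assign_function(hits_by_source, priority=PRIORITY):
    """Return (source, annotation) from the highest-priority source that has a hit."""
    for source in priority:
        annotation = hits_by_source.get(source)
        if annotation:
            return source, annotation
    return None, None  # no source produced a hit: remains a hypothetical protein


# Hypothetical per-protein hits.
proteins = {
    "prot1": {"COG": "COG0001", "Pfam": "PF00001"},
    "prot2": {"UniRef90": "UniRef90_EXAMPLE", "CustomHMM": "custom_family_1"},
    "prot3": {},
}
for protein, hits in proteins.items():
    print(protein, assign_function(hits))
```

Keeping the order in a single tuple makes the hierarchy easy to change, for example when custom HMMs for a well-studied clade deserve more weight than a generic UniRef90 hit.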

Diagram Title: Hierarchical Database Assignment Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Resources for Annotation Experiments

| Item/Resource | Function in Annotation Pipeline | Example/Supplier |

|---|---|---|

| Benchmark Genome Sets | Gold-standard for validating parameter changes. | GOLD (Genomes OnLine Database) curated sets, RefSeq representative genomes. |

| HMM Profile Libraries | Detect remote homology via conserved domains. | Pfam, TIGRFAMs, custom HMMs built with HMMER suite. |

| High-Performance Computing (HPC) Cluster | Enables large-scale parameter sweeps and database searches. | Local university cluster, cloud solutions (AWS ParallelCluster, Google Cloud SLURM). |

| Containerized Software | Ensures reproducibility of tool versions and parameters. | Docker/Singularity images for Prodigal, DIAMOND, InterProScan. |

| Custom Python/R Scripts | Parses output files, calculates metrics, integrates results. | Biopython, tidyverse, custom scripts for COG category aggregation. |