Implementing FAIR Data Principles in One Health Genomics: A Practical Guide for Researchers

Abstract

This article provides a comprehensive roadmap for implementing FAIR (Findable, Accessible, Interoperable, and Reusable) data principles in One Health genomics. It addresses the unique challenges of integrating diverse data types from human, animal, and environmental sources. Targeting researchers, scientists, and drug development professionals, the content moves from foundational concepts to practical applications, common troubleshooting strategies, and validation frameworks. The article emphasizes how FAIRification enhances cross-disciplinary collaboration, accelerates pathogen surveillance, and fosters more effective therapeutic discovery in a connected ecosystem.

What Are FAIR Principles and Why Are They Critical for One Health Genomics?

Within the One Health genomics research paradigm—which integrates human, animal, and environmental health—data generation is vast and complex. The effective translation of genomic insights into actionable public health or drug development outcomes is contingent upon robust data stewardship. This application note elucidates the FAIR Guiding Principles, defining them as foundational protocols for enhancing data utility and machine-actionability in collaborative, cross-species research initiatives.

The Four Pillars: Application Notes

Findable

Data and metadata must be easy to locate by both humans and computers. The core requirement is a globally unique and persistent identifier (PID).

- Key Protocol: Metadata and Data Identifier Assignment.

- Objective: To ensure every dataset is discoverable through rich, indexed metadata.

- Materials: Dataset, metadata schema (e.g., MIxS for genomics), repository API (e.g., ENA, NCBI, institutional repository).

- Methodology:

- Assign a Persistent Identifier (e.g., DOI, Accession number) to the final, versioned dataset.

- Describe the data with rich metadata using a relevant, community-accepted schema.

- Register or deposit both the PID and metadata in a searchable resource (e.g., data repository, catalog).

- Ensure metadata remains accessible even if the underlying data is deprecated.
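The Findable steps above can be sketched as a small pre-deposit check. This is a minimal sketch, assuming a hypothetical set of required fields and a placeholder DOI; a real project would take its field list from the chosen schema (for example, a MIxS checklist) and obtain the PID from the repository.

```python
import json
import re

# Hypothetical minimal field set; replace with the fields of the chosen schema.
REQUIRED_FIELDS = ("identifier", "title", "creators", "publication_year", "schema")

# Loose check of the DOI shape ("10." + registrant code + "/" + suffix).
# It does not confirm that the DOI is registered or resolvable.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def missing_fields(record: dict) -> list:
    """Return the required fields that are absent or empty in the record."""
    return [field for field in REQUIRED_FIELDS if not record.get(field)]


def looks_like_doi(identifier: str) -> bool:
    """Return True if the identifier has the general shape of a DOI."""
    return bool(DOI_PATTERN.match(identifier))


record = {
    "identifier": "10.1234/example-dataset.v1",  # placeholder DOI, not a real dataset
    "title": "Example wastewater metagenome, version 1",
    "creators": ["Example Lab"],
    "publication_year": 2024,
    "schema": "MIxS",
}

print("Missing fields:", missing_fields(record))
print("Identifier looks like a DOI:", looks_like_doi(record["identifier"]))
print(json.dumps(record, indent=2))  # JSON form suitable for indexing or deposit
```

Running such a check before deposit catches empty fields early, while the repository remains the authority on what it actually requires.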

Accessible

Data is retrievable using standard, open protocols, potentially with authentication and authorization where necessary.

- Key Protocol: Standardized Data Retrieval Workflow.

- Objective: To enable automated and manual data access via a standardized communication protocol.

- Materials: Data repository, authentication token (if applicable), data access protocol.

- Methodology:

- The data is stored in a trusted repository with a defined access policy (open, embargoed, controlled).

- Access is facilitated via a standardized, free, and open protocol (e.g., HTTPS, FTP, or a web API served over HTTPS).

- For controlled access, an authorization process (e.g., via OAuth 2.0) is clearly defined.

- Metadata is always accessible, even if data access is restricted.
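The access pattern above (open metadata, optionally token-protected data) can be illustrated with the Python standard library. This is a sketch only: the repository URLs and the token value are hypothetical, and the request is prepared but not sent.

```python
import urllib.request
from typing import Optional


def build_request(url: str, token: Optional[str] = None) -> urllib.request.Request:
    """Prepare an HTTPS GET request; attach a bearer token only when one is supplied."""
    if not url.startswith("https://"):
        raise ValueError("expected an HTTPS URL")
    request = urllib.request.Request(url, method="GET")
    request.add_header("Accept", "application/json")
    if token:
        # Typical way to present an OAuth 2.0 access token to a protected resource.
        request.add_header("Authorization", f"Bearer {token}")
    return request


# Hypothetical endpoints, for illustration only.
open_req = build_request("https://repository.example.org/api/datasets/123/metadata")
controlled_req = build_request(
    "https://repository.example.org/api/datasets/123/files", token="TOKEN"
)

# Sending a request would look like:
#   with urllib.request.urlopen(open_req, timeout=30) as response:
#       payload = response.read()
```

Keeping the metadata endpoint free of authentication mirrors the requirement that metadata stays accessible even when the data itself is restricted.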

Interoperable

Data integrates with other datasets and can be utilized by applications or workflows for analysis, storage, and processing.

- Key Protocol: Metadata and Vocabulary Harmonization.

- Objective: To enable data integration from diverse One Health domains (e.g., clinical, genomic, environmental).

- Materials: Source datasets, shared conceptual model (e.g., OBO Foundry ontology), data mapping tool (e.g., Python/R scripts).

- Methodology:

- Use formal, accessible, shared, and broadly applicable knowledge representations (e.g., SNOMED CT, ENVO, NCBI Taxonomy) for metadata fields.

- Use community-standard data formats (e.g., FASTQ, VCF, CRAM for genomics) where possible.

- Reference other related data using their PIDs within the metadata.

- Apply syntactic (format) and semantic (meaning) mapping tools to align heterogeneous datasets.
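Semantic mapping of this kind can be as simple as a curated lookup from free-text values to ontology identifiers. The sketch below maps host labels to NCBI Taxonomy IDs; the lookup table is a small hand-written illustration, and unmapped values are reported for manual curation rather than guessed.

```python
# Small hand-curated lookup from free-text host labels to NCBI Taxonomy IDs.
# A production mapping would be larger and reviewed by a domain curator.
HOST_TO_TAXID = {
    "homo sapiens": 9606,
    "human": 9606,
    "bos taurus": 9913,
    "cattle": 9913,
    "gallus gallus": 9031,
    "chicken": 9031,
    "sus scrofa": 9823,
    "pig": 9823,
}


def harmonize_host(raw_value):
    """Map a free-text host label to an NCBITaxon CURIE, or None if unmapped."""
    key = " ".join(raw_value.strip().lower().split())  # trim and collapse whitespace
    taxid = HOST_TO_TAXID.get(key)
    return f"NCBITaxon:{taxid}" if taxid is not None else None


# Hypothetical records from three source datasets.
records = [{"host": "Human"}, {"host": " bos  taurus"}, {"host": "alpaca"}]
for rec in records:
    rec["host_taxon"] = harmonize_host(rec["host"])

# Values with no mapping are listed for manual review.
unmapped = [rec["host"] for rec in records if rec["host_taxon"] is None]
print(records)
print("Needs curation:", unmapped)
```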

Reusable

Data and metadata are sufficiently well-described to be replicated, combined, or used in new research.

- Key Protocol: Comprehensive Provenance and Readme Documentation.

- Objective: To maximize future utility and reproducibility of the dataset.

- Materials: Data processing logs, laboratory notebooks, citation information, licensing framework.

- Methodology:

- Document all aspects of data provenance: who created it, with what tools, parameters, and processing steps.

- Provide a clear, machine-readable data usage license (e.g., CC0 or CC BY for data, MIT for accompanying code, or controlled-access terms).

- Accurately link data to its source (a publication, grant, or originating project) using PIDs.

- Meet domain-relevant community standards in both metadata and data quality.
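A provenance record covering these points can be kept as a small machine-readable file next to the data. The sketch below assumes illustrative field names (not a formal standard such as W3C PROV), placeholder identifiers, and placeholder tool versions.

```python
import json
from datetime import datetime, timezone


def provenance_record(dataset_pid, creator, steps, license_id, source_pids):
    """Assemble a provenance record; the field names are illustrative only."""
    return {
        "dataset": dataset_pid,
        "creator": creator,
        "generated_at": datetime.now(timezone.utc).isoformat(),
        "license": license_id,  # SPDX identifier such as "CC0-1.0" or "CC-BY-4.0"
        "derived_from": list(source_pids),
        "processing_steps": [
            {
                "order": index,
                "tool": step["tool"],
                "version": step["version"],
                "parameters": step.get("parameters", {}),
            }
            for index, step in enumerate(steps, start=1)
        ],
    }


# Placeholder tools, versions, and parameters; record the real values from the run logs.
steps = [
    {"tool": "fastp", "version": "0.0.0", "parameters": {"min_quality": 20}},
    {"tool": "SPAdes", "version": "0.0.0"},
]
record = provenance_record(
    dataset_pid="10.1234/example-dataset.v1",   # placeholder DOI
    creator="Example Lab",
    steps=steps,
    license_id="CC0-1.0",
    source_pids=["PRJEB00000"],                 # placeholder accession
)
print(json.dumps(record, indent=2))
```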

Quantitative Data on FAIR Implementation Impact

Table 1: Comparative Analysis of Data Reuse and Efficiency Metrics

| Metric | Non-FAIR Aligned Data | FAIR Aligned Data | Measurement Source |

|---|---|---|---|

| Data Discovery Time | Hours to days (manual search) | Minutes (automated query) | Observational study of repository searches |

| Integration Preparation Effort | High (80% time on cleaning/mapping) | Reduced (focus on analysis) | Survey of bioinformatics workflows |

| Reuse Citation Rate | Lower, often uncited | Significantly higher | Citation tracking in public repositories |

| Machine-Actionability | Low (requires human interpretation) | High (automated metadata parsing) | Assessment of API access and metadata richness |

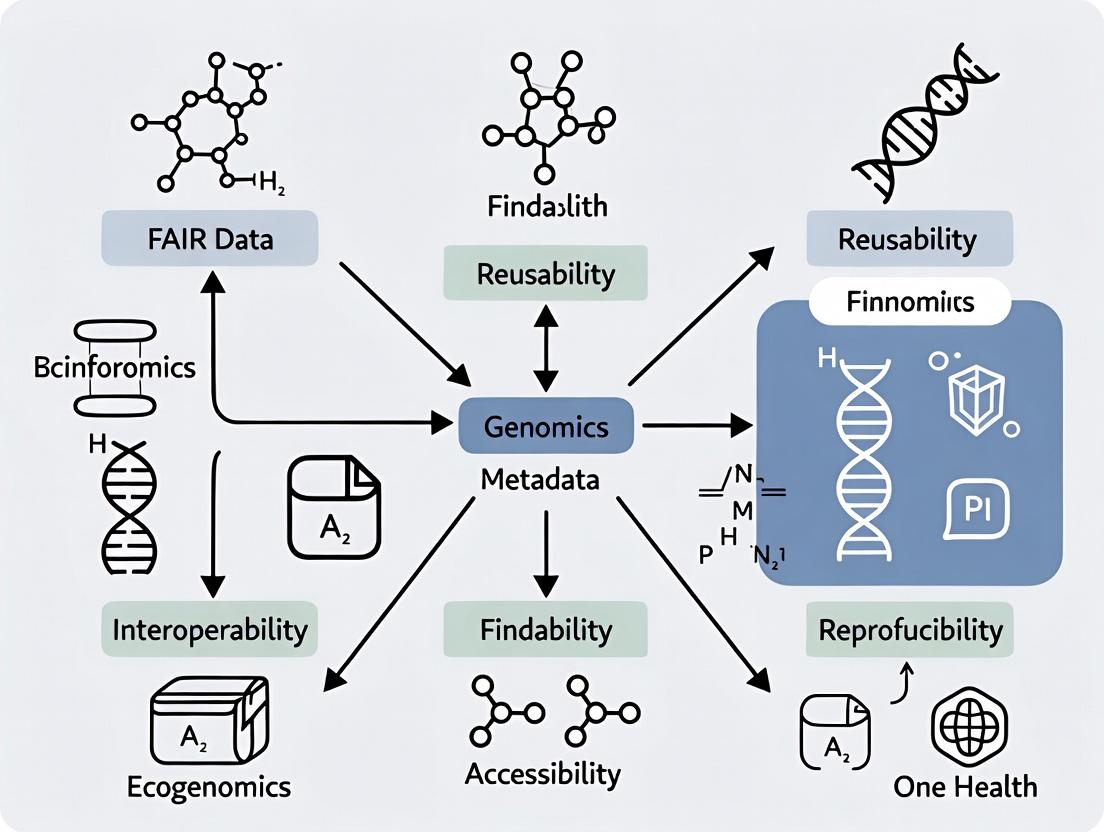

Visualizations

FAIR Principles Logical Framework

FAIR Data Workflow in One Health Genomics

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Tools for FAIR One Health Genomics Data Management

| Item Category | Specific Example/Solution | Function in FAIR Context |

|---|---|---|

| Persistent Identifiers | DOI (DataCite), Accession Number (ENA/SRA) | Provides globally unique, permanent reference for data (Findable). |

| Metadata Standards | MIxS (Minimum Information about any (x) Sequence), INSDC checklist | Schema to capture essential contextual data (Findable, Reusable). |

| Ontologies/Vocabularies | NCBI Taxonomy, ENVO, SNOMED CT | Controlled vocabularies for species, environment, phenotype (Interoperable). |

| Trusted Repository | ENA, NCBI SRA, Zenodo, Institutional Repo | Preserves data, provides PID, implements access control (Accessible). |

| Data Formats | CRAM, VCF, FASTA/FASTQ | Community-standard, often compressed/lossless formats (Interoperable). |

| Provenance Tracker | Research Object Crates (RO-Crate), Electronic Lab Notebooks | Packages data, code, and workflow to document lineage (Reusable). |

| Access Protocol | HTTPS, FTP, Aspera, API (e.g., ENA API) | Standardized methods for automated data retrieval (Accessible). |

| Usage License | Creative Commons (CC0, BY), Custom Data Use Agreement | Clearly communicates permissions for reuse (Reusable). |

Application Notes on FAIR Data Integration for One Health Genomics

One Health research necessitates the integration of disparate, multi-scale datasets from human clinical, veterinary, and environmental surveillance. Adherence to the FAIR principles (Findable, Accessible, Interoperable, Reusable) is critical for enabling cross-domain data analysis and accelerating translational insights.

Table 1: Core Quantitative Metrics for Integrated One Health Genomic Surveillance

| Metric Category | Human Clinical Data | Animal/Veterinary Data | Environmental Data (e.g., Wastewater) | Integrated FAIR Goal |

|---|---|---|---|---|

| Typical Sequencing Depth | 100-150x (WGS) | 30-100x (WGS) | 500-10,000x (amplicon) | Standardized metadata for depth & platform |

| Key Metadata Fields | Age, symptom onset, geolocation | Species, health status, husbandry | Sample type (water/soil), pH, temp | Use of controlled vocabularies (SNOMED CT, ENVO) |

| Primary File Format | CRAM/BAM, FASTQ, VCF | FASTQ, VCF | FASTQ, count tables | Cloud-optimized formats (e.g., .zarr) |

| Public Repository | NCBI SRA, dbGaP | NCBI SRA, ENA | NCBI SRA, ENA | Persistent identifiers (DOIs) for datasets |

| Minimum Sample Size (Per Study) | 500-1000 isolates | 200-500 isolates | 50-200 sampling sites | Sample size justification linked to data reusability |

Table 2: FAIR Compliance Checklist for a One Health Genomics Project

| FAIR Principle | Implementation Requirement | Compliance Tool/Standard |

|---|---|---|

| Findable | Unique, persistent identifier (PID) for dataset. Rich, searchable metadata. | DataCite DOI, NCBI BioProject ID |

| Accessible | Standardized, open communication protocol. Metadata accessible even if data is restricted. | HTTPS, OAuth 2.0, ENA API |

| Interoperable | Use of formal, accessible, shared knowledge representations. Qualified references to other metadata. | OBO Foundry ontologies (GO, ChEBI), MIxS standards |

| Reusable | Detailed provenance and data usage license. Domain-relevant community standards. | CC0 waiver, TRUST principles, INSDC submission. |

Protocols

Protocol 1: Integrated Metagenomic Sequencing for Pathogen Detection in Human, Animal, and Environmental Matrices

Objective: To uniformly process diverse sample types for untargeted detection of bacterial and viral pathogens, enabling cross-species comparison.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Sample Collection & Nucleic Acid Extraction:

- Human/Animal: Collect nasal/oropharyngeal swabs or feces in universal transport medium. Extract total nucleic acid using a bead-beating protocol (e.g., MagMAX Viral/Pathogen Kit) to ensure lysis of hardy pathogens.

- Environmental: Collect 50-100mL of wastewater or surface water. Concentrate via centrifugal filtration (100kDa membrane). Process pellet as per human/animal samples.

- Library Preparation & Sequencing:

- Treat DNA/RNA extract with DNase I to enrich for RNA viruses. Perform reverse transcription for RNA.

- Use a shotgun metagenomic sequencing kit (e.g., Nextera XT DNA Library Prep) for all samples. Critical: Use unique dual indices (UDIs) for each sample to prevent index hopping and allow pooling of all sample types in a single sequencing run.

- Sequence on an Illumina NextSeq 2000 platform to generate 2x150 bp paired-end reads, targeting 20-50 million reads per sample.

- Bioinformatic Analysis (FAIR-Oriented Workflow):

- Demultiplexing & QC: Use bcl-convert or bcl2fastq. Assess quality with FastQC.

- Host Depletion: Map reads to appropriate host genomes (human GRCh38, bovine ARS-UCD1.2, etc.) using Kraken2 with a standard database and remove matching reads.

- Taxonomic & Pathogenic Profiling: Analyze non-host reads with Kraken2/Bracken against the standardized "PlusPF" database (includes archaea, bacteria, viruses, plasmids, fungi, protozoa). Output results in mothur-standard format for interoperability.

- Contig Assembly & Annotation: Assemble depleted reads with metaSPAdes. Predict open reading frames with Prodigal. Annotate against ResFinder, VFDB, and CARD databases for antimicrobial resistance and virulence genes.
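The taxonomic profiling step can be scripted so that the exact commands are recorded with the data. The following sketch builds Kraken2 and Bracken command lines as argument lists without executing them. The database and file paths are hypothetical placeholders, and the flags should be checked against the installed tool versions.

```python
import shlex


def kraken2_command(db, reads_1, reads_2, report, output, threads=8):
    """Build a Kraken2 command for paired-end reads (returned, not executed)."""
    return [
        "kraken2", "--db", db, "--threads", str(threads), "--paired",
        "--report", report, "--output", output, reads_1, reads_2,
    ]


def bracken_command(db, report, output, read_length=150, level="S"):
    """Build a Bracken command that re-estimates abundance at a taxonomic level."""
    return [
        "bracken", "-d", db, "-i", report, "-o", output,
        "-r", str(read_length), "-l", level,
    ]


# Hypothetical paths; 150 matches the 2x150 bp read length used in this protocol.
kraken_cmd = kraken2_command(
    db="/db/k2_pluspf",
    reads_1="sample01_nonhost_R1.fastq.gz",
    reads_2="sample01_nonhost_R2.fastq.gz",
    report="sample01.kreport",
    output="sample01.kraken",
)
bracken_cmd = bracken_command("/db/k2_pluspf", "sample01.kreport", "sample01.bracken")

# Log the exact commands for provenance; run them with subprocess.run(cmd, check=True).
print(shlex.join(kraken_cmd))
print(shlex.join(bracken_cmd))
```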

Protocol 2: Phylogenomic Integration of Isolate Data Across One Health Domains

Objective: To construct unified phylogenetic trees integrating pathogen isolates from human, animal, and environmental sources to trace transmission pathways.

Procedure:

- Data Curation (FAIR Focus):

- Gather whole-genome sequencing (WGS) data from public repositories (SRA, ENA) and in-house studies. Document all source metadata using the MIxS (Minimum Information about any (x) Sequence) checklists.

- Ensure all isolates have associated spatiotemporal metadata (collection date, latitude, longitude, source: human/animal/environment).

- Core Genome Alignment:

- Assemble all WGS reads into draft genomes using shovill (wrapper for SPAdes).

- Annotate genomes uniformly with Prokka or Bakta.

- Generate the core genome alignment using Roary (genes present in ≥99% of isolates) or ParSNP for a more robust alignment.

- Phylogenetic Inference & Integration:

- Filter the core genome alignment for recombination using Gubbins.

- Construct a maximum-likelihood phylogeny using IQ-TREE2 with automatic model selection and 1000 ultrafast bootstrap replicates.

- Visual Integration: Use Microreact to create an interactive visualization. Upload the tree file, and a CSV table containing the FAIR metadata (source, location, date, antimicrobial resistance profile). This creates a shareable, reusable resource linking genomic data to contextual metadata.
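The metadata table for the visual integration step can be generated programmatically so that it always matches the tree. The sketch below writes a CSV with one row per isolate and checks that every row has a matching tree leaf. The column names (an id column matching the tree labels, latitude/longitude, and separate year/month/day columns) are an assumption about a layout Microreact can read and should be confirmed against its current documentation; the isolates are invented for illustration.

```python
import csv
import io

# Assumed column layout; confirm against the Microreact documentation.
COLUMNS = ["id", "source", "latitude", "longitude", "year", "month", "day", "amr_profile"]


def metadata_csv(rows):
    """Serialize isolate metadata to CSV text with a fixed column order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for row in rows:
        writer.writerow({column: row.get(column, "") for column in COLUMNS})
    return buffer.getvalue()


def ids_missing_from_tree(rows, tree_leaf_names):
    """Return metadata ids that have no matching leaf in the tree."""
    leaves = set(tree_leaf_names)
    return [row["id"] for row in rows if row["id"] not in leaves]


# Invented isolates for illustration only.
rows = [
    {"id": "isolate_A", "source": "human", "latitude": 52.37, "longitude": 4.90,
     "year": 2023, "month": 6, "day": 14, "amr_profile": "blaCTX-M-15"},
    {"id": "isolate_B", "source": "environment", "latitude": 52.09, "longitude": 5.12,
     "year": 2023, "month": 7, "day": 2, "amr_profile": ""},
]

print(metadata_csv(rows))
print("Not in tree:", ids_missing_from_tree(rows, ["isolate_A"]))
```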

Diagrams

One Health FAIR Data Integration Workflow

One Health AMR Transmission & Selection Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in One Health Genomics | Example Product/Brand |

|---|---|---|

| Universal Nucleic Acid Preservation Medium | Stabilizes DNA/RNA from diverse sample types at point of collection, ensuring integrity for downstream omics. | Norgen Biotek Sample Preservation Kit, DNA/RNA Shield (Zymo Research) |

| Broad-Spectrum Nuclease Inhibitors | Critical for environmental samples (e.g., wastewater) which contain high levels of RNases and DNases. | SUPERase•In RNase Inhibitor, Baseline-ZERO DNase |

| Metagenomic Library Prep Kit | Enables unbiased, shotgun sequencing of total nucleic acid from any source without prior amplification bias. | Illumina DNA Prep, KAPA HyperPlus Kit |

| Unique Dual Index (UDI) Oligos | Allows massive multiplexing of human, animal, and environmental samples in one sequencing run, preventing index hopping. | Illumina CD Indexes, IDT for Illumina UDIs |

| Host Depletion Probes | Removes abundant host (human, animal) reads to increase sensitivity for pathogen detection in clinical/veterinary samples. | Human/Bovine/Canine rRNA Depletion Kit (New England Biolabs) |

| Positive Control Synthetic Community | Validates entire workflow from extraction to sequencing across sample types; ensures cross-lab comparability (FAIR). | ZymoBIOMICS Microbial Community Standard |

| Cloud-Based Analysis Platform | Provides scalable, reproducible computational environment for integrating large datasets with FAIR principles. | Terra.bio, Galaxy Project, CZ ID (Chan Zuckerberg ID) |

Application Notes on FAIR Data Implementation in One Health Genomics

The integration of FAIR (Findable, Accessible, Interoperable, Reusable) data principles into One Health genomics is critical for addressing complex threats like pandemics and antimicrobial resistance (AMR). These notes outline the application of FAIR in building actionable surveillance systems.

Table 1: Impact of FAIR-Compliant Data Sharing on Pathogen Surveillance Timelines (Comparative Analysis)

| Metric | Non-FAIR Ecosystem (Traditional Submission) | FAIR-Compliant Ecosystem (Streamlined Pipeline) |

|---|---|---|

| Data Submission to Public Repository | 30-180 days (Post-publication) | ≤ 7 days (Real-time, pre-publication) |

| Time to Primary Analysis (e.g., Variant Calling) | 2-4 weeks (Heterogeneous pipelines) | 24-48 hours (Standardized workflows) |

| Inter-Lab Data Integration for Meta-Analysis | Months (Manual harmonization) | Days (Automated via shared ontologies) |

| Identification of Emerging Variant/Resistance Gene | 6-12 months lag | Potential for early warning (<1 month) |

Table 2: Key AMR Gene Databases & Their FAIRness Indicators

| Database Name | Primary Focus | Findability (Unique PID) | Interoperability (Standard Ontology) | Reusability (Clear License) |

|---|---|---|---|---|

| CARD | Comprehensive Antibiotic Resistance Database | DOI for releases | RO-Crate, ARO ontology | CC BY-SA 4.0 |

| NCBI AMRFinderPlus | NCBI's pathogen resistance detection | BioProject/BioSample IDs | NCBI Taxonomy, SnpEff | Public domain |

| ResFinder | Acquired antimicrobial resistance genes | None by default | Custom nomenclature | CC BY-NC 4.0 |

| MEGARes | AMR hierarchy for metagenomics | DOI | MEGARes ontology | CC BY 4.0 |

Detailed Experimental Protocols

Protocol 1: End-to-End FAIR-Compliant Metagenomic Sequencing for AMR Tracking in One Health Samples

Objective: To generate and publish sequence data from environmental, animal, or human samples with embedded FAIR metadata for AMR gene surveillance.

I. Sample Collection & Metadata Annotation

- Sample Collection: Collect sample (e.g., wastewater, nasal swab, agricultural run-off) using appropriate sterile techniques.

- Instantiate Metadata Template: At the point of collection, complete a standardized metadata sheet (e.g., ISA-Tab format or NCBI BioSample checklist).

- Mandatory Fields: Include sample type, host/environment, geographic location (latitude/longitude), collection date/time, AMR exposure risk (if known), collector name. Assign a unique local Sample ID.
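A metadata sheet of this kind can be validated at the point of collection before the sample enters the lab workflow. The sketch below assumes hypothetical field names for the mandatory fields listed above and checks completeness, coordinate ranges, and ISO 8601 dates.

```python
from datetime import datetime

# Hypothetical machine-readable names for the mandatory fields listed above.
MANDATORY_FIELDS = (
    "sample_id", "sample_type", "host_or_environment",
    "latitude", "longitude", "collection_date", "collector_name",
)


def validate_sample(sample):
    """Return a list of human-readable problems; an empty list means the record passed."""
    problems = [
        f"missing field: {name}"
        for name in MANDATORY_FIELDS
        if sample.get(name) in (None, "")
    ]
    latitude, longitude = sample.get("latitude"), sample.get("longitude")
    if isinstance(latitude, (int, float)) and not -90 <= latitude <= 90:
        problems.append("latitude out of range")
    if isinstance(longitude, (int, float)) and not -180 <= longitude <= 180:
        problems.append("longitude out of range")
    date_value = sample.get("collection_date")
    if date_value:
        try:
            datetime.fromisoformat(date_value)  # accepts e.g. 2024-03-15T09:30:00
        except ValueError:
            problems.append("collection_date is not ISO 8601")
    return problems


# Invented example record.
sample = {
    "sample_id": "WW-0001",
    "sample_type": "wastewater",
    "host_or_environment": "municipal sewage",
    "latitude": 52.37,
    "longitude": 4.90,
    "collection_date": "2024-03-15T09:30:00",
    "amr_exposure_risk": "unknown",  # optional field
    "collector_name": "A. Collector",
}
print(validate_sample(sample))
```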

II. DNA Extraction & Library Preparation

- Extract total genomic DNA using a broad-spectrum kit (e.g., DNeasy PowerSoil Pro Kit for environmental samples).

- Quantify DNA using fluorometry (e.g., Qubit dsDNA HS Assay).

- Prepare sequencing library using a kit compatible with your platform (e.g., Illumina DNA Prep). Include negative extraction and library preparation controls.

- Critical FAIR Step: Record all kit catalog numbers, lot numbers, and protocol deviations in the experimental metadata file. Link this file to the Sample ID.

III. Sequencing & Primary Data Output

- Sequence on an appropriate platform (e.g., Illumina NextSeq 2000 for 2x150bp paired-end reads).

- Generate raw FASTQ files. The sequencing facility should provide a run manifest linking each FASTQ file to the submitted Sample ID.

IV. Computational Analysis & FAIR Data Packaging

- Quality Control: Use FastQC and Trimmomatic to assess and trim adapter/low-quality sequences.

- AMR Gene Profiling: Use a standardized containerized workflow (e.g., a Docker or Singularity image bundling AMRFinderPlus).

- Taxonomic Profiling: Use Kraken2/Bracken against a standard database (e.g., GTDB) for co-occurring pathogen identification.

- FAIR Packaging: Create a RO-Crate (Research Object Crate) containing:

- Raw FASTQ files (or links to repository).

- Final analysis outputs (JSON, TSV).

- The detailed metadata file (metadata.jsonld).

- A Dockerfile or Singularity definition of the analysis environment.

- A README describing the crate contents in plain language.
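The packaging step can be illustrated by generating the crate's descriptor file. The sketch below builds a minimal RO-Crate 1.1 metadata document with the standard library. RO-Crate 1.1 names this descriptor ro-crate-metadata.json, so the sketch uses that name and lists the project's own metadata file as one of the crate's parts. All file names, the crate title, and the date are hypothetical.

```python
import json


def build_ro_crate(name, description, license_url, date_published, files):
    """Return a minimal RO-Crate 1.1 metadata document as a dict.

    files is a list of (relative_path, human_readable_name) tuples.
    """
    graph = [
        {   # Descriptor entity that identifies this file as RO-Crate metadata.
            "@id": "ro-crate-metadata.json",
            "@type": "CreativeWork",
            "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
            "about": {"@id": "./"},
        },
        {   # Root dataset describing the crate as a whole.
            "@id": "./",
            "@type": "Dataset",
            "name": name,
            "description": description,
            "datePublished": date_published,
            "license": {"@id": license_url},
            "hasPart": [{"@id": path} for path, _ in files],
        },
    ]
    graph += [{"@id": path, "@type": "File", "name": label} for path, label in files]
    return {"@context": "https://w3id.org/ro/crate/1.1/context", "@graph": graph}


# Hypothetical crate contents.
crate = build_ro_crate(
    name="AMR metagenomics crate (example)",
    description="Analysis outputs, metadata, and environment definition for one run.",
    license_url="https://creativecommons.org/licenses/by/4.0/",
    date_published="2024-01-01",
    files=[
        ("results/amr_genes.tsv", "AMR gene profile"),
        ("metadata.jsonld", "Sample and experiment metadata"),
        ("Dockerfile", "Analysis environment definition"),
        ("README.md", "Plain-language description of the crate"),
    ],
)
print(json.dumps(crate, indent=2))  # write this text to ro-crate-metadata.json
```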

V. Data Deposition in Public Repositories

- Upload raw sequence reads and minimal metadata to the ENA, SRA, or GISAID (for notifiable pathogens) using their submission portals. Submission to SRA or ENA assigns unique project and sample accessions (e.g., BioProject PRJNA... and BioSample SAMN... at NCBI, or PRJEB... and ERS... at ENA).

- Deposit the analysis-ready RO-Crate in a general-purpose repository like Zenodo or Figshare, which will assign a DOI. In the description, link back to the SRA/ENA accessions.

- Register the study in a public dashboard (e.g., WHO's EPI-BRAIN, AMR Register) using the provided DOIs and accessions.

Protocol 2: Standardized Phylogenomic Analysis for Pathogen Outbreak Tracking

Objective: To reconstruct a phylogeny from publicly available FAIR genomic data to trace transmission dynamics during a suspected outbreak.

I. FAIR Data Retrieval

- Find & Access: Query public repositories using programmatic tools.
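As one possible form of programmatic retrieval, the sketch below builds a search URL for the ENA Portal API that lists sequencing runs for a taxon together with selected metadata fields. The taxon (562, Escherichia coli) and the field selection are illustrative choices; the URL is constructed but not fetched, and the available fields should be confirmed against the ENA Portal API documentation.

```python
from urllib.parse import urlencode

ENA_SEARCH = "https://www.ebi.ac.uk/ena/portal/api/search"


def ena_read_run_query(taxon_id, fields, limit=100):
    """Build an ENA Portal API search URL for runs under a taxon and its descendants."""
    params = {
        "result": "read_run",
        "query": f"tax_tree({taxon_id})",
        "fields": ",".join(fields),
        "format": "json",
        "limit": limit,
    }
    return f"{ENA_SEARCH}?{urlencode(params)}"


# Illustrative field selection for outbreak-relevant metadata.
url = ena_read_run_query(
    taxon_id=562,
    fields=["run_accession", "sample_accession", "collection_date", "country", "host", "fastq_ftp"],
)
print(url)

# Fetching would look like:
#   import json, urllib.request
#   with urllib.request.urlopen(url, timeout=60) as response:
#       runs = json.load(response)
```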

II. Core Genome Alignment & Variant Calling

- Assembly & Annotation: Assemble reads using SKESA or Shovill. Annotate assemblies with Prokka or Bakta.

- Define Core Genome: Use Roary or Panaroo to identify the core genome (genes present in ≥99% of isolates) from the annotated GFF files.

- Create Alignment: Extract core gene sequences and concatenate them using HarvestSuite (parsnp) or a custom script to generate a multi-FASTA alignment file.

III. Phylogenetic Inference & Visualization

- Model Testing & Tree Building: Use IQ-TREE2 for rapid model selection and maximum-likelihood tree inference.

- Temporal Signal & Dating: For data with collection dates, use BEAST2 to generate a time-scaled phylogeny and estimate evolutionary rates.

- Visualization: Annotate the resulting tree (core_genome_alignment.fasta.treefile) with metadata (location, host, AMR profile) using Nextstrain Auspice or Microreact.
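The tree-building step can be captured as a recorded command so that the run is reproducible. The sketch below builds an IQ-TREE 2 command line with ModelFinder model selection (-m MFP) and, as an optional assumption not stated in this protocol, 1000 ultrafast bootstrap replicates (-B 1000). The command is returned as a list rather than executed.

```python
import shlex


def iqtree2_command(alignment, bootstrap=1000, threads="AUTO"):
    """Build an IQ-TREE 2 command: model selection plus maximum-likelihood inference."""
    command = ["iqtree2", "-s", alignment, "-m", "MFP", "-T", str(threads)]
    if bootstrap:
        command += ["-B", str(bootstrap)]  # ultrafast bootstrap replicates
    return command


def expected_treefile(alignment):
    """IQ-TREE uses the alignment name as the default output prefix."""
    return f"{alignment}.treefile"


command = iqtree2_command("core_genome_alignment.fasta")
print(shlex.join(command))
print("Tree will be written to:", expected_treefile("core_genome_alignment.fasta"))

# To run: subprocess.run(command, check=True)
```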

Mandatory Visualizations

Title: FAIR Data Pipeline for One Health Threat Intelligence

Title: AMR Metagenomic Analysis Workflow

The Scientist's Toolkit: Research Reagent & Resource Solutions

| Item/Category | Example Product/Resource | Function in FAIR One Health Genomics |

|---|---|---|

| Standardized Metadata Tool | ISAcreator / CEDAR | Creates structured, ontology-annotated metadata templates to ensure Interoperability from sample collection. |

| All-in-One DNA Extraction Kit | DNeasy PowerSoil Pro Kit (QIAGEN) | Provides consistent, high-yield DNA from diverse, complex One Health sample matrices (soil, stool, swabs). |

| Metagenomic Library Prep Kit | Illumina DNA Prep | A standardized, widely adopted protocol for preparing sequencing libraries, ensuring data consistency across labs. |

| Positive Process Control | ZymoBIOMICS Microbial Community Standard | A defined mock microbial community used as a positive process control to monitor assay performance and bias; pair it with blank extraction controls to detect contamination. |

| Analysis Container | Docker / Singularity Image | Packages the exact software environment (e.g., with AMRFinderPlus, Kraken2) to guarantee reproducible (Reusable) results. |

| Data Packaging Standard | RO-Crate | A structured format to bundle data, code, and metadata into a single, reusable research object with a clear license. |

| Public Data Repository | European Nucleotide Archive (ENA) / Zenodo | Provides globally unique, persistent identifiers (PIDs) for Findability and long-term archival Access. |

| Ontology for Annotation | NCBI Taxonomy ID, ARO Ontology | Standardized vocabulary for describing organisms and AMR genes, critical for Interoperability in data integration. |

Key Stakeholders and Data Types in the One Health Genomics Ecosystem

The integration of genomics across human, animal, plant, and environmental health—the One Health approach—generates complex, multi-scale data. Adherence to FAIR (Findable, Accessible, Interoperable, Reusable) principles is paramount for enabling cross-sectoral analysis and accelerating translational discovery. This document details the key stakeholders, data types, and practical protocols within this ecosystem, framed as essential application notes for implementing FAIR-compliant research.

Stakeholder Analysis and Roles

Stakeholders are entities that generate, fund, regulate, use, or are impacted by One Health genomic data. Their roles and data interactions are summarized below.

Table 1: Key Stakeholders in the One Health Genomics Ecosystem

| Stakeholder Category | Primary Representatives | Core Interest & Role in Data Lifecycle |

|---|---|---|

| Data Generators | Public Health Agencies, Veterinary Diagnostic Labs, Agricultural Research Institutes, Environmental Monitoring Bodies, Academic Research Labs | Produce raw and processed genomic (e.g., WGS, metagenomic) and associated metadata. Responsible for initial data quality and annotation. |

| Data Integrators & Repositories | NCBI, ENA, DDBJ, BV-BRC, EFSA, WHO Data Repositories, Institutional Data Lakes | Curate, archive, and provide access to datasets. Implement data standards and accession systems for findability. |

| Data Analysts & Researchers | Bioinformaticians, Epidemiologists, Microbial Ecologists, Comparative Genomicists, Phylodynamic Modelers | Analyze integrated datasets to identify pathogens, AMR genes, transmission pathways, and evolutionary trends. Primary users of FAIR data. |

| Policy & Decision Makers | Government Health & Agriculture Departments (e.g., CDC, USDA, EFSA), Drug/Vaccine Regulatory Agencies (e.g., FDA, EMA), WHO, WOAH (formerly OIE) | Use evidence from data analysis to inform surveillance programs, outbreak responses, antimicrobial use policies, and therapeutic approvals. |

| Funders & Initiatives | NIH, Wellcome Trust, EU Horizon Europe, The Global Fund, BMGF | Define data sharing mandates, fund infrastructure (e.g., cloud platforms), and drive consortium-based projects like the European COVID-19 Data Platform. |

| Private Sector | Pharmaceutical & Diagnostic Companies, Agri-tech, Biotechnology Firms, Zoonotic Surveillance Start-ups | Utilize genomic insights for drug/vaccine target discovery, diagnostic assay development, and precision agriculture solutions. Often contributors and end-users. |

| Affected Communities | Patients, Farmers, Consumers, Environmental Advocacy Groups | Subjects and beneficiaries of research. Increasingly engaged via citizen science data collection and demand for transparent data use. |

Data Typology and Specifications

One Health genomics data is heterogeneous. FAIR implementation requires standardized description and formatting.

Table 2: Core Data Types and FAIRification Requirements

| Data Type | Common Formats | Key Metadata Standards (for Interoperability) | Typical Volume per Sample | Primary Use Case |

|---|---|---|---|---|

| Whole Genome Sequencing (WGS) | FASTQ, BAM, CRAM, VCF, FASTA | MIxS (Minimum Information about any (x) Sequence), INSDC sample checklist | 0.5 - 100 GB | Pathogen identification, outbreak source tracing, AMR & virulence profiling. |

| Metagenomic Sequencing | FASTQ, SAM/BAM, BIOM, Kraken2 report | MIxS (especially for environmental & host-associated samples) | 10 - 200 GB | Microbiome characterization, pathogen discovery in environmental reservoirs. |

| Antimicrobial Resistance (AMR) Data | ARO/CARD Ontology terms, MIC values, TSV | MIABIS-AMR, WHO GLASS AMR data structure | KB - MB | Tracking resistance patterns, correlating genotype with phenotype. |

| Epidemiological & Clinical Metadata | CSV, TSV, JSON, REDCap exports | OBO Foundry ontologies (e.g., IDO, OBI, SNOMED CT), FHIR profiles | KB - MB | Linking genomic data to host, location, time, clinical outcome, and exposure. |

| Geospatial & Environmental Data | Shapefiles, GeoJSON, NetCDF, CSV with coordinates | Darwin Core, ENVO (Environment Ontology), OGC standards | KB - GB | Mapping disease spread, correlating outbreaks with environmental factors. |

| Phylogenetic & Phylodynamic Data | Newick, Nexus, BEAST XML, JSON (auspice) | Data derived from CORE data types with temporal & spatial metadata. | MB - GB | Inferring evolutionary relationships and transmission dynamics. |

Application Notes & Protocols

Protocol 1: FAIR-Compliant Submission of Pathogen WGS Data to Public Repositories

Objective: To submit raw and assembled pathogen sequencing data with minimal mandatory metadata to the European Nucleotide Archive (ENA), ensuring findability and reuse.

- Sample Preparation & Sequencing: Extract nucleic acid, prepare library, sequence on Illumina/PacBio/ONT platform. Generate paired-end FASTQ files.

- Data Preprocessing: Use fastp or Trimmomatic for adapter removal and quality trimming. Assess quality with FastQC.

- Assembly & Annotation: Assemble trimmed reads using SPAdes (bacteria) or iVar (viruses). Annotate assembly using Prokka or VADR.

- Metadata Curation: Prepare two metadata files:

- Sample Checklist: Complete the ENA pathogen checklist (aligned with MIxS), including: isolate name, host, collection date/location, isolation source.

- Experiment & Run Information: Specify library layout, instrument, sequencing protocol.

- Submission via Webin-CLI: Validate, then submit, the sequence files and their metadata using ENA's Webin-CLI tool.

- Output: Receipt of ENA study (PRJEB...), sample (ERS...), experiment (ERX...), run (ERR...), and assembly (GCA_...) accession numbers for persistent citation.
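The Webin-CLI submission step is driven by a plain-text manifest. The sketch below generates a reads manifest and the matching validation command. The field names and flags follow the Webin-CLI reads context as commonly documented, but they should be checked against the current ENA documentation; the accessions, credentials, instrument, and file names are placeholders.

```python
def reads_manifest(study, sample, name, instrument, fastq_files):
    """Return the text of a Webin-CLI manifest for a raw reads submission."""
    entries = [
        ("STUDY", study),
        ("SAMPLE", sample),
        ("NAME", name),
        ("INSTRUMENT", instrument),
        ("LIBRARY_SOURCE", "GENOMIC"),
        ("LIBRARY_SELECTION", "RANDOM"),
        ("LIBRARY_STRATEGY", "WGS"),
    ]
    entries += [("FASTQ", path) for path in fastq_files]  # one line per file
    return "".join(f"{field}\t{value}\n" for field, value in entries)


def webin_cli_command(manifest_path, username, password, mode="-validate"):
    """Build the Webin-CLI call; use mode="-submit" once validation passes."""
    return [
        "java", "-jar", "webin-cli.jar",
        "-context", "reads",
        "-manifest", manifest_path,
        "-userName", username,
        "-password", password,  # read from a secret store in practice, never hard-code
        mode,
    ]


# Placeholder accessions and file names, for illustration only.
manifest_text = reads_manifest(
    study="PRJEB00000",
    sample="ERS0000000",
    name="isolate01_wgs",
    instrument="Illumina MiSeq",
    fastq_files=["isolate01_R1.fastq.gz", "isolate01_R2.fastq.gz"],
)
command = webin_cli_command("manifest.txt", "Webin-00000", "PASSWORD")
print(manifest_text)
print(" ".join(command))
```

Validating first and submitting second keeps malformed metadata out of the archive and makes the returned accessions easier to trace to a specific manifest.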

Protocol 2: Integrated Analysis of Cross-Species AMR Outbreak Data

Objective: To identify shared AMR genes and putative transmission clusters from WGS data of bacterial isolates collected from humans, animals, and the environment during an outbreak.

- Data Retrieval: Download relevant FASTQ or assembled genomes from repositories using accessions. Ensure data use agreements are respected.

- Uniform AMR Gene Detection: Process all samples through the AMRFinderPlus tool with a standardized database.

- Core Genome Multilocus Sequence Typing (cgMLST): Use a species-specific scheme (e.g., in chewBBACA or EnteroBase) to determine high-resolution sequence types and assess genetic relatedness.

- Phylogenetic Inference: Align core genome SNPs using Snippy or ParSNP. Build a maximum-likelihood tree with IQ-TREE.

- Integration & Visualization: Integrate AMR genotypes (from Step 2), cgMLST clusters, epidemiological metadata (location, host species), and phylogeny in a unified visualization using Microreact or Phandango.

- Interpretation: Identify AMR genes common across host species. Define transmission clusters based on combined genetic distance (e.g., ≤10 cgMLST allele differences) and epidemiological links.
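The genetic part of the cluster definition can be sketched as single-linkage clustering on pairwise cgMLST allele differences. The example below is a minimal illustration with invented five-locus profiles and a threshold of 1 so the behaviour is easy to follow; a real scheme has thousands of loci, and the threshold (for example, 10) is species- and scheme-specific. Epidemiological links still need to be assessed separately.

```python
from itertools import combinations


def allele_distance(profile_a, profile_b):
    """Count loci with different alleles, skipping loci missing (None) in either profile."""
    return sum(
        1
        for a, b in zip(profile_a, profile_b)
        if a is not None and b is not None and a != b
    )


def single_linkage_clusters(profiles, threshold=10):
    """Group isolates connected by chains of pairwise distances <= threshold."""
    parent = {name: name for name in profiles}

    def find(name):
        # Union-find lookup with path halving.
        while parent[name] != name:
            parent[name] = parent[parent[name]]
            name = parent[name]
        return name

    for a, b in combinations(profiles, 2):
        if allele_distance(profiles[a], profiles[b]) <= threshold:
            parent[find(a)] = find(b)

    clusters = {}
    for name in profiles:
        clusters.setdefault(find(name), []).append(name)
    return sorted((sorted(members) for members in clusters.values()), key=lambda c: c[0])


# Invented allele profiles (one allele number per locus).
profiles = {
    "human_01": [1, 1, 1, 1, 1],
    "cattle_01": [1, 1, 1, 1, 2],
    "water_01": [1, 1, 1, 2, 2],
    "poultry_01": [5, 6, 7, 8, 9],
}
clusters = single_linkage_clusters(profiles, threshold=1)
print(clusters)
```

Note that single linkage chains isolates together: human_01 and water_01 differ at two loci but end up in the same cluster through cattle_01, which is why cluster calls should be read alongside the phylogeny and the epidemiological metadata.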

Visualization of Ecosystem Relationships & Workflows

Diagram 1: Stakeholder Data Flow in One Health Genomics

Diagram 2: FAIR Data Integration Workflow for Outbreak Analysis

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents and Materials for One Health Genomic Surveillance

| Item | Function/Application | Example Product/Kit |

|---|---|---|

| Cross-Kingdom Nucleic Acid Extraction Kits | Efficiently extracts DNA/RNA from diverse matrices: tissue, feces, soil, water. Essential for standardized metagenomics. | QIAamp DNA/RNA Mini Kit (Qiagen), ZymoBIOMICS DNA/RNA Miniprep Kit. |

| Targeted Enrichment Probes (Pan-pathogen) | Enriches for pathogen sequences in complex host/environmental backgrounds, increasing sensitivity. | Twist Comprehensive Viral Research Panel, ViroCap. |

| High-Throughput Sequencing Reagents | Provides the chemistry for generating raw sequencing data on major platforms. | Illumina NovaSeq 6000 Reagent Kits, Oxford Nanopore Ligation Sequencing Kit. |

| Positive Control Reference Materials | Acts as a quantified, characterized control for assay validation and inter-lab comparison. | ATCC Microbiome Standard, Exactmer RNA/DNA Reference Materials. |

| Bioinformatics Pipeline Software | Containerized, standardized analysis suites for reproducible data processing. | nf-core pipelines (e.g., nf-core/mag, nf-core/sarek), CZ ID Cloud. |

| Ontology and Metadata Curation Tools | Aids in annotating samples with controlled vocabulary terms for interoperability. | OLS (Ontology Lookup Service) API, ezTag for MIxS. |

Application Note 001: Quantifying Data Silos in One Health Genomic Repositories

The proliferation of specialized, independently managed databases in One Health genomics creates significant data silos. These silos impede cross-species and cross-domain analysis, directly contradicting the FAIR (Findable, Accessible, Interoperable, Reusable) principles. The following table quantifies the scale and isolation of key public data repositories.

Table 1: Scale and Isolation Metrics of Major One Health Genomic Data Repositories

| Repository Name | Primary Domain | Estimated Records (as of 2024) | Unique, Non-Standardized Metadata Fields | Public API Availability | Cross-Reference to Other Silos (Avg. Links per Record) |

|---|---|---|---|---|---|

| NCBI GenBank | Human & Pathogen Genomics | >250 million sequences | ~15% (e.g., host health status, collection location variants) | Yes (E-utilities) | 2.1 |

| ENA (European Nucleotide Archive) | All Domains | ~50 Petabases of data | ~20% (focus on environmental sample context) | Yes (JSON/XML) | 1.8 |

| GISAID | Viral Pathogen (e.g., Influenza, SARS-CoV-2) | ~17 million sequences | High - proprietary clinical & patient metadata schema | Restricted API | 0.9 |

| PATRIC | Bacterial Pathogens | ~2 million genomes | ~25% (antibiotic resistance phenotypes) | Yes | 3.0 |

| VetMetagen | Animal Microbiome | ~500,000 samples | Very High - animal husbandry-specific terms | No (web portal only) | 0.5 |

| One Health Commission Curated Listings | Aggregated Resources | ~300 linked resources | Extreme heterogeneity | No | N/A |

Experimental Protocol 1.1: Assessing Interoperability via Metadata Field Mapping

Objective: To quantify the interoperability gap between two genomic data silos by mapping their core metadata fields to a common standard (e.g., Darwin Core, INSDC checklist).

Materials:

- Metadata manifests from two repositories (e.g., 1000 random samples each from GISAID and VetMetagen).

- Controlled vocabulary references (e.g., SNOMED CT, ENVO, OBI).

- Semantic mapping tool (e.g., OXFORDSemanticMapper, manual spreadsheet).

Procedure:

- Extraction: Programmatically extract all metadata fields and values for the selected samples from each repository's API or download portal.

- Normalization: Convert all field names to a common case (e.g., lower_snake_case). Identify fields describing the same conceptual entity (e.g., collection_date, sample_collection_date, date).

- Mapping: For each consolidated field, attempt to map its values to a term in a relevant controlled vocabulary. Document instances where:

- A direct match exists.

- A match requires value transformation (e.g., "Jan 5, 2023" to "2023-01-05").

- No suitable term exists (proprietary or local jargon).

- Calculation: Compute the "Interoperability Score" as: (Number of fields directly mappable to a standard / Total number of unique consolidated fields) * 100.

- Analysis: The lower the score, the greater the semantic silo effect, necessitating more complex integration workflows.
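The normalization and calculation steps can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the synonym table, the sample field names, and the set of "directly mappable" fields are all hypothetical and would come from the manual mapping exercise in practice.

```python
import re

def to_snake_case(name: str) -> str:
    """Normalize names such as 'patientStatus' or 'Collection Date' to lower_snake_case."""
    name = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", name.strip())  # split camelCase
    name = re.sub(r"[^A-Za-z0-9]+", "_", name)                    # spaces, dashes, dots
    return name.strip("_").lower()

# Hypothetical synonym table: normalized field name -> consolidated concept.
SYNONYMS = {
    "collection_date": "collection_date",
    "sample_collection_date": "collection_date",
    "date": "collection_date",
    "host": "host_species",
    "host_species": "host_species",
}

def consolidate(fields):
    """Return the set of unique consolidated field names."""
    return {SYNONYMS.get(n, n) for n in (to_snake_case(f) for f in fields)}

def interoperability_score(consolidated, directly_mappable):
    """(fields directly mappable to a standard / unique consolidated fields) * 100."""
    if not consolidated:
        raise ValueError("no fields to score")
    hits = len(set(consolidated) & set(directly_mappable))
    return 100.0 * hits / len(consolidated)

# Illustrative field lists from two repositories (invented for this example).
fields_a = ["Collection Date", "Host", "patientStatus"]
fields_b = ["sample_collection_date", "host_species", "farm-id"]

consolidated = consolidate(fields_a + fields_b)
score = interoperability_score(consolidated, {"collection_date", "host_species"})
print(sorted(consolidated), score)
```

Here two of the four consolidated fields map directly to a standard, so the score is 50.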

Title: Workflow for Metadata Interoperability Assessment

Application Note 002: Technical and Procedural Integration Challenges

Beyond the existence of silos, the integration process itself faces technical and governance hurdles. These challenges prevent the seamless data flow required for holistic One Health analysis.

Table 2: Technical & Procedural Integration Challenges in One Health Genomics

| Challenge Category | Specific Issue | Prevalence (Survey of 50 Research Groups) | Impact on FAIR Principles |

|---|---|---|---|

| Technical Heterogeneity | Incompatible APIs (SOAP vs. REST, differing authentication) | 92% | Accessibility, Interoperability |

| Technical Heterogeneity | Disparate data formats (FASTQ, BAM, proprietary .raw) | 88% | Interoperability, Reusability |

| Semantic Heterogeneity | Inconsistent use of ontologies (e.g., disease, phenotype) | 98% | Interoperability, Reusability |

| Semantic Heterogeneity | Local/institutional metadata schemas | 85% | Findability, Interoperability |

| Governance & Policy | Differing data access & sharing agreements (GDPR vs. Nagoya) | 95% | Accessibility |

| Governance & Policy | Lack of standardized Material Transfer Agreements (MTAs) for data | 78% | Accessibility, Reusability |

| Resource Constraints | Computational burden of data harmonization | 90% | Accessibility, Reusability |

| Resource Constraints | Lack of bioinformatics expertise for integration tasks | 82% | All FAIR Principles |

Experimental Protocol 2.1: Benchmarking Cross-Silo Query Performance

Objective: To empirically measure the time and computational resource cost of executing a federated query across multiple genomic data silos compared to a query on a pre-integrated warehouse.

Materials:

- Query: "Retrieve all Salmonella enterica genome assemblies from cattle hosts with associated antibiotic resistance phenotype 'tetracycline resistant'".

- Target Silos: NCBI Pathogen Detection, PATRIC, ENA.

- Pre-integrated warehouse: A local knowledge graph integrating the above sources.

- Compute infrastructure: 8-core CPU, 32GB RAM server.

Procedure:

- Federated Query Setup: Develop individual query scripts for each silo's API, transforming the core query into the respective query language. Develop a master script to execute sub-queries in parallel, merge results, and deduplicate records.

- Warehouse Query Setup: Formulate a single query (e.g., in SPARQL for a knowledge graph, SQL for a warehouse) against the pre-integrated resource.

- Execution: Run each query method 10 times, recording:

- Total wall-clock time.

- CPU time.

- Volume of intermediate data downloaded.

- Manual effort required for result harmonization (in person-minutes).

- Analysis: Compare mean execution times and resource consumption. The performance gap highlights the efficiency cost of siloed architectures.
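A small timing harness along the following lines could record the wall-clock and CPU measurements described in the execution step. It is a sketch under stated assumptions: `federated_query` and `warehouse_query` are placeholder functions standing in for the real query scripts, and `time.process_time` only counts CPU time of the calling process, so time spent waiting on remote APIs appears in the wall-clock figure only.

```python
import statistics
import time

def benchmark(query_fn, runs=10):
    """Run query_fn `runs` times and summarize wall-clock and CPU time in seconds."""
    wall, cpu = [], []
    for _ in range(runs):
        wall_start, cpu_start = time.perf_counter(), time.process_time()
        query_fn()
        wall.append(time.perf_counter() - wall_start)
        cpu.append(time.process_time() - cpu_start)
    return {
        "runs": runs,
        "wall_mean_s": statistics.mean(wall),
        "wall_stdev_s": statistics.stdev(wall) if runs > 1 else 0.0,
        "cpu_mean_s": statistics.mean(cpu),
    }

# Placeholder workloads; replace with the real federated and warehouse queries.
def federated_query():
    sum(i * i for i in range(20000))

def warehouse_query():
    sum(range(2000))

federated = benchmark(federated_query)
warehouse = benchmark(warehouse_query)
print(federated)
print(warehouse)
```

Intermediate download volume and manual harmonization effort are not captured here and would need to be logged separately.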

Title: Federated vs. Warehouse Query Pathways

The Scientist's Toolkit: Research Reagent Solutions for Data Integration

Table 3: Essential Tools and Platforms for Addressing Integration Challenges

| Item Name | Category | Primary Function | Relevance to FAIR |

|---|---|---|---|

| BioPython & BioConductor | Programming Libraries | Provide parsers and modules for reading, writing, and processing diverse biological data formats (e.g., GenBank, FASTQ). | Enhances Interoperability and Reusability by handling technical heterogeneity. |

| Ontology Lookup Service (OLS) | Semantic Tool | A repository for biomedical ontologies, enabling API-based searching and mapping of terms to standardize metadata. | Critical for overcoming semantic heterogeneity, directly enabling Interoperability. |

| Galaxy Project / nf-core | Workflow Systems | Offer pre-built, shareable computational workflows that can chain together tools from different silos into a reproducible pipeline. | Promotes Reusability and mitigates resource constraint challenges. |

| LinkML (Linked Data Modeling Language) | Data Modeling Framework | A framework for creating schemas to define and standardize metadata structures, generating validation tools and transformation code. | Addresses semantic and structural heterogeneity at the source, improving Findability and Interoperability. |

| Data Use Ontology (DUO) | Governance Tool | Standardizes machine-readable codes for data use restrictions, facilitating automated compliance checking in federated queries. | Helps navigate governance challenges, improving regulated Accessibility. |

| CWL (Common Workflow Language) | Workflow Standard | An open standard for describing analysis workflows and tools in a portable, scalable, and reproducible way across platforms. | Decouples workflows from execution environments, enhancing Reusability and Interoperability. |

A Step-by-Step Framework for FAIRifying One Health Genomic Data

Application Notes and Protocols

Within the framework of a broader thesis on implementing FAIR (Findable, Accessible, Interoperable, Reusable) data principles in One Health genomics research, the adoption of standardized metadata schemas and ontologies is the foundational first step. This protocol details the selection and application of key cross-domain semantic resources, notably those from the OBO Foundry ecosystem and the EDAM ontology, to enable data integration across human, animal, and environmental health studies.

Research Reagent Solutions (Semantic Tools)

A curated list of essential resources for semantic annotation and data structuring in One Health genomics.

| Item / Resource | Function in Protocol |

|---|---|

| OBO Foundry Registry | A curated portal to find, evaluate, and select interoperable, open biological and biomedical ontologies (e.g., GO, OBI, ENVO). |

| EDAM Ontology | A comprehensive ontology of bioscientific data analysis and data management concepts, tools, and formats. Critical for workflow annotation. |

| Ontology Lookup Service (OLS) | A repository for browsing, searching, and visualizing ontologies. Used for identifying and validating ontology terms. |

| ROBOT Tool | A command-line tool for automating ontology development, validation, and processing tasks (e.g., merging, reasoning). |

| Protégé Desktop Software | An open-source platform to view, edit, and reason over ontology files in OWL or RDF formats. |

Protocol 1: Selecting and Mapping Ontologies for a One Health Genomics Study

Objective: To establish a coherent set of ontology terms for annotating metadata from a multi-omics study investigating antimicrobial resistance (AMR) at a human-livestock interface.

Materials:

- Computing device with internet access.

- Spreadsheet software or a dedicated metadata curation tool (e.g., CEDAR).

- List of core data entities requiring annotation (e.g., host species, sample type, assay, pathogen, phenotype).

Methodology:

- Entity Listing: Enumerate all key variables and concepts from the experimental design. Example: Sample: Bovine fecal swab; Assay: Whole Genome Sequencing; Measured Trait: Presence of blaCTX-M-15 gene.

- Ontology Discovery: For each concept, query the OBO Foundry website and the EBI OLS.

- For Bovine: Search OLS for "cow" or "Bos taurus." Select the NCBI Taxonomy Ontology term NCBITaxon:9913.

- For fecal swab: Search for "specimen" or "swab." Map to the Ontology for Biomedical Investigations (OBI) term OBI:0001479 (specimen from organism).

- For Whole Genome Sequencing: Search EDAM ontology via its dedicated portal. Map to EDAM:topic_3690 (Whole genome sequencing) and EDAM:operation_2945 (Sequence assembly).

- For Antimicrobial Resistance Phenotype: Search the OBO Foundry. Map to the Microbial Phenotype Ontology (MPO) term MPO:000131 (increased resistance to antibiotic).

- For blaCTX-M-15 gene: Map to the Gene Ontology (GO) molecular function term GO:0140259 (CTX-M-15 beta-lactamase activity).

- Term Validation: Use ROBOT's reason command or Protégé's reasoner (e.g., ELK) to check logical consistency of the combined set of terms.

- Metadata Table Population: Create the project's sample metadata sheet using the identified IRIs (Internationalized Resource Identifiers) in a dedicated column (e.g., sample_type_iri).
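The metadata table population step can be sketched as follows. The example expands OBO-style CURIEs into IRIs using the standard OBO PURL pattern (`http://purl.obolibrary.org/obo/PREFIX_ID`) and writes them into dedicated `*_iri` columns. The sample row and column names other than `sample_type_iri` are illustrative assumptions, and the term identifiers are copied from the protocol text and should be confirmed in OLS before use.

```python
import csv
import io

OBO_PURL = "http://purl.obolibrary.org/obo/"

def obo_curie_to_iri(curie: str) -> str:
    """Expand an OBO CURIE such as 'NCBITaxon:9913' into its PURL form."""
    prefix, sep, local_id = curie.partition(":")
    if not sep or not prefix or not local_id:
        raise ValueError(f"not a CURIE: {curie!r}")
    return f"{OBO_PURL}{prefix}_{local_id}"

# Hypothetical sample sheet row; identifiers are taken from the protocol text.
rows = [
    {
        "sample_id": "S001",
        "host_species": "Bos taurus",
        "host_species_curie": "NCBITaxon:9913",
        "sample_type": "fecal swab",
        "sample_type_curie": "OBI:0001479",
    },
]

for row in rows:
    row["host_species_iri"] = obo_curie_to_iri(row["host_species_curie"])
    row["sample_type_iri"] = obo_curie_to_iri(row["sample_type_curie"])

# Write the sheet to an in-memory CSV; swap io.StringIO for a real file as needed.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
writer.writeheader()
writer.writerows(rows)
metadata_csv = buffer.getvalue()
print(metadata_csv)
```

EDAM terms do not follow the OBO PURL pattern; their IRIs live under `http://edamontology.org/`, so they need a separate expansion rule.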

Protocol 2: Annotating a Bioinformatics Workflow with EDAM

Objective: To formally describe a genomic analysis workflow using EDAM terms, enhancing reproducibility and tool discovery.

Materials:

- Written description of the bioinformatics pipeline steps.

- EDAM ontology browser (https://edamontology.org/page).

Methodology:

- Workflow Decomposition: Break down the pipeline into discrete steps (e.g., Quality Control, Read Assembly, Gene Annotation, Variant Calling).

- EDAM Concept Mapping: For each step, identify relevant EDAM concepts:

- Operation: The analytical function (e.g., "Sequence trimming" maps to EDAM:operation_0293).

- Topic: The scientific domain (e.g., "Sequence assembly" maps to EDAM:topic_0091).

- Input & Output Data: The format and type of data (e.g., "FastQ file" maps to EDAM:format_1930; "Sequence assembly" maps to EDAM:data_0924).

- Annotation File Creation: Document the mapping in a machine-readable JSON-LD or CWL (Common Workflow Language) file, linking each workflow component to its EDAM IRI.
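One possible shape for the annotation file is a small JSON-LD document in which each workflow step points at its EDAM terms. The sketch below is an assumption-laden illustration: the `wf:` property names live under a made-up `example.org` namespace rather than an established vocabulary, the step list is hypothetical, and the EDAM identifiers are copied from the examples in this protocol and should be checked in the EDAM browser.

```python
import json

EDAM_NS = "http://edamontology.org/"

# Hypothetical step list; EDAM identifiers are taken from the protocol text.
WORKFLOW_STEPS = [
    {"name": "Quality Control", "operation": "operation_0293", "input_format": "format_1930"},
    {"name": "Read Assembly", "topic": "topic_0091", "input_format": "format_1930",
     "output_data": "data_0924"},
]

# The wf: properties are invented for illustration (example.org namespace).
CONTEXT = {
    "edam": EDAM_NS,
    "wf": "https://example.org/workflow#",
    "name": "http://schema.org/name",
    "operation": {"@id": "wf:operation", "@type": "@id"},
    "topic": {"@id": "wf:topic", "@type": "@id"},
    "input_format": {"@id": "wf:inputFormat", "@type": "@id"},
    "output_data": {"@id": "wf:outputData", "@type": "@id"},
}

def build_annotation(steps):
    """Return a JSON-LD document linking each workflow step to EDAM compact IRIs."""
    graph = []
    for index, step in enumerate(steps, start=1):
        node = {"@id": f"#step-{index}", "name": step["name"]}
        for key, term in step.items():
            if key != "name":
                node[key] = f"edam:{term}"  # expands to EDAM_NS + term via @context
        graph.append(node)
    return {"@context": CONTEXT, "@graph": graph}

annotation = build_annotation(WORKFLOW_STEPS)
annotation_json = json.dumps(annotation, indent=2)
print(annotation_json)
```

A CWL description could carry the same links instead; JSON-LD is used here only because it needs no extra tooling.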

Table 1: Coverage of Core One Health Concepts in Selected OBO Foundry Ontologies.

| Ontology Name (Acronym) | Domain Focus | Number of Terms (Approx.) | Example Term for One Health | Term IRI |

|---|---|---|---|---|

| Environment Ontology (ENVO) | Biomes, environmental features | ~7,000 | Wastewater | ENVO:00002013 |

| Phenotype And Trait Ontology (PATO) | Phenotypic qualities | ~3,000 | Increased severity | PATO:0002252 |

| NCBI Taxonomy (NCBITaxon) | Organism classification | >2M | Homo sapiens | NCBITaxon:9606 |

| Infectious Disease Ontology (IDO) | Infectious diseases | ~1,500 | Antimicrobial resistance disposition | IDO:0000591 |

| Gene Ontology (GO) | Molecular functions, processes | ~45,000 | Antibiotic catabolic process | GO:0017001 |

Table 2: EDAM Ontology Top-Level Branch Statistics.

| EDAM Top-Level Branch | Number of Concepts | Core Use Case in Genomics |

|---|---|---|

| Operation | ~1,400 | Describes functions/processes (e.g., Sequence alignment). |

| Topic | ~900 | Describes the scientific domain (e.g., Metagenomics). |

| Data | ~900 | Describes types of data (e.g., Sequence alignment map). |

| Format | ~700 | Describes data formats (e.g., FASTA format). |

Visualization of Ontology Integration Workflow

Diagram 1: Ontology Mapping for FAIR One Health Metadata

Diagram 2: EDAM Annotation of a Genomics Pipeline

Within the FAIR (Findable, Accessible, Interoperable, Reusable) data ecosystem for One Health genomics research, Persistent Identifiers (PIDs) and rich metadata are the foundational pillars for findability. This principle ensures that datasets from integrated human, animal, and environmental studies are uniquely and permanently identifiable, and are described with sufficient detail to be discovered by both humans and computational agents. This application note outlines protocols and best practices for implementing PIDs and crafting rich metadata schemas to maximize data discovery across disciplinary boundaries.

Core Concepts and Current Landscape

Persistent Identifiers (PIDs)

PIDs are long-lasting references to digital objects that remain stable even if the object's location changes. In One Health genomics, they are applied to datasets, samples, authors, instruments, and grants.

Table 1: Common PID Systems in Life Sciences

| PID Type | Example | Resolver URL | Primary Use in One Health Genomics |

|---|---|---|---|

| Digital Object Identifier (DOI) | 10.5072/example-xyz | https://doi.org | Citing and linking to published datasets in repositories. |

| Archival Resource Key (ARK) | ark:/13030/m5br8st1 | https://n2t.net | Identifying samples and specimens within biobanks. |

| ORCID iD | 0000-0002-1825-0097 | https://orcid.org | Uniquely identifying researchers across systems. |

| Research Organization Registry (ROR) | https://ror.org/05k73za52 | https://ror.org | Identifying affiliated institutions. |

| PubMed ID (PMID) | 12345678 | https://pubmed.ncbi.nlm.nih.gov | Linking datasets to peer-reviewed literature. |

Rich Metadata

Metadata is structured information that describes, explains, locates, or otherwise makes a resource easier to retrieve, use, or manage. Rich metadata goes beyond basic titles and creators to include detailed experimental, biological, and methodological context.

Table 2: Essential Metadata Elements for One Health Genomics Datasets

| Category | Element | Description | Recommended Standard/Vocabulary |

|---|---|---|---|

| Administrative | Creator, Publisher, License | Attribution and usage rights. | DataCite Metadata Schema, Dublin Core |

| Descriptive | Title, Description, Keywords | Human-readable discovery. | ENVO (environment), NCBITaxon (species), DOID (disease) |

| Structural | File Format, Size, Version | Technical characteristics. | EDAM, Bioschemas |

| Contextual (One Health) | Host Species, Pathogen, Sample Type, Geographic Location, Collection Date | Critical for cross-domain integration. | OBI (sample), GAZ (location), PHI-base (pathogen-host interaction) |

Application Protocols

Protocol 1: Minting a PID for a New Genomics Dataset

Objective: To assign a globally unique, persistent identifier to a dataset prior to public deposition.

Materials: Finalized dataset, metadata spreadsheet, institutional login credentials for a data repository.

Procedure:

1. Repository Selection: Choose a FAIR-aligned repository (e.g., ENA, SRA, Zenodo, institutional repository) that mints DOIs or other PIDs.

2. Metadata Preparation: Complete the repository's submission form using the rich metadata schema outlined in Table 2. Prioritize controlled vocabulary terms.

3. Dataset Upload: Transfer dataset files via FTP, API, or web interface as per repository guidelines.

4. Private PID Generation: Upon submission, the repository will typically provide a private accession number or draft DOI for curation.

5. Curation & Validation: Respond to any queries from repository curators. Ensure metadata accurately reflects the data.

6. Public PID Minting: After final approval, the repository publicly mints the PID (e.g., DOI). This PID is now the canonical citation link.

Protocol 2: Creating a Machine-Actionable Metadata Record

Objective: To generate a metadata record that is both human-readable and machine-parsable for automated discovery.

Materials: Experimental protocol, data dictionary, codebook.

Procedure:

1. Schema Selection: Adopt a formal metadata schema (e.g., DataCite, ISA-Tab, MIxS standards from GSC).

2. Element Population: For each schema element, provide the most granular information possible.

- Use PIDs where applicable: Link to ORCID iDs (creators), ROR IDs (affiliations), BioSample IDs.

- Use Ontology Terms: For fields like "disease," "tissue," or "environmental medium," provide the term's unique URI (e.g., http://purl.obolibrary.org/obo/ENVO_01001516 for "wastewater").

3. Serialization: Convert the filled schema into a machine-readable format such as JSON-LD, RDF/XML, or Turtle. Many repositories perform this automatically upon web form entry.

4. Validation: Use schema validators (e.g., GoFAIR's METS, ISA-Tab validator) to ensure syntactic and semantic correctness.

5. Publication & Linking: Publish the metadata record alongside the dataset, ensuring it is linked via the dataset's PID.
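As a rough illustration of the population and serialization steps, the sketch below builds a schema.org `Dataset` record as JSON-LD and checks that core elements are present. It assumes schema.org as the vocabulary (the protocol also allows DataCite, ISA-Tab, or MIxS), reuses the example DOI, ORCID iD, and ROR ID from Table 1 and the ENVO URI quoted in step 2, and uses an invented title, description, and creator name.

```python
import json

REQUIRED_FIELDS = ("name", "description", "identifier", "license", "creator")

def build_dataset_record(title, description, doi, creator_name, orcid, ror, license_url, about_iris):
    """Assemble a schema.org Dataset record with PIDs for dataset, creator, and affiliation."""
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "@id": f"https://doi.org/{doi}",
        "name": title,
        "description": description,
        "identifier": f"https://doi.org/{doi}",
        "license": license_url,
        "creator": {
            "@type": "Person",
            "@id": f"https://orcid.org/{orcid}",
            "name": creator_name,
            "affiliation": {"@type": "Organization", "@id": ror},
        },
        # Ontology terms describing the subject matter, given as IRIs.
        "about": [{"@id": iri} for iri in about_iris],
    }

def missing_fields(record):
    """Return the required fields that are absent or empty."""
    return [f for f in REQUIRED_FIELDS if not record.get(f)]

record = build_dataset_record(
    title="Wastewater AMR metagenomes (illustrative)",        # invented example values
    description="Shotgun metagenomes from municipal wastewater.",
    doi="10.5072/example-xyz",
    creator_name="Example Researcher",
    orcid="0000-0002-1825-0097",
    ror="https://ror.org/05k73za52",
    license_url="https://creativecommons.org/licenses/by/4.0/",
    about_iris=["http://purl.obolibrary.org/obo/ENVO_01001516"],
)
record_json = json.dumps(record, indent=2)
print(missing_fields(record))
```

This presence check is far weaker than validation against the full schema; it only catches empty core elements before the record goes to a proper validator.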

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for PID and Metadata Management

| Item | Function | Example Tools/Services |

|---|---|---|

| PID Service | Mints and manages persistent identifiers. | DataCite, Crossref, EZID |

| Metadata Schema | Provides the structural framework for description. | DataCite Schema, ISA Model, MIxS (GSC) |

| Ontology Browser | Finds standardized vocabulary terms (URIs). | OLS, BioPortal, Ontobee |

| Metadata Editor | Assists in creating and validating metadata files. | ISAcreator, CEDAR Workbench, repo submission forms |

| Metadata Validator | Checks compliance with chosen schema. | GoFAIR METS, JSON-LD Playground, ISA-Tab validator |

| Repository Finder | Identifies appropriate repositories for data deposition. | re3data, FAIRsharing |

Visualizing the PID and Metadata Ecosystem

Diagram Title: PID and Metadata Flow for Data Discovery

Within a One Health genomics framework—integrating human, animal, and environmental data—the FAIR principles (Findable, Accessible, Interoperable, Reusable) are paramount. This application note addresses the critical third step: designing data accessibility that balances the inherent openness required for collaborative, cross-sectoral research with the stringent ethical, privacy, and security controls demanded by genomic and health data. True accessibility is not merely about being "open"; it is about providing structured, secure, and ethically compliant pathways to data.

Quantitative Landscape: Current Practices & Challenges

Table 1: Prevalence of Data Access Controls in Public Genomic Repositories (2023-2024)

| Repository / Platform | Primary Data Type | Open Access (No Login) | Registered Access (Basic Login) | Managed/Controlled Access (Review Process) | Embargo Period Options |

|---|---|---|---|---|---|

| NCBI SRA | Raw Sequencing | 72% | 28% (Bulk Data) | <1% (for sensitive human data) | Yes |

| ENA | Raw Sequencing | 85% | 15% | <1% | Yes |

| dbGaP | Phenotype+Genotype | 0% | 0% | 100% | Optional |

| EGA | Sensitive Genomics | 0% | 0% | 100% | Yes |

| BV-BRC | Pathogen Genomics | 89% | 11% (Tool Access) | 1% (Select Agents) | Yes |

Table 2: Researcher-Reported Barriers to Accessing Managed Data (Survey, n=450)

| Barrier Category | Specific Issue | Percentage Reporting as "Major Hurdle" |

|---|---|---|

| Procedural | Lengthy approval process (>30 days) | 67% |

| Procedural | Lack of clarity in application requirements | 58% |

| Technical | Difficulties in secure data transfer | 42% |

| Technical | Incompatible computing environments | 39% |

| Legal/Ethical | Navigating complex Data Use Agreements (DUAs) | 71% |

| Legal/Ethical | Institutional signing delays for DUAs | 65% |

Core Protocols for Implementing Balanced Access

Protocol 3.1: Establishing a Tiered Data Access Framework

Objective: To create a standardized, risk-based classification system for One Health genomics datasets that dictates appropriate access controls.

Materials & Reagents:

- Data classification rubric (see Table 3).

- Institutional review board (IRB) or ethics committee guidelines.

- Secure, web-based platform supporting role-based access control (RBAC).

Procedure:

- Data Sensitivity Assessment:

a. For each dataset, conduct a risk assessment evaluating: (i) identifiability risk (e.g., human genomic data with phenotype = high risk; anonymized animal pathogen sequences = low risk); (ii) potential for harm (e.g., misuse of dual-use research of concern (DURC) pathogens).

b. Classify data into one of four tiers (Table 3).

- Control Mapping:

a. Map each tier to a specific access governance model:

- Tier 1 (Open): Direct download via FTP/API.

- Tier 2 (Registered): Require user registration with institutional email; track downloads.

- Tier 3 (Controlled): Implement a Data Access Committee (DAC) for review. Require a brief research proposal and DUA.

- Tier 4 (Secure/Compute): No data download allowed. Provide access only within a secure, isolated computational environment (e.g., GA4GH Passport-based login, virtual desktop with audit logs).

- Implementation:

a. Configure the data repository's RBAC system according to the tier mapping.

b. For Tiers 3 & 4, establish clear, publicly accessible DAC governance documents and application forms.

Table 3: Tiered Data Classification for One Health Genomics

| Tier | Description | Example | Recommended Access Model | Average Approval Time Goal |

|---|---|---|---|---|

| 1 | Public, non-sensitive | Assembled, non-DURC pathogen genomes, environmental metagenomic aggregates | Open Download | Immediate |

| 2 | Low-risk sensitive | Non-identifiable animal health metadata, de-identified microbiome data | Registered Access | < 24 hours |

| 3 | Identifiable or moderately sensitive | Human genomic variants with basic demographics, DURC pathogen data with location | Managed Access (DAC Review) | < 30 days |

| 4 | Highly sensitive | Integrated human+clinical+location data, detailed outbreak surveillance data with identifiers | Secure Compute Environment | < 30 days + technical setup |
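The tier assignment can be expressed as a simple rule cascade, checked from the most to the least restrictive tier. The sketch below is one possible encoding, not a validated rubric: the four boolean risk attributes and the order of the rules are assumptions chosen to mirror the examples in Table 3, and a real assessment would involve the ethics committee.

```python
from dataclasses import dataclass

# Access model per tier, as listed in Table 3.
ACCESS_MODEL = {
    1: "Open Download",
    2: "Registered Access",
    3: "Managed Access (DAC Review)",
    4: "Secure Compute Environment",
}

@dataclass(frozen=True)
class DatasetRisk:
    """Hypothetical risk attributes recorded during the sensitivity assessment."""
    linked_clinical_and_location: bool = False  # integrated human + clinical + location data
    identifiable_human_data: bool = False       # e.g., human variants with demographics
    durc_pathogen: bool = False                 # dual-use research of concern
    sensitive_metadata: bool = False            # e.g., de-identified microbiome data

def classify(risk: DatasetRisk) -> int:
    """Return the access tier (1-4), testing the most restrictive conditions first."""
    if risk.linked_clinical_and_location:
        return 4
    if risk.identifiable_human_data or risk.durc_pathogen:
        return 3
    if risk.sensitive_metadata:
        return 2
    return 1

environmental_aggregate = DatasetRisk()
durc_with_location = DatasetRisk(durc_pathogen=True)
outbreak_line_list = DatasetRisk(linked_clinical_and_location=True, identifiable_human_data=True)

for ds in (environmental_aggregate, durc_with_location, outbreak_line_list):
    tier = classify(ds)
    print(tier, ACCESS_MODEL[tier])
```

Checking the strictest rule first means a dataset with several risk flags always lands in the highest applicable tier.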

Diagram Title: Tiered Data Access Control Workflow

Protocol 3.2: Automated Data Use Agreement (DUA) Compliance Checking

Objective: To expedite the DUA negotiation process for Tier 3 data using machine-readable agreements and automated compliance scoring.

Materials:

- GA4GH Data Use Ontology (DUO) codes.

- Machine-readable DUA template (e.g., in JSON schema).

- DUA management platform with API (e.g., REMS, Ledger).

Procedure:

- Tag Datasets with DUO Codes: During metadata submission, data submitters must tag datasets with relevant DUO codes (e.g., DUO:0000011 = "population origins or ancestry research", DUO:0000006 = "health or medical or biomedical research").

- Researcher Application Profiling: In the access application, researchers describe their project. The system maps this description to requested DUO codes.

- Automated Matching Engine:

a. The system compares dataset DUO codes (D_set) with researcher-requested DUO codes (R_req) and their approved DUO permissions (R_perm) from their institution.

b. An algorithmic check runs: IF (R_req ∩ D_set) ⊆ R_perm THEN "Preliminary Match" ELSE "Flag for DAC Review".

c. A compatibility score (e.g., 95% match) is generated for the DAC to expedite final review.

- Digital Signing & Tracking: Upon approval, a standardized, machine-readable DUA is generated for electronic signing. All usage is logged against the DUA's unique ID.
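The matching rule maps directly onto set operations. The following sketch implements the stated check with two labeled additions that are assumptions rather than part of the protocol: an empty overlap between requested and dataset codes is flagged for review (otherwise the subset test would pass trivially), and the compatibility score is defined here as the share of requested codes that the dataset permits.

```python
def preliminary_match(d_set, r_req, r_perm):
    """Apply: IF (R_req ∩ D_set) ⊆ R_perm THEN match ELSE flag for DAC review."""
    overlap = r_req & d_set
    if not overlap:
        # Assumption: no shared codes means nothing was matched, so escalate.
        return "Flag for DAC Review"
    return "Preliminary Match" if overlap <= r_perm else "Flag for DAC Review"

def compatibility_score(d_set, r_req):
    """Hypothetical score: percentage of requested codes permitted by the dataset."""
    if not r_req:
        return 0.0
    return 100.0 * len(r_req & d_set) / len(r_req)

# Illustrative code sets (DUO identifiers only; labels omitted on purpose).
dataset_codes = {"DUO:0000011", "DUO:0000042"}
institution_permissions = {"DUO:0000011", "DUO:0000042"}

request_ok = {"DUO:0000011"}
request_unrelated = {"DUO:0000006"}

print(preliminary_match(dataset_codes, request_ok, institution_permissions))
print(preliminary_match(dataset_codes, request_unrelated, institution_permissions))
print(compatibility_score(dataset_codes, request_ok))
```

A production system would also need to respect the DUO hierarchy (a broader permission can cover a narrower request), which plain set intersection does not capture.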

Diagram Title: Automated DUA Compliance Matching System

The Scientist's Toolkit: Key Reagent Solutions

Table 4: Essential Tools for Implementing Controlled Access Systems

| Tool / Solution Category | Specific Example(s) | Function in Access Design |

|---|---|---|

| Authentication & Authorization | ELIXIR AAI, Google Identity Platform, Microsoft Entra ID | Provides federated user login, enabling researchers to use their institutional credentials across multiple repositories (Registered Access). |

| Data Access Committee (DAC) Management | REMS (Resource Entitlement Management System), DACs.eu | A platform to manage the entire lifecycle of controlled access applications: submission, review, voting, and decision communication. |

| Machine-Readable Data Use Agreements | GA4GH DUO (Data Use Ontology), ADA-M (Machine-readable DUA) | Standardized codes and formats that allow computational matching of data use restrictions to researcher purposes, automating compliance checks. |

| Secure Compute Environments | Terra (BioData Catalyst), Seven Bridges, IRON | Cloud-based workspaces where Tier 4 data can be analyzed without being downloaded to a local machine, with strict audit trails and computational governance. |

| Audit Logging & Monitoring | ELK Stack (Elasticsearch, Logstash, Kibana), Splunk | Captures all access events (who, what, when) for security monitoring, breach detection, and compliance reporting for funded projects. |

Effective accessibility in One Health genomics requires moving beyond a binary open/closed model. By implementing a risk-proportional, tiered access framework supported by protocols for automated compliance checking and standardized toolkits, data stewards can fulfill the FAIR principle of Accessibility. This ensures data is "as open as possible, as closed as necessary," fostering collaborative innovation while upholding the highest ethical and security standards critical for public trust.

Within the One Health paradigm—which integrates human, animal, and environmental health—genomics research generates vast, heterogeneous datasets. Adherence to FAIR (Findable, Accessible, Interoperable, Reusable) principles is paramount. This application note addresses the critical "I" in FAIR: Interoperability. It details the protocols for schema alignment and the implementation of common data models (CDMs) to enable seamless data integration across disparate One Health genomics platforms, thereby accelerating translational research and drug development.

Core Concepts & Quantitative Landscape

Table 1: Prevalence of Data Interoperability Challenges in One Health Genomics (2023-2024 Survey)

| Challenge Category | Percentage of Research Projects Reporting Issue | Primary Impacted Domain |

|---|---|---|

| Inconsistent Metadata Schemas | 87% | All (Human, Veterinary, Environmental) |

| Non-standard Ontology Use | 72% | Pathogen Surveillance |

| Proprietary/Closed Data Formats | 65% | Clinical Trial Data |

| Lack of Semantic Alignment | 91% | Multi-host Genomic Studies |

Table 2: Performance Metrics of Schema Alignment Techniques

| Alignment Technique | Average Precision (%) | Average Recall (%) | Computational Cost (Relative Units) | Best Suited For |

|---|---|---|---|---|

| Lexical Matching | 68 | 75 | 1 | Initial coarse alignment |

| Structural Similarity | 72 | 70 | 3 | JSON/XML schemas |

| Ontology-Based Mapping | 94 | 89 | 7 | High-value metadata fields |

| Machine Learning (Embedding) | 88 | 85 | 10 | Large, complex schemas |

Protocols for Schema Alignment & CDM Implementation

Protocol 3.1: Cross-Domain Metadata Schema Audit and Mapping

Objective: To identify semantic and structural discrepancies between source schemas and a target CDM.

Materials: Source database dumps (e.g., ENA, VetBioBank, environmental sensor APIs), Ontology tools (OLS API, Zooma), Alignment software (e.g., OpenRefine, custom Python scripts).

Procedure:

- Schema Extraction: Programmatically extract all metadata field names, data types, constraints, and descriptions from source databases.

- Lexical Normalization: Apply case-folding, punctuation removal, and stemming to all field names.

- Ontology Tagging: For each normalized field, query the OLS API with relevant ontologies (e.g., OBI, ENVO, NCI Thesaurus) to propose standard terms.

- Candidate Generation: Generate alignment candidates using a hybrid matcher (combining lexical similarity >0.8 and ontological parent-child relationships).

- Expert Curation: Present candidate mappings to domain experts (microbiologist, veterinarian, ecologist) for validation via a structured web interface. Store ratified mappings in a Mapping Registry (JSON-LD format).
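The lexical part of the candidate generation step can be approximated with the standard library. This sketch covers only case-folding, punctuation removal, and a string-similarity threshold of 0.8 using `difflib.SequenceMatcher`; stemming and the ontological parent-child check of the hybrid matcher are left out, and the field names are invented for illustration.

```python
import re
from difflib import SequenceMatcher

def normalize(name: str) -> str:
    """Split camelCase, lowercase, and replace punctuation with single spaces."""
    name = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", " ", name)
    return re.sub(r"[^a-z0-9]+", " ", name.lower()).strip()

def lexical_candidates(source_fields, target_fields, threshold=0.8):
    """Return (source, target, similarity) triples whose similarity exceeds the threshold."""
    candidates = []
    for source in source_fields:
        for target in target_fields:
            score = SequenceMatcher(None, normalize(source), normalize(target)).ratio()
            if score > threshold:
                candidates.append((source, target, round(score, 2)))
    # Highest-scoring candidates first, ready for expert review.
    return sorted(candidates, key=lambda c: -c[2])

# Hypothetical source schema fields and target CDM fields.
source_schema = ["collectionDate", "Host_Species", "geo_loc"]
target_cdm = ["collection_date", "host_species", "location"]

candidates = lexical_candidates(source_schema, target_cdm)
for source, target, score in candidates:
    print(f"{source} -> {target} ({score})")
```

Note that `geo_loc` finds no partner above the threshold even though it plainly refers to location; this is the kind of gap the ontology-based step and expert curation are meant to close.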

Protocol 3.2: Implementation of a One Health Common Data Model (OH-CDM)

Objective: To instantiate a validated, practical CDM for integrated analysis.

Materials: Mapping Registry from Protocol 3.1, Database system (PostgreSQL, GraphDB), Semantic tooling (R2RML, SDM-RDFizer), Validation suite (SHACL shapes).

Procedure:

- CDM Specification: Define the core OH-CDM structure using a layered approach:

- Core Layer: Universal entities (Project, Sample, Organism, Location, Date).

- Extension Layer: Domain-specific modules (e.g., AMR markers, zoonotic risk score, environmental covariates).

- ETL Pipeline Development: Implement R2RML (RDB to RDF Mapping Language) scripts to transform source data, guided by the Mapping Registry, into the OH-CDM RDF representation.

- Quality Enforcement: Apply SHACL (Shapes Constraint Language) shapes to validate incoming data for cardinality, data type, and value set compliance (e.g., sh:in for controlled terms like "host_health_status").

- Materialization: Load validated RDF into a triple store (GraphDB) and create optimized relational views for high-performance genomic querying.
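To make the three kinds of check in the quality enforcement step concrete, here is a plain-Python stand-in that tests required fields (cardinality), data types, and value-set membership on a single record. It is not SHACL and does not operate on RDF; the rule table, field names, and allowed values are hypothetical, and in the actual pipeline the same constraints would be written as SHACL shapes and run through an engine such as pySHACL.

```python
# Hypothetical controlled value set, playing the role of an sh:in list.
ALLOWED_HOST_HEALTH_STATUS = {"healthy", "diseased", "unknown"}

# Hypothetical rule table for core-layer fields of a sample record.
SAMPLE_RULES = {
    "sample_id": {"required": True, "type": str},
    "collection_date": {"required": True, "type": str},
    "host_health_status": {"required": False, "type": str,
                           "allowed": ALLOWED_HOST_HEALTH_STATUS},
}

def validate(record, rules=SAMPLE_RULES):
    """Return a list of human-readable violations; an empty list means the record passes."""
    violations = []
    for field, rule in rules.items():
        value = record.get(field)
        if value is None:
            if rule["required"]:
                violations.append(f"{field}: missing required value")
            continue
        if not isinstance(value, rule["type"]):
            violations.append(f"{field}: expected {rule['type'].__name__}")
            continue
        if "allowed" in rule and value not in rule["allowed"]:
            violations.append(f"{field}: {value!r} not in allowed value set")
    return violations

good = {"sample_id": "S001", "collection_date": "2023-01-05", "host_health_status": "healthy"}
bad = {"sample_id": 17, "host_health_status": "sickly"}

print(validate(good))
print(validate(bad))
```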

Protocol 3.3: Benchmarking Interoperability Gains

Objective: To quantitatively measure improvements in data integration efficiency post-CDM adoption.

Materials: Pre- and post-CDM integrated datasets, Query workload (10 complex integrative queries), Performance monitoring stack (Prometheus, Grafana).

Procedure:

- Baseline Measurement: Execute the query workload against a federated query system linking original source schemas. Record time-to-completion, query complexity (lines of code), and failure rate.

- Intervention Measurement: Execute the identical workload against the OH-CDM materialized view.

- Analysis: Calculate the improvement ratio for time-to-completion and the reduction in query complexity. Survey researchers on perceived ease of use.
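The two headline metrics in the analysis step are simple ratios. The sketch below assumes that the improvement ratio is the mean baseline time divided by the mean OH-CDM time, and that complexity reduction is the percentage drop in lines of query code; both definitions are assumptions, and the numbers are made up to show the arithmetic.

```python
from statistics import mean

def improvement_ratio(baseline_times, cdm_times):
    """Mean baseline time-to-completion divided by mean OH-CDM time-to-completion."""
    return mean(baseline_times) / mean(cdm_times)

def complexity_reduction(baseline_loc, cdm_loc):
    """Percentage reduction in query complexity, measured in lines of code."""
    return 100.0 * (baseline_loc - cdm_loc) / baseline_loc

# Illustrative measurements in seconds and lines of code (not real results).
baseline_seconds = [12.0, 8.0]
cdm_seconds = [2.0, 3.0]

ratio = improvement_ratio(baseline_seconds, cdm_seconds)
reduction = complexity_reduction(baseline_loc=120, cdm_loc=30)
print(ratio, reduction)
```

With these made-up inputs the OH-CDM queries run four times faster on average and need 75% fewer lines of query code.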

Visualization of Workflows and Relationships

Diagram 1: Schema Alignment and CDM Implementation Workflow

Diagram 2: OH-CDM Layered Structure with Extensions

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Interoperability in One Health Genomics

| Item | Function/Description | Example Product/Standard |

|---|---|---|

| Ontology Lookup Service (OLS) | Provides a unified interface to query and navigate over 200 biomedical ontologies for term mapping. | EMBL-EBI OLS API |

| R2RML Engine | A standard language for expressing customized mappings from relational databases to RDF datasets, critical for ETL to a CDM. | CARML, Morph-RDB |

| SHACL Validation Engine | Ensures transformed data conforms to the expected CDM structure, data types, and business rules. | TopBraid SHACL API, pySHACL |

| Schema Matching Library | Provides algorithmic functions (lexical, structural, semantic) to compute similarity between schema elements. | Python: schemamatch, rdflib; Java: AgreementMakerLight |

| Graph Database | A native storage and query engine for highly interconnected data, ideal for materializing the OH-CDM. | Neo4j, GraphDB (for RDF), Amazon Neptune |

| FAIR Data Point Software | A middleware solution that exposes metadata about datasets and services following FAIR principles, acting as an interoperability gateway. | FAIR Data Point (FDP) |

| Bioinformatics Workflow Manager | Orchestrates analytic pipelines across integrated data, ensuring reproducibility. | Nextflow, Snakemake, Cromwell (WDL) |

Application Notes

Within the FAIR principles, Reusability (R1) is the ultimate goal, ensuring that data and metadata are sufficiently well-described to be replicated, combined, and used in new research. For One Health genomics—which integrates human, animal, and environmental data—achieving R1 requires robust legal frameworks (licensing), detailed historical tracking (provenance), and adherence to community-sanctioned formats and vocabularies. This section provides protocols for implementing these pillars.

Licensing Frameworks for Genomic Data

Clear licensing resolves ambiguity regarding how data can be accessed, used, and redistributed. The choice of license is critical for enabling downstream reuse in both academic and commercial drug development contexts.

Table 1: Common Licenses for Genomic Data and Software

| License | Type | Key Permissions | Key Restrictions | Best For |

|---|---|---|---|---|

| Creative Commons CC-BY 4.0 | Data, Metadata | Commercial use, modification, distribution | Attribution required | Published datasets, articles |

| Creative Commons CC0 1.0 | Data, Metadata | Public domain dedication; no restrictions | None | Maximizing data integration & reuse |

| Open Database License (ODbL) | Databases | Commercial use, modification, distribution | Share-alike; attribution; keep open | Databases requiring downstream openness |

| MIT License | Software | Commercial use, modification, private use | Attribution; include original license | Software tools, pipelines |

| GNU GPLv3 | Software | Commercial use, modification | Share-alike/copyleft | Software where derivatives must remain open |

| Apache License 2.0 | Software | Commercial use, modification, patent grant | Attribution; state changes | Software with patent concerns |
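A license choice is easiest to reuse when it is recorded in machine-readable form alongside the dataset. The sketch below is one possible way to do that, keyed by SPDX identifiers for the licenses in Table 1. The `LICENSES` flags are a simplified reading of each license for illustration (not legal advice), and the helper names `license_entry` and `share_alike_licenses` are hypothetical.

```python
# SPDX identifiers for the licenses listed in Table 1. The flags are a
# simplified, illustrative summary and are not a substitute for the license text.
LICENSES = {
    "CC-BY-4.0":    {"applies_to": "data",     "attribution": True,  "share_alike": False},
    "CC0-1.0":      {"applies_to": "data",     "attribution": False, "share_alike": False},
    "ODbL-1.0":     {"applies_to": "database", "attribution": True,  "share_alike": True},
    "MIT":          {"applies_to": "software", "attribution": True,  "share_alike": False},
    "GPL-3.0-only": {"applies_to": "software", "attribution": True,  "share_alike": True},
    "Apache-2.0":   {"applies_to": "software", "attribution": True,  "share_alike": False},
}


def license_entry(spdx_id):
    """Return a metadata fragment that records the license by its SPDX URL."""
    if spdx_id not in LICENSES:
        raise ValueError(f"unknown SPDX identifier: {spdx_id}")
    return {"license": f"https://spdx.org/licenses/{spdx_id}.html", **LICENSES[spdx_id]}


def share_alike_licenses(spdx_ids):
    """List the licenses in a combined dataset that impose share-alike terms."""
    return sorted(i for i in spdx_ids if LICENSES[i]["share_alike"])


if __name__ == "__main__":
    print(license_entry("CC0-1.0"))
    print(share_alike_licenses(["CC-BY-4.0", "ODbL-1.0", "GPL-3.0-only"]))
```

Flagging share-alike components early is useful when datasets from several sources are merged, because those terms can constrain how the combined product may be released.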

Provenance Capture (Data Lineage)

Provenance documents the origin, custody, and transformations of data. It is essential for assessing quality, reproducibility, and trust, especially in complex One Health analyses.

Protocol 3.1: Capturing Computational Workflow Provenance Using RO-Crate

Objective: Package a genomic analysis workflow (e.g., pathogen variant calling) with complete provenance using the Research Object Crate (RO-Crate) standard.

- Assemble Components: Gather all input files (raw FASTQ, reference genome), software tools (versioned containers, e.g., Docker/Singularity), configuration files, and the workflow script (e.g., Nextflow, CWL).

- Create `ro-crate-metadata.json`: This is the core provenance document.

  - Describe the Crate: Use `@id` and `@type`. On the metadata descriptor, set `"conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"}`.

  - Describe Entities: For each file, tool, and dataset, add an entry with properties: `@type` (e.g., `"File"`, `"SoftwareSourceCode"`, `"ComputationalWorkflow"`), `name`, `description`, `author`, `license`, `version`.

  - Define Actions: Add a `CreateAction` (or `RunAction`) describing the workflow execution. Link it via `"object"` to input files and `"instrument"` to the software/tools. Link it via `"result"` to output files.

  - Link to People/Orgs: Use `Person` and `Organization` types for authors and funders.

- Validate: Use the RO-Crate validator (online or Python library) to ensure compliance.

- Publish: Deposit the entire RO-Crate (metadata file + data files or references) in a FAIR-compliant repository like WorkflowHub or Zenodo.
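The steps of Protocol 3.1 can be sketched with nothing more than the Python standard library. The example below is a minimal illustration, not a complete crate: the function name `build_crate`, the file names, the author, and the `#run-1` and `#author-1` identifiers are all hypothetical, and a real crate would carry richer descriptions and should still be checked with an RO-Crate validator.

```python
import json


def build_crate(workflow, inputs, outputs, author_name, license_url, date_published):
    """Return a minimal RO-Crate 1.1 metadata document as a Python dict."""
    files = [*inputs, *outputs]
    graph = [
        {   # Metadata descriptor: names this file and the specification it follows
            "@id": "ro-crate-metadata.json",
            "@type": "CreativeWork",
            "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
            "about": {"@id": "./"},
        },
        {   # Root dataset: the crate as a whole
            "@id": "./",
            "@type": "Dataset",
            "name": "Pathogen variant calling run",
            "datePublished": date_published,
            "license": {"@id": license_url},
            "author": {"@id": "#author-1"},
            "hasPart": [{"@id": p} for p in [workflow, *files]],
        },
        {"@id": "#author-1", "@type": "Person", "name": author_name},
        {
            "@id": workflow,
            "@type": ["File", "SoftwareSourceCode", "ComputationalWorkflow"],
            "name": "Variant calling workflow",
        },
    ]
    graph += [{"@id": p, "@type": "File"} for p in files]
    graph.append({  # Provenance of one execution: inputs, tool, outputs, agent
        "@id": "#run-1",
        "@type": "CreateAction",
        "instrument": {"@id": workflow},
        "object": [{"@id": p} for p in inputs],
        "result": [{"@id": p} for p in outputs],
        "agent": {"@id": "#author-1"},
    })
    return {"@context": "https://w3id.org/ro/crate/1.1/context", "@graph": graph}


if __name__ == "__main__":
    crate = build_crate(
        "main.nf", ["reads.fastq", "reference.fa"], ["calls.vcf"],
        "A. Researcher", "https://creativecommons.org/licenses/by/4.0/", "2024-01-01",
    )
    print(json.dumps(crate, indent=2))
```

Writing the returned dict to `ro-crate-metadata.json` in the crate root gives a file that can then be passed to the validation step.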

Adherence to Community Standards

Standards ensure interoperability. The following table summarizes critical standards for One Health genomics.

Table 2: Essential Community Standards for One Health Genomics

| Category | Standard/Schema | Purpose | Governing Body |

|---|---|---|---|

| Metadata | MIxS (Minimum Information about any (x) Sequence) | Standardized environmental, host-associated, and pathogen metadata. | Genomics Standards Consortium |

| Pathogen Genomics | INSDC Standards (FASTA, FASTQ, SAM/BAM) | Universal formats for raw reads, assemblies, alignments. | INSDC (ENA, GenBank, DDBJ) |

| Pathogen Metadata | Public Health Alliance for Genomic Epidemiology (PHA4GE) templates | Contextual data for outbreak investigation. | PHA4GE |

| Antimicrobial Resistance | NCBI AMRFinderPlus data models | Standardized reporting of AMR genes/mutations. | NCBI |

| Variants | HGVS Nomenclature | Precise description of sequence variants. | HGVS |

| Data Packaging | RO-Crate | Packaging research outputs with metadata & provenance. | Research Object Alliance |

| Ontologies | SNOMED CT, NCBI Taxonomy, ENVO (Environment Ontology) | Semantic tagging of host, pathogen, and environmental terms. | Respective ontology bodies |

Protocol 4.1: Annotating a Microbial Genome Assembly with Community Standards

Objective: Prepare a finished bacterial genome assembly for submission to a public repository with FAIR-compliant metadata.

- Quality Control: Assess assembly using CheckM for completeness and contamination.

- Functional Annotation: Use Prokka or NCBI's PGAP to annotate genes. For AMR genes, run AMRFinderPlus, which searches NCBI's own curated reference gene database, and optionally cross-reference the calls against CARD or ResFinder.

- Metadata Compilation: Create a metadata spreadsheet using the relevant MIxS checklist (e.g., MIGS.ba for bacteria). Populate fields including:

  - Investigation Type: "pathogen surveillance"

  - Project Name: Include grant ID.

  - Geographic Location (lat/lon): From sample collection.

  - Host/Sample Information: Use ontology terms (e.g., NCBI Taxonomy ID for host species).

  - Sequencing Method & Platform.

- Submission: Submit assembly (FASTA), annotations (GFF), and metadata to the International Nucleotide Sequence Database Collaboration (INSDC) via ENA, GenBank, or DDBJ submission portals.
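Before submission, the metadata compiled in Protocol 4.1 can be checked automatically. The sketch below assumes MIxS-style field names (`project_name`, `investigation_type`, `lat_lon`, `host_taxid`, `seq_meth`) and a "LAT LON" decimal-degree convention. The required set and the format rule are illustrative assumptions, so they should be adjusted to the checklist version and repository actually used.

```python
import re

# Illustrative required fields; the real set depends on the chosen MIxS checklist.
REQUIRED = ("project_name", "investigation_type", "lat_lon", "host_taxid", "seq_meth")

# Assumed convention: two signed decimal numbers separated by one space.
LAT_LON = re.compile(r"^(-?\d+(?:\.\d+)?) (-?\d+(?:\.\d+)?)$")


def validate_record(record):
    """Return a list of problems found in one metadata row (empty list = pass)."""
    problems = [
        f"missing field: {field}"
        for field in REQUIRED
        if not str(record.get(field, "")).strip()
    ]

    lat_lon = str(record.get("lat_lon", "")).strip()
    match = LAT_LON.match(lat_lon)
    if lat_lon and not match:
        problems.append("lat_lon must be 'LAT LON' in decimal degrees")
    elif match:
        lat, lon = float(match.group(1)), float(match.group(2))
        if not (-90 <= lat <= 90 and -180 <= lon <= 180):
            problems.append("lat_lon out of range")

    taxid = str(record.get("host_taxid", "")).strip()
    if taxid and not taxid.isdigit():
        problems.append("host_taxid must be a numeric NCBI Taxonomy ID")
    return problems


if __name__ == "__main__":
    row = {
        "project_name": "Farm surveillance (grant ID here)",
        "investigation_type": "pathogen surveillance",
        "lat_lon": "50.586825 6.408977",
        "host_taxid": "9913",  # NCBI Taxonomy ID for Bos taurus
        "seq_meth": "Illumina MiSeq",
    }
    print(validate_record(row) or "record passed")
```

Running a check like this over every row of the spreadsheet catches empty or malformed fields before the repository's own validator rejects the submission.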

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Genomic Data Reusability

| Item/Category | Example(s) | Function in Ensuring Reusability |

|---|---|---|

| Workflow Management Systems | Nextflow, Snakemake, Common Workflow Language (CWL) | Define reproducible, portable, and version-controlled computational pipelines. |

| Containerization Platforms | Docker, Singularity, Podman | Package software and dependencies into isolated, executable units for consistent execution across environments. |

| Provenance Capture Tools | RO-Crate (Python library), YesWorkflow, ProvONE-compliant tools | Generate standardized records of data lineage and computational steps. |

| Metadata Validation Tools | ISA tools (for ISA-Tab format), MIxS validation scripts | Check metadata files for completeness and compliance with community schemas. |

| Ontology Services | Ontology Lookup Service (OLS), Bioportal | Find and map standardized controlled vocabulary terms for metadata annotation. |

| License Selection Services | Choose a License (choosealicense.com), Creative Commons License Chooser | Guide researchers in selecting an appropriate open license for data/code. |

| FAIR Data Repositories | European Nucleotide Archive (ENA), Zenodo, WorkflowHub, NG-STAR | Domain-specific and general repositories that enforce metadata standards, provide persistent identifiers (DOIs), and respect licensing. |

Visualizations

Title: Three Pillars of FAIR Data Reusability

Title: Genomic Analysis Workflow with Provenance Tracking

Overcoming Common FAIR Implementation Hurdles in One Health Projects

Troubleshooting Heterogeneous Data Formats and Legacy Systems

1. Introduction

Within the One Health genomics research paradigm, achieving FAIR (Findable, Accessible, Interoperable, Reusable) data principles is paramount for integrating insights across human, animal, and environmental health. A primary obstacle is the proliferation of heterogeneous data formats and reliance on legacy systems in both sequencing facilities and diagnostic laboratories. These challenges directly undermine interoperability and reusability. This application note provides structured protocols for troubleshooting and mitigating these issues to enable robust data integration for cross-species genomic analysis and drug target discovery.

2. Quantitative Overview of Common Data Heterogeneity Challenges

The following table summarizes key problematic formats and their prevalence in legacy genomic and clinical systems.

Table 1: Common Legacy Data Formats and Associated Challenges in One Health Genomics

| Data Type | Common Legacy Format(s) | Prevalence Estimate in Archived Data* | Primary FAIR Limitation | Typical Source System |

|---|---|---|---|---|

| Sequencing Reads | SFF, QSEQ, Native Platform Formats (e.g., old Illumina) | ~15-20% | Accessibility, Interoperability | Early NGS Platforms (pre-2012) |

| Genetic Variants | Private LIS formats, CHROM, FILE | ~25-30% | Interoperability, Reusability | Hospital LIS, Old VC Pipelines |

| Microarray Data | CEL (Genotyping), GPR (Expression) | ~10-15% | Findability, Interoperability | Affymetrix, Old Agilent Systems |

| Clinical Phenotypes | Non-standard CSV, EDI 837, HL7v2 | ~40-50% | Interoperability, Reusability | EHRs, Diagnostic Lab Systems |

| Pathogen Metadata | Proprietary DB dumps, Spreadsheets | ~30-40% | Findability, Reusability | Laboratory Information Management Systems (LIMS) |

*Prevalence estimates based on analysis of public repository metadata and industry surveys (2022-2024).

3. Core Experimental Protocol: A Unified Pipeline for Legacy Data Harmonization

This protocol describes a methodological framework for converting heterogeneous data into FAIR-aligned, analysis-ready formats.

Protocol Title: Retrospective Harmonization of Heterogeneous Genomic and Phenotypic Data for One Health Integration.

3.1. Materials and Reagent Solutions

Table 2: Research Reagent Solutions & Essential Tools for Data Harmonization

| Item / Tool Name | Category | Function / Purpose |

|---|---|---|

| Bioinformatics File Format Converters (e.g., biobambam2, HTSeq) | Software Tool | Converts legacy sequencing formats (SFF, QSEQ) to standard FASTQ/BAM. |

| EDIA (Electronic Data Interchange Adaptor) Framework | Middleware | Parses and maps non-standard clinical data (HL7v2, EDI) to OMOP CDM or FHIR standards. |

| Curation Tool (e.g., CEDAR, OpenRefine) | Metadata Tool | Enforces metadata annotation using One Health-relevant ontologies (NCBI Taxonomy, SNOMED CT, ENVO). |

| Containerized Pipeline (Nextflow/Snakemake) | Workflow System | Ensures reproducible conversion and processing across all data types. |

| Persistent Identifier Minter (e.g., EZID, DataCite) | Web Service | Assigns unique, permanent identifiers (DOIs, ARKs) to harmonized datasets for findability. |

3.2. Step-by-Step Methodology

Inventory and Profiling:

- Catalog all data assets, identifying file formats, encoding, and associated metadata schemas.

- Use tools like `file` (Unix) and custom scripts to detect MIME types and validate structural integrity.
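A custom profiling script for the inventory step might look like the sketch below. It guesses a format from the leading bytes of each file using well-known signatures (the `.sff` magic number, the gzip header, the `BAM\x01` marker inside a BGZF stream, and the `##fileformat=VCF` line). The function names `sniff_format` and `inventory` are hypothetical, and the text-format checks are heuristics that can misclassify unusual files.

```python
import gzip
import zlib
from pathlib import Path

SAM_HEADER_TAGS = (b"@HD\t", b"@SQ\t", b"@RG\t", b"@PG\t", b"@CO\t")


def _sniff_text(head):
    """Classify uncompressed leading bytes; heuristic only."""
    if head.startswith(b"##fileformat=VCF"):
        return "VCF"
    if head.startswith(SAM_HEADER_TAGS):
        return "SAM"
    if head.startswith(b"@"):
        return "FASTQ"
    if head.startswith(b">"):
        return "FASTA"
    return "unknown"


def sniff_format(path):
    """Guess a genomics file format from the first bytes of the file."""
    with open(path, "rb") as fh:
        head = fh.read(32)
    if head.startswith(b".sff"):
        return "SFF"
    if head.startswith(b"\x1f\x8b"):
        # gzip or BGZF container: look inside to separate BAM from compressed text
        try:
            with gzip.open(path, "rb") as gz:
                inner = gz.read(32)
        except (OSError, EOFError, zlib.error):
            return "gzip (unreadable)"
        if inner.startswith(b"BAM\x01"):
            return "BAM"
        return "gzip-compressed " + _sniff_text(inner)
    return _sniff_text(head)


def inventory(root):
    """Map every file under root to its guessed format."""
    return {str(p): sniff_format(p) for p in sorted(Path(root).rglob("*")) if p.is_file()}


if __name__ == "__main__":
    for path, fmt in inventory(".").items():
        print(f"{fmt}\t{path}")
```

The resulting table of paths and guessed formats gives a starting catalog. Files reported as `unknown` or `gzip (unreadable)` are the ones to inspect by hand.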

Format Conversion to Community Standards:

- Sequencing Data: For SFF/QSEQ, use `sff2fastq` or `bamtofastq`. Convert proprietary microarray data to standard TAB-delimited formats using platform-specific SDKs.

- Variant Data: Transform to VCF/BCF using bcftools (for example, `bcftools convert`) or GATK/Picard utilities where a suitable converter exists. For tabular data, define mapping rules to VCF columns.

- Clinical/Phenotypic Data: Implement an ETL (Extract, Transform, Load) pipeline using the EDIA framework to map to a common data model (e.g., OMOP CDM).
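For tabular variant data, the mapping rules to VCF columns can be expressed as a small lookup table. The sketch below assumes a legacy export with columns named `chromosome`, `position`, `ref_allele`, and `alt_allele`. Those names, the `COLUMN_MAP` dictionary, and the `table_to_vcf` function are hypothetical. It emits only the eight fixed VCF fields, fills unmapped ones with `.`, and sorts contigs lexicographically, which may not match the reference order a downstream tool expects.

```python
import csv
import io

# Hypothetical mapping from legacy column names to VCF fixed fields.
COLUMN_MAP = {
    "chromosome": "CHROM",
    "position": "POS",
    "ref_allele": "REF",
    "alt_allele": "ALT",
}

VCF_HEADER = "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO"


def table_to_vcf(rows, column_map=COLUMN_MAP):
    """Render dict-like rows as minimal VCF 4.2 text, sorted by contig and position."""
    records = []
    for row in rows:
        rec = {vcf: row[legacy].strip() for legacy, vcf in column_map.items()}
        records.append((rec["CHROM"], int(rec["POS"]), rec["REF"].upper(), rec["ALT"].upper()))

    lines = ["##fileformat=VCFv4.2", VCF_HEADER]
    for chrom, pos, ref, alt in sorted(records):
        # ID, QUAL, FILTER and INFO are unknown in the legacy table, so use '.'
        lines.append("\t".join([chrom, str(pos), ".", ref, alt, ".", ".", "."]))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    legacy = io.StringIO(
        "chromosome,position,ref_allele,alt_allele\n"
        "chr1,200,a,g\n"
        "chr1,100,C,T\n"
    )
    print(table_to_vcf(csv.DictReader(legacy)), end="")
```

Keeping the mapping in a dictionary rather than in code makes it easy to review with the data owners and to version alongside the harmonized output. The result should still be normalized and validated with a dedicated tool such as bcftools before deposition.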

Metadata Annotation and Ontology Mapping:

- For each dataset, create a machine-readable metadata file (e.g., in JSON-LD).

- Populate fields using controlled vocabularies: `host species` (NCBI Taxonomy), `disease` (SNOMED CT), `isolation source` (ENVO).
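A machine-readable metadata file of this kind could be generated as in the sketch below. The `oh:` namespace (`https://example.org/onehealth#`) and its property names are hypothetical placeholders for whatever project vocabulary is adopted. The OBO PURL prefixes and the `http://snomed.info/id/` URI pattern are standard, but the SNOMED CT and ENVO identifiers passed in the example are placeholders to be replaced with terms looked up in an ontology service.

```python
import json


def dataset_jsonld(name, host_taxid, disease_sctid, envo_id):
    """Build a small JSON-LD description that links a dataset to ontology terms."""
    return {
        "@context": {
            "schema": "https://schema.org/",
            "oh": "https://example.org/onehealth#",  # hypothetical project vocabulary
            "NCBITaxon": "http://purl.obolibrary.org/obo/NCBITaxon_",
            "ENVO": "http://purl.obolibrary.org/obo/ENVO_",
            "SCTID": "http://snomed.info/id/",
        },
        "@type": "schema:Dataset",
        "schema:name": name,
        "oh:hostSpecies": {"@id": f"NCBITaxon:{host_taxid}"},
        "oh:disease": {"@id": f"SCTID:{disease_sctid}"},
        "oh:isolationSource": {"@id": f"ENVO:{envo_id}"},
    }


if __name__ == "__main__":
    doc = dataset_jsonld(
        name="Dairy farm isolates",
        host_taxid="9913",          # NCBI Taxonomy ID for Bos taurus
        disease_sctid="000000000",  # placeholder: look up the real SNOMED CT concept
        envo_id="00000000",         # placeholder: look up the real ENVO term
    )
    print(json.dumps(doc, indent=2))
```

Because each value is an IRI rather than free text, records from human, animal, and environmental sources that share a term resolve to the same identifier and can be joined without string matching.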

Persistent Identifier Assignment and Repository Deposition:

- Mint a DOI for the fully harmonized dataset.

- Deposit data and its rich metadata into a FAIR-compliant repository (e.g., ENA, NCBI BioProject, Zenodo) following their specific submission protocols.

4. Visualization of Workflows and Logical Relationships

Diagram 1: Legacy Data Harmonization Workflow for One Health

Diagram 2: System Architecture for Interoperability

Application Notes

In the context of a broader thesis on implementing FAIR (Findable, Accessible, Interoperable, Reusable) principles in One Health genomics research, robust metadata collection is the non-negotiable foundation. This protocol addresses the critical bottleneck of time-intensive, inconsistent metadata reporting by providing structured templates and tool recommendations.

Table 1: Quantitative Comparison of Metadata Management Tools

| Tool Name | Primary Function | Cost Model | Key Feature for One Health | FAIR Alignment |

|---|---|---|---|---|

| ISA Framework | Investigation/Study/Assay metadata structuring | Open Source | Hierarchical design for multi-omics, multi-species studies | High (Interoperability) |

| CEDAR | Metadata authoring with ontologies | Freemium | AI-assisted, ontology-driven template creation | Very High (Interoperability) |

| NMDC EDGE | Domain-specific metadata entry | Open Source | Built-in environmental & biosample packages | High (Findability) |

| OS-M | Open-source metadata collection app | Open Source | Offline-capable, designed for field collection | High (Accessibility) |