Implementing FAIR Data Principles for Pathogen Genomics: A Guide for Researchers and Drug Developers

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on applying the FAIR (Findable, Accessible, Interoperable, Reusable) data principles to pathogen genomics.

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on applying the FAIR (Findable, Accessible, Interoperable, Reusable) data principles to pathogen genomics. We explore the foundational importance of FAIR data in accelerating outbreak response and therapeutic discovery. The guide details methodological steps for implementation, addresses common challenges in data sharing and metadata management, and evaluates real-world applications and platforms. By synthesizing current standards and best practices, this resource aims to enhance data utility and foster global collaboration in infectious disease research.

Why FAIR Data is Critical for Modern Pathogen Genomics and Pandemic Preparedness

In pathogen genomics, fragmented and siloed data slow outbreak response, therapeutic development, and surveillance. This whitepaper argues that systematically applying the FAIR Guiding Principles (making data Findable, Accessible, Interoperable, and Reusable) is not merely a best practice but an operational imperative for modern public health and biomedical research. It provides a technical guide to implementing these principles for pathogen sequence data, metadata, and associated clinical and epidemiological information, as a framework for robust, collaborative, rapid-response science in the face of emerging infectious threats.

The Four Pillars: A Technical Deconstruction

Findable

The first step is to ensure data can be discovered by both humans and computational agents.

- Core Requirements: Data and metadata must be assigned a globally unique and persistent identifier (PID). Rich metadata must be registered or indexed in a searchable resource.

- Technical Implementation for Pathogens:

- Persistent Identifiers: Use DOIs (via repositories like Zenodo, Figshare) for datasets, and BioSample/SRA accession numbers (NCBI, ENA, DDBJ) for sequence records.

- Rich Metadata: Adhere to community-standardized metadata schemas such as the MIxS (Minimum Information about any (x) Sequence) pathogen package from the Genomic Standards Consortium or the INSDC pathogen metadata model.

Table 1: Key Metadata Standards for Findable Pathogen Data

| Standard/Schema | Scope | Governing Body | Key Fields for Pathogens |

|---|---|---|---|

| MIxS (Pathogen) | Minimum information for sequence data | Genomic Standards Consortium | Host taxon, host health status, collection date/location, antimicrobial resistance markers. |

| INSDC Pathogen | Submission and archiving | International Nucleotide Sequence Database Collaboration (INSDC) | Sample isolation source, collection date, geographic location, isolate name. |

| CDC’s case report forms | Epidemiological context | U.S. Centers for Disease Control and Prevention | Clinical outcome, exposure history, symptom onset date, vaccination status. |
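As an illustration of how a lab might check a sample record before submission, here is a minimal sketch. The field names are assumptions loosely modeled on the fields in Table 1; they are not the official MIxS or INSDC checklists, which remain the authoritative source.

```python
from datetime import date

# Hypothetical required fields, loosely modeled on Table 1 (not an official checklist).
REQUIRED_FIELDS = ("isolate", "host_taxid", "collection_date", "geo_loc_name")


def validate_record(record: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means the record passed."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS
                if not str(record.get(name, "")).strip()]
    raw_date = record.get("collection_date")
    if raw_date:
        try:
            date.fromisoformat(raw_date)  # expects YYYY-MM-DD
        except ValueError:
            problems.append(f"collection_date is not ISO 8601 (YYYY-MM-DD): {raw_date}")
    return problems


good = {"isolate": "lab-0001", "host_taxid": "9606",
        "collection_date": "2024-03-15", "geo_loc_name": "Kenya: Nairobi"}
bad = {"isolate": "lab-0002", "collection_date": "15/03/2024"}

print(validate_record(good))
print(validate_record(bad))
```

Running a check like this at the point of sample registration, rather than at submission time, tends to catch missing context while it can still be recovered.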

Accessible

Data are retrievable by their identifier using a standardized communications protocol.

- Core Requirements: The protocol is open, free, and universally implementable. Authentication and authorization procedures may be required, but metadata should remain accessible.

- Technical Implementation for Pathogens:

- Protocols: Use standardized APIs (e.g., ENA API, NCBI’s E-utilities, GA4GH Passports/DRS) for programmatic access.

- Access Management: Clearly state access conditions (e.g., open, embargoed, controlled). For sensitive human-derived pathogen data (e.g., HIV, TB), implement controlled-access mechanisms aligned with frameworks like GA4GH’s Data Use Ontology (DUO).
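To make the idea of identifier-based retrieval concrete, the sketch below builds an NCBI E-utilities `efetch` URL for a nucleotide accession. It only constructs the URL and does not perform a network request; the helper name `efetch_fasta_url` is an assumption introduced for illustration.

```python
from urllib.parse import urlencode

EUTILS_BASE = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils"


def efetch_fasta_url(accession: str, db: str = "nuccore") -> str:
    """Build an E-utilities efetch URL that returns a record as FASTA text."""
    params = {"db": db, "id": accession, "rettype": "fasta", "retmode": "text"}
    return f"{EUTILS_BASE}/efetch.fcgi?{urlencode(params)}"


# MN908947.3 is the SARS-CoV-2 reference accession used elsewhere in this guide.
url = efetch_fasta_url("MN908947.3")
print(url)
```

The resulting URL can be passed to any HTTP client. For bulk retrieval, NCBI's usage guidelines on request rates and API keys should be checked first.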

Interoperable

Data must integrate with other data and work with applications or workflows for analysis, storage, and processing.

- Core Requirements: Use formal, accessible, shared, and broadly applicable languages and vocabularies for knowledge representation.

- Technical Implementation for Pathogens:

- Ontologies & Vocabularies: Use controlled terms from ontologies like:

- NCBI Taxonomy: For organism names.

- SNOMED CT / MeSH: For clinical phenotypes and diseases.

- GeoNames: For geographic locations.

- ARO (Antibiotic Resistance Ontology, maintained by CARD): For standardized resistance gene annotation.

- Data Formats: Use community-accepted, structured formats (e.g., FASTQ, BAM, VCF for sequences; JSON-LD, RDF for enriched metadata).
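A minimal sketch of what an ontology-annotated metadata record could look like when serialized as JSON-LD. The `ex:` vocabulary IRI and the helper name `to_jsonld` are hypothetical; a real deployment would use terms from a published schema rather than `example.org`.

```python
import json


def to_jsonld(sample_id: str, host_taxid: int, location: str, collection_date: str) -> dict:
    """Build a small JSON-LD document that points at ontology terms by IRI."""
    return {
        "@context": {
            "schema": "https://schema.org/",
            "obo": "http://purl.obolibrary.org/obo/",
            "ex": "https://example.org/vocab/",  # hypothetical local vocabulary
        },
        "@type": "schema:Dataset",
        "schema:identifier": sample_id,
        "schema:spatialCoverage": location,
        "ex:collectionDate": collection_date,
        # The host is expressed as an NCBI Taxonomy term, not a free-text name.
        "ex:host": {"@id": f"obo:NCBITaxon_{host_taxid}"},
    }


doc = to_jsonld("lab-0001", 9606, "Kenya: Nairobi", "2024-03-15")
print(json.dumps(doc, indent=2))
```

Because the host is an IRI rather than the string "human", two datasets annotated this way can be joined on the term without any name matching.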

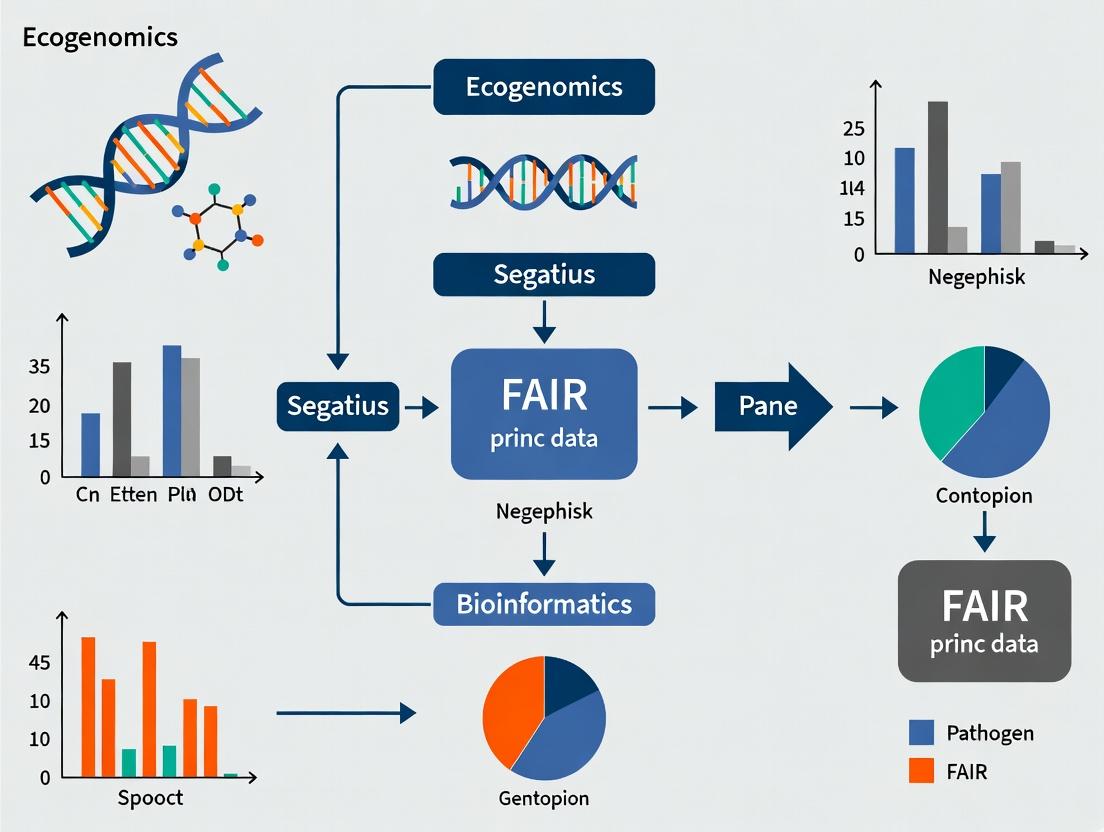

Diagram: FAIR Data Interoperability Workflow for Pathogen Genomics

Reusable

Data are sufficiently well-described to be replicated and/or combined in different settings.

- Core Requirements: Data meet domain-relevant community standards, have clear usage licenses, and are associated with detailed provenance.

- Technical Implementation for Pathogens:

- Provenance: Use standards like W3C PROV to document the lineage of data from sample to sequence file, including computational tools and parameters (e.g., CWL, Nextflow workflows).

- Licensing: Apply explicit machine-readable licenses (e.g., Creative Commons CC-BY for data, MIT/BSD for code).

- Community Standards: Adhere to community reporting standards for genomic epidemiology, such as those listed on FAIRsharing.org.
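The provenance requirement can be sketched as a small record that ties a tool invocation to checksummed inputs and outputs. The key names borrow loosely from W3C PROV (`used`, `generated`, `endedAtTime`), but this is an illustrative structure, not a conformant PROV serialization, and the file names are placeholders.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream a file through SHA-256 so large FASTQ files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def provenance_record(tool, version, command, inputs, outputs) -> dict:
    """Describe one analysis step: what ran, on which files, producing which files."""
    return {
        "activity": {"tool": tool, "version": version, "command": command,
                     "endedAtTime": datetime.now(timezone.utc).isoformat()},
        "used": [{"path": p.name, "sha256": sha256_of(p)} for p in inputs],
        "generated": [{"path": p.name, "sha256": sha256_of(p)} for p in outputs],
    }


# Demonstration with tiny placeholder files in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    reads = Path(tmp) / "sample_1.fastq"
    reads.write_bytes(b"@r1\nACGT\n+\nIIII\n")
    report = Path(tmp) / "sample_1_fastqc.html"
    report.write_bytes(b"<html></html>")
    record = provenance_record("FastQC", "0.11.9", "fastqc sample_1.fastq",
                               [reads], [report])

print(json.dumps(record, indent=2))
```

Workflow managers such as Nextflow and CWL runners can emit richer provenance automatically; a hand-rolled record like this is mainly useful for steps run outside a managed workflow.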

Experimental Protocol: Implementing a FAIR Pathogen Genomics Workflow

Protocol Title: End-to-End FAIR-Compliant Sequencing and Submission of a Viral Pathogen Isolate.

Objective: To generate, process, and publicly share viral genome sequence data in accordance with FAIR principles.

1. Sample Collection & Metadata Generation:

- Collect clinical specimen with informed consent and ethical approval.

- Record metadata in a structured template (see Table 1) using ontology terms from the start (e.g., host species: `NCBI:txid9606`; symptom: `SNOMED_CT:386661006` for fever).

- Assign a unique internal sample ID linked to all downstream data.

2. Nucleic Acid Extraction & Sequencing:

- Extract viral RNA/DNA using a validated kit (see Toolkit).

- Prepare sequencing library using a protocol appropriate for the platform (e.g., Illumina COVIDSeq, Oxford Nanopore ARTIC protocol).

- Sequence on an NGS platform. Generate raw data files (FASTQ).

3. Bioinformatic Analysis & Provenance Capture:

- Quality Control: Use FastQC (v0.11.9). Record command: `fastqc sample_1.fastq.gz`.

- Genome Assembly: Use iVar (v1.3.1) for amplicon-based data or BWA-MEM2 (v2.2.1) for shotgun data. Record all parameters in a Nextflow or CWL workflow script.

- Variant Calling: Use bcftools (v1.13). The exact command and reference genome (with version and accession) must be documented.

4. FAIR Packaging & Submission:

- Package Data Bundle: Final bundle includes: (a) Raw FASTQ files, (b) Consensus genome (FASTA), (c) Variant calls (VCF), (d) Structured metadata file (in MIxS format), (e) A README file describing provenance and licensing.

- Assign License: Attach a `LICENSE` file (e.g., CC-BY 4.0).

- Submit to Repositories:

- Submit sequence data and core metadata to an INSDC partner (SRA, ENA, DDBJ). This provides the persistent accession numbers.

- For broader data (analysis files, full provenance), submit to a general repository like Zenodo to obtain a DOI. Link the DOI and INSDC accessions together in metadata.
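Before depositing, it can help to check that the bundle from step 4 is complete. The sketch below tests a list of file names against the components named in the packaging step. The accepted suffixes and the metadata file names are assumptions chosen for illustration.

```python
from pathlib import PurePath

# Bundle components from the packaging step, keyed by accepted name endings.
REQUIRED_COMPONENTS = {
    "raw reads": (".fastq", ".fastq.gz"),
    "consensus genome": (".fasta", ".fa"),
    "variant calls": (".vcf", ".vcf.gz"),
    "metadata": ("metadata.tsv", "metadata.json"),  # hypothetical file names
    "readme": ("README", "README.md"),
    "license": ("LICENSE",),
}


def missing_components(filenames) -> list[str]:
    """Return the names of required components that no file in the bundle satisfies."""
    names = [PurePath(name).name for name in filenames]
    return [component for component, endings in REQUIRED_COMPONENTS.items()
            if not any(name.endswith(endings) for name in names)]


bundle = ["reads/s1_R1.fastq.gz", "s1.consensus.fasta", "s1.vcf",
          "metadata.tsv", "README.md", "LICENSE"]
print(missing_components(bundle))      # complete bundle
print(missing_components(bundle[:3]))  # sequence files only
```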

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Tools for FAIR Pathogen Genomics Research

| Item | Category | Function/Explanation | Example Product/Standard |

|---|---|---|---|

| Nucleic Acid Extraction Kit | Wet-lab | Isolates high-quality viral RNA/DNA from clinical matrices, foundational for sequencing. | QIAamp Viral RNA Mini Kit, MagMAX Viral/Pathogen Kit |

| Library Prep Kit | Wet-lab | Prepares sequencing libraries from nucleic acids, often target-enriched (amplicon-based). | Illumina COVIDSeq Test, ARTIC Network primers & protocol |

| Structured Metadata Template | Data Management | Ensures consistent, complete metadata collection at the point of sample origin. | MIxS checklist spreadsheet, GSC data-harmonization templates |

| Bioinformatics Workflow Manager | Computational | Captures and executes analysis pipelines, ensuring reproducibility and provenance. | Nextflow, Snakemake, Common Workflow Language (CWL) |

| Ontology Browser/Validator | Data Curation | Enables selection and validation of controlled vocabulary terms for metadata fields. | OLS (Ontology Lookup Service), BioPortal |

| Trusted Repository | Data Sharing | Provides persistent identifiers, metadata indexing, and stable archiving per FAIR principles. | INSDC (SRA/ENA/DDBJ), Zenodo, GenBank |

Visualizing the FAIR Data Lifecycle

Diagram: The FAIR Pathogen Data Lifecycle

The systematic application of the FAIR principles to pathogen data transforms isolated data points into a cohesive, global knowledge graph. This technical guide demonstrates that through the adoption of persistent identifiers, rich standardized metadata, interoperable formats, and clear provenance, the pathogen genomics community can build a resilient and responsive data ecosystem. This is foundational to the broader thesis that FAIR compliance is a non-negotiable cornerstone for accelerating therapeutic discovery, enhancing real-time surveillance, and ultimately mitigating the impact of infectious diseases on global health.

The application of FAIR (Findable, Accessible, Interoperable, Reusable) principles to pathogen genomic data represents a paradigm shift in pandemic preparedness and therapeutic development. This whitepaper details the technical implementation and quantifiable impact of FAIR genomic data, framing it within the broader thesis that standardized, machine-actionable data is the critical enabler for rapid scientific response. By ensuring data is structured for computational use, researchers can bypass traditional bottlenecks in data wrangling, accelerating timelines from sample to insight.

Core FAIR Implementation Protocols for Pathogen Genomics

Experimental Protocol: Generating FAIR-Compliant Genomic Surveillance Data

Objective: To sequence a pathogen sample (e.g., SARS-CoV-2, Influenza A) and generate a FAIR-compliant genomic record for public repositories.

Materials & Workflow:

- Sample Collection: Collect clinical specimen using approved swabs and viral transport media.

- Nucleic Acid Extraction: Use automated extraction kits (e.g., Qiagen QIAamp Viral RNA Mini Kit) to obtain high-quality RNA/DNA.

- Library Preparation & Sequencing: Employ targeted amplicon or metagenomic sequencing approaches (e.g., Oxford Nanopore GridION, Illumina MiSeq). Use primers from validated schemes (e.g., ARTIC Network primers for SARS-CoV-2).

- Bioinformatic Analysis:

- Basecalling & Quality Control: Generate FASTQ files. Apply quality filters (e.g., Phred score >30).

- Genome Assembly: Map reads to a reference genome using BWA or minimap2. Generate consensus sequence with iVar or bcftools.

- Variant Calling: Identify single nucleotide polymorphisms (SNPs) and indels using LoFreq or Nextclade.

- FAIR Metadata Annotation: Compile metadata using standardized attribute checklists and controlled vocabularies (e.g., NCBI BioSample attributes, GSC MIxS).

- Deposition: Submit raw reads (FASTQ), assembled genome (FASTA), and annotated metadata to an INSDC repository (NCBI SRA, ENA, DDBJ) or pathogen-specific hub (GISAID).
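The quality-filtering step above can be sketched in a few lines. This is a simplified illustration that assumes strict four-line FASTQ records with Phred+33 encoding and uses the arithmetic mean of the per-base scores. Production pipelines would normally rely on a dedicated tool, and the function names here are hypothetical.

```python
def mean_phred(quality_line: str, offset: int = 33) -> float:
    """Average Phred score of a read, assuming Phred+33 (Sanger/Illumina 1.8+) encoding."""
    return sum(ord(ch) - offset for ch in quality_line) / len(quality_line)


def filter_fastq(lines, min_mean_q: float = 30.0):
    """Yield (header, sequence, quality) for reads whose mean Phred score exceeds the cutoff."""
    records = zip(*[iter(lines)] * 4)  # each FASTQ record spans four lines
    for header, seq, _plus, qual in records:
        if qual and mean_phred(qual) > min_mean_q:
            yield header, seq, qual


fastq_text = "@read1\nACGT\n+\nIIII\n@read2\nACGT\n+\n!!!!\n"
kept = list(filter_fastq(fastq_text.splitlines()))
print(kept)
```

In this toy input, `I` encodes Phred 40 and `!` encodes Phred 0, so only the first read passes the filter.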

Experimental Protocol: In Silico Drug Target Screening Using FAIR Data Repositories

Objective: To computationally identify potential drug targets from FAIR genomic datasets of a pathogen population.

Materials & Workflow:

- Data Retrieval: Programmatically query public API endpoints (e.g., ENA API, NCBI Datasets API) to retrieve all available genome assemblies and associated metadata for a target pathogen.

- Data Homogenization: Use bioinformatics pipelines (e.g., Snakemake, Nextflow) to uniformly process genomes through alignment (MAFFT) and core genome definition (Roary).

- Variant Analysis & Conservation Scoring: Calculate nucleotide and amino acid conservation scores across the core genome. Identify hypervariable versus invariant regions.

- Prioritization of Invariant Regions: Filter invariant genomic regions to those that are:

- Essential (cross-reference with transposon mutagenesis data from resources like DEG).

- Expressed (cross-reference with transcriptomic datasets).

- Structurally characterized (cross-reference with PDB).

- Have no human homolog (via BLAST against human proteome).

- Virtual Screening Preparation: Model the 3D structure of prioritized target proteins (e.g., via AlphaFold2) for use in molecular docking simulations.
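The conservation-scoring step can be illustrated with per-column Shannon entropy over a multiple sequence alignment. This is a minimal sketch for nucleotide alignments (gaps and `N` are ignored by default); the function names are assumptions, and real analyses would read the alignment from MAFFT output rather than a hard-coded list.

```python
import math
from collections import Counter


def column_entropy(column: str, ignore: str = "-N") -> float:
    """Shannon entropy in bits of one alignment column; 0.0 means fully conserved."""
    residues = [c for c in column.upper() if c not in ignore]
    if not residues:
        return float("nan")  # column holds no informative characters
    total = len(residues)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(residues).values())


def invariant_positions(aligned_seqs, ignore: str = "-N") -> list[int]:
    """Return 0-based column indices where all informative residues are identical."""
    columns = ("".join(col) for col in zip(*aligned_seqs))
    return [i for i, col in enumerate(columns)
            if column_entropy(col, ignore) == 0.0]


alignment = ["ACGTA",
             "ACGTT",
             "ACCTA"]
print(invariant_positions(alignment))
print(column_entropy("AATT"))
```

For protein alignments, pass `ignore="-X"` so that asparagine (`N`) is counted as a residue rather than skipped.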

Quantitative Impact of FAIR Data Implementation

Table 1: Impact of FAIR Data on Outbreak Response Timelines

| Metric | Pre-FAIR (Traditional) | FAIR-Enabled | Data Source / Study |

|---|---|---|---|

| Time from sample to public genome (days) | 21 - 30 | 3 - 7 | NCBI, 2023 Overview |

| Time to identify outbreak origin | Weeks to months | Days | Grubaugh et al., 2019 |

| Time for global data aggregation for analysis | Manual, inconsistent | Near real-time (<24h) | GISAID, 2024 Report |

Table 2: Impact of FAIR Data on Drug Discovery Parameters

| Metric | Non-FAIR Data | FAIR-Enabled Data | Example |

|---|---|---|---|

| Target identification cycle time | 12-18 months | 3-6 months | SARS-CoV-2 protease target identification (Zhang et al., 2020) |

| Success rate of in silico screening (hit-to-lead) | < 5% | 10-15% | Studies leveraging CARD & ChEMBL databases (2023 review) |

| Volume of genomes usable in population-level resistance analysis | Hundreds (limited by curation) | Millions (automated pipelines) | Global Mycobacterium tuberculosis drug resistance surveillance (WHO, 2023) |

Visualization of Key Workflows and Relationships

FAIR Data Pipeline from Sample to Public Health Insight

FAIR-Enabled Computational Drug Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for FAIR Pathogen Genomics Research

| Item / Solution | Function in FAIR Workflow |

|---|---|

| ONT GridION / Illumina MiSeq | Benchtop sequencers for generating raw genomic read data (FASTQ), the primary data layer. |

| ARTIC Network Primer Pools | Standardized, multiplexed primer sets for pathogen-specific amplification, ensuring consistent, comparable genome coverage. |

| Qiagen CLC Genomics Server / Illumina DRAGEN | Commercial bioinformatics platforms with reproducible workflow pipelines, aiding in interoperable analysis. |

| Snakemake / Nextflow Workflow Managers | Tools for creating reproducible, shareable, and scalable bioinformatic analysis pipelines. |

| NCBI BioSample Submission Portal & Templates | Standardized interfaces and formats for attaching rich, structured metadata to genomic sequences upon deposition. |

| EDAM Ontology / GSC MIxS | Controlled vocabularies and minimum information standards to annotate data and metadata for interoperability. |

| ENA / SRA / GISAID Submission APIs | Programmatic interfaces for submitting and retrieving data, enabling automation and integration (Accessibility). |

| AlphaFold2 Protein Structure Prediction (via API) | Access to predicted 3D protein models for novel pathogen targets where experimental structures are absent. |

| ChEMBL / PubChem Databases | FAIR chemical databases linking compounds to biological targets and activities, crucial for drug repurposing studies. |

| R Shiny / Jupyter Notebooks | Interactive environments for packaging analysis code, data, and visualizations into reusable research compendia. |

This whitepaper delineates the interconnected ecosystem and technical workflows enabling pathogen genomics research for public health and pharmaceutical R&D. Framed within the imperative for FAIR (Findable, Accessible, Interoperable, and Reusable) data principles, it details the roles of key stakeholders, from public health surveillance to therapeutic discovery, and provides the technical protocols that underpin this collaborative pipeline.

The rapid characterization of pathogens—viruses, bacteria, fungi—is critical for outbreak response and developing countermeasures. FAIR data principles provide the foundational framework ensuring that genomic data generated at public health laboratories can be seamlessly integrated and analyzed by pharmaceutical R&D teams to accelerate vaccine and therapeutic development. This guide explores the stakeholders, technical ecosystems, and methodologies that make this translation possible.

Stakeholder Ecosystem & Data Flow

The pathogen genomics value chain involves multiple specialized actors. Their interaction is governed by data-sharing agreements and standardized protocols adhering to FAIR principles.

Table 1: Key Stakeholders and Primary Functions

| Stakeholder | Primary Function | Key Output for Downstream Users |

|---|---|---|

| Public Health Laboratories | Pathogen detection, outbreak surveillance, whole genome sequencing (WGS). | Raw sequencing reads, consensus genomes, metadata (time, location, clinical data). |

| National/International Repositories (e.g., NCBI, GISAID) | Centralized, curated data storage and sharing. | Annotated genomic sequences, standardized metadata fields, accession IDs. |

| Academic & Research Institutes | Basic research, pathogen biology, assay development. | Novel insights into virulence, transmission, host-pathogen interactions. |

| Bioinformatics & AI/ML Companies | Data analysis pipeline development, variant calling, predictive modeling. | Processed data, lineage reports, risk assessment scores, predictive alerts. |

| Pharma/Biotech R&D | Therapeutic target identification, vaccine design, drug discovery. | Candidate antigens, small-molecule targets, lead compounds, clinical trial designs. |

| Regulatory Agencies (e.g., FDA, EMA) | Evaluation of data quality for product approval. | Guidelines, standards for regulatory-grade genomic data submission. |

Diagram 1: Stakeholder data flow and interactions.

Core Technical Workflow: From Sample to Therapeutic Insight

The following section details the experimental and computational protocols that form the backbone of the ecosystem.

Public Health Lab Protocol: Pathogen Whole Genome Sequencing (WGS)

Detailed Experimental Protocol: Illumina COVIDSeq (ARTIC v4) Workflow for SARS-CoV-2

- Objective: Generate high-coverage, whole-genome sequence of SARS-CoV-2 from clinical specimen.

- Principle: Multiplex PCR amplicon sequencing using primer pools covering the entire viral genome.

- Materials & Reagents: See Scientist's Toolkit below.

- Procedure:

- Nucleic Acid Extraction: Using a silica spin-column RNA extraction kit (e.g., QIAamp Viral RNA Mini Kit), extract RNA from 140µL of viral transport media. Elute in 60µL of AVE buffer.

- Reverse Transcription & cDNA Amplification: Use the LunaScript RT SuperMix Kit. Assemble 10µL reaction with 5µL RNA. Cycle: 55°C for 2 min, 95°C for 1 min; then 45 cycles of 95°C for 15 sec, 58°C for 5 min.

- Multiplex PCR (2nd Round): Using the COVIDSeq Primer Pool v4 (ARTIC), perform two separate 25µL PCR reactions per sample. Cycle: 95°C for 3 min; 35 cycles of 95°C for 15 sec, 62°C for 5 min; 68°C for 5 min.

- PCR Clean-up: Pool the two reactions per sample and clean using AMPure XP beads (0.8x ratio). Elute in 25µL nuclease-free water.

- Library Preparation: Use the Illumina COVIDSeq Test Kit. Tagment and amplify cleaned amplicons with unique dual indices (UDIs). Clean up with AMPure XP beads (0.8x ratio).

- Quantification & Pooling: Quantify libraries using Qubit dsDNA HS Assay. Pool libraries equimolarly.

- Sequencing: Denature and dilute pool. Load onto Illumina MiSeq or NextSeq 550 system using a 300-cycle v2 kit (2x150 bp paired-end).

Bioinformatics Protocol: Variant Calling & Lineage Assignment

Detailed Computational Protocol: Illumina DRAGEN COVID Lineage App

- Objective: Process raw FASTQ files to consensus genome and assign phylogenetic lineage.

- Input: Paired-end FASTQ files, reference genome (MN908947.3).

- Software: Illumina DRAGEN Bio-IT Platform (v3.10).

- Procedure:

- Read Mapping & Alignment: Use DRAGEN's accelerated alignment. Command: `--enable-map-align true --enable-variant-caller true`.

- Variant Calling: Identify SNPs and indels against reference. Minimum allele frequency (AF) threshold: 0.5 for mixed bases (IUPAC codes).

- Consensus Generation: Generate FASTA file by applying called variants to reference. Sites with coverage <20x are masked as 'N'.

- Lineage Assignment: Use built-in Pango lineage assignment algorithm (based on scorpio) to compare consensus to lineage definition files.

- Output: Consensus FASTA, variant call file (VCF), lineage report (JSON/CSV).
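The consensus-generation rule described above (apply called variants, mask sites below 20x) can be sketched as follows. This is a simplified illustration that handles only single-base substitutions and is not DRAGEN's implementation; the function name and input shapes are assumptions.

```python
def build_consensus(reference: str, snps: dict, depth: list, min_depth: int = 20) -> str:
    """Apply SNPs to the reference, then mask low-coverage sites with 'N'.

    snps maps a 0-based position to its alternate base;
    depth holds one coverage value per reference base.
    """
    if len(depth) != len(reference):
        raise ValueError("depth must have one value per reference base")
    bases = list(reference)
    for pos, alt in snps.items():
        bases[pos] = alt
    return "".join(base if cov >= min_depth else "N"
                   for base, cov in zip(bases, depth))


reference = "ACGTACGT"
snps = {2: "T"}                           # G>T at 0-based position 2
depth = [50, 50, 50, 10, 10, 50, 50, 5]   # per-base coverage
print(build_consensus(reference, snps, depth))
```

Masking after applying variants means a variant called at a low-coverage site is also replaced by `N`, which errs on the side of not reporting weakly supported bases.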

Table 2: Key Sequencing & Bioinformatics Performance Metrics

| Metric | Public Health Lab Benchmark | Pharma R&D Requirement | Common Tool for Measurement |

|---|---|---|---|

| Mean Coverage Depth | >1000x for amplicon | >500x for hybrid-capture | Samtools depth, mosdepth |

| Genome Completeness | >90% at 20x depth | >95% at 50x depth | custom script |

| Variant Calling Sensitivity | >99% for AF>0.5 | >99.5% for AF>0.1 | iVar, GATK |

| Lineage Assignment Accuracy | >99% for major lineages | >99.9% for sub-lineages | Pangolin, UShER |

| Turnaround Time (Sample to Data) | 24-48 hours | 72-96 hours (in-depth) | N/A |
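The "custom script" listed for genome completeness could be as simple as the sketch below, which computes mean depth and the percentage of positions at or above a depth threshold. It assumes tab-separated input in the three-column layout produced by `samtools depth -a` (reference name, position, depth), with `-a` used so that zero-coverage positions are included.

```python
def coverage_metrics(depth_lines, min_depth: int = 20):
    """Return (mean depth, percent of positions with depth >= min_depth)."""
    depths = [int(line.split("\t")[2]) for line in depth_lines if line.strip()]
    if not depths:
        raise ValueError("no depth values found")
    mean_depth = sum(depths) / len(depths)
    completeness = 100.0 * sum(d >= min_depth for d in depths) / len(depths)
    return mean_depth, completeness


# Toy five-base example in `samtools depth -a` layout.
example = [
    "MN908947.3\t1\t100",
    "MN908947.3\t2\t50",
    "MN908947.3\t3\t20",
    "MN908947.3\t4\t10",
    "MN908947.3\t5\t0",
]
mean_depth, completeness = coverage_metrics(example)
print(mean_depth, completeness)
```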

Diagram 2: End-to-end technical workflow from sample to target.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Materials for Pathogen Genomics Research

| Item (Example Product) | Function in Workflow | Critical Specification/Note |

|---|---|---|

| Viral RNA Extraction Kit (QIAamp Viral RNA Mini Kit) | Isolates high-purity RNA from clinical samples. | LOD, elution volume, compatibility with downstream RT-PCR. |

| Multiplex PCR Primer Pools (ARTIC Network V4) | Amplifies entire pathogen genome in short, overlapping fragments. | Genome coverage, robustness against novel variants. |

| Library Prep Kit with UDIs (Illumina COVIDSeq Test) | Attaches unique indices for sample multiplexing on sequencer. | Unique dual indices (UDIs) prevent index hopping artifacts. |

| DNA Clean-up Beads (AMPure XP) | Size-selects and purifies DNA fragments post-amplification. | Bead-to-sample ratio is critical for size selection. |

| Sequencing Control (PhiX Control v3) | Provides balanced nucleotide diversity for run quality control. | Typically spiked at 1-5% to calibrate base calling. |

| Positive Control RNA (SARS-CoV-2 RNA) | Validates entire workflow from extraction to sequencing. | Quantified genomic copies, must be from a non-variant of concern if used for assay validation. |

| Bioinformatics Pipeline (DRAGEN, iVar, nCoV-2019 pipeline) | Automated analysis from FASTQ to consensus and lineage. | Must be versioned, containerized (Docker/Singularity) for reproducibility. |

Integrating FAIR Data into Pharma R&D

For pharmaceutical researchers, FAIR data from public repositories enables several critical activities:

- Target Identification: Structural bioinformatics analysis of conserved vs. variable regions in the pathogen proteome.

- Variant Impact Assessment: In silico modeling of mutations on drug binding (e.g., protease inhibitors) or antibody escape.

- Epidemiological Intelligence: Tracking lineage prevalence to inform clinical trial site selection for vaccines/therapeutics.

Protocol for Pharma: In Silico Neutralization Assay Prediction

- Objective: Predict the impact of spike protein mutations on monoclonal antibody (mAb) binding.

- Input: FASTA files of spike sequences from public repositories.

- Tools: PyMOL for structure visualization, HADDOCK for docking, or AlphaFold2 for structure prediction; Biopython for sequence analysis.

- Procedure:

- Sequence Alignment: Align query spike sequences to reference (Wuhan-Hu-1) using Clustal Omega.

- Mutation Mapping: Map amino acid substitutions to the 3D structure of the spike-antibody complex (PDB: 7LSS).

- Docking Simulation (Optional): For novel mutations, use HADDOCK to perform protein-protein docking and calculate binding energy (ΔG).

- Analysis: Compare ΔG of mutant complex vs. wild-type. A significant increase in ΔG (a less negative value, i.e., weaker predicted binding) suggests potential neutralization escape.
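The mutation-mapping step starts from a list of substitutions expressed in reference coordinates. The sketch below derives such a list from a pairwise alignment; it assumes the two sequences are already aligned (for example, taken from the Clustal Omega output) and uses short toy sequences rather than real spike protein data.

```python
def list_substitutions(ref_aln: str, query_aln: str) -> list[str]:
    """Compare two aligned protein sequences and return labels such as 'L5P'.

    Positions are 1-based and counted along the ungapped reference.
    """
    if len(ref_aln) != len(query_aln):
        raise ValueError("aligned sequences must have equal length")
    substitutions, ref_pos = [], 0
    for ref_res, query_res in zip(ref_aln, query_aln):
        if ref_res == "-":
            continue  # insertion relative to the reference: no reference coordinate
        ref_pos += 1
        # Skip deletions and unknown residues; report only true substitutions.
        if query_res not in ("-", "X") and query_res != ref_res:
            substitutions.append(f"{ref_res}{ref_pos}{query_res}")
    return substitutions


# Toy aligned sequences; the query has one insertion and one substitution.
print(list_substitutions("MFV-FLV", "MFVAFPV"))
```

The resulting labels can then be looked up against residue numbers in the structure file, keeping in mind that PDB numbering does not always match reference-sequence numbering.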

The ecosystem connecting public health laboratories to pharmaceutical R&D is fundamentally powered by the rigorous application of FAIR data principles to pathogen genomics. Standardized wet-lab protocols, robust bioinformatics pipelines, and clear definitions of key reagents and performance metrics create a reliable, high-velocity pipeline. This seamless flow of actionable genomic intelligence is essential for preparing and responding to current and future pandemic threats through accelerated therapeutic and vaccine development.

In the pursuit of FAIR (Findable, Accessible, Interoperable, and Reusable) data principles for pathogen genomics, the adoption of consistent data standards and ontologies is paramount. These frameworks ensure that genomic data generated globally can be integrated, compared, and analyzed effectively, accelerating outbreak response and therapeutic development. This guide provides a technical examination of three pivotal schemas in this domain.

The International Nucleotide Sequence Database Collaboration (INSDC)

The INSDC is a long-standing, foundational partnership between DDBJ, EMBL-EBI, and NCBI. It establishes the universal standard for the archiving and sharing of nucleotide sequence data and associated metadata.

- Core Principle: A data-sharing pact where once submitted, data is synchronously exchanged and maintained across the three nodes.

- Primary Standards: The INSDC Sequence Data Model and its accompanying Feature Table Definition are the core technical specifications. Key metadata is structured using controlled vocabularies.

- FAIR Alignment: It is the bedrock for Findable (global accession numbers like SRR001234) and Accessible data. Interoperability is enforced through its rigid, community-agreed data model.

Key INSDC Submission Workflow and Standards

The process of submitting data to INSDC involves a structured pathway to ensure data integrity and compliance.

Diagram Title: INSDC Data Submission and Standardization Pathway

Table: Core INSDC Components & Quantitative Scope (2024)

| Component | Primary Function | Key Standards/Formats | Example Accession Prefix | Approx. Data Volume (Public) |

|---|---|---|---|---|

| Sequence Read Archive (SRA) | Stores raw sequencing reads & alignment data. | SRA Metadata XML, FASTQ, BAM, CRAM. | SRR, DRR, ERR | >50 Petabases |

| BioSample | Describes the biological source of a specimen. | BioSample Attributes (e.g., strain, host, geo. location). | SAMN, SAME | >20 million records |

| GenBank/ENA/DDBJ | Archives assembled & annotated nucleotide sequences. | FASTA, INSDC Feature Table, GenBank Flat File. | LC, LT, CP | >3 billion records |
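When harvesting identifiers from papers or spreadsheets, it is often useful to route each accession to the right archive. The sketch below classifies accessions by the prefixes listed in the table. The regular expressions are approximations introduced for illustration and do not cover every accession format the INSDC partners issue.

```python
import re

# Approximate patterns for the prefixes in the table above (illustrative only).
ACCESSION_PATTERNS = [
    ("SRA run", re.compile(r"^[SED]RR\d+$")),
    ("BioSample", re.compile(r"^SAM(N|E[A-Z]?)\d+$")),
    ("nucleotide record", re.compile(r"^[A-Z]{1,2}\d{5,8}(\.\d+)?$")),
]


def classify_accession(accession: str) -> str:
    """Return a coarse record type for an accession string, or 'unknown'."""
    token = accession.strip().upper()
    for label, pattern in ACCESSION_PATTERNS:
        if pattern.match(token):
            return label
    return "unknown"


for acc in ["SRR001234", "SAMN40567890", "LC789012", "MN908947.3", "not-an-id"]:
    print(acc, "->", classify_accession(acc))
```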

Experimental Protocol: Submitting a Viral Genome to INSDC

Preparation:

- Assemble the viral genome from sequencing reads into a consensus sequence (FASTA format).

- Prepare annotation in the INSDC Feature Table format, marking features like `CDS`, `gene`, and `mat_peptide`.

- Collect metadata: host, collection date/location, isolate name, sequencing platform.

BioSample Registration:

- Log into the NCBI, ENA, or DDBJ submission portal.

- Create a BioSample record using the `Pathogen.cl.1` or `Virus.1` package, populating mandatory attributes.

- Record the resulting BioSample accession (e.g., SAMN40567890).

Sequence & Annotation Submission:

- Use the BankIt (web) or tbl2asn (command-line) tool; note that NCBI has since replaced tbl2asn with table2asn.

- Input the FASTA file, Feature Table file, and a simple metadata template (`.sbt` file).

- Link the BioSample accession. Validate files and submit.

SRA Submission (if raw reads are included):

- Create an SRA metadata file describing the experiment, linking to the BioSample.

- Upload FASTQ/BAM files via FTP or Aspera.

- Submit metadata; the system links files to the metadata record.

Completion: The database processes the submission, issues a nucleotide accession (e.g., LC789012), and propagates data across all INSDC partners.
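The annotation file from the preparation step uses a tab-delimited, five-column feature table layout: a `>Feature` header line, then one line per feature interval, followed by qualifier lines indented by three tabs. The sketch below writes that layout from a list of dictionaries. The sequence ID, coordinates, and gene name are placeholders, and the output should still be checked with the submission tool's own validator.

```python
def feature_table(seq_id: str, features) -> str:
    """Render features in the tab-delimited five-column feature table layout."""
    lines = [f">Feature {seq_id}"]
    for feat in features:
        # Columns 1-3: start, stop, feature key.
        lines.append(f"{feat['start']}\t{feat['end']}\t{feat['key']}")
        # Columns 4-5 (after three empty columns): qualifier key and value.
        for qualifier, value in feat.get("qualifiers", {}).items():
            lines.append(f"\t\t\t{qualifier}\t{value}")
    return "\n".join(lines) + "\n"


# Placeholder annotation for a hypothetical isolate.
features = [
    {"start": 1, "end": 300, "key": "gene", "qualifiers": {"gene": "geneA"}},
    {"start": 1, "end": 300, "key": "CDS",
     "qualifiers": {"product": "hypothetical protein"}},
]
table_text = feature_table("isolate_001", features)
print(table_text)
```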

GISAID

Founded initially for influenza data, GISAID (Global Initiative on Sharing All Influenza Data) pioneered a sharing mechanism that balances open data access with recognition of data producers, which proved critical during the COVID-19 pandemic.

- Core Principle: Acknowledgment-first data sharing via its EpiPledge framework. Users agree to terms including collaboration offers and citation of originating labs.

- Primary Standards: The EpiCoV database schema for SARS-CoV-2 and other pathogens. It incorporates specific fields essential for public health tracking.

- FAIR Alignment: Enhances Accessibility by providing a trusted platform during outbreaks. Reusability is ensured through clear terms of use and rich, standardized metadata.

Table: Comparison of INSDC and GISAID Schemas

| Feature | INSDC (Generalist) | GISAID (Public Health Focus) |

|---|---|---|

| Governance Model | Open, unconditional data release post-submission. | Access granted after agreeing to EpiPledge terms. |

| Core Metadata Schema | Generic (BioSample, SRA). | Public health-specific (EpiCoV fields: patient age, hospitalization status, vaccination). |

| Data Identity | Globally unique, stable accession numbers. | Unique EpiCoV Isolate ID (e.g., hCoV-19/USA/CA-CDPH-100/2024). |

| Primary Use Case | Broad biological discovery, archiving. | Real-time epidemic tracking, phylodynamics, vaccine strain selection. |

Public Health-Specific Schemas & Ontologies

Beyond overarching platforms, specialized ontologies provide the semantic glue for true interoperability under FAIR principles.

- Public Health Ontology (PHO): Models concepts like disease outbreak, surveillance, and intervention.

- Infectious Disease Ontology (IDO) Suite: A set of interoperable ontologies for infectious diseases. IDO-Core is extended for specific pathogens (e.g., IDO-COVID-19).

- Schema.org VOC Vocabulary: A community-developed vocabulary for SARS-CoV-2 variants, defining terms like `SARS-CoV-2DeltaVariant` with precise logical definitions, enabling precise querying across databases.

Diagram Title: Layered Data Standardization for FAIR Pathogen Data

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials for Pathogen Genomics & Data Submission

| Item | Function | Example Product/Kit |

|---|---|---|

| Nucleic Acid Extraction Kit | Isolates viral RNA/DNA from clinical/swab samples. | QIAamp Viral RNA Mini Kit, MagMAX Viral/Pathogen Kit. |

| Reverse Transcription & Amplification Mix | Converts RNA to cDNA and amplifies target regions (e.g., for tiling amplicon schemes). | SuperScript IV One-Step RT-PCR, ARTIC Network primer pools. |

| High-Throughput Sequencing Library Prep Kit | Prepares amplified DNA for sequencing on platforms like Illumina. | Illumina DNA Prep, Nextera XT. |

| Long-Read Sequencing Kit | Prepares libraries for platforms like Oxford Nanopore or PacBio for genome completion. | Oxford Nanopore Ligation Sequencing Kit. |

| Bioinformatics Pipeline Software | For consensus genome assembly, variant calling, and phylogenetic analysis. | iVar, BCFtools, Nextclade, UShER. |

| Metadata Curation Tool | Assists in formatting sample metadata to INSDC or GISAID specifications. | GISAID Metadata Template, ENA metadata validator. |

The synergistic application of INSDC (for universal archiving), GISAID (for rapid, tracked public health sharing), and public health ontologies (for semantic interoperability) creates a robust infrastructure for FAIR pathogen genomic data. For researchers and drug developers, selecting the appropriate schema depends on the use case: INSDC for broad discovery and permanent archiving, and GISAID for actionable, real-time public health intelligence combined with structured recognition. Adherence to these standards is not merely administrative; it is the foundation for collaborative, rapid-response science in an era of emerging pathogens.

A Step-by-Step Guide to Making Your Pathogen Genomic Data FAIR

In the realm of pathogen genomics research, data generation has outpaced the development of systems to manage, share, and reuse it. The FAIR principles (Findable, Accessible, Interoperable, and Reusable) provide a framework to address this gap. This guide focuses on the critical first step: metadata curation. Within the FAIR framework, comprehensive metadata is the cornerstone of findability and the provision of essential context, enabling data to become a true asset for scientists and drug development professionals in combating infectious diseases.

The Role of Metadata in FAIR Compliance

Metadata, often described as "data about data," serves multiple essential functions. It acts as a discovery mechanism, allowing datasets to be found by both humans and computational agents via rich, standardized descriptions. It provides context and provenance, detailing the experimental conditions, sequencing parameters, and analytical methods, which is vital for reproducibility and accurate interpretation. Finally, it enables interoperability and integration by using controlled vocabularies and ontologies, allowing disparate datasets from different labs or consortia to be combined for large-scale, powerful analyses, such as tracking pathogen evolution or identifying drug resistance markers.

Essential Metadata Fields: A Tiered Framework

A tiered approach to metadata ensures essential information is captured without being overwhelmingly complex. The following table categorizes core fields for a pathogen genomics sequencing experiment.

Table 1: Essential Metadata Fields for Pathogen Genomics Sequencing Data

| Field Category | Field Name | Description | Example/Controlled Vocabulary | Importance for FAIR |

|---|---|---|---|---|

| Core Identifier | Persistent Unique ID | A globally unique, persistent identifier for the dataset. | DOI, Accession Number (e.g., ENA, SRA, GISAID ID) | Findable, Reusable |

| Core Identifier | Sample ID | A unique identifier for the biological specimen. | Lab-specific ID, Biosample accession (e.g., SAMN...) | Findable |

| Biological Context | Pathogen Species | The taxonomic name of the pathogen. | Mycobacterium tuberculosis, SARS-CoV-2 | Findable, Interoperable |

| Biological Context | Host Species | The taxonomic name of the host organism. | Homo sapiens, Mus musculus | Interoperable, Reusable |

| Biological Context | Collection Date | Date the specimen was collected. | YYYY-MM-DD | Reusable |

| Biological Context | Geographic Location | Location of specimen collection (at least country). | Country, Region (e.g., USA: New York) | Reusable |

| Experimental Design | Sample Type | Type of biological material sampled. | Nasopharyngeal swab, Blood, Bacterial isolate | Reusable |

| Experimental Design | Sequencing Platform | Instrument used for sequencing. | Illumina NovaSeq 6000, Oxford Nanopore GridION | Reusable |

| Experimental Design | Library Preparation Kit | Kit used for library construction. | Illumina DNA Prep, Nextera XT | Reusable |

| Experimental Design | Target Enrichment Method | Method for enriching pathogen genetic material. | Amplicon-based (ARTIC), Hybrid capture, Metagenomic | Reusable |

| Data Provenance | Data Generator / Submitter | Person or institute responsible for data generation. | PI Name, Institute Name | Findable, Accessible |

| Data Provenance | Analysis Pipeline & Version | Software and version used for primary analysis (e.g., assembly, variant calling). | nf-core/viralrecon v.2.5, BWA-MEM v.0.7.17 | Reusable |
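The tiered fields in Table 1 can be checked automatically before any repository is involved. The following is a minimal sketch, assuming a hypothetical in-house data dictionary (`DATA_DICTIONARY`) and snake_case field names that are not prescribed by any repository; it flags missing mandatory fields, vocabulary mismatches, and malformed collection dates.

```python
import re
from datetime import date

# Hypothetical data dictionary: field name -> (mandatory?, allowed values or None).
# Field names and the vocabulary are illustrative, not a repository standard.
DATA_DICTIONARY = {
    "sample_id": (True, None),
    "pathogen_species": (True, None),
    "host_species": (True, None),
    "collection_date": (True, None),
    "geographic_location": (True, None),
    "sample_type": (True, {"Nasopharyngeal swab", "Blood", "Bacterial isolate"}),
    "sequencing_platform": (False, None),
}


def validate_record(record):
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    for field, (mandatory, allowed) in DATA_DICTIONARY.items():
        value = record.get(field, "").strip()
        if not value:
            if mandatory:
                problems.append(f"missing mandatory field: {field}")
            continue
        if allowed is not None and value not in allowed:
            problems.append(f"{field}: '{value}' is not in the controlled vocabulary")

    # Collection dates must be real calendar dates written as YYYY-MM-DD.
    raw_date = record.get("collection_date", "").strip()
    if raw_date:
        try:
            if not re.fullmatch(r"\d{4}-\d{2}-\d{2}", raw_date):
                raise ValueError(raw_date)
            date.fromisoformat(raw_date)
        except ValueError:
            problems.append("collection_date: expected YYYY-MM-DD")
    return problems
```

Running a check like this at data entry catches free-text values such as "nose swab" long before the repository's own validator would reject them.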

Detailed Protocol: Metadata Curation Workflow

This protocol outlines a standardized workflow for curating metadata for a pathogen whole-genome sequencing project prior to public repository submission.

Materials and Reagents

Table 2: Research Reagent Solutions & Essential Materials for Metadata Curation

| Item | Function |

|---|---|

| Metadata Spreadsheet Template | A pre-formatted table (e.g., .tsv, .xlsx) with required field columns and vocabulary guidelines to ensure consistency across samples. |

| Controlled Vocabulary/Ontology Source | Reference resources (e.g., NCBI Taxonomy, EDAM Ontology, Environment Ontology (ENVO)) to provide standardized terms for fields like species or sample type. |

| Data Dictionary | A document defining each metadata field, its format, allowed values, and whether it is mandatory or optional. |

| Repository Submission Portal | The web interface of the chosen public data repository (e.g., ENA, SRA, GISAID). |

| Metadata Validation Tool | Repository-specific tools (e.g., ENA's Webin command line tool) to check for errors and compliance before final submission. |

Procedure

Project Planning & Template Setup:

- Before sample collection, select a target public repository and download its specific metadata template or data dictionary.

- Create an internal project spreadsheet that maps all repository-required fields. Add any lab-internal fields for quality control.

Data Entry at Point of Generation:

- Enter basic sample information (Sample ID, Collection Date, Location, Sample Type) immediately upon specimen receipt or processing. Do not rely on memory or paper notes.

- Use controlled vocabularies (e.g., select "Nasopharyngeal swab" from a dropdown list, not free text like "nose swab").

Experimental Process Annotation:

- Upon library preparation and sequencing, immediately log the detailed technical metadata: DNA/RNA extraction kit, library prep kit, sequencing platform, and instrument run ID.

- Record any deviations from the standard operating procedure.

Bioinformatics Provenance Linking:

- Upon primary data analysis, document the computational tools, their versions, and key parameters (e.g., reference genome accession, minimum coverage depth) used for read alignment, variant calling, or genome assembly.

- Link the final data files (FASTQ, VCF, FASTA) to their respective sample IDs and analysis run.

Validation and Submission:

- Use the repository's validation tool to check the completed metadata sheet for formatting errors, missing mandatory fields, or vocabulary mismatches.

- Correct any errors identified.

- Submit the validated metadata along with the raw or processed data files to the repository via its portal. The metadata and data will be assigned a persistent accession number.

Visualizing the Workflow and Relationships

Diagram 1: Pathogen Genomics Metadata Curation Workflow

Diagram 2: Metadata as the Engine for FAIR Principles

Within the broader framework of FAIR (Findable, Accessible, Interoperable, Reusable) data principles for pathogen genomics research, the implementation of robust systems for Persistent Identifiers (PIDs) and dedicated repositories is a critical step. This step ensures that complex genomic datasets, essential for tracking outbreaks, understanding evolution, and developing countermeasures, remain globally accessible and citable for the long term. This guide provides a technical overview of current PID systems, repository architectures, and protocols for data deposition, tailored for researchers, scientists, and drug development professionals in the field.

Persistent Identifiers (PIDs): Core Infrastructure

PIDs are long-lasting references to digital objects, independent of their physical location. They are foundational for the Findable and Accessible FAIR principles.

Key PID Systems and Their Applications

The table below summarizes the primary PID systems relevant to pathogen genomics data and related research objects.

Table 1: Comparison of Primary Persistent Identifier Systems

| System | Identifier Prefix | Managed By | Typical Granularity | Key Application in Pathogen Genomics |

|---|---|---|---|---|

| Digital Object Identifier (DOI) | 10.xxxx | Registration Agencies (e.g., DataCite, Crossref) | Dataset, Sample, Publication, Software | Citing a complete genomic surveillance dataset deposited in a repository. |

| Archival Resource Key (ARK) | ark:/xxxx | Various institutions (e.g., CDL, BnF) | Dataset, File, Physical Specimen | Identifying specific genome sequences within an institutional archive. |

| Persistent URL (PURL) | Custom domain | Various (e.g., OCLC) | Web resource, Ontology | Resolving to stable versions of controlled vocabularies (e.g., OBO Foundry ontologies). |

| Life Science Identifiers (LSID) | urn:lsid: | Not centrally managed | Database record, Taxon | Legacy system for identifying taxonomic classifications or specific gene records. |

| Sample and Specimen IDs | Various (e.g., ENA sample ID, BioSample) | INSDC, Biobanks | Physical/Digital Sample | Linking a sequenced genome to the originating clinical/environmental sample metadata. |
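The prefixes in the table are enough to recognise which scheme an identifier string belongs to. The sketch below is an illustration only: the regular expressions are deliberately loose assumptions, they identify the scheme but do not prove that the identifier resolves, and the BioSample pattern covers only the common SAMN/SAME/SAMD forms.

```python
import re

# Loose, illustrative patterns: they recognise a scheme, not a valid record.
PID_PATTERNS = [
    ("DOI", re.compile(r"^(?:https?://(?:dx\.)?doi\.org/|doi:)?(10\.\d{4,9}/\S+)$", re.I)),
    ("ARK", re.compile(r"^(ark:/?\d{5,}/\S+)$", re.I)),
    ("LSID", re.compile(r"^(urn:lsid:[^:\s]+:[^:\s]+:[^:\s]+(?::\S+)?)$", re.I)),
    ("BioSample", re.compile(r"^(SAM[NED][A-Z]?\d+)$")),
]


def classify_pid(identifier):
    """Return (scheme, normalised identifier), or ("unknown", text) if no pattern matches."""
    text = identifier.strip()
    for scheme, pattern in PID_PATTERNS:
        match = pattern.match(text)
        if match:
            return scheme, match.group(1)
    return "unknown", text
```

A helper of this kind is useful when harmonising legacy spreadsheets in which DOIs, accessions, and local IDs share a single column.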

Protocol: Minting a DOI for a Pathogen Genomes Dataset

Objective: To assign a citable, persistent DOI to a curated SARS-CoV-2 variant surveillance dataset via DataCite.

Required Materials:

- DataCite Member Account: Provides credentials for the DOI minting service.

- Metadata Schema: Prepared metadata following DataCite schema (v4.4).

- Stable Repository URL: The base URL where the dataset is permanently hosted.

- ORCID iD: For contributor identification.

Methodology:

- Prepare Dataset & Metadata: Finalize and validate the dataset (e.g., FASTQ files, consensus genomes, metadata TSV). Compile required metadata: Creators (with ORCIDs), Title, Publisher (repository name), Publication Year, Resource Type ("Dataset"), and a detailed description.

- Upload to Repository: Deposit the dataset in a FAIR-aligned repository (e.g., ENA, Zenodo, institutional repository) that provides integrated DOI minting or allows integration with DataCite.

- Submit Metadata: Via the repository's interface or the DataCite REST API, POST the XML or JSON-formatted metadata. Critical fields include:

  - `<identifier identifierType="DOI">` (a placeholder suffix is provided by the member).

  - `<url>` containing the persistent landing page URL for the dataset.

  - `<relatedIdentifier>` to link to associated publications or underlying raw data accessions.

- Reserve/Mint DOI: The service will validate the metadata. Upon success, it will "reserve" the DOI, creating the metadata record. Once the dataset is publicly available, the DOI state is changed to "findable," activating the resolution link.

- Validation: Test the new DOI by resolving it (`https://doi.org/10.xxxx/xxxxx`). It should redirect to the dataset landing page.
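The metadata submission step can be sketched as a JSON document assembled in code before it is sent anywhere. The example below assumes the JSON:API-style body used by the DataCite REST API (`data.type = "dois"` with an `attributes` object); the DOI, URL, and creator values are placeholders, and the exact required attributes should be confirmed against the current DataCite documentation before use. No network call is made.

```python
import json


def build_datacite_payload(doi, url, title, creators, publisher, year, publish=False):
    """Assemble a DOI record body; `creators` is a list of (name, ORCID iD) pairs."""
    attributes = {
        "doi": doi,
        "url": url,  # persistent landing page of the dataset
        "titles": [{"title": title}],
        "creators": [
            {
                "name": name,
                "nameIdentifiers": [
                    {
                        "nameIdentifier": f"https://orcid.org/{orcid}",
                        "nameIdentifierScheme": "ORCID",
                    }
                ],
            }
            for name, orcid in creators
        ],
        "publisher": publisher,
        "publicationYear": year,
        "types": {"resourceTypeGeneral": "Dataset"},
    }
    # Without an event the record stays a draft (the "reserved" state in the text);
    # the "publish" event requests the findable state.
    if publish:
        attributes["event"] = "publish"
    return {"data": {"type": "dois", "attributes": attributes}}


if __name__ == "__main__":
    payload = build_datacite_payload(
        doi="10.5072/example-sarscov2-2024",  # placeholder DOI for illustration
        url="https://example.org/datasets/sarscov2-2024",  # hypothetical landing page
        title="SARS-CoV-2 variant surveillance dataset",
        creators=[("Doe, Jane", "0000-0002-1825-0097")],  # example ORCID iD
        publisher="Example Repository",
        year=2024,
    )
    print(json.dumps(payload, indent=2))
```

Keeping the draft and publish states as an explicit flag mirrors the two-stage reserve-then-activate flow described in the protocol.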

Title: Workflow for Minting a DataCite DOI

Repositories for Pathogen Genomic Data

Repositories provide the infrastructure for storage, management, preservation, and access, enabling Accessibility and Reusability.

Repository Landscape and Selection Criteria

Table 2: Key Repository Characteristics for Pathogen Genomics

| Repository Name | Primary Scope | PID Issued | Data Model Compliance | Key Feature for FAIRness |

|---|---|---|---|---|

| ENA / SRA / DDBJ (INSDC) | Raw reads, assemblies, annotated sequences | Accession Numbers (stable, not PIDs per se) | INSDC, MIxS | Global partnership, mandatory metadata, automated processing pipelines. |

| GISAID | Pathogen (esp. influenza, coronavirus) genomes | Accession ID | GISAID-specific | Promotes rapid sharing during outbreaks with controlled access and attribution. |

| Zenodo (CERN) | General-purpose (datasets, software, reports) | DOI | DataCite, domain-specific via communities | Links to GitHub, provides versioning, long-term EU-funded preservation. |

| NCBI GenBank | Annotated sequence collection | Accession.Version | INSDC | Integrated with PubMed, BioSample, and BioProject for rich context. |

| BV-BRC | Bacterial & viral pathogens, with analysis tools | Accession ID, DOIs for "workspaces" | Submissions API, standardized metadata | Combines repository with bioinformatics analysis suite and private workspaces. |

Protocol: Depositing Genome Assemblies to the European Nucleotide Archive (ENA)

Objective: To submit consensus genome assemblies (FASTA) and associated contextual metadata for a batch of Mycobacterium tuberculosis isolates.

Required Materials:

- Webin Account: Registered credentials for ENA's submission portal.

- Annotated FASTA Files: One per isolate, with sequences ≥200bp.

- Metadata Spreadsheets: Prepared offline using ENA template for samples and assays.

- Checklist ID: Identifier for the required metadata fields (e.g., ERC000033 for "Pathogen: pathogen surveillance").

- Command-Line Validation Tools (optional): `webin-cli` for bulk validation.

Methodology:

- Metadata Preparation:

  - Sample Metadata: Create a TSV with columns for `sample_alias`, `tax_id` (1773), `scientific_name`, `collection_date`, `geographic_location`, `host_health_state`, etc., as per the chosen checklist.

  - Assay/Sequence Metadata: Create a TSV linking each FASTA file to a sample alias, specifying `instrument_model`, `library_layout` (SINGLE/PAIRED), etc.

- Submission Portal Login: Access the ENA Webin submission interface.

- Create Project (BioProject): If novel, register a new BioProject describing the overarching study goals.

- Register Samples: Upload the sample metadata TSV. The system validates and returns stable ENA sample accession numbers (e.g., ERSxxxxxxx).

- Register Sequences: In the "Genome Assembly" submission type, upload the FASTA files and the assay metadata TSV, linking each file to the newly minted sample accessions.

- Validation and Confirmation: ENA performs automated validation (sequence format, taxonomy). Upon passing, the system assigns accession numbers for the submission (e.g., ERZxxxxxxx for the submitted assembly analysis, GCA_xxxx for the full assembly). The records become public according to the specified release date.

- Post-Submission: The accessions should be recorded in lab databases and cited in manuscripts.
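The sample metadata step above can be scripted so that every batch uses identical columns. This is a minimal sketch that writes the columns named in the protocol to a tab-separated string; the real ENA template may require additional header rows and checklist-specific field names, so the column list here is an assumption to be reconciled with the downloaded template.

```python
import csv
import io

# Columns taken from the protocol text; the official template may name them differently.
COLUMNS = [
    "sample_alias",
    "tax_id",
    "scientific_name",
    "collection_date",
    "geographic_location",
    "host_health_state",
]


def samples_to_tsv(samples):
    """Serialise sample dictionaries to TSV, refusing rows with empty fields."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, delimiter="\t", lineterminator="\n")
    writer.writeheader()
    for sample in samples:
        missing = [column for column in COLUMNS if not sample.get(column)]
        if missing:
            raise ValueError(f"{sample.get('sample_alias', '?')}: missing {missing}")
        writer.writerow({column: sample[column] for column in COLUMNS})
    return buffer.getvalue()


if __name__ == "__main__":
    print(samples_to_tsv([{
        "sample_alias": "TB-001",  # hypothetical lab alias
        "tax_id": "1773",
        "scientific_name": "Mycobacterium tuberculosis",
        "collection_date": "2024-01-15",
        "geographic_location": "South Africa",
        "host_health_state": "diseased",
    }]))
```

Failing early on an incomplete row is a deliberate choice: a partially filled sample is harder to correct after accessions have been issued.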

Title: ENA Genome Assembly Submission Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for PID and Repository Management

| Item / Solution | Provider / Example | Primary Function in PID/Repository Context |

|---|---|---|

| Metadata Schema Crosswalk Tool | FAIRsharing.org, RDA DSA WG tools | Maps local lab metadata to standardized repository schemas (e.g., DataCite to INSDC), ensuring interoperability. |

| Command-Line Submission Client | ENA webin-cli, NCBI prefetch/fasterq-dump | Automates and scripts bulk uploads/downloads of datasets to/from major repositories, enabling reproducibility. |

| ORCID iD | orcid.org | A persistent identifier for researchers, crucial for unambiguous attribution in dataset and publication metadata. |

| Data Repository Gateway | ELIXIR's FAIRsharing, re3data.org | Registry to discover and select an appropriate, discipline-specific repository based on policies and capabilities. |

| PID Graph Resolver | FREYA PID Graph, DataCite Commons | Service that follows PIDs to discover all related scholarly objects (e.g., which papers cite a given dataset). |

| Curation & Validation Software | CLC Genomics Workbench, CyVerse DE, BV-BRC platform | Validates data integrity (e.g., FASTQ quality, assembly completeness) prior to submission, reducing errors. |

| Institutional Repository (IR) Platform | DSpace, Figshare for Institutions, Dataverse | Provides a local, managed repository for data not suited for domain-specific databases, often with DOI minting. |

Integration with the FAIR Data Cycle

PIDs and repositories are not endpoints but connectors within the FAIR data cycle. A dataset with a DOI (Findable) in a trusted repository (Accessible) that uses standardized metadata and vocabularies (Interoperable) and provides clear licensing and provenance (Reusable) becomes a true asset for global pathogen genomics research. This enables meta-analyses, data integration across studies, and machine-actionability, accelerating the response to emerging infectious diseases and the development of targeted therapeutics and vaccines.

Within the FAIR (Findable, Accessible, Interoperable, Reusable) data principles framework for pathogen genomics, Interoperability is paramount. It demands that data and metadata integrate with other datasets and workflows. This technical guide details the core technical pillars of interoperability: standardized file formats, controlled nomenclatures, and Linked Data practices, enabling collaborative analysis and accelerating therapeutic development.

Standardized Genomic Data File Formats

Utilizing community-endorsed, open formats is the first step toward technical interoperability. The table below summarizes key formats.

Table 1: Core File Formats for Pathogen Genomics Interoperability

| Format | Primary Use Case | Key Features for Interoperability | Common Tools |

|---|---|---|---|

| FASTQ | Raw sequencing reads | Plain text, universally accepted; quality scores stored per base. | BWA, Bowtie2, FastQC |

| FASTA | Nucleotide/amino acid sequences | Simple header & sequence structure; foundational for databases. | BLAST, samtools |

| SAM/BAM/CRAM | Aligned sequencing reads | BAM/CRAM are compressed binary; standardized alignment fields; CRAM offers reference-based compression. | SAMtools, GATK, IGV |

| VCF (gVCF) | Variant calls | Hierarchical, structured metadata header; fixed columns for genomic position, REF/ALT alleles; supports complex variants. | BCFtools, SnpEff, GATK |

| GFF3/GTF | Genome annotations | Tab-delimited with standardized column definitions for features (genes, CDS); defines relationships. | Apollo, genome browsers |

| NCBI SRA | Archival of raw data | NCBI-standardized binary format for massive sequencing data storage and retrieval. | SRA Toolkit, fastq-dump |
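The "quality scores stored per base" property of FASTQ is easiest to see by decoding a record. The following sketch assumes the common four-line record layout and Phred+33 quality encoding; it is not a replacement for a dedicated parser and does not handle multi-line sequences or compressed input.

```python
import io


def read_fastq(handle):
    """Yield (read_id, sequence, qualities) from a four-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return  # end of file
        sequence = handle.readline().rstrip("\n")
        plus = handle.readline().rstrip("\n")
        quality = handle.readline().rstrip("\n")
        if not header.startswith("@") or not plus.startswith("+"):
            raise ValueError(f"malformed record near {header!r}")
        if len(sequence) != len(quality):
            raise ValueError(f"sequence/quality length mismatch in {header!r}")
        # Phred+33: the ASCII code of each character minus 33 is the quality score.
        yield header[1:].split()[0], sequence, [ord(char) - 33 for char in quality]


def mean_quality(qualities):
    """Arithmetic mean of the per-base Phred scores of one read."""
    return sum(qualities) / len(qualities)


if __name__ == "__main__":
    demo = io.StringIO("@read1 sample=A\nACGT\n+\nIIII\n")
    for read_id, seq, quals in read_fastq(demo):
        print(read_id, seq, mean_quality(quals))
```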

Controlled Vocabularies and Nomenclature

Consistent naming of biological entities is critical. Adherence to public, curated ontologies ensures unambiguous data integration.

Table 2: Essential Ontologies for Pathogen Genomics Metadata

| Ontology (Acronym) | Scope | Example Terms/Use Case |

|---|---|---|

| NCBI Taxonomy | Organism names | Severe acute respiratory syndrome coronavirus 2 (TaxID: 2697049) |

| Sequence Ontology (SO) | Genomic features & variations | SO:0001583 (missense_variant), SO:0000673 (transcript) |

| Evidence & Conclusion Ontology (ECO) | Types of evidence | ECO:0000213 (nucleotide sequencing assay evidence) |

| Disease Ontology (DO) | Human diseases | DOID:2945 (severe acute respiratory syndrome) |

| BRENDA Tissue / Enzyme Ontology | Enzyme kinetics & tissues | BTO:0000089 (blood), EC:3.4.22.69 (SARS coronavirus main proteinase) |
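Compact identifiers such as those in the table become machine-resolvable once expanded to full IRIs. The sketch below assumes the OBO Foundry PURL convention (`http://purl.obolibrary.org/obo/PREFIX_LOCALID`) for the OBO-hosted ontologies listed above; EC numbers are not OBO terms and are intentionally rejected.

```python
OBO_BASE = "http://purl.obolibrary.org/obo/"
# Prefixes from the table that follow the OBO PURL pattern.
OBO_PREFIXES = {"SO", "ECO", "DOID", "BTO"}


def curie_to_iri(curie):
    """Expand a compact identifier such as 'SO:0000673' to its OBO PURL."""
    prefix, _, local_id = curie.partition(":")
    if prefix not in OBO_PREFIXES or not local_id.isdigit():
        raise ValueError(f"not a supported OBO identifier: {curie!r}")
    return f"{OBO_BASE}{prefix}_{local_id}"


def taxid_to_iri(tax_id):
    """Expand an NCBI Taxonomy ID to the OBO rendering of NCBI Taxonomy."""
    return f"{OBO_BASE}NCBITaxon_{int(tax_id)}"


if __name__ == "__main__":
    print(curie_to_iri("SO:0000673"))
    print(taxid_to_iri(2697049))
```

Storing the expanded IRI next to the human-readable label lets downstream tools join datasets on the term itself rather than on free text.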

Protocol 1: Annotating a Variant Call File (VCF) with Standard Ontologies

- Objective: Enhance a VCF file's interoperability by adding ontology-based annotations to its INFO field.

- Materials: VCF file, annotated reference database (e.g., dbSNP, Ensembl VEP), computing environment.

- Methodology:

  - Prepare Input: Ensure your VCF file is bgzip-compressed and indexed using `tabix`.

  - Tool Selection: Use Ensembl's VEP (Variant Effect Predictor) or SnpEff.

  - Command Execution (VEP Example): Run VEP on the indexed VCF with VCF output enabled so that consequence annotations are written to the INFO field.
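Whichever tool is chosen, the result is a VCF whose INFO column carries ontology terms declared in the header. The sketch below illustrates that effect in plain Python and is not how VEP or SnpEff work internally: the `SO` INFO key, the header description, and the caller-supplied variant-to-term mapping are assumptions made for the example.

```python
# Header line declaring the hypothetical INFO key used in this sketch.
SO_HEADER = (
    '##INFO=<ID=SO,Number=.,Type=String,'
    'Description="Sequence Ontology term ID for the predicted consequence">'
)


def annotate_vcf_lines(lines, so_terms):
    """Add SO=<term> to INFO for variants found in `so_terms`.

    `so_terms` maps (chrom, pos, ref, alt) to a Sequence Ontology ID.
    """
    header_added = False
    for line in lines:
        line = line.rstrip("\n")
        if line.startswith("#CHROM") and not header_added:
            yield SO_HEADER  # declare the new key just before the column header
            header_added = True
        if line.startswith("#"):
            yield line
            continue
        fields = line.split("\t")
        key = (fields[0], int(fields[1]), fields[3], fields[4])
        term = so_terms.get(key)
        if term:
            info = fields[7]
            fields[7] = f"SO={term}" if info in (".", "") else f"{info};SO={term}"
        yield "\t".join(fields)


if __name__ == "__main__":
    vcf = [
        "##fileformat=VCFv4.2",
        "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO",
        "NC_045512.2\t23403\t.\tA\tG\t.\tPASS\tDP=100",
    ]
    # SO:0001583 is missense_variant in the Sequence Ontology.
    terms = {("NC_045512.2", 23403, "A", "G"): "SO:0001583"}
    print("\n".join(annotate_vcf_lines(vcf, terms)))
```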

Implementing Linked Data Principles

Linked Data uses URIs and RDF to create a web of machine-actionable knowledge graphs, connecting disparate datasets.

Protocol 2: Publishing a Genome Annotation as Linked Data

- Objective: Convert a GFF3 annotation file into RDF triples using a dedicated ontology.

- Materials: GFF3 file, ontology (e.g., Sequence Ontology OWL file), RDF converter (e.g., SPARQL-Generate, custom script), triplestore (e.g., Virtuoso, GraphDB).

- Methodology:

  - Model Mapping: Define how GFF3 columns map to RDF predicates. For example:

    - `seqid` -> `rdfs:isDefinedBy`
    - `source` -> `dcterms:source`
    - `type` -> `rdf:type` (using an SO term URI)
    - `start`, `end` -> `so:has_start`, `so:has_end`

  - Conversion: Execute a SPARQL-Generate script to iterate over the GFF3 file and produce RDF/XML or Turtle format.

  - Publication: Load RDF triples into a triplestore with a persistent URL endpoint. Use content negotiation (e.g., `Accept: application/rdf+xml`) to serve both human and machine-readable views.
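The column-to-predicate mapping can be illustrated without a SPARQL-Generate installation. The sketch below swaps in a hand-written converter that emits N-Triples for a single GFF3 feature line; the `https://example.org/...` namespaces and the `has_start`/`has_end` predicates are hypothetical stand-ins for URIs a project would actually control, and literal escaping is omitted for brevity.

```python
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
RDFS_DEFINED_BY = "http://www.w3.org/2000/01/rdf-schema#isDefinedBy"
DCT_SOURCE = "http://purl.org/dc/terms/source"
XSD_INTEGER = "http://www.w3.org/2001/XMLSchema#integer"

# Hypothetical namespaces: replace with URIs your project actually controls.
FEATURE_BASE = "https://example.org/feature/"
SEQ_BASE = "https://example.org/sequence/"
LOCAL_VOCAB = "https://example.org/vocab#"


def gff3_line_to_ntriples(line, so_type_iris):
    """Convert one GFF3 feature line to N-Triples statements.

    `so_type_iris` maps the GFF3 type column (e.g. "gene") to an SO term IRI.
    """
    cols = line.rstrip("\n").split("\t")
    if len(cols) != 9:
        raise ValueError("GFF3 feature lines have nine tab-separated columns")
    seqid, source, ftype, start, end = cols[0], cols[1], cols[2], cols[3], cols[4]
    attrs = dict(pair.split("=", 1) for pair in cols[8].split(";") if "=" in pair)
    subject = f"<{FEATURE_BASE}{attrs['ID']}>"
    return [
        f"{subject} <{RDF_TYPE}> <{so_type_iris[ftype]}> .",
        f"{subject} <{RDFS_DEFINED_BY}> <{SEQ_BASE}{seqid}> .",
        f'{subject} <{DCT_SOURCE}> "{source}" .',
        f'{subject} <{LOCAL_VOCAB}has_start> "{int(start)}"^^<{XSD_INTEGER}> .',
        f'{subject} <{LOCAL_VOCAB}has_end> "{int(end)}"^^<{XSD_INTEGER}> .',
    ]


if __name__ == "__main__":
    gff_line = "NC_045512.2\tRefSeq\tgene\t21563\t25384\t.\t+\t.\tID=gene-S;Name=S"
    # SO:0000704 is the Sequence Ontology term for "gene".
    so_map = {"gene": "http://purl.obolibrary.org/obo/SO_0000704"}
    print("\n".join(gff3_line_to_ntriples(gff_line, so_map)))
```

N-Triples is used here because each statement is a self-contained line, which makes the output easy to inspect and to bulk-load into most triplestores.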

Visualization of Interoperability Workflow

Pathogen Genomics Data Interoperability Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents & Resources for Interoperable Pathogen Genomics

| Item | Function in Interoperability Context | Example/Provider |

|---|---|---|

| Reference Genome | Provides coordinate system for alignment and variant calling; must be versioned. | NCBI RefSeq (e.g., NC_045512.2 for SARS-CoV-2) |

| Curated Variant Database | Source of pre-annotated variants using standard nomenclatures for cross-study comparison. | COG-UK Mutation Explorer, GISAID, dbSNP |

| Ontology Lookup Service | Web API to search and resolve terms from controlled vocabularies. | EBI Ontology Lookup Service (OLS) |

| Metadata Template | Structured form (e.g., CEDAR, ISA-Tab) to capture sample and experiment metadata consistently. | GA4GH Metadata Standards, INSDC SRA checklist |

| Containerization Software | Ensures computational workflow reproducibility across environments. | Docker, Singularity |

| Workflow Language | Describes analysis steps in a portable, executable format. | Nextflow, WDL, CWL |

| Triplestore | Database optimized for storage and query of RDF graph data. | Apache Jena Fuseki, Ontotext GraphDB |

Within the FAIR (Findable, Accessible, Interoperable, Reusable) data framework for pathogen genomics, the "Reusable" principle hinges on the thoughtful curation of three pillars: licensing, provenance, and documentation. For researchers and drug development professionals, reusable data accelerates outbreak response, therapeutic discovery, and surveillance. This guide provides technical methodologies to ensure genomic data remains a persistent, trustworthy, and well-defined resource beyond its initial publication.

Licensing: Defining Permissions for Reuse

A clear license removes ambiguity about how data can be used, shared, and integrated, which is critical for collaborative science and commercial drug development.

Key License Types for Scientific Data

Table 1: Common Data Licenses for Pathogen Genomics

| License | Key Provisions | Best For | Limitations |

|---|---|---|---|

| CC0 (Public Domain Dedication) | Waives all copyright, allowing any use without attribution. | Maximizing data integration and reuse alongside unrestricted global archives (e.g., INSDC databases such as NCBI GenBank). GISAID, by contrast, applies its own access agreement. | No requirement for attribution; original producers may not receive credit. |

| CC BY (Attribution) | Allows any use if original work is credited. | Balancing reuse with academic credit. Mandated by many research funders. | Requires tracking of attribution chains. |

| Open Data Commons Open Database License (ODbL) | Allows sharing, creation, and adaptation if derivatives are shared alike and attribution is given. | Databases where derived data/products must remain open. | "Share-alike" clause can be restrictive for some commercial uses. |

| Custom Institutional License | Tailored terms, often addressing specific commercial use or material transfer. | Data with strong commercial potential or biosecurity concerns. | Creates friction and limits interoperability; requires legal review. |

Protocol: Implementing a License for a Genomic Dataset

- Determine Funders & Publisher Requirements: Check mandates from NIH, Wellcome Trust, journals (e.g., Nature, Science).

- Assess Data Sensitivity: For non-sensitive data, CC0 or CC BY is recommended. For data with potential commercialization paths, consider a non-restrictive custom license.

- Attach License Metadata: Embed the license in machine-readable form (e.g., a `license.md` file, `dc:rights` in Dublin Core, or the rights property (`rightsList`) in DataCite JSON).

- Document Human-Readable Summary: Include a `LICENSE` or `README` file in the repository root explaining permissions in plain language.

Provenance: Capturing the Data Lineage

Provenance (or lineage) documents the origin, custody, and transformations of data. It is essential for reproducibility, audit trails in drug development, and assessing data quality.

Experimental Protocol: Capturing Wet-Lab to Bioinformatics Provenance

A. Sample Processing & Sequencing:

- Input: Primary specimen (e.g., nasopharyngeal swab).

- Method: RNA extraction (e.g., Qiagen Viral RNA Mini Kit), library prep (e.g., Illumina COVIDSeq protocol), sequencing on NovaSeq 6000.

- Provenance Capture: Record batch numbers for kits, instrument IDs, sequencing run parameters (`.xml` output), and QC metrics (FastQC report).

B. Genomic Assembly & Analysis:

- Input: Raw FASTQ files.

- Method: Use a versioned pipeline (e.g., `nf-core/viralrecon v2.5`). Steps include adapter trimming (Trim Galore!), read alignment (BWA), variant calling (iVar), and consensus generation.

- Provenance Capture: Use a workflow manager (Nextflow, Snakemake) that automatically generates an `.html` or `.json` report with software versions, command lines, and parameters. Container images (Docker, Singularity) should be referenced by content hash rather than by a mutable tag.

C. Data Curation & Submission:

- Input: Consensus sequence (FASTA) and metadata.

- Method: Validation using `checkv`, metadata annotation with controlled vocabularies (e.g., GISAID submission fields).

- Provenance Capture: Final dataset should link to raw data (SRA accession), processing code (GitHub DOI), and all intermediate reports.
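Stages A to C can be tied together in a single machine-readable lineage record. The following is a minimal sketch, assuming an ad hoc JSON layout (it is not W3C PROV or any repository format) and hypothetical file names; it records each step's tool and version, checksums the outputs, and reports any input that no earlier step produced.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    """Checksum used to pin the exact content of a file."""
    return hashlib.sha256(data).hexdigest()


def make_step(name, tool, version, inputs, outputs, parameters=None):
    """Describe one processing step in the lineage."""
    return {
        "step": name,
        "tool": tool,
        "version": version,
        "inputs": list(inputs),
        "outputs": list(outputs),
        "parameters": parameters or {},
    }


def dangling_inputs(steps, raw_inputs):
    """Return inputs that are neither raw data nor produced by an earlier step."""
    available = set(raw_inputs)
    missing = []
    for step in steps:
        missing.extend(item for item in step["inputs"] if item not in available)
        available.update(step["outputs"])
    return missing


def build_record(sample_id, steps, checksums):
    """Bundle steps and checksums with a UTC timestamp."""
    return {
        "sample_id": sample_id,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "steps": steps,
        "checksums": checksums,
    }


if __name__ == "__main__":
    steps = [
        make_step("alignment", "BWA", "0.7.17", ["reads.fastq.gz"], ["aligned.bam"]),
        # Version left unspecified on purpose: record the one actually installed.
        make_step("consensus", "iVar", "unspecified", ["aligned.bam"], ["consensus.fasta"]),
    ]
    assert dangling_inputs(steps, ["reads.fastq.gz"]) == []
    record = build_record("LAB-001", steps, {"consensus.fasta": sha256_hex(b">s\nACGT\n")})
    print(json.dumps(record, indent=2))
```

Workflow managers produce richer reports than this, but a small explicit record is easy to archive next to the submitted files and to diff between runs.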

Diagram Title: Provenance Capture Workflow for Pathogen Genomics

Rich Documentation: Beyond Basic Metadata

Documentation bridges the semantic gap between data and user. It should answer what, why, how, and when for both humans and machines.

The Scientist's Toolkit: Essential Reagents for FAIR Documentation

Table 2: Key Research Reagent Solutions for Data Documentation

| Tool / Standard | Category | Function in Documentation |

|---|---|---|

| README file (markdown) | Human-Readable | Primary guide describing dataset purpose, structure, and usage examples. |

| Data Dictionary (CSV/JSON) | Machine-Actionable | Defines each variable, its format, allowed values, and ontological terms (e.g., "host_health_status": ["healthy", "asymptomatic", "symptomatic"]). |

| Minimum Information Standards (e.g., MIxS) | Reporting Standard | Ensures all required environmental, host, and sequencing metadata fields are populated. |

| EDAM Ontology | Terminology | Provides standardized terms for bioinformatics operations, data types, and formats. |

| JSON-LD with Schema.org | Semantic Markup | Embeds structured metadata (license, creator, temporal/geospatial coverage) in web pages for machine discovery. |

| CWL (Common Workflow Language) / RO-Crate | Workflow Packaging | Packages data, code, and workflow descriptions into a reusable, executable research object with clear dependencies. |

Protocol: Creating a Richly Documented Dataset Package

Structure Your Repository:

Generate Machine-Readable Metadata: Use the DataCite schema to create a `dataset_metadata.xml` file including: Identifier (DOI), Creator, Title, Publisher, PublicationYear, ResourceType, Subjects (from NCBI Taxon ID or MeSH), and Rights (License URL).

Link to Community Resources: In your README, explicitly link related datasets, preprints/publications (via DOI), and entries in pathogen-specific databases (e.g., GISAID accession EPI_ISL_xxxxx).
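Alongside the DataCite file, the "JSON-LD with Schema.org" row of Table 2 can be realised with a few lines of code. The sketch below builds a Schema.org `Dataset` description and wraps it in the script tag used for embedding in a landing page; all values (DOI, names, dates, country) are placeholders, and the chosen properties are a small subset that a project may wish to extend.

```python
import json


def dataset_jsonld(name, description, doi, license_url, creators, keywords,
                   temporal_coverage, country):
    """Build a Schema.org Dataset description as a plain dictionary."""
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "identifier": f"https://doi.org/{doi}",
        "license": license_url,
        "creator": [{"@type": "Person", "name": person} for person in creators],
        "keywords": list(keywords),
        "temporalCoverage": temporal_coverage,  # ISO 8601 interval
        "spatialCoverage": {"@type": "Place", "name": country},
    }


def as_script_tag(document):
    """Wrap the JSON-LD so it can be pasted into an HTML landing page."""
    body = json.dumps(document, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    doc = dataset_jsonld(
        name="SARS-CoV-2 consensus genomes, example surveillance batch",
        description="Consensus genomes and contextual metadata (illustrative record).",
        doi="10.5072/example-sarscov2-2024",  # placeholder DOI
        license_url="https://creativecommons.org/licenses/by/4.0/",
        creators=["Jane Doe"],
        keywords=["SARS-CoV-2", "NCBI:txid2697049"],
        temporal_coverage="2023-01-01/2023-12-31",
        country="United Kingdom",
    )
    print(as_script_tag(doc))
```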

Crafting reusable data is an active engineering process. By explicitly defining licenses, meticulously capturing provenance, and investing in rich multi-layered documentation, pathogen genomics researchers create a robust foundation for secondary analysis, meta-studies, and machine learning. This transforms static data archives into dynamic, trustworthy components of the global scientific infrastructure, directly supporting faster responses to emerging pathogens and more efficient therapeutic development.

The integration of FAIR (Findable, Accessible, Interoperable, Reusable) data principles is pivotal for advancing pathogen genomics research. This technical guide details the application of these principles to two critical domains: public health surveillance projects and clinical trial datasets. Within the broader thesis on FAIRification of genomic data, these use cases present unique challenges—surveillance requires real-time, global data sharing for outbreak response, while clinical trials demand rigorous privacy and standardization for regulatory approval. Implementing FAIR here bridges disparate data ecosystems, enabling meta-analyses, predictive modeling, and accelerated therapeutic development.

Current Landscape & Quantitative Analysis

Recent surveys and reports highlight the state of FAIR implementation in these fields. The following table summarizes key quantitative findings.

Table 1: Current State of FAIR Implementation in Genomic Health Data

| Metric | Surveillance Projects | Clinical Trial Datasets | Source / Study |

|---|---|---|---|

| Adherence to Unique, Persistent IDs | 65% (for viral isolates) | 45% (for biosamples) | NIH 2024 Survey of Public Data Repositories |

| Use of Standardized Metadata Schema | 78% (GSCID/INSDC) | 34% (BRIDG or CDISC SEND) | GA4GH 2023 Implementation Report |

| Average Time to Public Data Release | 14 days (range: 1-90) | 24 months post-trial completion | Analysis of 2022-2023 ENA/ClinVar submissions |

| Data Reuse Rate (annual downloads/citations) | High (Avg: 1,200) | Low (Avg: 85) | PubMed Central & Figshare 2024 Metrics |

| Full F-UCR (FAIR-Use Compliance Rate) | 41% | 18% | FAIRshake 2024 Assessment Toolkit Scores |

Methodological Protocols for FAIRification

Protocol for FAIRification of Surveillance Sequence Data

This protocol outlines the steps for processing raw pathogen genomic data from sequencers to a FAIR-compliant public repository.

Title: FAIR-Centric Workflow for Pathogen Surveillance Genomics

Objective: To generate, annotate, and deposit surveillance sequence data in a findable, accessible, interoperable, and reusable manner.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Sample ID Harmonization: Assign a globally unique ID (e.g., LSID, DOI) to each isolate, linked to local lab ID via a persistent identifier service.

- Standardized Metadata Capture: Using the Genomic Standards Consortium (GSC) MIxS minimum information checklists, populate fields for host, geo-location (ISO 3166), collection date, and sequencing protocol. A REDCap or OHDSI form ensures structured capture.

- Bioinformatic Processing: Process reads through an nf-core/viralrecon Nextflow pipeline. Key outputs: consensus genome (FASTA), variant calls (VCF), and QC report.

- Data Annotation: Annotate sequences with controlled vocabularies (e.g., NCBI Taxonomy ID, Ontology for Biomedical Investigations (OBI) terms for instruments).

- Repository Deposit: Submit FASTA files, VCFs, and full metadata to both INSDC (ENA/SRA) and a specialized archive like GISAID. The metadata is converted to the repository's required format (e.g., ENA XML).

- Linking and Provenance: The workflow run is registered with a Research Object Crate (RO-Crate), capturing all scripts, versions, and input parameters, which is itself assigned a DOI from Zenodo.

Validation: Use the FAIR Data Stewardship Wizard to assess the final dataset's compliance against FAIR metrics.
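The RO-Crate mentioned in the Linking and Provenance step is, at its core, a single `ro-crate-metadata.json` file describing the packaged files. The sketch below assumes the RO-Crate 1.1 layout (a metadata descriptor plus a root `Dataset` entity in a flat `@graph`); file paths, names, and the license are placeholders, and dedicated tooling may be preferable for real crates.

```python
import json


def ro_crate_metadata(name, description, license_url, date_published, files):
    """Build a minimal RO-Crate 1.1 metadata document.

    `files` is a list of (relative path, human-readable label) pairs.
    """
    graph = [
        {
            "@id": "ro-crate-metadata.json",
            "@type": "CreativeWork",
            "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
            "about": {"@id": "./"},
        },
        {
            "@id": "./",
            "@type": "Dataset",
            "name": name,
            "description": description,
            "datePublished": date_published,
            "license": {"@id": license_url},
            "hasPart": [{"@id": path} for path, _ in files],
        },
    ]
    # One File entity per packaged artefact (scripts, reports, outputs).
    graph += [{"@id": path, "@type": "File", "name": label} for path, label in files]
    return {"@context": "https://w3id.org/ro/crate/1.1/context", "@graph": graph}


if __name__ == "__main__":
    crate = ro_crate_metadata(
        name="Surveillance run, example batch",
        description="Workflow scripts, parameters, and outputs for one sequencing run.",
        license_url="https://creativecommons.org/licenses/by/4.0/",
        date_published="2024-03-01",
        files=[
            ("params.json", "Pipeline input parameters"),  # hypothetical file names
            ("consensus.fasta", "Consensus genomes"),
        ],
    )
    print(json.dumps(crate, indent=2))
```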

Protocol for Clinical Trial Genomic Data Management

This protocol ensures clinical trial genomic data meets FAIR principles while complying with privacy (GDPR, HIPAA) and clinical standards (ICH-GCP).

Title: Implementation of FAIR Principles in Clinical Trial Genomics

Objective: To structure clinical genomic trial data for regulatory submission, internal reuse, and controlled external sharing.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Pre-Trial Schema Mapping: Align all planned genomic variables (e.g., SNP arrays, WES, RNA-Seq) with the CDISC SEND and BRIDG model standards. Define an SDTM-like structure for derived genomic biomarkers.

- De-identification & Consent Management: Genomic data is pseudonymized by a trusted third party. Consent status for data sharing is linked to each participant's ID using the ACED-ID model for granular consent.

- Standardized Biosample Metadata: Annotate all biosamples using the BCR (Biospecimen Core Resource) format, integrating with CDISC SDTM for clinical data linkage.

- Secure, Accessible Storage: Processed genomic data (e.g., VCFs) are stored in a GA4GH Passport-enabled repository (e.g., DNAnexus, Terra). Access is governed by a Data Use Ontology (DUO)-based authorization system.

- Submission Package Creation: For regulatory submission (e.g., to FDA), data is formatted per the standards listed in the FDA Data Standards Catalog. Programmatic access points (APIs) are annotated and documented for reviewers.

- Post-Trial Deposit to Controlled-Access DB: Upon trial completion, submit to a controlled-access repository like dbGaP or EGA, using their structured submission portals. Metadata is rich with protocol and analysis details.
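The de-identification and consent steps in this procedure can be illustrated with a short sketch. It assumes keyed hashing (HMAC-SHA256) as the pseudonymization technique and a simple set of consent labels per participant. Neither is specified by the protocol: the record layout, the label `external_sharing`, and the function names are hypothetical. In practice the secret key would be held only by the trusted third party.

```python
import hashlib
import hmac


def pseudonymize(participant_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from a participant ID with a keyed hash.

    Without the key, the pseudonym cannot be recomputed from the ID.
    """
    return hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256).hexdigest()


def shareable_records(records, secret_key, required_consent):
    """Keep only records whose participant gave `required_consent`,
    replacing the direct identifier with a pseudonym."""
    shared = []
    for rec in records:
        if required_consent in rec["consents"]:
            shared.append(
                {
                    "pseudonym": pseudonymize(rec["participant_id"], secret_key),
                    "vcf": rec["vcf"],
                }
            )
    return shared


# Illustrative inputs only; the key below is a placeholder, not a real secret.
key = b"demo-key-not-for-production"
trial_records = [
    {"participant_id": "P-001", "consents": {"internal_reuse", "external_sharing"}, "vcf": "p001.vcf.gz"},
    {"participant_id": "P-002", "consents": {"internal_reuse"}, "vcf": "p002.vcf.gz"},
]
shared = shareable_records(trial_records, key, "external_sharing")
print(len(shared), "record(s) cleared for external sharing")
```

Because the pseudonym is deterministic for a given key, genomic and clinical tables can still be joined after de-identification, while anyone without the key cannot map pseudonyms back to participants.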

Visualizing FAIR Implementation Workflows

[Figure: Comparative FAIR Workflows for Surveillance and Clinical Trials]

[Figure: FAIR Digital Object Architecture for Data Access]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools & Resources for FAIR Implementation

| Category | Item / Solution | Primary Function in FAIR Context |

|---|---|---|

| Identifier Services | DOI, Life Science Identifier (LSID), identifiers.org Resolution Service | Provides Findable, persistent, and globally unique identifiers for datasets, samples, and publications. |

| Metadata Standards | MIxS (GSC), CDISC SEND/SDTM, BRIDG Model, ABCD (BioCADDIE) | Provides Interoperable, community-agreed schemas for describing data context. |

| Ontologies & Vocabularies | EDAM (bioinformatics), OBI (investigations), DUO (data use), NCBI Taxonomy | Enables Interoperability and machine-actionability by using controlled, defined terms. |

| Workflow & Provenance | Nextflow/nf-core, Research Object Crate (RO-Crate), W3C PROV | Captures reusable, reproducible analysis pipelines and their execution provenance. |

| Repository & Platforms | ENA/SRA (INSDC), GISAID, dbGaP, EGA, Terra.bio, DNAnexus | Provides Accessible, long-term storage, often with specific submission standards and access controls. |

| Assessment Tools | FAIR Data Stewardship Wizard, FAIRshake Toolkit, F-UJI Automated Assessor | Evaluates and scores the level of FAIR compliance for digital resources. |

| Access Governance | GA4GH Passport & DUO, ACLs (Access Control Lists), eConsent Platforms | Manages Access according to ethical and legal constraints, enabling compliant data reuse. |

Overcoming Common FAIR Implementation Hurdles in Genomic Research

The FAIR (Findable, Accessible, Interoperable, Reusable) principles provide a framework for optimizing the reuse of pathogen genomic data. However, their implementation directly confronts the triad of privacy (protecting individual health information), security (protecting data from unauthorized access or misuse), and sovereignty (respecting national or regional laws governing data location and use). This guide outlines technical methodologies to navigate these challenges, enabling robust data sharing for global health security without compromising ethical and legal mandates.

Quantitative Landscape of Data Sharing Challenges

Table 1: Prevalence of Concerns in Pathogen Genomic Data Sharing (2020-2024)

| Concern Category | % of Surveys Citing as "Major Barrier" | Common Jurisdictions with Strict Regulations |

|---|---|---|

| Patient Privacy & Re-identification Risk | 78% | EU (GDPR), USA (HIPAA), South Korea (PIPA) |

| National Data Sovereignty | 65% | China, India, Brazil, Russia, South Africa |

| Intellectual Property & Biosecurity | 45% | Global, especially for Dual-Use Research of Concern (DURC) |

| Technical Security (Breach Risk) | 52% | Universal concern across all jurisdictions |

Table 2: Comparison of Technical Solutions for Mitigation

| Solution | Privacy Strength | Impact on Data Utility | Computational Overhead |

|---|---|---|---|

| Full Data Anonymization | Low (High re-ID risk for genomics) | High (Loss of granular data) | Low |

| Federated Analysis | High (Data doesn't leave source) | Medium (Limited to compatible algorithms) | High |

| Homomorphic Encryption | Very High | Low (Enables computation on encrypted data) | Very High |

| Data Use Agreements + Access Committees | Medium (Legal/procedural) | Low (Full data access granted) | Medium (Administrative) |

| Synthetic Data Generation | Medium-High | Variable (Fidelity depends on model) | Medium |

Experimental Protocols for Secure, FAIR-Aligned Data Sharing

Protocol: Federated Genome-Wide Association Study (GWAS) for Pathogen Surveillance

Objective: To identify host genetic factors associated with pathogen virulence without sharing raw individual-level genomic data.

Methodology:

- Local Setup: Each participating institution (node) sets up a secure containerized analysis environment (e.g., using Docker/Singularity) with standardized software stacks.

- Harmonization: Nodes harmonize pathogen variant calls (e.g., SNPs, indels) and host phenotypic data (e.g., disease severity, outcome) using a common data model (e.g., OHDSI OMOP or GA4GH Phenopackets).

- Federated Coordination: A central coordinator, which holds no data itself, distributes the analysis script (e.g., a linear mixed model for association) to all nodes.

- Local Computation: Each node runs the script on its local, secured data, generating summary statistics (e.g., betas, p-values).

- Secure Aggregation: Nodes share only the aggregated summary statistics via a secure multi-party computation (SMPC) protocol or a trusted execution environment (TEE). The coordinator combines these to perform a meta-analysis.

- Result Disclosure: Final, global association results are shared with all participants. The raw genotype and phenotype data never leave the local nodes.
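The coordinator's meta-analysis step can be sketched with a fixed-effect, inverse-variance weighted combination of per-node estimates. This is one common choice and an assumption here, since the protocol does not name a specific method. It also assumes each node reports a standard error alongside its beta, because a beta and p-value alone do not give the weights directly. The function name and the example numbers are illustrative.

```python
import math


def fixed_effect_meta(estimates):
    """Combine per-node (beta, standard_error) pairs for one variant.

    Each node is weighted by the inverse of its variance, so more
    precise nodes contribute more to the pooled estimate.
    """
    weights = [1.0 / (se ** 2) for _, se in estimates]
    total_weight = sum(weights)
    pooled_beta = sum(w * beta for w, (beta, _) in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    z = pooled_beta / pooled_se
    # Two-sided p-value under a standard normal approximation.
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return pooled_beta, pooled_se, z, p_value


# Made-up summary statistics from three nodes for a single variant.
node_stats = [(0.20, 0.10), (0.40, 0.10), (0.30, 0.20)]
beta, se, z, p = fixed_effect_meta(node_stats)
print(f"pooled beta={beta:.3f}, se={se:.3f}, z={z:.2f}, p={p:.2e}")
```

Only the `(beta, standard_error)` pairs cross institutional boundaries in this sketch. In the protocol they would additionally be protected in transit by SMPC or a TEE.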

Protocol: Differential Privacy for Aggregate Pathogen Metadata Release

Objective: To publicly release aggregate data on pathogen lineage distribution by region while provably protecting individual sample contribution.

Methodology:

- Query Formulation: Define the query (e.g., "Count of samples belonging to lineage B.1.1.7 per country in December 2023").

- Sensitivity Analysis: Calculate the query's global sensitivity (Δf). For a count query, Δf=1 (adding/removing one individual changes the count by at most 1).

- Privacy Budget (ε) Allocation: Set a strict privacy budget (e.g., ε = 0.5) to guarantee strong privacy protection.

- Noise Injection: Generate calibrated Laplacian noise, Noise = Laplace(scale = Δf/ε), and add it to the true count from each country.

- Thresholding & Release: Apply a post-processing threshold (e.g., suppress counts below 5) to further reduce re-identification risk. Release the noisy, aggregated table.

- Utility Validation: Internally assess the impact of noise on major trends (e.g., dominant lineage calls remain accurate).
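A minimal sketch of the noise injection and thresholding steps follows. With Δf = 1 and ε = 0.5, the Laplace scale is 1/0.5 = 2. The sketch assumes each sample is counted in exactly one country, so the per-country counts are disjoint and the same ε applies to the whole table. The function names and counts are invented for illustration. The sampler uses Python's general-purpose random number generator, which is adequate for demonstrating the mechanism but not for a hardened production release.

```python
import random


def laplace_noise(scale, rng):
    """Draw one Laplace(0, scale) sample as the difference of two
    independent exponential draws that each have mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_release(true_counts, epsilon, sensitivity=1.0, threshold=5, rng=None):
    """Add Laplace noise to each count, round, and suppress small values.

    Suppressed cells are returned as None. Thresholding is applied to the
    noisy value, so it is post-processing and does not consume privacy budget.
    """
    rng = rng or random.Random()
    scale = sensitivity / epsilon
    released = {}
    for country, count in true_counts.items():
        noisy = round(count + laplace_noise(scale, rng))
        released[country] = noisy if noisy >= threshold else None
    return released


# Invented counts of one lineage per country for a single month.
true_counts = {"Country A": 1200, "Country B": 87, "Country C": 2}
released = dp_release(true_counts, epsilon=0.5, rng=random.Random(0))
for country, value in released.items():
    print(country, "suppressed" if value is None else value)
```

Comparing `released` with `true_counts` internally is one simple form of the utility validation step: large counts move by only a few units, while very small counts are either suppressed or dominated by noise.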

Visualizations

[Figure: Federated Analysis Workflow for Pathogen Genomics. Federated Analysis Protects Raw Data.]

[Figure: Data Sovereignty & Secure Access Logical Pathway. Sovereign Data Access via DAC and Technical Controls.]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Secure, FAIR-Compliant Pathogen Genomics Research

| Tool / Reagent Category | Specific Example(s) | Function in Balancing Sharing & Privacy |

|---|---|---|

| Secure Analysis Platforms | GEN3, Seven Bridges, Terra.bio | Provides a controlled, cloud-based workspace with embedded data governance, authentication, and compute, enabling analysis without raw data download. |

| Federated Analysis Frameworks | OpenFL (Intel), NVIDIA FLARE, FEDERATED ARRAY | Enables distributed machine learning and statistical analysis across institutions while keeping data localized. |

| Encryption & Anonymization Tools | HomoPy (Homomorphic Encryption), ARX Data Anonymization Tool | Protects data in use (encryption) or transforms it to minimize re-identification risk (anonymization/k-anonymity). |

| Data Use Agreement (DUA) & Ontology Tools | DUOS (Data Use Oversight System), GA4GH DUO Ontology | Standardizes and automates the process of matching researcher credentials with data use conditions attached to datasets. |

| Trusted Execution Environments (TEE) | Intel SGX, AMD SEV | Creates secure, isolated regions (enclaves) in processors for confidential computing, allowing analysis of encrypted data in memory. |

| Synthetic Data Generators | Synthea, Mostly AI, Gretel.ai | Generates artificial, statistically representative datasets that mimic real population data without containing any actual individual records, useful for method development. |

| Standardized Metadata Specs | MIxS (Minimum Information about any Sequence), INSDC submission standards | Ensures interoperability and reusability (FAIR principles) by mandating rich, structured metadata, reducing ambiguity and need for data clarifications. |

Within the framework of FAIR (Findable, Accessible, Interoperable, Reusable) data principles for pathogen genomics, the challenge of Metadata Incompleteness presents a significant bottleneck. While sequencing data generation has become routine, the associated metadata—describing the sample source, sequencing methodology, and clinical context—is often sparse, inconsistent, or unstructured. This imposes a severe Burden of Curation, where researchers must dedicate substantial resources to manually clean, harmonize, and enrich metadata before data can be meaningfully analyzed or shared. This guide addresses the technical and procedural strategies to mitigate this challenge.

The Scale of the Problem: Quantitative Analysis

The following tables summarize recent findings on metadata completeness in public pathogen genomic repositories.

Table 1: Metadata Completeness in Major Public Repositories (2023-2024)

| Repository / Database | % of Records with Critical Clinical Metadata (e.g., host health status) | % of Records with Complete Sampling Location (Geo-coordinates) | % of Records with Full Sequencing Protocol (Platform, Kit, Version) |

|---|---|---|---|

| NCBI SRA (Random Sample, Viral Pathogens) | 34% | 41% | 68% |

| GISAID EpiCoV (SARS-CoV-2 Subset) | 72% | 85% | 58% |

| ENA (Bacterial Genomes, Clinical Isolates) | 28% | 52% | 45% |

Table 2: Estimated Researcher Time Cost for Curation

| Task | Average Hours per 100 Isolates (Without Automation) | Average Hours per 100 Isolates (With Semi-Automated Tools) |

|---|---|---|

| Standardization of Field Names | 10-15 | 2-4 |

| Geocoding Location Data | 8-12 | 1-2 |

| Cross-Referencing with Ontology Terms | 15-25 | 5-8 |

| Validation & Error Correction | 20-30 | 5-10 |

Core Methodology: A Protocol for Systematic Metadata Curation

This protocol provides a stepwise approach to address incompleteness.

Experimental Protocol 3.1: Metadata Audit and Gap Analysis

- Data Harvesting: Use APIs or command-line tools (e.g., the ENA Portal API, the SRA Toolkit) to programmatically download metadata for a target dataset.

- Completeness Scoring: Calculate the percentage of non-null values for each field. Flag fields below a defined threshold (e.g., <70%).

- Consistency Check: Identify values that deviate from expected controlled vocabularies (e.g., "male", "Male", "M" for sex).

- Cross-Field Validation: Apply logical rules (e.g., "collection_date" must be before "submission_date").
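The scoring and checking steps of this audit can be sketched in a few functions over already-downloaded metadata records. The field names, the list of strings treated as missing, the allowed vocabulary, and the example records are assumptions made for illustration. The date rule here flags only collection dates that fall after the submission date, so same-day values pass.

```python
from datetime import date

# Strings that count as "no value" in this sketch (an assumption, extend as needed).
MISSING = {"", "na", "n/a", "missing", "not provided"}


def is_present(value):
    """True when a field holds a real value rather than a placeholder."""
    return value is not None and str(value).strip().lower() not in MISSING


def completeness(records, fields):
    """Fraction of records with a usable value, per field."""
    total = len(records)
    return {f: sum(is_present(r.get(f)) for r in records) / total for f in fields}


def vocabulary_violations(records, field, allowed):
    """(index, value) pairs whose value is present but not in the vocabulary."""
    return [
        (i, r[field])
        for i, r in enumerate(records)
        if is_present(r.get(field)) and r[field] not in allowed
    ]


def date_order_violations(records, earlier, later):
    """Indices of records where the `earlier` date falls after the `later` date."""
    bad = []
    for i, r in enumerate(records):
        if is_present(r.get(earlier)) and is_present(r.get(later)):
            if date.fromisoformat(r[earlier]) > date.fromisoformat(r[later]):
                bad.append(i)
    return bad


# Invented records with deliberately planted problems.
records = [
    {"sex": "male", "collection_date": "2023-01-10", "submission_date": "2023-02-01", "geo_loc_name": "Kenya"},
    {"sex": "M", "collection_date": "2023-03-05", "submission_date": "2023-03-01", "geo_loc_name": ""},
    {"sex": "Male", "collection_date": "", "submission_date": "2023-04-01", "geo_loc_name": "Peru"},
]
scores = completeness(records, ["sex", "collection_date", "geo_loc_name"])
flagged = sorted(f for f, s in scores.items() if s < 0.70)
sex_issues = vocabulary_violations(records, "sex", {"male", "female"})
date_issues = date_order_violations(records, "collection_date", "submission_date")
print(flagged, sex_issues, date_issues)
```

The output of such an audit is a worklist: fields below the threshold go to submitters for completion, and vocabulary or date violations go to curators for correction.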

Experimental Protocol 3.2: Metadata Enrichment Using Ontologies and External Databases

- Ontology Mapping: For a field like "host_disease," use the Ontology Lookup Service (OLS) API to map free-text entries to standardized identifiers from the Human Disease Ontology (DOID) or NCBITaxon.

- Geographic Coordinate Resolution: For textual location names, use a gazetteer service (e.g., GeoNames) to obtain precise latitude and longitude.

- Instrument Model Harmonization: Map platform descriptions (e.g., "Illumina HiSeq 2500") to official NCBI BioSample package terms.
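The ontology mapping step can be sketched offline as a lookup against a curated synonym table, with unmatched entries routed to manual review. This stands in for a live call to the OLS API, which is deliberately not shown. The function names and the synonym table are illustrative, and the two DOID identifiers should be verified against the ontology before any real use.

```python
import re


def normalize(text):
    """Lowercase and collapse punctuation/whitespace so that variants
    such as 'COVID-19' and 'covid 19' compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def map_terms(values, synonym_table):
    """Map free-text values to ontology IDs using a normalized synonym table.

    Returns (mapped, unmapped): a dict of original value -> ID, and a list
    of values that need manual curation or a lookup service query.
    """
    mapped, unmapped = {}, []
    for value in values:
        key = normalize(value)
        if key in synonym_table:
            mapped[value] = synonym_table[key]
        else:
            unmapped.append(value)
    return mapped, unmapped


# Illustrative table; keys are already normalized. Verify IDs before use.
HOST_DISEASE_SYNONYMS = {
    "covid 19": "DOID:0080600",
    "covid19": "DOID:0080600",
    "influenza": "DOID:8469",
    "flu": "DOID:8469",
}

mapped, unmapped = map_terms(
    ["COVID-19", "Covid19", "Influenza", "unknown fever"],
    HOST_DISEASE_SYNONYMS,
)
print(mapped)
print("needs review:", unmapped)
```

Keeping the unmapped list explicit matters more than the lookup itself: it bounds the remaining manual curation work and prevents silent loss of entries that did not match.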

Experimental Protocol 3.3: Implementing a FAIR Metadata Submission Workflow

- Template Provision: Provide submitters with a spreadsheet template pre-populated with required fields, value constraints, and dropdown menus from controlled vocabularies.