HUGO's Ecological Genomics Vision: Mapping the Genomic Landscape for Precision Medicine and Drug Discovery


Abstract

This article explores the Human Genome Organisation's (HUGO) evolving vision for ecological genomics—a framework that moves beyond static reference genomes to understand the dynamic interplay between genetic variation, environment, and disease. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive overview of foundational concepts, current methodologies, analytical best practices, and comparative validation strategies. By synthesizing recent initiatives like the Human Pangenome Reference Consortium and ethical frameworks, the article offers a roadmap for leveraging genomic diversity in biomedical research to unlock novel therapeutic targets and advance equitable, personalized medicine.

Beyond the Reference Genome: Defining HUGO's Ecological and Functional Genomics Framework

The Human Genome Organisation (HUGO) has evolved its vision from a primary focus on linear sequence annotation to an integrated, ecological framework that contextualizes genomic data within multidimensional biological, environmental, and phenotypic landscapes. This whitepaper delineates the core technical and conceptual tenets of this ecological genomics vision, positioning it as the necessary evolution for understanding complex disease etiology and enabling precision drug development.

The Foundational Shift: From Linear Sequence to Ecological Network

The completion of the human genome reference sequence marked the end of the initial "sequence-centric" era. HUGO's current vision, as articulated in recent statements and initiatives, emphasizes that a gene's function and its role in health and disease cannot be understood in isolation. Its ecological context—including the cellular niche, tissue microenvironment, organismal systems, and external exposures—is paramount.

Table 1: Evolution of Genomic Analysis Paradigms

| Paradigm | Primary Focus | Key Question | Limitation |

|---|---|---|---|

| Linear Sequence (c. 2000-2010) | Gene structure, variant cataloging | "What is the sequence and what mutations are present?" | Lacks functional and regulatory context. |

| Functional Genomics (c. 2010-2020) | Gene expression, epigenetic states, protein interactions | "What is the gene's activity and its molecular interactions?" | Often static, lacks multi-scale integration. |

| Ecological Genomics (Current Vision) | Multi-scale networks, spatiotemporal dynamics, environment interaction | "How does genomic function emerge from context at all biological scales?" | Highly complex, requires novel computational and experimental frameworks. |

Core Technical Tenets

Multi-Omic Integration Across Scales

Ecological genomics requires the simultaneous acquisition and fusion of data from genomes, epigenomes, transcriptomes, proteomes, metabolomes, and microbiomes, mapped across spatial (single-cell, tissue, organ) and temporal (development, disease progression) dimensions.

Detailed Protocol: Spatial Multi-Omic Profiling on a Tissue Section

- Sample Preparation: Fresh-frozen or FFPE tissue sections (5-10 µm) are mounted on barcoded spatial array slides (e.g., 10x Genomics Visium). The array contains spatially indexed oligonucleotide capture probes.

- On-Slide Library Construction:

  - mRNA Capture: Tissue is permeabilized; released mRNA hybridizes to spatially barcoded poly(dT) probes.

  - Protein Co-Detection (Optional): Antibodies conjugated to oligonucleotide tags are incubated with the tissue prior to permeabilization, allowing simultaneous protein and mRNA capture.

  - In-Situ Reverse Transcription: Captured mRNA is reverse-transcribed to create cDNA with spatial barcodes.

  - Tissue Removal & Amplification: Tissue is digested, and the cDNA library is amplified via PCR.

- Sequencing & Analysis: Libraries are sequenced on a high-throughput platform (e.g., Illumina NovaSeq). Bioinformatic pipelines (e.g., Space Ranger, Seurat) demultiplex reads by spatial barcode, align sequences, and generate gene expression matrices mapped to histological coordinates.
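The final demultiplexing step can be illustrated with a minimal sketch. It assumes reads have already been aligned and assigned to genes; the barcode whitelist, coordinates, and the function name `build_spot_matrix` are hypothetical and are not part of Space Ranger or Seurat. Production pipelines also collapse reads by UMI, which is omitted here.

```python
from collections import Counter, defaultdict

# Hypothetical whitelist: spatial barcode -> (row, col) position of the spot on the slide.
SPOT_COORDS = {
    "AAAC": (0, 0),
    "AAAG": (0, 1),
    "AACT": (1, 0),
}


def build_spot_matrix(assigned_reads, spot_coords):
    """Count reads per gene for each spot, keyed by slide coordinates.

    assigned_reads: iterable of (spatial_barcode, gene) pairs.
    Reads whose barcode is not on the whitelist are dropped.
    """
    counts = defaultdict(Counter)
    for barcode, gene in assigned_reads:
        coord = spot_coords.get(barcode)
        if coord is None:
            continue  # barcode not on the array (e.g., sequencing error)
        counts[coord][gene] += 1
    return {coord: dict(gene_counts) for coord, gene_counts in counts.items()}


# Toy input; gene symbols are used only as labels.
reads = [
    ("AAAC", "EPCAM"),
    ("AAAC", "EPCAM"),
    ("AAAG", "PTPRC"),
    ("TTTT", "ACTB"),   # unknown barcode, ignored
    ("AACT", "EPCAM"),
]
matrix = build_spot_matrix(reads, SPOT_COORDS)
```

Keying the result by coordinate rather than barcode keeps the expression values directly joinable to the histology image grid.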

Context-Aware Functional Annotation

Moving beyond static Gene Ontology terms, this tenet involves annotating variants and genes with dynamic, context-specific functional data (e.g., cell-type-specific enhancer activity, condition-specific protein complexes).

Modeling Genotype-Environment-Phenotype (GxE) Interactions

Quantitative modeling of how genetic variation modulates organismal response to environmental factors (diet, toxins, microbiota, social stress) to produce phenotypes.

Table 2: Key Quantitative Findings Driving the Ecological Vision

| Study / Initiative (Example) | Key Metric | Value / Finding | Implication for Ecological Vision |

|---|---|---|---|

| GTEx Consortium v9 Analysis | Proportion of eQTLs that are tissue-specific | ~65% | Vast majority of regulatory genetic effects are context-dependent, not universal. |

| Human Cell Atlas (2023) | Number of distinct cell types/states characterized | >5,000 | Unprecedented resolution of cellular ecological niches is required for functional understanding. |

| UK Biobank GxE Studies | Variance in BMI explained by GxE (specific SNP x physical activity) | ~0.3-0.8% per locus | Phenotypic outcomes require integrated models of genetic risk and environmental exposure. |

Experimental & Computational Methodologies

Protocol for a Longitudinal Multi-Omic Cohort Study

- Cohort Design: Recruit a prospective cohort with deep phenotyping (clinical imaging, digital health metrics, biospecimens) and regular sampling (blood, stool, nasal swabs) over time.

- Sample Processing Pipeline:

  - Genomics: Whole genome sequencing (30x coverage) from baseline blood DNA.

  - Longitudinal Profiling: For each serial biospecimen:

    - Blood Plasma: Metabolomics (LC-MS), proteomics (Olink/SomaScan), inflammatory markers.

    - Peripheral Blood Mononuclear Cells (PBMCs): Single-cell RNA-seq (10x Genomics) + cell surface protein (CITE-seq).

    - Stool: 16S rRNA & shotgun metagenomic sequencing for microbiome.

- Triggered Deep Sampling: Upon a pre-defined health event (e.g., infection onset), collect additional targeted samples (e.g., affected tissue if accessible, heightened frequency).

- Data Integration: Use tensor-based models and dynamical systems approaches to integrate time-series multi-omic data with clinical events and environmental sensor data.

Computational Framework: The Ecological Graph

The core analytical model is a multi-layer, attributed graph where nodes represent entities (genes, cells, metabolites, microbes) and edges represent interactions (regulation, correlation, physical binding). Layers correspond to different biological scales or data types.

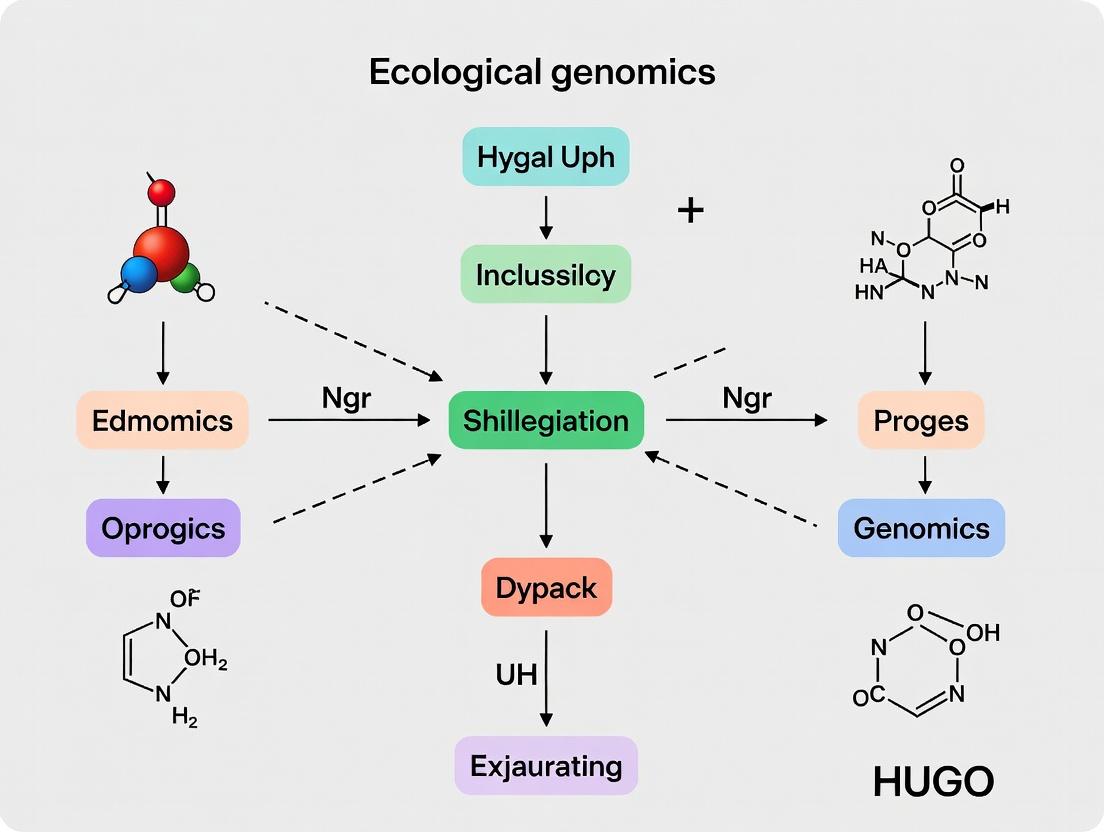

Diagram 1: Multi-Layer Graph Model of Genomic Ecology
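A multi-layer attributed graph of this kind can be sketched with plain data classes. The class names, layer labels, and example entities below are illustrative assumptions, not a published HUGO data model; a real implementation would likely sit on a graph library or database.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    name: str
    layer: str  # biological scale or data type, e.g. "gene", "cell", "metabolite", "microbe"


@dataclass
class EcologicalGraph:
    # Adjacency list: source node -> list of (target node, interaction kind, attributes).
    adjacency: dict = field(default_factory=dict)

    def add_edge(self, src, dst, kind, **attrs):
        """Add a directed, attributed edge and register both endpoints."""
        self.adjacency.setdefault(src, []).append((dst, kind, attrs))
        self.adjacency.setdefault(dst, [])

    def nodes_in_layer(self, layer):
        return sorted(n.name for n in self.adjacency if n.layer == layer)

    def cross_layer_edges(self):
        """Edges whose endpoints sit in different layers."""
        return [
            (src.name, kind, dst.name)
            for src, targets in self.adjacency.items()
            for dst, kind, _ in targets
            if src.layer != dst.layer
        ]


# Placeholder entities; none of these edges is a biological claim.
gene_a = Node("GENE_A", "gene")
gene_b = Node("GENE_B", "gene")
cell = Node("cell_type_1", "cell")
metabolite = Node("metabolite_X", "metabolite")
microbe = Node("microbe_Y", "microbe")

graph = EcologicalGraph()
graph.add_edge(gene_a, gene_b, "regulation", weight=0.8)
graph.add_edge(gene_a, cell, "expressed_in")
graph.add_edge(microbe, metabolite, "produces")
graph.add_edge(metabolite, gene_b, "correlation", rho=0.4)
```

Storing the layer on the node rather than in separate graphs makes cross-scale queries, such as `graph.cross_layer_edges()`, a simple filter.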

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Ecological Genomics Research

| Item | Function | Example (Representative) |

|---|---|---|

| Barcoded Spatial Array Slides | Enables transcriptomic/proteomic profiling with retention of 2D/3D tissue architecture. | 10x Genomics Visium, Vizgen MERSCOPE, NanoString CosMx |

| Multiplexed Antibody-Oligo Conjugates | Allows simultaneous measurement of dozens of proteins alongside mRNA in single cells or spatially. | BioLegend TotalSeq, 10x Genomics Feature Barcode |

| Cell Hashing Antibodies | Tags cells with sample-specific barcodes, enabling multiplexed single-cell sequencing and batch effect reduction. | BioLegend TotalSeq Hashtag antibodies |

| Single-Cell Multiome Kits | Simultaneous assay of chromatin accessibility (ATAC-seq) and gene expression (RNA-seq) from the same single nucleus. | 10x Genomics Multiome ATAC + Gene Exp. |

| CRISPR Perturbation Screening Pools | Link genetic perturbations to transcriptomic phenotypes at single-cell resolution. | 10x CRISPR Guide-Expressing Libraries |

| Stable Isotope Tracers | Track nutrient flow and metabolic activity within cellular ecosystems and host-microbe systems. | 13C-Glucose, 15N-Amino Acids |

| Environmental DNA (eDNA) Extraction Kits | Profile microbiomes and exposomes from diverse, low-biomass samples (air, skin, built environment). | Qiagen DNeasy PowerSoil, ZymoBIOMICS |

Pathway Visualization: Integrative Signaling in Context

Diagram 2: GxExE in Inflammasome Activation

HUGO's ecological genomics vision provides the foundational framework for the next generation of translational research. It mandates a shift from targeting single genes to targeting dysregulated ecological networks within specific disease contexts. This will enable: 1) Context-aware target identification, minimizing failures due to lack of efficacy in heterogeneous human populations; 2) Precision patient stratification based on multi-scale ecological profiles rather than single biomarkers; and 3) Comprehensive biomarker strategies that monitor therapeutic impact across genomic, molecular, and systemic levels. The future of genomics is not merely in the sequence, but in the rich, dynamic ecology it both shapes and is shaped by.

The GRCh38 reference assembly, a direct descendant of the Human Genome Project reference, is foundational but remains a linear composite derived from a limited number of individuals, and it fails to capture the full spectrum of human genetic diversity. This limitation introduces reference bias, hindering variant discovery and interpretation, particularly for populations underrepresented in genomic studies. Within the broader thesis of HUGO's ecological genomics vision, which seeks to understand genomic variation within the complex "ecosystem" of global populations and their environmental interactions, the Human Pangenome Reference Consortium (HPRC) emerges as a critical infrastructure project. Its goal is to construct a representative, high-quality, haplotype-resolved pangenome reference that reflects humanity's genetic diversity, thereby enabling more equitable and precise biomedical research and drug development.

Core Objectives and Quantitative Progress

The HPRC aims to sequence genomes from diverse populations using long-read technologies to create a pangenome graph. This graph structure incorporates alternative sequences (alt loci) as branches, allowing for a more natural representation of genetic variation.

Table 1: HPRC Phase 1 Goals and Key Quantitative Outputs (as of latest data)

| Metric | Target/Goal | Achieved Output (Phase 1) | Significance |

|---|---|---|---|

| Number of Assembled Genomes | 350 individuals from diverse populations | 47 fully phased, diploid genome assemblies (94 haplotypes) released (2023) | Provides a critical mass of high-quality data for initial graph construction. |

| Targeted Haplotype Phasing Accuracy | Q50 (Phred-scaled accuracy of 99.999%) | Q50+ achieved for the majority of assemblies using trio-binning or long-read data. | Essential for resolving maternal and paternal haplotypes, crucial for understanding compound heterozygosity. |

| Assembled Genome Quality (Contiguity) | Contig N50 > 50 Mb, Scaffold N50 > 100 Mb | Contig N50 routinely > 30 Mb, with some exceeding 100 Mb; near-complete chromosome arm scaffolds. | Enables analysis of complex structural variants and gene-rich regions without assembly breaks. |

| Population Diversity | Global representation, prioritizing under-represented populations | Initial set includes individuals with Afro-Caribbean, East Asian, South Asian, and European ancestry. | Directly addresses the lack of diversity in GRCh38, reducing reference bias. |

| Variant Discovery | Comprehensive catalog of SNVs, Indels, SVs | Added ~119 million base pairs of euchromatic polymorphic sequence and 1,115 gene duplications relative to GRCh38, with roughly 90 million of those base pairs derived from structural variation. | Dramatically expands the known variome, providing new insights for disease association studies. |
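The Q50 target in the table follows from the standard Phred relation Q = -10·log10(p), where p is the per-base error probability. The sketch below converts between the two and estimates the expected number of base errors in one haplotype; the 3.1 Gb haplotype length is an assumed round figure used only for illustration.

```python
import math


def qv_to_error_rate(qv):
    """Per-base error probability for a Phred-scaled quality value."""
    return 10 ** (-qv / 10)


def error_rate_to_qv(error_rate):
    """Phred-scaled quality value for a per-base error probability."""
    return -10 * math.log10(error_rate)


def expected_errors(qv, n_bases):
    """Expected number of erroneous bases in an assembly of n_bases."""
    return n_bases * qv_to_error_rate(qv)


HAPLOTYPE_BASES = 3_100_000_000  # assumed haplotype length, for illustration only

q50_error = qv_to_error_rate(50)          # one error per 100,000 bases
q50_accuracy_pct = 100 * (1 - q50_error)  # about 99.999 %
q50_errors_per_haplotype = expected_errors(50, HAPLOTYPE_BASES)
```

Under these assumptions a Q50 haplotype still carries on the order of tens of thousands of base errors, which is why polishing and validation remain part of the pipeline.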

Detailed Experimental Protocol: HPRC Genome Assembly Pipeline

The following methodology outlines the core workflow for generating a haplotype-resolved, telomere-to-telomere (T2T) assembly for a single HPRC sample.

1. Sample Selection & Ethics: Individuals are recruited with informed consent, prioritizing diverse genetic backgrounds. Where possible, trio designs (parents and offspring) are employed to enhance phasing.

2. High Molecular Weight (HMW) DNA Extraction: DNA is extracted from lymphoblastoid cell lines or blood using gentle, bead-based methods (e.g., Nanobind CBB Big DNA Kit) to preserve ultra-long fragments (>100 kb).

3. Long-Read Sequencing:

- Pacific Biosciences (HiFi): SMRTbell libraries are constructed. Sequencing on the Revio or Sequel IIe system generates HiFi reads (~15-20 kb length) with >99.9% single-read accuracy.

- Oxford Nanopore Technologies (ONT): Ultra-long DNA libraries are prepared using the Ligation Sequencing Kit (SQK-LSK114). Sequencing on a PromethION flow cell produces reads with an N50 often exceeding 50 kb, useful for spanning complex repeats.

4. Short-Read Sequencing (Optional but Recommended): Illumina PCR-free whole-genome sequencing (~30x coverage) is performed to polish consensus sequences and for quality control.

5. Haplotype Phasing and De Novo Assembly:

- For Trio Samples: The hifiasm (v0.19) assembler is run in trio-binning mode, with parental k-mer databases (built from parental short reads, e.g., with yak) supplied via the -1 and -2 options. Parent-specific k-mers assign assembled sequence to its maternal or paternal origin, resulting in two fully phased haplotype assemblies (hap1, hap2).

- For Single Samples: hifiasm is run with Hi-C integration (--h1/--h2) when Hi-C data is available, producing phased hap1 and hap2 assemblies. With HiFi reads alone, it relies on read heterozygosity and produces primary and alternate (or partially phased) assemblies.

6. Scaffolding with Hi-C Data: Proximity ligation data (Hi-C) is aligned to the assembled contigs. The YaHS scaffolder orders and orients contigs into chromosome-scale scaffolds, resolving them into the two haplotypes.

7. Alignment-Based Polishing: The MERQURY pipeline is used for quality assessment. pepper (with margin) or GCpp is used for small variant polishing, and pbcromwell for structural consensus polishing against the raw HiFi data.

8. Quality Assessment & Validation:

- Completeness: Assessed via BUSCO against the mammalian ortholog set.

- Base Accuracy: QV scores are calculated using MERQURY.

- Phasing Accuracy: For trios, HapCUT2 is used to calculate switch error rates.

- Structural Validation: Assembly-to-assembly comparisons with minimap2 and SyRI identify large-scale SVs, which are validated via PCR or orthogonal sequencing.

9. Pangenome Graph Construction: All phased assemblies are aligned to a reference graph (e.g., minigraph) using minigraph-cactus. The resulting pangenome graph is stored in GFA format and can be used by tools like vg and GraphAligner for downstream analysis.
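To make the GFA representation concrete, the following minimal sketch parses a toy GFA 1.0 graph containing one bubble and spells out the two haplotype paths through it. The graph text and function names are invented for illustration; it handles only S, L, and P records with zero-length overlaps and is not a substitute for vg or other graph toolkits (minigraph-cactus output may also use W-line walks, which are not handled here).

```python
# Toy GFA 1.0 text: segments s2 and s3 are alternative alleles between s1 and s4.
GFA_TEXT = """\
H\tVN:Z:1.0
S\ts1\tACGT
S\ts2\tG
S\ts3\tTTA
S\ts4\tCCA
L\ts1\t+\ts2\t+\t0M
L\ts1\t+\ts3\t+\t0M
L\ts2\t+\ts4\t+\t0M
L\ts3\t+\ts4\t+\t0M
P\thapA\ts1+,s2+,s4+\t*
P\thapB\ts1+,s3+,s4+\t*
"""

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def parse_gfa(text):
    """Return (segments, links, paths) from tab-separated GFA 1.0 records."""
    segments, links, paths = {}, [], {}
    for line in text.splitlines():
        if not line:
            continue
        fields = line.split("\t")
        if fields[0] == "S":
            segments[fields[1]] = fields[2]
        elif fields[0] == "L":
            links.append((fields[1], fields[2], fields[3], fields[4]))
        elif fields[0] == "P":
            # Each step is a segment name followed by its orientation (+ or -).
            paths[fields[1]] = [(step[:-1], step[-1]) for step in fields[2].split(",")]
    return segments, links, paths


def spell_path(segments, steps):
    """Concatenate segment sequences along a path, reverse-complementing '-' steps."""
    parts = []
    for name, orientation in steps:
        seq = segments[name]
        parts.append(seq if orientation == "+" else seq.translate(_COMPLEMENT)[::-1])
    return "".join(parts)


segments, links, paths = parse_gfa(GFA_TEXT)
hap_a = spell_path(segments, paths["hapA"])
hap_b = spell_path(segments, paths["hapB"])
```

Both haplotypes share the flanking segments and differ only in the branch they take, which is how a graph reference stores an insertion or substitution without privileging either allele.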

Visualization: HPRC Experimental and Analytical Workflow

Diagram Title: HPRC Genome Assembly and Graph Construction Pipeline

Diagram Title: Linear vs. Pangenome Graph Reference Structure

The Scientist's Toolkit: Key Research Reagent Solutions for Pangenome Studies

Table 2: Essential Materials and Reagents for Pangenome-Quality Genome Projects

| Item / Reagent | Function & Rationale |

|---|---|

| Nanobind CBB Big DNA Kit (Circulomics) | Extracts ultra-high molecular weight (uHMW) DNA with minimal shear, critical for generating long sequencing reads. |

| PacBio SMRTbell Prep Kit 3.0 | Prepares hairpin-adapter ligated libraries for PacBio HiFi sequencing, enabling long, accurate circular consensus reads. |

| Oxford Nanopore Ligation Sequencing Kit (SQK-LSK114) | Prepares libraries for ONT sequencing, optimized for ultra-long reads to span complex repeats and structural variants. |

| Dovetail Omni-C Kit | Generates chromosome-conformation capture (Hi-C) data from fixed chromatin, essential for scaffolding contigs into chromosome-scale haplotypes. |

| KAPA HyperPrep Kit (PCR-free) | For constructing high-quality, PCR-free Illumina short-read libraries used in polishing and validation, minimizing coverage bias. |

| hifiasm (v0.19+) Software | State-of-the-art assembler that uses HiFi reads and, optionally, trio or Hi-C data to produce accurate, fully phased diploid assemblies. |

| minigraph-cactus Pipeline | Robust toolchain for aligning multiple assemblies to a reference graph and constructing a pangenome graph in GFA/VG formats. |

| MERQURY Suite | Integrated tool for quality assessment of genome assemblies using k-mer spectra, providing QV scores and completeness metrics. |

The Human Genome Organisation (HUGO) has been the central architect of global human genomics initiatives for over three decades. Framed within a broader thesis on HUGO's ecological genomics vision, this whitepaper examines HUGO’s role as a prioritization engine, moving the field from foundational sequencing (HGP) to large-scale synthesis and engineering (HGP-Write), and toward a future where genomic knowledge is integrated within an ecological framework of human health, diversity, and environmental interaction.

The Foundational Priority: The Human Genome Project (HGP)

HUGO, founded in 1988, was instrumental in coordinating the international effort of the HGP (1990-2003). Its role was not in day-to-day sequencing but in setting ethical standards, fostering collaboration, and defining the core priorities: a complete, accurate, and freely accessible reference human genome sequence.

Key Quantitative Outcomes of the HGP

Table 1: Primary Quantitative Outputs of the Human Genome Project

| Metric | Initial Estimate (1990) | Final Output (2003) | Significance |

|---|---|---|---|

| Genome Size | ~3 billion base pairs (bp) | 3.08 billion bp | Established baseline human genome size. |

| Number of Genes | ~100,000 | ~20,000-25,000 | Revised understanding of genetic complexity. |

| Cost | ~$3 billion | ~$2.7 billion | Established baseline cost for whole-genome sequencing. |

| International Contribution | 5 primary centers | >20 research groups across 6 nations | Model for global scientific collaboration. |

| Data Release Policy | N/A | Bermuda Principles (1996): 24-hour release | Pioneered rapid, open-access genomic data sharing. |

Experimental Protocol: Hierarchical Shotgun Sequencing (HGP Core Method)

Objective: To determine the complete nucleotide sequence of the human genome. Workflow:

- Library Construction: Genomic DNA was sheared and cloned into large-insert Bacterial Artificial Chromosomes (BACs; ~150-200 kb).

- Physical Mapping: BAC clones were fingerprinted and ordered into a tiling path covering each chromosome.

- Shotgun Sequencing: Individual BACs were subcloned into small-insert plasmids, which were then sequenced from both ends using Sanger (dideoxy) chain-termination chemistry on capillary array machines.

- Assembly: Reads from a single BAC were assembled into a contiguous sequence (contig) using Phred/Phrap/Consed software. BAC-end sequences were used to link contigs into scaffolds.

- Finishing: Gaps were closed and low-quality regions were resolved by targeted sequencing of bridging clones or PCR products.

- Annotation: Computational gene prediction tools (e.g., GENSCAN) and alignment with expressed sequence tags (ESTs) were used to identify genes and other functional elements.

The Engineering Priority: From Reading to Writing (HGP-Write)

In 2016, HUGO members spearheaded the proposal for HGP-Write (now the Genome Project-Write, GP-Write), a visionary initiative to prioritize the synthesis and engineering of large genomes. This shifts the focus from analysis to construction to understand genomic design principles.

Core Objectives and Quantitative Goals

Table 2: HGP-Write/GP-Write: Goals and Current Status

| Goal Area | Specific Aim | Key Metrics/Targets | Current Example (as of 2024) |

|---|---|---|---|

| Technology Development | Reduce synthesis cost. | Cost Target: 1000-fold reduction in DNA synthesis cost. | Enzymatic DNA synthesis methods emerging (e.g., DNA printer by Ansa Biotechnologies). |

| Genome Design & Synthesis | Synthesize and test functional genomes. | Pilot: Synthesize all 16 yeast chromosomes (Sc2.0). | All 16 S. cerevisiae chromosomes have been synthesized individually; consolidation into a single fully synthetic strain is ongoing. |

| Mammalian Genome Engineering | Engineer ultra-safe human cell lines. | Project: Genome Project-Write's "Ultra-safe Cell Line" initiative. | Development of human cell lines with recoded genomes for viral resistance and biocontainment. |

| Ethical, Legal, Social Implications (ELSI) | Proactive governance. | Framework: Integrated ELSI research from inception. | Formation of GP-Write's ELSI Working Group and public engagement forums. |

Experimental Protocol: Synthesis, Assembly, and Replacement (Sc2.0 Yeast Project)

Objective: To design, synthesize, and assemble a fully functional, modified yeast genome. Detailed Methodology:

- Design: Remove transposable elements and many introns, and relocate tRNA genes to a dedicated "neochromosome." Introduce loxPsym sites for genome scrambling (SCRaMbLE system) and synonymous changes for watermarking (PCRTags).

- Oligonucleotide Synthesis: Design 60-80bp oligonucleotides covering the entire designed chromosome sequence with overlaps.

- Hierarchical Assembly: Oligos are assembled via PCR into ~750 bp building blocks (Step 1). Building blocks are assembled via transformation-associated recombination (TAR) in yeast into 2-4 kb minichunks (Step 2). These are further assembled into ~10 kb chunks (Step 3), then 30-60 kb megachunks (Step 4).

- Chromosomal Integration: Synthesized megachunks, containing ~2 kb homology arms, are transformed into yeast, replacing the native chromosomal segments via homologous recombination (Step 5).

- Validation: PCRTag sequencing (unique barcodes) and whole-genome sequencing confirm accurate replacement and assembly. Phenotypic assays (growth rate, stress tests) confirm functionality.
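The oligonucleotide design step above can be sketched as a simple tiling problem: cover a designed sequence with fixed-length oligos that overlap their neighbours. The function name, the 70 nt oligo length, and the 20 nt overlap are assumptions chosen for illustration; actual Sc2.0 design software also alternates strands and balances melting temperatures, which this sketch ignores.

```python
import random


def tile_oligos(sequence, oligo_len=70, overlap=20):
    """Split a sequence into oligos of oligo_len that overlap neighbours by `overlap` bases.

    The last oligo may be shorter than oligo_len.
    """
    if not 0 <= overlap < oligo_len:
        raise ValueError("overlap must be non-negative and smaller than oligo_len")
    step = oligo_len - overlap
    oligos = []
    start = 0
    while True:
        oligos.append(sequence[start:start + oligo_len])
        if start + oligo_len >= len(sequence):
            break
        start += step
    return oligos


# A random 750 bp stand-in for one designed building block (not a real design).
rng = random.Random(0)
block = "".join(rng.choice("ACGT") for _ in range(750))

oligos = tile_oligos(block, oligo_len=70, overlap=20)

# Sanity check: dropping each oligo's leading overlap reconstructs the block.
reconstructed = oligos[0] + "".join(o[20:] for o in oligos[1:])
```

With these parameters a 750 bp block is covered by 15 oligos, the last of which is 50 nt long.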

Diagram Title: Synthetic Yeast Genome Assembly Workflow

The Future Priority: HUGO's Ecological Genomics Vision

HUGO's emerging priority is to contextualize genomic data within an ecological framework, viewing the human genome as a dynamic component interacting with internal (microbiome, epigenome) and external (environmental, societal) ecosystems. This drives initiatives like the Human Pangenome Reference Consortium (HPRC), which aims to create a representative, high-quality collection of genomes capturing global genetic diversity.

Key Initiative: Human Pangenome Reference

Table 3: Human Pangenome Reference Consortium Goals

| Parameter | Current GRCh38 Reference | HPRC Goal (2024-2026) | Ecological Genomics Implication |

|---|---|---|---|

| Number of Haplotypes | 1 primary assembly + alt loci | 350+ phased diploid genomes from diverse ancestries. | Moves from a single "tree" to a "forest" representing human genomic ecology. |

| Technology | Sanger sequencing of BAC clones | Long-read (PacBio HiFi, ONT), Hi-C, optical mapping. | Resolves complex structural variation, crucial for understanding adaptive and population-specific traits. |

| Representation Gap | >70% of source from single individual. | <0.001% common allele frequency captured for variants. | Reduces bias in variant discovery and clinical interpretation across populations. |

| Access | Static, linear references. | Graph-based reference (minigraph, pggb) incorporating all haplotypes. | Enables equitable analysis of diverse genomes, foundational for ecological studies of human adaptation. |

Experimental Protocol: De Novo Haplotype-Resolved Genome Assembly (HPRC Protocol)

Objective: Generate a complete, phased (haplotype-resolved) diploid genome assembly for an individual. Detailed Methodology:

- Sample & Library Prep: High molecular weight DNA is extracted from cultured cells (e.g., lymphoblastoid cell lines).

- Multi-platform Sequencing:

- PacBio HiFi Sequencing: Provides long (~15-20kb), highly accurate (>99.9%) reads for primary assembly.

- Oxford Nanopore Ultra-long Sequencing: Provides reads >100kb for spanning complex repeats and scaffolding.

- Hi-C Sequencing: Chromatin conformation capture data links contigs into chromosome-scale scaffolds and phases haplotypes.

- Assembly & Phasing:

- Primary Assembly: HiFi reads are assembled with the hifiasm assembler, which uses read overlaps and haplotype-specific k-mers to generate two preliminary haplotype assemblies (hap1, hap2).

- Scaffolding & Phasing: Hi-C data is aligned to the primary assemblies. Juicer/3D-DNA or SALSA2 pipelines are used to order and orient contigs into chromosomes. Hi-C contact patterns between heterozygous sites are used to validate and correct phasing.

- Quality Assessment: Completeness (BUSCO), accuracy (QV score via Merqury), and contiguity (N50/N90) are assessed. Assemblies are compared to previous benchmarks (e.g., CHM13) for validation.
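The contiguity metrics named above have a simple definition: Nx is the length of the contig at which the running total of contig lengths, sorted from longest to shortest, first reaches x% of the assembly. A minimal sketch follows; the function name and the toy contig lengths are illustrative.

```python
def nx(contig_lengths, percent=50):
    """Return the Nx contiguity statistic (N50 by default) for a list of contig lengths."""
    total = sum(contig_lengths)
    running = 0
    for length in sorted(contig_lengths, reverse=True):
        running += length
        # Integer comparison avoids floating-point rounding at the threshold.
        if running * 100 >= percent * total:
            return length
    raise ValueError("contig_lengths must be non-empty")


# Toy assembly with a total length of 300 (arbitrary units).
lengths = [80, 70, 50, 40, 30, 20, 10]
n50 = nx(lengths, 50)  # 80 + 70 = 150 reaches 50% of 300
n90 = nx(lengths, 90)  # 80 + 70 + 50 + 40 + 30 = 270 reaches 90% of 300
```

Because N50 depends only on lengths, it says nothing about correctness, which is why it is reported alongside QV and BUSCO completeness.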

Diagram Title: De Novo Diploid Genome Assembly Pipeline

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Reagents for Genomic Synthesis, Assembly, and Analysis

| Reagent / Material | Function / Application | Example Product / Technology |

|---|---|---|

| BAC (Bacterial Artificial Chromosome) Clones | Large-insert cloning vector for stable propagation of 150-200 kb genomic DNA fragments; foundational for HGP physical mapping. | pBACe3.6, CopyControl BAC Cloning System. |

| High-Fidelity DNA Polymerase | PCR amplification with ultra-low error rates for accurate assembly of synthetic DNA fragments and library preparation. | Q5 High-Fidelity DNA Polymerase (NEB), Phusion Plus PCR Master Mix (Thermo). |

| Gibson Assembly Master Mix | Enzymatic, isothermal assembly of multiple overlapping DNA fragments via 5' exonuclease, polymerase, and ligase activity. | NEBuilder HiFi DNA Assembly Master Mix (NEB). |

| Yeast Homologous Recombination Strains | Engineered yeast strains (e.g., S. cerevisiae) with high recombination efficiency for assembling large synthetic DNA constructs. | S. cerevisiae VL6-48N (MATα) strain. |

| PacBio SMRTbell Template Prep Kit | Preparation of hairpin-ligated DNA libraries for PacBio HiFi sequencing, enabling long-read, high-accuracy sequencing. | SMRTbell Prep Kit 3.0 (PacBio). |

| D10 Nucleofector Solution & Kit | High-efficiency transfection of large DNA constructs (e.g., synthesized genomes) into mammalian cells. | Cell Line Nucleofector Kit D (Lonza). |

| Chromium Genome Kit (10x Genomics) | Preparation of barcoded linked-read libraries for haplotype phasing and structural variant detection from short reads. | Chromium Genome Reagent Kit v3. |

| Bionano Genomics Saphyr System Reagents | Labeling and imaging reagents for high-throughput optical genome mapping to detect large structural variations and scaffold assemblies. | DLS (Direct Label and Stain) Kit. |

Framing within the HUGO Ecological Genomics Vision

The Human Genome Organisation (HUGO) has progressively expanded its vision from a static reference sequence to a dynamic framework for understanding human genomic function in context. Its ecological genomics vision emphasizes that genomic interpretation is inseparable from environmental exposure and phenotypic manifestation. This whitepaper details the GPE Triad as the operational model for this vision, providing a technical roadmap for dissecting the mechanisms by which environment modulates the genotype-phenotype map, with direct implications for precision medicine and therapeutic development.

Core Principles & Quantitative Framework of the GPE Triad

The GPE Triad posits that phenotype (P) is a function of genotype (G), environment (E), and their interaction (GxE): P = f(G, E, GxE). Disentangling these components requires high-dimensional data integration.

Table 1: Core Data Types and Scales in GPE Triad Analysis

| Component | Data Layer | Key Technologies | Typical Scale/Units |

|---|---|---|---|

| Genotype (G) | Genetic Variation | Whole-Genome Sequencing, SNP Arrays | 3.2e9 bp; 4-5e6 variants/individual |

| Environment (E) | Exposome | Geo-mapping, Wearable Sensors, Mass Spectrometry (Metabolomics) | 100s-1000s of chemical, physical, social factors |

| Phenotype (P) | Deep Phenotyping | Clinical Imaging, Transcriptomics, Proteomics, Digital Phenotyping | 10s-1000s of molecular & clinical traits |

| Interaction (GxE) | Multi-omic Response | ATAC-seq, Methylation Arrays, Single-Cell Multiome | Epigenetic changes (e.g., Δβ methylation >0.1) |

Experimental Protocols for GxE Dissection

Protocol 2.1: Controlled Environmental Exposure in Cellular Models

- Objective: To quantify genotype-specific transcriptional responses to a defined environmental stimulus.

- Materials: iPSC-derived cell lines from genetically diverse donors, environmental agent (e.g., 100 µg/mL particulate matter extract, 1 nM TNF-α), control vehicle.

- Procedure:

  - Differentiate iPSCs from ≥5 donors (covering relevant genetic variants) into target cells (e.g., bronchial epithelial cells).

  - Plate cells in triplicate. At 80% confluence, treat with stimulus or vehicle for 6h.

  - Harvest cells for bulk RNA-seq (≥20 million reads/sample). Extract total RNA with TRIzol, prepare libraries (e.g., poly-A selection).

- Analysis: Map reads (STAR), quantify gene expression (featureCounts). Identify: a) Differentially Expressed Genes (DEGs) by stimulus (FDR<0.05), b) Interaction eQTLs (ieQTLs) via linear model Expression ~ Genotype + Treatment + Genotype:Treatment.
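The interaction model in the analysis step can be illustrated for a single gene with ordinary least squares. The sketch below is a standard-library-only illustration, assuming genotype is coded as allele dosage (0, 1, 2) and treatment as 0/1; the function name and the synthetic, noise-free data are hypothetical. A real ieQTL analysis would add covariates, standard errors, and multiple-testing correction using a statistics package.

```python
def fit_interaction_model(genotype, treatment, expression):
    """OLS fit of expression = b0 + bG*G + bT*T + bGT*(G*T), via the normal equations."""
    rows = [[1.0, g, t, g * t] for g, t in zip(genotype, treatment)]
    k = 4
    # Build X^T X and X^T y.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, expression)) for i in range(k)]
    # Solve by Gauss-Jordan elimination with partial pivoting on the augmented matrix.
    aug = [xtx[i] + [xty[i]] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("design matrix is rank-deficient")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(k):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    beta = [aug[i][k] / aug[i][i] for i in range(k)]
    names = ["intercept", "genotype", "treatment", "genotype:treatment"]
    return dict(zip(names, beta))


# Synthetic data: six donors (two per dosage class), each measured under vehicle and stimulus.
genotype = [0, 0, 0, 0, 1, 1, 1, 1, 2, 2, 2, 2]
treatment = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1]
# Simulated without noise from: expression = 5 + 0.5*G + 2*T + 1.5*G*T
expression = [5 + 0.5 * g + 2 * t + 1.5 * g * t for g, t in zip(genotype, treatment)]

coefficients = fit_interaction_model(genotype, treatment, expression)
```

The `genotype:treatment` coefficient is the quantity of interest: it measures how much the stimulus response changes per additional copy of the allele.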

Protocol 2.2: Longitudinal Exposome and Phenotype Tracking in Cohorts

- Objective: To correlate dynamic environmental exposure with continuous phenotypic biomarkers and identify genetic moderators.

- Materials: Cohort participants, GPS-enabled activity trackers, portable air monitors, serial biospecimen (blood, urine) collection kits.

- Procedure:

  - Recruit cohort with pre-genotyped data. Equip participants with wearables for 30 days to track location, physical activity, and real-time PM2.5/NO2 exposure.

  - Collect weekly dried blood spots (DBS) for targeted metabolomics (e.g., inflammation-related lipids) and high-sensitivity CRP measurement.

  - Geocode data to link individual exposure to satellite-derived environmental data.

- Analysis: Fit mixed-effects models: Phenotype_t ~ Baseline_P + CumulativeExposure_{t-1} + (1 | Participant_ID). Test for moderation by genetic variants by adding a Genotype × CumulativeExposure_{t-1} interaction term to the model.
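Before the mixed-effects model can be fitted, the lagged exposure covariate has to be derived from the raw sensor summaries. The sketch below shows one way to do this, assuming one aggregated exposure value per participant per week; the function names, record layout, and numbers are hypothetical, and the model fitting itself (for example with a dedicated mixed-model package) is not shown.

```python
from itertools import accumulate


def lagged_cumulative_exposure(weekly_exposure):
    """For each week t, return the cumulative exposure accrued through week t-1."""
    cumulative = list(accumulate(weekly_exposure))
    return [0.0] + cumulative[:-1]


def build_model_rows(participant_id, baseline, weekly_exposure, weekly_phenotype):
    """Long-format rows (one per participant-week) ready for a mixed-effects fit."""
    lagged = lagged_cumulative_exposure(weekly_exposure)
    return [
        {
            "participant_id": participant_id,
            "week": week,
            "baseline": baseline,
            "cumulative_exposure_lag1": exposure,
            "phenotype": phenotype,
        }
        for week, (exposure, phenotype) in enumerate(zip(lagged, weekly_phenotype), start=1)
    ]


# Invented example: weekly mean PM2.5 (µg/m³) and hs-CRP (mg/L) for one participant.
pm25 = [10.0, 12.0, 8.0, 15.0]
crp = [1.1, 1.3, 1.2, 1.6]
rows = build_model_rows("P001", baseline=1.0, weekly_exposure=pm25, weekly_phenotype=crp)
```

Lagging by one interval ensures that the exposure used to explain a measurement was accrued strictly before that measurement was taken.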

Key Signaling Pathways in GPE Integration

Environmental sensors (e.g., aryl hydrocarbon receptor, NRF2) transduce signals that alter gene expression and phenotype, modulated by genetic background.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for GPE Triad Research

| Reagent/Material | Provider Examples | Function in GPE Research |

|---|---|---|

| Human Diversity Panel iPSCs | Coriell, Cellular Dynamics | Genetically diverse cellular substrate for controlled GxE experiments. |

| Exposome-Relevant Agonists | Sigma-Aldrich, Cayman Chemical | Defined chemical stimuli (e.g., AhR ligands, oxidative stressors) for in vitro exposure. |

| Multiplex Assay Panels | Meso Scale Discovery, Luminex | Quantify dozens of protein cytokines/chemokines from limited biospecimens, capturing phenotypic response. |

| Methylation EPIC BeadChip | Illumina | Genome-wide profiling of DNA methylation, a key mediator of environmental impact on the genome. |

| Single-Cell Multiome ATAC + Gene Exp. | 10x Genomics | Simultaneously profile chromatin accessibility (environment-influenced) and transcriptome in single cells. |

| Geo-Coding & Exposure Software | ESRI ArcGIS, Google Earth Engine | Link individual participant locations to spatial environmental databases (air, water, green space). |

Integrated Data Analysis Workflow

A systematic computational pipeline is required to integrate G, P, and E data layers.

Operationalizing the HUGO ecological genomics vision requires a steadfast commitment to the GPE Triad model. Moving forward, challenges include standardizing exposome measurement, developing robust multi-omic interaction models, and creating shared computational resources. Success will translate into a new generation of environment-aware therapeutics and personalized health recommendations grounded in a comprehensive understanding of genomic function.

The Human Genome Organisation’s (HUGO) ecological genomics vision posits that human genetic variation is a product of dynamic interaction with ecological and environmental factors across time and space. This framework challenges the static, population-specific models that have historically dominated genomics. Biobanks, as critical infrastructures for biomedical discovery, must evolve to reflect this ecological complexity. The current lack of global diversity in biobanks constitutes a significant scientific and ethical failure, directly undermining the HUGO vision and perpetuating health disparities. This whitepaper outlines the technical and ethical imperatives for creating globally inclusive biobanks, ensuring that genomic research benefits all humanity.

The State of Global Genomic Diversity in Major Biobanks

Current genomic databases suffer from severe ancestral bias. The following table summarizes the proportional representation of major ancestral groups in leading public and commercial biobanks as of recent analyses.

Table 1: Ancestral Representation in Major Genomic Biobanks and Databases

| Biobank / Database | Approx. Total Sample Size | European Ancestry (%) | East Asian Ancestry (%) | African Ancestry (%) | South Asian Ancestry (%) | Admixed/Latin American (%) | Other/Underrepresented (%) |

|---|---|---|---|---|---|---|---|

| UK Biobank | 500,000 | 94% | 0% | 1.5% | 2.5% | 0% | 2% |

| All of Us (US) | ~413,000 (w/ genotyping) | 46% | 2% | 22% | 2% | 18% | 10% |

| FinnGen | 500,000 | ~100% | 0% | 0% | 0% | 0% | 0% |

| BioBank Japan | 200,000 | 0% | ~100% | 0% | 0% | 0% | 0% |

| gnomAD v3.1 | 76,156 genomes | 44% | 10% | 34% | 6% | 5% | <1% |

| TOPMed | ~180,000 genomes | 38% | 4% | 30% | 5% | 20% | 3% |

Table 2: Clinical Impact of Diversity Gaps: Polygenic Risk Score (PRS) Performance

| Disease/Trait | PRS Developed in EUR Population | Transferability (AUC reduction in AFR population) | Key Missing Variants (MAF <1% in EUR, >5% in AFR) |

|---|---|---|---|

| Type 2 Diabetes | High (AUC 0.75) | -15% to -20% | SLC30A8 (p.Arg138*), HNF1A rare variants |

| Breast Cancer | High (AUC 0.68) | -10% to -18% | BRCA1 Founder variants (e.g., c.5266dup) |

| Schizophrenia | Moderate (AUC 0.65) | -25% to -30% | Rare non-coding regulatory variants |

| Cholesterol Levels | High (R² 0.30) | -50% to -70% (R² <0.10) | PCSK9 loss-of-function variants (e.g., R46L) |

Foundational Methodologies for Inclusive Biobanking

Protocol: Community-Engaged Sample and Data Collection Framework

This protocol ensures ethical recruitment and sustained engagement with historically underrepresented communities.

Materials & Reagents: 1) Culturally adapted informed consent forms (digital and paper); 2) Multi-lingual data collection platforms (e.g., REDCap with translation modules); 3) Portable phlebotomy kits for remote collection; 4) Stable DNA/RNA preservative tubes (e.g., PAXgene); 5) Temperature-monitored shipping containers.

Procedure:

- Pre-Engagement & Governance: Establish a Community Advisory Board (CAB) comprising local leaders, ethicists, and potential participants. Co-develop research priorities, consent protocols, and data governance models, including explicit terms for data reuse and benefit-sharing.

- Consent Process: Implement a tiered consent model allowing participants to choose levels of data sharing (e.g., project-specific, broad for health research, no commercial use). Use interactive digital tools with visual aids to explain genomics concepts.

- Phenotypic Data Capture: Collect comprehensive data using standardized ontologies (e.g., HPO, SNOMED CT). Include environmental exposure assessments (geolinked air/water quality, dietary surveys) and social determinants of health (SDOH) metrics.

- Biospecimen Collection: Collect venous blood (for DNA, plasma), saliva (Oragene kits), and, where relevant, tissue biopsies. Process samples within 24 hours using standardized SOPs.

- Data Linkage & Return of Results: Develop pipelines for linkage to electronic health records (EHRs) with privacy safeguards. Establish a clinically actionable variant return pipeline, with genetic counseling support provided in the participant's preferred language.

Protocol: Whole Genome Sequencing & Imputation for Diverse Cohorts

Standard reference panels impute rare and population-specific variants poorly in underrepresented groups. This protocol builds population-specific imputation resources.

Materials & Reagents: 1) High-molecular-weight DNA; 2) PCR-free WGS library prep kits (e.g., Illumina TruSeq DNA PCR-Free); 3) Whole Genome Sequencing platforms (e.g., Illumina NovaSeq X); 4) Population-specific haplotype reference panels (e.g., generated de novo); 5) High-performance computing cluster with >1PB storage.

Procedure:

- DNA QC: Verify DNA purity by spectrophotometry (A260/A280 ~1.8, A260/A230 >2.0), concentration by Qubit fluorometry, and fragment size (avg. fragment >50kb) by agarose gel electrophoresis.

- Library Preparation & Sequencing: Perform PCR-free library preparation to minimize GC bias. Sequence to a minimum mean coverage of 30x using 150bp paired-end reads.

- Variant Discovery Pipeline: a. Alignment: Map reads to a T2T-CHM13 reference genome using BWA-MEM2. b. Variant Calling: Run GATK HaplotypeCaller per sample in GVCF mode, then joint-genotype all samples in the cohort with GenotypeGVCFs. c. Variant Quality Score Recalibration (VQSR): Train VQSR models using population-specific truth sets, not standard HapMap/Omni resources.

- Reference Panel Creation: Use Eagle2 or SHAPEIT4 for phasing. Combine high-quality, population-specific WGS data to create a new imputation reference panel.

- Imputation Performance Validation: Mask genotypes from a subset of WGS data and impute them using the new panel versus standard panels (e.g., 1000G, TOPMed). Compare r² and allelic concordance rates for rare (MAF 0.1-1%) and ultra-rare (<0.1%) variants.
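The masking comparison in the last step reduces to a per-variant squared correlation between true genotypes and imputed dosages, grouped by frequency class. The sketch below shows that calculation under simple assumptions: genotypes are coded 0/1/2, dosages are floats in [0, 2], and the function names and example values are hypothetical.

```python
def squared_correlation(true_genotypes, imputed_dosages):
    """Squared Pearson correlation (r²) between masked truth and imputed dosage."""
    n = len(true_genotypes)
    mean_t = sum(true_genotypes) / n
    mean_i = sum(imputed_dosages) / n
    sxy = sum((t - mean_t) * (d - mean_i) for t, d in zip(true_genotypes, imputed_dosages))
    sxx = sum((t - mean_t) ** 2 for t in true_genotypes)
    syy = sum((d - mean_i) ** 2 for d in imputed_dosages)
    if sxx == 0 or syy == 0:
        return None  # r² is undefined for a monomorphic variant
    return (sxy * sxy) / (sxx * syy)

def maf_bin(true_genotypes):
    """Assign a variant to the frequency classes used in the protocol."""
    allele_freq = sum(true_genotypes) / (2 * len(true_genotypes))
    maf = min(allele_freq, 1 - allele_freq)
    if maf < 0.001:
        return "ultra-rare"
    if maf < 0.01:
        return "rare"
    return "common"

# Hypothetical masked variant: six samples, truth versus imputed dosage.
truth = [0, 1, 2, 0, 1, 0]
dosage = [0.1, 0.9, 1.8, 0.0, 1.2, 0.2]
r2 = squared_correlation(truth, dosage)
frequency_class = maf_bin(truth)
```

Averaging r² within each frequency class, once for the new panel and once for each standard panel, gives the comparison described above.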

Key Signaling Pathways & Analytical Workflows

Figure 1: Integrated Ecological Genomics Analysis Workflow.

Figure 2: Dynamic Ethical Governance Structure for Biobanks.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Research Reagents & Materials for Inclusive Genomic Studies

| Item/Category | Specific Product/Example | Function & Rationale |

|---|---|---|

| DNA Collection & Stabilization | Oragene•DNA / PAXgene Blood DNA tubes | Enables non-invasive, stable saliva collection and high-quality DNA from blood without immediate freezing, crucial for field work in diverse settings. |

| PCR-Free WGS Kits | Illumina TruSeq DNA PCR-Free, Twist Bioscience NGS Enzymatic Fragmentation Kit | Eliminates PCR amplification bias, providing uniform coverage across GC-rich and repetitive regions, essential for accurate variant calling in all genomes. |

| Targeted Enrichment for Understudied Variants | Custom IDT xGen or Twist Pan-African/AI/Indigenous Focus Panels | Probes designed for variants common in specific underrepresented populations but absent from commercial panels, enabling cost-effective deep sequencing of relevant genomic regions. |

| Long-Read Sequencing Platforms | PacBio Revio, Oxford Nanopore PromethION 2 | Resolves complex structural variants, phasing, and repetitive regions (e.g., HLA, CYP) where short-read data fails, capturing diversity missed by standard WGS. |

| Multi-Ethnic Genotype Array | Illumina Global Diversity Array, UK Biobank Axiom Array | Includes content from 1000G Phase 3 and population-specific variants, providing a cost-effective first-pass genotyping tool for diverse cohorts prior to WGS. |

| Bioinformatics Pipelines | GATK Best Practices (Modified), imputation servers (TOPMed, pan-ancestry) | Standardized but adaptable pipelines. Must use population-specific training sets for VQSR and employ diverse reference panels for accurate imputation. |

| Cell Line Generation | Epstein-Barr Virus (EBV) Transformation kits, Lymphoprep | Creates immortalized lymphoblastoid cell lines (LCLs) from donor blood, providing a renewable resource for functional assays and multi-omics studies. |

Aligning biobanking practices with the HUGO ecological genomics vision requires a dual commitment: technical rigor in capturing genomic complexity and an unwavering ethical commitment to inclusivity and justice. This entails moving beyond mere sample collection to building enduring, equitable partnerships. The protocols, tools, and frameworks outlined herein provide a roadmap for creating biobanks that are truly global, thereby unlocking the full potential of genomic medicine for every human population in their unique ecological context.

From Vision to Pipeline: Methodologies for Ecological Genomics in Biomedical Research

Advanced Sequencing Technologies (Long-Read, Spatial Transcriptomics) Enabling Ecological Studies

The Human Genome Organisation (HUGO) has long championed the comprehensive understanding of genomic variation and its functional consequences. Extending this vision to ecological genomics, HUGO emphasizes the need to decipher the intricate interplay between organisms, their genomes, and their environment at unprecedented resolution. This paradigm shift from single-organism to ecosystem-scale genomics is now being powered by advanced sequencing technologies. Long-read sequencing breaks the constraints of short genomic fragments, enabling the assembly of complex genomes and the direct detection of epigenetic modifications across entire ecosystems. Spatial transcriptomics transcends bulk tissue analysis, mapping gene expression to its precise ecological context, such as within a soil microbiome matrix or a host-pathogen interface in a natural setting. This whitepaper details the technical application of these technologies, providing a guide for researchers to harness them for transformative ecological studies aligned with the HUGO ecological genomics vision.

Technical Guide: Long-Read Sequencing in Ecological Genomics

2.1 Core Technologies and Comparative Metrics

Long-read platforms provide continuous sequence reads spanning thousands to millions of bases, revolutionizing the study of complex ecological samples.

Table 1: Comparative Analysis of Primary Long-Read Sequencing Platforms (2023-2024)

| Platform (Company) | Technology | Average Read Length (N50) | Accuracy (Raw/CCS) | Key Ecological Application | Throughput per Run |

|---|---|---|---|---|---|

| PacBio Revio (PacBio) | HiFi Circular Consensus Sequencing (CCS) | 15-20 kb | >99.9% (Q30) | Eukaryotic genome assembly, haplotype phasing in wild populations, precise metabarcoding. | 120-140 Gb |

| Oxford Nanopore PromethION 2 (ONT) | Nanopore Electronic Sensing | 10-100+ kb (theoretical >4 Mb) | ~98-99% raw (Q20-30), >99.9% with Duplex | Metagenome-assembled genomes (MAGs), direct RNA sequencing, real-time in-field surveillance. | 100-200+ Gb |

| Ultima Genomics UG 100 | Mostly natural sequencing-by-synthesis (short-read; listed for cost comparison) | ~300 bp (not a long-read platform) | Data pending | Potential for high-volume, low-cost ecological surveys. | Up to 1 Tb |

2.2 Detailed Experimental Protocol: Generating a Chromosome-Scale Assembly for a Non-Model Organism

Objective: De novo genome assembly of a keystone plant species from a natural population.

Workflow:

- Sample Collection & High Molecular Weight (HMW) DNA Extraction: Flash-freeze leaf tissue in liquid nitrogen. Use a CTAB-based method with RNase A treatment, followed by HMW DNA isolation via magnetic bead-based size selection (e.g., SRE kit from Circulomics/PacBio). Assess integrity via pulsed-field gel electrophoresis (PFGE) or the Femto Pulse system; target DNA fragments >50 kb.

- Library Preparation & Sequencing (PacBio HiFi): Shear HMW DNA to ~15-20 kb target size using a g-TUBE or Megaruptor. Prepare SMRTbell library using the Express Template Prep Kit 2.0. Perform size selection with AMPure PB beads. Sequence on a Revio system using Revio SMRT Cells (25M ZMWs) with the instrument-matched binding and sequencing kits; 8M SMRT Cells, 30-hour movies, and Sequel II binding kits apply to Sequel II/IIe systems instead.

- Library Preparation & Sequencing (Oxford Nanopore): Use the Ligation Sequencing Kit V14 (SQK-LSK114) with native barcoding expansion (EXP-NBD114). Load onto a PromethION R10.4.1 flow cell and run for 72 hours via MinKNOW.

- Data Processing & Assembly:

  - PacBio HiFi: Generate HiFi reads using `ccs` (circular consensus sequencing). Assemble with `hifiasm` or `flye`. Polish if necessary with the HiFi data itself.

  - Oxford Nanopore: Perform basecalling with `dorado` in super-accuracy mode. Assemble long reads with `flye` or `nextdenovo`. Polish with `medaka`.

- Scaffolding & Quality Assessment: Use Hi-C data (from the same individual) with `Juicer` and `3D-DNA` or `SALSA2` to achieve chromosome-scale scaffolding. Assess completeness with `BUSCO` against the appropriate lineage database (e.g., embryophyta_odb10).
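Alongside BUSCO completeness, contiguity is usually summarized by N50, the length of the contig at which the cumulative sorted lengths first reach half of the assembly size. A minimal sketch of that calculation follows; the contig lengths are made-up numbers for illustration.

```python
def n50(contig_lengths):
    """Return the N50 of an assembly given its contig (or scaffold) lengths."""
    total = sum(contig_lengths)
    running = 0
    for length in sorted(contig_lengths, reverse=True):
        running += length
        # First contig at which the running sum covers at least half the assembly.
        if running * 2 >= total:
            return length
    return 0  # empty input

# Hypothetical contig lengths in kb before and after scaffolding.
contigs_kb = [80, 70, 50, 40, 30, 20]
scaffolds_kb = [150, 90, 50]

contig_n50 = n50(contigs_kb)
scaffold_n50 = n50(scaffolds_kb)
```

Comparing the value before and after Hi-C scaffolding gives a quick check that scaffolding actually joined contigs rather than only reordering them.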

Diagram Title: Long-Read Genome Assembly & Hi-C Scaffolding Workflow

Technical Guide: Spatial Transcriptomics in Ecological Contexts

3.1 Core Technologies and Spatial Resolution

Spatial transcriptomics captures the entire transcriptome while retaining two-dimensional positional information, critical for understanding microenvironmental interactions.

Table 2: Spatial Transcriptomics Platforms for Ecological Tissue Sections

| Platform / Method | Spatial Resolution | Throughput (Genes) | Requires Pre-Defined Genes? | Ecological Application Example |

|---|---|---|---|---|

| 10x Genomics Visium | 55 µm spot diameter (100 µm spot center-to-center) | Whole Transcriptome (~18,000 genes) | No | Host-pathogen interaction zones in coral or plant leaves; spatial mapping of biosynthetic gene clusters in microbial mats. |

| Nanostring GeoMx Digital Spatial Profiler (DSP) | ROI-based (1-600 µm) | Whole Transcriptome or Protein (~18,000+ targets) | Yes (for WTA) | Profiling specific symbiotic structures (e.g., root nodules, lichen thalli) or lesion sites in wildlife disease. |

| MERFISH / seqFISH+ | Subcellular (~0.1-1 µm) | Hundreds to thousands | Yes | Ultra-high-resolution mapping of microbial consortia spatial organization. |

| Slide-seq / Visium HD | ~2-5 µm (near-cellular) | Whole Transcriptome | No | Cellular-level ecology within complex tissues like gut microbiomes in situ. |

3.2 Detailed Experimental Protocol: Spatial Host-Microbiome Profiling with Visium

Objective: Map the transcriptomic landscape of a coral polyp section and its associated symbiotic algae (Symbiodiniaceae) and bacteria.

Workflow:

- Sample Preparation: Snap-freeze a coral fragment in liquid nitrogen. Embed in Optimal Cutting Temperature (OCT) compound. Cryosection at 10 µm thickness onto a Visium Spatial Gene Expression slide. Immediately fix tissue with chilled methanol. Stain with Hematoxylin and Eosin (H&E) and image at high resolution.

- Permeabilization Optimization: Perform a tissue optimization slide run to determine the ideal permeabilization time (e.g., 12, 18, 24 minutes) using the provided fluorescent RNA-binding probes to maximize cDNA yield from both coral host and microbial RNA.

- Spatial Library Construction: On the main slide, perform permeabilization (using optimized time) to release RNA, which is captured by spatially barcoded oligo-dT primers on the slide. Synthesize cDNA in situ. Harvest cDNA, amplify via PCR, and construct Illumina-compatible libraries following the Visium User Guide.

- Sequencing & Data Analysis: Sequence on an Illumina NextSeq 2000 (P3 100 cycle kit, aiming for ~50,000 read pairs per spot). Align reads to a combined reference genome (coral host + Symbiodiniaceae clade reference + common bacterial symbionts) using `Space Ranger`. Perform downstream analysis in `Seurat` (R) with spatial functions to identify spatially variable gene modules, correlate host immune response zones with microbial presence, and visualize expression gradients.
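The per-spot depth target in the sequencing step translates into a run-level read budget once the number of tissue-covered spots is known. The sketch below works through that arithmetic; the 4,992-spot capture area and the 60% tissue coverage are assumptions for illustration and should be replaced with the values for the slide and section actually used.

```python
def required_read_pairs(spots_under_tissue, read_pairs_per_spot=50_000):
    """Total read pairs needed to reach the per-spot depth target."""
    return spots_under_tissue * read_pairs_per_spot

# Assumed values: spots per standard capture area and fraction covered by tissue.
TOTAL_SPOTS_PER_CAPTURE_AREA = 4992
tissue_coverage_fraction = 0.60  # hypothetical, estimated from the H&E image

spots_under_tissue = round(TOTAL_SPOTS_PER_CAPTURE_AREA * tissue_coverage_fraction)
read_budget = required_read_pairs(spots_under_tissue)

print(f"{spots_under_tissue} spots -> {read_budget:,} read pairs")
```

Because the combined reference includes symbiont and bacterial sequences, some projects may budget additional depth so that low-abundance microbial transcripts are still sampled.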

Diagram Title: Spatial Transcriptomics Workflow for Host-Microbe Systems

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Advanced Ecological Sequencing

| Item Name (Example) | Category | Primary Function in Ecological Studies |

|---|---|---|

| Circulomics SRE (Short Read Eliminator) Kit | HMW DNA Prep | Selectively removes short, fragmented DNA from environmental or host extracts, enriching for long, intact molecules crucial for long-read assembly of complex genomes/MAGs. |

| PacBio SMRTbell Express Template Prep Kit 2.0 | Long-Read Library Prep | Prepares sheared, size-selected HMW DNA into SMRTbell libraries for PacBio HiFi sequencing, enabling high-accuracy long reads from mixed samples. |

| Oxford Nanopore Ligation Sequencing Kit V14 | Long-Read Library Prep | Prepares DNA (or direct RNA) libraries for nanopore sequencing, facilitating real-time, ultra-long read generation ideal for in-field pathogen surveillance or metagenomics. |

| 10x Genomics Visium Spatial Tissue Optimization Slide | Spatial Transcriptomics | Determines the optimal tissue permeabilization condition for a new ecological sample type (e.g., insect cuticle, plant bark, fungal tissue) to maximize RNA capture efficiency. |

| Visium Spatial Gene Expression Slide & Reagents | Spatial Transcriptomics | The core consumable for capturing spatially barcoded whole transcriptome data from a tissue section, enabling mapping of gene expression to ecological micro-niches. |

| Nanostring GeoMx Human/Mouse Whole Transcriptome Atlas | Spatial Profiling | A pre-designed probe set for DSP enabling whole transcriptome analysis of any ROI in samples where host is model-adjacent (e.g., rodent disease reservoirs), adaptable via custom probes. |

| DNeasy PowerSoil Pro Kit (Qiagen) | Environmental DNA Extraction | Standardized, high-yield extraction of inhibitor-free DNA from challenging environmental samples (soil, sediment, feces) for subsequent long or short-read metabarcoding. |

| RNAlater Stabilization Solution | RNA Preservation | Rapidly penetrates and stabilizes cellular RNA in field-collected specimens, preserving the transcriptional state at the moment of sampling for later spatial or bulk analysis. |

Computational Tools for Pan-Genome Analysis and Structural Variation Discovery

The Human Genome Organisation’s (HUGO) Ecological Genomics Vision emphasizes understanding human genetic diversity within the broader context of environmental and evolutionary pressures. This framework necessitates a shift from single linear reference genomes to pan-genomes, which capture the full complement of genes and sequences within a species, including structural variants (SVs). Computational pan-genome analysis is fundamental to this vision, enabling the discovery of SVs that contribute to phenotypic diversity, disease susceptibility, and adaptive traits across populations.

Core Computational Tools and Data Presentation

The landscape of computational tools is segmented by primary function. The following tables summarize key quantitative performance metrics and characteristics based on recent benchmarking studies (2023-2024).

Table 1: Pan-Genome Graph Construction & Indexing Tools

| Tool | Core Algorithm | Input | Output Graph Type | Key Metric (Indexing Speed)* | Key Metric (Index Size)* |

|---|---|---|---|---|---|

| vg | Variation Graph | VCF, Reference FASTA | Variation Graph | ~4 hours (1000GP chr20) | ~1.8 GB (1000GP chr20) |

| Minigraph | Minimizer-based chaining | Assemblies, Reference | Pangenome Graph (rGFA) | ~1 hour (CHM13+12 assm.) | ~0.5 GB (CHM13+12 assm.) |

| Minigraph-Cactus | Cactus progressive alignment | Assemblies | Pangenome Graph (GFA) | ~10 hours (100 verteb. genomes) | Varies with complexity |

| pggb | wfmash / seqwish | Assemblies, Haplotypes | Pangenome Graph (GFA) | ~2 hours (54 human hap.) | ~700 MB (54 human hap.) |

*Metrics are illustrative and dataset-dependent. 1000GP: 1000 Genomes Project; assm.: assemblies; hap.: haplotypes.

Table 2: Structural Variation Discovery & Genotyping Tools

| Tool | SV Type Detected | Primary Input | Key Metric (Recall)* | Key Metric (Precision)* | Specialization |

|---|---|---|---|---|---|

| Sniffles2 | INS, DEL, DUP, INV, BND | Long-read alignment (BAM) | 0.92 | 0.89 | Long-read optimized |

| cuteSV2 | INS, DEL, DUP, INV, BND | Long-read alignment (BAM) | 0.90 | 0.93 | Population-scale long-read |

| Delly2 | DEL, DUP, INV, BND, INS | Short-read alignment (BAM) | 0.85 | 0.88 | Short-read, paired-end |

| Manta | DEL, DUP, INV, BND, INS | Short-read alignment (BAM) | 0.88 | 0.95 | Germline & somatic |

| SVIM-asm | INS, DEL, DUP, INV | Genome Assemblies | 0.87 | 0.91 | Assembly-based |

*Example metrics for DEL/INS >50bp on simulated PacBio CLR data (Sniffles2, cuteSV2) or Illumina (Delly2, Manta).
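Recall and precision are often combined into a single F1 score when comparing callers. The sketch below computes F1 from the illustrative values in Table 2; because those values come from different data types, the resulting ranking is only a demonstration of the arithmetic, not a benchmark result.

```python
def f1_score(recall, precision):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

# (recall, precision) pairs copied from Table 2 above.
table_metrics = {
    "Sniffles2": (0.92, 0.89),
    "cuteSV2": (0.90, 0.93),
    "Delly2": (0.85, 0.88),
    "Manta": (0.88, 0.95),
    "SVIM-asm": (0.87, 0.91),
}

f1_by_tool = {tool: f1_score(r, p) for tool, (r, p) in table_metrics.items()}
ranked = sorted(f1_by_tool, key=f1_by_tool.get, reverse=True)

for tool in ranked:
    print(f"{tool}: F1 = {f1_by_tool[tool]:.3f}")
```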

Experimental Protocols

Protocol 1: Building and Querying a Population-Scale Pan-Genome Graph

Objective: Construct a chromosome-specific pan-genome graph from multiple high-quality assemblies and genotype variants in a sample.

Materials: High-quality haplotype-resolved assemblies (FASTA), reference genome (FASTA), HPRC or similar data.

Method:

- Graph Construction:

  a. Use `minigraph` to create an initial graph: `minigraph -cxggs ref.fa haplotype1.fa haplotype2.fa ... > graph.gfa`

  b. Refine with `minigraph-cactus` or `pggb` for improved alignment: `pggb -i input.fa -o output_dir -p 90 -s 50000 -n 50`

- Graph Indexing:

  a. Convert graph to vg format: `vg convert graph.gfa > graph.vg`

  b. Index with `vg autoindex`, writing all index files under the prefix `index`: `vg autoindex --workflow giraffe -r ref.fa -v population.vcf.gz -p index -t 16`

- Read Mapping and Genotyping:

  a. Map sequencing reads to the graph and save the alignments: `vg giraffe -Z index.giraffe.gbz -m index.min -d index.dist -f sample.fq -o gam > alignments.gam`

  b. Pack alignments: `vg pack -x graph.xg -g alignments.gam -o sample.pack`

  c. Call variants: `vg call graph.xg -k sample.pack > sample.vcf`
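Before indexing, it can be useful to sanity-check the GFA produced during graph construction. The sketch below counts segments and links and sums segment sequence length from GFA text lines; it is a simplified reader written for illustration (it ignores paths, walks, and optional tags), and the tiny example graph is invented.

```python
def summarize_gfa(lines):
    """Count segments (S), links (L), and total segment sequence length in a GFA."""
    segments = 0
    links = 0
    total_bp = 0
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        if fields[0] == "S" and len(fields) >= 3:
            segments += 1
            # A "*" placeholder means the sequence is stored elsewhere.
            if fields[2] != "*":
                total_bp += len(fields[2])
        elif fields[0] == "L":
            links += 1
    return {"segments": segments, "links": links, "total_bp": total_bp}

# Invented three-node bubble: s1 branches to s2 or s3.
example_gfa = [
    "H\tVN:Z:1.0",
    "S\ts1\tACGT",
    "S\ts2\tG",
    "S\ts3\tTTA",
    "L\ts1\t+\ts2\t+\t0M",
    "L\ts1\t+\ts3\t+\t0M",
]
summary = summarize_gfa(example_gfa)
```

For a real graph the same function could be applied to an open file handle, for example `summarize_gfa(open("graph.gfa"))`, where the file name follows the protocol above.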

Protocol 2: Integrated SV Discovery from Long-Read Sequencing

Objective: Identify high-confidence SVs using PacBio HiFi or ONT data.

Materials: Long-read FASTQ, reference genome (FASTA).

Method:

- Reference Alignment:

  a. Align reads with `minimap2` (use `map-ont` instead of `map-hifi` for ONT reads): `minimap2 -ax map-hifi ref.fa sample.fq --secondary=no | samtools sort -o aligned.bam`

  b. Index BAM: `samtools index aligned.bam`

- SV Calling with Sniffles2:

  a. Call SVs: `sniffles --input aligned.bam --vcf output.vcf --reference ref.fa --threads 16`

  b. For population calling, create a Sniffles `.snf` file for each sample (`sniffles --input aligned.bam --snf sample.snf`), then merge: `sniffles --input sample_list.tsv --vcf population.vcf`, where `sample_list.tsv` lists the per-sample `.snf` files.

- SV Filtering and Annotation:

  a. Filter for precision: Use `bcftools` to filter on `SUPPORT`, `SVLEN`, and `QUAL`. `bcftools view -i 'SUPPORT>=5 && SVLEN>=50 && QUAL>10' output.vcf > filtered.vcf`

  b. Annotate with `SnpEff` or `VEP` using a custom database built from the pan-genome.
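The filtering thresholds above can also be applied in a short script, which makes the handling of signed lengths explicit. This sketch assumes that `SUPPORT` and `SVLEN` are INFO fields and that deletions carry a negative `SVLEN`, so it compares the absolute length; the example records are invented, and records without `SVLEN` (such as breakends) are rejected by this simple rule.

```python
def parse_info(info_field):
    """Turn a VCF INFO string into a dict; flag-style keys map to True."""
    info = {}
    for entry in info_field.split(";"):
        key, _, value = entry.partition("=")
        info[key] = value if value else True
    return info

def passes_filter(vcf_line, min_support=5, min_len=50, min_qual=10.0):
    """Apply the SUPPORT, |SVLEN|, and QUAL thresholds to one VCF record."""
    cols = vcf_line.rstrip("\n").split("\t")
    qual = float(cols[5]) if cols[5] != "." else 0.0
    info = parse_info(cols[7])
    support = int(info.get("SUPPORT", 0))
    sv_length = abs(int(info.get("SVLEN", 0)))  # assumed negative for deletions
    return support >= min_support and sv_length >= min_len and qual > min_qual

# Invented records: a well-supported deletion, a short insertion, a weak call.
records = [
    "chr1\t10000\tsv1\tN\t<DEL>\t60\tPASS\tSVTYPE=DEL;SVLEN=-320;SUPPORT=12",
    "chr1\t20000\tsv2\tN\t<INS>\t60\tPASS\tSVTYPE=INS;SVLEN=30;SUPPORT=9",
    "chr2\t30000\tsv3\tN\t<DUP>\t60\tPASS\tSVTYPE=DUP;SVLEN=500;SUPPORT=2",
]
kept = [r.split("\t")[2] for r in records if passes_filter(r)]
```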

Visualizations

Title: HUGO Vision Driving Pan-Genome & SV Analysis

Title: Pan-Genome Graph Construction and Query Workflow

Title: Multi-Method SV Discovery from Long Reads

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Pan-Genome & SV Analysis Experiments

| Item / Reagent | Function in Analysis | Example/Note |

|---|---|---|

| High-Quality Genomic DNA | Input material for long-read sequencing and de novo assembly. | Recommended: >50kb mean fragment size (PacBio), >30µg mass. |

| PacBio HiFi or ONT Ultra-Long Reads | Generate accurate, long sequencing reads for assembly and direct SV detection. | HiFi reads for accuracy; ONT ultra-long reads to span the longest repeats. |

| CHM13 or GRCh38 Reference Genome | Baseline linear reference for initial alignment and graph construction. | T2T CHM13 v2.0 is now the gold-standard complete reference. |

| HPRC/HGSVC Assemblies | Publicly available, haplotype-resolved assemblies for graph construction. | Human Pangenome Reference Consortium data. |

| Benchmark SV Callsets (GIAB, HGSVC) | Gold-standard truth sets for validating novel SV calls. | GIAB v1.0 for Tier 1 regions; HGSVC for complex regions. |

| Containerized Software (Docker/Singularity) | Ensures reproducible tool environments and version control. | Most tools (e.g., vg, pggb) have pre-built containers on Biocontainers. |

| High-Performance Computing Cluster | Provides necessary CPU, memory, and storage for graph operations. | Typical requirements: >64 cores, >512GB RAM, >10TB storage for human pan-genome. |

Within the framework of the HUGO ecological genomics vision, which posits that human health must be understood through the dynamic interplay between genomic architecture and environmental exposures across time and space, this whitepaper examines the mechanistic dissection of gene-environment (GxE) interactions in complex diseases. This ecological perspective moves beyond static genomic catalogs to a systems-level understanding, crucial for oncology, immunology, and neurology, where environmental triggers often unlock genetic susceptibility.

Core Mechanisms and Quantitative Data

Oncology: Carcinogen Metabolism and DNA Repair

Environmental carcinogens (e.g., polycyclic aromatic hydrocarbons (PAHs), aflatoxin B1) require metabolic activation by Phase I/II enzymes, whose genetic polymorphisms create differential risk landscapes.

Table 1: Key Genetic Variants Modifying Environmental Cancer Risk

| Gene | Variant | Environmental Exposure | Associated Cancer | Odds Ratio (95% CI) | Study (Year) |

|---|---|---|---|---|---|

| GSTM1 | Null deletion | Tobacco smoke (PAHs) | Lung adenocarcinoma | 1.41 (1.23-1.61) | Meta-Analysis (2023) |

| CYP1A1 | rs4646903 (T>C) | Charred meat consumption | Colorectal Cancer | 1.82 (1.35-2.45) | Cohort (2024) |

| TP53 | R249S mutation | Aflatoxin B1 exposure | Hepatocellular Carcinoma | 6.9 (3.8-12.5) | Case-Control (2023) |

| NAT2 | Slow acetylator | Heterocyclic amines (diet) | Bladder Cancer | 1.54 (1.28-1.85) | Meta-Analysis (2024) |

Immunology: Hypersensitivity and Autoimmunity

Environmental adjuvants (e.g., silica, cigarette smoke) can breach immune tolerance in genetically predisposed individuals, often through epigenetic reprogramming of immune cells.

Table 2: GxE Interactions in Autoimmune Disease

| Disease | HLA Locus | Environmental Factor | Proposed Mechanism | Risk Increase (Fold) |

|---|---|---|---|---|

| Rheumatoid Arthritis | HLA-DRB1 SE | Cigarette Smoke | Citrullination of peptides, enhanced MHC binding | 21.0 (SE+Smoking vs. neither) |

| Celiac Disease | HLA-DQ2.5 | Dietary Gluten | Deamidation of gliadin, high-affinity T cell receptor engagement | Absolute risk ~3% in carriers |

| SLE | HLA-DRB1*03 | UV Radiation | Apoptosis-induced autoantigen exposure, interferon-α activation | 5.2 (vs. non-carriers, post-exposure) |

Neurology: Neurotoxins and Neurodevelopment

Prenatal and early-life exposures (e.g., pesticides, air pollution) interact with neurodevelopmental gene networks, influencing synaptic pruning and microglial function.

Table 3: Neurodevelopmental GxE Interactions

| Disorder | Candidate Gene/Pathway | Environmental Exposure | Endophenotype | Effect Size (β or Hazard Ratio) |

|---|---|---|---|---|

| Autism Spectrum Disorder | CHD8 (chromatin remodeler) | Maternal Valproate Use | Altered Wnt/β-catenin signaling, synaptic gene dysregulation | HR = 4.8 (exposed carriers) |

| Parkinson's Disease | GBA1 mutations | Pesticide (Paraquat/Rotenone) | Lysosomal dysfunction, α-synuclein aggregation | β = 2.7 for interaction term |

| Alzheimer's Disease | APOE ε4 allele | PM2.5 Air Pollution | Accelerated amyloid-β plaque deposition, neuroinflammation | HR = 1.95 per 2 µg/m³ in ε4 carriers |

Detailed Experimental Protocols

Protocol 1: Genome-Wide GxE Interaction Study (GWGxE) Using Case-Only Design

Objective: To identify genetic variants whose effect on disease risk is modified by a binary environmental exposure.

- Cohort Selection: Recruit n≥5000 cases with precise environmental exposure data (e.g., smoking status verified by cotinine assay).

- Genotyping & QC: Perform whole-genome sequencing or high-density SNP array. Apply standard QC: call rate >98%, MAF >1%, HWE p>1e-6.

- Exposure Assessment: Quantify exposure using a validated binary or continuous measure. For continuous measures, consider quantile normalization.

- Statistical Analysis (R, `PLINK`, or `SNPTEST`):

  a. For binary exposure (E=0/1), use a case-only logistic regression model: logit(P(G=1)) = β0 + β1 * E, where G=1 denotes variant carriers. The interaction parameter β1 tests for departure from a multiplicative joint effect, under the assumption that G and E are independent in the source population.

  b. Control for population stratification using principal components (PCs).

  c. Genome-wide significance: p < 5e-8. Use Q-Q plots to inspect inflation (λGC).

- Validation: Replicate significant hits in an independent case-control cohort using a traditional case-control interaction test.
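For a single variant with binary carrier status and binary exposure, the unadjusted case-only estimate of β1 is the log odds ratio of the genotype-by-exposure 2x2 table among cases. The sketch below computes it with a Wald confidence interval; the counts are hypothetical, and covariates such as PCs would require fitting the logistic model in a statistics package instead.

```python
import math

def case_only_interaction(carrier_exposed, carrier_unexposed,
                          noncarrier_exposed, noncarrier_unexposed):
    """Case-only G-E odds ratio, log OR (beta1), standard error, and 95% CI."""
    odds_ratio = (carrier_exposed * noncarrier_unexposed) / (
        carrier_unexposed * noncarrier_exposed
    )
    beta1 = math.log(odds_ratio)
    # Woolf standard error of the log odds ratio.
    se = math.sqrt(
        1 / carrier_exposed + 1 / carrier_unexposed
        + 1 / noncarrier_exposed + 1 / noncarrier_unexposed
    )
    ci = (math.exp(beta1 - 1.96 * se), math.exp(beta1 + 1.96 * se))
    return odds_ratio, beta1, se, ci

# Hypothetical counts among 5,000 cases.
odds_ratio, beta1, se, ci = case_only_interaction(300, 200, 1500, 3000)
z_score = beta1 / se
```

An odds ratio above 1 here suggests that carriers are over-represented among exposed cases, which is interpreted as interaction only if genotype and exposure are independent in the source population.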

Protocol 2: Epigenomic Profiling of GxE via ATAC-seq and RNA-seq

Objective: To characterize the impact of an environmental exposure on chromatin accessibility and transcription in a genotype-dependent manner.

- Cell Model: Establish primary cells (e.g., bronchial epithelial cells) or iPSC-derived lineages from donors with different genotypes (e.g., GSTM1 null vs. wild-type).

- Exposure Regime: Treat cells with a physiologically relevant dose of environmental agent (e.g., 1µM Benzo[a]pyrene) vs. vehicle control for 24h.

- ATAC-seq:

  a. Harvest 50,000 cells per condition. Perform cell lysis and transposition using the Illumina Tagmentase TDE1 (37°C, 30 min).

  b. Purify transposed DNA, amplify with indexed primers (PCR: 12 cycles).

  c. Sequence on Illumina NovaSeq (2x150bp).

  d. Analysis: Align to hg38 with `BWA`, call peaks with `MACS2`. Identify differential accessibility sites (FDR<0.05) with `DESeq2`.

- RNA-seq:

  a. Extract total RNA in parallel (TRIzol). Prepare poly-A selected libraries.

  b. Sequence to depth of 30M reads per sample.

  c. Analysis: Align with `STAR`, quantify transcripts with `featureCounts`. Perform differential expression (FDR<0.05) and pathway enrichment (GSEA).

- Integration: Overlap differential ATAC peaks with promoter/enhancer regions of differentially expressed genes. Test for genotype-by-exposure interaction effect on both layers.
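The integration step can be prototyped as a simple interval overlap between differential peaks and promoter windows of differentially expressed genes. The sketch below assumes half-open coordinates and a promoter window of 2 kb upstream to 500 bp downstream of the TSS; the window size, gene names, and coordinates are illustrative assumptions rather than protocol values.

```python
def overlaps(a_start, a_end, b_start, b_end):
    """True if two half-open intervals [start, end) share at least one base."""
    return a_start < b_end and b_start < a_end

def promoter_window(tss, strand, upstream=2000, downstream=500):
    """Strand-aware promoter interval around a transcription start site."""
    if strand == "+":
        return max(0, tss - upstream), tss + downstream
    return max(0, tss - downstream), tss + upstream

def link_peaks_to_genes(peaks, genes):
    """Map each gene name to the differential peaks overlapping its promoter.

    peaks: list of (chrom, start, end); genes: list of (name, chrom, tss, strand).
    """
    linked = {}
    for name, chrom, tss, strand in genes:
        win_start, win_end = promoter_window(tss, strand)
        hits = [p for p in peaks
                if p[0] == chrom and overlaps(p[1], p[2], win_start, win_end)]
        if hits:
            linked[name] = hits
    return linked

# Invented differential peaks and differentially expressed genes.
diff_peaks = [("chr15", 74719000, 74719600), ("chr1", 1000, 1500)]
diff_genes = [("GENE_A", "chr15", 74719500, "-"), ("GENE_B", "chr1", 50000, "+")]
promoter_links = link_peaks_to_genes(diff_peaks, diff_genes)
```

For genome-scale peak sets, an interval tree or a sorted sweep would replace the nested scan used here; enhancer links would additionally need a distance or contact-based assignment.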

Visualizations

Oncology GxE: Carcinogen Metabolism Pathway

Immunology GxE: RA Citrullination Pathway

Neurology GxE Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents for GxE Mechanistic Studies

| Reagent / Solution | Function & Application | Example Product (Vendor) |

|---|---|---|

| iPSC Differentiation Kits | Generate disease-relevant cell types (neurons, microglia, hepatocytes) for in vitro exposure studies. | Gibco PSC Microglia Differentiation Kit (Thermo Fisher) |

| Environmental Exposure Mimetics | Standardized chemical agents to simulate real-world exposures in cell/animal models. | Urban Dust Particulate Matter SRM 1648a (NIST) |

| Multiplex Cytokine/Chemokine Panels | Quantify inflammatory secretome changes post-exposure across many analytes simultaneously. | Human Cytokine 48-Plex Discovery Assay (Eve Technologies) |

| Tagmentase (Tn5) for ATAC-seq | Enzyme for simultaneous fragmentation and tagging of open chromatin regions for sequencing. | Illumina Tagmentase TDE1 (Illumina) |

| Genotype-Specific Reporter Assays | Luciferase constructs with variant alleles to test enhancer/promoter activity under exposure. | Custom pGL4.23[luc2/minP] constructs (Promega) |

| CRISPR/Cas9 Isogenic Cell Lines | Engineer specific genetic variants into a controlled background to isolate GxE effects. | Edit-R CRISPR-Cas9 Gene Engineering System (Horizon Discovery) |

| Metabolite Detection Kits | Quantify intermediates of environmental toxin metabolism (e.g., aflatoxin-DNA adducts). | Aflatoxin B1 ELISA Kit (Creative Diagnostics) |

Decoding GxE interactions demands an ecological genomic approach, integrating precise environmental measurements with deep molecular phenotyping across genomic, epigenomic, and transcriptomic layers. The protocols and tools outlined provide a roadmap for mechanistic discovery. This aligns with the HUGO vision, pushing towards predictive models of disease risk that encompass the full environmental context of the genome, thereby enabling targeted prevention strategies and personalized therapeutic interventions in oncology, immunology, and neurology.

The Human Genome Organisation's (HUGO) ecological genomics vision posits that human health cannot be fully understood in isolation from the complex, multi-layered environmental and ecological contexts in which genomes function. This whitepaper situates pharmacogenomics (the study of how genes affect a person's response to drugs) within this expansive framework. Moving beyond traditional single-nucleotide polymorphism (SNP)-drug pair analyses, we integrate ecological data (e.g., environmental exposures, microbiome composition, lifestyle factors) to build predictive models for drug efficacy and adverse drug events (ADEs). This convergence is critical for realizing personalized medicine that accounts for the totality of an individual's exposome.

Core Quantitative Data: Key Gene-Environment-Drug Interactions

Recent meta-analyses and consortium data (e.g., PharmGKB, UK Biobank) highlight quantifiable interactions. The tables below summarize critical findings.

Table 1: Impact of Selected Pharmacogenes on Drug Response Prevalence

| Pharmacogene (Variant) | Drug/Therapy Class | Altered Response Prevalence | Effect Size (Odds Ratio/Hazard Ratio) | Key Ecological Modifier |
|---|---|---|---|---|
| CYP2C19 (loss-of-function alleles) | Clopidogrel (Antiplatelet) | 30-40% in poor metabolizers | OR for stent thrombosis: 3.45 (CI: 2.14-5.57) | High H. pylori burden (affects gastric pH) |
| VKORC1 (-1639G>A) | Warfarin (Anticoagulant) | ~55% variance in stable dose | N/A | Dietary Vitamin K1 intake (ecological food source data) |
| HLA-B (*15:02 allele) | Carbamazepine (Anticonvulsant) | Severe cutaneous ADE risk: 5-10% in carriers | OR: 113.4 (CI: 51.2-251.0) | Concurrent viral infection (e.g., HHV-6) |
| DPYD (IVS14+1G>A) | 5-Fluorouracil (Chemotherapy) | Severe toxicity in 40-50% of variant carriers | HR for toxicity: 4.40 (CI: 2.10-9.26) | Gut microbiome β-glucuronidase activity |
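
The odds ratios and confidence intervals in Table 1 are the kind of quantity that can be derived from a carrier-by-outcome 2x2 table. The sketch below shows one common way to do that (a Wald interval on the log odds ratio). The counts are hypothetical and chosen only for illustration; they are not the data behind any row of the table, and the function name is an assumption of this example.

```python
import math

def odds_ratio_ci(a, b, c, d, z=1.96):
    """Odds ratio with a Wald confidence interval from a 2x2 table.

    a: variant carriers with the event     b: variant carriers without the event
    c: non-carriers with the event         d: non-carriers without the event
    z: normal quantile (1.96 gives an approximate 95% interval)
    """
    if min(a, b, c, d) <= 0:
        raise ValueError("all four cells must be positive for the Wald interval")
    odds_ratio = (a * d) / (b * c)
    # Standard error of log(OR) is the root of the summed reciprocal cell counts.
    se_log_or = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper

# Hypothetical counts, for illustration only.
or_value, ci_low, ci_high = odds_ratio_ci(a=20, b=80, c=10, d=190)
print(f"OR = {or_value:.2f} (95% CI: {ci_low:.2f}-{ci_high:.2f})")
```

With sparse cells (as is typical for rare alleles such as HLA-B*15:02), an exact or penalized method would usually be preferred over the Wald interval.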

Table 2: Ecological Data Layers and Their Measurable Influence on Pharmacokinetics

| Ecological Data Layer | Measurable Metric | Influence on PK Parameter | Typical Effect Magnitude (Fold-Change) |
|---|---|---|---|
| Gut Microbiome | Bacteroides spp. abundance vs. Firmicutes | Drug Bioavailability (e.g., Digoxin inactivation) | Up to 2.5x reduction in AUC |
| Chemical Exposome | Urinary bisphenol A (BPA) level | Hepatic CYP3A4 induction | 1.3-1.8x increased clearance |
| Dietary Patterns | Cruciferous vegetable index | CYP1A2 activity | 1.2-2.0x increased metabolism |
| Geospatial Air Quality | PM2.5 exposure (μg/m³) | Systemic inflammation; P-glycoprotein expression | Alters IC50 for chemotherapeutics by up to 1.5x |
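
To relate the fold-changes in Table 2 to one another, it can help to translate a clearance change into an exposure change. The relation below is a standard one for linear pharmacokinetics; it assumes that bioavailability F and dose are unchanged by the exposure, which the table does not state. Under that assumption, the 1.3-1.8x increase in clearance listed for BPA corresponds to an AUC of roughly 56-77% of baseline.

```latex
\mathrm{AUC} = \frac{F \cdot \mathrm{Dose}}{CL}
\quad\Longrightarrow\quad
\frac{\mathrm{AUC}_{\text{exposed}}}{\mathrm{AUC}_{\text{baseline}}}
  = \frac{CL_{\text{baseline}}}{CL_{\text{exposed}}}
  \in \left[\tfrac{1}{1.8},\ \tfrac{1}{1.3}\right]
  \approx [0.56,\ 0.77]
```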

Integrated Experimental Protocols

Protocol: Multi-Omic Profiling for Gene-Environment-Drug Interaction Discovery

Objective: To identify novel interactions between host pharmacogenomic variants, gut microbiome composition, and drug metabolite levels.

Materials: Patient blood (DNA, plasma), stool samples, target drug (e.g., metformin), LC-MS/MS, next-generation sequencing (NGS) platform.

Procedure:

- Pre-Dose Baseline: Collect blood for germline whole-genome sequencing (30x coverage) and stool for 16S rRNA gene amplicon sequencing (V3-V4 region, 50,000 reads/sample).

- Drug Administration & Pharmacokinetics: Administer standard drug dose. Collect serial plasma samples at 0, 0.5, 1, 2, 4, 8, 12, 24 hours.

- Metabolomic Profiling: Quantify parent drug and major metabolite concentrations using LC-MS/MS. Calculate AUC, C_max, T_max, half-life.

- Integrative Biostatistical Analysis:

  - Perform GWAS on PK parameters (e.g., AUC).

  - Correlate microbial taxa abundance (e.g., from QIIME2 analysis) with metabolite ratios.

  - Test for significant interaction terms (genotype × microbial abundance) on drug clearance using linear mixed models, correcting for covariates (age, BMI, diet).
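
The pharmacokinetic summary step of the protocol above (AUC, peak concentration, time of peak, half-life) can be sketched with a simple non-compartmental calculation. This is a minimal illustration, not the pipeline used by any particular study: it assumes a linear trapezoidal AUC up to the last sample and a log-linear fit over the last three time points for the terminal slope. The concentration values are synthetic and the function name `nca_summary` is hypothetical.

```python
import math

def nca_summary(times, conc, n_terminal=3):
    """Non-compartmental PK summary for one subject's concentration-time profile."""
    if len(times) != len(conc) or len(times) < n_terminal:
        raise ValueError("need matched time/concentration lists")
    points = list(zip(times, conc))
    # AUC from first to last sample by the linear trapezoidal rule.
    auc = sum((t2 - t1) * (c1 + c2) / 2
              for (t1, c1), (t2, c2) in zip(points, points[1:]))
    cmax = max(conc)
    tmax = times[conc.index(cmax)]
    # Terminal slope: least-squares line through ln(concentration) vs. time
    # for the last n_terminal samples (all must be above zero).
    ts = times[-n_terminal:]
    ln_c = [math.log(c) for c in conc[-n_terminal:]]
    t_mean = sum(ts) / n_terminal
    y_mean = sum(ln_c) / n_terminal
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ln_c))
             / sum((t - t_mean) ** 2 for t in ts))
    half_life = math.log(2) / -slope
    return {"auc": auc, "cmax": cmax, "tmax": tmax, "half_life": half_life}

# Sampling times from the protocol (hours); concentrations are synthetic,
# with a terminal phase constructed to have a 6-hour half-life.
times = [0, 0.5, 1, 2, 4, 8, 12, 24]
conc = [0.0, 2.0, 4.0, 5.0, 4.0] + [8 * 0.5 ** (t / 6) for t in (8, 12, 24)]
pk = nca_summary(times, conc)
print(pk)
```

The per-subject values returned here are the phenotypes that would then enter the GWAS and the genotype × microbial abundance interaction models.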

Protocol: In Vitro Assessment of Environmental Toxicant on Drug Transport

Objective: To determine how pre-exposure to a prevalent ecological toxicant (e.g., BPA) alters transporter-mediated drug uptake in cultured cells.

Materials: HEK293 cells overexpressing OATP1B1, control HEK293 cells, culture medium, BPA stock solution, fluorescent substrate (e.g., CDCF), fluorescence plate reader, BCA protein assay kit.

Procedure:

- Cell Culture & Exposure: Culture transporter-overexpressing and control cells. Treat with 10 nM BPA or vehicle (DMSO <0.1%) for 72 hours.

- Uptake Assay: Wash cells with PBS. Incubate with 5 μM fluorescent substrate at 37°C for 5 minutes. Terminate uptake with ice-cold PBS.

- Quantification: Lyse cells. Measure fluorescence intensity via plate reader (Ex/Em: 485/535 nm). Normalize to total protein content (BCA assay).

- Data Analysis: Calculate fold-change in uptake velocity (apparent V_max) in BPA-exposed vs. control cells. Significance tested via unpaired t-test (n≥6).
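
The normalization and comparison steps above can be sketched as follows. The readings are hypothetical, and the helper names are assumptions of this example. The code computes protein-normalized uptake, the fold-change, and a pooled-variance (Student) t statistic with its degrees of freedom; obtaining a p-value additionally requires the t distribution, for which a statistics library such as SciPy (`scipy.stats.ttest_ind`) would normally be used.

```python
import math
from statistics import mean, variance

def normalize_uptake(fluorescence, protein_ug):
    """Fluorescence per microgram of total protein, well by well."""
    return [f / p for f, p in zip(fluorescence, protein_ug)]

def unpaired_t(x, y):
    """Student's unpaired t statistic (pooled variance) and degrees of freedom."""
    nx, ny = len(x), len(y)
    df = nx + ny - 2
    pooled_var = ((nx - 1) * variance(x) + (ny - 1) * variance(y)) / df
    t_stat = (mean(x) - mean(y)) / math.sqrt(pooled_var * (1 / nx + 1 / ny))
    return t_stat, df

# Hypothetical plate-reader values (arbitrary units) and BCA protein (ug), n = 6 each.
vehicle = normalize_uptake([1000, 1100, 950, 1050, 1020, 980], [50, 55, 48, 52, 51, 49])
bpa = normalize_uptake([800, 820, 760, 790, 850, 780], [50, 51, 49, 50, 52, 50])

fold_change = mean(bpa) / mean(vehicle)
t_stat, df = unpaired_t(bpa, vehicle)
print(f"fold-change = {fold_change:.2f}, t = {t_stat:.2f}, df = {df}")
```

In practice the same calculation would be run in parallel on the control (non-overexpressing) cells so that transporter-specific uptake can be separated from passive uptake.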

Visualizing Pathways and Workflows

[Figure: Integrative Model for Drug Response Prediction]

[Figure: Microbiome-Mediated Drug Toxicity Pathway]

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Function in PGx-Ecology Research | Example Vendor/Product |
|---|---|---|
| PharmCAT Software | Bioinformatics pipeline for annotating pharmacogenomic variants from WGS/WES data. | GitHub (PharmGKB/PharmCAT) |
| GeoMx DSP | Digital spatial profiler for analyzing drug target expression in tissue context under ecological stressors. | NanoString Technologies |
| Simcyp Simulator | Physiology-based PK/PD modeling platform incorporating genetic and ecological (e.g., enzyme abundance) variability. | Certara |
| MiBioGen Consortium Array | Genotyping array optimized for host-microbiome GWAS interactions, including immune-related loci. | Illumina |
| HUMAnN3 Pipeline | Profiles species-specific metabolic pathways from metagenomic data, linking microbiome function to drug metabolism. | biobakery |
| Exposome Explorer DB | Curated database of biomarkers of environmental exposure for correlative analysis with pharmacotypes. | International Agency for Research on Cancer (IARC) |
| PacBio HiFi Reads | Long-read sequencing for resolving complex pharmacogene haplotypes (e.g., CYP2D6) with high accuracy. | PacBio |
| Organ-on-a-Chip (Gut-Liver) | Microfluidic co-culture system to model first-pass metabolism and gut microbiome interactions. | Emulate, Inc. |

Integrative multi-omics is the cornerstone of a modern, systems-level approach to biological research, and it aligns directly with the Human Genome Organisation (HUGO) ecological genomics vision. HUGO's ecological genomics framework emphasizes understanding the genome within its environmental and regulatory context, recognizing that phenotypic outcomes are the product of dynamic, multi-layered interactions. This whitepaper provides a technical guide for linking genomic variants with their functional consequences across epigenomic, proteomic, and metabolomic layers, thereby realizing HUGO's vision of a comprehensive, ecologically informed view of genome function in health, disease, and drug response.

Foundational Concepts and Data Types

Each omics layer provides a distinct, yet interconnected, perspective on biological state.

Table 1: Core Multi-Omics Data Layers and Their Characteristics

| Omics Layer | Primary Molecular Entity | Key Technologies | Temporal Dynamics | Primary Functional Insight |
|---|---|---|---|---|
| Genomics | DNA Sequence | WGS, WES, SNP Arrays | Static (germline) | Genetic blueprint & variation |
| Epigenomics | DNA/Chromatin Modifications | ChIP-seq, ATAC-seq, WGBS, RRBS | Dynamic, tissue-specific | Regulatory potential & gene silencing/activation |
| Proteomics | Proteins & Post-Translational Modifications (PTMs) | LC-MS/MS, TMT, Antibody Arrays | Moderate (mins-hrs) | Functional effectors & pathway activity |
| Metabolomics | Small-Molecule Metabolites | LC/GC-MS, NMR | Rapid (secs-mins) | Biochemical phenotype & metabolic fluxes |

Methodologies for Integration

Experimental Design and Cohort Considerations

Effective integration begins with robust experimental design. For studies within the HUGO ecological framework, samples should be collected with detailed phenotypic and environmental metadata. A recommended design is a matched multi-omics profile on the same biological sample (e.g., tissue biopsy, primary cells) or from the same subject.

Protocol 2.1.1: Matched Sample Multi-Omics Extraction from Tissue

- Tissue Homogenization: Flash-freeze tissue in liquid N₂. Pulverize using a cryomill. Aliquot powder for parallel extractions.

- Genomic DNA Extraction: Use a silica-column based kit (e.g., Qiagen DNeasy). Perform RNase A treatment. Assess purity (A260/280 ~1.8) and integrity (PFGE or Genomic DNA ScreenTape).

- Epigenomic Material (Chromatin/Nuclei): For ATAC-seq or ChIP-seq, immediately process a separate aliquot. For ATAC-seq, use the Omni-ATAC protocol: homogenize in cold lysis buffer, spin, tagment purified nuclei with Tn5 transposase (Illumina).

- Protein Extraction: Homogenize tissue powder in RIPA buffer with protease/phosphatase inhibitors. Sonicate on ice. Clarify by centrifugation at 14,000g for 15 min at 4°C. Quantify via BCA assay.

- Metabolite Extraction: Use a dual-phase methanol/chloroform/water extraction. For 10 mg powder, add 400 µL cold methanol and 85 µL chloroform. Vortex, add 200 µL water, vortex, centrifuge. Collect aqueous (polar) and organic (lipid) phases separately. Dry under N₂ gas and reconstitute in MS-compatible solvent.

Data Generation and Pre-processing

Each data type requires stringent, layer-specific QC before integration.

Table 2: Key QC Metrics and Normalization by Layer

| Layer | QC Metric | Tool/Software | Normalization Method |
|---|---|---|---|
| Genomics | Coverage depth, Ti/Tv ratio, call rate | GATK, bcftools | None (variant calling) |
| Epigenomics | FRiP score (ChIP-seq), TSS enrichment (ATAC-seq), bisulfite conversion rate (WGBS) | FastQC, deepTools, Bismark | Reads per kilobase per million (RPKM) or DESeq2 (for counts) |
| Proteomics | PSMs, missed cleavage rate, intensity distribution | MaxQuant, Proteome Discoverer | Median centering, variance stabilization (vsn) |
| Metabolomics | Total ion count, RT alignment, blank subtraction | XCMS, MS-DIAL | Probabilistic quotient normalization (PQN), log-transformation |
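
As an illustration of the metabolomics row in Table 2, the sketch below applies probabilistic quotient normalization followed by a log2 transform. It is a simplified version under stated assumptions: the reference profile is taken as the per-feature median across samples, the preliminary total-intensity scaling used in some PQN implementations is skipped, and all intensities are positive. The data and function name are hypothetical.

```python
import math
from statistics import median

def pqn(samples):
    """Probabilistic quotient normalization.

    samples: list of per-sample intensity lists, all in the same feature order.
    Each sample is divided by the median of its feature-wise quotients
    against a reference profile (here, the per-feature median across samples).
    """
    n_features = len(samples[0])
    reference = [median(s[j] for s in samples) for j in range(n_features)]
    normalized = []
    for s in samples:
        quotients = [s[j] / reference[j] for j in range(n_features)
                     if reference[j] > 0 and s[j] > 0]
        dilution_factor = median(quotients)
        normalized.append([v / dilution_factor for v in s])
    return normalized

# Hypothetical intensities: the same profile measured at three dilutions.
base = [10.0, 20.0, 30.0, 40.0]
raw = [base, [2 * v for v in base], [0.5 * v for v in base]]

normalized = pqn(raw)
log_normalized = [[math.log2(v) for v in row] for row in normalized]
print(normalized)
```

Because the three synthetic samples differ only by dilution, PQN maps them onto the same profile, which is the behavior the method is designed to produce.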

Core Computational Integration Strategies

Integration can be vertical (combining different omics layers measured on the same samples) or horizontal (combining the same omics layer across different samples or cohorts). The following are key methodologies.

2.3.1 Correlation-Based Network Analysis

This method identifies relationships between entities (e.g., a SNP, a chromatin peak, a protein, a metabolite) across omics layers.

Protocol 2.3.1: Multi-Omic Network Construction using WGCNA

- Feature Selection: For each omics layer, filter to the top n most variable features (e.g., n=5000).

- Data Scaling: Standardize each feature (mean=0, variance=1) across all samples.

- Similarity Matrix: Calculate a pairwise biweight midcorrelation or Spearman correlation matrix for all selected features across all layers.

- Network Construction: Use Weighted Gene Co-expression Network Analysis (WGCNA) to build an unsigned adjacency matrix: a_ij = |cor(x_i, x_j)|^β. Soft-power β is chosen based on scale-free topology fit.

- Module Detection: Perform hierarchical clustering on a topological overlap matrix (TOM) and identify modules using dynamic tree cutting.

- Integration: Relate modules to external traits (e.g., disease status). Extract module eigengenes (first principal component) and correlate them across layers to identify inter-omic module relationships.
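
The adjacency step of the protocol above, a_ij = |cor(x_i, x_j)|^β, can be sketched in a few lines. This is not the WGCNA R package: it uses Pearson correlation in place of biweight midcorrelation or Spearman for brevity, fixes β = 6 rather than selecting it from the scale-free topology fit, and stops before the TOM and module detection. The feature names and values are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length numeric lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def unsigned_adjacency(features, beta=6):
    """Soft-thresholded unsigned adjacency: a_ij = |cor(x_i, x_j)| ** beta."""
    n = len(features)
    adj = [[1.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            a = abs(pearson(features[i], features[j])) ** beta
            adj[i][j] = adj[j][i] = a
    return adj

# Hypothetical features from four layers, measured on the same six samples.
names = ["snp_dosage", "atac_peak", "protein", "metabolite"]
features = [
    [0, 1, 2, 1, 0, 2],
    [1.0, 2.1, 2.9, 2.0, 1.1, 3.2],
    [10, 12, 15, 11, 9, 14],
    [5, 1, 4, 2, 3, 6],
]
adj = unsigned_adjacency(features)
# Connectivity of each node: summed adjacency to all other nodes.
connectivity = {name: sum(row) - 1.0 for name, row in zip(names, adj)}
print(connectivity)
```

Raising the correlation to a power suppresses weak correlations much more strongly than strong ones, which is why the SNP and chromatin-peak features remain tightly connected here while the weakly correlated metabolite is effectively disconnected.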

2.3.2 Latent Variable Methods (Factorization)

These models decompose the multi-omics data matrix into a set of latent (hidden) factors that represent shared biological signals.

Protocol 2.3.2: Integration using Multi-Block Partial Least Squares (MB-PLS)

- Data Arrangement: Organize data into blocks X1 (genomics/variants), X2 (epigenomics), X3 (proteomics), X4 (metabolomics), and an outcome matrix Y (phenotype).

- Deflation and Weight Calculation: The algorithm seeks weight vectors w1...w4 to maximize covariance between the combined latent component t = Σ Xk wk and Y.

- Iterative Solution: Solve via the NIPALS algorithm: a) Start with u from Y. b) For each block k, calculate inner relation weights: wk = Xk'u / (u'u). c) Normalize wk. d) Calculate block scores: tk = Xk wk. e) Combine block scores into a super-score t. f) Update u as the Y-score from regressing Y on t. g) Repeat until convergence.

- Interpretation: Examine loadings for each wk to identify which features from each omics block contribute most to the latent factor correlated with the phenotype.
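
The NIPALS iteration described above can be sketched for a single latent component and a single outcome column. This is a minimal illustration under stated assumptions rather than a reference implementation: columns are mean-centered only, blocks are not rescaled by their size, the super-score is formed as the plain sum of block scores (following the t = Σ Xk wk form used in the protocol), and deflation for further components is omitted. The data and the function name `mbpls_first_component` are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def center_columns(X):
    means = [sum(col) / len(X) for col in zip(*X)]
    return [[v - m for v, m in zip(row, means)] for row in X]

def mbpls_first_component(blocks, y, tol=1e-10, max_iter=100):
    """One multi-block PLS component via NIPALS for a single outcome vector y."""
    blocks = [center_columns(X) for X in blocks]
    y_mean = sum(y) / len(y)
    yc = [v - y_mean for v in y]
    u = yc[:]                                    # (a) start with u from Y
    t_old = None
    for _ in range(max_iter):
        weights, block_scores = [], []
        for X in blocks:
            uu = dot(u, u)
            w = [dot(col, u) / uu for col in zip(*X)]   # (b) wk = Xk'u / (u'u)
            norm = math.sqrt(dot(w, w))
            w = [v / norm for v in w]                   # (c) normalize wk
            weights.append(w)
            block_scores.append([dot(row, w) for row in X])  # (d) tk = Xk wk
        t = [sum(vals) for vals in zip(*block_scores)]  # (e) super-score
        q = dot(yc, t) / dot(t, t)                      # (f) regress y on t
        u = [v / q for v in yc]                         #     updated Y-score
        if t_old is not None and max(abs(a - b) for a, b in zip(t, t_old)) < tol:
            break                                       # (g) converged
        t_old = t
    return {"weights": weights, "block_scores": block_scores,
            "super_score": t, "q": q}

# Hypothetical data: five samples, two blocks, one continuous phenotype.
X1 = [[1, 0], [2, 1], [3, 0], [4, 1], [5, 0]]
X2 = [[0.5], [1.0], [1.5], [2.2], [2.4]]
y = [1, 2, 3, 4, 5]
result = mbpls_first_component([X1, X2], y)
print(result["weights"])
```

In this toy example the first column of X1 tracks the phenotype exactly while the second is uncorrelated with it, so nearly all of the block-1 weight falls on the first feature. With a single outcome column the iteration converges almost immediately; the loop matters when Y has several columns.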

2.3.3 Pathway-Centric Integration

This approach maps features from all layers onto known biological pathways to gain functional insight.

Protocol 2.3.3: Multi-Omic Pathway Enrichment with IMPaLA