GC Bias in NGS: Understanding, Correcting, and Validating Normalization Algorithms for Accurate Analysis

This article provides a comprehensive guide for researchers and bioinformaticians on GC bias normalization in sequencing data.

Abstract

This article provides a comprehensive guide for researchers and bioinformaticians on GC bias normalization in sequencing data. It covers the foundational causes of GC-content bias in NGS workflows, explores major algorithmic correction methods (including LOESS, GC-aware CNV tools, and machine learning approaches), offers troubleshooting strategies for persistent bias, and presents frameworks for validating normalization efficacy. The content aims to equip professionals with the knowledge to select, apply, and validate the optimal GC bias correction method for applications ranging from variant calling to copy number variation analysis and differential expression, ultimately enhancing the reliability of downstream genomic interpretations.

What is GC Bias? Demystifying the Sequence Composition Effect in NGS Data

GC bias is a systematic technical artifact in next-generation sequencing (NGS) where the observed read depth and coverage across a genome are non-uniformly influenced by the local guanine-cytosine (GC) content. In an ideal, unbiased experiment, reads should be sampled uniformly from all genomic regions regardless of sequence composition. However, in practice, regions with extremely low or high GC content show marked reductions in coverage, while regions with moderate GC content (typically ~50%) are overrepresented. This bias compromises variant calling, copy number variation (CNV) analysis, and quantitative applications like RNA-seq or ChIP-seq.

The underlying causes are multifaceted and occur during key library preparation steps:

- PCR Amplification: DNA polymerases exhibit varying efficiency based on template GC content. High-GC regions form stable secondary structures, causing polymerase stalling and dropouts. Low-GC regions may denature more easily or be less efficiently primed.

- Cluster Generation (Illumina): The bridge amplification on the flow cell is fundamentally a PCR process, propagating the initial bias.

- Sequencing: Base calling and phasing/pre-phasing corrections can be less accurate in homopolymer or extreme GC regions.

Quantitative Impact of GC Bias

The following table summarizes typical coverage deviations observed across GC content bins in unnormalized Whole Genome Sequencing (WGS) data.

Table 1: Representative Coverage Variation by GC Content Bin

| GC Content Bin (%) | Expected Normalized Coverage (%) | Typical Observed Raw Coverage (%) | Deviation Factor |
|---|---|---|---|
| ≤ 20 | 100 | 40 - 60 | 0.4x - 0.6x |
| 30 - 40 | 100 | 80 - 95 | 0.8x - 0.95x |
| 40 - 50 | 100 | 105 - 120 | 1.05x - 1.2x |
| 50 - 60 | 100 | 110 - 125 | 1.1x - 1.25x |
| ≥ 70 | 100 | 30 - 70 | 0.3x - 0.7x |

Note: Specific deviation values depend on the sequencing platform, library prep kit, and PCR cycle count.

Protocol: Assessing GC Bias in WGS Data

This protocol details the computational workflow to quantify GC bias from a BAM file.

Objective: Generate a plot of mean coverage depth versus GC content percentage for a reference genome.

Input: Aligned sequencing reads in BAM format (sample.bam), reference genome FASTA (ref.fa).

Software: Samtools, BEDTools, R/Python with appropriate libraries.

Procedure:

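Generate GC Content Bins:

- Divide the reference genome (ref.fa) into fixed-size windows and compute the GC fraction of each window with BEDTools.

Calculate Read Depth per Window:

- Compute the mean read depth of sample.bam in each window with Samtools and BEDTools, and combine it with the per-window GC values to produce the final .bed file.

Aggregate and Visualize:

- Load the final .bed file into R/Python.
- Group windows into bins based on their GC content (e.g., 1% bins from 0% to 100%).
- Calculate the mean depth for all windows within each GC bin.
- Normalize the mean depth by the median depth across all bins.
- Plot normalized coverage (y-axis) against GC content percentage (x-axis).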
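
The aggregation step can be sketched in a few lines of Python. This is a minimal illustration, not the protocol's reference implementation: the function name `gc_coverage_profile`, the `(gc_percent, depth)` input layout, and the toy values are assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean, median

def gc_coverage_profile(windows, bin_width=1.0):
    """Aggregate per-window (gc_percent, depth) pairs into a normalized GC profile.

    Returns a dict mapping the lower edge of each GC bin (in %) to the bin's
    mean depth divided by the median of all bin means.
    """
    depths_by_bin = defaultdict(list)
    last_bin = int(100 // bin_width) - 1
    for gc_percent, depth in windows:
        # Clamp GC = 100% into the last bin so it does not open a bin of its own.
        index = min(int(gc_percent // bin_width), last_bin)
        depths_by_bin[index * bin_width].append(depth)

    bin_means = {gc: mean(depths) for gc, depths in depths_by_bin.items()}
    centre = median(bin_means.values())
    return {gc: value / centre for gc, value in sorted(bin_means.items())}

# Toy input (hypothetical values): (GC %, mean depth) for six windows.
windows = [(20.4, 15.0), (20.9, 17.0), (45.2, 30.0),
           (45.7, 34.0), (70.1, 12.0), (70.8, 12.0)]
profile = gc_coverage_profile(windows)
for gc_bin, value in profile.items():
    print(f"{gc_bin:5.1f}%  {value:.2f}")
```

The resulting dictionary can be passed directly to a plotting library, with the keys on the x-axis and the normalized coverage on the y-axis.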

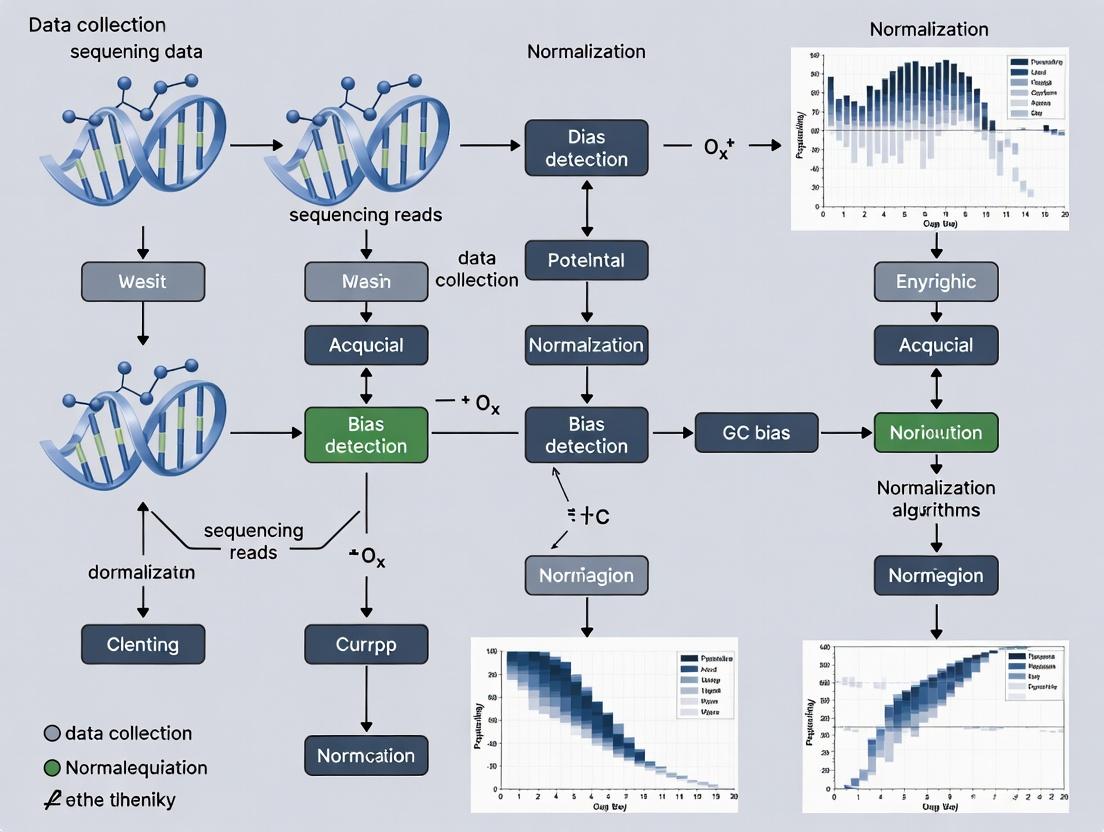

Visualization: GC Bias Assessment Workflow

Diagram Title: Computational Workflow for GC Bias Quantification

Table 2: Key Research Reagents and Solutions for GC Bias Studies

| Item | Function in GC Bias Context |
|---|---|
| PCR-Free Library Prep Kits (e.g., Illumina TruSeq DNA PCR-Free) | Eliminates the primary source of bias by omitting the PCR amplification step; crucial for establishing a baseline. |
| GC-Rich Enhancers / Additives (e.g., Q5 High GC Enhancer, DMSO, Betaine) | Chemical additives that destabilize secondary structures in high-GC regions, improving polymerase processivity during library amplification. |
| Balanced Nucleotide Mixes | Optimized dNTP solutions that can help mitigate incorporation biases during amplification. |
| Molecular Biology Grade Water | A consistent, nuclease-free water source is critical for reproducible library prep reactions. |
| High-Fidelity DNA Polymerases (e.g., Q5, KAPA HiFi) | Engineered enzymes with reduced GC-content sensitivity and lower error rates for more uniform amplification. |
| Bioanalyzer/TapeStation & qPCR Kits | For accurate quantification of library concentration and size distribution, ensuring balanced loading on the sequencer. |
| PhiX Control v3 | Provides a well-characterized spike-in control with balanced base composition for monitoring run performance and potential bias. |
| Reference Genomes with Pre-calculated GC Tracks | Essential for the bioinformatic pipeline to compute expected vs. observed coverage. |

Protocol: GC Bias Normalization Using Linear Scaling

This protocol outlines a standard in-silico correction method.

Objective: Apply a linear scaling normalization to correct observed read counts based on GC content.

Input: GC-coverage profile from Protocol 3.

Procedure:

- Generate the Observed Profile: Follow Protocol 3 to obtain a table of `GC_bin` and `mean_observed_coverage`.
- Define Expected Coverage: Calculate the global median coverage across all GC bins. This is your `expected_coverage`.
- Calculate Correction Factors: For each GC bin i, compute `correction_factor(i) = expected_coverage / mean_observed_coverage(i)`.
- Apply Correction: For each genomic window/region belonging to GC bin i, multiply its raw read count by `correction_factor(i)`.
- Re-evaluate: Recalculate the GC-coverage profile using the corrected read counts. The plot should show an approximately flat line across all GC bins.
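
As a rough sketch of the linear scaling idea, the snippet below derives one factor per GC bin as the global median coverage divided by that bin's mean observed coverage, then rescales window counts. The function names, the dictionary layout, and the numbers are illustrative assumptions.

```python
from statistics import median

def correction_factors(mean_observed_coverage):
    """Map each GC bin to expected_coverage / mean_observed_coverage(bin)."""
    expected_coverage = median(mean_observed_coverage.values())
    return {
        gc_bin: expected_coverage / observed
        for gc_bin, observed in mean_observed_coverage.items()
        if observed > 0  # bins without coverage cannot be rescaled
    }

def apply_correction(windows, factors):
    """Scale each (gc_bin, raw_count) window by the factor of its GC bin."""
    return [(gc_bin, raw * factors[gc_bin])
            for gc_bin, raw in windows if gc_bin in factors]

# Hypothetical profile: GC bin (%) -> mean observed coverage.
observed_profile = {30: 24.0, 40: 30.0, 50: 36.0, 60: 30.0, 70: 15.0}
factors = correction_factors(observed_profile)

# Hypothetical windows: (GC bin, raw read count).
corrected = apply_correction([(30, 48.0), (50, 18.0), (70, 15.0)], factors)
for gc_bin, count in corrected:
    print(gc_bin, round(count, 2))
```

Because every window in a bin shares one factor, this approach cannot capture variation within a bin, which is one reason smoother models such as LOESS are often preferred.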

Visualization: GC Bias Normalization Algorithm Concept

Diagram Title: Core Steps of Linear Scaling GC Bias Normalization

Introduction

Developing robust GC bias normalization algorithms for sequencing data requires understanding the fundamental technical artifacts that introduce GC-content-dependent bias. This document details the three primary root causes (PCR amplification, sequencing chemistry, and mapping artifacts) and provides protocols for their diagnosis and mitigation.

1. PCR Amplification Bias

During library preparation, PCR amplification unevenly enriches fragments based on their GC content. High-GC fragments exhibit lower amplification efficiency due to incomplete denaturation and polymerase stalling, while low-GC fragments may be overrepresented.

Quantitative Data: PCR Bias Impact

| GC Content Bin (%) | Theoretical Coverage | Observed Coverage (Pre-Correction) | Fold Change (Observed/Theoretical) |
|---|---|---|---|
| 30-40 | 100x | 125x | 1.25 |
| 40-50 | 100x | 105x | 1.05 |
| 50-60 (Balanced) | 100x | 100x | 1.00 |
| 60-70 | 100x | 82x | 0.82 |
| 70-80 | 100x | 65x | 0.65 |

Protocol 1.1: Assessing PCR Bias with qPCR

Objective: Quantify amplification efficiency across GC tiers.

Materials: Pre-amplified library DNA, SYBR Green master mix, GC-binned primer sets.

Procedure:

- Partition a representative genomic library into 5 GC-content bins (30-40%, 40-50%, 50-60%, 60-70%, 70-80%) via bead-based selection or by design.

- For each bin, perform a standard qPCR reaction in triplicate using primers amplifying a constant region within the fragment.

- Use a serial dilution of a control template to generate a standard curve for each reaction plate.

- Calculate the Amplification Efficiency (E) for each GC bin: E = 10^(-1/slope) - 1.

- Normalize efficiencies to the 50-60% bin. A deviation >10% indicates significant GC bias.
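
The efficiency calculation can be illustrated with a short Python sketch. It assumes that a standard-curve slope (Cq versus log10 template amount) is available for each GC bin; the helper names and the slope values are hypothetical.

```python
def standard_curve_slope(log10_amounts, cq_values):
    """Ordinary least-squares slope of Cq against log10 template amount."""
    n = len(log10_amounts)
    mean_x = sum(log10_amounts) / n
    mean_y = sum(cq_values) / n
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log10_amounts, cq_values))
    sxx = sum((x - mean_x) ** 2 for x in log10_amounts)
    return sxy / sxx

def amplification_efficiency(slope):
    """E = 10^(-1/slope) - 1; a slope near -3.32 corresponds to E close to 1 (100%)."""
    return 10 ** (-1.0 / slope) - 1.0

def flag_biased_bins(slopes_by_bin, reference_bin="50-60", tolerance=0.10):
    """Return bins whose efficiency deviates from the reference bin by more than tolerance."""
    reference = amplification_efficiency(slopes_by_bin[reference_bin])
    flagged = []
    for gc_bin, slope in slopes_by_bin.items():
        relative = amplification_efficiency(slope) / reference
        if abs(relative - 1.0) > tolerance:
            flagged.append(gc_bin)
    return flagged

# Hypothetical standard-curve slopes per GC bin.
slopes = {"30-40": -3.25, "40-50": -3.30, "50-60": -3.32,
          "60-70": -3.65, "70-80": -3.90}
flagged = flag_biased_bins(slopes)
print(flagged)
```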

Diagram: PCR Bias Mechanism & Assessment

2. Sequencing Chemistry Bias

Sequencing-by-synthesis chemistries exhibit variable performance across homopolymer regions and nucleotide compositions. Altered fluorescence intensities and phasing/pre-phasing errors correlate with local GC content.

Protocol 2.1: Evaluating Cycle-Specific Base-Call Error

Objective: Map base-call error rates to sequencing cycle and sequence context.

Materials: Sequencer-ready library, spike-in control (e.g., PhiX), alignment software (e.g., BWA, minimap2).

Procedure:

- Spike in 1% PhiX genome control into your library. Perform paired-end sequencing.
- Align reads to the PhiX reference genome using stringent parameters.
- Using tools like `Picard CollectGcBiasMetrics` or custom scripts, calculate for each sequencing cycle:
  - Error Rate: (Mismatches + Indels) / Total Bases.
  - Average GC% of fragments being sequenced in that cycle.
- Plot error rate and intensity metrics against cycle number and local GC%. Clustering of high error in later cycles for high-GC regions indicates chemistry bias.
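
A custom script for the per-cycle calculation might look like the sketch below. It is deliberately simplified: it assumes gap-free alignments supplied as `(read, reference)` string pairs in sequencing order and counts mismatches only, so indels would need separate handling. The function name and the toy reads are assumptions for illustration.

```python
def per_cycle_mismatch_rate(alignments):
    """Mismatch rate per sequencing cycle.

    alignments: iterable of (read_bases, reference_bases) pairs, gap-free and in
    sequencing order, so that index i corresponds to cycle i + 1.
    """
    mismatches, totals = [], []
    for read, reference in alignments:
        for cycle, (called, expected) in enumerate(zip(read, reference)):
            if cycle == len(totals):  # first read to reach this cycle
                totals.append(0)
                mismatches.append(0)
            totals[cycle] += 1
            mismatches[cycle] += called != expected
    return [m / t for m, t in zip(mismatches, totals)]

# Toy example: three 4-cycle reads aligned to the same reference segment.
rates = per_cycle_mismatch_rate([
    ("ACGT", "ACGT"),
    ("ACGA", "ACGT"),  # mismatch in cycle 4
    ("TCGT", "ACGT"),  # mismatch in cycle 1
])
for cycle, rate in enumerate(rates, start=1):
    print(cycle, round(rate, 3))
```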

Diagram: Sequencing Chemistry Bias Workflow

3. Mapping Artifacts

Reads from extreme GC regions map with lower confidence due to ambiguous placement or reduced uniqueness in the reference genome, leading to coverage drops.

Quantitative Data: Mapping Artifact Impact

| Region Type | Average GC% | Uniquely Mapped Reads (%) | Multi-Mapped Reads (%) | Unmapped Reads (%) |
|---|---|---|---|---|
| Low-Complexity | 25% | 85% | 12% | 3% |
| Balanced | 50% | 96% | 3% | 1% |
| High-GC (Promoter) | 70% | 78% | 18% | 4% |

Protocol 3.1: Diagnosing Mapping Ambiguity

Objective: Determine the fraction of reads lost due to non-unique mapping.

Materials: FASTQ files, reference genome, aligner with multi-mapping reporting (e.g., bowtie2 -k 10 or STAR).

Procedure:

- Align reads, allowing for the reporting of multiple alignments per read (e.g., `-k 10`).
- Parse alignment output to categorize reads: uniquely mapped, multi-mapped (with number of loci), and unmapped.
- Annotate reads with the GC content of their source fragment or original genomic window.
- Generate a histogram plotting the percentage of multi-mapped and unmapped reads against GC content bins. A spike in multi-mapping at high or low GC indicates mapping artifact bias.
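
Once each read has been annotated with a GC value and the number of reported alignments, the categorization and per-bin summary can be sketched as follows. The input layout `(gc_percent, num_alignments)`, the 10% bin width, and the function names are assumptions for this example; extracting those two values from aligner output is left out.

```python
from collections import Counter, defaultdict

CATEGORIES = ("unique", "multi", "unmapped")

def classify(num_alignments):
    """Categorize a read by the number of alignments reported for it."""
    if num_alignments == 0:
        return "unmapped"
    if num_alignments == 1:
        return "unique"
    return "multi"

def mapping_fractions_by_gc(reads, bin_width=10):
    """Percentage of unique, multi-mapped, and unmapped reads per GC bin.

    reads: iterable of (gc_percent, num_alignments) pairs.
    """
    counts = defaultdict(Counter)
    for gc_percent, num_alignments in reads:
        # Clamp GC = 100% into the last bin.
        gc_bin = min(int(gc_percent // bin_width) * bin_width, 100 - bin_width)
        counts[gc_bin][classify(num_alignments)] += 1

    result = {}
    for gc_bin in sorted(counts):
        total = sum(counts[gc_bin].values())
        result[gc_bin] = {c: 100.0 * counts[gc_bin][c] / total for c in CATEGORIES}
    return result

# Hypothetical reads: (GC %, number of reported alignments).
reads = [(25, 1), (27, 3), (52, 1), (55, 1), (71, 0), (74, 4), (78, 1), (79, 1)]
fractions = mapping_fractions_by_gc(reads)
for gc_bin, row in fractions.items():
    print(gc_bin, row)
```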

Diagram: Mapping Artifact Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Mitigating GC Bias |
|---|---|
| PCR Enzymes (e.g., KAPA HiFi) | High-fidelity polymerases with enhanced processivity on high-GC templates, reducing amplification bias. |
| Duplex-Sequencing Adapters | Allow for linear amplification or UMI-based correction, minimizing PCR cycles and associated bias. |
| GC Spike-in Controls | Synthetic oligonucleotides with known concentration spanning a GC range; used to quantify and correct bias. |
| Fragmentation Beads | Consistent, enzyme-free fragmentation (e.g., acoustic shearing) reduces initial library composition bias. |
| Methylated Adapter Kits | Prevent adapter-dimer formation, reducing the need for excessive purification that can skew GC representation. |
| Hybridization Capture Reagents | Solution-based capture (e.g., xGen) can exhibit less GC bias compared to solid-phase capture methods. |

1. Introduction

This document details the impact of false positives (FPs) and false negatives (FNs) in genomic variant calling and expression analysis, framed within a thesis investigating GC bias normalization algorithms. GC bias (non-uniform sequencing coverage correlated with local guanine-cytosine content) is a major source of technical noise that directly exacerbates FP/FN rates across assays. This application note provides protocols for mitigating these errors and quantifies their downstream consequences on biological interpretation.

2. Quantifying the Impact of GC Bias on FP/FN Rates

The following table summarizes the quantitative impact of uncorrected GC bias on FP/FN rates, as established by recent studies.

Table 1: Impact of GC Bias on FP/FN Rates Across Sequencing Assays

| Assay | Primary Impact of GC Bias | Typical FP Rate Increase (Uncorrected) | Typical FN Rate Increase (Uncorrected) | Key Downstream Consequence |
|---|---|---|---|---|
| Copy Number Variation (CNV) | Coverage fluctuation mimicking gains/losses. | ~15-25% in high/low GC regions | ~10-20% for focal events in extreme GC | Erroneous pathway enrichment; incorrect oncogene/TSG identification. |
| Single Nucleotide Variant (SNV) | Imbalanced allelic depth and lowered coverage. | Up to 5-10% in low-complexity/GC-extreme regions | Up to 15% in high-GC regions | Missed driver mutations; false somatic variants compromising target validation. |
| RNA-Seq (Differential Expression) | Gene-level read count distortion. | ~8-12% for lowly expressed genes | ~5-10% for genes with mid-to-high expression | Incorrect DE gene lists; corrupted pathway (e.g., KEGG, GO) analysis results. |

3. Protocols for GC Bias Normalization and FP/FN Mitigation

Protocol 3.1: GC Bias Correction in Whole Genome Sequencing (WGS) for CNV/SNV Calling

Objective: Normalize read depth variability due to GC content prior to variant calling to reduce FP/FN.

Materials: See Scientist's Toolkit.

Procedure:

- Alignment: Align paired-end WGS reads to the reference genome (e.g., GRCh38) using BWA-MEM or Bowtie2.
- Post-Alignment Processing: Sort, mark duplicates (Picard MarkDuplicates, also bundled with GATK), and generate a coverage histogram.
- GC Content Calculation: Using a custom script (e.g., in R or Python), calculate the GC percentage for each non-overlapping 1000-bp bin across the reference genome.
- Loess Regression Normalization:
  - a. For each bin, plot the log2(read count) against its GC percentage.
  - b. Fit a loess curve (span=0.75) to the modal trend.
  - c. Subtract the loess-predicted value from the observed log2(read count) for each bin to obtain the GC-corrected log2 ratio.
- Segmentation & Calling: Input corrected log2 ratios into a segmentation algorithm (e.g., CBS via DNAcopy) to call CNV regions.
- SNV Calling: For SNVs, use GC-corrected BAM files as input to callers (e.g., GATK Mutect2 for somatic, HaplotypeCaller for germline).
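
The loess step can be sketched in pure Python as shown below. This is a simplified stand-in rather than a reproduction of any particular package: it uses a locally weighted linear fit with tricube weights (R's `loess` defaults to local quadratic fits), evaluates the curve only at the observed GC values, and assumes all read counts are positive. Function names and toy data are illustrative.

```python
import math

def loess(x, y, span=0.75):
    """Locally weighted linear regression with tricube weights, evaluated at each x."""
    n = len(x)
    k = max(2, math.ceil(span * n))  # number of neighbours used in each local fit
    fitted = []
    for x0 in x:
        # The distance to the k-th nearest neighbour sets the local bandwidth.
        h = sorted(abs(xi - x0) for xi in x)[k - 1] or 1e-12
        w = [(1 - min(abs(xi - x0) / h, 1.0) ** 3) ** 3 for xi in x]
        sw = sum(w)
        mx = sum(wi * xi for wi, xi in zip(w, x)) / sw
        my = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sxx = sum(wi * (xi - mx) ** 2 for wi, xi in zip(w, x))
        sxy = sum(wi * (xi - mx) * (yi - my) for wi, xi, yi in zip(w, x, y))
        slope = sxy / sxx if sxx > 0 else 0.0
        fitted.append(my + slope * (x0 - mx))
    return fitted

def gc_corrected_log2(gc_percent, read_counts, span=0.75):
    """Observed log2(read count) minus the loess-predicted value for each bin."""
    log_counts = [math.log2(c) for c in read_counts]  # assumes counts > 0
    trend = loess(gc_percent, log_counts, span)
    return [obs - fit for obs, fit in zip(log_counts, trend)]

# Hypothetical bins: GC % and read counts with a curved GC trend.
gc = [30, 35, 40, 45, 50, 55, 60, 65, 70]
counts = [400, 520, 610, 660, 680, 650, 580, 470, 330]
corrected = gc_corrected_log2(gc, counts)
for g, value in zip(gc, corrected):
    print(g, round(value, 3))
```

The corrected values are on a log2 scale centred near zero, which is the form segmentation algorithms typically expect.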

Protocol 3.2: GC-Normalized Differential Expression Analysis from RNA-Seq

Objective: Generate accurate gene counts corrected for transcript-level GC bias.

Materials: See Scientist's Toolkit.

Procedure:

- Pseudo-alignment & Quantification: Quantify transcripts with Salmon in mapping-based mode against a decoy-aware transcriptome index, or with kallisto.
- GC Content Attribute: Calculate the GC content for each transcript using `biomaRt` or `GenomicFeatures` in R.
- Count Normalization: Import transcript-level abundances (TPM) and counts using `tximport` in R. Generate a gene-level count matrix.
- Exploratory Plot: Create a plot of log2(counts) vs. transcript GC content to visualize bias.
- Bias-Aware Correction: Use the `cqn` package or `EDASeq`'s within-lane normalization to derive GC-aware offsets, and supply them to `DESeq2` as gene- and sample-specific normalization factors. GC content is a per-gene property, so it cannot be entered as a term in the sample-level design formula.
- Differential Testing: Perform standard DE analysis with `DESeq2` or `limma-voom`, carrying the GC correction from the previous step into the model.

4. Visualization of Workflows and Impacts

Title: GC Bias Causes False Variants and Impacts Analysis

Title: GC Bias Normalization Experimental Workflow

5. The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for GC Bias Studies

| Item / Reagent | Provider / Package | Primary Function in Context |
|---|---|---|
| KAPA HyperPrep Kit | Roche | Library prep kit with demonstrated low GC bias. Critical for reducing bias at source. |
| Illumina NovaSeq 6000 | Illumina | Sequencing platform. Balance between output and inherent bias; requires in silico correction. |
| GATK (v4.3+) | Broad Institute | Toolkit for variant discovery. Includes modules for BAM processing and SNV/indel calling post-correction. |
| DNAcopy (R package) | Bioconductor | Circular Binary Segmentation for CNV calling on GC-corrected log-R ratios. |
| DESeq2 / limma-voom | Bioconductor | Differential expression analysis. Accepts GC-aware normalization factors or offsets. |
| EDASeq / cqn | Bioconductor | Specifically designed for within-lane and GC-content normalization of sequencing data. |
| Salmon | COMBINE-lab | Fast, bias-aware transcript quantification for RNA-Seq, includes GC bias modeling options. |
| GRCh38 / GENCODE v44 | Genome Reference Consortium / GENCODE | Standard reference genome and annotation. Essential for accurate GC content calculation per feature. |

Within the broader thesis on GC bias normalization algorithms for sequencing data research, accurate visualization and quantification of GC bias is a critical first step. GC bias—the variation in sequencing coverage as a function of genomic guanine-cytosine (GC) content—compromises downstream analyses including copy number variant detection, transcript quantification, and methylation analysis. This application note details the protocols for generating diagnostic plots and calculating key metrics to assess GC bias in next-generation sequencing (NGS) data, providing researchers, scientists, and drug development professionals with standardized methods for diagnostic evaluation.

Key Metrics for Quantifying GC Bias

The following metrics provide a quantitative summary of GC bias severity, enabling comparison across samples and sequencing runs.

Table 1: Key Metrics for GC Bias Quantification

| Metric | Formula/Description | Interpretation | Optimal Value |
|---|---|---|---|
| Spread (Coefficient of Variation) | (Standard Deviation of Coverage per GC bin / Mean Coverage) × 100 | Measures the overall variability of coverage across GC bins. Lower values indicate less bias. | < 10-15% |
| Correlation (Pearson's r) | Correlation coefficient between GC% and mean coverage. | Strength and direction of linear relationship. | Closer to 0 |
| Range | (Max Mean Coverage - Min Mean Coverage) / Mean Coverage | Normalized difference between the highest and lowest coverage bins. | Minimized |
| Residual Error | Sum of squared deviations from a loess-smoothed or polynomial fit. | Captures non-linear bias patterns. | Minimized |
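
The first three metrics in the table can be computed from a per-bin coverage profile with the sketch below. It uses the population standard deviation for the spread, which is an assumption because the table does not specify the estimator; the function name and toy profile are also illustrative. The residual error metric is omitted because it depends on the chosen smoother.

```python
import math
from statistics import mean, pstdev

def gc_bias_metrics(gc_percent, mean_coverage):
    """Spread (CV, %), Pearson's r, and normalized range for a GC-coverage profile."""
    mu = mean(mean_coverage)
    spread = 100.0 * pstdev(mean_coverage) / mu
    cov_range = (max(mean_coverage) - min(mean_coverage)) / mu

    gx = mean(gc_percent)
    sxy = sum((g - gx) * (c - mu) for g, c in zip(gc_percent, mean_coverage))
    sxx = sum((g - gx) ** 2 for g in gc_percent)
    syy = sum((c - mu) ** 2 for c in mean_coverage)
    r = sxy / math.sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0

    return {"spread_cv_percent": spread, "pearson_r": r, "range": cov_range}

# Hypothetical symmetric, peaked profile: coverage is highest at 50% GC.
metrics = gc_bias_metrics([30, 40, 50, 60, 70], [20.0, 30.0, 40.0, 30.0, 20.0])
for name, value in metrics.items():
    print(name, round(value, 3))
```

In this toy profile Pearson's r is zero even though the spread and range are large, which illustrates why a linear correlation alone can miss the unimodal shape typical of GC bias.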

Protocols for Generating Diagnostic Plots

This protocol describes the workflow from aligned sequencing data (BAM files) to the generation of the Coverage vs. GC% plot.

Protocol 3.1: Computational Generation of Coverage vs. GC% Plots

Objective: To visualize the relationship between genomic GC content and sequencing read depth.

Input: Coordinate-sorted BAM file, reference genome FASTA file, and its corresponding GC content pre-computed file (e.g., from gcCounter).

Software: Samtools, BEDTools, R/Python with ggplot2/matplotlib.

Define Genomic Windows:

- Partition the reference genome into non-overlapping windows of a defined size (e.g., 1 kb, 20 kb for whole-genome sequencing; exonic windows for capture data). Generate a BED file.

- Critical: Window size must be chosen based on sequencing design and expected fragment length.

Calculate GC Content per Window:

- Use `bedtools nuc` with the reference genome FASTA and the window BED file to compute the GC fraction for each genomic window: `bedtools nuc -fi reference.fasta -bed windows.bed > gc_content.bed`

Calculate Mean Coverage per Window:

- Use `samtools depth` and `bedtools map`, or a coverage calculator like `mosdepth`: `mosdepth --by windows.bed output_prefix sample.bam`

Merge and Aggregate Data:

- Merge the GC content and mean coverage tables by genomic coordinates.

- Group windows into bins based on their GC fraction (e.g., 0-1%, 1-2%, ..., 99-100%). Calculate the mean coverage for all windows within each GC bin.

Generate the Diagnostic Plot:

- Plot the mean coverage (y-axis) against the GC bin midpoint (x-axis).

- Fit and overlay a smoothing curve (e.g., LOESS regression).

- Indicate the global mean coverage as a horizontal line.

- Annotate plot with key metrics (Spread, Correlation).
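
The merge step can be sketched in Python as follows. The column positions are assumptions that hold for a three-column window BED: `bedtools nuc` then reports the GC fraction in column 5 after a `#`-prefixed header, and the mosdepth per-region output lists chromosome, start, end, and mean depth. The function names and in-memory sample rows are illustrative.

```python
import csv

def read_gc_fractions(lines):
    """Parse `bedtools nuc` output for a 3-column BED (GC fraction in column 5)."""
    gc = {}
    for row in csv.reader(lines, delimiter="\t"):
        if not row or row[0].startswith("#"):
            continue  # skip the header line
        gc[(row[0], int(row[1]), int(row[2]))] = float(row[4])
    return gc

def read_mean_depths(lines):
    """Parse per-region depth rows: chrom, start, end, mean depth."""
    depth = {}
    for row in csv.reader(lines, delimiter="\t"):
        if not row or row[0].startswith("#"):
            continue
        depth[(row[0], int(row[1]), int(row[2]))] = float(row[3])
    return depth

def merge_tables(gc, depth):
    """Join both tables on (chrom, start, end); returns (chrom, start, end, gc, depth)."""
    return [key + (gc[key], depth[key]) for key in sorted(gc.keys() & depth.keys())]

# In-memory stand-ins for gc_content.bed and the per-region depth file.
nuc_lines = [
    "#1_usercol\t2_usercol\t3_usercol\t4_pct_at\t5_pct_gc\t6_num_A\t7_num_C"
    "\t8_num_G\t9_num_T\t10_num_N\t11_num_oth\t12_seq_len",
    "chr1\t0\t1000\t0.60\t0.40\t300\t200\t200\t300\t0\t0\t1000",
    "chr1\t1000\t2000\t0.45\t0.55\t225\t275\t275\t225\t0\t0\t1000",
]
depth_lines = ["chr1\t0\t1000\t31.5", "chr1\t1000\t2000\t28.0"]

merged = merge_tables(read_gc_fractions(nuc_lines), read_mean_depths(depth_lines))
for record in merged:
    print(record)
```

For real files, the same functions accept an open file handle; a gzip-compressed depth file can be opened with `gzip.open(path, "rt")`.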

Protocol 3.2: In-silico Spike-in Assessment of GC Bias

Objective: To control for biological confounders and isolate technical GC bias using synthetic sequences.

Input: A set of synthetic DNA sequences (e.g., from Spike-in controls like ERCC ExFold RNA mixes or custom DNA fragments) with known concentrations spanning a wide GC range, spiked into the sample prior to library preparation.

Spike-in Addition:

- Add a known molar quantity of synthetic spike-in sequences to the patient/experimental sample prior to DNA/RNA extraction and library construction.

Sequencing and Alignment:

- Sequence the pooled library. Align reads to a combined reference containing both the experimental genome and the spike-in sequences.

Isolated Analysis:

- Extract alignments corresponding only to the spike-in references.

- Perform steps 3.1.2-3.1.5 exclusively on the spike-in sequences. The observed coverage vs. GC relationship directly reflects technical bias, as biological factors are absent.

Bias Correction Calibration:

- The deviation from the expected uniform coverage across spike-ins informs and calibrates the parameters of GC bias normalization algorithms applied to the experimental data.

Title: Computational workflow for GC bias diagnostic plot.

Title: In-silico spike-in protocol for isolating technical GC bias.

The Scientist's Toolkit

Table 2: Essential Research Reagents & Tools

| Item | Function in GC Bias Analysis | Example/Note |
|---|---|---|
| GC-Rich/Poor Control DNA | Provides known-molecule standards to track bias through wet-lab steps. | Horizon Discovery's Multiplex I cDNA, AT/GC-rich synthetic fragments. |
| Spike-in RNA/DNA Mixes | Internal controls for isolating technical bias from biological variation. | ERCC ExFold RNA Spike-in Mixes (Thermo Fisher), SIRV Sets (Lexogen). |
| Aligners | Map reads to the reference. These aligners do not model GC content themselves, so settings (e.g., multi-mapping handling) should be chosen to limit mapping artifacts in GC-extreme regions. | BWA-MEM, Bowtie2 with appropriate settings. |
| Coverage Profilers | Fast, efficient tools for calculating coverage across genomic windows. | mosdepth, bedtools coverage, deepTools bamCoverage. |
| GC Bias Norm. Software | Algorithms to correct observed bias in coverage data. | GATK GC Bias Correction, cn.MOPS, normalizeCoverage (Bioconductor). |
| Visualization Packages | Libraries for generating publication-quality diagnostic plots. | R: ggplot2, gt. Python: matplotlib, seaborn. |

Within the broader thesis on GC bias normalization algorithms for sequencing data, a critical distinction must be made. While most observed GC-content bias arises from technical artifacts during library preparation, amplification, and sequencing, genuine biological correlations between genomic features and GC content also exist. Misidentifying biological correlation as technical bias can lead to the erroneous normalization of true biological signal, confounding downstream analysis in biomarker discovery, differential expression, and variant calling. This document provides application notes and protocols to distinguish between these two sources of GC correlation.

Table 1: Discriminating Features of GC Correlation Sources

| Feature | Technical Confounder | Biological Confounder |
|---|---|---|
| Pattern Across Samples | Consistent pattern across all samples/runs from a given platform/protocol. | Variable across sample groups (e.g., disease vs. control); may follow biological covariates. |
| Genomic Uniformity | Correlation is relatively uniform across the genome for similar genomic regions. | Concentrated in functional genomic elements (e.g., gene-rich areas, specific chromatin domains). |
| Reproducibility | Reproducible across technical replicates; mitigated by changing library kit or sequencer. | Reproducible across biological replicates; persists despite technical changes. |
| Effect on Metrics | Leads to spurious correlations between coverage/expression and GC content, even in inert DNA. | Co-localizes with validated functional annotations; linked to known biological mechanisms. |
| Normalization Outcome | Standard GC bias normalization (e.g., Loess, GC-bin) improves accuracy and uniformity. | Similar normalization attenuates or removes genuine biological signal. |

Table 2: Common Assays and Their Typical GC Bias Profile (Current Platforms)

| Assay | Primary Source of GC Correlation | Recommended Investigation Method |
|---|---|---|
| Whole Genome Sequencing (WGS) | Strong technical bias during PCR amplification. | Compare coverage in genomic bins vs. GC content; use spike-in controls. |
| RNA-Seq | Mixed: Technical bias in cDNA synthesis, and biological correlation with gene expression regulation. | Analyze correlation in exogenous spike-ins (technical) vs. endogenous genes (biological). |
| ChIP-Seq | Predominantly biological (open chromatin is GC-rich), but with technical PCR bias. | Use input DNA control to isolate technical component. |
| ATAC-Seq | Strong biological signal (accessible regions are GC-rich), with minor technical bias. | Compare to DNase-seq and chromatin state maps. |

Experimental Protocols

Protocol 1: Disentangling Technical Bias Using Exogenous Spike-in Controls

Objective: To isolate and quantify the technical component of GC bias.

Materials: ERCC ExFold RNA Spike-in Mixes (Thermo Fisher) or Sequins (synthetic DNA mimics); standard NGS library prep kit.

Procedure:

- Spike-in Addition: Prior to library preparation, add a known quantity of exogenous spike-in sequences (e.g., ERCC for RNA-Seq, Sequins for WGS) to your sample. These molecules have a wide, predetermined range of GC contents but are biologically inert in your system.
- Library Preparation & Sequencing: Proceed with standard library preparation and sequencing.
- Data Analysis:
  - Map reads to a combined reference genome + spike-in sequence index.
  - For each spike-in molecule, calculate observed read count (or coverage) and its expected abundance based on input amount.
  - Plot the log-ratio (observed/expected) against the GC content of each spike-in.
- Interpretation: Any systematic correlation in this plot reflects technical bias, because the spike-ins carry no biological signal. Model this relationship (e.g., polynomial fit).
- Application: This model can be used to correct the technical bias component for endogenous genomic features, preserving biological correlations.
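
The modelling step can be sketched as a least-squares polynomial fit of the spike-in log-ratios against GC content. This is a minimal illustration under several assumptions: a quadratic is used as the polynomial, GC is expressed as a fraction, expected counts are already scaled to sequencing depth, and all names and values are hypothetical.

```python
import math

def polyfit(x, y, degree=2):
    """Least-squares polynomial fit via the normal equations; returns [c0, c1, ...]."""
    m = degree + 1
    # Augmented normal-equation matrix [A | b].
    a = [[sum(xi ** (i + j) for xi in x) for j in range(m)]
         + [sum(yi * xi ** i for xi, yi in zip(x, y))]
         for i in range(m)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(m):
            if r != col:
                f = a[r][col] / a[col][col]
                a[r] = [vr - f * vc for vr, vc in zip(a[r], a[col])]
    return [a[i][m] / a[i][i] for i in range(m)]

def predict(coeffs, gc_fraction):
    """Evaluate the fitted technical-bias curve (log2 scale) at a GC fraction."""
    return sum(c * gc_fraction ** i for i, c in enumerate(coeffs))

# Hypothetical spike-ins: GC fraction, expected and observed read counts.
gc = [0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
expected = [1000.0] * len(gc)
observed = [520.0, 760.0, 940.0, 1000.0, 930.0, 750.0, 500.0]

log_ratios = [math.log2(o / e) for o, e in zip(observed, expected)]
coeffs = polyfit(gc, log_ratios, degree=2)

# Correct an endogenous feature: subtract the predicted technical bias
# from its log2 signal (the feature values here are made up).
feature_gc, feature_log2_signal = 0.7, 8.0
corrected_signal = feature_log2_signal - predict(coeffs, feature_gc)
print(round(corrected_signal, 3))
```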

Protocol 2: Validating Biological GC Correlation via Orthogonal Assays

Objective: To confirm that a GC-correlated signal has a biological basis.

Materials: Samples for primary NGS assay (e.g., ATAC-seq) and for orthogonal assay (e.g., DNase-seq, ChIP-seq for histone marks).

Procedure:

- Primary Assay: Generate your primary dataset (e.g., ATAC-seq peaks).
- GC Correlation Analysis: Calculate the GC content of signal regions (e.g., ATAC peaks) and compare to size-matched, random genomic regions. A significant difference suggests a potential biological link.
- Orthogonal Validation:
  - Perform an orthogonal, biologically related assay on the same biological samples (e.g., DNase-seq, which also detects open chromatin but uses a different enzymatic principle).
  - Identify signal regions from the orthogonal assay.
- Co-localization Analysis:
  - Overlap the GC-correlated regions from the primary assay with regions from the orthogonal assay.
  - Use statistical tests (e.g., hypergeometric) to determine if the overlap is significantly greater than expected by chance.
- Interpretation: Significant co-localization strongly supports that the GC correlation is driven by shared underlying biology (e.g., open chromatin structure), not a technical artifact unique to one protocol.
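
The hypergeometric overlap test can be sketched with the standard library alone. The framing below is an assumption made for the example: the genome is tiled into equal-sized bins that serve as the universe, and each bin is marked as hit or not by each assay. The counts are hypothetical.

```python
from math import comb

def hypergeom_sf(k, population, successes, draws):
    """P(X >= k) when drawing `draws` items from `population`, of which `successes` are marked."""
    upper = min(successes, draws)
    total = comb(population, draws)
    tail = sum(comb(successes, i) * comb(population - successes, draws - i)
               for i in range(k, upper + 1))
    return tail / total

# Hypothetical counts, with the genome tiled into 1000 candidate bins:
#   200 bins carry signal in the orthogonal assay,
#   100 bins are GC-correlated regions from the primary assay,
#   60 of those 100 also carry orthogonal signal (20 expected by chance).
p_value = hypergeom_sf(k=60, population=1000, successes=200, draws=100)
print(f"{p_value:.3e}")
```

The choice of universe matters: restricting it to mappable or assayable bins usually gives a more conservative and more defensible p-value than using the whole genome.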

Diagrams

Diagram 1: Decision Workflow for GC Correlation Analysis

Diagram 2: Spike-in Protocol to Isolate Technical Bias

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for GC Bias Investigation

| Item | Vendor Examples (Current) | Function in Analysis |
|---|---|---|
| Exogenous RNA Spike-in Mixes | ERCC RNA Spike-In Mix (Thermo Fisher), SIRVs (Lexogen) | Provides GC-diverse, biologically inert controls for RNA-Seq to isolate technical bias. |
| Exogenous DNA Spike-in Controls | Sequins (Garvan Institute), PhiX Control v3 (Illumina) | Synthetic DNA mimics or non-host genomes used in WGS/ChIP-seq to model technical bias. |
| Ultrapure, GC-Standard DNA/RNA | Human Reference Genomic DNA (e.g., NA12878, Coriell), Synthetic Oligo Pools | Provides a uniform background to run control experiments assessing baseline technical bias of a platform. |
| Automated Nucleic Acid Shearing System | Covaris, Diagenode Bioruptor | Produces consistent fragment size distributions, reducing a major source of technical variability that interacts with GC bias. |
| PCR-Free Library Prep Kits | Illumina DNA PCR-Free Prep, KAPA HyperPrep | Minimizes the introduction of GC bias during amplification, a major technical confounder. |
| Bias-Correcting Polymerases | Q5 High-Fidelity DNA Polymerase (NEB), KAPA HiFi HotStart ReadyMix | Enzymes engineered for uniform amplification across varying GC templates, reducing technical bias. |

A Practical Guide to GC Bias Normalization Algorithms and Tools

Application Notes

Within the context of GC bias normalization for high-throughput sequencing data, algorithmic strategies have evolved from rudimentary adjustments to sophisticated, model-based corrections. This progression is critical for accurate downstream analyses in genomics, variant calling, and biomarker discovery for drug development.

Evolution of Normalization Approaches: Early methods treated GC bias as a simple scaling problem, using linear corrections based on the observed vs. expected read counts across GC-content bins. Modern approaches recognize the non-linear, sample-specific, and often library preparation-dependent nature of the bias, employing advanced regression and machine learning models that account for these complexities and integrate multiple covariates.

Key Quantitative Comparison:

Table 1: Comparison of GC Bias Normalization Algorithm Classes

| Algorithm Class | Key Principle | Typical Use Case | Advantages | Limitations |
|---|---|---|---|---|
| Simple Scaling | Linear adjustment per GC-bin (e.g., median scaling). | Preliminary data exploration, shallow sequencing. | Fast, transparent, easy to implement. | Assumes uniform bias, fails with non-linear effects. |
| LOESS Regression | Local polynomial regression of read count vs. GC fraction. | Standard WGS/exome data with moderate bias. | Models non-linear trends, robust to local variation. | Can be sensitive to parameter choice, may over/under-smooth. |
| Advanced Regression | Generalized linear models (GLMs), elastic nets, or quantile regression incorporating multiple covariates (e.g., mappability, dinucleotide content). | Complex samples (FFPE, low-input), precision applications. | Highly flexible, controls for confounders, improves accuracy. | Computationally intensive, risk of overfitting, requires careful validation. |
| Machine Learning | Random Forest or CNN models trained on sequence features to predict expected coverage. | Cutting-edge research, highly heterogeneous data. | Captures complex, high-order interactions without explicit formula. | "Black-box" nature, requires large training sets, complex deployment. |

Experimental Protocols

Protocol 1: Benchmarking GC Normalization Algorithms for Variant Calling

Objective: To evaluate the efficacy of different normalization algorithms in improving SNP/Indel calling accuracy.

Materials: Paired tumor-normal WGS datasets (e.g., from publicly available repositories like ICGC or TCGA), raw sequencing reads (FASTQ), reference genome (GRCh38), BWA-MEM2, GATK, Samtools, R/Bioconductor packages (cn.mops, QDNAseq or custom scripts).

Procedure:

- Data Processing: Align all FASTQ files to the reference genome using BWA-MEM2. Sort and index BAM files using Samtools.

- Generate GC Content Track: Calculate GC percentage for fixed-size genomic bins (e.g., 1 kb) across the reference genome using a custom script.

- Apply Normalizations:

- Control: Use unnormalized read counts per bin.

- Simple Scaling: Compute the median read count across all bins. For each GC-bin, calculate a scaling factor as (global median / bin median). Multiply counts in each bin by its factor.

- LOESS Normalization: Fit a LOESS curve (span=0.75) to read count as a function of GC percentage. Divide observed counts by the LOESS-predicted value for its GC bin.

- Advanced Regression: Fit a Poisson or Negative Binomial GLM of the form `Read Count ~ GC + GC^2 + Mappability + Repetitive Content`, then divide the observed counts by the fitted values to normalize them.

- Variant Calling: Perform duplicate marking and base quality score recalibration. Call variants using GATK HaplotypeCaller on both raw and normalized BAM files for tumor and normal samples.

- Analysis: Compare variant call sets against a validated truth set (e.g., Genome in a Bottle). Calculate precision, recall, and F1-score for each normalization method.
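The simple-scaling step of this protocol can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function name `median_scale_by_gc`, the 5-percentage-point GC strata, and the toy counts are assumptions made for the example.

```python
from collections import defaultdict
from statistics import median


def median_scale_by_gc(counts, gc_percent, bin_width=5):
    """Scale each genomic bin by (global median / median of its GC stratum)."""
    global_median = median(counts)
    # Group read counts by GC stratum (e.g., 40-45% GC with bin_width=5).
    strata = defaultdict(list)
    for count, gc in zip(counts, gc_percent):
        strata[int(gc // bin_width)].append(count)
    # One multiplicative factor per stratum; leave empty-coverage strata alone.
    factors = {}
    for key, values in strata.items():
        stratum_median = median(values)
        factors[key] = global_median / stratum_median if stratum_median > 0 else 1.0
    return [c * factors[int(gc // bin_width)] for c, gc in zip(counts, gc_percent)]


# Toy data: low- and high-GC bins are under-covered relative to mid-GC bins.
counts = [50, 54, 100, 104, 96, 60, 64]
gc = [22, 23, 41, 42, 43, 71, 72]
scaled = median_scale_by_gc(counts, gc)
```

After scaling, every GC stratum has the same median as the whole data set, which is exactly the assumption of uniform bias within a stratum that Table 1 lists as this method's limitation.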

Protocol 2: Normalization for Copy Number Variation (CNV) Analysis in Cancer

Objective: To assess impact of GC correction on CNV segmentation and detection.

Materials: As in Protocol 1, plus CNV calling software (DNAcopy, GISTIC).

Procedure:

- Bin Count Extraction: Generate read counts for fixed bins (20-50 kb) from normalized (via each method) and unnormalized BAMs.

- Segment & Call CNVs: Use circular binary segmentation (`DNAcopy`) to identify genomic segments with abnormal log2 ratios (sample/control).

- Evaluation: Compare identified CNV segments against orthogonal validation data (e.g., array CGH or digital PCR). Key metrics: False Discovery Rate (FDR) for amplifications/deletions, breakpoint accuracy.

Visualization

Title: GC Bias Normalization Algorithm Workflow

Title: Algorithm Selection Decision Tree

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for GC Bias Normalization Experiments

| Item | Function in GC Bias Studies |

|---|---|

| High-Quality Reference Genomes (e.g., GRCh38, CHM13) | Provides the baseline sequence for calculating GC content and mappability for each genomic bin. |

| Calibration DNA Standards (e.g., Genome in a Bottle, Seraseq) | Provides orthogonal truth sets for benchmarking the accuracy of normalization on variant or CNV calls. |

| Library Preparation Kits with GC Mitigation (e.g., Kapa HiFi, SPLAT) | Reagents designed to minimize the introduction of GC bias during PCR amplification, serving as a pre-emptive control. |

| Bioinformatics Suites (GATK, BWA, Samtools) | Core tools for read alignment, file manipulation, and initial data processing prior to normalization. |

| Statistical Software & Packages (R/Bioconductor: DNAcopy, cn.mops, EDASeq) | Provide implemented functions for LOESS, regression-based normalization, and downstream structural variant analysis. |

| Compute Infrastructure (HPC clusters, Cloud computing) | Essential for processing large sequencing datasets and running computationally intensive advanced regression models. |

Application Notes and Protocols

Within the broader thesis on GC bias normalization algorithms for sequencing data research, LOESS (Locally Estimated Scatterplot Smoothing) regression for GC-content correction is a fundamental computational technique. It addresses systematic biases in next-generation sequencing (NGS) where read counts or coverage correlate with the GC content of genomic regions. This bias adversely affects copy number variant (CNV) detection, comparative genomic hybridization (CGH), and gene expression analysis. The implementation, often referred to as CorrectGC or similar, fits a smooth curve to the observed read count vs. GC% relationship, which is then used to normalize the data, yielding a more accurate biological signal.

Core Principles of LOESS/GC Curve Fitting

LOESS is a non-parametric method that fits simple models (e.g., polynomials) to localized subsets of data. For GC correction:

- Input Data: Binned genomic regions (e.g., windows, exons, genes) with two key metrics: (1) observed read count/coverage and (2) GC percentage.

- Fitting Process: For each data point, a low-degree polynomial is fitted to a neighborhood of points, weighted by their distance from the point of interest. The smoothed value at that point is the value of the fitted polynomial.

- Normalization: The observed read count is divided by the LOESS-fitted expected value for its specific GC%, generating a normalized copy number ratio or coverage score.

Table 1: Key Parameters in LOESS/GC Normalization

| Parameter | Typical Range/Choice | Impact on Curve Fitting |

|---|---|---|

| Span (Bandwidth) | 0.2 - 0.75 | Controls smoothness. Lower span = more local detail/noise; higher span = greater smoothness. |

| Polynomial Degree | 1 (Linear) or 2 (Quadratic) | Complexity of the local fit. Degree 1 is standard for GC correction. |

| Weighting Function | Tricube (default) | Gives more weight to points closer to the estimation point. |

| Genomic Bin Size | 1 kb - 50 kb (WGS) / Exon-level (Targeted) | Affects data distribution. Smaller bins = noisier relationship. |

| Iterations | 1-3 (with robust fitting) | Reduces influence of outliers during fitting. |

Detailed Experimental Protocol: CorrectGC Workflow for WGS CNV Analysis

Objective: Normalize read depth from whole-genome sequencing for accurate CNV calling.

Materials & Input Data:

- Aligned Sequencing Reads: BAM/CRAM files.

- Reference Genome: FASTA file for GC content calculation.

- Genomic Interval List: BED file defining bins (e.g., 20 kb non-overlapping windows).

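Procedure:

Step 1: Generate Read Counts per Genomic Bin.

- Use tools like `mosdepth`, `bedtools multicov`, or `samtools bedcov`.

- Command Example (bedtools, with placeholder file names): `bedtools multicov -bams sample.bam -bed bins.bed > counts.txt`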

Step 2: Calculate GC Percentage for Each Bin.

- Extract sequence for each bin from the reference genome and compute GC%.

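- Command Example (bedtools & awk, with placeholder file names; assumes a 3-column BED, so the GC fraction is column 5 of the `bedtools nuc` output): `bedtools nuc -fi reference.fa -bed bins.bed | awk 'NR>1 {print $1, $2, $3, $5}' > gc.txt`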
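For readers who want to see what the GC calculation does rather than call a tool, here is a minimal pure-Python sketch. The function name `gc_percent_per_bin` and the toy sequence are illustrative assumptions. The sketch also excludes ambiguous bases (N) from the denominator, which is a design choice that may differ from what a given tool reports.

```python
def gc_percent_per_bin(sequence, bin_size):
    """Return (start, end, gc_percent) for consecutive fixed-size bins.

    gc_percent is None when a bin contains no A/C/G/T bases (e.g., all N).
    """
    results = []
    seq = sequence.upper()
    for start in range(0, len(seq), bin_size):
        window = seq[start:start + bin_size]
        gc = window.count("G") + window.count("C")
        at = window.count("A") + window.count("T")
        called = gc + at  # ambiguous bases are ignored (assumption)
        pct = 100.0 * gc / called if called else None
        results.append((start, start + len(window), pct))
    return results


# Toy 16-bp "chromosome" split into 4-bp bins.
toy = "GGCC" + "ATAT" + "GCAT" + "NNNN"
bins = gc_percent_per_bin(toy, 4)
```

Bins that come back as `None` correspond to assembly gaps and are natural candidates for the filtering in Step 3.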

Step 3: Merge and Filter Data.

- Merge count and GC tables. Remove bins with extreme GC (<20% or >80%) or in problematic genomic regions (e.g., gaps, telomeres).
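The merge-and-filter step can be sketched as a keyed join followed by a GC range check. This is an illustration under stated assumptions: bins are keyed by `(chrom, start, end)` tuples, the exclusion set stands in for a gap or blacklist file, and `merge_and_filter` is a hypothetical helper name.

```python
def merge_and_filter(counts_by_bin, gc_by_bin, excluded_bins=frozenset(),
                     gc_min=20.0, gc_max=80.0):
    """Join counts and GC% on the bin key and drop unusable bins."""
    merged = []
    # Only bins present in both tables can be modelled.
    for key in sorted(counts_by_bin.keys() & gc_by_bin.keys()):
        gc = gc_by_bin[key]
        if key in excluded_bins or gc is None:
            continue  # problematic region or no callable sequence
        if not (gc_min <= gc <= gc_max):
            continue  # extreme GC: too few bins to fit a stable trend
        merged.append((key, counts_by_bin[key], gc))
    return merged


counts = {("chr1", 0, 20000): 410, ("chr1", 20000, 40000): 95,
          ("chr1", 40000, 60000): 388, ("chr1", 60000, 80000): 402}
gc = {("chr1", 0, 20000): 41.2, ("chr1", 20000, 40000): 15.8,
      ("chr1", 40000, 60000): 55.0, ("chr1", 60000, 80000): 47.3}
excluded = {("chr1", 60000, 80000)}  # e.g., overlaps an assembly gap
kept = merge_and_filter(counts, gc, excluded)
```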

Step 4: Perform LOESS Fitting and Normalization (R Implementation).
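As a language-neutral illustration of this step, the following pure-Python sketch fits a degree-1 LOESS curve with tricube weights and divides each count by its fitted value. It is a simplified teaching version, not the `stats::loess()` implementation: there are no robustness iterations, the cost is quadratic in the number of bins, and the names `loess_fit` and `gc_normalize` as well as the synthetic data are assumptions.

```python
import math


def loess_fit(x, y, span=0.75):
    """Degree-1 LOESS: fitted value at each x[i] from a tricube-weighted local line."""
    n = len(x)
    k = min(n, max(2, math.ceil(span * n)))  # neighbours used per local fit
    fitted = []
    for x0 in x:
        dists = sorted(abs(xi - x0) for xi in x)
        h = dists[k - 1] or 1e-12  # bandwidth: distance to the k-th nearest point
        w = [(1.0 - min(abs(xi - x0) / h, 1.0) ** 3) ** 3 for xi in x]
        sw = sum(w)
        mx = sum(wi * xi for wi, xi in zip(w, x)) / sw
        my = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sxx = sum(wi * (xi - mx) ** 2 for wi, xi in zip(w, x))
        sxy = sum(wi * (xi - mx) * (yi - my) for wi, xi, yi in zip(w, x, y))
        slope = sxy / sxx if sxx > 0 else 0.0
        fitted.append(my + slope * (x0 - mx))
    return fitted


def gc_normalize(counts, gc_percent, span=0.75):
    """Observed count divided by the LOESS-expected count for its GC%."""
    expected = loess_fit(gc_percent, counts, span)
    return [c / e if e > 0 else float("nan") for c, e in zip(counts, expected)]


# Synthetic bias: coverage peaks at 50% GC and falls off on both sides.
gc = list(range(30, 71))
counts = [100 - 0.05 * (g - 50) ** 2 for g in gc]
ratios = gc_normalize(counts, gc, span=0.3)
```

Because the toy data contain only the GC trend, the resulting ratios sit close to 1. On real data, deviations from 1 that survive the correction are the copy number signal passed on to segmentation.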

Step 5: Visualization and QC.

- Plot observed log2(count) vs. GC% before and after correction. The corrected values should show no systematic GC dependency.
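Alongside the plot, a single number can summarize residual GC dependency. The sketch below computes the Pearson correlation between GC% and log2 counts before and after correction. The data are synthetic and the known linear trend stands in for the output of the normalization step, so treat this as an illustration of the check rather than a validation result.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


gc = [30, 35, 40, 45, 50, 55, 60, 65, 70]
signal = [0.1, -0.1, 0.1, -0.1, 0.1, -0.1, 0.1, -0.1, 0.1]  # GC-independent variation
trend = [0.02 * (g - 50) for g in gc]                        # imposed GC bias (log2 scale)
raw_log2 = [6 + t + s for t, s in zip(trend, signal)]
corrected_log2 = [r - t for r, t in zip(raw_log2, trend)]

r_before = pearson(gc, raw_log2)
r_after = pearson(gc, corrected_log2)
```

A correlation near zero after correction is necessary but not sufficient: a symmetric, hump-shaped residual can also give a correlation near zero, which is why the visual check remains part of the step.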

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for GC Bias Normalization

| Item | Function/Description | Example Tools/Implementations |

|---|---|---|

| Sequence Read Counter | Calculates coverage per genomic region from aligned reads. | mosdepth, bedtools multicov, GATK DepthOfCoverage |

| GC Content Calculator | Computes the fraction of G and C bases for defined genomic intervals. | bedtools nuc, Pyfaidx (Python) |

| LOESS Fitting Engine | Core algorithm performing the local regression smoothing. | R stats::loess(), Python statsmodels.nonparametric.smoothers_lowess |

| Normalization Pipeline | Integrates steps from counting to correction. | cn.mops, QDNAseq, HMMcopy, in-house R/Python scripts. |

| Visualization Package | Generates diagnostic plots for QC of bias correction. | R ggplot2, Python matplotlib/seaborn |

| Benchmark Dataset | Control sample(s) with known copy number landscape for validation. | NA12878 (Genome in a Bottle) cell line, simulated spike-in data. |

Visualizing the Workflow and Logic

Diagram 1: LOESS GC Normalization Pipeline

Diagram 2: LOESS Local Fitting Logic

This application note details read-count based methods for Copy Number Variation (CNV) analysis, specifically focusing on the Circular Binary Segmentation (CBS) algorithm and its implementation in the DNAcopy package. This work is situated within a broader thesis investigating GC bias normalization algorithms for next-generation sequencing (NGS) data. Accurate CNV detection is fundamentally dependent on the effective correction of sequence-derived biases, with GC content being a predominant confounding factor. These segmentation methods operate on normalized read-count data, where the precision of the input directly dictates the accuracy of the identified copy number segments. Therefore, the protocols herein assume the use of optimally bias-corrected read-count data as a critical pre-processing step.

- CBS (Circular Binary Segmentation): A widely-used change-point detection algorithm that recursively partitions chromosomal data into segments of constant copy number. It uses a maximal t-statistic to test for a change-point between two adjacent segments and continues until no statistically significant change-points remain.

- DNAcopy: An R/Bioconductor package that implements the CBS algorithm, along with smoothing and outlier detection functions. It is the standard implementation for array-based and sequencing-based CNV analysis.

- Segmentation (General Concept): The overarching process of dividing a sequence of normalized read-counts (log₂ ratios) along a chromosome into discrete intervals, each hypothesized to originate from a region of constant copy number.
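To make the change-point idea concrete, here is a deliberately simplified sketch of recursive binary segmentation driven by a maximal two-sample t-statistic. It is not the DNAcopy algorithm: it tests a single split rather than a circular arc, and it compares the statistic with a fixed threshold instead of a permutation-based p-value. The function names, the threshold of 5.0, and the toy log2 ratios are assumptions.

```python
import math


def best_split(values):
    """Return (index, |t|) of the split that maximizes the two-sample t-statistic."""
    n = len(values)
    best_index, best_t = None, 0.0
    for i in range(2, n - 1):  # keep at least two points on each side
        left, right = values[:i], values[i:]
        mean_l = sum(left) / len(left)
        mean_r = sum(right) / len(right)
        ss = (sum((v - mean_l) ** 2 for v in left)
              + sum((v - mean_r) ** 2 for v in right))
        pooled_sd = math.sqrt(ss / (n - 2))
        if pooled_sd == 0.0:
            t = math.inf if mean_l != mean_r else 0.0
        else:
            t = abs(mean_l - mean_r) / (
                pooled_sd * math.sqrt(1 / len(left) + 1 / len(right)))
        if t > best_t:
            best_index, best_t = i, t
    return best_index, best_t


def segment(values, threshold=5.0, offset=0):
    """Recursively split until no candidate change-point exceeds the threshold."""
    index, t = best_split(values)
    if index is None or t < threshold:
        return [(offset, offset + len(values), sum(values) / len(values))]
    return (segment(values[:index], threshold, offset)
            + segment(values[index:], threshold, offset + index))


# Toy profile: six copy-neutral bins followed by six bins with a gain.
log2_ratios = [0.0, 0.05, -0.05, 0.02, -0.02, 0.0,
               1.0, 1.05, 0.95, 1.02, 0.98, 1.0]
segments = segment(log2_ratios)
```

Each returned tuple is `(start_index, end_index, segment_mean)` with a half-open index range. Residual GC waviness in the input inflates the t-statistics, which is why the quality of the upstream normalization matters so much here.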

Quantitative Comparison of Segmentation Methods

Table 1: Comparison of Read-Count Based Segmentation Methods and Associated Tools

| Method/Package | Core Algorithm | Primary Input | Key Strength | Typical Use Case | Statistical Test |

|---|---|---|---|---|---|

| DNAcopy (CBS) | Circular Binary Segmentation | Normalized Log R Ratios | High sensitivity for focal CNVs; robust to outliers. | Discovery of both broad and focal CNVs in exome/targeted sequencing. | Maximized t-test |

| PICNIC | Hidden Markov Model (HMM) | Allele-specific read counts | Integrates allelic ratio with total read depth. | Tumor ploidy and aberration profiling in whole-genome sequencing. | Bayesian inference |

| CNVkit | CBS & HMM hybrids | Targeted & off-target reads | Reference copy number normalization; panel sequencing. | Clinical targeted panels and exome sequencing. | Circular Binary Segmentation |

| QDNAseq | CBS (via DNAcopy) on corrected bin counts | Binned read counts from .bam | Corrects for GC and mappability biases in WGS. | Whole-genome sequencing from single cells or bulk tissue. | Maximized t-test (CBS) |

Detailed Experimental Protocols

Protocol 3.1: CNV Segmentation Using DNAcopy on GC-Normalized WES Data

Objective: To identify somatic copy number alterations from tumor-normal paired whole-exome sequencing data after GC-bias normalization.

I. Pre-processing & Input Data Generation

- Alignment & BAM Generation: Align paired-end FASTQ reads to a reference genome (e.g., GRCh38) using BWA-MEM. Process with GATK best practices for duplicate marking and base quality recalibration.

- GC-Bias Normalization: Using tools from the thesis framework (e.g., `gcNormSeq`), generate bias-corrected read counts. An alternative standard method is:

  - Use `mosdepth` to calculate read depth in fixed-size bins (e.g., 10 kb) or exonic targets.

  - Apply a LOESS or polynomial regression model to correct the relationship between read depth and GC content.

- Calculate Log₂ Ratios: For each bin/target, compute log₂(Tumor Read Count / Normal Read Count). Subtract the median log₂ ratio so that the assumed diploid baseline is centered at 0.
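The log₂ ratio calculation can be sketched as follows. The pseudocount of 0.5, added to avoid division by zero and the logarithm of zero, is an assumption of this example and not part of the protocol. The function name and the toy counts are likewise illustrative.

```python
import math
from statistics import median


def centered_log2_ratios(tumor, normal, pseudocount=0.5):
    """log2(tumor / normal) per bin, shifted so the median ratio is 0."""
    ratios = [math.log2((t + pseudocount) / (n + pseudocount))
              for t, n in zip(tumor, normal)]
    center = median(ratios)  # presumed diploid baseline
    return [r - center for r in ratios]


# Toy GC-corrected counts: bin 3 is doubled in the tumor, bin 4 is halved.
tumor = [100, 100, 200, 50, 100]
normal = [100, 100, 100, 100, 100]
log2_ratios = centered_log2_ratios(tumor, normal)
```

Median centering assumes that most of the genome is copy-neutral. In highly aneuploid tumors the median may sit on a gained or lost state, so the baseline should be checked against ploidy estimates where they are available.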

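II. Segmentation with DNAcopy R Package

- Run CBS: Build a `CNA` object from the log₂ ratios together with their chromosome and position vectors, optionally apply `smooth.CNA` to dampen single-point outliers, and call `segment` to obtain the segment boundaries and segment means.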

III. Post-processing & Interpretation

- Calling Aberrations: Define thresholds (e.g., log₂ ratio > 0.3 as gain, < -0.3 as loss). More sophisticated calling may consider segment mean and width.

- Annotation: Annotate segments with gene information using tools like `bedtools intersect` or R/Bioconductor packages (`GenomicRanges`).

- Visual Validation: Inspect segmented data in genome browsers (e.g., IGV).
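The threshold-based calling rule described above is simple enough to sketch directly. The ±0.3 cut-offs come from the example in the text. The function name, the tuple layout, and the segment values are illustrative assumptions.

```python
def call_segment(mean_log2, gain_threshold=0.3, loss_threshold=-0.3):
    """Classify a segment by its mean log2 ratio."""
    if mean_log2 > gain_threshold:
        return "gain"
    if mean_log2 < loss_threshold:
        return "loss"
    return "neutral"


# (chrom, start, end, segment mean log2 ratio): hypothetical CBS output
segments = [
    ("chr1", 0, 5_000_000, 0.02),
    ("chr1", 5_000_000, 7_500_000, 0.58),
    ("chr2", 0, 3_000_000, -0.45),
]
calls = [(chrom, start, end, call_segment(mean))
         for chrom, start, end, mean in segments]
```

Fixed thresholds ignore tumor purity: a single-copy gain in a 50% pure sample produces a log₂ ratio of about 0.32, which is barely above the cut-off, so thresholds are usually tuned to the expected purity range.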

Protocol 3.2: Benchmarking Segmentation Performance Using Simulated Data

Objective: To evaluate the sensitivity and specificity of CBS under varying levels of GC bias residual noise.

Procedure:

- Generate Ground Truth: Use a simulator (e.g., `ART` for reads, `CNVSim` for profiles) to create in-silico tumor genomes with known CNVs across a range of lengths and amplitudes.

- Introduce Controlled GC Bias: Artificially impose a non-linear GC-depth relationship on the simulated read counts.

- Apply Normalization: Correct the biased counts using the thesis GC normalization algorithm and a standard method (e.g., from `CNVkit`).

- Run Segmentation: Process both normalized datasets through DNAcopy with identical parameters (alpha=0.01, min.width=5).

- Calculate Metrics: Compare called segments to the ground truth using metrics in Table 2.

Table 2: Performance Metrics for Segmentation Benchmarking

| Metric | Formula | Interpretation |

|---|---|---|

| Precision (Positive Predictive Value) | TP / (TP + FP) | Proportion of called CNVs that are true. |

| Recall (Sensitivity) | TP / (TP + FN) | Proportion of true CNVs that are detected. |

| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) | Harmonic mean of precision and recall. |

| Breakpoint Accuracy | Mean absolute distance between predicted and true breakpoints (bp) | Average error in locating segment boundaries. |
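The metrics in Table 2 translate directly into code. In this sketch the breakpoint metric matches each true breakpoint to its nearest predicted breakpoint. That matching rule is an assumption, since the table does not specify one, and the function names and numbers are illustrative.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 from true-positive, false-positive and false-negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


def breakpoint_accuracy(predicted, truth):
    """Mean absolute distance (bp) from each true breakpoint to the nearest predicted one."""
    return sum(min(abs(p - t) for p in predicted) for t in truth) / len(truth)


precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=2)
mean_error_bp = breakpoint_accuracy(predicted=[1000, 5200], truth=[1100, 5000])
```

How a called segment is counted as a true positive (for example, a minimum reciprocal overlap with a ground-truth CNV) has to be fixed before these numbers are comparable between methods.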

Visual Workflows and Diagrams

Title: Workflow for CNV Analysis from Sequencing Data

Title: CBS Algorithm Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for CNV Analysis Experiments

| Item/Category | Specific Example(s) | Function in Protocol |

|---|---|---|

| NGS Library Prep Kit | Illumina TruSeq DNA PCR-Free, Kapa HyperPrep | Preparation of sequencing libraries from genomic DNA for whole-genome or exome analysis. |

| Target Enrichment Kit | Illumina Nextera Flex for Enrichment, Agilent SureSelect XT | Capture of exonic or specific genomic regions for targeted sequencing. |

| Alignment Software | BWA-MEM, Bowtie2 | Maps sequencing reads to a reference genome to produce BAM files. |

| GC Normalization Tool | Custom Thesis Algorithm, CNVkit fix, GATK CalculateTargetCoverage with GC correction. | Corrects systematic bias in read counts associated with GC content, critical for accurate log₂ ratios. |

| Segmentation Engine | DNAcopy (CBS) in R, cnvlib.segment in CNVkit (Python) | Core algorithm that identifies discrete copy number change-points from continuous data. |

| Genomic Annotation Database | UCSC RefGene, ENSEMBL, dbVar | Provides gene coordinates and known CNV regions for segment annotation and interpretation. |

| Visualization Software | IGV, R/ggplot2 for custom plots, Gviz Bioconductor package | Enables visual inspection of read depth and segmented data across the genome. |

| Reference Genome | GRCh38 (hg38), GRCh37 (hg19) | Standardized genomic coordinate system for alignment and reporting. |

GC bias, the variation in sequencing coverage due to guanine-cytosine (GC) content, remains a critical challenge in next-generation sequencing (NGS) data analysis. Within the broader thesis on normalization algorithms, this document details emerging, sophisticated computational approaches that move beyond traditional linear or LOESS-based corrections. Machine Learning (ML) and Kernel-based methods offer powerful, data-adaptive frameworks to model complex, non-linear GC-bias effects, leading to more accurate downstream analyses in variant calling, copy number variation (CNV) detection, and differential expression.

Core Methodologies: Application Notes

Machine Learning-Based Normalization

ML models treat GC bias correction as a supervised regression problem, where the input features (e.g., GC percentage, mappability, genomic position) predict the expected read depth.

Key Algorithms & Applications:

- Random Forest / Gradient Boosting (XGBoost, LightGBM): Used for their robustness to outliers and ability to capture complex feature interactions. Effective for whole-genome sequencing (WGS) CNV normalization.

- Deep Neural Networks (DNNs): Multi-layer perceptrons can model highly non-linear relationships. Applied in normalizing targeted panel and exome sequencing data where bias patterns are region-specific.

- Convolutional Neural Networks (CNNs): Utilize spatial genomic information (e.g., local GC context across bins) for bias prediction in high-resolution datasets.

Protocol 2.1.1: Random Forest Regression for WGS CNV Normalization

- Input Data Preparation: Bin the reference genome into fixed-width bins (e.g., 1 kbp). Calculate per-bin: a) normalized read count (RPM/CPM), b) GC content percentage, c) mappability score, d) regional genomic feature flags.

- Feature & Target Definition: Assemble features (GC%, mappability, etc.) into matrix `X`. The target variable `y` is the log2-transformed read count.

- Model Training: On a set of control samples (e.g., pooled normals), train a Random Forest regressor to predict `y` from `X`. Optimize hyperparameters (tree depth, number of estimators) via cross-validation.

- Bias Prediction & Correction: For each sample (test or control), generate predicted log2 read counts (`y_pred`) using the trained model. Because `y` is on the log2 scale, compute corrected read counts as `corrected_count = observed_count / 2^y_pred` (equivalently, subtract `y_pred` from the observed log2 count).

- Output: GC-bias normalized read count profile for each sample, ready for segmentation and CNV calling.
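The correction step is where the scale of the target matters: a model trained on log2 counts must be back-transformed with base 2, not with the natural exponential. The sketch below isolates that arithmetic. The predicted values are typed in by hand as stand-ins for the output of a trained regressor, and the function name is an assumption.

```python
import math


def correct_counts(observed_counts, predicted_log2):
    """Divide each observed count by the model's expected count (2 ** y_pred).

    observed_counts must be in the same units as the counts the model
    was trained on (e.g., CPM if the target was log2(CPM)).
    """
    return [obs / (2.0 ** pred) for obs, pred in zip(observed_counts, predicted_log2)]


observed = [64.0, 128.0, 48.0]
y_pred = [6.0, 7.0, 5.0]  # stand-in for model predictions on the log2 scale
corrected = correct_counts(observed, y_pred)

# The same correction expressed on the log2 scale is a simple subtraction.
corrected_log2 = [math.log2(o) - p for o, p in zip(observed, y_pred)]
```

A corrected value of 1.0 means the bin has exactly the coverage the model expects from its sequence features. Values above or below 1.0 are the candidate copy number signal.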

Kernel-Based Normalization

Kernel methods, like Support Vector Regression (SVR) and Gaussian Process Regression (GPR), operate in high-dimensional feature spaces without explicit coordinate transformation, ideal for modeling smooth, continuous bias functions.

Key Algorithms & Applications:

- Gaussian Process Regression (GPR): Provides a probabilistic framework, delivering both a bias estimate and uncertainty (variance). Highly effective for modeling smooth coverage trends across GC content in RNA-seq and ChIP-seq data normalization.

- Support Vector Regression (SVR): Seeks to find a function that deviates from observed read depths by no more than a specified margin (ε), offering robustness.

Protocol 2.2.1: Gaussian Process Regression for RNA-seq GC Bias Correction

- Data Collation: For each gene or transcript, compute: a) log-transformed reads per kilobase million (RPKM/TPM), b) average GC content of its exonic regions.

- Kernel Selection: Define a covariance kernel function. A common choice is the Radial Basis Function (RBF) kernel: `k(GC_i, GC_j) = exp(-||GC_i - GC_j||^2 / (2 * l^2))`, where `l` is the length-scale parameter.

- Model Fitting: Using a set of stable control genes or all genes, fit a GPR model that describes the relationship `log(RPKM) = f(GC) + noise`, where `f` is drawn from the Gaussian process.

- Bias Estimation: The GPR model's predictive mean represents the systematic bias associated with a given GC value.

- Normalization: Subtract the predicted bias (log-scale), centered on its overall mean so that the average expression level is preserved, from the observed log(RPKM) values for all genes. Exponentiate to return to linear scale.

- Output: GC-normalized gene expression values with associated confidence intervals.
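The protocol above can be sketched without any external library for a small number of genes. This is a minimal illustration under several assumptions: the hyperparameters (`length_scale`, `noise_var`) are fixed by hand rather than optimized, only the predictive mean is computed (no variance), the linear system is solved with plain Gaussian elimination, and the predicted bias is centered on the mean log expression so that the overall expression level is preserved. In practice a library such as GPy or scikit-learn would be used.

```python
import math


def rbf(a, b, length_scale):
    """RBF kernel k(a, b) = exp(-(a - b)^2 / (2 * l^2))."""
    return math.exp(-((a - b) ** 2) / (2.0 * length_scale ** 2))


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def gp_predictive_mean(gc_train, y_train, gc_query, length_scale=10.0, noise_var=0.05):
    """GP regression predictive mean with a constant prior mean equal to mean(y_train)."""
    mean_y = sum(y_train) / len(y_train)
    K = [[rbf(a, b, length_scale) + (noise_var if i == j else 0.0)
          for j, b in enumerate(gc_train)] for i, a in enumerate(gc_train)]
    alpha = solve(K, [y - mean_y for y in y_train])
    return [mean_y + sum(rbf(q, g, length_scale) * a for g, a in zip(gc_train, alpha))
            for q in gc_query]


# Synthetic genes whose log expression depends only on GC content.
gc = [30, 35, 40, 45, 50, 55, 60, 65, 70]
log_expr = [5 - 0.002 * (g - 50) ** 2 for g in gc]
bias = gp_predictive_mean(gc, log_expr, gc)
mean_level = sum(log_expr) / len(log_expr)
corrected = [y - (b - mean_level) for y, b in zip(log_expr, bias)]
```

Fitting on all genes, as done here, risks absorbing genuine biology that happens to correlate with GC content. Restricting the fit to stable control genes, as the protocol suggests, is the more conservative choice.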

Table 1: Performance Comparison of Normalization Methods on Simulated WGS Data

| Method | Mean Absolute Error (Read Depth) | CNV Detection F1-Score | Runtime (min per sample) | Key Assumption |

|---|---|---|---|---|

| Linear Regression | 0.85 | 0.72 | < 1 | Linear GC effect |

| LOESS | 0.41 | 0.85 | 2 | Local linearity |

| Random Forest | 0.22 | 0.93 | 15 | Data-driven, non-linear |

| Gaussian Process | 0.19 | 0.94 | 25 | Smooth, continuous function |

Table 2: Typical Reagent & Computational Resource Requirements

| Item / Resource | Specification / Function | Example Product / Tool |

|---|---|---|

| Reference Standard | High-quality, GC-balanced control DNA | Coriell Institute NA12878 |

| Sequencing Kit | Library prep with uniform GC representation | KAPA HyperPlus Kit |

| PCR Reagent | Polymerase with low GC bias | KAPA HiFi HotStart ReadyMix |

| Compute Hardware | High RAM for model training | 64+ GB RAM, Multi-core CPU |

| ML Framework | Library for model implementation | scikit-learn, TensorFlow, GPy |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for GC-Bias Normalization Experiments

| Item | Function in Context | Notes |

|---|---|---|

| GC-Balanced Control Spike-Ins | External standards added to samples to monitor and model GC bias independently of biological content. | Example: ERCC RNA Spike-In Mixes (for RNA-seq). |

| Cell Line DNA Reference Standards | Genomically characterized, stable source of DNA used to train and validate normalization models across runs. | Example: Coriell Institute GM12878. |

| Low-Bias PCR Enzymes | Polymerases designed for uniform amplification across varying GC content, reducing upstream technical bias. | Critical for library preparation prior to sequencing. |

| Targeted Capture Probes | Probes designed with balanced GC content to minimize capture bias in exome/panel sequencing. | Improves uniformity, simplifying downstream normalization. |

| Benchmarking Variant Sets | Curated sets of known variants (e.g., GIAB) to quantitatively assess normalization performance on variant calling. | Gold standard for validation. |

Visualization of Workflows & Relationships

Title: Machine Learning-Based GC Bias Normalization Workflow

Title: Kernel Method Concept for Non-Linear Bias Correction

Application Notes

Within the context of a broader thesis on GC bias normalization algorithms for sequencing data research, effective normalization is the critical bridge between raw genomic signals and biologically interpretable results. GC bias, the variation in sequencing coverage correlated with guanine-cytosine (GC) content, is a pervasive technical artifact that confounds copy number variant (CNV) detection, variant calling, and differential expression analysis. This note details the application and protocols for normalization within three essential toolkits: GATK (for short variants and CNVs), CNVkit (for targeted and whole-genome CNV analysis), and Bioconductor packages (for high-throughput genomic analysis in R).

The core principle across platforms involves modeling the relationship between observed read counts/depths and GC content, then correcting the data based on this model. The algorithms and implementation, however, are tailored to specific data types and analytical goals.

Table 1: Normalization Toolkit Comparison

| Toolkit | Primary Use Case | GC Normalization Method | Key Input | Key Output |

|---|---|---|---|---|

| GATK (4.0+) | Germline CNV, Pre-variant calling | Explicit GC correction from per-interval GC annotations (AnnotateIntervals), optionally followed by panel-of-normals denoising | BAM files, Reference genome | Standardized and denoised copy ratios |

| CNVkit | Targeted/WGS CNV profiling | Rolling-median trend of bin-level log2 coverage vs. GC, subtracted from each bin | BAM files, Target/antitarget BED | Normalized log2 copy ratios |

| Bioconductor (e.g., edgeR, DESeq2) | RNA-seq differential expression | Global scaling (e.g., TMM) and within-lane GC correction | Count matrix, GC content per gene | Normalized counts for DE analysis |

Experimental Protocols

Protocol 1: GC Bias Normalization in GATK for Germline CNV Analysis

Objective: To generate GC-bias-corrected and denoised copy ratio estimates from whole-genome sequencing data for germline CNV detection.

- Prerequisites: Indexed BAM files and reference genome (hg38/hg19). Install GATK (v4.3+).

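- Collect Read Counts: Run `CollectReadCounts` to generate counts per fixed-size bin (e.g., 1000 bp).

- Annotate Intervals with GC Content: Run `AnnotateIntervals` with the reference FASTA (`-R`) and the bin interval list (`-L`); GC content is annotated for every interval by default. `CollectGcBiasMetrics` (Picard) can additionally be run to produce a GC-bias profile for QC.

- Correct GC Bias and Denoise: Use `DenoiseReadCounts`, supplying the annotated intervals via `--annotated-intervals`. Explicit GC correction is applied during standardization, so the `--standardized-copy-ratios` output is GC-corrected. When a panel of normals is supplied via `--count-panel-of-normals`, the `--denoised-copy-ratios` output is additionally denoised against that panel. Note that `DenoiseReadCounts` belongs to GATK's copy-ratio (somatic) workflow; the cohort-based germline caller, `GermlineCNVCaller`, accepts the same annotated intervals.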

Protocol 2: GC Correction in CNVkit for Targeted Panel Data

Objective: To normalize on-target and off-target reads from a targeted sequencing panel for robust copy number estimation.

- Prerequisites: BAM file(s) and BED file of target regions. Install CNVkit via conda/pip.

- Compute Coverage: Run `cnvkit.py coverage` to get raw coverage for targets and antitargets.

- Build Reference (Normal Pool): Combine coverage files from multiple normal samples with `cnvkit.py reference`. This step records the per-bin GC content and expected coverage used to model the GC bias.

- Normalize Sample: Correct the sample's coverage against the reference with `cnvkit.py fix`, which includes GC bias correction based on a rolling-median trend across bins of similar GC content.

The `.cnr` file contains GC-normalized log2 ratios ready for segmentation.

Protocol 3: Within-Lane GC Normalization for RNA-seq in Bioconductor

Objective: To adjust gene-level counts in an RNA-seq experiment for biases related to gene GC content.

- Prerequisites: R with Bioconductor packages `edgeR` and `EDASeq`. A matrix of read counts and a vector of gene GC content.

- Load Data & Create Data Objects.

- Perform Within-Lane GC Normalization using `EDASeq` (function `withinLaneNormalization`).

- Proceed with Standard Differential Expression analysis on normalized counts using `edgeR`.

Visualizations

Title: GATK Germline CNV Normalization Workflow

Title: CNVkit Reference-Based Normalization Process

Title: Bioconductor RNA-seq GC Normalization Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in GC Bias Normalization |

|---|---|

| Reference Genome FASTA | Essential for calculating the theoretical GC content of genomic intervals (GATK AnnotateIntervals, CNVkit reference). |

| Panel of Normal (PON) Samples | A set of BAM files from known normal samples. Used in GATK to model systematic noise, including residual GC bias, improving correction. |

| Target and Antitarget BED Files | Defines regions of interest and control regions for hybrid-capture sequencing. Critical for CNVkit's paired coverage calculation and normalization. |

| Interval List File | A GATK-specific file defining genomic regions for binning. Required for CollectReadCounts to ensure consistent genomic partitioning. |

| Gene Annotations (GTF/GFF) | Provides genomic coordinates and metadata for genes. Used to calculate per-gene GC content for RNA-seq GC normalization in Bioconductor. |

| High-Quality Normal Sample Cohort | A set of control samples processed identically to cases. Building a robust CNVkit reference.cnn or GATK PON is dependent on this cohort's quality and size. |

Integrating Whole Genome Sequencing (WGS), Whole Exome Sequencing (WES), and RNA-Seq pipelines is critical for comprehensive genomic and transcriptomic analysis in modern therapeutics development. This integration is fundamentally challenged by technical artifacts, most notably GC bias—the variation in sequencing coverage correlated with the guanine-cytosine (GC) content of genomic regions. GC bias can confound variant calling, copy number estimation, and gene expression quantification, leading to inaccurate biological interpretations. This guide provides detailed protocols for executing and integrating these three core sequencing workflows, with explicit steps for implementing and evaluating GC bias normalization algorithms, a central thesis of contemporary sequencing data research.

Foundational Principles & GC Bias Impact

GC bias arises during library preparation (PCR amplification) and sequencing. Its severity varies by platform, library kit, and genomic context.

Table 1: Impact of GC Bias Across Sequencing Applications

| Application | Primary Impact of GC Bias | Consequence for Analysis |

|---|---|---|

| WGS | Non-uniform coverage across genomes. | False positive/negative variant calls; inaccuracies in copy number variation (CNV) detection. |

| WES | Exacerbated in capture-based enrichment. | Inconsistent depth across exons; reduced sensitivity for variant detection in high/low GC regions. |

| RNA-Seq | Correlated with gene expression level estimates. | Differential expression false positives; biased transcript abundance estimates. |

Integrated Step-by-Step Protocols

Universal Pre-Processing & QC Workflow

All three pipelines begin with raw sequencing read management and quality control.

Protocol 1.1: Raw Data QC and Adapter Trimming

- Tool: `FastQC` for quality control; `Trim Galore` (wrapper for `Cutadapt` and `FastQC`) for trimming.

- Methodology:

  - Execute `fastqc *.fastq.gz -o ./qc_raw/` on all raw FASTQ files.

  - Trim adapters and low-quality bases using: `trim_galore --paired --gzip --output_dir ./trimmed/ read1.fq.gz read2.fq.gz`.

  - Run `FastQC` again on trimmed files to confirm improvement.

- GC Bias Check: Generate per-sequence GC content plots from `FastQC`. A distribution that is skewed or shifted away from the theoretical genomic average suggests GC bias (or contamination) and should be followed up after alignment.
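The per-sequence GC check can be reproduced in a few lines to make clear what the plot summarizes. This is a sketch with assumed names (`read_gc_histogram`) and toy reads. It bins reads by GC percentage in 10-point steps and ignores ambiguous bases.

```python
from collections import Counter


def read_gc_histogram(reads, bin_width=10):
    """Count reads per GC% bin; keys are the lower edge of each bin."""
    hist = Counter()
    for read in reads:
        seq = read.upper()
        called = sum(seq.count(base) for base in "ACGT")
        if called == 0:
            continue  # read consists only of ambiguous bases
        gc = 100.0 * (seq.count("G") + seq.count("C")) / called
        # Reads with exactly 100% GC go into the top bin.
        lower_edge = min(int(gc // bin_width) * bin_width, 100 - bin_width)
        hist[lower_edge] += 1
    return dict(sorted(hist.items()))


reads = ["ATATATAT", "GCGCGCGC", "ATGCATGC", "AATTGGCC", "NNNNNNNN"]
histogram = read_gc_histogram(reads)
```

Comparing this histogram with the one expected from the reference (for example, from reads simulated uniformly across the genome) shows whether the library over- or under-represents particular GC ranges.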

Diagram Title: Universal Pre-processing and QC Workflow

WGS-Specific Pipeline

Protocol 2.1: Alignment, Processing, and GC Normalization for CNV Calling

- Alignment: Align to reference genome (e.g., GRCh38) using `BWA-MEM`: `bwa mem -t 8 reference.fa trimmed_1.fq.gz trimmed_2.fq.gz | samtools sort -o aligned.bam`.

- Mark Duplicates: Use `GATK MarkDuplicates` to flag PCR duplicates.

- GC Bias Normalization for CNV:

  - Tool: `CNVkit` for correction; `GATK CollectGcBiasMetrics` for measuring the bias.

  - Methodology: Using CNVkit as an example:

    - Identify the sequence-accessible regions of the reference: `cnvkit.py access reference.fa -o access.bed`.

    - Choose bin sizes and generate the bin BED files: `cnvkit.py autobin aligned.bam -m wgs -g access.bed`.

    - Calculate coverage in those bins with `cnvkit.py coverage`.

    - Build a reference profile from control samples, which also records per-bin GC content from the FASTA: `cnvkit.py reference *.targetcoverage.cnn --fasta reference.fa -o reference.cnn`.

    - Normalize sample coverage against the reference, which includes GC correction: `cnvkit.py fix sample.targetcoverage.cnn sample.antitargetcoverage.cnn reference.cnn -o sample.cnr`.

  - GC correction is enabled by default in `fix` and can be switched off with `--no-gc`; bin-level log2 coverage is adjusted by a trend fitted across bins of similar GC content.

WES-Specific Pipeline

Protocol 3.1: Post-Alignment Processing and Capture Efficiency Bias Mitigation

- Alignment: Same as WGS (Protocol 2.1).

- Base Quality Score Recalibration (BQSR): Essential for WES. Uses `GATK BaseRecalibrator` with known variant sites to correct systematic errors.

- GC Bias Assessment in Target Regions:

  - Use `GATK CollectHsMetrics` to calculate `GC_DROPOUT` and `AT_DROPOUT` metrics, quantifying coverage loss in extreme GC regions.

  - Normalization Approach: While explicit GC normalization is less common in WES variant calling (reliance on BQSR and duplicate marking), it is crucial for CNV analysis. Tools like `CNVkit` (as above) or `ExomeDepth` incorporate GC correction in their models when calculating exon-level copy number.

RNA-Seq-Specific Pipeline

Protocol 4.1: Transcriptomic Alignment, Quantification, and Expression Bias Correction

- Alignment: Use a splice-aware aligner (`STAR` recommended): `STAR --genomeDir /star_index --readFilesIn trimmed_1.fq.gz trimmed_2.fq.gz --readFilesCommand zcat --runThreadN 8 --outSAMtype BAM SortedByCoordinate --outFileNamePrefix ./star_out/`. The `--readFilesCommand zcat` option is needed because the input files are gzip-compressed.

- Gene/Transcript Quantification: Use `featureCounts` (gene-level) or `Salmon`/`kallisto` (transcript-level). Example with `featureCounts`: `featureCounts -p -a annotation.gtf -o counts.txt star_out/Aligned.sortedByCoord.out.bam`.

- GC Bias Normalization in Differential Expression:

  - Tool: R/Bioconductor packages (`DESeq2`, `edgeR`), combined with a GC-aware normalization package such as `cqn` or `EDASeq`.

  - Methodology in DESeq2:

    - The core `DESeq2` model does not explicitly correct for GC content.

    - Key Step: Gene GC content is a per-gene property, so it cannot be entered in the design formula, which describes per-sample variables. GC effects are instead handled through gene-by-sample normalization factors.

    - Calculate GC content per gene using the reference genome and annotation.

    - Estimate sample-specific GC effects with `cqn` or `EDASeq` and supply the resulting gene-by-sample matrix to DESeq2 via `normalizationFactors(dds)`, keeping the design formula as `~ condition`.

    - This controls for the sample-specific effect of GC content on read count, isolating the biological effect of the condition.

Diagram Title: Integrated Multi-Omics Workflow with GC Bias Normalization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Integrated Sequencing Pipelines

| Item / Solution | Function / Purpose | Application Context |
|---|---|---|
| KAPA HyperPrep/HyperPlus Kit | Library construction with optimized chemistry to reduce GC bias during PCR amplification. | WGS, WES, RNA-Seq (for non-stranded protocols). |
| IDT xGen Hybridization Capture Probes | High-performance probes for exome enrichment; uniform coverage improves GC-bias mitigation. | WES only. |
| Illumina NovaSeq 6000 S-Prime Reagent Kit | Production-scale sequencing with balanced chemistry across GC-rich and AT-rich regions. | All applications (large scale). |
| RNase H / DNase I | Degrade RNA or DNA contaminants in samples to ensure pure template input. | All applications (sample prep). |
| ERCC RNA Spike-In Mix | Known concentrations of exogenous RNA transcripts used to monitor technical variance, including GC-related effects. | RNA-Seq (quality control). |
| PhiX Control v3 | Balanced genomic library used for run quality control and calibration on Illumina platforms. | All applications (sequencing run QC). |
| GATK Best Practices Bundle | Curated set of known variant sites (e.g., dbsnp, Mills indels) for BQSR and variant evaluation. | Primarily WGS/WES. |
| GC Bias Correction Algorithms | Software tools (CNVkit, EDASeq, cqn R package) implementing LOESS regression or conditional quantile normalization. | Applied post-alignment in all pipelines. |

Data Presentation: Comparative Pipeline Metrics

Table 3: Typical Output Metrics and GC Bias Indicators by Pipeline

| Metric | WGS (Optimal Value) | WES (Optimal Value) | RNA-Seq (Optimal Value) | Tool for Measurement |
|---|---|---|---|---|
| Mean Coverage | 30-50x | 100-200x | N/A | Mosdepth, GATK |
| Uniformity of Coverage | >95% of bases at ≥0.2x mean | >80% of targets at ≥20x | N/A | GATK CollectHsMetrics |
| Duplicate Rate | <10-20% | <10-20% | Varies by protocol | GATK MarkDuplicates |
| GC Dropout Metric | Low value | GC_DROPOUT < 5% | N/A | GATK CollectHsMetrics |
| Gene Body 3'/5' Bias | N/A | N/A | Ratio ~1.0 | RSeQC |
| Key GC-Bias Diagnostic | Visual flatness of coverage in CNV profile | Correlation of coverage with target GC% | Correlation of residuals with gene GC% | CNVkit, IGV, DESeq2 |

Solving Common GC Bias Problems: Optimization and Pitfall Avoidance

Effective GC bias correction is critical for accurate downstream analysis in genomics, transcriptomics, and epigenomics. Inadequate normalization propagates systematic errors and can lead to false biological conclusions, especially in differential expression, copy number variation detection, and biomarker discovery for drug development. This section outlines diagnostic signs of normalization failure and provides protocols for validation.

Key Signs of Normalization Failure

The following quantitative and qualitative indicators point to unsuccessful GC bias correction.

Table 1: Primary Signs of Failed GC Bias Normalization

| Sign | Description | Typical Metric/Visual Cue |
|---|---|---|
| Residual GC-Read Count Correlation | Significant correlation between GC content and read depth/coverage persists after normalization. | Absolute value of Pearson’s r > 0.1 in binned genomic regions. |
| Non-uniform M-A Plot Spread | Fan-shaped or systematic pattern in log-ratio (M) vs. average abundance (A) plots post-normalization. | Increasing variance with decreasing average read count. |
| Unexplained QC Metric Drift | Key quality control metrics (e.g., TMM factors, scaling factors) show extreme or bimodal distribution. | Scaling factors range > 4-fold or show clear batch dependency. |
| Failed Spiked-in Control Profiles | Measured abundances of exogenous controls (e.g., ERCC RNA spikes) deviate significantly from expected ratios. | > 2-fold deviation from expected log-ratio for controls. |
| Increased Technical Replicate Variance | Normalization increases rather than decreases distance between technical replicates in PCA. | Inter-replicate distance higher in normalized vs. raw data. |
| Biomarker Signal Loss | Known positive controls or validated biomarkers lose statistical significance post-normalization. | P-value for known true positive becomes non-significant (p > 0.05). |

Experimental Protocols for Diagnosis

Protocol 1: Assessing Residual GC-Read Depth Correlation

Objective: Quantify remaining systematic bias between genomic GC content and sequencing coverage after normalization.

Materials: Normalized coverage data (e.g., a per-bin read count matrix or BigWig track), reference genome FASTA.

Procedure:

- Divide the genome into non-overlapping bins (e.g., 1 kb for WGS, gene bodies for RNA-seq).

- Calculate the mean GC percentage for each bin from the reference genome.

- Extract the mean normalized read depth/coverage for each corresponding bin.

- Compute Pearson and Spearman correlation coefficients between bin GC% and bin read depth.

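- Generate a scatter plot: GC% (x-axis) vs. normalized read depth (y-axis).

Interpretation: A successful normalization minimizes correlation. A significant correlation (|r| > 0.1, p < 0.05) indicates residual bias. With thousands of bins, even negligible correlations reach p < 0.05, so the magnitude of r is the more informative criterion.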
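The correlation step of Protocol 1 can be sketched in plain Python. This is a minimal illustration that assumes per-bin GC fractions and normalized depths are already loaded as lists; the toy values and the helper names (`pearson`, `ranks`, `spearman`) are hypothetical, and p-values are omitted, so a statistics library would likely replace these helpers in practice.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical per-bin values after normalization.
bin_gc = [0.30, 0.35, 0.40, 0.45, 0.50, 0.55, 0.60, 0.65]
bin_depth = [29.0, 31.5, 30.2, 29.8, 30.9, 30.1, 29.5, 30.6]

r = pearson(bin_gc, bin_depth)
rho = spearman(bin_gc, bin_depth)
residual_bias_flag = abs(r) > 0.1  # threshold taken from the interpretation above
print(f"Pearson r = {r:.3f}, Spearman rho = {rho:.3f}, flagged = {residual_bias_flag}")
```

Reporting both coefficients is useful because a curved residual trend can leave Pearson's r small while the scatter plot still shows structure.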

Protocol 2: M-A Plot Analysis for Spread Homogeneity

Objective: Visualize the dependence of variance on abundance after normalization.

Materials: Normalized count matrix for two conditions or samples.

Procedure:

- Calculate the log2 fold-change (M) and the mean log2 abundance (A) for each feature (gene, region) between two samples or between a sample and a reference:

M = log2(Count_sample / Count_reference)

A = (1/2) * log2(Count_sample * Count_reference)

- Plot M (y-axis) against A (x-axis).

- Apply a local regression (LOESS) to visualize the trend of the spread.

Interpretation: Successful normalization yields a cloud of points with consistent variance across all expression levels. A fan-shaped pattern (increasing variance at low A) indicates inadequate correction for bias dependent on abundance.
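The M and A definitions in Protocol 2 translate directly into code. The sketch below assumes normalized counts for two samples are available as lists; the pseudocount of 0.5 (to avoid taking the log of zero) and the toy counts are assumptions, not part of the protocol. In place of the LOESS trend, it uses a simpler stand-in: comparing the standard deviation of M between the lower-A and upper-A halves of the features.

```python
import math
import statistics


def ma_values(sample_counts, reference_counts, pseudocount=0.5):
    """Return per-feature (M, A) lists; the pseudocount avoids log2(0)."""
    m_vals, a_vals = [], []
    for s, r in zip(sample_counts, reference_counts):
        s, r = s + pseudocount, r + pseudocount
        m_vals.append(math.log2(s / r))
        a_vals.append(0.5 * math.log2(s * r))
    return m_vals, a_vals


def spread_by_abundance(m_vals, a_vals):
    """Standard deviation of M in the lower-A and upper-A halves of the data."""
    paired = sorted(zip(a_vals, m_vals))
    half = len(paired) // 2
    low = [m for _, m in paired[:half]]
    high = [m for _, m in paired[half:]]
    return statistics.pstdev(low), statistics.pstdev(high)


# Hypothetical normalized counts for eight features in two samples.
sample = [3, 10, 120, 900, 5, 2400, 40, 15]
reference = [6, 8, 110, 950, 2, 2300, 44, 9]

m_vals, a_vals = ma_values(sample, reference)
sd_low, sd_high = spread_by_abundance(m_vals, a_vals)
print(f"SD of M at low A: {sd_low:.2f}; at high A: {sd_high:.2f}")
```

A much larger spread in the low-A half than in the high-A half is the numeric counterpart of the fan shape described above.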

Protocol 3: Validation Using Spiked-in Controls

Objective: Use exogenous controls with known concentrations to audit normalization accuracy.

Materials: Sequencing data with spiked-in synthetic controls (e.g., ERCC RNA Spike-In Mix).

Procedure:

- Align reads to a combined reference (organism + spike-in sequences).

- Quantify reads aligning uniquely to each spike-in control.

- Normalize the entire dataset (including spikes) using the candidate GC bias algorithm.

- For each spike-in transcript, plot the observed log2(normalized count) against the expected log2(concentration).

- Fit a linear model and calculate the R² and slope.

Interpretation: Accurate normalization results in a strong linear fit (R² > 0.95, slope ~1) for spike-ins. Deviations indicate systematic distortion introduced by the correction.
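The final fitting step of Protocol 3 can be sketched as an ordinary least-squares fit on log2-transformed values. The spike-in concentrations and normalized counts below are invented for illustration, and the tolerance of 0.1 used to interpret "slope ~1" is an assumption rather than a value given in the protocol.

```python
import math


def fit_line(x, y):
    """Least-squares fit y = slope * x + intercept; returns (slope, intercept, R^2)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((b - (slope * a + intercept)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return slope, intercept, 1 - ss_res / ss_tot


# Hypothetical spike-in data: expected concentration and normalized read count.
expected_conc = [0.5, 2.0, 8.0, 32.0, 128.0, 512.0]
normalized_counts = [6, 22, 95, 370, 1500, 6100]

x = [math.log2(c) for c in expected_conc]
y = [math.log2(c) for c in normalized_counts]
slope, intercept, r2 = fit_line(x, y)

# R^2 threshold from the interpretation above; slope tolerance is an assumption.
passes = r2 > 0.95 and abs(slope - 1.0) < 0.1
print(f"slope = {slope:.3f}, R^2 = {r2:.4f}, passes = {passes}")
```

Running the same fit on raw and on normalized counts makes it easier to see whether the correction itself changed the slope.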

Visualization of Diagnostic Workflows

Diagnostic Flow for GC Bias Normalization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for GC Bias Normalization Experiments

| Item | Function & Application |
|---|---|
| ERCC RNA Spike-In Mix (Thermo Fisher) | Exogenous control set with known concentrations for auditing normalization performance and absolute quantification in RNA-seq. |
| PhiX Control Library (Illumina) | Small, well-characterized genome with balanced base composition, used for run quality control; can help monitor nucleotide composition-related biases. |
| UMI Adapter Kits (e.g., from Bioo Scientific) | Unique Molecular Identifiers for identifying and collapsing PCR duplicates, a critical pre-step before GC correction. |
| Genomic DNA Spike-Ins (e.g., from SIRV/EQUICOMB) | Defined synthetic genomes for assessing normalization in DNA sequencing assays like ChIP-seq or WGS. |
| High GC / Low GC Control Genomes | Bacterial or synthetic DNA with extreme GC content to run as process controls and stress-test normalization algorithms. |
| cDNA Synthesis Kits with GC Modulation | Reverse transcriptase kits optimized for uniform coverage across varying GC regions (e.g., SMARTer from Takara Bio). |
| Normalization Software | Open-source packages like cqn (Conditional Quantile Normalization) or gcCorrect, and proprietary tools within CLC Genomics or Partek Flow. |
| Benchmarking Datasets (e.g., SEQC/MAQC) | Publicly available gold-standard datasets with validated truth sets for systematically evaluating normalization performance. |

This section covers the parameter optimization phase of algorithmic GC bias correction in next-generation sequencing (NGS) data. GC bias, the non-uniform sequencing coverage correlated with the guanine-cytosine (GC) content of genomic regions, confounds copy number variation (CNV) detection, transcript abundance quantification, and variant calling in cancer genomics and biomarker discovery.

The core normalization algorithm, often a LOESS or polynomial regression of read depth against GC content, relies heavily on three interdependent parameters: the genomic window size, the GC content bin count, and the regression span (or bandwidth). Incorrect tuning leads to over-correction, under-correction, or new artifacts, compromising downstream analysis such as therapeutic target identification. The protocol in this section provides a systematic framework for empirically determining the optimal parameter set for a given sequencing library and study design.

Table 1: Common Parameter Ranges and Observed Effects on Normalization Performance

| Parameter | Typical Range | Effect if Too Small | Effect if Too Large | Common Default (Illumina WGS) |
|---|---|---|---|---|
| Window Size (bp) | 100 - 20,000 | High variance in GC/coverage calculation; noisy regression. | Loss of local genomic resolution; masks small-scale biases. | 1,000 |
| Bin Count | 10 - 100 | Coarse GC distribution; poor modeling of nonlinear bias. | Sparse bins; insufficient data per bin for stable coverage estimate. | 50 |
| Regression Span | 0.1 - 1.0 | Overfitting to local noise in the GC-coverage relationship. | Underfitting; fails to capture true bias trend, leaving residual bias. | 0.75 |

Table 2: Example Optimization Results from a Simulated 30X Whole Genome Sequencing Dataset

| Tested Window (bp) | Tested Bins | Tested Span | Post-Normalization CV of Coverage* | Residual GC Correlation (r) |
|---|---|---|---|---|
| 500 | 30 | 0.5 | 0.18 | 0.08 |
| 1000 | 30 | 0.5 | 0.15 | 0.05 |
| 1000 | 50 | 0.75 | 0.12 | 0.01 |
| 5000 | 50 | 0.75 | 0.14 | 0.03 |
| 1000 | 100 | 1.0 | 0.17 | 0.10 |

*Coefficient of Variation across autosomal genomic windows. Lower is better.
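As a worked example, the rows of Table 2 can be ranked programmatically. The sketch below copies the five rows from the table and picks the combination with the lowest sum of ranks for CV and absolute residual correlation; the rank-sum rule is an assumption chosen for illustration, since the text does not prescribe how to combine the two criteria.

```python
# Rows copied from Table 2: (window_bp, bins, span, cv, residual_r)
results = [
    (500, 30, 0.5, 0.18, 0.08),
    (1000, 30, 0.5, 0.15, 0.05),
    (1000, 50, 0.75, 0.12, 0.01),
    (5000, 50, 0.75, 0.14, 0.03),
    (1000, 100, 1.0, 0.17, 0.10),
]


def rank_positions(values):
    """Rank 0 = smallest value; ties are broken by order of appearance."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    positions = [0] * len(values)
    for rank, idx in enumerate(order):
        positions[idx] = rank
    return positions


cv_rank = rank_positions([row[3] for row in results])
r_rank = rank_positions([abs(row[4]) for row in results])
combined = [c + r for c, r in zip(cv_rank, r_rank)]

best = results[combined.index(min(combined))]
print(best[:3])  # (1000, 50, 0.75)
```

Here the 1000 bp, 50-bin, 0.75-span row wins on both criteria, so any reasonable combination rule would select it; the rule matters only when the criteria disagree.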

Experimental Protocol for Parameter Optimization

Protocol 3.1: Systematic Grid Search for Parameter Trio

Objective: To empirically identify the parameter combination that minimizes coverage variance and residual GC correlation.

Materials: Aligned sequencing reads (BAM file), reference genome FASTA, depth and normalization tools (e.g., mosdepth, GATK, or a custom R/Python script).

Procedure:

- Generate Input Windows: Using a tool like `bedtools makewindows`, partition the reference genome (excluding telomeres, centromeres, and sex chromosomes) into non-overlapping windows for each window size in your test set (e.g., 500 bp, 1 kb, 5 kb, 10 kb).

- Calculate Raw Metrics: For each window size:
  - a. Compute read depth (`mosdepth` or `samtools depth`).
  - b. Compute GC fraction (`bedtools nuc`).

- Bin GC Content: For each window set, distribute windows into N bins based on their GC fraction, where N is varied (e.g., 20, 30, 50, 100 bins). Ensure each bin contains a minimum number of windows (e.g., >100); otherwise, adjust the binning strategy.

- Perform Normalization Iterations: For each combination (Window Size, Bin Count, Regression Span):
  - a. Calculate the median read depth for all windows within each GC bin.
  - b. Fit a LOESS (or polynomial) model with the specified `span` parameter, predicting median depth from each bin's midpoint GC%.
  - c. For each window, compute normalized depth = (raw depth / predicted depth) * global median depth.

- Evaluate Output: For each normalized dataset, calculate:
  - a. Coefficient of Variation (CV): Standard deviation of normalized coverage across all windows / mean normalized coverage.
  - b. Residual GC Correlation: Pearson's r between normalized coverage and GC fraction.
  - c. MAD of Chromosome Medians: Median Absolute Deviation of per-chromosome median coverages.

- Select Optimal Set: Choose the parameter combination that simultaneously minimizes CV, absolute residual correlation, and MAD. Visual inspection of the GC-coverage curve post-normalization is essential.
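The normalization and evaluation steps of Protocol 3.1 can be sketched end to end in plain Python. This is an illustration under several assumptions: the windows are synthetic, the smoother `local_linear_fit` is a simplified LOESS-style stand-in rather than a library implementation, each window is corrected with the fitted value of its own bin, and the loop varies only bin count and span because changing window size would require recomputing depth and GC fraction with the external tools. The MAD of chromosome medians is omitted because the toy data carry no chromosome labels.

```python
import random
import statistics
from itertools import product


def local_linear_fit(x, y, span):
    """LOESS-style smoother: tricube-weighted local linear fit at each x."""
    n = len(x)
    k = min(n, max(2, round(span * n)))  # neighbourhood size implied by the span
    fitted = []
    for x0 in x:
        h = sorted(abs(xi - x0) for xi in x)[k - 1] or 1e-12  # local bandwidth
        w = [max(0.0, 1 - (abs(xi - x0) / h) ** 3) ** 3 for xi in x]
        sw = sum(w)
        mx = sum(wi * xi for wi, xi in zip(w, x)) / sw
        my = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sxx = sum(wi * (xi - mx) ** 2 for wi, xi in zip(w, x))
        sxy = sum(wi * (xi - mx) * (yi - my) for wi, xi, yi in zip(w, x, y))
        slope = sxy / sxx if sxx > 1e-15 else 0.0  # fall back to weighted mean
        fitted.append(my + slope * (x0 - mx))
    return fitted


def gc_bin(gc, n_bins):
    """Index of the equal-width GC bin on [0, 1] that contains gc."""
    return min(int(gc * n_bins), n_bins - 1)


def gc_normalize(depths, gc_fracs, n_bins, span):
    """Bin medians, smoothed GC-depth curve, then rescaled depth per window."""
    bins = {}
    for d, g in zip(depths, gc_fracs):
        bins.setdefault(gc_bin(g, n_bins), []).append(d)
    keys = sorted(bins)
    mids = [(b + 0.5) / n_bins for b in keys]
    medians = [statistics.median(bins[b]) for b in keys]
    predicted = dict(zip(keys, local_linear_fit(mids, medians, span)))
    global_median = statistics.median(depths)
    return [d / predicted[gc_bin(g, n_bins)] * global_median
            for d, g in zip(depths, gc_fracs)]


def cv(values):
    """Coefficient of variation: standard deviation divided by the mean."""
    return statistics.pstdev(values) / statistics.mean(values)


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Synthetic windows (assumption): dome-shaped GC bias plus multiplicative noise.
rng = random.Random(0)
gc_fracs = [rng.uniform(0.25, 0.70) for _ in range(2000)]
depths = [30 * (1 - 4 * (g - 0.45) ** 2) * rng.uniform(0.9, 1.1) for g in gc_fracs]

raw_cv = cv(depths)
grid = {}
for n_bins, span in product([10, 20], [0.5, 0.75]):
    normalized = gc_normalize(depths, gc_fracs, n_bins, span)
    grid[(n_bins, span)] = (cv(normalized), pearson(gc_fracs, normalized))

print(f"raw CV = {raw_cv:.3f}")
for (n_bins, span), (cv_value, r) in sorted(grid.items()):
    print(f"bins={n_bins:>3} span={span:.2f}  CV={cv_value:.3f}  residual r={r:+.3f}")
```

Because the correction divides by the predicted depth, a production version would also need to guard against bins whose fitted value is zero or negative, which this sketch does not do.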

Protocol 3.2: Validation via Spike-in or Known CNV Regions

Objective: To ensure optimization does not erase true biological signals, such as copy number alterations.

Materials: Cell line DNA with known CNVs (e.g., NA12878 with known deletions/duplications) or spike-in controls (e.g., ERCC RNA spikes for RNA-seq).

Procedure:

- Process the control sample through the normalization pipeline using your top 3 candidate parameter sets from Protocol 3.1.