A Complete SOP for NCBI WGS Quality Assessment: Best Practices for Researchers & Clinicians

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a detailed Standard Operating Procedure (SOP) for quality assessment of Whole Genome Sequencing (WGS) data submitted to the NCBI. It covers foundational concepts of quality metrics, step-by-step methodological workflows using current tools, troubleshooting for common data issues, and validation strategies for clinical and research compliance. The article synthesizes the latest NCBI requirements and bioinformatics best practices to ensure data integrity, reproducibility, and successful submission for downstream biomedical applications.

Understanding WGS Quality Metrics: The Foundation for NCBI Submission Success

Within a Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment, understanding NCBI submission requirements is critical. This section provides detailed Application Notes and Protocols for submitting raw reads to the Sequence Read Archive (SRA), annotated sequences to GenBank, and assemblies as WGS projects. Compliance makes data reproducible and accessible, and it protects the integrity of public repositories.

Table 1: Summary of NCBI Submission Portal Requirements

| Feature | SRA | GenBank | WGS |

|---|---|---|---|

| Primary Data Type | Raw sequencing reads (FASTQ, BAM) | Assembled, annotated sequences (FASTA) | Whole genome assembly contigs/scaffolds (FASTA) |

| Mandatory Metadata | BioProject, BioSample, library strategy, instrument, processing descriptions. | Source organism, author, publication, gene/feature annotations. | BioProject, BioSample, assembly method, genome coverage. |

| File Formats | FASTQ, BAM, SRA (compressed). | FASTA (sequence), tbl (feature table), sqn (ASN.1), or Sequin/ BankIt flatfile. | FASTA (contigs/scaffolds), AGP (assembly structure), optional annotation files. |

| Accession Prefix | SRR, SRX, SRS, SRP. | Accession.Version (e.g., MT123456.1). | JAxxxxxx, JZxxxxxx, etc. |

| Submission Tool | Submission Portal (web interface) with FTP or Aspera upload. The SRA Toolkit (prefetch, fasterq-dump) retrieves released data and is not a submission tool. | BankIt (web, simple), tbl2asn (command-line, complex), Sequin. | WGS Submission Portal (web) with file upload or FTP. |

| Release Date Control | Immediate or specified future date. | Immediate or specified future date; can hold until publication. | Immediate or specified future date. |

| Update Policy | New submission set; can suppress old. | Versioning: New sequence receives new accession.version. New annotations replace old. | New assembly requires new submission. |

Detailed Application Notes and Protocols

Protocol: SRA Submission via Command-Line

Objective: Submit raw WGS reads to the SRA.

Materials & Reagents:

- SRA Toolkit: Suite of tools for data formatting and submission.

- NCBI Account & Submission Portal: Authentication and project management.

- Metadata Spreadsheets: Template downloaded from Submission Portal.

- Valid BioProject & BioSample Accessions: Created prior to submission.

Procedure:

- Prepare Metadata: Log into the NCBI Submission Portal. Create a new SRA submission. Define the BioProject and BioSample if they do not already exist. Download the metadata spreadsheet template (e.g., `SRA_metadata.xlsx`). Fill in all required fields: sample name, isolate, library ID, layout (PAIRED/SINGLE), platform (ILLUMINA, OXFORD_NANOPORE, etc.), instrument model, strategy (WGS), and file names.

- Organize Data Files: Ensure FASTQ files are named exactly as specified in the metadata sheet. Use `gzip` or `bzip2` for compression.

- Check Files Locally: Confirm that each compressed file is intact (e.g., `gzip -t`) and that paired files contain the same number of reads. The SRA Toolkit commands `prefetch` and `fasterq-dump` retrieve and convert existing SRA records, so they are suited to spot-checking data after release rather than validating files before upload.

- Upload Data: Use an FTP client (e.g., `lftp`, `FileZilla`) or Aspera Connect (`ascp`) to transfer files to the secure NCBI upload directory provided in the portal.

- Validate and Submit: In the Submission Portal, upload the completed metadata spreadsheet. The system will validate file names and metadata consistency. Address any errors, then finalize the submission and set the release date.
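Because the portal rejects submissions whose file names do not match the metadata sheet, it can help to check this locally first. The following is a minimal sketch, assuming the sheet has been exported as tab-separated text and that file columns are named `filename`, `filename2`, and so on; the sample names and the function `missing_fastq_files` are hypothetical.

```python
import csv
import io


def missing_fastq_files(metadata_tsv, uploaded_names):
    """Return file names listed in the metadata sheet that are absent from uploaded_names."""
    reader = csv.DictReader(io.StringIO(metadata_tsv), delimiter="\t")
    missing = []
    for row in reader:
        for column, value in row.items():
            # Assumed convention: file columns are named filename, filename2, ...
            if column and column.startswith("filename") and value:
                if value not in uploaded_names:
                    missing.append(value)
    return missing


# Hypothetical two-sample sheet and the set of files staged for upload.
sheet = (
    "sample_name\tlibrary_ID\tfilename\tfilename2\n"
    "S1\tlib1\tS1_R1.fastq.gz\tS1_R2.fastq.gz\n"
    "S2\tlib2\tS2_R1.fastq.gz\tS2_R2.fastq.gz\n"
)
on_disk = {"S1_R1.fastq.gz", "S1_R2.fastq.gz", "S2_R1.fastq.gz"}

print(missing_fastq_files(sheet, on_disk))  # ['S2_R2.fastq.gz']
```

In practice the set of staged names could come from `os.listdir` on the upload directory.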

Protocol: GenBank Submission via tbl2asn

Objective: Submit an annotated WGS-derived genome or sequence to GenBank.

Materials & Reagents:

- tbl2asn Program: NCBI command-line tool for creating submission files (NCBI has since released a successor, table2asn).

- FASTA sequence file: The final contiguous sequence.

- Five-column Feature Table (tbl) file: Contains annotation (CDS, rRNA, gene, etc.).

- Submission Template File (sbt): Defines authorship and contact info.

Procedure:

- Create Input Files:

  - Prepare a FASTA file (`sequence.fsa`) with the DNA sequence. The definition line should contain organism and isolate information.

  - Create a five-column tab-delimited feature table file (`sequence.tbl`) detailing all biological features. The table opens with a `>Feature [SeqId]` line. Each feature is a line of `[start] [end] [feature]`, followed by qualifier lines of the form `[tab][tab][tab][qualifier] [value]`.

  - Generate a template file (`template.sbt`) via the NCBI Submission Portal.

- Run tbl2asn: Execute the command `tbl2asn -p . -t template.sbt -M n -Z discrep -j "[organism=Scientific Name] [strain=Strain ID]" -V b`. Here `-p .` uses the current directory, `-t` specifies the template, `-M n` applies the normal set of flags for genome submissions, `-Z discrep` writes a discrepancy report to a file named `discrep`, `-j` adds source qualifiers to every sequence, and `-V b` also writes a GenBank flatfile.

- Review Output: Check the `.val` files and the discrepancy report for errors and warnings. Correct annotations in the `.tbl` file and rerun `tbl2asn` if necessary.

- Submit: Upload the generated `.sqn` file (and optionally the source files) via the NCBI Submission Portal under a GenBank submission.
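The feature table layout is easy to get wrong by hand, so a small generator can be useful. This is a minimal sketch of the five-column layout described above; the function `format_feature_table`, the sequence ID, and the locus tag are hypothetical, and a real CDS would usually need further qualifiers.

```python
def format_feature_table(seq_id, features):
    """Render features as a five-column, tab-delimited feature table.

    features: list of (start, end, feature_key, {qualifier: value}) tuples.
    """
    lines = [f">Feature {seq_id}"]
    for start, end, key, qualifiers in features:
        # Feature line: start, end, and feature key in columns 1-3.
        lines.append(f"{start}\t{end}\t{key}")
        for name, value in qualifiers.items():
            # Qualifier line: three empty columns, then qualifier and value.
            lines.append(f"\t\t\t{name}\t{value}")
    return "\n".join(lines) + "\n"


table = format_feature_table(
    "contig1",
    [
        (1, 1200, "gene", {"locus_tag": "ABC_0001"}),
        (1, 1200, "CDS", {"product": "hypothetical protein"}),
    ],
)
print(table, end="")
```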

Protocol: WGS Project Submission

Objective: Submit a complete Whole Genome Shotgun assembly.

Materials & Reagents:

- Assembly FASTA File: Contigs or scaffolds.

- AGP File: Describes how scaffolds are built from contigs (required for scaffolds).

- Optional Annotation Files: GFF3 or feature table files.

Procedure:

- Prepare Assembly Files:

  - Create a FASTA file of all contigs/scaffolds. Header format: `>gnl|ProjectID|SeqID [optional description]`.

  - If scaffolds are present, create an AGP v2.1 file linking component contigs to scaffolds.

- Create Submission on Portal: Log into the NCBI Submission Portal. Initiate a WGS submission. Link to an existing BioProject and BioSamples.

- Upload and Validate: Use the web interface or FTP to transfer the FASTA, AGP, and optional annotation files. The portal will run validation checks on file formats and completeness.

- Finalize: Assign a WGS genome assembly prefix (provided by NCBI). Submit. The assembly will receive a WGS accession range (e.g., JAABCD010000001-JAABCD010000050).
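To illustrate what the AGP file contains, here is a minimal sketch that writes the nine tab-separated columns for one scaffold built from ordered contigs. It assumes every contig is on the plus strand and that gaps have a fixed, estimated length with paired-end linkage evidence; the function `scaffold_to_agp` and all identifiers are hypothetical.

```python
def scaffold_to_agp(scaffold_id, contigs, gap_length=100):
    """Build AGP lines for one scaffold.

    contigs: list of (contig_id, length) in scaffold order, all on the + strand.
    """
    lines = []
    position = 1  # 1-based start of the next part on the scaffold
    part = 1
    for index, (contig_id, length) in enumerate(contigs):
        if index > 0:
            # Gap line: type N, gap length, gap type, linkage, linkage evidence.
            gap_end = position + gap_length - 1
            lines.append("\t".join(map(str, [
                scaffold_id, position, gap_end, part,
                "N", gap_length, "scaffold", "yes", "paired-ends",
            ])))
            position = gap_end + 1
            part += 1
        # Component line: type W (WGS contig), component ID, span, orientation.
        end = position + length - 1
        lines.append("\t".join(map(str, [
            scaffold_id, position, end, part,
            "W", contig_id, 1, length, "+",
        ])))
        position = end + 1
        part += 1
    return lines


for line in scaffold_to_agp("scaffold1", [("contig1", 500), ("contig2", 300)]):
    print(line)
```

A complete file would normally start with a `##agp-version 2.1` comment line before these records.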

Visual Workflows

Title: SRA Submission Workflow for WGS Data

Title: GenBank Submission via tbl2asn Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Resources for NCBI Submissions

| Item | Primary Function | Notes |

|---|---|---|

| SRA Toolkit | Command-line utilities for formatting, validating, and downloading SRA data. | Essential for large-scale or automated SRA submissions and data retrieval. |

| tbl2asn | NCBI command-line program to create ASN.1 (.sqn) submission files from FASTA and feature tables. | Core tool for complex GenBank submissions; requires precise input file formatting. |

| BankIt Web Form | User-friendly web interface for submitting simple nucleotide sequences to GenBank. | Ideal for single genes or small batches of sequences without complex annotation. |

| NCBI Submission Portal | Central web dashboard for managing all submissions (BioProject, BioSample, SRA, GenBank, WGS). | Mandatory for obtaining accession numbers and coordinating related submissions. |

| AGP File Generator | Scripts or tools (e.g., from assembly pipelines) to create AGP files describing scaffold builds. | Crucial for WGS submissions with scaffolds to describe assembly structure. |

| Metadata Spreadsheet Templates | Excel/TSV templates provided by NCBI for SRA and BioSample metadata. | Ensures correct metadata field formatting and completeness for validation. |

| Aspera Connect / FTP Client | High-speed transfer protocols for uploading large sequence files to NCBI servers. | Required for transferring multi-GB FASTQ or assembly files. |

Why WGS Quality Control is Non-Negotiable for Research and Clinical Validity

Whole Genome Sequencing (WGS) quality assessment is a critical gatekeeper for both research credibility and clinical actionability. In the context of establishing a Standard Operating Procedure (SOP) for WGS quality assessment for NCBI-submissible research, rigorous QC is the foundational step that determines all downstream analyses. Failure at this stage introduces irreparable biases, leading to false positives/negatives in variant calling, erroneous conclusions in research, and potentially harmful misinterpretations in clinical diagnostics. This document outlines the essential application notes and detailed protocols for implementing a non-negotiable QC pipeline.

Quantitative Quality Metrics & Thresholds

The following tables summarize the key quantitative metrics used to assess raw sequencing data and aligned genomes. These thresholds are informed by current best practices from leading genomics consortia (e.g., FDA-STEP, CDC, and GIAB). NCBI does not enforce them at submission, but data should meet them before it is deposited.

Table 1: Key Quality Metrics for Raw WGS Data (Illumina Platform)

| Metric | Recommended Threshold | Purpose & Rationale |

|---|---|---|

| Q-score (Q30) | ≥ 80% of bases | Indicates base call accuracy. <80% increases error rate and variant false positives. |

| Total Yield | ≥ 90 Gb for 30x coverage (Human) | Ensures sufficient data for required genomic coverage. |

| % Bases ≥ Q30 | ≥ 85% | Critical for reliable variant calling, especially for SNVs. |

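| Cluster Density | 170-220 K/mm² (non-patterned flow cells, e.g., NextSeq 500/550; not reported this way for patterned flow cells such as NovaSeq) | Optimal for image analysis; deviations cause phasing/pre-phasing errors. |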

| % PhiX Alignment | 1-5% | Monitors sequencing performance and identifies index swapping. |

| Mean Insert Size | Within 20% of library prep target | Deviations indicate library preparation issues affecting coverage uniformity. |
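Two of the thresholds in this table follow from simple arithmetic: a Phred score Q corresponds to an error probability of 10^(-Q/10), and the required yield is genome size times target depth. The sketch below works both through, assuming a human genome size of about 3.1 Gb and ignoring losses from trimming, duplicates, and unmapped reads; the function names are hypothetical.

```python
def phred_to_error_probability(q):
    """Convert a Phred quality score to the probability of a wrong base call."""
    return 10 ** (-q / 10)


def required_yield_gb(genome_size_bp, target_depth):
    """Raw yield in gigabases needed to reach target_depth, ignoring losses."""
    return genome_size_bp * target_depth / 1e9


# Q30 means roughly one error per 1,000 base calls.
print(phred_to_error_probability(30))

# Assumed human genome size of ~3.1 Gb at 30x mean depth.
print(required_yield_gb(3.1e9, 30))  # 93.0
```

The second result is consistent with the yield of at least 90 Gb listed for 30x human coverage.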

Table 2: Post-Alignment Quality Metrics (Human Genome)

| Metric | Recommended Threshold | Purpose & Rationale |

|---|---|---|

| Mean Coverage Depth | ≥ 30x (Clinical: ≥ 40x) | Balances cost and sensitivity for variant detection. |

| Coverage Uniformity (% > 0.2x mean) | ≥ 95% | Ensures even coverage; low uniformity misses regions. |

| Duplication Rate | < 10-20% (varies by prep) | High PCR duplication reduces effective coverage and diversity. |

| Mapping Rate | ≥ 95% (to primary genome) | Low rate suggests contamination or poor library quality. |

| Chimeric Read Rate | < 5% | High rates indicate molecular degradation or artifacts. |

| Contamination Estimate | < 1-3% | Critical for clinical validity; high contamination causes false heterozygotes. |

Detailed Protocols for Core QC Experiments

Protocol 3.1: Pre-Alignment Quality Control with FastQC and MultiQC

Objective: Assess the quality of raw FASTQ files.

- Tool Setup: Install `FastQC` (v0.12.0+) and `MultiQC` (v1.14+).

- Run FastQC: `fastqc *.fastq.gz -t [number_of_threads] -o [output_dir]`

- Aggregate Reports: `multiqc [output_dir] -n multiqc_report.html`

- Interpretation: Examine the MultiQC HTML report. Critical checks:

  - Per Base Sequence Quality: Look for drops in quality at read starts/ends.

  - Adapter Content: >5% adapter contamination necessitates trimming.

  - Overrepresented Sequences: Identify potential contaminants.

  - GC Content: Should match reference genome distribution (~40% for human).
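Alongside the HTML report, each FastQC output archive contains a `summary.txt` file with one tab-separated line per module (status, module name, file name). A minimal sketch for flagging modules that need review, using made-up example content and hypothetical function names:

```python
def parse_fastqc_summary(text):
    """Map each FastQC module name to its PASS/WARN/FAIL status."""
    statuses = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        status, module, _filename = line.split("\t")
        statuses[module] = status
    return statuses


def modules_needing_review(statuses):
    """Return module names flagged WARN or FAIL, in alphabetical order."""
    return sorted(m for m, s in statuses.items() if s in ("WARN", "FAIL"))


# Illustrative summary.txt content for one hypothetical FASTQ file.
example = (
    "PASS\tBasic Statistics\tsample_R1.fastq.gz\n"
    "PASS\tPer base sequence quality\tsample_R1.fastq.gz\n"
    "WARN\tPer sequence GC content\tsample_R1.fastq.gz\n"
    "FAIL\tAdapter Content\tsample_R1.fastq.gz\n"
)

print(modules_needing_review(parse_fastqc_summary(example)))
# ['Adapter Content', 'Per sequence GC content']
```

FastQC's own PASS/WARN/FAIL flags use generic cut-offs, so they complement the thresholds in this SOP rather than replace them.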

Protocol 3.2: Alignment and Post-Alignment QC using BWA-Mem and SAMtools

Objective: Generate aligned BAM files and calculate key metrics.

- Alignment:

  - Index reference genome: `bwa index GRCh38.fa`

  - Align: `bwa mem -t 8 -R "@RG\tID:sample\tSM:sample" GRCh38.fa read1.fq read2.fq | samtools sort -o aligned_sorted.bam -@ 8`

- Mark Duplicates: Use `sambamba` or `GATK MarkDuplicates`, e.g., `sambamba markdup -t 8 aligned_sorted.bam deduplicated.bam`

- Calculate Metrics:

  - Mapping rate: `samtools flagstat deduplicated.bam > flagstat.txt`

  - Insert size: `samtools stats deduplicated.bam | grep ^SN | cut -f 2- > samtools_stats.txt`

  - Coverage: `mosdepth -t 4 -b 500 sample_output deduplicated.bam`
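The mapping rate can be pulled out of `flagstat.txt` programmatically and compared with the 95% threshold. This is a minimal sketch, assuming the plain-text flagstat layout in which the totals appear on lines ending in `in total (...)` and `mapped (...)`; the example counts are invented and `mapping_rate` is a hypothetical helper.

```python
import re


def mapping_rate(flagstat_text):
    """Return mapped reads / total reads from samtools flagstat text output."""
    total = re.search(r"^(\d+) \+ \d+ in total", flagstat_text, re.MULTILINE)
    # Anchoring at line start skips the separate "primary mapped" line.
    mapped = re.search(r"^(\d+) \+ \d+ mapped \(", flagstat_text, re.MULTILINE)
    if not total or not mapped or int(total.group(1)) == 0:
        raise ValueError("could not find read totals in flagstat output")
    return int(mapped.group(1)) / int(total.group(1))


# Invented counts in the flagstat text layout.
example = (
    "2000 + 0 in total (QC-passed reads + QC-failed reads)\n"
    "2000 + 0 primary\n"
    "0 + 0 secondary\n"
    "0 + 0 supplementary\n"
    "100 + 0 duplicates\n"
    "1940 + 0 mapped (97.00% : N/A)\n"
    "1940 + 0 primary mapped (97.00% : N/A)\n"
)

rate = mapping_rate(example)
print(f"{rate:.2%}", "PASS" if rate >= 0.95 else "REVIEW")
```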

Protocol 3.3: Contamination Estimation with VerifyBamID2

Objective: Estimate sample cross-contamination and ancestry concordance.

- Download Resources: Get the population-specific allele frequency (AF) SNP file from the VerifyBamID2 repository.

- Execute: `VerifyBamID --BamFile deduplicated.bam --Reference GRCh38.fa --SVDPrefix [path_to_AF_file] --Output output_prefix`

- Interpretation: Key output is `output_prefix.selfSM`. The `FREEMIX` column indicates contamination fraction. Action: If `FREEMIX` > 0.03, the sample fails and must be re-processed.
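The FREEMIX decision can be scripted so that it is applied identically to every sample. This is a minimal sketch, assuming the `.selfSM` file is tab-delimited with a header row that includes a `FREEMIX` column; the example row and the function names are hypothetical.

```python
import csv
import io


def read_freemix(selfsm_text):
    """Return the FREEMIX value from the first data row of a .selfSM table."""
    reader = csv.DictReader(io.StringIO(selfsm_text), delimiter="\t")
    row = next(reader)
    return float(row["FREEMIX"])


def passes_contamination_check(freemix, threshold=0.03):
    """Apply the SOP rule: the sample fails when FREEMIX exceeds the threshold."""
    return freemix <= threshold


# Invented example in the assumed .selfSM column layout.
example = (
    "#SEQ_ID\tRG\tCHIP_ID\t#SNPS\t#READS\tAVG_DP\tFREEMIX\tFREELK1\tFREELK0\n"
    "sample\tNA\tNA\t100000\t3000000\t30.5\t0.0012\t-1000.0\t-1010.0\n"
)

freemix = read_freemix(example)
print(freemix, passes_contamination_check(freemix))  # 0.0012 True
```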

Protocol 3.4: Comprehensive Variant Calling QC using hap.py

Objective: Benchmark variant calls against a truth set (e.g., GIAB).

- Prepare Truth/Query VCFs: Restrict to high-confidence benchmark regions.

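- Run hap.py: `python3 hap.py truth.vcf query.vcf -r GRCh38.fa -o performance_report -f [bed_file_of_confident_regions]`. The `-f` option supplies the high-confidence regions used for counting false positives, while `-T` only restricts the analysis to target regions.

- Analyze Output: Review `performance_report.summary.csv` (the full metrics are written to `performance_report.metrics.json.gz`). For clinical WGS:

  - SNP Precision/Recall (F1) > 0.99

  - Indel Precision/Recall (F1) > 0.95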
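The F1 score used in these targets is the harmonic mean of precision and recall, F1 = 2PR / (P + R). A minimal sketch of the calculation and the pass/fail rule, with invented precision and recall values and hypothetical function names:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def meets_clinical_targets(snp_f1, indel_f1):
    """Apply the targets above: SNP F1 > 0.99 and indel F1 > 0.95."""
    return snp_f1 > 0.99 and indel_f1 > 0.95


# Invented benchmark values for illustration only.
snp_f1 = f1_score(precision=0.995, recall=0.993)
indel_f1 = f1_score(precision=0.97, recall=0.94)

print(round(snp_f1, 4), round(indel_f1, 4), meets_clinical_targets(snp_f1, indel_f1))
```

Because F1 is a harmonic mean, it is pulled toward the lower of the two inputs, so high precision cannot mask poor recall.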

Visualization of the QC Workflow & Decision Logic

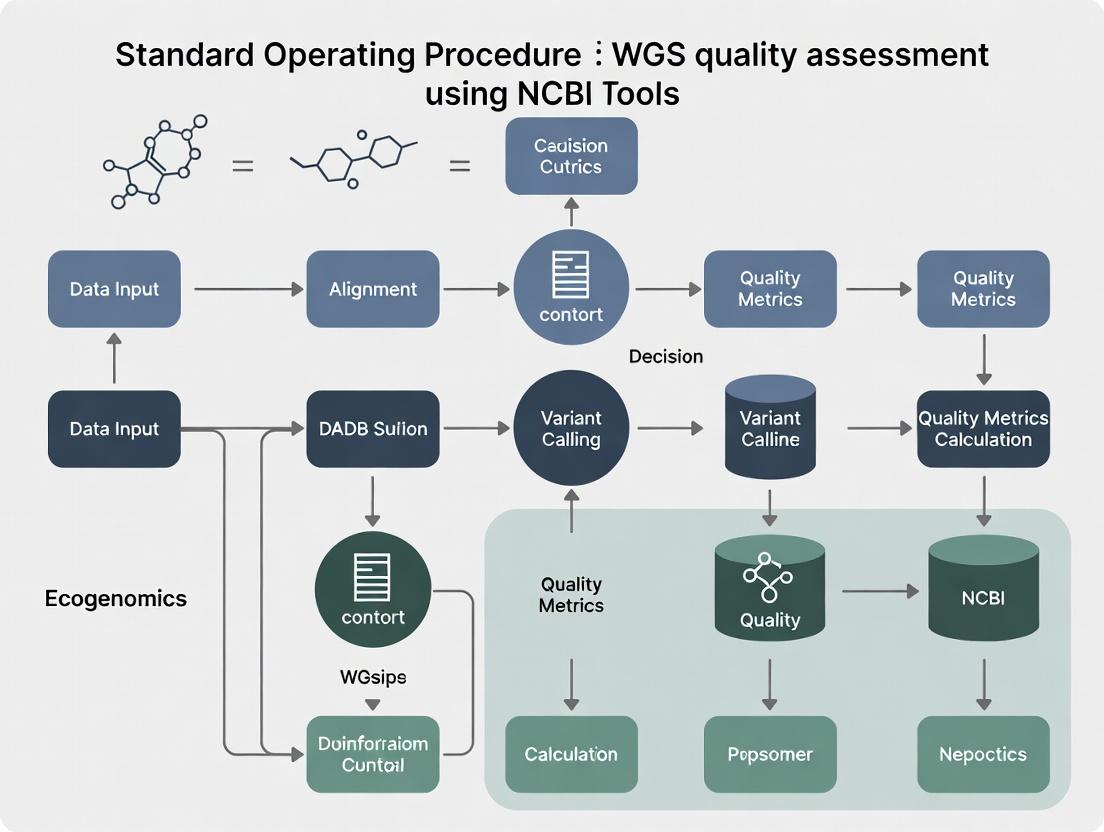

Title: End-to-End WGS Quality Assessment Decision Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Materials for Robust WGS QC

| Item | Function & Rationale |

|---|---|

| NIST Genome in a Bottle (GIAB) Reference Materials | Provides a benchmark 'truth set' of variants for a specific genome (e.g., HG001/NA12878) to validate and tune the entire WGS pipeline, enabling measurement of precision and recall. |

| PhiX Control v3 (Illumina) | A well-characterized, small genome spiked into runs (1-5%) to monitor sequencing accuracy, cluster density, and error rates across lanes in real-time. |

| Pre-made QC Metric Collection Kits (e.g., Agilent D1000 ScreenTape) | For objective, reproducible quantification and sizing of genomic DNA libraries prior to sequencing, ensuring insert size distribution is optimal. |

| Multiplexed Reference Genomes (e.g., Seracare Metagenomic Mix) | A defined blend of microbial and human genomes used to detect and quantify cross-sample contamination in multiplexed sequencing runs. |

| Commercial Contamination Estimation Services/Tools | Tools like VerifyBamID2 or Conpair require population-specific SNP frequency files; these curated resources are critical for accurate autosomal contamination estimates. |

| Automated QC Pipeline Software (e.g., nf-core/sarek, MultiQC) | Pre-configured, version-controlled bioinformatics workflows (Nextflow/Snakemake) that standardize QC metric generation and reporting across labs, ensuring reproducibility. |

Application Notes

Within a comprehensive Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment intended for NCBI submission and subsequent research, the evaluation of raw sequencing data is paramount. This document decodes four critical metrics that form the cornerstone of initial quality control (QC). The failure to meet established thresholds for these metrics can compromise downstream analysis, leading to erroneous variant calls and unreliable biological conclusions. The following table summarizes benchmark values for Illumina short-read WGS data, as per current community standards.

Table 1: Key Quality Metrics and Benchmarks for Illumina WGS Data (Human)

| Metric | Optimal Range/Value | Threshold for Concern | Primary Implication |

|---|---|---|---|

| Per-Base Q Score (Q30) | ≥ 80% of bases ≥ Q30 | < 75% of bases ≥ Q30 | High base-call error rate, reducing SNP calling accuracy. |

| GC Content | ~40-42% for human genomes | Deviation > 5% from expected | May indicate adapter contamination, PCR bias, or microbial contamination. |

| Adapter Contamination | < 0.5% of reads | > 1% of reads | Causes misalignment, reduces usable data, and biases coverage. |

| Duplication Rate | < 10-20% (varies with sequencing depth) | > 20-25% | Indicates PCR over-amplification or limited library complexity, reducing effective coverage. |

Detailed Protocols

Protocol 1: Comprehensive QC Workflow Using FastQC and MultiQC

This protocol provides a standardized method for initial quality assessment of raw FASTQ files.

- Software Installation: Install `FastQC` (v0.12.1) and `MultiQC` (v1.20) via conda: `conda create -n qc_env fastqc multiqc -c bioconda -c conda-forge`.

- FastQC Analysis: Run FastQC on all FASTQ files: `fastqc *.fq.gz -o ./fastqc_results -t [number_of_threads]`.

- Aggregate Reports: Use MultiQC to compile all FastQC reports into a single HTML report: `multiqc ./fastqc_results -o ./multiqc_report`.

- Interpretation: Open the `multiqc_report.html`. Key sections:

  - Per Base Sequence Quality: Verify Q scores across all cycles.

  - Per Sequence GC Content: Check for a normal distribution centered on the expected mean.

  - Adapter Content: Determine the percentage of adapter sequence detected.

  - Sequence Duplication Levels: Assess the degree of duplication.

Protocol 2: Adapter Trimming and QC Re-assessment Using fastp

This protocol details adapter removal and post-cleaning validation.

- Software: Install `fastp` (v0.24.2): `conda install -c bioconda fastp`.

- Trimming Command: Execute a standard paired-end trimming run that writes `trimmed_R1.fq.gz` and `trimmed_R2.fq.gz`.

- Post-Cleanup QC: Repeat Protocol 1 on the trimmed output files (`trimmed_R1.fq.gz`, `trimmed_R2.fq.gz`).

- Validation: Compare the MultiQC reports before and after trimming. Confirm reductions in adapter content and improved per-base quality in the initial cycles.
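The trimming step can be driven from a script so that the same options are applied to every sample. The sketch below only assembles a paired-end fastp command line; the input file names, report names, thread count, and the helper `build_fastp_command` are assumptions for illustration, not the exact command this protocol originally specified.

```python
import shlex


def build_fastp_command(in_r1, in_r2, out_r1, out_r2, threads=8):
    """Assemble a paired-end fastp invocation as an argument list."""
    return [
        "fastp",
        "-i", in_r1, "-I", in_r2,      # paired-end inputs
        "-o", out_r1, "-O", out_r2,    # trimmed outputs
        "--detect_adapter_for_pe",     # infer adapters from read overlap
        "--thread", str(threads),
        "--json", "fastp.json",        # machine-readable report (MultiQC input)
        "--html", "fastp.html",
    ]


# Hypothetical raw file names; outputs match those used in the next step.
command = build_fastp_command(
    "raw_R1.fq.gz", "raw_R2.fq.gz", "trimmed_R1.fq.gz", "trimmed_R2.fq.gz"
)
print(shlex.join(command))
```

Passing `command` to `subprocess.run(command, check=True)` would execute it where fastp is installed.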

Visualization: WGS Quality Assessment Workflow

Diagram Title: WGS QC & Trimming Decision Workflow

The Scientist's Toolkit: Essential Reagent & Software Solutions

Table 2: Key Resources for WGS Quality Assessment

| Item/Resource | Function/Description | Example Provider |

|---|---|---|

| Illumina DNA Prep Kits | Library preparation reagents, including indexed adapters. | Illumina |

| Universal Adapter Sequences | Known oligo sequences for contamination detection and trimming. | Illumina, custom synthesis |

| FastQC Software | Primary tool for calculating all key QC metrics from FASTQ files. | Babraham Bioinformatics |

| MultiQC Software | Aggregates results from multiple tools (FastQC, fastp) into a single report. | MultiQC Project |

| fastp / Trimmomatic | Performs adapter trimming, quality filtering, and poly-G tail removal. | Open Source |

| Reference Genome (GRCh38) | Essential for alignment-based duplication rate calculation (e.g., via Sambamba). | NCBI Genome Reference Consortium |

| Sambamba / Picard Tools | Calculate post-alignment duplicate read metrics using sequence coordinates. | Open Source / Broad Institute |

Within the Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment for NCBI-submissible research, defining sequential quality checkpoints is critical. The process is bifurcated into two principal phases: Raw Read Assessment and Post-Alignment Metrics. This application note details the protocols, metrics, and decision points for each phase, ensuring data integrity prior to downstream analysis and submission.

Sequential Quality Checkpoint Framework

Diagram 1: WGS QC Checkpoint Workflow

Checkpoint 1: Raw Read Assessment

Purpose & Rationale

Assess the quality of sequencing output independently of alignment to detect issues arising from sequencing chemistry, instrumentation, or library preparation that could invalidate downstream results.

Experimental Protocol: FastQC Analysis

Materials & Software:

- Input: Paired-end or single-end FASTQ files.

- Software: FastQC (v0.12.1), MultiQC (v1.21) for aggregation.

- Compute: Standard Linux server or HPC node.

Procedure:

- Data Organization: Place all FASTQ files for a sample in a dedicated directory.

- Run FastQC:

- Aggregate Reports: Use MultiQC to compile results across all samples.

- Interpretation: Open the `multiqc_report.html` and evaluate against thresholds in Table 1.

Key Metrics & Interpretation Thresholds

Table 1: Raw Read Assessment Metrics (Checkpoint 1)

| Metric | Ideal Value | Warning Threshold | Failure Threshold | Primary Diagnostic For |

|---|---|---|---|---|

| Per Base Sequence Quality (Phred Score) | ≥ Q30 across all bases | Q28-30 in late cycles | < Q28 across many cycles | Sequencing chemistry degradation. |

| Per Sequence Quality Scores | High, narrow peak (≥Q30) | Peak broadening | Peak centered < Q20 | Presence of low-quality reads. |

| Adapter Content | 0% | < 5% | ≥ 5% | Incomplete adapter trimming. |

| GC Content (%) | Matches organism ± ~5% | Deviation ± 5-10% | Deviation > ±10% | Contamination or biased library. |

| Sequence Duplication Level | Low, diverse library | Moderate duplication | High duplication (>50%) | PCR over-amplification, low input. |

| Overrepresented Sequences | None identified | Few (< 0.1%) | Many (> 0.1%) | Adapter dimers or biological contamination. |

Checkpoint 1 Decision: If any metric hits Failure Threshold, the run fails. Investigate library prep or sequencing run. Proceed to trimming/filtering only if metrics are at "Ideal" or "Warning" levels.
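The decision rule can be encoded so that every run is graded the same way. This is a minimal sketch covering only the two numeric rows of Table 1 (adapter content and GC deviation); the function names and example values are hypothetical, and the remaining metrics would need analogous rules.

```python
def classify_adapter_content(percent):
    """Grade adapter content: 0% ideal, below 5% warning, 5% or more fail."""
    if percent >= 5:
        return "fail"
    if percent > 0:
        return "warning"
    return "ideal"


def classify_gc_deviation(observed_gc, expected_gc):
    """Grade GC content by absolute deviation from the expected percentage."""
    deviation = abs(observed_gc - expected_gc)
    if deviation > 10:
        return "fail"
    if deviation > 5:
        return "warning"
    return "ideal"


def checkpoint1_passes(levels):
    """The run proceeds only if no metric is graded as a failure."""
    return "fail" not in levels


# Invented example: 1.2% adapter content, 47% GC against an expected 41%.
levels = [classify_adapter_content(1.2), classify_gc_deviation(47.0, 41.0)]
print(levels, checkpoint1_passes(levels))  # ['warning', 'warning'] True
```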

Transition: Data Preprocessing

Following a passing Checkpoint 1, raw reads undergo preprocessing before alignment.

Protocol: Adapter Trimming & Quality Filtering (using fastp)

Checkpoint 2: Post-Alignment Metrics

Purpose & Rationale

Assess the success of the alignment process, including mapping efficiency, coverage uniformity, and potential sample contamination, which are critical for variant calling and NCBI submission integrity.

Experimental Protocol: Alignment & Metric Collection

Materials & Software:

- Input: Trimmed FASTQ files, Reference genome (e.g., GRCh38.p14).

- Software: BWA-MEM2 (aligner), SAMtools, Picard Tools, Qualimap, mosdepth.

- Compute: High-memory node for alignment and duplicate marking.

Procedure:

- Alignment:

- Sort & Convert to BAM:

- Mark Duplicates (using GATK4):

- Calculate Coverage: `mosdepth -t 8 -b 1000 sample sample_marked.bam`

- Generate Comprehensive Metrics:

Key Metrics & Interpretation Thresholds

Table 2: Post-Alignment Metrics (Checkpoint 2)

| Metric Category | Specific Metric | Ideal Value | Warning Threshold | Failure Threshold | Significance for WGS |

|---|---|---|---|---|---|

| Mapping & Yield | % Aligned Reads | > 95% | 90-95% | < 90% | Sufficient on-target data. |

| Mapping & Yield | % Duplication | < 10% (WGS) | 10-20% | > 20% | Library complexity; impacts SNV calls. |

| Coverage | Mean Coverage (Target) | ≥ 30x (project-dependent) | ~25-30x | < 20x | Statistical power for variant detection. |

| Coverage | % Coverage at 10x/20x | ≥ 95% / ≥ 90% | Slight drop | < 90% / < 85% | Uniformity of coverage. |

| Base Quality | % Mismatched Bases | Low (~0.1-0.5%) | Slight increase | > 1% (context-dependent) | Potential contamination or high error rate. |

| Insert Size | Median Insert Size | Matches library prep (~350-550bp) | Deviation ± 50bp | Major deviation | Library preparation anomaly. |

Checkpoint 2 Decision: A metric at its failure threshold indicates a problem with the sample, reference, or alignment parameters. A sample proceeds to variant calling and submission only if no metric reaches its failure threshold.
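As with Checkpoint 1, the failure thresholds in Table 2 can be expressed as data and applied uniformly. This is a minimal sketch covering four of the numeric rows; the metric keys, the `FAILURE_RULES` mapping, and the example values are hypothetical names chosen for illustration.

```python
# Failure conditions taken from Table 2; keys are hypothetical metric names.
FAILURE_RULES = {
    "pct_aligned": lambda v: v < 90,
    "pct_duplication": lambda v: v > 20,
    "mean_coverage": lambda v: v < 20,
    "pct_mismatch": lambda v: v > 1,
}


def failed_metrics(metrics):
    """Return the names of supplied metrics that hit a failure threshold."""
    return sorted(
        name
        for name, is_failure in FAILURE_RULES.items()
        if name in metrics and is_failure(metrics[name])
    )


# Invented sample: good alignment and depth, but heavy duplication.
sample = {
    "pct_aligned": 97.2,
    "pct_duplication": 24.5,
    "mean_coverage": 31.0,
    "pct_mismatch": 0.3,
}

failures = failed_metrics(sample)
print(failures, "PROCEED" if not failures else "HOLD")
# ['pct_duplication'] HOLD
```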

Diagram 2: Metric Interdependence for Final Data Quality

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 3: Essential Tools for WGS Quality Assessment

| Item | Name/Example | Function & Relevance to SOP |

|---|---|---|

| Quality Control Suite | FastQC, MultiQC | Provides the initial, non-aligned assessment of read quality, adapter content, and biases (Checkpoint 1). |

| Trimming/Filtration Tool | fastp, Trimmomatic | Removes adapter sequences, low-quality bases, and reads. Critical for improving mapping rates. |

| Alignment Software | BWA-MEM2, Bowtie2 | Precisely maps sequencing reads to a reference genome, the foundational step for Checkpoint 2. |

| SAM/BAM Utilities | SAMtools, sambamba | Handles format conversion, sorting, indexing, and basic statistics of alignment files. |

| Duplicate Marking Tool | GATK MarkDuplicates, Picard | Identifies PCR/optical duplicates which can bias variant calling metrics. |

| Comprehensive QC Tool | Qualimap, deepTools | Generates a holistic set of post-alignment metrics including coverage, GC bias, and insert size. |

| Coverage Profiler | mosdepth, bedtools | Calculates depth of coverage quickly and efficiently across the genome or target regions. |

| Reference Genome | GRCh38 from GENCODE/NCBI | The standardized, high-quality sequence against which reads are aligned for human WGS. |

| Metric Aggregator | MultiQC (re-used) | Compiles outputs from FastQC, samtools, fastp, Qualimap, etc., into a single report for final review. |

NCBI's Role in Data Integrity and the Importance of Pre-Submission QC

Within the Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment for NCBI research, data integrity is paramount. The National Center for Biotechnology Information (NCBI) serves as a critical, authoritative repository for public genomic data. Its role in maintaining data integrity begins with stringent submission requirements, emphasizing that quality control is the responsibility of the data submitter. Pre-submission QC is not merely a recommendation but a foundational practice to ensure that data deposited into resources like the Sequence Read Archive (SRA) or GenBank are accurate, reproducible, and usable by the global research community, including drug development professionals who rely on these datasets for target identification and validation.

Application Notes: Quantitative Benchmarks for SRA Submission

NCBI's submission portals enforce specific data standards and formats. The following quantitative benchmarks, derived from current NCBI guidelines and community best practices, are essential for successful WGS data submission.

Table 1: Pre-Submission QC Metrics for WGS Data

| Metric | Recommended Threshold | Purpose | NCBI Validation Check |

|---|---|---|---|

| Sequence Read Quality (Q-score) | ≥ Q30 for ≥ 80% of bases | Ensures base call accuracy; minimizes downstream analysis errors. | Format compliance; not actively scored. |

| Adapter Contamination | ≤ 0.1% of reads | Prevents misinterpretation of adapter sequence as genomic data. | Not actively checked; critical for user analysis. |

| Host/Contaminant DNA | ≤ 5% (dependent on sample type) | Ensures target organism data predominance. | Not checked; submitter must declare. |

| Read Length Uniformity | Consistent with platform specs (<10% deviation) | Confirms library preparation and sequencing stability. | Checked via file integrity and declared metadata. |

| Genome Coverage Depth | ≥ 30x for microbial; ≥ 100x for human (project-dependent) | Ensures statistical confidence in variant calling. | Metadata field; must be accurately reported. |

| Metadata Completeness | 100% of required fields | Enables discoverability, reproducibility, and secondary analysis. | Enforced via submission wizard. |

Experimental Protocols for Pre-Submission QC

Protocol 3.1: Comprehensive WGS QC Workflow Prior to SRA Submission

This protocol outlines a standardized workflow to assess WGS data quality before submission to NCBI-SRA.

Research Reagent Solutions & Essential Materials:

| Item | Function in Pre-Submission QC |

|---|---|

| FastQC (Software) | Provides initial quality overview (per-base sequence quality, adapter content, GC distribution). |

| Trimmomatic or Cutadapt | Removes adapter sequences and low-quality bases from read termini. |

| Kraken2/Bracken | Detects and quantifies taxonomic contamination (e.g., host, microbial). |

| FastQ Screen | Screens reads against a panel of reference genomes to identify contaminants. |

| BWA-MEM & Samtools | Align reads to a reference genome to calculate coverage depth and uniformity. |

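| SRA Toolkit (prefetch, fasterq-dump) | Retrieves and converts SRA records, e.g., to confirm that released data converts back to FASTQ as expected; it does not validate files before upload. |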

| NCBI Submission Portal | Final validation of metadata and file integrity. |

Methodology:

- Raw Read Assessment: Run `FastQC` on raw FASTQ files. Generate a summary report using `MultiQC`.

- Adapter/Quality Trimming: Use `Trimmomatic`: `java -jar trimmomatic.jar PE -phred33 input_R1.fq input_R2.fq output_R1_paired.fq output_R1_unpaired.fq output_R2_paired.fq output_R2_unpaired.fq ILLUMINACLIP:adapters.fa:2:30:10 LEADING:3 TRAILING:3 SLIDINGWINDOW:4:15 MINLEN:36`.

- Contamination Screening: Execute `Kraken2` against a standard database (e.g., Minikraken2): `kraken2 --db /path/to/kraken2_db --paired output_R1_paired.fq output_R2_paired.fq --report kraken_report.txt`.

- Coverage Analysis: Align trimmed reads to a reference genome with `BWA-MEM`: `bwa mem -t 8 reference.fa output_R1_paired.fq output_R2_paired.fq | samtools sort -o aligned.bam`. Calculate depth with `samtools depth aligned.bam > coverage.txt`.

- Metadata Preparation: Compile all experimental metadata according to the SRA metadata checklist (e.g., sample attributes, instrument, library strategy).

- Submission Package Validation: Before upload, confirm file integrity (e.g., checksums and matching read counts in paired files) and record the results alongside the QC reports.
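The `coverage.txt` file from `samtools depth` has three tab-separated columns (reference name, position, depth), so mean depth is a short calculation. In this minimal sketch, `mean_depth` is a hypothetical helper; note that `samtools depth` omits zero-coverage positions unless run with `-a`, so supplying the genome length gives the genome-wide mean.

```python
def mean_depth(depth_text, genome_length=None):
    """Mean depth from samtools depth output (chrom, position, depth per line).

    Without genome_length the mean is taken over reported positions only.
    """
    total = 0
    positions = 0
    for line in depth_text.splitlines():
        if not line.strip():
            continue
        _chrom, _pos, depth = line.split("\t")
        total += int(depth)
        positions += 1
    denominator = genome_length if genome_length else positions
    if denominator == 0:
        raise ValueError("no positions to average over")
    return total / denominator


# Invented three-position example.
example = "chr1\t1\t10\nchr1\t2\t20\nchr1\t3\t30\n"

print(mean_depth(example))                   # 20.0
print(mean_depth(example, genome_length=6))  # 10.0
```

For a human-sized genome this file is very large, so in practice the lines would be streamed from disk rather than held in one string.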

Protocol 3.2: Validating Assembly Quality for GenBank Submission

For assembled genome submissions to GenBank.

Methodology:

- Assembly QC Metrics: Calculate metrics using `QUAST`: `quast.py assembly.fasta -r reference.fasta -o quast_report`.

- Contig/Scaffold Validation: Check for misassemblies flagged by QUAST. Ensure contig count is minimized and N50 is maximized for the genome complexity.

- Annotation Check: If submitting an annotated genome, validate gene calls using `PROKKA` or a similar pipeline against expected protein profiles.

- GenBank File Formatting: Use `tbl2asn` (provided by NCBI) to generate the final .sqn file from a template, using the assembly and annotation files alongside source metadata.
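N50 is the contig length at which the running total of lengths, taken from longest to shortest, first reaches half of the assembly size. QUAST reports it directly; the minimal sketch below only illustrates the definition, using invented contig lengths and a hypothetical `n50` helper.

```python
def n50(lengths):
    """Return the N50 of a collection of contig lengths."""
    ordered = sorted(lengths, reverse=True)
    half = sum(ordered) / 2
    running = 0
    for length in ordered:
        running += length
        # The first contig that pushes the running total to half the
        # assembly size defines N50.
        if running >= half:
            return length
    raise ValueError("no contigs supplied")


# Total length is 54, so N50 is reached at 10 + 9 + 8 = 27.
print(n50([2, 3, 4, 5, 6, 7, 8, 9, 10]))  # 8
```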

Title: WGS Pre-Submission QC Workflow for NCBI

NCBI's Validation Infrastructure and Submitter Responsibility

NCBI employs a system of validators to check file formatting, completeness of metadata, and basic integrity (e.g., matching read counts in paired files). However, NCBI does not perform scientific QC—it does not assess read quality, contamination levels, or coverage sufficiency. This underscores the critical nature of the pre-submission protocols. The NCBI submission process is the final checkpoint in the SOP for WGS, ensuring that data meeting minimum technical standards enter the public domain, but it is the researcher's rigorous pre-submission QC that guarantees its scientific utility and integrity.

Title: Data Integrity Responsibility Division

Integrating robust, documented pre-submission QC protocols into an SOP for WGS is non-negotiable for high-quality NCBI research. NCBI's role is to provide the infrastructure and standards to preserve and disseminate data, but the onus of data integrity lies with the submitting scientist. By adhering to the detailed application notes and protocols outlined herein, researchers ensure their data constitutes a reliable foundation for future discoveries, upholding the collective integrity of public databases.

Step-by-Step SOP: Executing WGS QC from FASTQ to NCBI-Ready Data

Application Notes

This protocol initiates the Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) data submission to the NCBI. The initial quality assessment and control (QC) of raw sequencing reads is a critical, non-negotiable step that directly impacts downstream analysis validity and repository compliance. A robust, reproducible QC environment, built on established open-source tools, ensures the identification of technical artifacts, contaminants, and sequence biases, forming the foundation for high-quality NCBI research-grade data.

The recommended toolkit is selected for its complementary functions, community support, and interoperability:

- FastQC: Provides a foundational, comprehensive visual report on read quality metrics.

- MultiQC: Aggregates results from multiple tools and samples into a single, interactive report, essential for batch processing.

- Fastp: An all-in-one, ultra-fast preprocessor that performs QC, adapter trimming, and filtering, ideal for large WGS datasets.

- Trimmomatic: A highly configurable, precise tool for read trimming and filtering, often used for more specific, conservative processing needs.

Table 1: Core Software Toolkit Comparison for WGS QC

| Tool | Primary Function | Key Strength | Typical Use Case in WGS SOP |

|---|---|---|---|

| FastQC v0.12.1 | Quality Control Visualization | Generates standard, interpretable plots for per-base/sequence quality, adapter content, GC bias, etc. | Initial diagnostic assessment of raw FASTQ files from the sequencer. |

| MultiQC v1.21 | Report Aggregation | Compiles outputs from FastQC, fastp, Trimmomatic, etc., into a unified HTML report. | Centralized QC monitoring for all samples in a sequencing run or project. |

| fastp v0.24.0 | All-in-one Processing | Single-pass processing for adapter trimming, quality filtering, polyG/X trimming, and UMI handling. | High-speed, efficient primary cleanup of Illumina WGS data. |

| Trimmomatic v0.39 | Read Trimming | Precise control over sliding window trimming and heuristic filtering; robust to low-quality ends. | Conservative trimming when specific, parameter-sensitive trimming is required. |

Experimental Protocols

Protocol 2.1: Initial Quality Assessment with FastQC and MultiQC

Objective: To generate a comprehensive quality profile for raw WGS FASTQ files and aggregate results across all samples.

Materials:

- Raw paired-end or single-end FASTQ files.

- Unix-based environment (Linux/macOS) or Windows Subsystem for Linux.

- Java Runtime Environment (for FastQC, Trimmomatic).

- Python 3.7+ (for MultiQC).

Procedure:

- Installation: Install tools via package managers.

- Run FastQC: Execute on all FASTQ files.

-o: Output directory.

-t: Number of threads.

- Aggregate with MultiQC: Compile all FastQC reports.

- Interpretation: Open multiqc_report.html. Critically examine key modules:

- Per Base Sequence Quality: Ensure the median Q-score is > 30 for most cycles.

- Adapter Content: Note if adapter contamination exceeds 5% at read ends.

- Per Sequence GC Content: Distribution should be normal, centered on expected genome GC%.

- Sequence Duplication Levels: High duplication in WGS may indicate PCR over-amplification or enrichment artifacts.
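The FastQC and MultiQC invocations in this procedure can be sketched as follows. This is a minimal illustration, not the original commands: the helper names, the qc_raw directory, and the sample file names are hypothetical. The -o and -t options are the FastQC options described above; MultiQC is assumed to take the directory to scan plus -o for its output directory. By default the script only prints the commands (dry run).

```python
import shlex
import shutil
import subprocess
from pathlib import Path


def build_fastqc_cmd(fastq_files, outdir, threads=4):
    """FastQC command as an argument list (-o: output directory, -t: threads)."""
    return ["fastqc", "-o", str(outdir), "-t", str(threads)] + [str(f) for f in fastq_files]


def build_multiqc_cmd(scan_dir, outdir):
    """MultiQC command that scans scan_dir and writes its report into outdir."""
    return ["multiqc", str(scan_dir), "-o", str(outdir)]


def run_initial_qc(fastq_files, outdir="qc_raw", threads=4, dry_run=True):
    """Print both commands; execute them only when dry_run is False."""
    cmds = [
        build_fastqc_cmd(fastq_files, outdir, threads),
        build_multiqc_cmd(outdir, outdir),
    ]
    for cmd in cmds:
        print(" ".join(shlex.quote(part) for part in cmd))
        if not dry_run:
            if shutil.which(cmd[0]) is None:
                raise FileNotFoundError(f"{cmd[0]} not found on PATH")
            Path(outdir).mkdir(parents=True, exist_ok=True)
            subprocess.run(cmd, check=True)
    return cmds


if __name__ == "__main__":
    # Hypothetical file names, shown only to illustrate the call.
    run_initial_qc(["sample_R1.fastq.gz", "sample_R2.fastq.gz"])
```

Building the command as a list rather than a shell string avoids quoting problems with unusual file names and makes the exact invocation easy to record in the QC log.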

Protocol 2.2: Read Preprocessing with Fastp

Objective: To perform adapter trimming, quality filtering, and polyG trimming in a single pass.

Materials:

- Raw FASTQ files.

- Adapter sequence files (optional; built-in detection for common Illumina adapters).

- Activated wgs-qc conda environment.

Procedure:

- Basic Processing Run:

- Parameters Explained:

--qualified_quality_phred: Bases with Q<20 are considered unqualified.

--length_required: Reads shorter than 50bp after trimming are discarded.

--poly_g_min_len: Minimum length of a polyG tail to trim (polyG tails are common in NovaSeq data).

--correction: Enable base correction for paired-end reads.

- Post-processing QC: Run FastQC and MultiQC on the trimmed FASTQ files to confirm improvement.
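A basic processing run using the parameters explained above could be assembled as in the sketch below. It only builds the fastp argument list. The output file naming, the helper name, and the polyG minimum length of 10 (fastp's documented default) are assumptions, not values taken from the original command.

```python
def build_fastp_cmd(r1, r2, out_prefix, threads=8):
    """Assemble a paired-end fastp command using the parameters described in the protocol."""
    return [
        "fastp",
        "-i", r1, "-I", r2,                          # paired-end inputs
        "-o", f"{out_prefix}_R1.trimmed.fastq.gz",   # hypothetical output names
        "-O", f"{out_prefix}_R2.trimmed.fastq.gz",
        "--detect_adapter_for_pe",                   # adapter auto-detection for paired-end data
        "--qualified_quality_phred", "20",           # bases below Q20 count as unqualified
        "--length_required", "50",                   # drop reads shorter than 50 bp after trimming
        "--trim_poly_g",
        "--poly_g_min_len", "10",                    # assumed value (fastp default)
        "--correction",                              # overlap-based correction for paired-end reads
        "--thread", str(threads),
        "--json", f"{out_prefix}.fastp.json",
        "--html", f"{out_prefix}.fastp.html",
    ]


if __name__ == "__main__":
    print(" ".join(build_fastp_cmd("sample_R1.fastq.gz", "sample_R2.fastq.gz", "sample")))
```

The JSON report written by fastp is one of the inputs MultiQC can aggregate, so keeping it next to the trimmed FASTQ files simplifies the post-processing QC step.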

Protocol 2.3: Alternative Read Trimming with Trimmomatic

Objective: To apply precise, sliding window quality trimming and remove Illumina adapters.

Materials:

- Raw FASTQ files.

- Adapter FASTA file (e.g., TruSeq3-PE-2.fa for paired-end, provided with Trimmomatic).

- Java Runtime Environment.

Procedure:

- Execute Trimmomatic for Paired-End Reads:

- Step-wise Parameter Rationale:

ILLUMINACLIP: Removes adapter sequences. 2:30:10 specifies seed mismatches, palindrome clip threshold, and simple clip threshold.

LEADING/TRAILING: Cut bases off start/end if below Q20.

SLIDINGWINDOW: Scan read with 4-base window, trim if average Q < 25.

MINLEN: Drop reads shorter than 50bp.

- Output: Use only the *_paired.fq.gz files for downstream alignment. Aggregate QC reports with MultiQC.
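To make the SLIDINGWINDOW and MINLEN rationale concrete, the following is a simplified Python illustration of the idea (scan from the 5' end, cut where the window average falls below the threshold, then discard reads that end up too short). It is a teaching sketch with hypothetical function names, not Trimmomatic's actual implementation, and it ignores adapter clipping and LEADING/TRAILING.

```python
def sliding_window_cut(quals, window=4, min_avg=25):
    """Return the number of leading bases to keep.

    Scans from the 5' end and cuts at the start of the first window whose
    mean quality is below min_avg. Reads shorter than the window are
    evaluated as a single window.
    """
    if not quals:
        return 0
    size = min(window, len(quals))
    for start in range(len(quals) - size + 1):
        if sum(quals[start:start + size]) / size < min_avg:
            return start
    return len(quals)


def trim_read(seq, quals, window=4, min_avg=25, min_len=50):
    """Trim one read; return None when the result is shorter than min_len."""
    keep = sliding_window_cut(quals, window, min_avg)
    if keep < min_len:
        return None
    return seq[:keep], quals[:keep]


if __name__ == "__main__":
    quals = [30] * 10 + [10] * 6
    print(sliding_window_cut(quals))                 # bases kept before the quality drop
    print(trim_read("A" * 16, quals, min_len=5))
```

A larger window or a lower threshold makes trimming more tolerant of isolated low-quality bases, which is the main tuning trade-off behind the 4-base, Q25 setting described above.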

Visualization: WGS QC and Preprocessing Workflow

Diagram 1: WGS QC and Preprocessing Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Essential Materials & Resources for WGS QC

| Item | Function/Description | Source/Example |

|---|---|---|

| Adapter Sequence File | FASTA file containing adapter sequences for precise removal by Trimmomatic. Essential for handling index/transposase sequences. | Provided with Trimmomatic (e.g., TruSeq3-PE.fa). Customizable for non-standard kits. |

| Reference Genome (FASTA) | Used for optional alignment-based QC metrics (e.g., mapping rate). Not in core QC but part of extended validation. | NCBI RefSeq, GenBank. |

| Conda/Bioconda Channels | Reproducible environment management ensuring version-controlled installation of all bioinformatics tools. | https://anaconda.org/bioconda/ |

| High-Performance Computing (HPC) Resources | Essential for parallel processing of large WGS datasets (dozens of FASTQ files, each ~1-10 GB). | Local cluster or cloud compute (AWS, GCP). |

| MultiQC Configuration File (multiqc_config.yaml) | Customizes report appearance, ignores specific modules, or adds custom content for thesis documentation. | User-generated. |

| QC Threshold Documentation | Lab-specific SOP defining pass/fail criteria (e.g., min Q-score, max adapter %, min read length). Critical for consistent decision-making. | Internal laboratory document. |

This Application Note, framed within a Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment for NCBI-submissible research, details the protocol for the initial computational assessment of raw sequencing data using FastQC. It provides researchers and drug development professionals with a standardized framework for interpreting FastQC reports across Illumina, Ion Torrent, and PacBio platforms to determine data suitability for downstream analysis.

The initial quality assessment of raw sequence data is a critical gatekeeping step in any WGS pipeline. Consistent interpretation of FastQC reports ensures that only data meeting predefined quality thresholds proceeds to alignment and variant calling, safeguarding the integrity of research destined for public repositories like NCBI SRA. This protocol standardizes the assessment across common sequencing platforms.

FastQC Module Interpretation & Quality Thresholds

FastQC evaluates multiple metrics. The following table summarizes key modules, their interpretation, and recommended action thresholds for Illumina short-read data, with notes for other platforms.

Table 1: Interpretation Guide for FastQC Modules and Quality Thresholds

| FastQC Module | Ideal Outcome | Warning/Flag (Per FastQC) | Critical Threshold (SOP Recommendation) | Platform-Specific Notes |

|---|---|---|---|---|

| Per Base Sequence Quality | Quality scores mostly in green range (≥Q30). | Quality scores dip into orange/yellow. | >80% of bases ≥ Q30 for Illumina. | Ion Torrent: Expect lower Q-scores. PacBio: Uses different quality system (QV). |

| Per Sequence Quality Scores | Sharp peak in the high-quality region (e.g., Q30+). | Broad or multiple peaks. | Mean sequence quality ≥ Q28. | Applicable to all platforms. |

| Per Base Sequence Content | Lines run parallel, with small deviation at read start. | Marked deviation from parallelism. | Nucleotide proportion delta < 10% after position 5. | PacBio: More variation is typical. |

| Adapter Content | No detected adapters. | Any adapter presence reported. | < 5% adapter contamination (post-trimming target: 0%). | Platform-specific adapter kits must be selected. |

| Overrepresented Sequences | No overrepresented sequences. | Any hit reported. | > 0.1% of total reads requires investigation. | Common in amplicon or targeted sequencing. |

Protocol: Initial Raw Data Assessment Workflow

Materials and Software (The Scientist's Toolkit)

Table 2: Essential Research Reagent Solutions & Computational Tools

| Item | Function/Description |

|---|---|

| Raw FASTQ Files | The primary input data, containing sequence reads and quality scores. |

| FastQC Software (v0.12.0+) | The core tool for generating the initial quality report from FASTQ files. |

| MultiQC (v1.15+) | Aggregates multiple FastQC reports into a single summary HTML for batch analysis. |

| Trimmomatic or Cutadapt | Used for read trimming if FastQC flags adapter content or quality drops. |

| High-Performance Compute (HPC) Cluster or Cloud Instance | Necessary for processing large WGS datasets efficiently. |

| Platform-Specific Adapter Fasta Files | Essential for accurate adapter contamination detection (e.g., TruSeq for Illumina). |

Detailed Experimental Protocol

Step 1: FastQC Report Generation

- Command: Execute FastQC on all FASTQ files from the sequencing run.

- Output: For each FASTQ, an HTML report (*.html) and a ZIP file containing raw data.

Step 2: Report Aggregation with MultiQC

- Navigate to the directory containing all FastQC output (*.zip files).

- Command: multiqc .

- Output: A single multiqc_report.html file providing a consolidated view.

Step 3: Systematic Report Interpretation (Refer to Table 1)

- Open the MultiQC report or individual FastQC HTML files.

- Assess each module sequentially. Flag any module failing the "Critical Threshold" in Table 1.

- Platform-Specific Considerations:

- Illumina: Focus on Per Base Quality and Adapter Content.

- Ion Torrent: Expect more "warnings" in Per Base Sequence Content; focus on mean quality scores.

- PacBio/ONT: Use dedicated tools like pycoQC for long-read metrics, but FastQC can still check for general anomalies.
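Module-by-module review can be partly automated. Each FastQC ZIP archive contains a summary.txt file with one tab-separated line per module (status, module name, file name). The sketch below lists the modules that did not pass. The function names are hypothetical, and the sample text in the usage example is illustrative rather than real output.

```python
def parse_fastqc_summary(text):
    """Parse FastQC summary.txt content into (status, module, filename) tuples."""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue
        status, module, filename = line.split("\t", 2)
        rows.append((status, module, filename))
    return rows


def flagged_modules(text):
    """Return (module, status) pairs for every module marked WARN or FAIL."""
    return [
        (module, status)
        for status, module, _ in parse_fastqc_summary(text)
        if status in ("WARN", "FAIL")
    ]


if __name__ == "__main__":
    example = (
        "PASS\tBasic Statistics\tsample_R1.fastq.gz\n"
        "WARN\tPer base sequence content\tsample_R1.fastq.gz\n"
        "FAIL\tAdapter Content\tsample_R1.fastq.gz\n"
    )
    for module, status in flagged_modules(example):
        print(f"{status}: {module}")
```

FastQC's own WARN/FAIL flags are generic. They should be read alongside the SOP's critical thresholds in Table 1 rather than treated as the pass/fail decision.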

Step 4: Decision Point & Documentation

- If all metrics pass critical thresholds, data passes to the next SOP step (alignment).

- If failures are noted (e.g., high adapter content, low quality), initiate the pre-defined trimming and re-assessment sub-protocol.

- Document: Record the assessment outcome, including pass/fail status and any deviations, in the project's Quality Assessment Log.
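The decision point can be expressed as a small rule set. The sketch below encodes the critical thresholds from Table 1 (more than 80% of bases at Q30 or above, mean sequence quality of at least Q28, adapter content below 5%, overrepresented sequences above 0.1% needing investigation). The function name, argument names, and decision labels are hypothetical. The input values would come from the MultiQC or FastQC reports.

```python
def assess_raw_reads(pct_bases_q30, mean_seq_quality, adapter_pct, top_overrepresented_pct=0.0):
    """Apply the Table 1 critical thresholds and return a decision record."""
    failures = []
    if pct_bases_q30 <= 80:
        failures.append("fewer than the required >80% of bases at Q30 or above")
    if mean_seq_quality < 28:
        failures.append("mean sequence quality below Q28")
    if adapter_pct >= 5:
        failures.append("adapter content at or above 5%")

    notes = []
    if top_overrepresented_pct > 0.1:
        notes.append("overrepresented sequence above 0.1% of reads: investigate")

    decision = "PROCEED_TO_ALIGNMENT" if not failures else "TRIM_AND_REASSESS"
    return {"decision": decision, "failures": failures, "notes": notes}


if __name__ == "__main__":
    print(assess_raw_reads(pct_bases_q30=91.2, mean_seq_quality=35.0, adapter_pct=0.4))
    print(assess_raw_reads(pct_bases_q30=76.0, mean_seq_quality=27.5, adapter_pct=6.1))
```

Returning the list of failed criteria, rather than a bare pass/fail flag, gives the Quality Assessment Log the detail it needs for each deviation.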

Visualized Workflows

Diagram 1: Raw Data Assessment & Decision Workflow

Diagram 2: FastQC Module Failure Analysis Guide

This document establishes the standard operating procedure for Step 3 of the Whole-Genome Sequencing (WGS) quality assessment pipeline for NCBI-submissible research data. Consistent preprocessing is critical for ensuring downstream analytical accuracy in variant calling, assembly, and comparative genomics.

1. Core Principles and Quantitative Benchmarks

Preprocessing aims to remove technical artifacts and systematic errors. The following table summarizes key performance metrics and decision thresholds for Illumina short-read data.

Table 1: Preprocessing Parameters and Target Metrics

| Processing Step | Key Parameter | Typical Threshold/Setting | Post-Processing Success Metric |

|---|---|---|---|

| Adapter Trimming | Overlap length | 1 bp (minimum) | >99% adapter contamination removed. |

| Adapter Trimming | Error tolerance | 0.1-0.2 | |

| Quality Filtering | Minimum per-base quality (Q) | Q20 (Phred scale) | >90% of bases above Q30. |

| Quality Filtering | Minimum read length | 50-70% of original length | Retained reads > 80% of input. |

| Quality Filtering | Ambiguous bases (N) | 0 allowed | 0 Ns in final read set. |

| Read Correction | k-mer size | 21-31 (must be odd) | Error rate reduction > 50% (e.g., from 0.1% to <0.05%). |

| Read Correction | Minimum k-mer multiplicity | 2-3 | |

2. Detailed Experimental Protocols

Protocol 2.1: Adapter Trimming with fastp

Objective: To remove adapter sequences and trim low-quality bases from read ends.

Materials: Raw FASTQ files (R1 & R2), High-performance computing cluster or workstation.

Procedure:

- Install fastp (version >=0.23.0).

- Execute command:

fastp -i sample_R1.fastq.gz -I sample_R2.fastq.gz -o sample_R1_trimmed.fastq.gz -O sample_R2_trimmed.fastq.gz --detect_adapter_for_pe --trim_poly_g --overrepresentation_analysis --thread 8

- Validate output using the integrated HTML report. Confirm adapter content curve approaches zero.

Protocol 2.2: Quality Filtering with PRINSEQ++

Objective: To discard reads failing quality, length, or complexity thresholds.

Materials: Adapter-trimmed FASTQ files.

Procedure:

- Install prinseq++ (version >=2.0.0).

- Execute command:

prinseq++ -fastq sample_R1_trimmed.fastq.gz -fastq2 sample_R2_trimmed.fastq.gz -out_format 3 -out_good sample_qualified -min_len 70 -min_qual_mean 20 -ns_max_p 0 -derep 1 -lc_method entropy -lc_threshold 70

- Outputs: sample_qualified_1.fastq and sample_qualified_2.fastq. Verify metrics in sample_qualified.log.

Protocol 2.3: Read Error Correction with Lighter

Objective: To correct sequencing errors using a k-mer spectrum approach without over-correction.

Materials: Quality-filtered FASTQ files, pre-computed genome k-mer count (for reference-guided mode).

Procedure:

- Install Lighter (version >=1.1.2).

- For reference-free correction on human WGS (-K takes the k-mer length followed by an approximate genome size in bp, roughly 3.1 Gb for human; the alternative -k form additionally requires a sampling rate alpha):

lighter -r sample_qualified_1.fastq -r sample_qualified_2.fastq -K 21 3100000000

- Outputs: sample_qualified_1.cor.fq and sample_qualified_2.cor.fq. Assess correction via pre/post k-mer uniqueness plots.

3. Visual Workflow and Toolkit

Title: WGS Preprocessing Workflow with Key Tools

Table 2: The Scientist's Toolkit – Essential Research Reagents & Solutions

| Item | Function/Description |

|---|---|

| fastp | All-in-one FASTQ preprocessor for adapter trimming, quality filtering, and reporting. |

| PRINSEQ++ | Filters reads by quality, length, complexity, and duplicates; reduces dataset bias. |

| Lighter | Fast, memory-efficient read correction tool using Bloom filters for k-mer counting. |

| SAMtools/SeqKit | Utilities for file format validation, conversion, and basic quality metric extraction. |

| MultiQC | Aggregates results from all preprocessing tools into a single, interactive HTML report. |

| SRA Toolkit | Validates final FASTQ integrity and prepares files for NCBI Sequence Read Archive (SRA) submission. |

Application Notes

Post-alignment quality control (QC) is a critical step in whole-genome sequencing (WGS) analysis within an NCBI-focused research pipeline. It ensures the reliability of downstream variant calling and interpretation. This phase assesses the quality of the aligned sequence data (BAM files) against reference genomes, verifying metrics such as coverage uniformity, mapping accuracy, and potential artifacts.

SAMtools provides robust utilities for manipulating and querying alignments, enabling basic sanity checks and filtering. QualiMap offers a comprehensive, visualization-rich evaluation of alignment characteristics against known genomic features. BedTools complements this by facilitating coverage analysis across specific regions of interest (e.g., exomes, panel genes). Integrating these tools forms a cohesive QC framework, flagging samples that fail established SOP thresholds before progression to variant discovery, thereby upholding data integrity for deposition in NCBI repositories like dbGaP or SRA.

Experimental Protocols

Protocol 1: Basic BAM File QC and Processing with SAMtools

Objective: To generate alignment statistics, sort, index, and filter BAM files.

Materials: High-performance computing environment, SAMtools v1.20+ installed, aligned BAM file.

Method:

- Sort BAM file by genomic coordinate:

samtools sort -@ 8 -o sample.sorted.bam sample.bam

- Index the sorted BAM file:

samtools index sample.sorted.bam

- Generate basic alignment statistics (flagstat):

samtools flagstat sample.sorted.bam > sample.flagstat.txt

- Generate detailed statistics (stats):

samtools stats sample.sorted.bam > sample.stats.txt

- (Optional) Filter alignments, e.g., to keep properly paired reads with mapping quality ≥20:

samtools view -b -f 2 -q 20 sample.sorted.bam > sample.filtered.bam
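The mapping rate and properly paired rate used later in the threshold table can be derived from the flagstat text. The sketch below parses the "N + M label" lines that samtools flagstat prints and computes both rates from QC-passed counts. The function names are hypothetical, and the sample text in the usage example is illustrative, not real output.

```python
import re

# Each flagstat line has the form: "<QC-passed> + <QC-failed> <label> [(extra)]".
_LINE = re.compile(r"^(\d+) \+ (\d+) (.+)$")


def parse_flagstat(text):
    """Map each flagstat label to its QC-passed read count."""
    counts = {}
    for line in text.splitlines():
        match = _LINE.match(line.strip())
        if not match:
            continue
        label = re.sub(r"\s*\(.*$", "", match.group(3))  # drop trailing "(...)" details
        counts.setdefault(label, int(match.group(1)))
    return counts


def alignment_rates(text):
    """Return mapping rate and properly paired rate as percentages."""
    counts = parse_flagstat(text)
    total = counts["in total"]
    paired = counts.get("paired in sequencing", 0)
    return {
        "mapping_rate_pct": 100.0 * counts["mapped"] / total if total else 0.0,
        "properly_paired_pct": 100.0 * counts.get("properly paired", 0) / paired if paired else 0.0,
    }


if __name__ == "__main__":
    example = (
        "1000 + 0 in total (QC-passed reads + QC-failed reads)\n"
        "970 + 0 mapped (97.00% : N/A)\n"
        "1000 + 0 paired in sequencing\n"
        "920 + 0 properly paired (92.00% : N/A)\n"
    )
    print(alignment_rates(example))
```

Note that the "mapped" count in flagstat includes secondary and supplementary alignments when they are present, so for strict per-read rates the primary-only lines reported by recent samtools versions may be the better numerator.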

Protocol 2: Comprehensive Alignment QC with QualiMap

Objective: To assess coverage, insert size, and genomic feature coverage.

Materials: QualiMap v2.3+ installed, Java Runtime Environment, reference genome FASTA and GTF annotation files.

Method:

- Run bamqc analysis:

qualimap bamqc -bam sample.sorted.bam -outdir ./qualimap_results --java-mem-size=8G

- For targeted sequencing (e.g., exomes), restrict bamqc to the regions of interest by supplying a BED or GFF feature file:

qualimap bamqc -bam sample.sorted.bam -gff target_regions.bed -outdir ./qualimap_targets --java-mem-size=8G

- To compare several samples in one report, run multi-bamqc mode with a sample list file:

qualimap multi-bamqc -d bam_list.txt -outdir ./multi_qualimap

- Interpret the HTML report: Review key sections: "Globals," "Coverage," "Insert Size," and "Genomic Origin."

Protocol 3: Targeted Coverage Analysis with BedTools

Objective: To calculate depth of coverage over specific genomic intervals (e.g., coding regions).

Materials: BedTools v2.32+ installed, BED file defining target regions.

Method:

- Calculate coverage per target:

bedtools coverage -a target_regions.bed -b sample.sorted.bam -hist > sample.coverage.hist.txt

- Generate a genome coverage file:

bedtools genomecov -bga -ibam sample.sorted.bam > sample.genomecoverage.bedgraph

- Determine mean coverage per target:

bedtools coverage -a target_regions.bed -b sample.sorted.bam -mean > sample.mean_coverage.txt
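Mean depth and coverage breadth can be summarized directly from the bedGraph file produced by genomecov -bga, where each line holds chromosome, start, end, and depth for one interval. The sketch below computes the length-weighted mean depth and the percentage of bases at or above a set of depth thresholds. The function name and the example intervals are hypothetical.

```python
def coverage_summary(bedgraph_lines, thresholds=(1, 10, 30)):
    """Summarize depth from bedGraph lines (chrom, start, end, depth)."""
    total_bases = 0
    depth_sum = 0.0
    covered = {t: 0 for t in thresholds}

    for line in bedgraph_lines:
        line = line.strip()
        if not line or line.startswith(("track", "#")):
            continue
        _chrom, start, end, depth = line.split()[:4]
        length = int(end) - int(start)      # bedGraph intervals are half-open
        value = float(depth)
        total_bases += length
        depth_sum += value * length
        for t in thresholds:
            if value >= t:
                covered[t] += length

    if total_bases == 0:
        raise ValueError("no intervals found in bedGraph input")

    return {
        "mean_depth": depth_sum / total_bases,
        "breadth_pct": {t: 100.0 * covered[t] / total_bases for t in thresholds},
    }


if __name__ == "__main__":
    example = ["chr1\t0\t100\t0", "chr1\t100\t200\t30", "chr1\t200\t400\t10"]
    print(coverage_summary(example))
```

For a real file, pass an open file handle in place of the list, since the function only iterates over lines. On a whole human genome the result includes unplaced contigs and assembly gaps unless those intervals are filtered out first.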

Data Presentation

Table 1: Key QC Metrics and Recommended Thresholds for WGS (Human, 30x)

| Metric | Tool | Calculation/Description | Acceptable Threshold (Typical) |

|---|---|---|---|

| Total Reads | SAMtools flagstat | Total number of sequencing reads. | Project-dependent |

| Mapping Rate (%) | SAMtools flagstat / QualiMap | (Mapped reads / Total reads) * 100. | > 95% |

| Properly Paired Rate (%) | SAMtools flagstat | Reads mapped in proper pairs. | > 90% |

| Mean Depth | QualiMap / BedTools | Average read depth across genome/target. | ≥ 30x (WGS) |

| Coverage Breadth (≥1x) | QualiMap | % of genome/target covered at ≥1x depth. | > 98% (WGS) |

| Coverage Breadth (≥10x) | QualiMap | % of genome/target covered at ≥10x depth. | > 95% (WGS) |

| Duplication Rate (%) | QualiMap (estimated) | Percentage of PCR/optical duplicates. | < 10% (WGS) |

| Median Insert Size | QualiMap | Median fragment library size. | 300-500 bp (varies) |

| Target Bases ≥30x (%) | BedTools | % of target bases (e.g., exome) at ≥30x. | > 85% (Exome) |

Mandatory Visualization

Title: Post-Alignment QC Workflow for WGS

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function/Description |

|---|---|

| High-Performance Computing Cluster | Essential for running memory- and CPU-intensive alignment and QC tools. |

| SAMtools (v1.20+) | Core utility for manipulating SAM/BAM files, providing basic statistics (flagstat, stats) and filtering. |

| QualiMap (v2.3+) | Java-based tool for generating comprehensive, HTML-based QC reports with visualizations for NGS data. |

| BedTools Suite (v2.32+) | Toolkit for set-theoretic operations on genomic intervals; crucial for coverage analysis on target regions. |

| Reference Genome FASTA | The reference sequence (e.g., GRCh38) used for alignment and as a baseline for QC metrics. |

| Genome Annotation (GTF/GFF) | File defining gene/feature coordinates; used by QualiMap for genomic origin analysis. |

| Target Regions BED File | File specifying coordinates for exomes or gene panels; used for targeted coverage analysis with BedTools. |

| Java Runtime Environment (JRE) | Required to run the QualiMap software package. |

| QC Metrics Summary Table | Internal document/SOP defining pass/fail thresholds (as in Table 1) for the project. |

Application Notes

Compiling and documenting Quality Control (QC) reports is a critical, final verification step before submitting Whole Genome Sequence (WGS) data to the NCBI Sequence Read Archive (SRA). This process directly supports the reproducibility and auditability requirements central to modern genomic research and regulatory submissions in drug development. Effective documentation serves dual purposes: it ensures compliance with NCBI’s stringent metadata requirements and creates a transparent audit trail for internal reviews, publication peer-review, or regulatory agency scrutiny (e.g., FDA for investigational antimicrobials or oncology biomarkers). A structured report links raw QC metrics, processed data, and curated metadata into a coherent narrative, explicitly highlighting any deviations from the pre-defined SOP and their justifications. This step formalizes the "quality story" of the dataset, transforming technical outputs into defensible research assets.

Protocol: Compiling the Comprehensive QC Report

This protocol details the assembly of a final QC report integrating outputs from prior WGS QA steps (raw read QC, assembly assessment, contamination checks).

2.1. Materials and Data Inputs

- Primary QC Outputs: FastQC/MultiQC reports, QUAST assembly reports, Kraken2/Bracken abundance reports, CheckM completeness/contamination tables, AMRFinderPlus output.

- Metadata: Fully populated SRA metadata spreadsheet (BioProject, BioSample, SRA experiment, and run attributes).

- SOP Document: The governing Standard Operating Procedure for WGS QA.

- Documentation Template: A standardized report template (e.g., in Markdown, PDF, or Word).

2.2. Procedure

Part A: Data Aggregation and Summary Table Creation

- Extract Key Metrics: From all primary QC outputs, extract the critical pass/fail metrics as defined in the SOP. Key metrics include, but are not limited to:

- Total sequenced bases, read count, and mean read quality (Q-score).

- Adapter content percentage.

- Total assembly length, N50, number of contigs.

- Estimated genome completeness (%) and contamination (%).

- Coverage depth (mean and breadth).

- Contaminant detection (e.g., % reads classified to non-target taxa).

- Populate the Master QC Summary Table: Create a table collating all metrics for each sample in the submission batch. Include columns for the observed value, the SOP-defined threshold, and a pass/fail status.

Part B: Narrative Documentation and Justification

- Executive Summary: Write a brief overview stating the purpose of the sequencing project, the total number of samples, and an overall statement of data quality (e.g., "All 50 samples passed critical QC thresholds and are suitable for submission").

- Methodology Summary: Reference the specific versions of all tools, databases, and the SOP used in the analysis pipeline.

- Results and Deviations:

- For each QC dimension (Read Quality, Assembly, Contamination), present a summary of the results.

- Crucially, document any sample that failed any QC metric as per the SOP. For each failure, provide:

- A description of the anomaly.

- An assessment of its potential biological or technical cause.

- A justification for why the data is still being submitted (e.g., "Sample S123 failed mean read quality threshold (Q28 vs. Q30) but assembly metrics are excellent; likely due to a known sequencer flow cell anomaly on lane 5. Data retained as it does not impact downstream variant calling.").

- Any corrective or investigative actions taken.

Part C: NCBI Metadata Audit and Integration

- Metadata-Verification Cross-Check: Explicitly link QC findings to SRA metadata. For example:

- Note if a low coverage depth is consistent with the library_layout (e.g., single-end) or library_selection method stated.

- Confirm that the instrument_model matches the platform generating the reported Q-scores.

- Document that the scientific_name in BioSample aligns with the primary taxon identified in the contamination screen.

- Final Report Assembly: Combine the summary table, narrative sections, and visualizations (see below) into a single document. Version and date the report.

Table: Example Master QC Summary for a Bacterial WGS Submission Batch

| Sample ID | Total Bases (Gb) | Mean Q-Score | % Adapters | Contigs | N50 (kb) | Completeness (%) | Contamination (%) | Primary QC Status |

|---|---|---|---|---|---|---|---|---|

| ISO_001 | 1.5 | 35 | 0.5 | 85 | 150.2 | 99.5 | 0.8 | PASS |

| ISO_002 | 1.8 | 34 | 0.3 | 92 | 145.7 | 99.1 | 1.2 | PASS |

| ISO_003 | 1.2 | 28 | 1.8 | 210 | 45.5 | 98.8 | 0.5 | FLAG |

| SOP Threshold | >1.0 | >30 | <2.0 | <200 | >50 | >98.0 | <2.0 | |

Note for ISO_003: Flagged due to low mean Q-score (28), elevated contig count (210), and N50 below threshold (45.5 kb). Investigation traced to a minor library prep impurity. Completeness and contamination are within thresholds; sample submitted with note.
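Populating the pass/fail column can be scripted so that every sample is judged against the same thresholds. The sketch below applies the SOP Threshold row of the example table to its three samples. The dictionary keys and function name are hypothetical, and the values are copied from the table.

```python
import operator

# Thresholds from the "SOP Threshold" row: (comparison, limit).
SOP_THRESHOLDS = {
    "total_gb": (operator.gt, 1.0),
    "mean_q": (operator.gt, 30),
    "adapters_pct": (operator.lt, 2.0),
    "contigs": (operator.lt, 200),
    "n50_kb": (operator.gt, 50),
    "completeness_pct": (operator.gt, 98.0),
    "contamination_pct": (operator.lt, 2.0),
}

SAMPLES = {
    "ISO_001": dict(total_gb=1.5, mean_q=35, adapters_pct=0.5, contigs=85,
                    n50_kb=150.2, completeness_pct=99.5, contamination_pct=0.8),
    "ISO_002": dict(total_gb=1.8, mean_q=34, adapters_pct=0.3, contigs=92,
                    n50_kb=145.7, completeness_pct=99.1, contamination_pct=1.2),
    "ISO_003": dict(total_gb=1.2, mean_q=28, adapters_pct=1.8, contigs=210,
                    n50_kb=45.5, completeness_pct=98.8, contamination_pct=0.5),
}


def evaluate_sample(metrics, thresholds=SOP_THRESHOLDS):
    """Return (status, failed_metric_names) for one sample."""
    failed = [name for name, (passes, limit) in thresholds.items()
              if not passes(metrics[name], limit)]
    return ("PASS" if not failed else "FLAG", failed)


if __name__ == "__main__":
    for sample_id, metrics in SAMPLES.items():
        status, failed = evaluate_sample(metrics)
        print(sample_id, status, ", ".join(failed))
```

Listing the failed metrics per sample feeds directly into the deviation notes required in Part B of the report.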

Visualization: QC Report Compilation Workflow

Diagram Title: QC Report Assembly and Audit Process

The Scientist's Toolkit: Essential Reagents & Materials

Table: Key Research Reagent Solutions for WGS QC and Reporting

| Item | Function/Application in QC Reporting |

|---|---|

| MultiQC | Aggregates results from multiple bioinformatics tools (FastQC, Quast, etc.) into a single, interactive HTML report, serving as the primary data source for metric extraction. |

| NCBI SRA Metadata Templates | Spreadsheet templates (e.g., SRA_Metadata.xlsx) downloaded from the NCBI portal provide the standardized format for capturing experiment, sample, and library attributes, enabling automated validation. |

| Kraken2 & Bracken Databases | Pre-formatted genomic databases (e.g., Standard, PlusPF) enable precise taxonomic classification for contamination screening, a critical QC metric requiring documentation. |

| CheckM Data Files | Lineage-specific marker gene sets (.hmms, .tsv) are required for accurate estimation of genome completeness and contamination, the gold-standard metrics for assembly QA. |

| Documentation Software (e.g., R Markdown, Jupyter) | Tools that combine code, text, and tables allow for dynamic generation of QC reports, ensuring reproducibility and easy updating when analysis parameters change. |

| Version Control System (e.g., Git) | Essential for tracking changes to the QC report, analysis scripts, and SOP documents, creating an immutable audit trail for reviewer inspection. |

Troubleshooting Common WGS QC Failures and Optimizing Your Pipeline

Diagnosing and Resolving Poor Base Quality Scores and Failed Quality Curves

Within the Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment for NCBI submission, the analysis of base quality scores is a critical checkpoint. Poor quality scores and anomalous quality distribution curves directly compromise downstream variant calling, assembly, and the validity of deposited data. This document provides detailed application notes and protocols for diagnosing the root causes of these failures and implementing effective remediation strategies.

Core Metrics and Quantitative Benchmarks

Base quality, expressed as a Phred-scaled score (Q), is logarithmically related to the probability of an incorrect base call. A Q-score of 30 (Q30) denotes a 0.1% error probability and is a standard benchmark for high-quality sequencing.
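The relationship is Q = -10 · log10(P), where P is the probability that the base call is wrong. As a small worked illustration (function names are hypothetical), the conversion in both directions looks like this:

```python
import math


def phred_to_error(q):
    """Error probability for a Phred score: P = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


def error_to_phred(p):
    """Phred score for an error probability: Q = -10 * log10(P)."""
    if not 0 < p <= 1:
        raise ValueError("error probability must be in (0, 1]")
    return -10 * math.log10(p)


if __name__ == "__main__":
    for q in (20, 30, 40):
        print(f"Q{q}: error probability {phred_to_error(q):.4%}")
```

Each 10-point step changes the error probability by a factor of ten, which is why the gap between Q20 (1 error in 100) and Q30 (1 error in 1,000) matters so much for variant calling.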

Table 1: Key Base Quality Metrics and Interpretation

| Metric | Ideal Value/Range | Warning Threshold | Failure Threshold | Implication of Failure |

|---|---|---|---|---|

| % Bases ≥ Q30 | >85% for Illumina | 75-85% | <75% | High error rate, unreliable variant calls. |

| Mean Quality Score (Read) | >30 across all cycles | 28-30 | <28 | Systematic read-wide quality issues. |

| Quality Score by Cycle | Stable or very gradual decline | Sharp drop >5 points | Severe drop or oscillations | Chemistry, focus, or surface issues at specific cycle. |

| Quality Score by Base | A=T=C=G | Deviation >2 Q points between bases | Deviation >5 Q points | Nucleotide-specific chemistry or detection issue. |

Diagnostic Protocol: Identifying Root Causes

Protocol 3.1: Multi-Tool Quality Assessment Workflow

Objective: To generate a comprehensive diagnostic profile of base quality issues from raw FASTQ files.

Input: Paired-end or single-end FASTQ files from WGS run.

Software: FastQC (v0.12.0+), MultiQC (v1.15+), Python (Pandas, Matplotlib).

Duration: 1-2 hours.

Steps:

- Initial Profiling: Run FastQC on all FASTQ files:

fastqc *.fastq.gz -t [number_of_threads]

- Aggregate Report: Compile results using MultiQC:

multiqc . -n multiqc_report

- Critical Analysis: Examine multiqc_report.html with focus on:

- "Per base sequence quality" plot: Identify cycles with median quality below Q28. Note the shape of the curve (sharp drop, gradual decline, oscillations).

- "Per sequence quality scores" plot: Check for bimodal distribution, indicating a subset of very poor-quality reads.

- "Per base sequence content" plot: Abnormal fluctuations, especially in early cycles (<10), may indicate adapter contamination or overclustering affecting quality.

- Cross-Reference with Run Metrics: Correlate quality drops with machine-reported metrics (cluster density, phasing/prephasing, intensity metrics) from the sequencing platform's run summary file.
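The per-cycle checks described in the critical analysis step can be sketched as follows. Given the median quality per cycle (for example, transcribed from the "Per base sequence quality" data), the function reports cycles below Q28 and cycles where quality falls by more than 5 points relative to the previous cycle, matching the warning level in Table 1. The function name and the input values are hypothetical.

```python
def diagnose_quality_curve(median_quals, min_q=28, sharp_drop=5):
    """Flag low-quality cycles and sharp cycle-to-cycle drops (cycles are 1-based)."""
    low_cycles = [i + 1 for i, q in enumerate(median_quals) if q < min_q]
    sharp_drop_cycles = [
        i + 1
        for i in range(1, len(median_quals))
        if median_quals[i - 1] - median_quals[i] > sharp_drop
    ]
    return {"low_cycles": low_cycles, "sharp_drop_cycles": sharp_drop_cycles}


if __name__ == "__main__":
    medians = [36, 36, 35, 34, 27, 26]
    print(diagnose_quality_curve(medians))
```

A sharp drop at a single cycle points toward a run event (chemistry, focus, or fluidics) and should be cross-checked against the run metrics, whereas a long list of low cycles without any sharp drop suggests gradual decline that trimming can usually address.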

Diagram: Diagnostic Workflow for Base Quality Issues

Remediation Protocols

Based on the diagnostic outcome, select and apply the appropriate protocol.

Protocol 4.1: Addressing Overclustering and Phasing/Prephasing

Applicable Cause: High cluster density leading to signal overlap; improper chemistry balance causing loss of sync. Action:

- Wet-Lab: For subsequent runs, reduce loading concentration by 10-20%. Ensure appropriate library molarity and purity (260/280 ~1.8, 260/230 >2.0).

- Bioinformatic Trimming: Use Trimmomatic or fastp to aggressively trim read-ends where quality drops.

- Example Command (Trimmomatic):

java -jar trimmomatic.jar PE -phred33 input_R1.fastq input_R2.fastq output_R1_paired.fastq output_R1_unpaired.fastq output_R2_paired.fastq output_R2_unpaired.fastq SLIDINGWINDOW:4:20 MINLEN:36

Protocol 4.2: Addressing Library or Sample Preparation Issues

Applicable Cause: PCR artifacts, adapter contamination, or degraded DNA. Action:

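- Wet-Lab: Re-assess DNA integrity via Bioanalyzer/TapeStation (e.g., a DNA Integrity Number, DIN, of roughly 7 or higher for WGS; DV200 is an RNA metric and does not apply to genomic DNA). Optimize PCR cycles. Use bead-based double-size selection for library prep.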

- Bioinformatic Cleaning: Use adapter trimmers (e.g., --detect_adapter_for_pe in fastp) and consider deduplication tools like Picard MarkDuplicates if PCR duplicates are prevalent.

Protocol 4.3: Addressing Instrument-Specific Issues

Applicable Cause: Focus drift, fluidics blockage, or camera issues manifesting as spatial or cyclical patterns. Action:

- Operational: Follow manufacturer's preventive maintenance (PM) schedule. Perform pre-run diagnostics and calibration (e.g., Illumina's Cycle ACR and Focus checks).

- Post-Hoc: If the issue is lane- or tile-specific, consider using a run-metrics viewer such as Illumina's Sequencing Analysis Viewer (SAV) to identify the affected lanes or tiles and, if the raw base call files (.bcl) are available, exclude them when repeating the BCL-to-FASTQ conversion.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for Quality Remediation

| Item | Function in Quality Control | Example Product(s) |

|---|---|---|

| High-Sensitivity DNA Assay Kit | Accurately quantifies low-input and fragmented DNA libraries prior to pooling and loading, preventing over/under-clustering. | Agilent Bioanalyzer HS DNA kit, Qubit dsDNA HS Assay. |

| Magnetic Bead Cleanup Kits | Performs size selection and purification of libraries, removing adapter dimers and primer artifacts that interfere with clustering. | SPRIselect beads (Beckman), AMPure XP beads. |

| Library Quantification Kit | qPCR-based absolute quantification of "clonally amplifiable" library fragments, superior to fluorometry for loading calculation. | KAPA Library Quantification Kit, NEBNext Library Quant Kit. |

| Phasing/Prephasing Control Calibration Kit | Used during sequencer PM to calibrate the signal and correct for progressive lag/lead errors. | Illumina's PhiX Control v3. |

| Nuclease-Free Water & Buffers | Critical for all dilution steps; contaminants can degrade sequencing chemistry. | TE Buffer, IDTE, certified nuclease-free water. |

Diagram: Relationship Between Root Cause and Remediation Path

Verification and SOP Integration

Following remediation, verification is mandatory before NCBI submission.

Protocol 6.1: Post-Remediation Verification

- Re-run the diagnostic workflow (Protocol 3.1) on the cleaned/trimmed FASTQ files.

- Confirm that %Q30 and mean quality meet the thresholds in Table 1.

- Document all actions taken (trimming parameters, loading concentration changes, etc.) in the WGS project's Quality Assessment Report.

- SOP Update: If a systemic issue is identified (e.g., consistent overclustering from a specific library prep method), formalize the successful remediation step into the institutional WGS SOP.
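
The %Q30 and mean-quality check in the verification steps above can be approximated directly from the quality lines of a FASTQ file. The following is a minimal sketch, assuming Phred+33 encoding and standard four-line FASTQ records; the function names are illustrative and not part of any NCBI or vendor tool.

```python
import gzip
from typing import Iterable, Iterator, Tuple


def read_fastq_qualities(path: str) -> Iterator[str]:
    """Yield the quality string of each record in a FASTQ file (plain or .gz)."""
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt") as handle:
        for line_number, line in enumerate(handle):
            if line_number % 4 == 3:  # 4th line of every record holds the qualities
                yield line.rstrip("\n")


def q30_and_mean_quality(quality_strings: Iterable[str], offset: int = 33) -> Tuple[float, float]:
    """Return (%Q30, mean Phred score) over all bases, assuming Phred+offset encoding."""
    total_bases = 0
    q30_bases = 0
    quality_sum = 0
    for qualities in quality_strings:
        for char in qualities:
            score = ord(char) - offset
            total_bases += 1
            quality_sum += score
            if score >= 30:
                q30_bases += 1
    if total_bases == 0:
        raise ValueError("no bases found")
    return 100.0 * q30_bases / total_bases, quality_sum / total_bases


# Toy input: 'I' encodes Q40 and '5' encodes Q20 in Phred+33.
pct_q30, mean_q = q30_and_mean_quality(["IIII", "II55"])
print(f"%Q30={pct_q30:.1f}, mean quality={mean_q:.1f}")  # %Q30=75.0, mean quality=35.0
```

For real data, pass `read_fastq_qualities("sample_trimmed_R1.fq.gz")` (a hypothetical file name) to `q30_and_mean_quality` and compare the result with the project's thresholds.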

Addressing Adapter Contamination and Low-Complexity Sequences

Within a Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment for NCBI submission, addressing data artifacts is paramount for downstream analysis validity. Adapter contamination and low-complexity sequences are two critical pre-analytical challenges that, if unmitigated, can lead to misalignment, erroneous variant calls, and biased genomic interpretations, ultimately compromising research integrity and drug development pipelines.

Table 1: Common Adapter Contamination Metrics and Impact

| Metric | Typical Threshold for WGS (Human) | Consequence of Exceeding Threshold | Common Source |

|---|---|---|---|

| % Adapter Content (per read) | < 0.5% (post-trimming) | Reduced mappable reads; false structural variant calls. | Incomplete enzymatic cleavage; short fragment sizes. |

| % Bases with Q<30 | < 15% of bases (i.e., ≥ 85% of bases at Q≥30) | Reduced confidence in base calling near adapters. | Adapter dimer carryover. |

| Insert Size Deviation | ≤ 25% from library prep target | Inaccurate paired-end mapping; coverage gaps. | Size selection failure; adapter ligation bias. |

Table 2: Low-Complexity Sequence Characteristics and Filtering Criteria

| Sequence Type | Definition | Genomic Impact | Common Filtering Approach |

|---|---|---|---|

| Homopolymer Runs | ≥ 8 identical consecutive bases. | Sequencing errors; alignment ambiguity. | Hard-mask or soft-clip in BAM. |

| Simple Repeats | Short tandem repeats (e.g., (AT)n, (CG)n). | Misalignment; false positive SNVs. | Tandem Repeat Finder annotation. |

| AT/GC Extremes | > 80% AT or GC content in a 50bp window. | Poor mapping quality; coverage dropouts. | Sliding window analysis and flagging. |
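
The "sliding window analysis and flagging" approach for AT/GC extremes can be sketched as follows. This is an illustrative example, not a reference implementation: the 50 bp window and 80% cutoff come from Table 2, while the function name and the `step` parameter are assumptions introduced here.

```python
from typing import List, Tuple


def flag_composition_extremes(
    sequence: str, window: int = 50, step: int = 10, threshold: float = 0.80
) -> List[Tuple[int, int, float]]:
    """Return (start, end, gc_fraction) for windows whose AT or GC fraction exceeds threshold.

    Coordinates are 0-based, half-open. Bases other than A/C/G/T (e.g. N) are ignored.
    """
    seq = sequence.upper()
    flagged = []
    for start in range(0, max(len(seq) - window + 1, 0), step):
        chunk = seq[start:start + window]
        gc = chunk.count("G") + chunk.count("C")
        at = chunk.count("A") + chunk.count("T")
        called = gc + at
        if called == 0:
            continue  # window made entirely of N or other ambiguity codes
        if gc / called > threshold or at / called > threshold:
            flagged.append((start, start + window, gc / called))
    return flagged


# A pure poly-A window is flagged; a balanced ACGT repeat is not.
print(flag_composition_extremes("A" * 50))      # [(0, 50, 0.0)]
print(flag_composition_extremes("ACGT" * 25))   # []
```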

Experimental Protocols

Protocol 3.1: Detection and Removal of Adapter Contamination

Objective: To identify and trim adapter sequences from raw WGS FASTQ files.

Materials: Raw paired-end FASTQ files, high-performance computing cluster.

Software: fastp (v0.23.4), Cutadapt (v4.6).

Procedure:

- Quality Assessment: Run fastp --detect_adapter_for_pe --thread 8 -i sample_R1.fq.gz -I sample_R2.fq.gz -o sample_trimmed_R1.fq.gz -O sample_trimmed_R2.fq.gz -j sample_fastp.json -h sample_fastp.html.

- Adapter Sequence Specification: If known custom adapters are used, provide sequences to Cutadapt: cutadapt -a AGATCGGAAGAGC -A AGATCGGAAGAGC -o out1.fastq -p out2.fastq in1.fastq in2.fastq.

- Validation: Inspect the fastp HTML report, focusing on the "adapter trimming" graph and the post-filtering summary table. Confirm adapter content is below the 0.5% threshold.

- Post-trimming QC: Re-run basic FastQC on trimmed files to confirm improvement in per-base sequence content and k-mer profiles.
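
As a quick independent cross-check of the validation step, residual adapter content can be estimated by scanning trimmed reads for the adapter sequence. The sketch below is deliberately naive: it uses exact matching only (no mismatches or indels, unlike fastp or Cutadapt), counts reads rather than bases, and the `min_overlap` value of 8 is an assumption chosen for illustration.

```python
from typing import Iterable


def has_adapter(read: str, adapter: str = "AGATCGGAAGAGC", min_overlap: int = 8) -> bool:
    """True if the read contains the adapter, or ends with an adapter prefix >= min_overlap."""
    read = read.upper()
    if adapter in read:
        return True
    # Check for a partial adapter running off the 3' end of the read.
    for k in range(min(len(adapter) - 1, len(read)), min_overlap - 1, -1):
        if read.endswith(adapter[:k]):
            return True
    return False


def adapter_content_percent(reads: Iterable[str], adapter: str = "AGATCGGAAGAGC") -> float:
    """Percentage of reads with a detectable (exact-match) adapter remnant."""
    total = 0
    hits = 0
    for read in reads:
        total += 1
        hits += has_adapter(read, adapter)
    if total == 0:
        raise ValueError("no reads supplied")
    return 100.0 * hits / total


toy_reads = [
    "ACGTACGTAGATCGGAAGAGCTT",  # full adapter inside the read
    "ACGTACGTACGTAGATCGGA",     # 8-base adapter prefix at the 3' end
    "ACGTACGTACGTACGT",         # clean read
    "TTTTAGATC",                # only 5 adapter bases: below min_overlap
]
print(adapter_content_percent(toy_reads))  # 50.0
```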

Protocol 3.2: Identification and Masking of Low-Complexity Regions

Objective: To flag genomic regions dominated by low-complexity sequences for downstream exclusion or careful interpretation.

Materials: Reference genome (e.g., GRCh38), aligned BAM files (post-adapter trimming).

Software: BEDTools (v2.31.0), RepeatMasker (open-4.1.6), SAMtools (v1.19).

Procedure:

- Genome Annotation: Run RepeatMasker on the reference genome FASTA file using the -species human parameter, then convert its annotation output to a BED file of known repetitive elements.

- Homopolymer Identification: Use a custom script or the BEDTools nuc function to scan the reference for homopolymer runs ≥8bp, outputting a BED file.

- Merge Annotations: Combine the RepeatMasker and homopolymer BED files using bedtools sort and bedtools merge.

- Application to Aligned Data: Use bedtools intersect to flag reads in the BAM file that originate primarily from these merged regions. Optionally, hard-mask the reference sequence with Ns in these regions prior to alignment.
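
The "custom script" option for homopolymer identification, followed by interval merging, might look like the sketch below. It assumes the reference sequence for one chromosome is already loaded as a string; the function names are hypothetical, and the merge step only mimics the default behaviour of bedtools merge (overlapping and book-ended intervals are joined) on sorted, BED-style 0-based half-open coordinates.

```python
import re
from typing import List, Tuple

Interval = Tuple[str, int, int]  # (chrom, start, end), 0-based half-open like BED


def homopolymer_intervals(chrom: str, sequence: str, min_run: int = 8) -> List[Interval]:
    """Find runs of >= min_run identical A/C/G/T bases."""
    pattern = re.compile(r"([ACGT])\1{%d,}" % (min_run - 1))
    return [(chrom, m.start(), m.end()) for m in pattern.finditer(sequence.upper())]


def merge_intervals(intervals: List[Interval]) -> List[Interval]:
    """Sort intervals and merge those that overlap or touch on the same chromosome."""
    merged: List[Interval] = []
    for chrom, start, end in sorted(intervals):
        if merged and merged[-1][0] == chrom and start <= merged[-1][2]:
            prev_chrom, prev_start, prev_end = merged[-1]
            merged[-1] = (prev_chrom, prev_start, max(prev_end, end))
        else:
            merged.append((chrom, start, end))
    return merged


toy_reference = "ACGT" + "A" * 8 + "CG" + "T" * 10 + "ACGAAAAAAAC"
runs = homopolymer_intervals("chr1", toy_reference)
print(runs)  # [('chr1', 4, 12), ('chr1', 14, 24)]

# Combine with (made-up) repeat annotations and merge.
repeats = [("chr1", 10, 20), ("chr2", 1, 5)]
for chrom, start, end in merge_intervals(runs + repeats):
    print(f"{chrom}\t{start}\t{end}")
```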

Visualizations

Title: WGS Adapter and Low-Complexity Sequence Cleanup Workflow

Title: Adapter Contamination Causes and Consequences

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Adapter and Complexity Control

| Item | Function in Protocol | Example Product/Kit | Critical Parameters |

|---|---|---|---|

| Size Selection Beads | Precisely removes short fragments (including adapter dimers) post-ligation. | SPRIselect Beads (Beckman Coulter) | Bead-to-sample ratio; incubation time. |

| High-Fidelity DNA Ligase | Efficiently ligates adapters to insert DNA, minimizing blunt-end adapter-dimer formation. | Quick T4 DNA Ligase (NEB) | Concentration; reaction temperature & time. |

| Low-Input Library Prep Kit | Optimized for limited samples, often includes robust adapter clean-up steps. | KAPA HyperPlus Kit (Roche) | Input DNA mass; number of PCR cycles. |

| Hybridization Blockers | Suppress signal from known high-abundance low-complexity regions (e.g., Cot-1 DNA). | Human Cot-1 DNA (Thermo Fisher) | Concentration; hybridization temperature. |

| PCR Depletion Kit | Selectively removes abundant repeat sequences (e.g., rDNA) pre-sequencing. | NEBNext rRNA Depletion Kit | Probe design; depletion efficiency. |

Mitigating PCR Duplication Bias and Its Impact on Variant Calling

Within a Standard Operating Procedure (SOP) for Whole Genome Sequencing (WGS) quality assessment for NCBI-submissible research, managing PCR duplication bias is a critical pre-variant calling QC step. PCR duplicates, identical read pairs arising from clonal amplification of a single original DNA fragment during library preparation, falsely inflate coverage metrics and can lead to erroneous variant calls, especially in low-complexity regions. This application note details protocols for identification, mitigation, and impact assessment of PCR duplication bias to ensure data integrity for downstream analysis.

Table 1: Common Duplication Metrics from Sequencing QC Tools (Per Sample)

| Metric | Typical Calculation | Acceptable Threshold (WGS) | Impact of High Value |

|---|---|---|---|

| Duplication Rate | (Duplicate Reads / Total Reads) * 100 | < 10-20% | Wasted sequencing depth; inflated coverage confidence. |

| Estimated Library Complexity | Unique molecular identifiers (UMI)-based or positional deduplication estimate. | Sample-specific; higher is better. | Low complexity indicates severe bias, poor library prep. |

| Mean Coverage Post-Deduplication | Total aligned bases / genome size after duplicate removal. | ≥30X for human germline. | True measure of usable sequencing depth for variant calling. |
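
The two formulas in Table 1 are simple enough to check by hand, but encoding them keeps the acceptance check consistent across samples. In this sketch the default cutoffs (20% duplication, 30X coverage) are taken from the table's upper duplication bound and the human germline coverage target; the function names are illustrative.

```python
def duplication_rate(duplicate_reads: int, total_reads: int) -> float:
    """(Duplicate Reads / Total Reads) * 100."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return 100.0 * duplicate_reads / total_reads


def mean_coverage(total_aligned_bases: int, genome_size: int) -> float:
    """Total aligned bases (after duplicate removal) divided by genome size."""
    if genome_size <= 0:
        raise ValueError("genome_size must be positive")
    return total_aligned_bases / genome_size


def passes_wgs_thresholds(
    dup_rate_pct: float, coverage: float, max_dup_pct: float = 20.0, min_coverage: float = 30.0
) -> bool:
    """Apply the Table 1 style cutoffs; adjust the defaults to the project's own SOP."""
    return dup_rate_pct < max_dup_pct and coverage >= min_coverage


# Toy numbers: 150 M duplicates out of 1,000 M reads; 93 Gb aligned on a 3.1 Gb genome.
rate = duplication_rate(150_000_000, 1_000_000_000)    # 15.0
depth = mean_coverage(93_000_000_000, 3_100_000_000)   # 30.0
print(rate, depth, passes_wgs_thresholds(rate, depth))
```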

Table 2: Impact of Duplicate Removal on Variant Calling Metrics

| Variant Class | Effect of Not Removing Duplicates | Effect of Overly Aggressive Deduplication |

|---|---|---|

| False Positive SNVs | May increase in low-complexity/high-GC regions. | Minimal increase for most tools. |

| False Negative SNVs | May mask real low-allele-fraction variants. | Can increase, especially for low-VAF somatic variants. |

| False Positive Indels | Significant increase in homopolymer regions. | Potential loss of real, PCR-prone indel alleles. |

| Allele Frequency Estimation | Skewed toward duplicated fragments' allele. | More accurate for germline; may be biased for somatic. |
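
To make the allele-frequency row concrete, the toy example below shows how one heavily duplicated fragment can skew the apparent variant allele fraction (VAF) at a single site. The read data are invented for illustration, and fragments are identified by a made-up ID rather than by alignment coordinates.

```python
from typing import List, Tuple


def variant_allele_fraction(alleles: List[str]) -> float:
    """Fraction of observations carrying the ALT allele."""
    if not alleles:
        raise ValueError("no observations")
    return alleles.count("ALT") / len(alleles)


# (fragment_id, allele) pairs covering one site; frag1 was amplified six times.
reads: List[Tuple[str, str]] = [("frag1", "ALT")] * 6 + [
    ("frag2", "REF"),
    ("frag3", "REF"),
    ("frag4", "ALT"),
    ("frag5", "REF"),
]

raw_vaf = variant_allele_fraction([allele for _, allele in reads])

# Keep one observation per original fragment (what duplicate removal aims for).
unique_fragments = dict(reads)
dedup_vaf = variant_allele_fraction(list(unique_fragments.values()))

print(f"raw VAF={raw_vaf:.2f}, deduplicated VAF={dedup_vaf:.2f}")  # 0.70 vs 0.40
```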

Experimental Protocols

Protocol 3.1: Identification and Quantification of PCR Duplicates

Objective: To assess the level of PCR duplication in a sequenced library using alignment-based software.

Materials: FASTQ files, reference genome (e.g., GRCh38), high-performance computing cluster.

Software: Picard Tools MarkDuplicates, samtools, FastQC (post-alignment metrics).

Method:

- Alignment: Align paired-end reads to the reference genome using a short-read DNA aligner (e.g., BWA-MEM); splice-aware aligners such as HISAT2 are only needed for RNA-seq data. Convert the output to a coordinate-sorted BAM file.

- Mark Duplicates: Execute Picard MarkDuplicates to identify read pairs with identical external coordinates (the 5' position of each mate in the pair) and assign duplicate flags.

- Metrics Analysis: Examine the marked_dup_metrics.txt file. Key outputs: PERCENT_DUPLICATION, ESTIMATED_LIBRARY_SIZE, READ_PAIRS_EXAMINED.

- Visualization: Generate a post-alignment FastQC report on the marked BAM file to view duplication level plots.
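
The metrics-analysis step can be scripted by reading the table that follows the "## METRICS CLASS" line in the Picard output. The sketch below uses an invented, abbreviated metrics file (real files contain more columns and a histogram section), and it assumes PERCENT_DUPLICATION is reported as a fraction between 0 and 1, so it is multiplied by 100 before comparison with percentage thresholds.

```python
import io
from typing import Dict, TextIO


def parse_picard_metrics(handle: TextIO) -> Dict[str, str]:
    """Return the first metrics row as {column_name: value} from a Picard metrics file."""
    lines = [line.rstrip("\n") for line in handle]
    for index, line in enumerate(lines):
        if line.startswith("## METRICS CLASS"):
            header = lines[index + 1].split("\t")
            values = lines[index + 2].split("\t")
            return dict(zip(header, values))
    raise ValueError("no METRICS CLASS section found")


# Illustrative content only: a reduced set of columns with made-up values.
example_text = "\n".join([
    "## htsjdk.samtools.metrics.StringHeader",
    "# MarkDuplicates (command line omitted)",
    "",
    "## METRICS CLASS\tpicard.sam.DuplicationMetrics",
    "LIBRARY\tREAD_PAIRS_EXAMINED\tPERCENT_DUPLICATION\tESTIMATED_LIBRARY_SIZE",
    "lib1\t400000000\t0.12\t1500000000",
    "",
])

metrics = parse_picard_metrics(io.StringIO(example_text))
duplication_pct = float(metrics["PERCENT_DUPLICATION"]) * 100
print(f"{metrics['LIBRARY']}: {duplication_pct:.1f}% duplication, "
      f"estimated library size {int(metrics['ESTIMATED_LIBRARY_SIZE']):,}")
```

For a real run, replace the `io.StringIO` object with `open("marked_dup_metrics.txt")`.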

Protocol 3.2: Duplicate Removal for Germline Variant Calling

Objective: To remove duplicate reads prior to germline variant calling to prevent bias.

Materials: BAM file with duplicate flags from Protocol 3.1.

Software: samtools, GATK.

Method:

- Filter Duplicates: Use samtools view to exclude reads marked as duplicates (flag 1024).

- Index BAM: Index the new deduplicated BAM file.

- Proceed to Variant Calling: Use the deduplicated.bam file as input for your germline variant caller (e.g., GATK HaplotypeCaller, DeepVariant).

- Validation: Compare coverage depth (samtools depth) and variant counts in high-duplication regions before and after deduplication.
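
The duplicate filter relies on bit 0x400 (decimal 1024) of the SAM FLAG field. The sketch below shows that bit test on plain-text SAM lines; it is meant to illustrate the logic behind an exclusion filter such as samtools view -F 1024, not to replace it, and the toy records are invented.

```python
from typing import Iterable, Iterator

DUPLICATE_FLAG = 0x400  # decimal 1024: "PCR or optical duplicate" in the SAM specification


def is_duplicate(flag: int) -> bool:
    """True if the duplicate bit is set in a SAM FLAG value."""
    return bool(flag & DUPLICATE_FLAG)


def drop_duplicates(sam_lines: Iterable[str]) -> Iterator[str]:
    """Yield header lines and all alignment lines whose duplicate bit is not set."""
    for line in sam_lines:
        if line.startswith("@"):
            yield line  # header lines pass through unchanged
            continue
        flag = int(line.split("\t")[1])  # FLAG is the second SAM column
        if not is_duplicate(flag):
            yield line


toy_sam = [
    "@HD\tVN:1.6\tSO:coordinate",
    "read1\t99\tchr1\t100\t60\t5M\t=\t200\t105\tACGTA\tIIIII",
    "read2\t1123\tchr1\t100\t60\t5M\t=\t200\t105\tACGTA\tIIIII",  # 1123 = 99 + 1024
    "read3\t147\tchr1\t200\t60\t5M\t=\t100\t-105\tTTGCA\tIIIII",
]
kept = list(drop_duplicates(toy_sam))
print(len(kept))  # 3: the header plus read1 and read3
```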

Protocol 3.3: UMI-Based Deduplication for Somatic Variant Calling

Objective: To accurately identify true unique DNA fragments using Unique Molecular Identifiers (UMIs) for sensitive low-VAF detection.

Materials: FASTQ from UMI-tagged library prep (e.g., duplex sequencing), reference genome.

Software: fgbio, Picard, GATK.

Method:

- Extract UMIs: Annotate reads in the BAM file with their UMI sequences from the read headers.

- Group Reads by UMI Family: Cluster reads that share the same start position and UMI.

- Call Consensus Reads: Create a consensus read for each UMI family, significantly reducing PCR and sequencing errors.

- Deduplicate Consensus BAM: Use standard coordinate-based deduplication on the consensus BAM.

- Variant Calling: Use the final BAM for high-sensitivity somatic variant callers (e.g., GATK Mutect2).
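
The grouping and consensus steps can be illustrated with a deliberately simplified sketch. It assumes exact UMI matches, equal-length reads that start at the same position, and a plain per-base majority vote; production tools such as fgbio additionally tolerate UMI sequencing errors and weigh base qualities, so this shows the idea only. The read tuples are invented.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

Read = Tuple[str, int, str, str]  # (chrom, start, umi, sequence)


def group_by_umi(reads: List[Read]) -> Dict[Tuple[str, int, str], List[str]]:
    """Cluster reads that share chromosome, start position, and UMI into families."""
    families: Dict[Tuple[str, int, str], List[str]] = defaultdict(list)
    for chrom, start, umi, sequence in reads:
        families[(chrom, start, umi)].append(sequence)
    return families


def consensus(sequences: List[str]) -> str:
    """Per-position majority vote across equal-length reads of one family."""
    return "".join(Counter(column).most_common(1)[0][0] for column in zip(*sequences))


toy_reads: List[Read] = [
    ("chr1", 100, "AACG", "ACGTA"),
    ("chr1", 100, "AACG", "ACGTA"),
    ("chr1", 100, "AACG", "ACCTA"),  # one PCR/sequencing error at position 3
    ("chr1", 100, "TTGC", "ACGTT"),  # same start, different UMI: a distinct molecule
]

for family_key, family_reads in group_by_umi(toy_reads).items():
    print(family_key, len(family_reads), consensus(family_reads))
```

Here coordinate-only deduplication would collapse all four reads into one, whereas UMI grouping keeps two molecules and corrects the error within the first family.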

Visualizations

Title: WGS PCR Duplicate Handling Decision Workflow

Title: UMI-Based Deduplication Logic from Reads to Consensus

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Managing PCR Duplication Bias

| Item / Reagent | Function in Mitigating Duplication Bias | Example Product / Kit |

|---|---|---|

| PCR-Free Library Prep Kit | Eliminates duplication source by avoiding amplification; ideal for high-input DNA WGS. | Illumina DNA PCR-Free Prep, KAPA HyperPlus PCR-Free. |

| Low-Input/Ultra-Low Input Library Kit | Uses specialized ligation or transposase chemistry to maximize complexity from limited samples. | Nextera XT, SMARTer ThruPLEX. |

| UMI Adapter Kit | Incorporates unique molecular identifiers into each original molecule for true duplicate identification. | IDT for Illumina UMI Adapters, Twist UMI Adaptase Kit. |

| High-Fidelity DNA Polymerase | Reduces PCR errors during amplification steps, improving accuracy of consensus calls with UMIs. | KAPA HiFi, Q5 High-Fidelity. |

| Duplex Sequencing Adapters | Specialized UMIs for identifying original double-stranded molecules, enabling error correction to ~10^-9. | DUPLEX-SEQ Adapters. |

| Methylated Spike-in Control DNA | Assesses potential bias introduced by PCR amplification in specific genomic contexts (e.g., GC-rich). | Spike-in Control (E. coli) from Zymo Research. |

Optimizing Coverage Uniformity and Addressing High/Low GC Bias